Position Management: Scaling Into Winners With A Falling-Risk Pyramid

We introduce CPyramidBridge, a thin MQL5 layer that maps bet-sizing results to CPyramidEngine. The bridge applies probability to initial lot sizing, enforces a capacity-aware entry gate, promotes add-ons from dynamic divergence, adapts the trailing stop to reserve estimates, and syncs signals on close, allowing an Expert Advisor to convert model confidence and concurrency into a structured, decreasing-risk pyramid.

MQL5 Wizard Techniques you should know (Part 92): Using B-Tree Indexing and a Bayesian NN in a Custom Signal Class

In this article we present another custom MQL5 signal class, which we label ‘CSignalBTreeBayesian’. It combines a balanced-tree (B-tree) algorithm with a neural network built on Bayesian principles, producing a custom signal that can be tested on its own or alongside other signals in the MQL5 Wizard.

Beyond GARCH (Part IV): Partition Analysis in MQL5

In this article, we shift from Python research to native MQL5 engineering. We build the first module of the MMAR library: a shared constants header, an SVD-based OLS regression class, a Generalized Hurst Exponent estimator, and the partition analysis engine that computes the partition function, extracts tau(q), estimates H via zero-crossing interpolation, and scores multifractality through three diagnostic tests. Tested on 500,000 bars of EURUSD M10, the engine correctly classifies the data as multifractal in under four seconds. Part 4 of an eight-part series. Part 5 fits the tau(q) curve to four candidate distributions via the Legendre transform.

Beyond the Clock (Part 2): Building Runs Bars in MQL5

We implement tick-, volume-, and dollar-runs bars in Python and MQL5 and align them with the existing bar‑building framework. The article details the dual‑accumulator update, offline calibration with per‑side seeds, state persistence for EAs, and parity verification to match Python and MQL5 outputs. Runs bars expose one‑sided bursts that net imbalance can hide, improving coverage during quiet sessions and for mean‑reversion models.
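The dual-accumulator idea behind runs bars can be sketched in a few lines: one accumulator per trade side, and the bar closes when either side reaches a threshold. This is a minimal Python sketch, assuming the tick rule for trade direction and a fixed, offline-calibrated `threshold` standing in for the adaptive expected-run threshold; `runs_bars` and its parameters are hypothetical names, not the article's API.

```python
from typing import List, Sequence, Tuple

def runs_bars(prices: Sequence[float], volumes: Sequence[float],
              threshold: float, kind: str = "tick") -> List[Tuple[int, int]]:
    """Return (start_index, end_index) pairs for completed runs bars.

    Hypothetical sketch: a fixed threshold replaces an adaptive one,
    and trade side is inferred with the tick rule.
    """
    bars: List[Tuple[int, int]] = []
    buy_run = sell_run = 0.0
    start = 0
    prev_sign = 1  # assumption: an unchanged first tick counts as a buy
    for i in range(1, len(prices)):
        dp = prices[i] - prices[i - 1]
        # Tick rule: sign of the price change, carried forward when unchanged.
        sign = prev_sign if dp == 0 else (1 if dp > 0 else -1)
        prev_sign = sign
        if kind == "tick":
            amount = 1.0
        elif kind == "volume":
            amount = volumes[i]
        else:  # "dollar"
            amount = prices[i] * volumes[i]
        if sign > 0:
            buy_run += amount
        else:
            sell_run += amount
        # Close the bar as soon as either one-sided accumulator hits the threshold.
        if max(buy_run, sell_run) >= threshold:
            bars.append((start, i))
            start = i + 1
            buy_run = sell_run = 0.0
    return bars
```

Because each side accumulates separately, a one-sided burst closes a bar even if the net imbalance (buys minus sells) stays small.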

Detecting and Classifying Fractal Patterns Using Machine Learning

In this article, we touch upon the intriguing topic of fractal analysis and market forecasting using machine learning. These are only the first steps toward exploring the diverse fractal structures that form on financial price charts. We use correlation to find patterns and the CatBoost algorithm to classify them.

Joint Recurrence Quantification Analysis (JRQA) in MQL5: Detecting Simultaneous Recurrence in Two Series

We extend the RQA library for MetaTrader 5 with JRQA, which detects when two series simultaneously revisit their own past states. The article covers the joint recurrence matrix, twelve JRQA metrics (including TREND and COMPLEXITY), dual-epsilon configuration, and a rolling-window engine with OpenCL acceleration and automatic CPU fallback. A practical indicator plots JRR, JDET, JLAM, JENTR, and JTREND for any symbol pair with timestamp alignment and normalization.
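The joint recurrence matrix marks a pair of times (i, j) as recurrent only when both series revisit their own past state, each judged with its own threshold. Below is a minimal Python sketch, assuming scalar states with no time-delay embedding and keeping the main diagonal in the rate (many RQA implementations exclude it with a Theiler window); the function names are illustrative.

```python
import numpy as np

def recurrence_matrix(x: np.ndarray, eps: float) -> np.ndarray:
    """R_ij = 1 when states i and j lie within eps (1-D states, no embedding)."""
    d = np.abs(x[:, None] - x[None, :])
    return (d <= eps).astype(np.int8)

def joint_recurrence_matrix(x, y, eps_x: float, eps_y: float) -> np.ndarray:
    """JR_ij = R^x_ij * R^y_ij, with a separate threshold per series (dual epsilon)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    if x.shape != y.shape:
        raise ValueError("series must be aligned to the same timestamps")
    return recurrence_matrix(x, eps_x) * recurrence_matrix(y, eps_y)

def joint_recurrence_rate(jr: np.ndarray) -> float:
    """JRR: fraction of recurrent pairs (main diagonal included for simplicity)."""
    return float(jr.sum()) / jr.size
```

Metrics such as JDET and JLAM are then computed from diagonal and vertical line structures in this same binary matrix.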

Meta-Labeling the Classics (Part 1): Filtering and Sizing RSI Trades

RSI accumulates losses in trending conditions by firing at every threshold crossing regardless of market regime. A Random Forest secondary classifier trained on 12 contextual features — RSI momentum slope, EMA50 trend velocity, ATR-normalised trend stretch, and nine others — filters raw signals and scales position size by classifier confidence on EURUSD H1. Results compare plain RSI, meta-filtered RSI, and bet-sized RSI across a 16-month out-of-sample period with per-trade metrics and drawdown diagnostics.

Covariance Matrix Adaptation Evolution Strategy (CMA-ES)

The article explores one of the most interesting gradient-free optimization algorithms, one that learns the geometry of the objective function. We focus on the classical implementation of CMA-ES with one modification: the normal distribution is replaced with a power distribution. We examine the math behind the algorithm in detail, walk through a practical implementation, and check where CMA-ES is unbeatable and where it should be avoided.

Integrating AI into 3 Smart Money Concepts (SMC): OB, BOS, and FVG

This guide integrates a trained XGBoost model (ONNX) into an SMC EA to evaluate trade setups before execution. The Python pipeline labels historical XAUUSD events and produces a 12-feature representation aligned with the EA. The result is a reproducible method to train, export, and embed the model so the EA can filter OB, FVG, and BOS signals programmatically.

An Introduction to the Study of Fractal Market Structures Using Machine Learning

The article examines financial time series from the perspective of self-similar fractal structures. Since many analogies support treating market quotes as self-similar fractals, we can reason about the forecasting horizons of such structures.

MQL5 Wizard Techniques you should know (Part 91): Using Skip Lists and a Hopfield Network in a Custom Trailing Class

In our next exploration of ideas that can be tested with the MQL5 Wizard, we examine whether Skip Lists and a Hopfield Network can deliver a profit-guarding trailing strategy. As argued before, trailing stop management is often overlooked in favor of entry signals or even money management, yet trailing stops can make all the difference in certain conditions, such as trending markets, so we test this on GBPUSD.

Feature Engineering for ML (Part 4): Implementing Time Features in MQL5

Applying Python session boundaries to MQL5 broker timestamps misclassifies session membership by two to three hours on any non-UTC broker, corrupting session flags across the full backtest history. We implement CTimeFeatures.mqh, containing CRingBuffer and CTimeFeatures, with three EA-facing methods: Initialize (UTC offset capture and frequency gate configuration), Update (log return push to session-conditional ring buffers), and Calculate (cyclical encoding, session flags, and session volatility). The output is a flat double array drop-compatible with Python's get_time_features for sub-hourly, hourly, and daily timeframes.
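The misclassification described above disappears once broker timestamps are shifted to UTC before session flags are computed. This Python sketch shows that conversion plus the sine/cosine encoding of the hour; the 07:00 to 16:00 UTC window used in the example is an illustrative assumption, not the article's session definition, and the helper names are hypothetical.

```python
import math
from datetime import datetime, timedelta
from typing import Tuple

def to_utc(broker_time: datetime, utc_offset_hours: int) -> datetime:
    """Shift a broker-server timestamp to UTC using the captured offset."""
    return broker_time - timedelta(hours=utc_offset_hours)

def cyclical_hour(hour: float) -> Tuple[float, float]:
    """Encode hour of day on the unit circle so 23:00 and 00:00 end up close."""
    angle = 2.0 * math.pi * hour / 24.0
    return math.sin(angle), math.cos(angle)

def in_session(utc_time: datetime, start_hour: int, end_hour: int) -> bool:
    """Session flag on UTC hours; handles sessions that wrap past midnight."""
    h = utc_time.hour
    if start_hour <= end_hour:
        return start_hour <= h < end_hour
    return h >= start_hour or h < end_hour
```

For a broker on UTC+3, a bar stamped 18:00 server time is 15:00 UTC and falls inside a 07:00 to 16:00 UTC window, while testing the raw 18:00 stamp against the same window would wrongly flag it as outside.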

Beyond GARCH (Part III): Building the MMAR and the Verdict

With the multifractal parameters from Part 2 in hand, this article builds the full MMAR process. We construct the multiplicative cascade for trading time, generate Fractional Brownian Motion via Davies-Harte FFT, and combine both into X(t) = B_H[theta(t)]. A 100-path Monte Carlo simulation produces the volatility forecast, which we then pit against GARCH on the same EURUSD M5 data. Does Mandelbrot's fractal architecture outforecast Engle's conditional variance framework? Part 3 of an eight-part series leading to a native MQL5 library and Expert Advisor.

MetaTrader 5 Machine Learning Blueprint (Part 16): Nested CV for Unbiased Evaluation

The article presents a V-in-V nested cross-validation pipeline for financial data that breaks leakage at three decision points: hyperparameter search, calibration, and final evaluation. A temporal three‑zone split isolates an inner walk‑forward search with the 1‑SE rule from an outer walk‑forward or CPCV evaluation, while OOF isotonic calibration is fitted independently. The resulting UnifiedValidationCalibrator delivers unbiased out‑of‑sample scores and well‑calibrated probabilities for deployment.

MQL5 Wizard Techniques you should know (Part 90): Fenwick Tree Money Management with 1D CNN in MQL5

This article implements a Fenwick Tree (Binary Indexed Tree) for volume-aware money management inside an MQL5 Wizard Expert Advisor. We structure cumulative volume in O(log n) and apply four scaling modes—linear, conservative, aggressive, and mean-reversion—optionally gated by a lightweight 1D CNN. Practical tests compare the algorithm alone versus the CNN‑filtered approach to illustrate adaptive lot sizing and risk control under varying volume topologies.
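A Fenwick tree supports both point updates and prefix sums in O(log n) by letting each cell cover a range whose length is the lowest set bit of its index. The Python sketch below shows only the data structure over per-bar volumes; the article's four scaling modes and CNN gate are not reproduced, and the class name is illustrative.

```python
class FenwickTree:
    """Binary Indexed Tree over n values with O(log n) update and prefix sum."""

    def __init__(self, n: int) -> None:
        self.n = n
        self.tree = [0.0] * (n + 1)  # 1-based internal storage

    def add(self, i: int, delta: float) -> None:
        """Add delta to element i (0-based)."""
        i += 1
        while i <= self.n:
            self.tree[i] += delta
            i += i & -i  # move to the next cell that covers index i

    def prefix_sum(self, i: int) -> float:
        """Sum of elements 0..i inclusive (0-based)."""
        i += 1
        total = 0.0
        while i > 0:
            total += self.tree[i]
            i -= i & -i  # strip the lowest set bit
        return total

    def range_sum(self, lo: int, hi: int) -> float:
        """Sum of elements lo..hi inclusive."""
        return self.prefix_sum(hi) - (self.prefix_sum(lo - 1) if lo > 0 else 0.0)
```

For example, after adding volumes 100, 200, 50 and 300 at indices 0 to 3, `range_sum(1, 2)` returns 250 without rescanning the array, which is what makes per-bar cumulative-volume queries cheap.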

Eagle Strategy (ES)

Eagle Strategy is an algorithm that mimics the eagle's two-phase hunting strategy: a global search via Levy flights generated with the Mantegna method, alternating with intensive local exploitation using the firefly algorithm. It offers a mathematically sound way to balance exploration and exploitation and combines two natural phenomena into a single bio-inspired computational method.
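Mantegna's method draws a heavy-tailed Levy step as u / |v|^(1/beta), where v is standard normal and u is normal with a scale that depends on beta. A minimal Python sketch of that step generator follows (the function names are illustrative); how the article scales and applies the step inside Eagle Strategy is not shown here.

```python
import math
import random

def mantegna_sigma(beta: float) -> float:
    """Scale of the numerator Gaussian u in Mantegna's algorithm (0 < beta < 2)."""
    num = math.gamma(1.0 + beta) * math.sin(math.pi * beta / 2.0)
    den = math.gamma((1.0 + beta) / 2.0) * beta * 2.0 ** ((beta - 1.0) / 2.0)
    return (num / den) ** (1.0 / beta)

def levy_step(beta: float, rng: random.Random) -> float:
    """One heavy-tailed Levy-flight step: u / |v|^(1/beta)."""
    u = rng.gauss(0.0, mantegna_sigma(beta))
    v = rng.gauss(0.0, 1.0)
    return u / abs(v) ** (1.0 / beta)
```

A global-search move would then look like `x_new = x + alpha * levy_step(1.5, rng)` for some step scale `alpha` (a hypothetical parameter); most steps are small, but occasional very long jumps let the search escape the current basin.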

Beyond GARCH (Part II): Measuring the Fractal Dimension of Markets

Building on the partition function analysis from Part 1, this article deepens the theoretical foundation before completing the analytical pipeline. We first give a full treatment of the Hurst exponent: what it measures, what it implies about market memory, and why it matters for the MMAR. This is followed by an intuitive exploration of multifractal spectra and what f(α) reveals about volatility heterogeneity. We then move to implementation: extracting the scaling function τ(q), estimating H via R/S analysis, and fitting the multifractal spectrum across four candidate distributions. By the end, we have the complete parameter set needed to construct the MMAR process in Part 3. Part 2 of an eight-part series.
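R/S analysis estimates H as the slope of log mean(R/S) against log window size, where R/S is the range of cumulative deviations divided by the standard deviation within each window. This is a minimal Python sketch applied to returns, not prices; the window sizes are an assumption, and the code is not the article's implementation.

```python
import numpy as np

def rescaled_range(chunk: np.ndarray) -> float:
    """R/S for one window: range of cumulative deviations over the standard deviation."""
    s = chunk.std()
    if s == 0.0:
        return float("nan")
    y = np.cumsum(chunk - chunk.mean())
    return float((y.max() - y.min()) / s)

def hurst_rs(returns, window_sizes) -> float:
    """Estimate H as the OLS slope of log mean(R/S) on log window size."""
    x = np.asarray(returns, dtype=float)
    log_n, log_rs = [], []
    for n in window_sizes:
        values = [rescaled_range(x[i * n:(i + 1) * n]) for i in range(len(x) // n)]
        values = [v for v in values if np.isfinite(v)]
        if values:
            log_n.append(np.log(n))
            log_rs.append(np.log(np.mean(values)))
    slope, _intercept = np.polyfit(log_n, log_rs, 1)
    return float(slope)
```

Classic R/S is biased upward for short windows, so even white-noise returns usually give an estimate somewhat above 0.5; values well above that suggest persistence, values below it suggest anti-persistence.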

Determining Fair Exchange Rates Using PPP and IMF Data

This article builds a purchasing power parity (PPP)-based exchange rate analysis system in Python. The author developed an algorithm with five methods for calculating fair exchange rates from IMF data. It is a practical guide to fundamental currency analysis, economic data processing, and integration with trading systems. The full code is open source.

Building an Object-Oriented ONNX Inference Engine in MQL5

This article shows how to run Python-trained models natively in MetaTrader 5 via the terminal's ONNX functions. We build an MQL5 class that encapsulates session creation, fixes input/output tensor shapes, applies min-max feature normalization to mirror training, and executes OnnxRun once per bar to protect the CPU. The result is a reliable, maintainable inference path for live charts and the Strategy Tester, without sockets or DLLs.

Integrating MQL5 with Data Processing Packages (Part 9): Entropy-Based Adaptive Volatility

This work presents an end-to-end pipeline: collect MetaTrader 5 data, engineer entropy/volatility/trend features, train a PyTorch classifier, and expose predictions through a Flask API. An MQL5 EA posts rolling prices each tick, receives probability and regime, and applies adaptive position sizing and stop distances. The result is a clear recipe for integrating ML inference with MetaTrader 5.

MQL5 Wizard Techniques you should know (Part 89): Using Bitwise Vectorization with Perceptron Classifiers

This article presents a custom MQL5 signal class, CSignalBitwisePerceptron, for ultra-lightweight entry logic. It packs 64 bars into a single uint64 via bitwise vectorization and evaluates them with a perceptron that sums weights only for active bits. A two-gate flow (algorithmic hash map plus neural threshold) minimizes array iteration and heavy math. Readers get a practical template to cut latency and refine entry validation.
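Packing bar directions into one 64-bit word lets a perceptron visit only the set bits instead of iterating over all 64 inputs. The Python sketch below assumes bars ordered oldest first and a bit meaning "this bar closed higher than the previous one"; the article's exact encoding and weights are not given here, and the function names are hypothetical. In MQL5 the mask would be a `ulong`.

```python
from typing import Sequence

def pack_up_bars(closes: Sequence[float]) -> int:
    """Pack up to 64 bar directions into one integer: bit i = 1 if closes[i+1] > closes[i]."""
    mask = 0
    for i in range(min(64, len(closes) - 1)):
        if closes[i + 1] > closes[i]:
            mask |= 1 << i
    return mask

def perceptron_score(mask: int, weights: Sequence[float], bias: float = 0.0) -> float:
    """Sum weights only for set bits, visiting each active bit once."""
    score = bias
    while mask:
        lowest = mask & -mask                      # isolate the lowest set bit
        score += weights[lowest.bit_length() - 1]  # its position selects the weight
        mask ^= lowest                             # clear it and continue
    return score
```

A signal would then fire when `perceptron_score(mask, weights)` exceeds a chosen threshold, and the mask itself can serve as a hash-map key for the algorithmic gate.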

Beyond GARCH (Part I): Mandelbrot's MMAR versus Engle's GARCH

This article starts the MMAR pipeline on EURUSD M5 data. We load market data via the MetaTrader5 Python API and run partition-function analysis with non-overlapping intervals to test for multifractal scaling. The result is an evidence-based decision on fractality, a prerequisite for building MMAR and for choosing whether to proceed beyond GARCH.
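The partition function sums |increment|^q over non-overlapping intervals of length dt, and the slope of log S_q(dt) against log dt estimates the scaling function tau(q). Under monofractal scaling tau(q) = qH - 1 is linear in q, so visible concavity is evidence of multifractality. A minimal NumPy sketch follows, assuming log prices as input; the function names are illustrative.

```python
import numpy as np

def partition_function(log_prices, q: float, dt: int) -> float:
    """S_q(dt): sum of |increment|^q over non-overlapping intervals of length dt."""
    x = np.asarray(log_prices, dtype=float)
    n = (len(x) - 1) // dt
    starts = dt * np.arange(n)
    increments = x[starts + dt] - x[starts]
    return float(np.sum(np.abs(increments) ** q))

def scaling_exponent(log_prices, q: float, dts) -> float:
    """tau(q) estimated as the OLS slope of log S_q(dt) on log dt."""
    log_s = [np.log(partition_function(log_prices, q, dt)) for dt in dts]
    slope, _intercept = np.polyfit(np.log(dts), log_s, 1)
    return float(slope)
```

As a sanity check, a perfectly linear series has H = 1, so tau(2) should come out at 1; a random walk (H = 0.5) should give tau(2) close to 0.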

Downloading International Monetary Fund Data Using Python

Downloading International Monetary Fund data in Python: mining IMF data for use in macroeconomic currency strategies. How can macroeconomics help both manual and algorithmic traders?

Biogeography-Based Optimization (BBO)

Biogeography-Based Optimization (BBO) is an elegant global optimization method inspired by natural processes of species migration between islands within archipelagos. The algorithm is based on a simple yet powerful idea: high-quality solutions actively share their characteristics, while low-quality ones actively adopt new features, creating a natural flow of information from the best solutions to the worst. A unique adaptive mutation operator provides an excellent balance between exploration and exploitation. BBO demonstrates high efficiency on a variety of tasks.

From Matrices to Models: How to Build an ML Pipeline in MQL5 and Export It to ONNX

The article describes how to arrange a coordinated ML pipeline in MetaTrader 5 with a clear separation of roles: Python trains the model and exports it to ONNX, while MQL5 reproduces normalization and PCA using matrix/vector types and performs inference. This keeps the model's inputs stable and verifiable, and the MetaTrader 5 Strategy Tester provides metrics for analyzing system behavior.

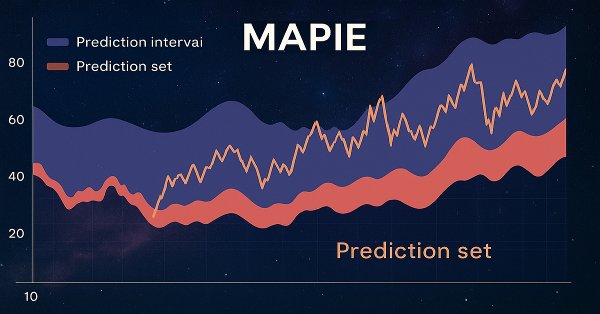

Exploring Conformal Forecasting of Financial Time Series

In this article, we consider conformal prediction and the MAPIE library that implements it. This is one of the more recent approaches in machine learning, and it lets us focus on risk management for a wide range of existing models. Conformal prediction is not, by itself, a way to find patterns in data. It only quantifies how confident existing models are on specific examples and allows us to filter for reliable predictions.

MetaTrader 5 Machine Learning Blueprint (Part 15): How to Calibrate Profit-Taking and Stop-Loss Targets from Synthetic Data

This article applies the Optimal Trading Rule from AFML Chapter 13 to set profit targets and stop-losses without in-sample calibration. We model post-entry P&L with a discrete Ornstein–Uhlenbeck process, run a 100,000-path search, and implement it in pure Python, with multiprocessing, and as a Numba @njit parallel kernel (242× faster). The result is an optimal (PT, SL) pair under three forecast specifications, constrained by the prop-firm daily loss limit.
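The search can be sketched as follows: simulate P&L paths that start at zero and mean-revert toward the forecast with phi = 2^(-1/half_life), exit each path at the first profit-taking or stop-loss touch (or at a maximum holding period), and score each (PT, SL) pair by the Sharpe ratio of exit P&L. This Python sketch is vectorized with NumPy rather than Numba, uses far fewer paths than the article's 100,000 by default in the example, and omits the daily loss constraint; all names and defaults are illustrative assumptions.

```python
import numpy as np

def simulate_sharpe(forecast: float, half_life: float, sigma: float,
                    pt: float, sl: float, n_paths: int = 10_000,
                    max_hp: int = 100, seed: int = 0) -> float:
    """Sharpe ratio of exit P&L for one (profit-taking, stop-loss) pair."""
    phi = 2.0 ** (-1.0 / half_life)  # OU persistence implied by the half-life
    rng = np.random.default_rng(seed)
    pnl = np.zeros(n_paths)
    exit_pnl = np.zeros(n_paths)
    active = np.ones(n_paths, dtype=bool)
    for _ in range(max_hp):
        shocks = rng.standard_normal(n_paths)
        # Discrete OU step, applied only to paths that have not exited yet.
        pnl = np.where(active, (1.0 - phi) * forecast + phi * pnl + sigma * shocks, pnl)
        hit = active & ((pnl >= pt) | (pnl <= -sl))
        exit_pnl[hit] = pnl[hit]
        active &= ~hit
    exit_pnl[active] = pnl[active]  # vertical barrier: close at max holding period
    return float(exit_pnl.mean() / exit_pnl.std())

def best_rule(forecast, half_life, sigma, levels, **kwargs):
    """Grid search returning (sharpe, pt, sl) with the highest Sharpe ratio."""
    return max((simulate_sharpe(forecast, half_life, sigma, pt, sl, **kwargs), pt, sl)
               for pt in levels for sl in levels)
```

Reusing the same seed for every grid point means each (PT, SL) pair is evaluated on identical shock paths, so differences in Sharpe come from the rule rather than from sampling noise.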

Market Microstructure in MQL5: Robust Foundation (Part 1)

This article builds the foundation layer of a twelve-part MQL5 market microstructure toolkit. It implements guarded math helpers (SafeDivide, SafeLog, SafeSqrt, SafeExp, SafeTanh), robust data validation (ValidateSymbolV2, SafeCopyClose), trimmed statistical estimators (robust mean and variance), a linear regression slope, shared structs, and an FFT. You compile a single include file that hardens indicators and Expert Advisors against silent numerical failures and standardizes data flow for later parts.

MQL5 Wizard Techniques you should know (Part 88): Using Blooms Filter with a Custom Trailing Class

Our next focus in this series on ideas that can be rapidly prototyped with the MQL5 Wizard is a custom trailing class that uses a Bloom filter. Trailing stop systems are an optional but very resourceful part of any trading system, and we want to explore them further in this series, beyond the traditional entry signals.
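A Bloom filter is a bit array plus k hash functions: adding an item sets k bits, and a lookup reports "possibly present" only if all k bits are set, so it can give false positives but never false negatives. Below is a minimal Python sketch; deriving the k positions from salted SHA-256 digests and the key format in the example are assumptions, not the article's design.

```python
import hashlib
from typing import Iterator

class BloomFilter:
    """Probabilistic set: no false negatives, tunable false-positive rate."""

    def __init__(self, m_bits: int, k_hashes: int) -> None:
        self.m = m_bits
        self.k = k_hashes
        self.bits = 0  # Python int used as an m-bit array

    def _positions(self, item: str) -> Iterator[int]:
        # Derive k bit positions by salting SHA-256 with the hash index.
        for i in range(self.k):
            digest = hashlib.sha256(f"{i}:{item}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.m

    def add(self, item: str) -> None:
        for pos in self._positions(item):
            self.bits |= 1 << pos

    def might_contain(self, item: str) -> bool:
        return all((self.bits >> pos) & 1 for pos in self._positions(item))
```

In a trailing context, one hypothetical use is to key the filter by a rounded stop level such as `"EURUSD:1.0850"` and skip modifications to levels that were probably already applied, accepting an occasional skipped update as the cost of constant memory.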

Feature Engineering for ML (Part 2): Implementing Fixed-Width Fractional Differentiation in MQL5

This article delivers a production-grade MQL5 implementation of fixed-width fractional differentiation for live MetaTrader 5 feeds. We introduce a header-only CFFDEngine that precomputes weights without a fixed cap, performs O(width) per-bar updates, and avoids per-tick allocations. The FFD.mq5 indicator supports all ENUM_APPLIED_PRICE types and prev_calculated optimization. Validation scripts confirm numerical equivalence with the standard Python frac_diff_ffd pipeline.
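Fixed-width fractional differentiation uses the weight recursion w_0 = 1, w_k = -w_{k-1}(d - k + 1)/k, truncated once |w_k| falls below a threshold, so the window width follows from d and the threshold rather than from a hard cap. Each output is then a dot product over the last `width` values. This Python sketch mirrors that recurrence; the names `ffd_weights` and `ffd_apply` are illustrative and are not the article's CFFDEngine API.

```python
import numpy as np

def ffd_weights(d: float, threshold: float = 1e-5) -> np.ndarray:
    """Weights w_0..w_{width-1}, stopping when the next |w_k| drops below threshold."""
    w = [1.0]
    k = 1
    while True:
        w_k = -w[-1] * (d - k + 1) / k
        if abs(w_k) < threshold:
            break
        w.append(w_k)
        k += 1
    return np.array(w)

def ffd_apply(series, d: float, threshold: float = 1e-5) -> np.ndarray:
    """O(width) work per bar: out[t] = sum_k w_k * x[t - k]; NaN until the window fills."""
    w = ffd_weights(d, threshold)
    width = len(w)
    x = np.asarray(series, dtype=float)
    out = np.full(len(x), np.nan)
    for t in range(width - 1, len(x)):
        out[t] = np.dot(w, x[t - width + 1:t + 1][::-1])  # newest value gets w_0
    return out
```

With d = 1 the weights reduce to [1, -1] and the transform becomes an ordinary first difference; fractional d between 0 and 1 keeps a long, decaying memory of past values.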

Python + MetaTrader 5: Fast Research Framework for Data, Features, and Prototypes

The article demonstrates how Python and MetaTrader 5 integration combines research flexibility and trade execution into a single workflow. Python is used for data analysis, feature selection and model training, while MetaTrader 5 is used for testing and trading automation. This approach simplifies the transfer of solutions into practice, increases reproducibility, and makes the development of trading systems faster and more structured.

CFTC Data Mining in Python and Building an AI Model

Let's try mining CFTC data: downloading COT and TFF reports via Python, combining them with MetaTrader 5 quotes and an AI model, and getting forecasts. What are COT reports in the Forex market? How can COT and TFF reports be used for forecasting?

Mining Central Bank Balance Sheet Data to Get a Picture of Global Liquidity

Mining central bank balance sheet data provides a picture of global liquidity in the Forex market and key currencies. We combine data from the Fed, ECB, BOJ and PBoC into a composite index and use machine learning to uncover hidden patterns. This approach turns raw data into real trading signals by combining fundamental and technical analysis.

MQL5 Wizard Techniques you should know (Part 87): Volatility-Scaled Money Management with Monotonic Queue in MQL5

This article presents a custom MQL5 money management class that adapts position sizing to real-time volatility using a monotonic queue for O(N) sliding-window extremes. The class applies inverse volatility scaling and optionally validates risk with an RBF network. We show implementation details in the Optimize method and compare results with the inbuilt Size-Optimized class to assess latency and risk control benefits.
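A monotonic queue keeps only indices that could still become the window maximum, so every value is pushed and popped at most once and all sliding-window extremes cost O(N) in total. The Python sketch below uses the sliding high-low range as the volatility measure and scales the lot inversely to it; that volatility definition and the `inverse_vol_lot` helper are assumptions, and the optional RBF validation is not shown.

```python
from collections import deque
from typing import List, Sequence

def sliding_max(values: Sequence[float], window: int) -> List[float]:
    """Max of each full window in O(N) total, using a deque of candidate indices."""
    dq: deque = deque()  # indices whose values are strictly decreasing front to back
    out: List[float] = []
    for i, v in enumerate(values):
        # Drop candidates that can never be the maximum again.
        while dq and values[dq[-1]] <= v:
            dq.pop()
        dq.append(i)
        # Evict the front index once it slides out of the window.
        if dq[0] <= i - window:
            dq.popleft()
        if i >= window - 1:
            out.append(values[dq[0]])
    return out

def sliding_min(values: Sequence[float], window: int) -> List[float]:
    """Window minima via the same queue on negated values."""
    return [-m for m in sliding_max([-v for v in values], window)]

def inverse_vol_lot(base_lot: float, target_range: float, current_range: float,
                    min_lot: float, max_lot: float) -> float:
    """Scale the lot by target/current volatility and clamp to broker limits."""
    if current_range <= 0:
        return min_lot
    return max(min_lot, min(max_lot, base_lot * target_range / current_range))
```

When the current range is twice the target, the lot is halved, so the money at risk per unit of volatility stays roughly constant.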

CAPM Model Indicator for the Forex Market

An adaptation of the classical CAPM model for the Forex currency market in MQL5. The indicator calculates expected return and risk premium based on historical volatility. Its values rise at both peaks and bottoms, reflecting the fundamental principles of pricing. The article covers practical application to counter-trend and trend-following strategies, taking into account the real-time dynamics of the risk-reward ratio, and includes the mathematical apparatus and technical implementation.

Neural Networks in Trading: Detecting Anomalies in the Frequency Domain (CATCH)

The CATCH framework combines the Fourier transform with frequency patching to accurately identify market anomalies that traditional methods miss. Let us examine how this approach reveals hidden patterns in financial data.

Neural Networks in Trading: Adaptive Detection of Market Anomalies (Final Part)

We continue building the algorithms that form the basis of the DADA framework, an advanced tool for detecting anomalies in time series. This approach effectively distinguishes random fluctuations from significant deviations. Unlike classical methods, DADA dynamically adapts to different data types, choosing the optimal compression level in each specific case.

Deterministic Oscillatory Search (DOS)

The Deterministic Oscillatory Search (DOS) algorithm is an innovative global optimization method that combines the advantages of gradient and swarm algorithms without using random numbers. Its fitness oscillation and slope mechanism lets DOS explore complex search spaces deterministically.

MetaTrader 5 Machine Learning Blueprint (Part 13): Implementing Bet Sizing in MQL5

We build a production MQL5 bet‑sizing toolkit: utilities, snippets, and user‑level functions that mirror the Python originals. The methods cover probability‑to‑size mapping with overlap correction, dynamic forecast‑price sizing (calibrated sigmoid/power with limit price), occupancy‑based budgeting, and mixture‑model reserve sizing (EF3M). The result is a signed position in [−1, 1] plus diagnostics you can plug directly into order logic.
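The probability-to-size mapping from AFML Chapter 10 converts a predicted probability p over n classes into z = (p - 1/n) / sqrt(p(1 - p)) and then into a signed size side * (2Φ(z) - 1), where Φ is the standard normal CDF. This Python sketch shows that mapping plus discretization; the simple averaging of open bets is a stand-in for overlap correction, and all function names are hypothetical rather than the article's MQL5 API.

```python
import math
from typing import Sequence

def norm_cdf(z: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def bet_size_from_probability(prob: float, side: int, num_classes: int = 2,
                              eps: float = 1e-9) -> float:
    """Signed size in [-1, 1]: side * (2 * Phi(z) - 1), z = (p - 1/n) / sqrt(p(1-p))."""
    p = min(max(prob, eps), 1.0 - eps)  # avoid division by zero at p = 0 or 1
    z = (p - 1.0 / num_classes) / math.sqrt(p * (1.0 - p))
    return side * (2.0 * norm_cdf(z) - 1.0)

def discretize(size: float, step: float) -> float:
    """Round to a grid to avoid tiny order adjustments; keep within [-1, 1]."""
    return max(-1.0, min(1.0, round(size / step) * step))

def average_active(sizes: Sequence[float]) -> float:
    """Simple overlap handling: average the sizes of all bets still open."""
    return sum(sizes) / len(sizes) if sizes else 0.0
```

With two classes, p = 0.5 maps to a size of 0 and p = 0.9 maps to roughly 0.82, which discretizes to 0.8 on a 0.1 grid.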

Self-Learning Expert Advisor with a Neural Network Based on a Markov State-Transition Matrix

A self-training EA with a neural network based on a state matrix. We combine Markov chains with a multilayer perceptron (MLP) developed using the ALGLIB MQL5 library. How can Markov chains and neural networks be combined for Forex forecasting?