Hybridization of population algorithms. Sequential and parallel structures

Here we will dive into the world of hybridization of optimization algorithms by looking at three key types: strategy mixing, sequential and parallel hybridization. We will conduct a series of experiments combining and testing relevant optimization algorithms.

Neural Networks in Trading: Hierarchical Dual-Tower Transformer (Final Part)

We continue to build the Hidformer hierarchical dual-tower transformer model designed for analyzing and forecasting complex multivariate time series. In this article, we will bring the work we started earlier to its logical conclusion — we will test the model on real historical data.

Category Theory in MQL5 (Part 12): Orders

This article which is part of a series that follows Category Theory implementation of Graphs in MQL5, delves in Orders. We examine how concepts of Order-Theory can support monoid sets in informing trade decisions by considering two major ordering types.

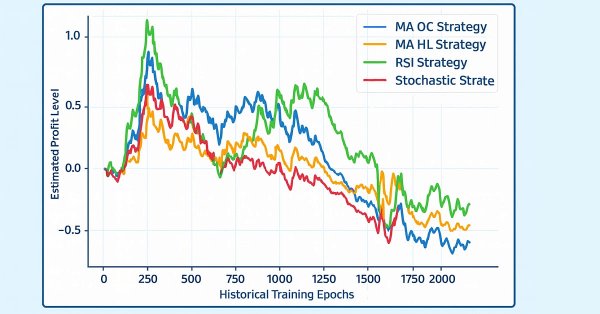

Overcoming The Limitation of Machine Learning (Part 7): Automatic Strategy Selection

This article demonstrates how to automatically identify potentially profitable trading strategies using MetaTrader 5. White-box solutions, powered by unsupervised matrix factorization, are faster to configure, more interpretable, and provide clear guidance on which strategies to retain. Black-box solutions, while more time-consuming, are better suited for complex market conditions that white-box approaches may not capture. Join us as we discuss how our trading strategies can help us carefully identify profitable strategies under any circumstance.

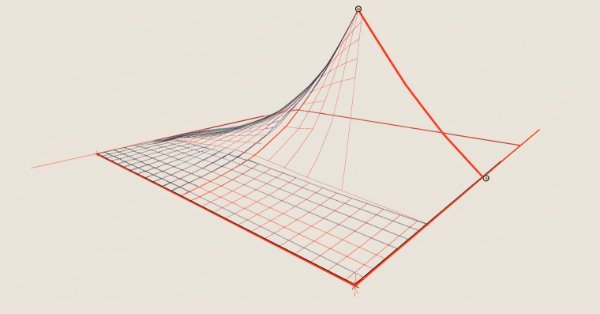

Neural Network in Practice: Least Squares

In this article, we'll look at a few ideas, including how mathematical formulas are more complex in appearance than when implemented in code. In addition, we will consider how to set up a chart quadrant, as well as one interesting problem that may arise in your MQL5 code. Although, to be honest, I still don't quite understand how to explain it. Anyway, I'll show you how to fix it in code.

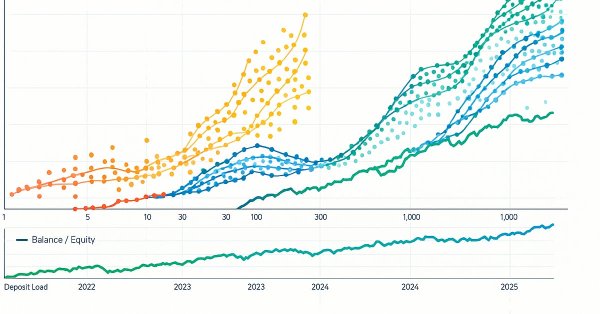

Overcoming The Limitation of Machine Learning (Part 2): Lack of Reproducibility

The article explores why trading results can differ significantly between brokers, even when using the same strategy and financial symbol, due to decentralized pricing and data discrepancies. The piece helps MQL5 developers understand why their products may receive mixed reviews on the MQL5 Marketplace, and urges developers to tailor their approaches to specific brokers to ensure transparent and reproducible outcomes. This could grow to become an important domain-bound best practice that will serve our community well if the practice were to be widely adopted.

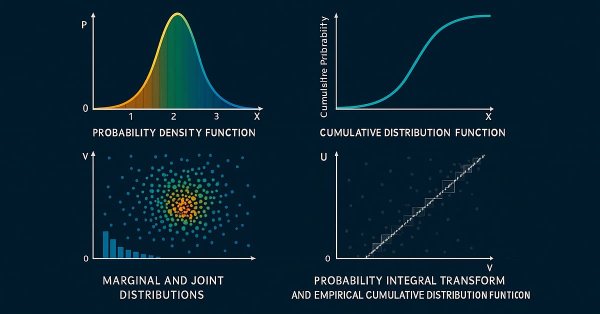

MetaTrader 5 Machine Learning Blueprint (Part 10): Bet Sizing for Financial Machine Learning

Fixed fractions and raw probabilities misallocate risk under overlapping labels and induce overtrading. This article delivers four AFML-compliant sizers: probability-based (z-score → CDF, active-bet averaging, discretization), forecast-price (sigmoid/power with w calibration and limit price), budget-constrained (direction-only), and reserve (mixture-CDF via EF3M). You get a signed, bounded position series with documented conditions of use.

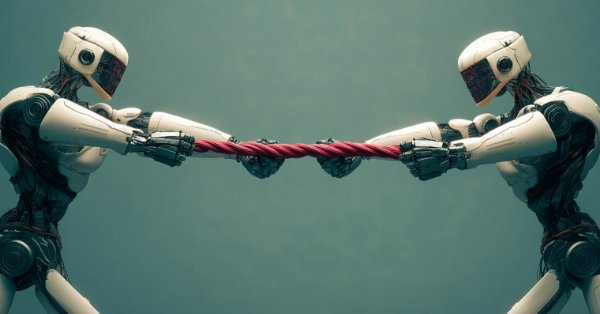

Generative Adversarial Networks (GANs) for Synthetic Data in Financial Modeling (Part 2): Creating Synthetic Symbol for Testing

In this article we are creating a synthetic symbol using a Generative Adversarial Network (GAN) involves generating realistic Financial data that mimics the behavior of actual market instruments, such as EURUSD. The GAN model learns patterns and volatility from historical market data and creates synthetic price data with similar characteristics.

African Buffalo Optimization (ABO)

The article presents the African Buffalo Optimization (ABO) algorithm, a metaheuristic approach developed in 2015 based on the unique behavior of these animals. The article describes in detail the stages of the algorithm implementation and its efficiency in finding solutions to complex problems, which makes it a valuable tool in the field of optimization.

MQL5 Wizard Techniques you should know (Part 68): Using Patterns of TRIX and the Williams Percent Range with a Cosine Kernel Network

We follow up our last article, where we introduced the indicator pair of TRIX and Williams Percent Range, by considering how this indicator pairing could be extended with Machine Learning. TRIX and William’s Percent are a trend and support/ resistance complimentary pairing. Our machine learning approach uses a convolution neural network that engages the cosine kernel in its architecture when fine-tuning the forecasts of this indicator pairing. As always, this is done in a custom signal class file that works with the MQL5 wizard to assemble an Expert Advisor.

Brain Storm Optimization algorithm (Part I): Clustering

In this article, we will look at an innovative optimization method called BSO (Brain Storm Optimization) inspired by a natural phenomenon called "brainstorming". We will also discuss a new approach to solving multimodal optimization problems the BSO method applies. It allows finding multiple optimal solutions without the need to pre-determine the number of subpopulations. We will also consider the K-Means and K-Means++ clustering methods.

Applying Localized Feature Selection in Python and MQL5

This article explores a feature selection algorithm introduced in the paper 'Local Feature Selection for Data Classification' by Narges Armanfard et al. The algorithm is implemented in Python to build binary classifier models that can be integrated with MetaTrader 5 applications for inference.

Population optimization algorithms: Whale Optimization Algorithm (WOA)

Whale Optimization Algorithm (WOA) is a metaheuristic algorithm inspired by the behavior and hunting strategies of humpback whales. The main idea of WOA is to mimic the so-called "bubble-net" feeding method, in which whales create bubbles around prey and then attack it in a spiral motion.

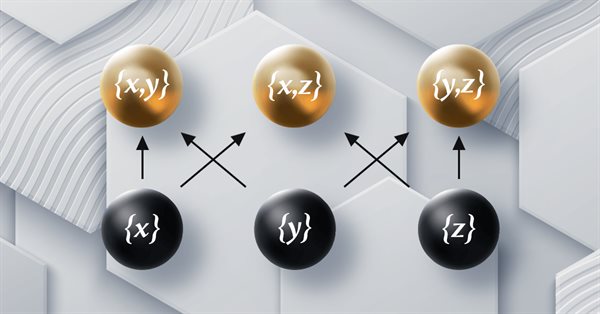

Mutual information as criteria for Stepwise Feature Selection

In this article, we present an MQL5 implementation of Stepwise Feature Selection based on the mutual information between an optimal predictor set and a target variable.

Ensemble methods to enhance classification tasks in MQL5

In this article, we present the implementation of several ensemble classifiers in MQL5 and discuss their efficacy in varying situations.

MQL5 Wizard Techniques you should know (Part 47): Reinforcement Learning with Temporal Difference

Temporal Difference is another algorithm in reinforcement learning that updates Q-Values basing on the difference between predicted and actual rewards during agent training. It specifically dwells on updating Q-Values without minding their state-action pairing. We therefore look to see how to apply this, as we have with previous articles, in a wizard assembled Expert Advisor.

Analyzing weather impact on currencies of agricultural countries using Python

What is the relationship between weather and Forex? Classical economic theory has long ignored the influence of such factors as weather on market behavior. But everything has changed. Let's try to find connections between the weather conditions and the position of agricultural currencies on the market.

MQL5 Wizard Techniques you should know (14): Multi Objective Timeseries Forecasting with STF

Spatial Temporal Fusion which is using both ‘space’ and time metrics in modelling data is primarily useful in remote-sensing, and a host of other visual based activities in gaining a better understanding of our surroundings. Thanks to a published paper, we take a novel approach in using it by examining its potential to traders.

Neural Networks in Trading: Reducing Memory Consumption with Adam-mini Optimization

One of the directions for increasing the efficiency of the model training and convergence process is the improvement of optimization methods. Adam-mini is an adaptive optimization method designed to improve on the basic Adam algorithm.

Artificial Tribe Algorithm (ATA)

The article provides a detailed discussion of the key components and innovations of the ATA optimization algorithm, which is an evolutionary method with a unique dual behavior system that adapts depending on the situation. ATA combines individual and social learning while using crossover for explorations and migration to find solutions when stuck in local optima.

Role of random number generator quality in the efficiency of optimization algorithms

In this article, we will look at the Mersenne Twister random number generator and compare it with the standard one in MQL5. We will also find out the influence of the random number generator quality on the results of optimization algorithms.

Building Volatility models in MQL5 (Part I): The Initial Implementation

In this article, we present an MQL5 library for modeling volatility, designed to function similarly to Python's arch package. The library currently supports the specification of common conditional mean (HAR, AR, Constant Mean, Zero Mean) and conditional volatility (Constant Variance, ARCH, GARCH) models.

Example of Causality Network Analysis (CNA) and Vector Auto-Regression Model for Market Event Prediction

This article presents a comprehensive guide to implementing a sophisticated trading system using Causality Network Analysis (CNA) and Vector Autoregression (VAR) in MQL5. It covers the theoretical background of these methods, provides detailed explanations of key functions in the trading algorithm, and includes example code for implementation.

MQL5 Wizard Techniques you should know (Part 54): Reinforcement Learning with hybrid SAC and Tensors

Soft Actor Critic is a Reinforcement Learning algorithm that we looked at in a previous article, where we also introduced python and ONNX to these series as efficient approaches to training networks. We revisit the algorithm with the aim of exploiting tensors, computational graphs that are often exploited in Python.

Population optimization algorithms: Evolution of Social Groups (ESG)

We will consider the principle of constructing multi-population algorithms. As an example of this type of algorithm, we will have a look at the new custom algorithm - Evolution of Social Groups (ESG). We will analyze the basic concepts, population interaction mechanisms and advantages of this algorithm, as well as examine its performance in optimization problems.

Population optimization algorithms: Charged System Search (CSS) algorithm

In this article, we will consider another optimization algorithm inspired by inanimate nature - Charged System Search (CSS) algorithm. The purpose of this article is to present a new optimization algorithm based on the principles of physics and mechanics.

Neuroboids Optimization Algorithm 2 (NOA2)

The new proprietary optimization algorithm NOA2 (Neuroboids Optimization Algorithm 2) combines the principles of swarm intelligence with neural control. NOA2 combines the mechanics of a neuroboid swarm with an adaptive neural system that allows agents to self-correct their behavior while searching for the optimum. The algorithm is under active development and demonstrates potential for solving complex optimization problems.

Central Force Optimization (CFO) algorithm

The article presents the Central Force Optimization (CFO) algorithm inspired by the laws of gravity. It explores how principles of physical attraction can solve optimization problems where "heavier" solutions attract less successful counterparts.

Neural Networks in Trading: Multi-Task Learning Based on the ResNeXt Model (Final Part)

We continue exploring a multi-task learning framework based on ResNeXt, which is characterized by modularity, high computational efficiency, and the ability to identify stable patterns in data. Using a single encoder and specialized "heads" reduces the risk of model overfitting and improves the quality of forecasts.

MQL5 Wizard Techniques you should know (Part 20): Symbolic Regression

Symbolic Regression is a form of regression that starts with minimal to no assumptions on what the underlying model that maps the sets of data under study would look like. Even though it can be implemented by Bayesian Methods or Neural Networks, we look at how an implementation with Genetic Algorithms can help customize an expert signal class usable in the MQL5 wizard.

Battle Royale Optimizer (BRO)

The article explores the Battle Royale Optimizer algorithm — a metaheuristic in which solutions compete with their nearest neighbors, accumulate “damage,” are replaced when a threshold is exceeded, and periodically shrink the search space around the current best solution. It presents both pseudocode and an MQL5 implementation of the CAOBRO class, including neighbor search, movement toward the best solution, and an adaptive delta interval. Test results on the Hilly, Forest, and Megacity functions highlight the strengths and limitations of the approach. The reader is provided with a ready-to-use foundation for experimentation and tuning key parameters such as popSize and maxDamage.

Bivariate Copulae in MQL5 (Part 1): Implementing Gaussian and Student's t-Copulae for Dependency Modeling

This is the first part of an article series presenting the implementation of bivariate copulae in MQL5. This article presents code implementing Gaussian and Student's t-copulae. It also delves into the fundamentals of statistical copulae and related topics. The code is based on the Arbitragelab Python package by Hudson and Thames.

Overcoming The Limitation of Machine Learning (Part 4): Overcoming Irreducible Error Using Multiple Forecast Horizons

Machine learning is often viewed through statistical or linear algebraic lenses, but this article emphasizes a geometric perspective of model predictions. It demonstrates that models do not truly approximate the target but rather map it onto a new coordinate system, creating an inherent misalignment that results in irreducible error. The article proposes that multi-step predictions, comparing the model’s forecasts across different horizons, offer a more effective approach than direct comparisons with the target. By applying this method to a trading model, the article demonstrates significant improvements in profitability and accuracy without changing the underlying model.

CFTC Data Mining in Python and Building an AI Model

Let's try mining CFTC data, downloading COT and TFF reports via Python, connecting all this with MetaTrader 5 quotes and an AI model, and get forecasts. What are COT reports in the Forex market? How to use COT and TFF reports for forecasting?

Integrating Computer Vision into Trading in MQL5 (Part 2): Extending the Architecture to 2D RGB Image Analysis

Computer vision for trading: how it works and how to develop it step by step. We create an algorithm for recognition of RGB images of price charts using the attention mechanism and a bidirectional LSTM layer. As a result, we obtain a working model for forecasting the EURUSD price with the accuracy of up to 55% in the validation section.

Neural Networks in Trading: Detecting Anomalies in the Frequency Domain (CATCH)

The CATCH framework combines Fourier transform and frequency patching to accurately identify market anomalies beyond the reach of traditional methods. Let us examine how this approach reveals hidden patterns in financial data.

MetaTrader 5 Machine Learning Blueprint (Part 13): Implementing Bet Sizing in MQL5

We build a production MQL5 bet‑sizing toolkit: utilities, snippets, and user‑level functions that mirror the Python originals. The methods cover probability‑to‑size mapping with overlap correction, dynamic forecast‑price sizing (calibrated sigmoid/power with limit price), occupancy‑based budgeting, and mixture‑model reserve sizing (EF3M). The result is a signed [−1, ..., 1] position plus diagnostics you can plug directly into order logic.

Bacterial Chemotaxis Optimization (BCO)

The article presents the original version of the Bacterial Chemotaxis Optimization (BCO) algorithm and its modified version. We will take a closer look at all the differences, with a special focus on the new version of BCOm, which simplifies the bacterial movement mechanism, reduces the dependence on positional history, and uses simpler math than the computationally heavy original version. We will also conduct the tests and summarize the results.

Population optimization algorithms: Artificial Multi-Social Search Objects (MSO)

This is a continuation of the previous article considering the idea of social groups. The article explores the evolution of social groups using movement and memory algorithms. The results will help to understand the evolution of social systems and apply them in optimization and search for solutions.

MQL5 Wizard Techniques you should know (Part 60): Inference Learning (Wasserstein-VAE) with Moving Average and Stochastic Oscillator Patterns

We wrap our look into the complementary pairing of the MA & Stochastic oscillator by examining what role inference-learning can play in a post supervised-learning & reinforcement-learning situation. There are clearly a multitude of ways one can choose to go about inference learning in this case, our approach, however, is to use variational auto encoders. We explore this in python before exporting our trained model by ONNX for use in a wizard assembled Expert Advisor in MetaTrader.