MQL5 Wizard Techniques you should know (Part 22): Conditional GANs

Generative Adversarial Networks are a pairing of Neural Networks that train off of each other for more accurate results. We adopt the conditional type of these networks as we look to possible application in forecasting Financial time series within an Expert Signal Class.

Neural networks made easy (Part 52): Research with optimism and distribution correction

As the model is trained based on the experience reproduction buffer, the current Actor policy moves further and further away from the stored examples, which reduces the efficiency of training the model as a whole. In this article, we will look at the algorithm of improving the efficiency of using samples in reinforcement learning algorithms.

Data Science and ML (Part 35): NumPy in MQL5 – The Art of Making Complex Algorithms with Less Code

NumPy library is powering almost all the machine learning algorithms to the core in Python programming language, In this article we are going to implement a similar module which has a collection of all the complex code to aid us in building sophisticated models and algorithms of any kind.

MQL5 Wizard Techniques you should know (Part 79): Using Gator Oscillator and Accumulation/Distribution Oscillator with Supervised Learning

In the last piece, we concluded our look at the pairing of the gator oscillator and the accumulation/distribution oscillator when used in their typical setting of the raw signals they generate. These two indicators are complimentary as trend and volume indicators, respectively. We now follow up that piece, by examining the effect that supervised learning can have on enhancing some of the feature patterns we had reviewed. Our supervised learning approach is a CNN that engages with kernel regression and dot product similarity to size its kernels and channels. As always, we do this in a custom signal class file that works with the MQL5 wizard to assemble an Expert Advisor.

Population optimization algorithms: Changing shape, shifting probability distributions and testing on Smart Cephalopod (SC)

The article examines the impact of changing the shape of probability distributions on the performance of optimization algorithms. We will conduct experiments using the Smart Cephalopod (SC) test algorithm to evaluate the efficiency of various probability distributions in the context of optimization problems.

Comet Tail Algorithm (CTA)

In this article, we will look at the Comet Tail Optimization Algorithm (CTA), which draws inspiration from unique space objects - comets and their impressive tails that form when approaching the Sun. The algorithm is based on the concept of the motion of comets and their tails, and is designed to find optimal solutions in optimization problems.

Neural Networks Made Easy (Part 91): Frequency Domain Forecasting (FreDF)

We continue to explore the analysis and forecasting of time series in the frequency domain. In this article, we will get acquainted with a new method to forecast data in the frequency domain, which can be added to many of the algorithms we have studied previously.

MQL5 Wizard Techniques you should know (Part 11): Number Walls

Number Walls are a variant of Linear Shift Back Registers that prescreen sequences for predictability by checking for convergence. We look at how these ideas could be of use in MQL5.

Neural Networks in Trading: Integrating Chaos Theory into Time Series Forecasting (Final Part)

We continue to integrate methods proposed by the authors of the Attraos framework into trading models. Let me remind you that this framework uses concepts of chaos theory to solve time series forecasting problems, interpreting them as projections of multidimensional chaotic dynamic systems.

Neural Networks in Trading: Hierarchical Feature Learning for Point Clouds

We continue to study algorithms for extracting features from a point cloud. In this article, we will get acquainted with the mechanisms for increasing the efficiency of the PointNet method.

Population optimization algorithms: Intelligent Water Drops (IWD) algorithm

The article considers an interesting algorithm derived from inanimate nature - intelligent water drops (IWD) simulating the process of river bed formation. The ideas of this algorithm made it possible to significantly improve the previous leader of the rating - SDS. As usual, the new leader (modified SDSm) can be found in the attachment.

Chaos Game Optimization (CGO)

The article presents a new metaheuristic algorithm, Chaos Game Optimization (CGO), which demonstrates a unique ability to maintain high efficiency when dealing with high-dimensional problems. Unlike most optimization algorithms, CGO not only does not lose, but sometimes even increases performance when scaling a problem, which is its key feature.

Data Science and ML (Part 39): News + Artificial Intelligence, Would You Bet on it?

News drives the financial markets, especially major releases like Non-Farm Payrolls (NFPs). We've all witnessed how a single headline can trigger sharp price movements. In this article, we dive into the powerful intersection of news data and Artificial Intelligence.

Feature Engineering With Python And MQL5 (Part II): Angle Of Price

There are many posts in the MQL5 Forum asking for help calculating the slope of price changes. This article will demonstrate one possible way of calculating the angle formed by the changes in price in any market you wish to trade. Additionally, we will answer if engineering this new feature is worth the extra effort and time invested. We will explore if the slope of the price can improve any of our AI model's accuracy when forecasting the USDZAR pair on the M1.

Coral Reefs Optimization (CRO)

The article presents a comprehensive analysis of the Coral Reef Optimization (CRO) algorithm, a metaheuristic method inspired by the biological processes of coral reef formation and development. The algorithm models key aspects of coral evolution: broadcast spawning, brooding, larval settlement, asexual reproduction, and competition for limited reef space. Particular attention is paid to the improved version of the algorithm.

Dialectic Search (DA)

The article introduces the dialectical algorithm (DA), a new global optimization method inspired by the philosophical concept of dialectics. The algorithm exploits a unique division of the population into speculative and practical thinkers. Testing shows impressive performance of up to 98% on low-dimensional problems and overall efficiency of 57.95%. The article explains these metrics and presents a detailed description of the algorithm and the results of experiments on different types of functions.

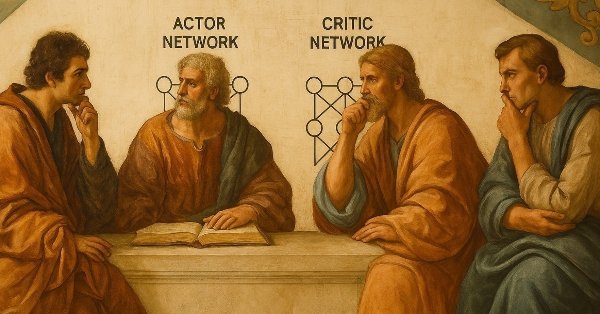

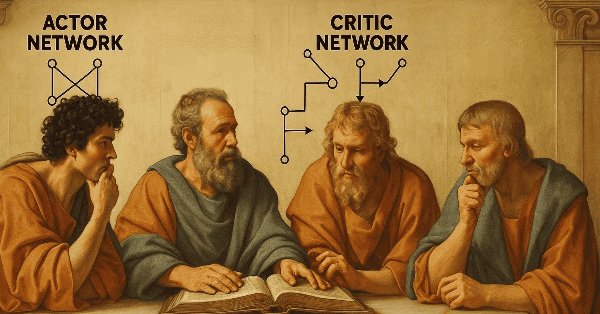

MQL5 Wizard Techniques you should know (Part 58): Reinforcement Learning (DDPG) with Moving Average and Stochastic Oscillator Patterns

Moving Average and Stochastic Oscillator are very common indicators whose collective patterns we explored in the prior article, via a supervised learning network, to see which “patterns-would-stick”. We take our analyses from that article, a step further by considering the effects' reinforcement learning, when used with this trained network, would have on performance. Readers should note our testing is over a very limited time window. Nonetheless, we continue to harness the minimal coding requirements afforded by the MQL5 wizard in showcasing this.

Neural Networks in Trading: Hierarchical Vector Transformer (Final Part)

We continue studying the Hierarchical Vector Transformer method. In this article, we will complete the construction of the model. We will also train and test it on real historical data.

Big Bang - Big Crunch (BBBC) algorithm

The article presents the Big Bang - Big Crunch method, which has two key phases: cyclic generation of random points and their compression to the optimal solution. This approach combines exploration and refinement, allowing us to gradually find better solutions and open up new optimization opportunities.

MQL5 Wizard Techniques you should know (Part 31): Selecting the Loss Function

Loss Function is the key metric of machine learning algorithms that provides feedback to the training process by quantifying how well a given set of parameters are performing when compared to their intended target. We explore the various formats of this function in an MQL5 custom wizard class.

MQL5 Wizard Techniques you should know (Part 59): Reinforcement Learning (DDPG) with Moving Average and Stochastic Oscillator Patterns

We continue our last article on DDPG with MA and stochastic indicators by examining other key Reinforcement Learning classes crucial for implementing DDPG. Though we are mostly coding in python, the final product, of a trained network will be exported to as an ONNX to MQL5 where we integrate it as a resource in a wizard assembled Expert Advisor.

Data Science and ML (Part 34): Time series decomposition, Breaking the stock market down to the core

In a world overflowing with noisy and unpredictable data, identifying meaningful patterns can be challenging. In this article, we'll explore seasonal decomposition, a powerful analytical technique that helps separate data into its key components: trend, seasonal patterns, and noise. By breaking data down this way, we can uncover hidden insights and work with cleaner, more interpretable information.

Neural Networks in Trading: Contrastive Pattern Transformer (Final Part)

In the previous last article within this series, we looked at the Atom-Motif Contrastive Transformer (AMCT) framework, which uses contrastive learning to discover key patterns at all levels, from basic elements to complex structures. In this article, we continue implementing AMCT approaches using MQL5.

Neural Networks in Trading: Adaptive Detection of Market Anomalies (DADA)

We invite you to get acquainted with the DADA framework, which is an innovative method for detecting anomalies in time series. It helps distinguish random fluctuations from suspicious deviations. Unlike traditional methods, DADA is flexible and adapts to different data. Instead of a fixed compression level, it uses several options and chooses the most appropriate one for each case.

Royal Flush Optimization (RFO)

The original Royal Flush Optimization algorithm offers a new approach to solving optimization problems, replacing the classic binary coding of genetic algorithms with a sector-based approach inspired by poker principles. RFO demonstrates how simplifying basic principles can lead to an efficient and practical optimization method. The article presents a detailed analysis of the algorithm and test results.

Integrating MQL5 with data processing packages (Part 4): Big Data Handling

Exploring advanced techniques to integrate MQL5 with powerful data processing tools, this part focuses on efficient handling of big data to enhance trading analysis and decision-making.

Population optimization algorithms: Evolution Strategies, (μ,λ)-ES and (μ+λ)-ES

The article considers a group of optimization algorithms known as Evolution Strategies (ES). They are among the very first population algorithms to use evolutionary principles for finding optimal solutions. We will implement changes to the conventional ES variants and revise the test function and test stand methodology for the algorithms.

MQL5 Wizard Techniques you should know (Part 36): Q-Learning with Markov Chains

Reinforcement Learning is one of the three main tenets in machine learning, alongside supervised learning and unsupervised learning. It is therefore concerned with optimal control, or learning the best long-term policy that will best suit the objective function. It is with this back-drop, that we explore its possible role in informing the learning-process to an MLP of a wizard assembled Expert Advisor.

Artificial Ecosystem-based Optimization (AEO) algorithm

The article considers a metaheuristic Artificial Ecosystem-based Optimization (AEO) algorithm, which simulates interactions between ecosystem components by creating an initial population of solutions and applying adaptive update strategies, and describes in detail the stages of AEO operation, including the consumption and decomposition phases, as well as different agent behavior strategies. The article introduces the features and advantages of this algorithm.

MQL5 Wizard Techniques you should know (Part 41): Deep-Q-Networks

The Deep-Q-Network is a reinforcement learning algorithm that engages neural networks in projecting the next Q-value and ideal action during the training process of a machine learning module. We have already considered an alternative reinforcement learning algorithm, Q-Learning. This article therefore presents another example of how an MLP trained with reinforcement learning, can be used within a custom signal class.

Chemical reaction optimization (CRO) algorithm (Part II): Assembling and results

In the second part, we will collect chemical operators into a single algorithm and present a detailed analysis of its results. Let's find out how the Chemical reaction optimization (CRO) method copes with solving complex problems on test functions.

The base class of population algorithms as the backbone of efficient optimization

The article represents a unique research attempt to combine a variety of population algorithms into a single class to simplify the application of optimization methods. This approach not only opens up opportunities for the development of new algorithms, including hybrid variants, but also creates a universal basic test stand. This stand becomes a key tool for choosing the optimal algorithm depending on a specific task.

Neural networks made easy (Part 79): Feature Aggregated Queries (FAQ) in the context of state

In the previous article, we got acquainted with one of the methods for detecting objects in an image. However, processing a static image is somewhat different from working with dynamic time series, such as the dynamics of the prices we analyze. In this article, we will consider the method of detecting objects in video, which is somewhat closer to the problem we are solving.

Data label for time series mining (Part 5):Apply and Test in EA Using Socket

This series of articles introduces several time series labeling methods, which can create data that meets most artificial intelligence models, and targeted data labeling according to needs can make the trained artificial intelligence model more in line with the expected design, improve the accuracy of our model, and even help the model make a qualitative leap!

Neural Networks Made Easy (Part 81): Context-Guided Motion Analysis (CCMR)

In previous works, we always assessed the current state of the environment. At the same time, the dynamics of changes in indicators always remained "behind the scenes". In this article I want to introduce you to an algorithm that allows you to evaluate the direct change in data between 2 successive environmental states.

MQL5 Wizard Techniques you should know (Part 74): Using Patterns of Ichimoku and the ADX-Wilder with Supervised Learning

We follow up on our last article, where we introduced the indicator pair of the Ichimoku and the ADX, by looking at how this duo could be improved with Supervised Learning. Ichimoku and ADX are a support/resistance plus trend complimentary pairing. Our supervised learning approach uses a neural network that engages the Deep Spectral Mixture Kernel to fine tune the forecasts of this indicator pairing. As per usual, this is done in a custom signal class file that works with the MQL5 wizard to assemble an Expert Advisor.

Gain an Edge Over Any Market (Part III): Visa Spending Index

In the world of big data, there are millions of alternative datasets that hold the potential to enhance our trading strategies. In this series of articles, we will help you identify the most informative public datasets.

Reimagining Classic Strategies: Crude Oil

In this article, we revisit a classic crude oil trading strategy with the aim of enhancing it by leveraging supervised machine learning algorithms. We will construct a least-squares model to predict future Brent crude oil prices based on the spread between Brent and WTI crude oil prices. Our goal is to identify a leading indicator of future changes in Brent prices.

Artificial Cooperative Search (ACS) algorithm

Artificial Cooperative Search (ACS) is an innovative method using a binary matrix and multiple dynamic populations based on mutualistic relationships and cooperation to find optimal solutions quickly and accurately. ACS unique approach to predators and prey enables it to achieve excellent results in numerical optimization problems.

Population optimization algorithms: Bird Swarm Algorithm (BSA)

The article explores the bird swarm-based algorithm (BSA) inspired by the collective flocking interactions of birds in nature. The different search strategies of individuals in BSA, including switching between flight, vigilance and foraging behavior, make this algorithm multifaceted. It uses the principles of bird flocking, communication, adaptability, leading and following to efficiently find optimal solutions.