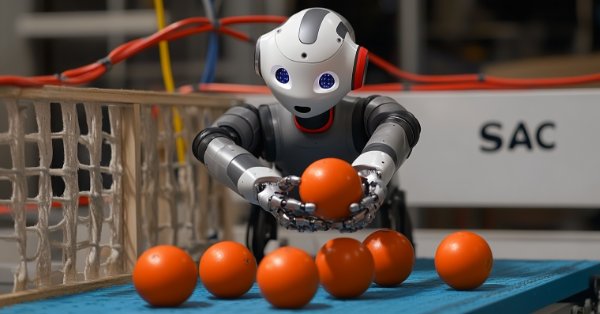

MQL5 Wizard Techniques you should know (Part 51): Reinforcement Learning with SAC

Soft Actor-Critic (SAC) is a reinforcement learning algorithm that uses three neural networks: one actor network and two critic networks. These models are paired in a master-slave arrangement in which the critics are modelled to improve the forecast accuracy of the actor network. While also introducing ONNX to this series, we explore how these ideas can be put to the test as a custom signal of a wizard-assembled Expert Advisor.
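
As a rough illustration of how two critics can serve one actor, the sketch below computes the soft Bellman target commonly used in SAC: the smaller of the two critic estimates is taken, and an entropy term is subtracted from it. The function name `soft_q_target` and the default values of `gamma` and `alpha` are assumptions made for this example and are not taken from the article.

```python
def soft_q_target(reward, q1_next, q2_next, log_prob_next,
                  gamma=0.99, alpha=0.2, done=False):
    """Soft Bellman target: r + gamma * (min(Q1, Q2) - alpha * log pi).

    q1_next, q2_next: the two critics' estimates for the next state-action.
    log_prob_next:    log-probability of the next action under the actor.
    gamma, alpha:     discount factor and entropy weight (assumed defaults).
    """
    if done:
        # No future value once the episode has ended.
        return reward
    # Taking the minimum of the two critics limits overestimation.
    soft_value = min(q1_next, q2_next) - alpha * log_prob_next
    return reward + gamma * soft_value
```

Both critics would typically be regressed towards this shared target, while the actor is updated to prefer actions that the critics score highly.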

Data label for time series mining (Part 4): Interpretability Decomposition Using Label Data

This series of articles introduces several time series labeling methods that produce data suitable for most artificial intelligence models. Labeling data according to specific needs can bring the trained model closer to its intended design, improve its accuracy, and even help it make a qualitative leap.

Population optimization algorithms: Mind Evolutionary Computation (MEC) algorithm

The article considers the algorithm of the MEC family called the simple mind evolutionary computation algorithm (Simple MEC, SMEC). The algorithm is distinguished by the beauty of its idea and ease of implementation.

Neural Networks in Trading: Dual Clustering of Multivariate Time Series (DUET)

The DUET framework offers an innovative approach to time series analysis, combining temporal and channel clustering to uncover hidden patterns in the analyzed data. This allows models to adapt to changes over time and improve forecasting quality by eliminating noise.

Example of CNA (Causality Network Analysis), SMOC (Stochastic Model Optimal Control) and Nash Game Theory with Deep Learning

We add Deep Learning to the three examples published in previous articles and compare the results with the earlier ones. The aim is to learn how to add DL to other EAs.

Overcoming The Limitation of Machine Learning (Part 6): Effective Memory Cross Validation

In this discussion, we contrast the classical approach to time series cross-validation with modern alternatives that challenge its core assumptions. We expose key blind spots in the traditional method—especially its failure to account for evolving market conditions. To address these gaps, we introduce Effective Memory Cross-Validation (EMCV), a domain-aware approach that questions the long-held belief that more historical data always improves performance.

MQL5 Wizard Techniques you should know (Part 28): GANs Revisited with a Primer on Learning Rates

The learning rate is the step size taken towards a training target in the training processes of many machine learning algorithms. We examine the impact that its many schedules and formats can have on the performance of a Generative Adversarial Network, a type of neural network that we examined in an earlier article.
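
To give a sense of what a learning rate schedule looks like, here is a minimal sketch of three widely used ones: step decay, exponential decay and cosine annealing. These are generic textbook forms and may differ from the schedules the article actually examines; the function names and default parameters are assumptions for illustration.

```python
import math

def step_decay(lr0, epoch, drop=0.5, every=10):
    """Multiply the rate by `drop` once every `every` epochs."""
    return lr0 * drop ** (epoch // every)

def exponential_decay(lr0, epoch, k=0.05):
    """Shrink the rate smoothly by a factor exp(-k) per epoch."""
    return lr0 * math.exp(-k * epoch)

def cosine_annealing(lr0, epoch, total_epochs, lr_min=0.0):
    """Follow half a cosine wave from lr0 down to lr_min."""
    cosine = math.cos(math.pi * epoch / total_epochs)
    return lr_min + 0.5 * (lr0 - lr_min) * (1 + cosine)
```

Each function maps an epoch index to a rate, so any of them can be swapped into a training loop without changing the rest of the code.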

Neural Networks in Trading: Directional Diffusion Models (DDM)

In this article, we discuss Directional Diffusion Models that exploit data-dependent anisotropic and directed noise in a forward diffusion process to capture meaningful graph representations.

Neural networks made easy (Part 40): Using Go-Explore on large amounts of data

This article discusses the use of the Go-Explore algorithm over a long training period, since the random action selection strategy may not lead to a profitable pass as training time increases.

Neural networks made easy (Part 57): Stochastic Marginal Actor-Critic (SMAC)

Here I will consider the fairly new Stochastic Marginal Actor-Critic (SMAC) algorithm, which allows building latent variable policies within the framework of entropy maximization.

Gain An Edge Over Any Market (Part IV): CBOE Euro And Gold Volatility Indexes

We will analyze alternative data curated by the Chicago Board Of Options Exchange (CBOE) to improve the accuracy of our deep neural networks when forecasting the XAUEUR symbol.

MQL5 Wizard Techniques you should know (Part 72): Using Patterns of MACD and the OBV with Supervised Learning

We follow up on our last article, where we introduced the indicator pair of the MACD and the OBV, by looking at how this pairing could be enhanced with machine learning. MACD and OBV are a complementary trend and volume pairing. Our machine learning approach uses a convolutional neural network that engages the Exponential kernel in sizing its kernels and channels when fine-tuning the forecasts of this indicator pairing. As always, this is done in a custom signal class file that works with the MQL5 Wizard to assemble an Expert Advisor.

Across Neighbourhood Search (ANS)

The article reveals the potential of the ANS algorithm as an important step in the development of flexible and intelligent optimization methods that can take into account the specifics of the problem and the dynamics of the environment in the search space.

Implementing Practical Modules from Other Languages in MQL5 (Part 03): Schedule Module from Python, the OnTimer Event on Steroids

The schedule module in Python offers a simple way to schedule repeated tasks. While MQL5 lacks a built-in equivalent, in this article we’ll implement a similar library to make it easier to set up timed events in MetaTrader 5.

Neural Networks in Trading: Controlled Segmentation

In this article, we will discuss a method of complex multimodal interaction analysis and feature understanding.

Analyzing Overbought and Oversold Trends Via Chaos Theory Approaches

We determine overbought and oversold market conditions using chaos theory, integrating the principles of chaos theory, fractal geometry and neural networks to forecast financial markets. The study demonstrates the use of the Lyapunov exponent as a measure of market randomness and the dynamic adaptation of trading signals. The methodology includes an algorithm for generating fractal noise, hyperbolic tangent activation, and moment optimization.
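
For readers unfamiliar with the Lyapunov exponent, the sketch below estimates it for the logistic map, a standard toy system, rather than for market data. A positive value indicates chaotic behavior and a negative value indicates convergence to a stable state. The function name and parameters are assumptions for this example, and the article's own estimation procedure may be quite different.

```python
import math

def logistic_lyapunov(r, x0=0.2, n_iter=10000, burn_in=100):
    """Estimate the Lyapunov exponent of x -> r * x * (1 - x).

    The exponent is the long-run average of log|f'(x)| along the orbit,
    where f'(x) = r * (1 - 2x).
    """
    x = x0
    for _ in range(burn_in):
        # Discard the initial transient before averaging.
        x = r * x * (1 - x)
    total = 0.0
    for _ in range(n_iter):
        x = r * x * (1 - x)
        # Guard against log(0) if the orbit lands exactly on x = 0.5.
        total += math.log(max(abs(r * (1 - 2 * x)), 1e-300))
    return total / n_iter
```

With `r = 2.5` the map settles on a fixed point and the estimate is negative, while with `r = 4.0` the map is chaotic and the estimate is positive, close to the known value ln 2.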

Neural Networks Made Easy (Part 97): Training Models With MSFformer

When exploring various model architecture designs, we often devote insufficient attention to the process of model training. In this article, I aim to address this gap.

Reimagining Classic Strategies (Part 17): Modelling Technical Indicators

In this discussion, we focus on how we can break the glass ceiling imposed by classical machine learning techniques in finance. It appears that the greatest limitation to the value we can extract from statistical models does not lie in the models themselves — neither in the data nor in the complexity of the algorithms — but rather in the methodology we use to apply them. In other words, the true bottleneck may be how we employ the model, not the model’s intrinsic capability.

Generative Adversarial Networks (GANs) for Synthetic Data in Financial Modeling (Part 1): Introduction to GANs and Synthetic Data in Financial Modeling

This article introduces traders to Generative Adversarial Networks (GANs) for generating synthetic financial data, addressing data limitations in model training. It covers GAN basics, Python and MQL5 code implementations, and practical applications in finance, empowering traders to enhance model accuracy and robustness through synthetic data.

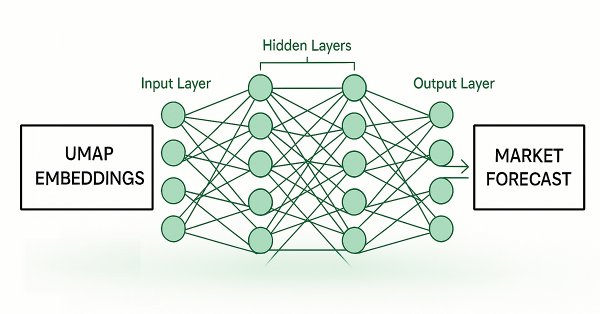

Feature Engineering With Python And MQL5 (Part IV): Candlestick Pattern Recognition With UMAP Regression

Dimension reduction techniques are widely used to improve the performance of machine learning models. Let us discuss a relatively new technique known as Uniform Manifold Approximation and Projection (UMAP). It was developed explicitly to overcome the limitations of legacy methods that create artifacts and distortions in the data. UMAP is a powerful dimension reduction technique that helps us group similar candlesticks in a novel and effective way, reducing our error rates on out-of-sample data and improving our trading performance.

MQL5 Wizard Techniques you should know (Part 21): Testing with Economic Calendar Data

By default, Economic Calendar data is not available for testing Expert Advisors in the Strategy Tester. We look at how databases can provide a workaround for this limitation. In this article we explore how SQLite databases can be used to archive Economic Calendar news so that wizard-assembled Expert Advisors can use it to generate trade signals.
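
The archiving idea can be sketched outside MetaTrader with Python's built-in `sqlite3` module. The table layout, column names and sample rows below are hypothetical placeholders chosen for this example; the article's own schema and its MQL5 database calls are not shown here.

```python
import sqlite3

def archive_events(conn, events):
    """Store calendar events as (time, currency, name, actual, forecast)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS calendar ("
        "event_time TEXT, currency TEXT, name TEXT, "
        "actual REAL, forecast REAL)"
    )
    conn.executemany("INSERT INTO calendar VALUES (?, ?, ?, ?, ?)", events)
    conn.commit()

def events_before(conn, currency, cutoff):
    """Return events for one currency up to `cutoff`, oldest first.

    ISO-style timestamps sort correctly as plain text.
    """
    cur = conn.execute(
        "SELECT event_time, name, actual, forecast FROM calendar "
        "WHERE currency = ? AND event_time <= ? ORDER BY event_time",
        (currency, cutoff),
    )
    return cur.fetchall()

# Placeholder rows with made-up values, stored in an in-memory database.
conn = sqlite3.connect(":memory:")
archive_events(conn, [
    ("2024-01-05 13:30", "USD", "Event A", 1.0, 2.0),
    ("2024-01-11 13:30", "USD", "Event B", 3.0, 3.0),
    ("2024-01-25 13:15", "EUR", "Event C", 4.0, 5.0),
])
```

During a backtest, a signal would query only events dated at or before the simulated time, which is what the `cutoff` argument is meant to enforce.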

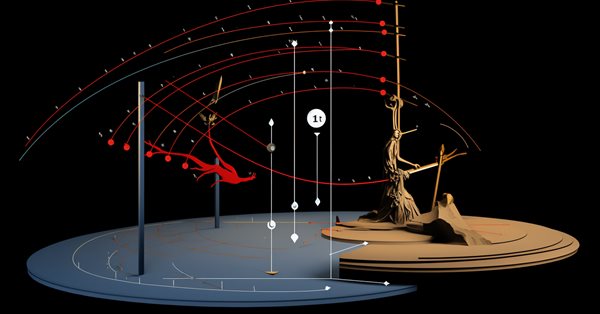

Neural networks made easy (Part 72): Trajectory prediction in noisy environments

The quality of future state predictions plays an important role in the Goal-Conditioned Predictive Coding method, which we discussed in the previous article. In this article I want to introduce you to an algorithm that can significantly improve the prediction quality in stochastic environments, such as financial markets.

Neural Networks in Trading: Spatio-Temporal Neural Network (STNN)

In this article we will talk about using space-time transformations to effectively predict upcoming price movement. To improve the numerical prediction accuracy in STNN, a continuous attention mechanism is proposed that allows the model to better consider important aspects of the data.

Category Theory in MQL5 (Part 23): A different look at the Double Exponential Moving Average

In this article we continue the theme of the previous one, tackling everyday trading indicators viewed in a ‘new’ light. This piece handles the horizontal composition of natural transformations, and the best indicator for this, one that expands on what we just covered, is the double exponential moving average (DEMA).
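
For reference, the DEMA itself is computed as twice an EMA minus an EMA of that EMA. The sketch below shows only this standard calculation, not the category theory treatment in the article; seeding the EMA with the first value is an assumption of this example.

```python
def ema(values, period):
    """Exponential moving average, seeded with the first value."""
    alpha = 2.0 / (period + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(alpha * v + (1 - alpha) * out[-1])
    return out

def dema(values, period):
    """Double exponential moving average: 2 * EMA - EMA(EMA)."""
    first = ema(values, period)
    second = ema(first, period)  # smooth the already smoothed series
    return [2 * a - b for a, b in zip(first, second)]
```

Subtracting the doubly smoothed series cancels much of the lag that a single EMA shows on a trending series.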

Reimagining Classic Strategies (Part IX): Multiple Time Frame Analysis (II)

In today's discussion, we examine the strategy of multiple time-frame analysis to learn on which time frame our AI model performs best. Our analysis leads us to conclude that the Monthly and Hourly time-frames produce models with relatively low error rates on the EURUSD pair. We used this to our advantage and created a trading algorithm that makes AI predictions on the Monthly time frame, and executes its trades on the Hourly time frame.

Neural Network in Practice: Pseudoinverse (I)

Today we will begin to consider how to implement the calculation of the pseudo-inverse in pure MQL5. The code we are going to look at will be much more complex for beginners than I expected, and I'm still figuring out how to explain it in a simple way. So for now, consider this an opportunity to study some unusual code calmly and attentively. Although it is not aimed at efficient or quick application, its goal is to be as didactic as possible.

Forex arbitrage trading: Analyzing synthetic currencies movements and their mean reversion

In this article, we will examine the movements of synthetic currencies using Python and MQL5 and explore how feasible Forex arbitrage is today. We will also consider ready-made Python code for analyzing synthetic currencies and share more details on what synthetic currencies are in Forex.
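
One common reading of a synthetic currency is a cross rate built from two USD pairs, for example EURUSD multiplied by USDJPY as a stand-in for EURJPY. The sketch below computes such a synthetic rate and a z-score of the gap between the quoted and synthetic rates as a simple mean-reversion gauge. This definition, the function names and the z-score measure are assumptions for illustration; the article may define and analyze synthetic currencies differently.

```python
from statistics import mean, pstdev

def synthetic_cross(base_usd, usd_quote):
    """Synthetic cross rate from two USD legs, e.g. EURUSD * USDJPY."""
    return base_usd * usd_quote

def spread_zscore(quoted, leg_a, leg_b):
    """Z-score of the latest gap between quoted and synthetic rates."""
    spreads = [q - synthetic_cross(a, b)
               for q, a, b in zip(quoted, leg_a, leg_b)]
    mu, sigma = mean(spreads), pstdev(spreads)
    if sigma == 0:
        # All gaps identical: nothing to revert to.
        return 0.0
    return (spreads[-1] - mu) / sigma
```

A large absolute z-score would flag an unusually wide gap, although trading costs and execution delays decide whether such a gap is exploitable in practice.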

Neural networks made easy (Part 62): Using Decision Transformer in hierarchical models

In recent articles, we have seen several options for using the Decision Transformer method. The method allows analyzing not only the current state, but also the trajectory of previous states and actions performed in them. In this article, we will focus on using this method in hierarchical models.

Neural networks made easy (Part 64): ConserWeightive Behavioral Cloning (CWBC) method

As a result of tests performed in previous articles, we came to the conclusion that the optimality of the trained strategy largely depends on the training set used. In this article, we will get acquainted with a fairly simple yet effective method for selecting trajectories to train models.

Neural Network in Practice: Straight Line Function

In this article, we will take a quick look at some methods to get a function that can represent our data in the database. I will not go into detail about how to use statistics and probability studies to interpret the results. Let's leave that for those who really want to delve into the mathematical side of the matter. Exploring these questions will be critical to understanding what is involved in studying neural networks. Here we will consider this issue quite calmly.

Blood inheritance optimization (BIO)

I present to you my new population optimization algorithm - Blood Inheritance Optimization (BIO), inspired by the human blood group inheritance system. In this algorithm, each solution has its own "blood type" that determines the way it evolves. Just as in nature where a child's blood type is inherited according to specific rules, in BIO new solutions acquire their characteristics through a system of inheritance and mutations.

Neural networks made easy (Part 41): Hierarchical models

The article describes hierarchical training models that offer an effective approach to solving complex machine learning problems. Hierarchical models consist of several levels, each of which is responsible for different aspects of the task.

Population optimization algorithms: Simulated Annealing (SA) algorithm. Part I

The Simulated Annealing algorithm is a metaheuristic inspired by the metal annealing process. In the article, we will conduct a thorough analysis of the algorithm and debunk a number of common beliefs and myths surrounding this widely known optimization method. The second part of the article will consider the custom Simulated Isotropic Annealing (SIA) algorithm.
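
The core of classical simulated annealing is the Metropolis acceptance rule: improvements are always accepted, while worse candidates are accepted with a probability that shrinks as the temperature falls. The sketch below is a minimal one-dimensional version with a geometric cooling schedule; the parameter defaults are assumptions for this example, and it does not represent the SIA variant mentioned in the article.

```python
import math
import random

def accept(delta, temperature, rng=random):
    """Metropolis rule: always accept improvements, sometimes accept worse."""
    if delta <= 0:
        return True
    return rng.random() < math.exp(-delta / temperature)

def anneal(f, x0, t0=1.0, cooling=0.95, steps=500, step_size=0.5, seed=0):
    """Minimize f over one variable with geometric cooling."""
    rng = random.Random(seed)
    x, fx = x0, f(x0)
    best_x, best_fx = x, fx
    t = t0
    for _ in range(steps):
        cand = x + rng.uniform(-step_size, step_size)
        fc = f(cand)
        if accept(fc - fx, t, rng):
            x, fx = cand, fc
            if fx < best_fx:
                best_x, best_fx = x, fx
        t *= cooling  # lower the temperature after every step
    return best_x, best_fx
```

For example, `anneal(lambda x: (x - 3) ** 2, x0=0.0)` returns a point near `x = 3`, since the search becomes effectively greedy once the temperature is small.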

Quantitative Analysis of Trends: Collecting Statistics in Python

What is quantitative trend analysis in the Forex market? We collect statistics on trends, their magnitude and their distribution for the EURUSD currency pair, and look at how quantitative trend analysis can help you create a profitable trading Expert Advisor.

Artificial Bee Hive Algorithm (ABHA): Tests and results

In this article, we will continue exploring the Artificial Bee Hive Algorithm (ABHA) by diving into the code and considering the remaining methods. As you might remember, each bee in the model is represented as an individual agent whose behavior depends on internal and external information, as well as motivational state. We will test the algorithm on various functions and summarize the results by presenting them in the rating table.

Neural Networks in Trading: Superpoint Transformer (SPFormer)

In this article, we introduce a method for segmenting 3D objects based on Superpoint Transformer (SPFormer), which eliminates the need for intermediate data aggregation. This speeds up the segmentation process and improves the performance of the model.

Neural Networks in Trading: Market Analysis Using a Pattern Transformer

When we use models to analyze the market situation, we mainly focus on the candlestick. However, it has long been known that candlestick patterns can help in predicting future price movements. In this article, we will get acquainted with a method that allows us to integrate both of these approaches.

Neural networks made easy (Part 65): Distance Weighted Supervised Learning (DWSL)

In this article, we will get acquainted with an interesting algorithm that is built at the intersection of supervised and reinforcement learning methods.

Data Science and ML (Part 47): Forecasting the Market Using the DeepAR model in Python

In this article, we will attempt to predict the market with DeepAR, a decent model for time series forecasting that combines deep neural networks with the autoregressive properties found in models like ARIMA and Vector Autoregression (VAR).

Neural networks made easy (Part 89): Frequency Enhanced Decomposition Transformer (FEDformer)

All the models we have considered so far analyze the state of the environment as a time sequence. However, the time series can also be represented in the form of frequency features. In this article, I introduce you to an algorithm that uses frequency components of a time sequence to predict future states.