Deterministic Oscillatory Search (DOS)


Introduction

Optimization algorithms continue to evolve in an effort to overcome the fundamental limitations of traditional methods. Most metaheuristic optimization algorithms rely heavily on stochastic processes and random numbers. While these approaches are impressively good at avoiding local optima, their non-deterministic nature can cause problems in areas that require precise reproducibility of results.

The article introduces Deterministic Oscillatory Search (DOS), a new metaheuristic algorithm that combines the advantages of traditional gradient-based methods with the efficiency of swarm algorithms, but completely avoids the use of random numbers.

Proposed by Archana in 2017 for solving complex global optimization problems, DOS is based on the concept of oscillating particle motion in a search space with a deterministic distribution of initial positions. The key feature of the algorithm is its ability to handle multidimensional problems while maintaining full reproducibility: given the same initial conditions, the algorithm always arrives at the same result.

Unlike most metaheuristic algorithms, DOS introduces the concept of "fitness slope" - a mechanism that allows particles to assess whether their current direction is improving the solution and adapt their search strategy. Particles can be in one of three slope states: positive (movement improves the solution), negative (movement worsens the solution), or unknown.
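To make the three states concrete, here is a minimal sketch in C++ (chosen so the snippet runs outside MetaTrader; MQL5 syntax is very close). It assumes maximization, as in the article's code, and shows how a slope is read from two consecutive fitness values. In DOS this classification is applied while the slope is still unknown. The names SlopeState and ClassifySlope are illustrative and do not appear in the article's code.

```cpp
#include <cassert>

// The three slope states of a DOS particle (values as in the article).
enum SlopeState { SLOPE_NEGATIVE = -1, SLOPE_UNKNOWN = 0, SLOPE_POSITIVE = 1 };

// Classify the slope from two consecutive fitness values of one particle.
// Maximization is assumed: a larger fitness value is better.
SlopeState ClassifySlope(double previousFitness, double currentFitness)
{
    const double diff = currentFitness - previousFitness;
    if (diff > 0.0) return SLOPE_POSITIVE;  // the last move improved the solution
    if (diff < 0.0) return SLOPE_NEGATIVE;  // the last move worsened the solution
    return SLOPE_UNKNOWN;                   // no change: direction quality unknown
}
```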

This information is used to control the oscillatory behavior of the particles. When conventional gradient methods reach a point where all directions lead to a deterioration of the objective function, they stop. DOS overcomes this limitation through a swarming mechanism that is activated when oscillating motion fails to provide improvement. In this case, the particle begins to move in the direction of the best known global solution.

In this article, we will examine in detail the mathematical foundations of the DOS algorithm, analyze its characteristics and implementation features, and demonstrate its efficiency on a number of test problems.

Implementation of the algorithm

Now let's see how the DOS algorithm works. Instead of relying on randomness, it follows a few simple, systematic rules. The algorithm starts by placing several "explorers" (particles) at different points in the landscape. The placement is not random: it is determined by a specific equation designed to cover the region uniformly. Each explorer moves with its own direction and velocity. As it moves, it remembers whether the terrain is getting higher (better) or lower (worse). This is called the "slope", a simple way to track whether the explorer is moving in the right direction.
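The article does not give the initialization equation itself, so the following C++ sketch swaps in one well-known deterministic scheme, the Halton sequence (one prime base per dimension), purely to illustrate what "deterministic, uniform coverage" can look like. HaltonInit, RadicalInverse and FirstPrimes are hypothetical names, and the actual DOS implementation may use a different formula.

```cpp
#include <cassert>
#include <vector>

// Radical inverse of n in the given base: mirrors the digits of n around the
// radix point, e.g. n = 6 = 110 (binary) becomes 0.011 (binary) = 0.375.
double RadicalInverse(unsigned n, unsigned base)
{
    double result = 0.0;
    double fraction = 1.0 / base;
    while (n > 0) {
        result += (n % base) * fraction;
        n /= base;
        fraction /= base;
    }
    return result;
}

// Return the first `count` primes, used as one Halton base per dimension.
std::vector<unsigned> FirstPrimes(int count)
{
    std::vector<unsigned> primes;
    for (unsigned candidate = 2; (int)primes.size() < count; ++candidate) {
        bool isPrime = true;
        for (unsigned p : primes)
            if (candidate % p == 0) { isPrime = false; break; }
        if (isPrime) primes.push_back(candidate);
    }
    return primes;
}

// Hypothetical deterministic initializer: particle i, dimension d is placed at
// the (i + 1)-th Halton point, scaled into [rangeMin[d], rangeMax[d]].
std::vector<std::vector<double>> HaltonInit(int popSize,
                                            const std::vector<double>& rangeMin,
                                            const std::vector<double>& rangeMax)
{
    const int dims = (int)rangeMin.size();
    const std::vector<unsigned> bases = FirstPrimes(dims);
    std::vector<std::vector<double>> positions(popSize, std::vector<double>(dims));
    for (int i = 0; i < popSize; ++i)
        for (int d = 0; d < dims; ++d)
            positions[i][d] = rangeMin[d] +
                (rangeMax[d] - rangeMin[d]) * RadicalInverse((unsigned)(i + 1), bases[d]);
    return positions;
}
```

Calling HaltonInit twice with the same arguments yields identical positions, which is the property DOS relies on for reproducibility.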

Now the fun begins. When an explorer finds that it has stopped ascending and started descending (its fitness has worsened), it does not simply stop. Instead, it "bounces": it reverses direction and continues moving the opposite way, but at half its previous velocity, like a tennis ball bouncing off a wall and losing energy.
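The bounce itself is a one-line update. This C++ sketch (Bounce is a hypothetical name) applies the same multiplication by -0.5 per dimension that the article's ProcessParticleMovement() code uses.

```cpp
#include <cassert>
#include <vector>

// One DOS "bounce": reverse the direction and halve the speed in every
// dimension, i.e. v[d] *= -0.5 for each velocity component.
void Bounce(std::vector<double>& velocity)
{
    for (double& component : velocity)
        component *= -0.5;
}
```

Applied twice, a step shrinks to a quarter of its original length and points in the original direction again, which is why repeated rebounds tighten the zigzag around the extremum.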

This bouncing creates an oscillating motion, hence the name of the algorithm. With each rebound, the explorer localizes the extremum more accurately. But what if the explorer is trapped on a small hill where the terrain falls away in every direction, and that hill is not the highest point in the region? Here DOS uses a different technique. If the movement keeps worsening fitness even after a bounce, the explorer switches to another mode, "swarming": it begins to move towards the best point found by all explorers so far.
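The swarming step can be sketched the same way. The Swarm function below is a hypothetical name; its update mirrors the loop in the article's ProcessParticleMovement(): the vector from the particle to the best known position, scaled by movementFactor, is added to the current velocity.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Swarming: add to the velocity the vector pointing from the particle's
// position to the best known solution, scaled by movementFactor.
void Swarm(std::vector<double>& velocity,
           const std::vector<double>& position,
           const std::vector<double>& bestPosition,
           double movementFactor)
{
    for (std::size_t d = 0; d < velocity.size(); ++d)
        velocity[d] += (bestPosition[d] - position[d]) * movementFactor;
}
```

Because the pull is added to the existing velocity rather than replacing it, the particle keeps part of its own momentum while drifting towards the swarm's best point.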

Unlike random search or trial and error, DOS systematically explores the solution space using information about the direction of improvement and the collective experience of all explorers. At the same time, it does not require complex derivative calculations or storing a large search history. Thus, step by step, applying simple rules of movement, reflection and swarming, DOS finds optimal solutions to complex problems.

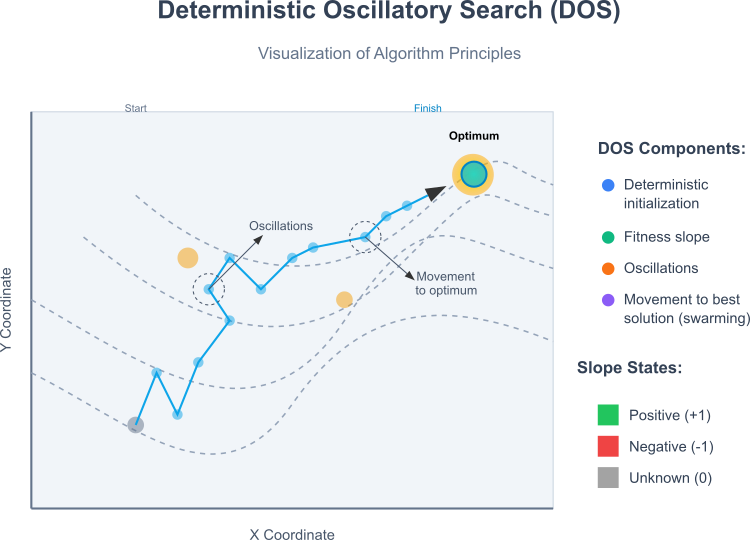

Figure 1 shows the key aspects of the algorithm: a two-dimensional search space with a global optimum and several local optima, represented by contour lines, and a path from the starting point to the global optimum that demonstrates the characteristic behavior of DOS:

- initial deterministic position of the particle,

- oscillating (zigzag) movement when exploring space,

- adaptive movement towards the global optimum.

The key components of the algorithm are: deterministic particle initialization, the use of the fitness slope concept, oscillatory motion, and a swarming mechanism (movement towards the best known solution).

The slope state can be:

- positive (+1) - current movement improves fitness,

- negative (-1) - current movement worsens fitness,

- unknown (0) - state during initialization or after a change of strategy.

Figure 1. Algorithm operation

The illustration clearly shows how DOS combines the characteristics of classical gradient methods (local search through oscillations) with the capabilities of swarm algorithms, while maintaining fully deterministic behavior. With the operating principles covered, let's move on to writing the pseudocode of the algorithm.

Step 1: Initial preparation

- Create arrays to store positions, velocities, previous fitness values, and slopes for each particle

- Initialize the best found solution with the minimum possible fitness value

Step 2: Deterministic particle initialization

- For each particle:

  - place it in the search space using a deterministic equation that ensures uniform coverage

  - set the initial velocity to zero

  - set the initial slope to "unknown" (0)

  - set the previous fitness value to the minimum possible value

Step 3: The main optimization loop

- Repeat until the maximum number of iterations is reached:

  - Part 1: Particle Movement. For each particle:

    - save the current fitness value as the previous one

    - update the position by adding the current velocity

    - if the new position is outside the search boundaries, limit it to the permitted range

  - Part 2: Fitness Update. For each particle:

    - calculate the new fitness value

    - if the fitness is better than the best solution found, update the best solution

    - if the slope is "unknown" (0):

      - if the new fitness is better than the previous one, set the slope to "positive" (1)

      - if the new fitness is worse than the previous one, set the slope to "negative" (-1)

      - if the fitness has not changed, leave the slope as "unknown"

    - otherwise, if the slope is "positive" (1) and the new fitness is worse than the previous one:

      - reverse the direction of movement

      - reduce the velocity by half

      - set the slope to "negative" (-1)

    - otherwise, if the slope is "negative" (-1) and the new fitness is worse than the previous one:

      - apply the swarming mechanism: add to the current velocity the vector directed to the best solution, multiplied by the movement factor

      - set the slope to "unknown" (0)

    - finally, if the velocity is zero or close to zero:

      - set the velocity in the direction of the best solution found, multiplied by the movement factor

      - set the slope to "unknown" (0)

Step 4: Finishing

- Return the best solution found and its fitness value
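Before turning to MQL5, the pseudocode can be condensed into a self-contained C++ sketch for experimenting outside MetaTrader. It follows Steps 1 to 4 and the branch order described above, but several details are assumptions: the article does not give the initialization equation, so particles are simply spread evenly along the diagonal of the search box; positions are clamped without rounding to a step; and the zero-velocity threshold 1e-10 is borrowed from the default of the IsZero() method shown later. RunDos and DosResult are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cfloat>
#include <cmath>
#include <functional>
#include <vector>

// Result of one run: best position found and its fitness.
struct DosResult {
    std::vector<double> bestPosition;
    double bestFitness;
};

// Compact sketch of the DOS loop (maximization). Hypothetical: diagonal
// initialization and clamping without step rounding.
DosResult RunDos(const std::function<double(const std::vector<double>&)>& fitness,
                 const std::vector<double>& rangeMin,
                 const std::vector<double>& rangeMax,
                 int popSize, int iterations, double movementFactor)
{
    // Step 1: state arrays; previous fitness and best start at the minimum.
    const int dims = (int)rangeMin.size();
    std::vector<std::vector<double>> pos(popSize, std::vector<double>(dims));
    std::vector<std::vector<double>> vel(popSize, std::vector<double>(dims, 0.0));
    std::vector<double> f(popSize, -DBL_MAX), fPrev(popSize, -DBL_MAX);
    std::vector<int> slope(popSize, 0);                  // all slopes "unknown"
    DosResult best{std::vector<double>(dims, 0.0), -DBL_MAX};

    // Step 2: deterministic initialization (hypothetical diagonal placement).
    for (int i = 0; i < popSize; ++i)
        for (int d = 0; d < dims; ++d)
            pos[i][d] = rangeMin[d] + (rangeMax[d] - rangeMin[d]) * (i + 0.5) / popSize;

    // Step 3: main loop.
    for (int it = 0; it < iterations; ++it) {
        // Part 1: remember the previous fitness, move, clamp to the bounds.
        for (int i = 0; i < popSize; ++i) {
            fPrev[i] = f[i];
            for (int d = 0; d < dims; ++d)
                pos[i][d] = std::max(rangeMin[d], std::min(rangeMax[d], pos[i][d] + vel[i][d]));
        }
        // Part 2: evaluate, update the global best, adjust velocity and slope.
        for (int i = 0; i < popSize; ++i) {
            f[i] = fitness(pos[i]);
            if (f[i] > best.bestFitness) { best.bestFitness = f[i]; best.bestPosition = pos[i]; }

            const double diff = f[i] - fPrev[i];
            if (slope[i] == 0) {
                slope[i] = (diff > 0) ? 1 : (diff < 0) ? -1 : 0;
            } else if (slope[i] == 1 && diff < 0) {         // bounce
                for (double& v : vel[i]) v *= -0.5;
                slope[i] = -1;
            } else if (slope[i] == -1 && diff < 0) {        // swarming
                for (int d = 0; d < dims; ++d)
                    vel[i][d] += (best.bestPosition[d] - pos[i][d]) * movementFactor;
                slope[i] = 0;
            }
            // A stalled particle restarts towards the best known solution.
            const bool stalled = std::all_of(vel[i].begin(), vel[i].end(),
                                             [](double v) { return std::fabs(v) <= 1e-10; });
            if (stalled) {
                for (int d = 0; d < dims; ++d)
                    vel[i][d] = (best.bestPosition[d] - pos[i][d]) * movementFactor;
                slope[i] = 0;
            }
        }
    }
    // Step 4: return the best solution found.
    return best;
}
```

Because nothing in the loop draws random numbers, two runs with the same inputs return identical results. Updating the global best and the particle's velocity in the same pass mirrors the order of the loop in Step 3.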

Now we can start writing the DOS algorithm code. Let's create a structure to store the particle state during optimization. It contains a field for the slope state (positive, negative, or unknown) and an array of velocity components, one per dimension, which makes the particle motion parameters convenient to store and process.

To initialize the structure, a method is provided that sets the slope to a default value and creates a velocity array of a given size, filling it with zeros, which ensures a correct initial state before starting work.

Additionally, a zero velocity check has been implemented - a method that checks all velocity components and returns "true" if they are all practically zero given the specified precision, or "false" otherwise. This helps determine whether the particle has reached a stable state or needs to continue moving.

```mql5
// Structure for storing particle velocity
struct S_DOS_Velocity
{
    int    slope;  // Particle slope (-1: negative, 0: unknown, 1: positive)
    double v [];   // Velocity components for each dimension

    void Init (int dims)
    {
      slope = 0;
      ArrayResize (v, dims);
      ArrayInitialize (v, 0.0); // Quick initialization of the entire array to zeros
    }

    // Check for zero velocity
    bool IsZero (double epsilon = 1e-10)
    {
      for (int i = 0; i < ArraySize (v); i++) if (MathAbs (v [i]) > epsilon) return false;
      return true;
    }
};
//——————————————————————————————————————————————————————————————————————————————
```

The C_AO_DOS class implements the algorithm. It inherits from the base C_AO class and adds the logic specific to DOS. The constructor sets the default parameters (the population size and the factor of movement towards the best solution) and creates the parameters array. The SetParams() method applies the parameters with validation: popSize is at least 5, and movementFactor is limited to the range from 0.1 to 1.0. The Init() method prepares the initial conditions: search ranges, change steps, and the number of iterations.

Particle motion logic methods:

- Moving() moves each particle through the search space by adding its current velocity to its position and keeping the new coordinates within the permitted range.

- Revision() updates the global best solution and then adjusts each particle's velocity and slope according to the change in its fitness.

The class also holds the velocities array of S_DOS_Velocity structures, one per particle. Each structure stores the particle's velocity components in all dimensions and its slope state, and provides methods for initialization and the zero-velocity test.

Internal methods:

- InitializeParticles() places the particles at their deterministic starting positions.

- ProcessParticleMovement() updates a single particle's velocity and slope after its fitness has been re-evaluated.

Overall, the class gives the DOS method a compact structure in which particle positions, velocities, and slope states together steer the exploration of the solution space.

```mql5
//——————————————————————————————————————————————————————————————————————————————
class C_AO_DOS : public C_AO
{
  public: //--------------------------------------------------------------------
  ~C_AO_DOS () { }
  C_AO_DOS ()
  {
    ao_name = "DOS";
    ao_desc = "Deterministic Oscillatory Search";
    ao_link = "https://www.mql5.com/en/articles/18154";

    // Set default parameters
    popSize        = 30;   // population size
    movementFactor = 0.95; // movement factor towards the best solution

    // Create and initialize the parameters array
    ArrayResize (params, 2);
    params [0].name = "Population Size"; params [0].val = popSize;
    params [1].name = "Movement Factor"; params [1].val = movementFactor;
  }

  void SetParams ()
  {
    // Set parameter values with validation
    popSize        = (int)MathMax (5, params [0].val);              // Minimum 5 particles for efficiency
    movementFactor = MathMax (0.1, MathMin (1.0, params [1].val)); // Limit from 0.1 to 1.0
  }

  bool Init (const double &rangeMinP  [],  // minimum values
             const double &rangeMaxP  [],  // maximum values
             const double &rangeStepP [],  // step change
             const int     epochsP = 0);

  void Moving   ();
  void Revision ();

  //----------------------------------------------------------------------------
  double         movementFactor; // movement factor towards the best solution
  S_DOS_Velocity velocities [];  // Array of particle velocity structures

  private: //-------------------------------------------------------------------
  void InitializeParticles ();
  void ProcessParticleMovement (int particleIndex);
};
//——————————————————————————————————————————————————————————————————————————————
```

The Init method is responsible for preparing the algorithm for execution. First, the base initialization method is called, which sets up the initial search ranges and steps required for the operation. After successful basic initialization, memory is allocated for arrays, in which information about particle velocities will be stored.

Then the velocity structure of each particle is initialized: every velocity component is set to zero and the slope to "unknown". Finally, InitializeParticles() places the particles at their starting points in the search space in a deterministic manner.

The result of this method is a prepared population of particles with certain initial conditions, ready to begin the iterative search process.

```mql5
//——————————————————————————————————————————————————————————————————————————————
bool C_AO_DOS::Init (const double &rangeMinP  [],  // minimum values
                     const double &rangeMaxP  [],  // maximum values
                     const double &rangeStepP [],  // step change
                     const int     epochsP = 0)    // number of epochs
{
  // Standard C_AO initialization
  if (!StandardInit (rangeMinP, rangeMaxP, rangeStepP)) return false;

  //----------------------------------------------------------------------------
  // Allocating memory for arrays
  ArrayResize (velocities, popSize);

  // Initialize the velocities for each dimension
  for (int i = 0; i < popSize; i++) velocities [i].Init (coords);

  // Initialize particle positions deterministically
  InitializeParticles ();

  return true;
}
//——————————————————————————————————————————————————————————————————————————————
```

The Moving method implements particle movement in the DOS search space, processing the particles in order from the first to the last. For each particle, the current fitness value f is first saved to fP, so that after the move it can be compared with the new value to detect an improvement or a deterioration of the particle's position.

Each coordinate (dimension) of the particle is then updated by adding the corresponding velocity component. The new coordinate is passed through u.SeInDiSp(), which keeps it within [rangeMin[d], rangeMax[d]] and rounds it to the nearest allowed step rangeStep[d], so that particles never leave the permitted search area.

As a result, the Moving method changes the positions of particles in the search space, preserving information about their previous state and ensuring the validity of the new coordinates, taking into account constraints and discretization.

```mql5
//+----------------------------------------------------------------------------+
//| Basic method of particle movement                                          |
//+----------------------------------------------------------------------------+
void C_AO_DOS::Moving ()
{
  // Handle all particles
  for (int i = 0; i < popSize; i++)
  {
    // Save the fitness value
    a [i].fP = a [i].f;

    // Calculate new coordinates based on velocity
    for (int d = 0; d < coords; d++)
    {
      // Update position
      a [i].c [d] += velocities [i].v [d];

      // Round to the nearest acceptable step
      a [i].c [d] = u.SeInDiSp (a [i].c [d], rangeMin [d], rangeMax [d], rangeStep [d]);
    }
  }
}
//——————————————————————————————————————————————————————————————————————————————
```
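u.SeInDiSp() comes from the accompanying Utilities.mqh library, and its source is not shown in the article. Judging by the comment in Moving(), it clamps a coordinate to the range and rounds it to the nearest step. The following C++ function is a hypothetical stand-in with that behavior, not the library's code; the final clamp is an added safeguard for ranges whose width is not a multiple of the step.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical stand-in for u.SeInDiSp(): clamp x into [minV, maxV] and, when
// step > 0, snap it to the grid minV + k * step.
double SnapToRange(double x, double minV, double maxV, double step)
{
    if (x <= minV) return minV;
    if (x >= maxV) return maxV;
    if (step <= 0.0) return x;                        // continuous coordinate
    const double snapped = minV + step * std::round((x - minV) / step);
    return std::fmin(snapped, maxV);                  // rounding up may overshoot maxV
}
```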

The Revision method updates the information about the best solution and handles particle movement during the search. It processes all particles in the population in order. For each particle, it checks whether the particle's current fitness exceeds the global best fitness fB; if so, fB is updated and the particle's coordinates are copied to cB. Then ProcessParticleMovement() is called for the particle to adjust its velocity and slope based on the change in its fitness.

The result of the method operation is updating the best solutions and preparing the system for the next stage of the search, when particles can continue moving taking into account the latest updates.

```mql5
//+----------------------------------------------------------------------------+
//| Fitness function update method                                             |
//+----------------------------------------------------------------------------+
void C_AO_DOS::Revision ()
{
  // Handle each particle
  for (int i = 0; i < popSize; i++)
  {
    // Update the best solution if the current solution is better
    if (a [i].f > fB)
    {
      fB = a [i].f;
      ArrayCopy (cB, a [i].c, 0, 0, WHOLE_ARRAY);
    }

    // Handle particle motion based on fitness change
    ProcessParticleMovement (i);
  }
}
//——————————————————————————————————————————————————————————————————————————————
```

The ProcessParticleMovement method adjusts the movement of an individual particle based on the change in its fitness and its current slope. The listing below does not validate the index; Revision() always passes a valid one. For convenience, the particle's current fitness, its previous fitness, and its current slope are copied into local variables. Based on the difference between the current and previous fitness and on the current slope, the method decides how the particle should move:

- if the slope is unknown, it is set to positive or negative according to the sign of the fitness change, or left unknown if the fitness did not change;

- if the slope was positive but fitness worsened, every velocity component is multiplied by -0.5 (the direction reverses and the speed halves), and the slope switches to negative;

- if the slope was negative and fitness worsens again, "swarming" occurs: the vector from the particle to the global best solution, scaled by movementFactor, is added to the velocity, and the slope is reset to unknown.

Finally, if all velocity components are practically zero, the particle is restarted towards the global optimum: its velocity is set to the difference between the coordinates of the global best solution and its current coordinates, multiplied by movementFactor, and the slope is reset to unknown. As a result, the direction and magnitude of the velocity adapt to the situation, which allows the particle to respond to changes in fitness and "learn" to move in a promising direction.

The ultimate goal of the method is to provide dynamic and adaptive motion of particles, taking into account changes in their local and global solutions for an efficient search for the optimum.

```mql5
//+----------------------------------------------------------------------------+
//| Handle particle motion after fitness update                                |
//+----------------------------------------------------------------------------+
void C_AO_DOS::ProcessParticleMovement (int particleIndex)
{
  // Local variables for access optimization
  double currentFitness  = a [particleIndex].f;
  double previousFitness = a [particleIndex].fP;
  int    currentSlope    = velocities [particleIndex].slope;

  // Comparison of fitnesses to determine the movement direction
  double fitnessDiff = currentFitness - previousFitness;

  // Handle a slope according to the current state
  if (currentSlope == 0) // Unknown slope
  {
    // Determine the slope based on the change in fitness
    velocities [particleIndex].slope = (fitnessDiff > 0) ? 1 : (fitnessDiff < 0) ? -1 : 0;
  }
  else if (currentSlope == 1 && fitnessDiff < 0) // Positive slope and deterioration of fitness
  {
    // Change direction and decrease velocity
    for (int d = 0; d < coords; d++) velocities [particleIndex].v [d] *= -0.5; // Reverse direction and halve the speed

    velocities [particleIndex].slope = -1; // Change the slope to negative
  }
  else if (currentSlope == -1 && fitnessDiff < 0) // Negative slope and fitness degradation
  {
    // Apply the swarming mechanism - movement towards the global optimum
    for (int d = 0; d < coords; d++)
    {
      velocities [particleIndex].v [d] += (cB [d] - a [particleIndex].c [d]) * movementFactor;
    }

    velocities [particleIndex].slope = 0; // Reset the slope as unknown
  }

  // Check for zero velocity using the structure method
  if (velocities [particleIndex].IsZero ())
  {
    // Initialize the velocity by moving towards the global optimum
    for (int d = 0; d < coords; d++)
    {
      velocities [particleIndex].v [d] = (cB [d] - a [particleIndex].c [d]) * movementFactor;
    }

    // Reset the slope
    velocities [particleIndex].slope = 0;
  }
}
//——————————————————————————————————————————————————————————————————————————————
```

Test results

Now let's look at the results. It can be seen that the algorithm based on a gradient method with a built-in swarming effect copes with the tasks better than conventional gradient methods.

=============================

5 Hilly's; Func runs: 10000; result: 0.3422040822277234

25 Hilly's; Func runs: 10000; result: 0.3421751631202356

500 Hilly's; Func runs: 10000; result: 0.3421605659711745

=============================

5 Forest's; Func runs: 10000; result: 0.5708601371368296

25 Forest's; Func runs: 10000; result: 0.34628707444514434

500 Forest's; Func runs: 10000; result: 0.32879379664917996

=============================

5 Megacity's; Func runs: 10000; result: 0.19999999999999998

25 Megacity's; Func runs: 10000; result: 0.20923076923076928

500 Megacity's; Func runs: 10000; result: 0.23076923076922945

=============================

All score: 2.91248 (32.36%)
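The aggregate figure can be checked by hand. Assuming each of the nine results is normalized so that 1.0 is the best possible value (which matches the 0 to 100% scale of the rating table), the total score is their sum and the percentage is the total divided by 9. A small C++ check, where AggregateScore is a hypothetical helper:

```cpp
#include <cassert>
#include <cmath>

struct Score { double total; double percent; };

// Sum the nine printed results and express the total as a share of the
// assumed maximum of 9 (nine tests, each with a best value of 1.0).
Score AggregateScore()
{
    const double results[9] = {
        0.3422040822277234,  0.3421751631202356,  0.3421605659711745,  // Hilly
        0.5708601371368296,  0.34628707444514434, 0.32879379664917996, // Forest
        0.19999999999999998, 0.20923076923076928, 0.23076923076922945  // Megacity
    };
    double total = 0.0;
    for (double r : results) total += r;
    return {total, total / 9.0 * 100.0};
}
```

The sum comes to about 2.91248, or about 32.36% of the maximum, in agreement with the printed score and with the DOS row of the rating table.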

The visualization shows a large scatter in the results for low-dimensional functions.

DOS on the Hilly test function

DOS on the Forest test function

DOS on the Megacity test function

DOS is included in the rating table of population optimization algorithms for informational purposes only.

| # | AO | Description | Hilly 10 p (5 F) | Hilly 50 p (25 F) | Hilly 1000 p (500 F) | Hilly Final | Forest 10 p (5 F) | Forest 50 p (25 F) | Forest 1000 p (500 F) | Forest Final | Megacity (discrete) 10 p (5 F) | Megacity (discrete) 50 p (25 F) | Megacity (discrete) 1000 p (500 F) | Megacity Final | Final Result | % of MAX |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | ANS | across neighbourhood search | 0.94948 | 0.84776 | 0.43857 | 2.23581 | 1.00000 | 0.92334 | 0.39988 | 2.32323 | 0.70923 | 0.63477 | 0.23091 | 1.57491 | 6.134 | 68.15 |

| 2 | CLA | code lock algorithm (joo) | 0.95345 | 0.87107 | 0.37590 | 2.20042 | 0.98942 | 0.91709 | 0.31642 | 2.22294 | 0.79692 | 0.69385 | 0.19303 | 1.68380 | 6.107 | 67.86 |

| 3 | AMOm | animal migration optimization M | 0.90358 | 0.84317 | 0.46284 | 2.20959 | 0.99001 | 0.92436 | 0.46598 | 2.38034 | 0.56769 | 0.59132 | 0.23773 | 1.39675 | 5.987 | 66.52 |

| 4 | (P+O)ES | (P+O) evolution strategies | 0.92256 | 0.88101 | 0.40021 | 2.20379 | 0.97750 | 0.87490 | 0.31945 | 2.17185 | 0.67385 | 0.62985 | 0.18634 | 1.49003 | 5.866 | 65.17 |

| 5 | CTA | comet tail algorithm (joo) | 0.95346 | 0.86319 | 0.27770 | 2.09435 | 0.99794 | 0.85740 | 0.33949 | 2.19484 | 0.88769 | 0.56431 | 0.10512 | 1.55712 | 5.846 | 64.96 |

| 6 | TETA | time evolution travel algorithm (joo) | 0.91362 | 0.82349 | 0.31990 | 2.05701 | 0.97096 | 0.89532 | 0.29324 | 2.15952 | 0.73462 | 0.68569 | 0.16021 | 1.58052 | 5.797 | 64.41 |

| 7 | SDSm | stochastic diffusion search M | 0.93066 | 0.85445 | 0.39476 | 2.17988 | 0.99983 | 0.89244 | 0.19619 | 2.08846 | 0.72333 | 0.61100 | 0.10670 | 1.44103 | 5.709 | 63.44 |

| 8 | BOAm | billiards optimization algorithm M | 0.95757 | 0.82599 | 0.25235 | 2.03590 | 1.00000 | 0.90036 | 0.30502 | 2.20538 | 0.73538 | 0.52523 | 0.09563 | 1.35625 | 5.598 | 62.19 |

| 9 | AAm | archery algorithm M | 0.91744 | 0.70876 | 0.42160 | 2.04780 | 0.92527 | 0.75802 | 0.35328 | 2.03657 | 0.67385 | 0.55200 | 0.23738 | 1.46323 | 5.548 | 61.64 |

| 10 | ESG | evolution of social groups (joo) | 0.99906 | 0.79654 | 0.35056 | 2.14616 | 1.00000 | 0.82863 | 0.13102 | 1.95965 | 0.82333 | 0.55300 | 0.04725 | 1.42358 | 5.529 | 61.44 |

| 11 | SIA | simulated isotropic annealing (joo) | 0.95784 | 0.84264 | 0.41465 | 2.21513 | 0.98239 | 0.79586 | 0.20507 | 1.98332 | 0.68667 | 0.49300 | 0.09053 | 1.27020 | 5.469 | 60.76 |

| 12 | ACS | artificial cooperative search | 0.75547 | 0.74744 | 0.30407 | 1.80698 | 1.00000 | 0.88861 | 0.22413 | 2.11274 | 0.69077 | 0.48185 | 0.13322 | 1.30583 | 5.226 | 58.06 |

| 13 | DA | dialectical algorithm | 0.86183 | 0.70033 | 0.33724 | 1.89940 | 0.98163 | 0.72772 | 0.28718 | 1.99653 | 0.70308 | 0.45292 | 0.16367 | 1.31967 | 5.216 | 57.95 |

| 14 | BHAm | black hole algorithm M | 0.75236 | 0.76675 | 0.34583 | 1.86493 | 0.93593 | 0.80152 | 0.27177 | 2.00923 | 0.65077 | 0.51646 | 0.15472 | 1.32195 | 5.196 | 57.73 |

| 15 | ASO | anarchy society optimization | 0.84872 | 0.74646 | 0.31465 | 1.90983 | 0.96148 | 0.79150 | 0.23803 | 1.99101 | 0.57077 | 0.54062 | 0.16614 | 1.27752 | 5.178 | 57.54 |

| 16 | RFO | royal flush optimization (joo) | 0.83361 | 0.73742 | 0.34629 | 1.91733 | 0.89424 | 0.73824 | 0.24098 | 1.87346 | 0.63154 | 0.50292 | 0.16421 | 1.29867 | 5.089 | 56.55 |

| 17 | AOSm | atomic orbital search M | 0.80232 | 0.70449 | 0.31021 | 1.81702 | 0.85660 | 0.69451 | 0.21996 | 1.77107 | 0.74615 | 0.52862 | 0.14358 | 1.41835 | 5.006 | 55.63 |

| 18 | TSEA | turtle shell evolution algorithm (joo) | 0.96798 | 0.64480 | 0.29672 | 1.90949 | 0.99449 | 0.61981 | 0.22708 | 1.84139 | 0.69077 | 0.42646 | 0.13598 | 1.25322 | 5.004 | 55.60 |

| 19 | DE | differential evolution | 0.95044 | 0.61674 | 0.30308 | 1.87026 | 0.95317 | 0.78896 | 0.16652 | 1.90865 | 0.78667 | 0.36033 | 0.02953 | 1.17653 | 4.955 | 55.06 |

| 20 | SRA | successful restaurateur algorithm (joo) | 0.96883 | 0.63455 | 0.29217 | 1.89555 | 0.94637 | 0.55506 | 0.19124 | 1.69267 | 0.74923 | 0.44031 | 0.12526 | 1.31480 | 4.903 | 54.48 |

| 21 | CRO | chemical reaction optimization | 0.94629 | 0.66112 | 0.29853 | 1.90593 | 0.87906 | 0.58422 | 0.21146 | 1.67473 | 0.75846 | 0.42646 | 0.12686 | 1.31178 | 4.892 | 54.36 |

| 22 | BIO | blood inheritance optimization (joo) | 0.81568 | 0.65336 | 0.30877 | 1.77781 | 0.89937 | 0.65319 | 0.21760 | 1.77016 | 0.67846 | 0.47631 | 0.13902 | 1.29378 | 4.842 | 53.80 |

| 23 | BSA | bird swarm algorithm | 0.89306 | 0.64900 | 0.26250 | 1.80455 | 0.92420 | 0.71121 | 0.24939 | 1.88479 | 0.69385 | 0.32615 | 0.10012 | 1.12012 | 4.809 | 53.44 |

| 24 | HS | harmony search | 0.86509 | 0.68782 | 0.32527 | 1.87818 | 0.99999 | 0.68002 | 0.09590 | 1.77592 | 0.62000 | 0.42267 | 0.05458 | 1.09725 | 4.751 | 52.79 |

| 25 | SSG | saplings sowing and growing | 0.77839 | 0.64925 | 0.39543 | 1.82308 | 0.85973 | 0.62467 | 0.17429 | 1.65869 | 0.64667 | 0.44133 | 0.10598 | 1.19398 | 4.676 | 51.95 |

| 26 | BCOm | bacterial chemotaxis optimization M | 0.75953 | 0.62268 | 0.31483 | 1.69704 | 0.89378 | 0.61339 | 0.22542 | 1.73259 | 0.65385 | 0.42092 | 0.14435 | 1.21912 | 4.649 | 51.65 |

| 27 | ABO | african buffalo optimization | 0.83337 | 0.62247 | 0.29964 | 1.75548 | 0.92170 | 0.58618 | 0.19723 | 1.70511 | 0.61000 | 0.43154 | 0.13225 | 1.17378 | 4.634 | 51.49 |

| 28 | (PO)ES | (PO) evolution strategies | 0.79025 | 0.62647 | 0.42935 | 1.84606 | 0.87616 | 0.60943 | 0.19591 | 1.68151 | 0.59000 | 0.37933 | 0.11322 | 1.08255 | 4.610 | 51.22 |

| 29 | FBA | Fractal-Based Algorithm | 0.79000 | 0.65134 | 0.28965 | 1.73099 | 0.87158 | 0.56823 | 0.18877 | 1.62858 | 0.61077 | 0.46062 | 0.12398 | 1.19537 | 4.555 | 50.61 |

| 30 | TSm | tabu search M | 0.87795 | 0.61431 | 0.29104 | 1.78330 | 0.92885 | 0.51844 | 0.19054 | 1.63783 | 0.61077 | 0.38215 | 0.12157 | 1.11449 | 4.536 | 50.40 |

| 31 | BSO | brain storm optimization | 0.93736 | 0.57616 | 0.29688 | 1.81041 | 0.93131 | 0.55866 | 0.23537 | 1.72534 | 0.55231 | 0.29077 | 0.11914 | 0.96222 | 4.498 | 49.98 |

| 32 | WOAm | whale optimization algorithm M | 0.84521 | 0.56298 | 0.26263 | 1.67081 | 0.93100 | 0.52278 | 0.16365 | 1.61743 | 0.66308 | 0.41138 | 0.11357 | 1.18803 | 4.476 | 49.74 |

| 33 | AEFA | artificial electric field algorithm | 0.87700 | 0.61753 | 0.25235 | 1.74688 | 0.92729 | 0.72698 | 0.18064 | 1.83490 | 0.66615 | 0.11631 | 0.09508 | 0.87754 | 4.459 | 49.55 |

| 34 | AEO | artificial ecosystem-based optimization algorithm | 0.91380 | 0.46713 | 0.26470 | 1.64563 | 0.90223 | 0.43705 | 0.21400 | 1.55327 | 0.66154 | 0.30800 | 0.28563 | 1.25517 | 4.454 | 49.49 |

| 35 | CAm | camel algorithm M | 0.78684 | 0.56042 | 0.35133 | 1.69859 | 0.82772 | 0.56041 | 0.24336 | 1.63149 | 0.64846 | 0.33092 | 0.13418 | 1.11356 | 4.444 | 49.37 |

| 36 | ACOm | ant colony optimization M | 0.88190 | 0.66127 | 0.30377 | 1.84693 | 0.85873 | 0.58680 | 0.15051 | 1.59604 | 0.59667 | 0.37333 | 0.02472 | 0.99472 | 4.438 | 49.31 |

| 37 | BFO-GA | bacterial foraging optimization - ga | 0.89150 | 0.55111 | 0.31529 | 1.75790 | 0.96982 | 0.39612 | 0.06305 | 1.42899 | 0.72667 | 0.27500 | 0.03525 | 1.03692 | 4.224 | 46.93 |

| 38 | SOA | simple optimization algorithm | 0.91520 | 0.46976 | 0.27089 | 1.65585 | 0.89675 | 0.37401 | 0.16984 | 1.44060 | 0.69538 | 0.28031 | 0.10852 | 1.08422 | 4.181 | 46.45 |

| 39 | ABHA | artificial bee hive algorithm | 0.84131 | 0.54227 | 0.26304 | 1.64663 | 0.87858 | 0.47779 | 0.17181 | 1.52818 | 0.50923 | 0.33877 | 0.10397 | 0.95197 | 4.127 | 45.85 |

| 40 | ACMO | atmospheric cloud model optimization | 0.90321 | 0.48546 | 0.30403 | 1.69270 | 0.80268 | 0.37857 | 0.19178 | 1.37303 | 0.62308 | 0.24400 | 0.10795 | 0.97503 | 4.041 | 44.90 |

| 41 | ADAMm | adaptive moment estimation M | 0.88635 | 0.44766 | 0.26613 | 1.60014 | 0.84497 | 0.38493 | 0.16889 | 1.39880 | 0.66154 | 0.27046 | 0.10594 | 1.03794 | 4.037 | 44.85 |

| 42 | CGO | chaos game optimization | 0.57256 | 0.37158 | 0.32018 | 1.26432 | 0.61176 | 0.61931 | 0.62161 | 1.85267 | 0.37538 | 0.21923 | 0.19028 | 0.78490 | 3.902 | 43.35 |

| 43 | ATAm | artificial tribe algorithm M | 0.71771 | 0.55304 | 0.25235 | 1.52310 | 0.82491 | 0.55904 | 0.20473 | 1.58867 | 0.44000 | 0.18615 | 0.09411 | 0.72026 | 3.832 | 42.58 |

| 44 | CROm | coral reefs optimization M | 0.78512 | 0.46032 | 0.25958 | 1.50502 | 0.86688 | 0.35297 | 0.16267 | 1.38252 | 0.63231 | 0.26738 | 0.10734 | 1.00703 | 3.895 | 43.27 |

| 45 | CFO | central force optimization | 0.60961 | 0.54958 | 0.27831 | 1.43750 | 0.63418 | 0.46833 | 0.22541 | 1.32792 | 0.57231 | 0.23477 | 0.09586 | 0.90294 | 3.668 | 40.76 |

| | DOS | deterministic oscillatory search | 0.34220 | 0.34218 | 0.34216 | 1.02654 | 0.57086 | 0.34629 | 0.32879 | 1.24594 | 0.20000 | 0.20923 | 0.23077 | 0.64000 | 2.912 | 32.36 |

| | RW | random walk | 0.48754 | 0.32159 | 0.25781 | 1.06694 | 0.37554 | 0.21944 | 0.15877 | 0.75375 | 0.27969 | 0.14917 | 0.09847 | 0.52734 | 2.348 | 26.09 |

Summary

The algorithm has a minimal number of parameters to configure (population size and movement factor), which simplifies its application and reduces the risk of misconfiguration.

The three-state slope system (positive, negative, unknown) ensures adaptive particle behavior depending on the quality of the current search direction. This is the algorithm's main innovation. The absence of complex mathematical operations makes it computationally efficient.

Its main strength lies in combining the efficiency of swarm algorithms with the reliability and reproducibility of deterministic methods. Although deterministic methods are generally inferior to stochastic ones, they are justified for some problems.

Figure 2. Color gradation of algorithms according to the corresponding tests

Figure 3. Histogram of algorithm testing results (scale from 0 to 100, the higher the better, where 100 is the maximum possible theoretical result; the archive includes a script for calculating the rating table)

DOS pros and cons:

Pros:

- Fast.

- Very simple implementation.

Cons:

- Scatter of results on low-dimensional functions.

The article is accompanied by an archive with the current versions of the algorithm codes. The author of the article is not responsible for the absolute accuracy in the description of canonical algorithms. Changes have been made to many of them to improve search capabilities. The conclusions and judgments presented in the articles are based on the results of the experiments.

Programs used in the article

| # | Name | Type | Description |

|---|---|---|---|

| 1 | #C_AO.mqh | Include | Parent class of population optimization algorithms |

| 2 | #C_AO_enum.mqh | Include | Enumeration of population optimization algorithms |

| 3 | TestFunctions.mqh | Include | Library of test functions |

| 4 | TestStandFunctions.mqh | Include | Test stand function library |

| 5 | Utilities.mqh | Include | Library of auxiliary functions |

| 6 | CalculationTestResults.mqh | Include | Script for calculating results in the comparison table |

| 7 | Testing AOs.mq5 | Script | The unified test stand for all population optimization algorithms |

| 8 | Simple use of population optimization algorithms.mq5 | Script | A simple example of using population optimization algorithms without visualization |

| 9 | Test_AO_DOS.mq5 | Script | DOS test stand |

Translated from Russian by MetaQuotes Ltd.

Original article: https://www.mql5.com/ru/articles/18154

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
