Neural Networks in Trading: Adaptive Detection of Market Anomalies (Final Part)

Introduction

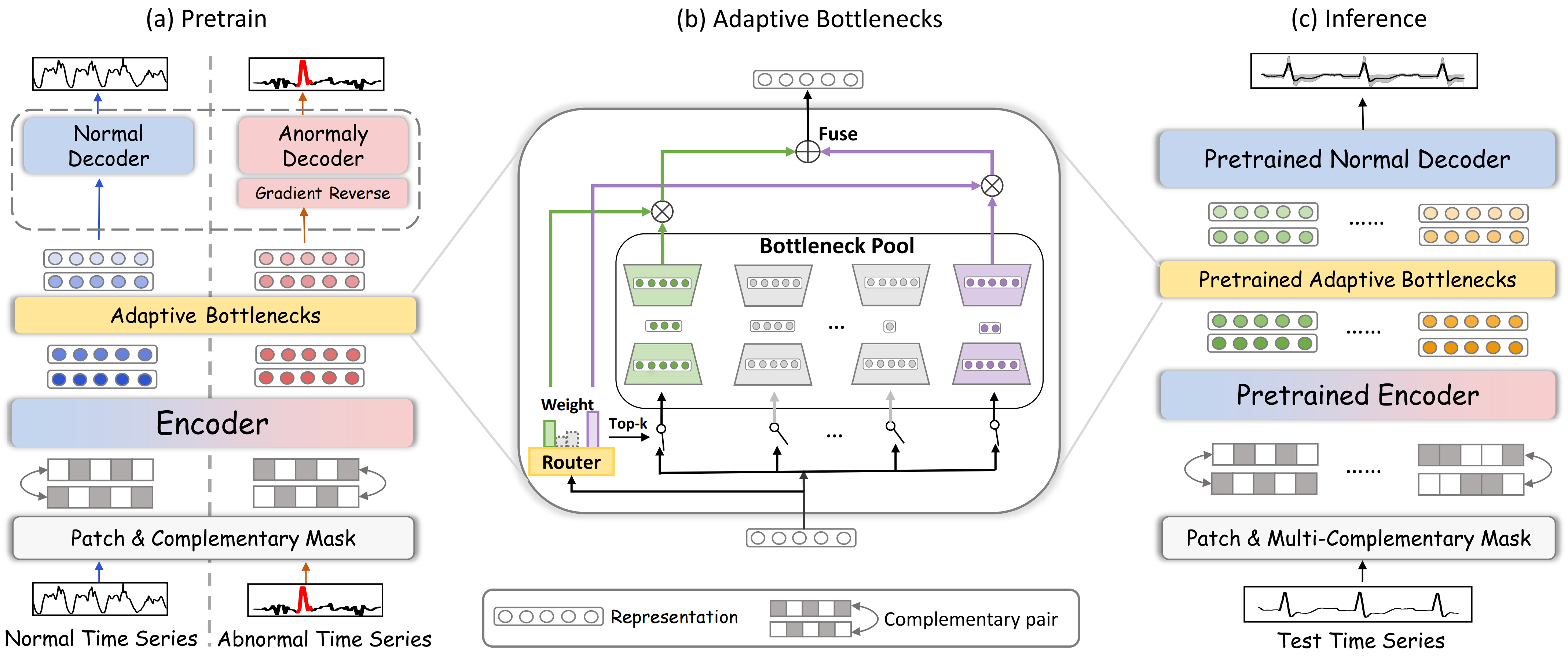

In the previous article, we explored the theoretical foundations of the DADA framework (Adaptive Bottlenecks and Dual Adversarial Decoders), designed to detect anomalies in time series using deep learning techniques. This tool enables effective data analysis and the identification of abnormal market conditions, which is particularly critical in highly volatile environments. By using adaptive information processing methods, the model can flexibly adjust to changing market dynamics, making it a versatile solution for analyzing a wide range of time series.

The architecture of the DADA framework is built around three key components, each serving a distinct purpose. The first is the Adaptive Bottlenecks module, which can dynamically adjust the level of compression applied to the input data. This approach helps preserve the most important characteristics of market data while minimizing information loss that could degrade analytical accuracy. Unlike traditional models with fixed compression parameters, this system adapts in real time to current market conditions.

The second major component is a pair of adversarial decoders. The first decoder reconstructs normal market states, allowing the model to better capture typical behavioral patterns. The second focuses on anomalous data, enabling clear separation between standard and abnormal scenarios. This dual-decoder setup reduces false positives and improves overall model robustness.

The third key element is the patching and masking mechanism, which plays an essential role in processing time series data. It allows the model to dynamically highlight critical segments, suppress noise, and improve the quality of data representation. Patching breaks the data into smaller segments, enabling the model to analyze local patterns more effectively. Random masking enhances training by forcing the model to reconstruct hidden portions of the input, so that it learns latent dependencies in the data. This improves its ability to detect complex structures and patterns. Together, these techniques increase anomaly detection accuracy and make the model more resilient to market fluctuations. Masking also improves generalization by preventing overfitting to specific segments of market data.
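The following minimal C++ sketch makes the two operations concrete. It uses plain C++ rather than MQL5 so that it compiles on its own. It splits one variable's series into non-overlapping patches and hides random elements with a given probability.

Several details are assumptions made for illustration:
- `make_patches` and `random_mask` are hypothetical helper names, not library code.
- The last patch is zero-padded.
- Hidden values are replaced with zero and visible values are not rescaled.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

// Split a univariate series into non-overlapping patches of length patch_len.
// The last patch is zero-padded when the length is not a multiple of patch_len.
std::vector<std::vector<float>> make_patches(const std::vector<float>& series, std::size_t patch_len) {
    std::size_t n_patches = (series.size() + patch_len - 1) / patch_len;  // ceiling division
    std::vector<std::vector<float>> patches(n_patches, std::vector<float>(patch_len, 0.0f));
    for (std::size_t i = 0; i < series.size(); ++i)
        patches[i / patch_len][i % patch_len] = series[i];
    return patches;
}

// Hide each element with probability mask_rate by setting it to zero.
// mask receives 1 for visible elements and 0 for hidden ones.
// Unlike a typical inverted-dropout layer, visible values are not rescaled here.
std::vector<float> random_mask(const std::vector<float>& x, double mask_rate, unsigned seed,
                               std::vector<int>& mask) {
    std::mt19937 gen(seed);
    std::bernoulli_distribution hide(mask_rate);
    std::vector<float> out(x);
    mask.assign(x.size(), 1);
    for (std::size_t i = 0; i < x.size(); ++i) {
        if (hide(gen)) {
            out[i] = 0.0f;
            mask[i] = 0;
        }
    }
    return out;
}
```

During training, the model would see the output of `random_mask`, while the reconstruction target remains the unmasked series.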

One of DADA's main advantages is its adaptability. Traditional algorithms require retraining when market conditions change. DADA instead adapts how it processes each input, for example by selecting the most suitable bottlenecks for the current data, without retraining. This is especially important in high-frequency trading, where decisions must be made in fractions of a second. The ability to adapt dynamically allows the model to perform effectively across a wide range of market scenarios, from stable trends to sudden spikes in volatility.

The original visualization of the DADA framework is presented below.

In the practical part of the previous article, we implemented a multi-window convolutional layer object CNeuronMultiWindowsConvOCL. It's worth noting that this component does not directly follow from the original description of the DADA architecture. However, in our implementation, it plays a key role within the Adaptive Bottlenecks module. Specifically, it enables dynamic adjustment of the data compression level.

Adaptive Bottlenecks Module

The next major step is the actual construction of the Adaptive Bottlenecks module. This module is a powerful tool for dynamically processing input data, allowing efficient analysis of complex time series and the detection of anomalies in their behavior.

As mentioned earlier, it is conceptually similar to the Mixture of Experts (MoE) module, previously implemented in the CNeuronMoE object. Both approaches rely on multiple smaller models operating in parallel to analyze the input data. The system dynamically selects the top k most relevant mini-models for processing each segment, based on its context. This improves both adaptability and accuracy by focusing computation on the most relevant patterns.
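Top-k selection can be illustrated with a generic C++ sketch of sparse Mixture-of-Experts gating. The sketch assumes the gate logits for one segment are already computed. It keeps the k largest logits, normalizes them with SoftMax, assigns zero weight to every other expert, and mixes the expert outputs with the resulting weights.

This is a textbook formulation, not the code of CNeuronTopKGates, whose internals are not shown in this article. `topk_gates` and `mix_experts` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <numeric>
#include <vector>

// Keep the k largest gate logits, apply SoftMax over them, zero the rest.
std::vector<float> topk_gates(const std::vector<float>& logits, std::size_t k) {
    assert(k >= 1 && k <= logits.size());
    std::vector<std::size_t> idx(logits.size());
    std::iota(idx.begin(), idx.end(), 0);
    // Order only the first k indices by descending logit value.
    std::partial_sort(idx.begin(), idx.begin() + k, idx.end(),
                      [&](std::size_t a, std::size_t b) { return logits[a] > logits[b]; });
    float max_logit = logits[idx[0]];  // subtract the maximum for numerical stability
    std::vector<float> gates(logits.size(), 0.0f);
    float sum = 0.0f;
    for (std::size_t j = 0; j < k; ++j) {
        gates[idx[j]] = std::exp(logits[idx[j]] - max_logit);
        sum += gates[idx[j]];
    }
    for (std::size_t j = 0; j < k; ++j) gates[idx[j]] /= sum;
    return gates;
}

// Weighted sum of expert outputs; experts with zero weight are skipped.
std::vector<float> mix_experts(const std::vector<std::vector<float>>& expert_out,
                               const std::vector<float>& gates) {
    std::vector<float> y(expert_out[0].size(), 0.0f);
    for (std::size_t e = 0; e < expert_out.size(); ++e)
        if (gates[e] != 0.0f)
            for (std::size_t i = 0; i < y.size(); ++i) y[i] += gates[e] * expert_out[e][i];
    return y;
}
```

Skipping experts with zero weight is what makes the approach economical: only k of the mini-models contribute to each segment.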

The defining feature of Adaptive Bottlenecks is the use of a set of autoencoders as these mini-models, each with a different compression level in the latent space. This allows the model to flexibly adapt to changing conditions and adjust the level of detail in the data representation depending on the characteristics of the time series. As a result, the Adaptive Bottlenecks module effectively reduces redundancy, suppresses noise, and improves the detection of anomalous patterns.

Our implementation of Adaptive Bottlenecks is built within the CNeuronAdaBN object. As expected, it inherits from CNeuronMoE. This approach allows us to reuse key mechanisms of Mixture of Experts such as dynamic load distribution and adaptive expert selection, both of which align naturally with the Adaptive Bottlenecks concept. This is reflected in the structure of the new object.

class CNeuronAdaBN : public CNeuronMoE
  {
public:
                     CNeuronAdaBN(void) {};
                    ~CNeuronAdaBN(void) {};
   virtual bool      Init(uint numOutputs, uint myIndex, COpenCLMy *open_cl,
                          uint window, uint window_out, uint units_count,
                          uint &bottlenecks[], uint top_k, uint variables,
                          ENUM_OPTIMIZATION optimization_type, uint batch);
   //---
   virtual int       Type(void) override const { return defNeuronAdaBN; }
  };

As you can see, we only override the initialization method, without introducing additional internal objects.

Recall that the parent class CNeuronMoE includes a gating mechanism (cGates) for selecting the top k experts, as well as a dynamic array (cExperts) containing pointers to the internal model objects. At first glance, the idea of parallel experts may seem at odds with traditional sequential models. However, we use a sequence of convolutional layers capable of processing independent sequences in parallel. This allows us to construct specialized mini-MLPs that operate concurrently, each with its own trainable parameters.

This architectural choice significantly improves adaptability, as each expert specializes in its own subtask, enabling more effective detection of complex patterns in time series data. Furthermore, the dynamic selection mechanism ensures that only the most relevant experts are engaged during analysis, improving predictive accuracy.

class CNeuronMoE : public CNeuronBaseOCL
  {
protected:
   CNeuronTopKGates  cGates;
   CLayer            cExperts;
   //---
   ..........
   ..........
   ..........
  };

The inherited mechanism for selecting the top k experts fully meets the requirements of the CNeuronAdaBN module, so we reuse it without modification. We only need to populate the inherited dynamic array with a new sequence of objects tailored to our task. The parent class also handles forward and backward propagation, so no additional implementation is required in that regard.

The sequence of internal objects is defined in the Init method, which we override.

bool CNeuronAdaBN::Init(uint numOutputs, uint myIndex, COpenCLMy *open_cl,
                        uint window, uint window_out, uint units_count,
                        uint &bottlenecks[], uint top_k, uint variables,
                        ENUM_OPTIMIZATION optimization_type, uint batch)
  {
   if(!CNeuronBaseOCL::Init(numOutputs, myIndex, open_cl, window * units_count * variables,
                            optimization_type, batch))
      return false;

As usual, the method parameters include a set of constants that define the architecture of the class. Among them, a key parameter is the bottlenecks array, which specifies the latent dimensions of the autoencoders being created.

Inside the method, we first call the initialization method of the base fully connected neural layer. Recall that this class serves as the common ancestor for all neural layer objects in our library. We intentionally skip initializing the direct parent class, as we do not want to initialize its expert pool. However, this decision requires us to manually initialize all inherited components.

After successfully initializing the base interfaces, we proceed to initialize the components inherited from the parent class. First, we initialize the module responsible for selecting the top k experts. The total number of experts is determined by the size of the bottlenecks array. The remaining parameters are passed from the external program.

   int index = 0;
   if(!cGates.Init(0, index, OpenCL, window, units_count * variables, bottlenecks.Size(),
                   top_k, optimization, iBatch))
      return false;

Next, we prepare a dynamic array and local variables to temporarily store pointers to the internal objects of the Adaptive Bottlenecks module.

   cExperts.Clear();
   cExperts.SetOpenCL(OpenCL);
   CNeuronConvOCL *conv = NULL;
   CNeuronMultiWindowsConvOCL *mwconv = NULL;
   CNeuronTransposeRCDOCL *transp = NULL;

We then run a loop to compute the total size of all latent states across the autoencoders.

   uint bn_size = 0;
   for(uint i = 0; i < bottlenecks.Size(); i++)
      bn_size += bottlenecks[i];

With the preparation complete, we move on to constructing the sequence of autoencoder components.

Interestingly, the first layer we create is a standard convolutional layer. At first glance, this may seem like an unusual choice given the complexity of the Adaptive Bottlenecks architecture. However, it plays an important role in the processing pipeline.

The key idea is that the number of filters in this convolutional layer is set equal to the sum of all latent dimensions of the autoencoders. Since each autoencoder processes the same input data, the level of compression depends on its latent size. Each filter operates independently, producing a single element in the output buffer. Conceptually, this can be viewed as a large number of mini-models compressing the input into individual scalar values. These outputs can then be grouped into segments of arbitrary sizes, corresponding to the latent spaces of different autoencoders. The only constraint is that the total number of elements remains constant.
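The grouping idea reduces to offset arithmetic over the output of the shared convolution for one segment, as the C++ sketch below shows.

`latent_offsets` and `latent_of` are hypothetical helpers. In the actual module, the split is not done by copying data. It happens implicitly in the multi-window convolution that follows.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Start offset of each autoencoder's slice within the shared output of bn_size filters.
// bn_size receives the total, i.e. the sum of all latent sizes.
std::vector<std::size_t> latent_offsets(const std::vector<std::size_t>& bottlenecks, std::size_t& bn_size) {
    std::vector<std::size_t> offsets;
    bn_size = 0;
    for (std::size_t b : bottlenecks) {
        offsets.push_back(bn_size);
        bn_size += b;
    }
    return offsets;
}

// Copy out the latent vector of autoencoder e from one segment's filter outputs.
std::vector<float> latent_of(const std::vector<float>& filters_out,
                             const std::vector<std::size_t>& bottlenecks,
                             const std::vector<std::size_t>& offsets, std::size_t e) {
    auto first = filters_out.begin() + static_cast<std::ptrdiff_t>(offsets[e]);
    return std::vector<float>(first, first + static_cast<std::ptrdiff_t>(bottlenecks[e]));
}
```

Because the split is pure indexing, the same filter outputs could be regrouped into a different set of latent sizes without changing the first layer, as long as the total stays equal to bn_size.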

   index++;
   conv = new CNeuronConvOCL();
   if(!conv ||
      !conv.Init(0, index, OpenCL, window, window, bn_size, units_count, variables, optimization, iBatch) ||
      !cExperts.Add(conv))
     {
      delete conv;
      return false;
     }
   conv.SetActivationFunction(SoftPlus);

We make use of this property in the next step, where we introduce the multi-window convolutional layer. Each convolution window takes its own group of elements, which forms the latent representation of a specific autoencoder, and reconstructs the data to the desired size (window_out).

   index++;
   mwconv = new CNeuronMultiWindowsConvOCL();
   if(!mwconv ||
      !mwconv.Init(0, index, OpenCL, bottlenecks, window_out, units_count, variables, optimization, iBatch) ||
      !cExperts.Add(mwconv))
     {
      delete mwconv;   // release the multi-window layer created in this step; conv is already owned by cExperts
      return false;
     }
   mwconv.SetActivationFunction(SoftPlus);

We then add another layer to the decoder part of the autoencoders. Each autoencoder must have its own layer with unique trainable parameters. However, the output of the multi-window convolutional layer can be viewed as a 4D tensor [Variable, Units, Autoencoder, Dimension]. A standard convolutional layer cannot assign unique parameters along the autoencoder axis in this layout. This is easily solved by moving the autoencoder dimension to the front, where the convolutional layer treats it as the variables axis with separate weights. So next, we declare a data transposition layer.
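The C++ sketch below illustrates the idea, assuming a row-major layout with Variable and Units merged into a single axis R, as in the Init call. Swapping the first two axes of an [R][C][D] tensor moves the autoencoder axis C to the front. After that, a grouped linear map can hold a separate weight matrix per autoencoder.

This only illustrates the indexing. It is not the OpenCL kernel behind CNeuronTransposeRCDOCL, and `transpose_rcd` and `grouped_linear` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Swap the first two axes of a row-major [R][C][D] tensor, producing [C][R][D].
std::vector<float> transpose_rcd(const std::vector<float>& x, std::size_t R, std::size_t C, std::size_t D) {
    std::vector<float> y(x.size());
    for (std::size_t r = 0; r < R; ++r)
        for (std::size_t c = 0; c < C; ++c)
            for (std::size_t d = 0; d < D; ++d)
                y[(c * R + r) * D + d] = x[(r * C + c) * D + d];
    return y;
}

// Apply a separate weight matrix to each of the C groups of a [C][R][D_in] tensor.
// weights[c] is a row-major D_out x D_in matrix, so each autoencoder c has its own parameters.
std::vector<float> grouped_linear(const std::vector<float>& x, std::size_t C, std::size_t R,
                                  std::size_t D_in, std::size_t D_out,
                                  const std::vector<std::vector<float>>& weights) {
    std::vector<float> y(C * R * D_out, 0.0f);
    for (std::size_t c = 0; c < C; ++c)
        for (std::size_t r = 0; r < R; ++r)
            for (std::size_t o = 0; o < D_out; ++o) {
                float acc = 0.0f;
                for (std::size_t i = 0; i < D_in; ++i)
                    acc += weights[c][o * D_in + i] * x[(c * R + r) * D_in + i];
                y[(c * R + r) * D_out + o] = acc;
            }
    return y;
}
```

Applying `transpose_rcd` a second time with R and C swapped restores the original layout, which is the role of the final transposition layer in the module.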

   transp = new CNeuronTransposeRCDOCL();
   index++;
   if(!transp ||
      !transp.Init(0, index, OpenCL, units_count * variables, bottlenecks.Size(), window_out, optimization, iBatch) ||
      !cExperts.Add(transp))
     {
      delete transp;
      return false;
     }
   transp.SetActivationFunction((ENUM_ACTIVATION)conv.Activation());

After that, we add a convolutional layer, specifying the number of variables equal to the number of autoencoders. This signals the layer to initialize separate weight matrices for each one.

   index++;
   conv = new CNeuronConvOCL();
   if(!conv ||
      !conv.Init(0, index, OpenCL, window_out, window_out, window, units_count * variables,
                 bottlenecks.Size(), optimization, iBatch) ||
      !cExperts.Add(conv))
     {
      delete conv;
      return false;
     }
   conv.SetActivationFunction(None);

Finally, we add another transpose layer to restore the original data layout.

   transp = new CNeuronTransposeRCDOCL();
   index++;
   if(!transp ||
      !transp.Init(0, index, OpenCL, bottlenecks.Size(), units_count * variables, window, optimization, iBatch) ||
      !cExperts.Add(transp))
     {
      delete transp;
      return false;
     }
   transp.SetActivationFunction((ENUM_ACTIVATION)conv.Activation());
//---
   return true;
  }

With that, the method is complete. It returns true to the calling program to indicate successful initialization.

This concludes our discussion of the implementation approach for the Adaptive Bottlenecks module. As mentioned earlier, forward and backward propagation are handled by the inherited functionality of the parent class. The full source code for this object and all its methods is available in the attached materials.

Model Architecture

In this iteration, we chose not to bundle the entire DADA framework into a single monolithic object. Instead, we rely on well-tested components from our library, integrate the Adaptive Bottlenecks module developed earlier, and assemble everything into a flexible, linear architecture for the trainable model.

This approach gives us significantly more freedom. First, it makes the system more modular — individual components can be replaced or optimized without rewriting the entire codebase. Second, it removes constraints on the encoder and decoder design. We are no longer locked into a rigid structure and can experiment, tailor the model to specific tasks, and search for optimal configurations.

This modular design makes the model description somewhat more complex, but it's a reasonable trade-off for flexibility and adaptability.

In this experiment, we train three models simultaneously, each playing a key role in the overall analysis and decision-making system. This setup forms a multi-level intelligent system capable of analyzing data, adapting to changing market dynamics, forecasting price movements, and refining strategy based on comprehensive data analysis.

The first model is the Environment State Encoder, built on the DADA framework architecture with a normal-state decoder. It is trained as a classical autoencoder, whose primary goal is to reconstruct the input data from the latent space with minimal loss. This allows the system to learn the most informative compressed representation of the data while preserving essential characteristics of the environment. Beyond simple dimensionality reduction, this mechanism uncovers hidden dependencies in the data—crucial for building a high-precision analytical model.

The second key component is the Actor, which replaces the anomalous decoder found in the original DADA architecture. This module is responsible for active interaction with the market environment. Its main objectives are to identify stable trends, detect potential reversal points, and make decisions that optimize the trading strategy.

The Actor processes multidimensional input data, recognizes recurring patterns and evolving market dynamics, and generates trading signals based on these insights. This transforms the system from a purely analytical tool into an adaptive one—capable not only of understanding the data but also of responding to changes and identifying optimal entry and exit points.

However, even with a strong analysis and signal-generation mechanism, forecast accuracy remains critical. This is where the third model comes in. Its primary function is to estimate the most likely direction of future price movement. This component complements the Actor and serves as an additional filter for trading decisions.

The architectures of all three models are defined in the CreateDescriptions method. This method receives three pointers to dynamic arrays (CArrayObj), into which we write the architectural descriptions of the models.

bool CreateDescriptions(CArrayObj *&encoder, CArrayObj *&actor, CArrayObj *&probability)
  {
//---
   CLayerDescription *descr;
//---
   if(!encoder)
     {
      encoder = new CArrayObj();
      if(!encoder)
         return false;
     }
   if(!actor)
     {
      actor = new CArrayObj();
      if(!actor)
         return false;
     }
   if(!probability)
     {
      probability = new CArrayObj();
      if(!probability)
         return false;
     }

At the start of the method, we validate the pointers and create new instances if necessary.

We begin by defining the architecture of the Environment State Encoder. As expected, this model takes as input a tensor describing the current state of the environment. To handle the raw input data, we first introduce a fully connected layer of sufficient size.

//--- Encoder
   encoder.Clear();
//--- Input layer
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBaseOCL;
   int prev_count = descr.count = (HistoryBars * BarDescr);
   descr.activation = None;
   descr.optimization = ADAM;
   if(!encoder.Add(descr)) { delete descr; return false; }

The input consists of raw data from the terminal. Obviously, different variables within this multimodal representation follow different distributions. This makes analysis more difficult. To address this, we apply a batch normalization layer to bring the data into a comparable scale.

//--- layer 1
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBatchNormOCL;
   descr.count = prev_count;
   descr.batch = 1e4;
   descr.activation = None;
   descr.optimization = ADAM;
   if(!encoder.Add(descr)) { delete descr; return false; }

Next, following the DADA framework, we apply patching and masking. For random masking of 20% of the data, we use a Dropout layer.

//--- layer 2
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronDropoutOCL;
   descr.count = prev_count;
   descr.probability = 0.2f;
   descr.activation = None;
   if(!encoder.Add(descr)) { delete descr; return false; }

In the original DADA approach, patching operates on univariate sequences, that is, on each variable separately. To create suitable conditions for processing such sequences, we transpose the data so that each variable forms its own row.

//--- layer 3
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronTransposeOCL;
   descr.window = BarDescr;
   prev_count = descr.count = HistoryBars;
   descr.activation = SoftPlus;
   if(!encoder.Add(descr)) { delete descr; return false; }

We then use convolutional layers to process segments of a given size independently. Specifically, two consecutive convolutional layers encode non-overlapping segments of each variable in parallel and together act as the encoder of the environment model.

//--- layer 4
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronConvOCL;
   descr.window = HistoryBars / Segments;
   prev_count = descr.count = (HistoryBars + descr.window - 1) / descr.window;
   descr.step = descr.window;
   descr.layers = BarDescr;
   descr.activation = SoftPlus;
   int prev_wout = descr.window_out = EmbeddingSize;
   if(!encoder.Add(descr)) { delete descr; return false; }
//--- layer 5
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronConvOCL;
   descr.count = prev_count;
   descr.window = prev_wout;
   descr.step = prev_wout;
   prev_wout = descr.window_out = EmbeddingSize;
   descr.layers = BarDescr;
   descr.activation = TANH;
   if(!encoder.Add(descr)) { delete descr; return false; }

This is followed by the Adaptive Bottlenecks module, where we create 15 small autoencoders with latent dimensions that are multiples of 8. For each segment, we select the 3 most suitable autoencoders for encoding.
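With this configuration, the first convolution of CNeuronAdaBN has bn_size filters, where bn_size is the total latent size: 8 × (1 + 2 + ... + 15) = 8 × 120 = 960.

The short C++ check below reproduces the bottleneck array built in the layer 6 description and confirms this sum. `make_bottlenecks` and `total_latent` are illustrative names only.

```cpp
#include <array>
#include <cassert>
#include <numeric>

// Latent sizes of the 15 autoencoders: 8, 16, ..., 120 (multiples of 8).
std::array<int, 15> make_bottlenecks() {
    std::array<int, 15> b{};
    for (int i = 0; i < 15; ++i) b[i] = (i + 1) * 8;
    return b;
}

// Sum of all latent sizes, i.e. bn_size in CNeuronAdaBN::Init.
int total_latent(const std::array<int, 15>& b) {
    return std::accumulate(b.begin(), b.end(), 0);
}
```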

//--- layer 6
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronAdaBN;
   descr.window = prev_wout;
   descr.count = prev_count;
   descr.window_out = 256;
   descr.step = 3;                  // Top K
   descr.layers = BarDescr;         // Variables
     {
      int temp[15];
      for(uint i = 0; i < temp.Size(); i++)
         temp[i] = int(i + 1) * 8;  // latent sizes 8, 16, ..., 120
      if(ArrayCopy(descr.windows, temp) < (int)temp.Size())
        {
         delete descr;
         return false;
        }
     }
   descr.batch = 1e4;
   descr.activation = None;
   descr.optimization = ADAM;
   if(!encoder.Add(descr)) { delete descr; return false; }

To reconstruct the segments, we use a decoder implemented as two consecutive convolutional layers, mirroring the encoder structure.

//--- layer 7
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronConvOCL;
   descr.count = prev_count * BarDescr;
   descr.window = prev_wout;
   descr.step = prev_wout;
   prev_wout = descr.window_out = EmbeddingSize / 2;
   descr.activation = SoftPlus;
   if(!encoder.Add(descr)) { delete descr; return false; }
//--- layer 8
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronConvOCL;
   descr.count = prev_count * BarDescr;
   descr.window = prev_wout;
   descr.step = prev_wout;
   prev_wout = descr.window_out = HistoryBars / Segments;
   descr.activation = TANH;
   if(!encoder.Add(descr)) { delete descr; return false; }

Note that the decoder output uses the hyperbolic tangent (tanh) activation function, and this is intentional. After batch normalization, the data is centered around zero with a variance close to one. By the three-sigma (68-95-99.7) rule, about 68% of normally distributed values lie within one standard deviation of the mean. With a standard deviation of 1, this is the range [-1, 1], which is exactly the range of the tanh function. This keeps the decoder output within the most probable value range while suppressing outliers.
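The 68% figure can be checked numerically. For a standard normal variable, P(|Z| ≤ k) = erf(k / √2).

The C++ snippet below evaluates this with std::erf; `within_k_sigma` is an illustrative name. It gives about 0.6827 for k = 1 and about 0.9973 for k = 3.

```cpp
#include <cassert>
#include <cmath>

// Probability that a standard normal value lies within [-k, k].
double within_k_sigma(double k) {
    return std::erf(k / std::sqrt(2.0));
}
```

Since tanh only approaches ±1 asymptotically, normalized values beyond one standard deviation can be reconstructed only approximately. This is the outlier suppression mentioned above.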

To evaluate reconstruction quality, we compare the decoder output with the original data. Since we previously transposed the data for processing, we first restore it to its original format via inverse transposition.

//--- layer 9
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronTransposeOCL;
   descr.count = BarDescr;
   descr.window = HistoryBars;
   descr.activation = None;
   if(!encoder.Add(descr)) { delete descr; return false; }

Finally, we map the reconstructed values back to the distribution of the original data.

//--- layer 10
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronRevInDenormOCL;
   descr.count = HistoryBars * BarDescr;
   descr.layers = 1;
   descr.activation = None;
   if(!encoder.Add(descr)) { delete descr; return false; }
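The round trip performed by the normalization and denormalization layers can be sketched in C++ as follows. The sketch assumes, as in RevIN, that simple per-series statistics (mean and standard deviation) are saved at normalization time and reused to map the reconstruction back.

The exact statistics used by the library's batch normalization and defNeuronRevInDenormOCL layers are not shown in this article. `Stats`, `normalize` and `denormalize` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Stats {
    float mean;
    float stdev;
};

// Normalize in place to zero mean and unit variance; return the statistics used.
Stats normalize(std::vector<float>& x, float eps = 1e-5f) {
    float mean = 0.0f;
    for (float v : x) mean += v;
    mean /= static_cast<float>(x.size());
    float var = 0.0f;
    for (float v : x) var += (v - mean) * (v - mean);
    var /= static_cast<float>(x.size());
    float sd = std::sqrt(var + eps);
    for (float& v : x) v = (v - mean) / sd;
    return {mean, sd};
}

// Map values from the normalized space back to the original scale.
void denormalize(std::vector<float>& y, const Stats& s) {
    for (float& v : y) v = v * s.stdev + s.mean;
}
```

Denormalizing the decoder output lets the reconstruction be compared directly with the raw input vector that serves as the Encoder's target.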

The remaining two models operate on the latent representation generated by the Adaptive Bottlenecks module. Therefore, we store a pointer to the description of this layer in a local variable.

//--- Latent
   CLayerDescription *latent = encoder.At(LatentLayer);
   if(!latent)
      return false;

Next, we define the architecture of the Actor. The Actor analyzes the environment in the context of the current account balance and open positions, then determines the optimal trading action. To enable this, the model takes as input a vector describing the account state. We first process this input with a fully connected layer.

//--- Actor
   actor.Clear();
//--- Input layer
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBaseOCL;
   prev_count = descr.count = AccountDescr;
   descr.activation = None;
   descr.optimization = ADAM;
   if(!actor.Add(descr)) { delete descr; return false; }

We then normalize the account data with a batch normalization layer.

//--- layer 1
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBatchNormOCL;
   descr.count = prev_count;
   descr.batch = 1e4;
   descr.activation = None;
   descr.optimization = ADAM;
   if(!actor.Add(descr)) { delete descr; return false; }

We then apply a concatenation layer, combining two data sources: the account state and the compressed environment representation from the Encoder's latent space. This allows the model to consider both financial metrics and abstract environmental features when making decisions.

//--- layer 2
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronConcatenate;
   descr.count = LatentCount;
   descr.window = prev_count;
   descr.step = latent.count * latent.window * latent.layers;
   descr.batch = 1e4;
   descr.activation = SoftPlus;
   descr.optimization = ADAM;
   if(!actor.Add(descr)) { delete descr; return false; }

The combined data is passed through a decision-making module consisting of three fully connected layers. This module extracts key patterns and produces the final action output.

//--- layer 3
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBaseOCL;
   descr.count = LatentCount;
   descr.batch = 1e4;
   descr.activation = TANH;
   descr.optimization = ADAM;
   if(!actor.Add(descr)) { delete descr; return false; }
//--- layer 4
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBaseOCL;
   descr.count = LatentCount;
   descr.activation = TANH;
   descr.batch = 1e4;
   descr.optimization = ADAM;
   if(!actor.Add(descr)) { delete descr; return false; }
//--- layer 5
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBaseOCL;
   prev_count = descr.count = NActions;
   descr.activation = SIGMOID;
   descr.batch = 1e4;
   descr.optimization = ADAM;
   if(!actor.Add(descr)) { delete descr; return false; }

The third model, responsible for predicting the probability of future price movement, has the simplest architecture. It operates solely on the compressed representation of the environment state. We begin with a fully connected layer to process the latent input.

//--- Probability
   probability.Clear();
//--- Input layer
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBaseOCL;
   prev_count = descr.count = latent.count * latent.window * latent.layers;
   descr.activation = latent.activation;
   descr.optimization = ADAM;
   if(!probability.Add(descr)) { delete descr; return false; }

This is followed by a decision module consisting of three fully connected layers.

//--- layer 1
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBaseOCL;
   descr.count = LatentCount;
   descr.activation = TANH;
   descr.batch = 1e4;
   descr.optimization = ADAM;
   if(!probability.Add(descr)) { delete descr; return false; }
//--- layer 2
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBaseOCL;
   descr.count = LatentCount;
   descr.activation = TANH;
   descr.batch = 1e4;
   descr.optimization = ADAM;
   if(!probability.Add(descr)) { delete descr; return false; }
//--- layer 3
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronBaseOCL;
   prev_count = descr.count = NActions / 3;
   descr.activation = SoftPlus;
   descr.batch = 1e4;
   descr.optimization = ADAM;
   if(!probability.Add(descr)) { delete descr; return false; }

Finally, the output is transformed into probabilities using the SoftMax function.

//--- layer 4
   if(!(descr = new CLayerDescription()))
      return false;
   descr.type = defNeuronSoftMaxOCL;
   descr.count = prev_count;
   descr.step = 1;
   descr.activation = None;
   descr.batch = 1e4;
   descr.optimization = ADAM;
   if(!probability.Add(descr)) { delete descr; return false; }
//---
   return true;
  }
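For reference, the C++ sketch below shows a numerically stable SoftMax. Subtracting the maximum before exponentiating leaves the result unchanged but avoids overflow.

This is the standard formulation, assumed rather than taken from the library's defNeuronSoftMaxOCL kernel. The input must be non-empty.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Convert raw scores into probabilities that sum to 1.
std::vector<float> softmax(const std::vector<float>& z) {
    float m = *std::max_element(z.begin(), z.end());
    std::vector<float> p(z.size());
    float sum = 0.0f;
    for (std::size_t i = 0; i < z.size(); ++i) {
        p[i] = std::exp(z[i] - m);
        sum += p[i];
    }
    for (float& v : p) v /= sum;
    return p;
}
```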

A complete architectural description of the models is provided in the attachment.

Model Training

After defining the model architectures, we move on to the next stage—training. The training algorithm is implemented in the Expert Advisor "...\DADA\Study.mq5". Since we need to train three models in parallel, some adjustments were required in the EA's logic. In this article, we won't go through the entire code in detail. We'll focus on the core training method Train.

At the beginning of the method, we perform some basic setup by declaring several local variables.

void Train(void)
  {
//---
   vector<float> probability = vector<float>::Full(Buffer.Size(), 1.0f / Buffer.Size());
//---
   vector<float> result, target, state;
   matrix<float> fstate = matrix<float>::Zeros(1, NForecast * BarDescr);
   bool Stop = false;
//---
   uint ticks = GetTickCount();

The actual training takes place within a nested loop structure. The outer loop iterates over training batches. For each batch, a trajectory is randomly sampled from the experience replay buffer, along with a starting point for training within that trajectory.

   for(int iter = 0; (iter < Iterations && !IsStopped() && !Stop); iter += Batch)
     {
      int tr = SampleTrajectory(probability);
      int start = (int)((MathRand() * MathRand() / MathPow(32767, 2)) *
                        (Buffer[tr].Total - 2 - NForecast - Batch));
      if(start <= 0)
        {
         iter -= Batch;
         continue;
        }
      if(!Encoder.Clear() || !Actor.Clear())
        {
         PrintFormat("%s -> %d", __FUNCTION__, __LINE__);
         Stop = true;
         break;
        }
      result = vector<float>::Zeros(NActions);
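The sampling step can be approximated in C++ as shown below.

The body of SampleTrajectory is not shown in this article. `sample_trajectory` is therefore a hypothetical stand-in that draws a trajectory index in proportion to its weight using std::discrete_distribution. `sample_start` follows the formula in the code above, with an integer draw in 0..32767 playing the role of MathRand().

```cpp
#include <cassert>
#include <random>
#include <vector>

// Hypothetical stand-in for SampleTrajectory: index drawn proportionally to its weight.
int sample_trajectory(const std::vector<double>& weights, std::mt19937& gen) {
    std::discrete_distribution<int> dist(weights.begin(), weights.end());
    return dist(gen);
}

// Start offset computed like the EA: the product of two uniform draws in [0, 1]
// scaled by the usable trajectory length. A non-positive result means "resample".
int sample_start(int total, int n_forecast, int batch, std::mt19937& gen) {
    std::uniform_int_distribution<int> rnd(0, 32767);  // same range as MathRand()
    double u = double(rnd(gen)) * double(rnd(gen)) / (32767.0 * 32767.0);
    return int(u * (total - 2 - n_forecast - batch));
}
```

The product of two independent uniform values has a mean of 1/4 rather than 1/2. As a result, start points in the EA are skewed toward the beginning of each trajectory.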

Inside the inner loop, the models are trained on consecutive states within a single batch.

Note that the models developed in this article contain no recurrent blocks. Such models are typically trained on states sampled completely at random from the experience replay buffer. Here, however, training is performed on "almost ideal" trajectories, whose actions are generated directly by this EA's algorithm from the available information about subsequent states of the environment. Unlike real-time training, the replay buffer gives us the subsequent environment states for every stored record except the last one. This allows more precise guidance of the training process.

But there is another side to the coin: such trajectories carry no information about open positions. Yet we want to train the model not only to open positions but also to manage them by searching for the optimal exit point. Therefore, during training we use small batches of consecutive states and build "optimal" positions along the way.

      for(int i = start; i < MathMin(Buffer[tr].Total, start + Batch); i++)
        {
         if(!state.Assign(Buffer[tr].States[i].state) ||
            MathAbs(state).Sum() == 0 ||
            !bState.AssignArray(state))
           {
            iter -= Batch + start - i;
            break;
           }
         //--- Timestamp features
         bTime.Clear();
         double time = (double)Buffer[tr].States[i].account[7];
         double x = time / (double)(D'2024.01.01' - D'2023.01.01');
         bTime.Add((float)MathSin(x != 0 ? 2.0 * M_PI * x : 0));
         x = time / (double)PeriodSeconds(PERIOD_MN1);
         bTime.Add((float)MathCos(x != 0 ? 2.0 * M_PI * x : 0));
         x = time / (double)PeriodSeconds(PERIOD_W1);
         bTime.Add((float)MathSin(x != 0 ? 2.0 * M_PI * x : 0));
         x = time / (double)PeriodSeconds(PERIOD_D1);
         bTime.Add((float)MathSin(x != 0 ? 2.0 * M_PI * x : 0));
         if(bTime.GetIndex() >= 0)
            bTime.BufferWrite();
         //--- Account
         float PrevBalance = Buffer[tr].States[MathMax(i - 1, 0)].account[0];
         float PrevEquity = Buffer[tr].States[MathMax(i - 1, 0)].account[1];
         float profit = float(bState[0] / _Point * (result[0] - result[3]));
         bAccount.Clear();
         bAccount.Add(1);
         bAccount.Add((PrevEquity + profit) / PrevEquity);
         bAccount.Add(profit / PrevEquity);
         bAccount.Add(MathMax(result[0] - result[3], 0));
         bAccount.Add(MathMax(result[3] - result[0], 0));
         bAccount.Add((bAccount[3] > 0 ? profit / PrevEquity : 0));
         bAccount.Add((bAccount[4] > 0 ? profit / PrevEquity : 0));
         bAccount.Add(0);
         bAccount.AddArray(GetPointer(bTime));
         if(bAccount.GetIndex() >= 0)
            bAccount.BufferWrite();
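The timestamp features are sine and cosine waves of the time with yearly, monthly, weekly and daily periods. The C++ sketch below mirrors that mapping under two stated assumptions:
- The year has 365 days, the length of 2023 used in the code.
- PeriodSeconds(PERIOD_MN1) corresponds to a 30-day month.

`time_features` is a hypothetical name. The EA's `x != 0 ? ... : 0` guard is dropped because it does not change the result: sin and cos of zero already give 0 and 1.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Cyclical encoding of a timestamp t (seconds), in the same order as the EA:
// sin(year), cos(month), sin(week), sin(day).
std::vector<float> time_features(double t) {
    const double kPi = 3.14159265358979323846;
    const double day = 86400.0;
    const double year = 365.0 * day, month = 30.0 * day, week = 7.0 * day;  // assumed period lengths
    return {
        float(std::sin(2.0 * kPi * t / year)),
        float(std::cos(2.0 * kPi * t / month)),
        float(std::sin(2.0 * kPi * t / week)),
        float(std::sin(2.0 * kPi * t / day)),
    };
}
```

Encoding time as waves lets the model see that 23:59 and 00:00 are close, which a raw timestamp would not convey.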

In the loop body, we first extract information from the replay buffer and prepare the input data objects for the models being trained. We then call the feed-forward method of the environment state Encoder.

         //--- Feed Forward
         if(!Encoder.feedForward((CBufferFloat*)GetPointer(bState), 1, false, (CBufferFloat*)NULL))
           {
            PrintFormat("%s -> %d", __FUNCTION__, __LINE__);
            Stop = true;
            break;
           }

Next, we call the feed-forward methods of the other two models, passing them a pointer to the environment state Encoder object.

         if(!Actor.feedForward((CBufferFloat*)GetPointer(bAccount), 1, false, GetPointer(Encoder), LatentLayer))
           {
            PrintFormat("%s -> %d", __FUNCTION__, __LINE__);
            Stop = true;
            break;
           }
         if(!Probability.feedForward(GetPointer(Encoder), LatentLayer, (CBufferFloat*)NULL))
           {
            PrintFormat("%s -> %d", __FUNCTION__, __LINE__);
            Stop = true;
            break;
           }

This is followed by the generation of the "optimal trading operation". This logic was carried over unchanged from a previous article on multitask learning, where it is described in full, so we won't repeat it here.

Once the target values are prepared, we proceed to optimize the model parameters to minimize the deviation from these targets. Training starts with the environment state Encoder. For the target tensor, we pass to it the vector describing the analyzed environment state, which we also used in the feed-forward pass.

         //--- State Encoder
         if(!Encoder.backProp(GetPointer(bState), (CBufferFloat*)NULL, NULL))
           {
            PrintFormat("%s -> %d", __FUNCTION__, __LINE__);
            Stop = true;
            break;
           }
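Because the Encoder is trained as a classical autoencoder, its target is the same vector bState that was fed forward. The loss used inside backProp is not specified in this article.

Assuming a mean squared error objective, the quantity being minimized can be sketched in C++ as follows. `reconstruction_mse` is an illustrative name.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Mean squared difference between the decoder output and the input it was given.
double reconstruction_mse(const std::vector<float>& input, const std::vector<float>& reconstruction) {
    double s = 0.0;
    for (std::size_t i = 0; i < input.size(); ++i) {
        double d = double(reconstruction[i]) - double(input[i]);
        s += d * d;
    }
    return s / double(input.size());
}
```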

The Actor is trained next to minimize the deviation from the "optimal trading operation", after which its error gradient is passed back into the Encoder.

```mql5
      //--- Actor Policy
      if(!Actor.backProp(GetPointer(bActions), (CNet*)GetPointer(Encoder), LatentLayer) ||
         !Encoder.backPropGradient((CBufferFloat*)NULL, (CBufferFloat*)NULL, LatentLayer, true))
        {
         PrintFormat("%s -> %d", __FUNCTION__, __LINE__);
         Stop = true;
         break;
        }
```
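The `Encoder.backPropGradient(..., LatentLayer, ...)` call passes the Actor's error back into the shared latent layer, and the Probability model does the same in the next step. Conceptually, the gradient at the latent layer is the sum of the heads' contributions; for a linear head y = W z that contribution is W^T g, where g is the gradient at the head's output. The C++ sketch below shows this accumulation for hypothetical linear heads. How the library actually schedules these updates, including the meaning of the final `true` argument in the Actor step, is not covered in the article, so the sketch should not be read as its implementation.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

using Vec = std::vector<float>;
using Mat = std::vector<Vec>;  // row-major: Mat[row][col]

// Adds W^T g to latent_grad: the contribution of one linear head y = W z
// to dLoss/dz. Calling it once per head sums the heads' gradients.
void AccumulateHeadGradient(const Mat& W, const Vec& g, Vec& latent_grad)
{
    assert(W.size() == g.size());
    for (std::size_t r = 0; r < W.size(); ++r) {
        assert(W[r].size() == latent_grad.size());
        for (std::size_t c = 0; c < latent_grad.size(); ++c)
            latent_grad[c] += W[r][c] * g[r];
    }
}
```

Calling the function once for the Actor head and once for the Probability head leaves the combined gradient in `latent_grad`.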

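For the third model, the Probability model, the target is the direction of the next price movement, determined by the color of the next bar: a bullish bar sets the first element of the target vector to 1, a bearish bar sets the second, and a bar without a body leaves the vector at zero.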

```mql5
      target = vector<float>::Zeros(NActions / 3);
      if(fstate[0, 0] > 0)
         target[0] = 1;
      else if(fstate[0, 0] < 0)
         target[1] = 1;
      if(!Result.AssignArray(target) ||
         !Probability.backProp(Result, (CBufferFloat*)NULL) ||
         !Encoder.backPropGradient((CBufferFloat*)NULL, (CBufferFloat*)NULL, LatentLayer))
        {
         PrintFormat("%s -> %d", __FUNCTION__, __LINE__);
         Stop = true;
         break;
        }
```
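The target construction above can be isolated as a small function. In this C++ sketch, `bar_body` stands in for `fstate[0, 0]`, which we assume holds the next bar's close minus its open; the function name is hypothetical. The assertion requires at least two target elements, which holds whenever `NActions` is 6 or more.

```cpp
#include <cassert>
#include <vector>

// One-hot direction target, following the EA's logic:
// element 0 = bullish bar, element 1 = bearish bar, all zeros otherwise.
std::vector<float> DirectionTarget(float bar_body, int n_actions)
{
    assert(n_actions / 3 >= 2);  // room for both directions
    std::vector<float> target(n_actions / 3, 0.0f);
    if (bar_body > 0)
        target[0] = 1.0f;
    else if (bar_body < 0)
        target[1] = 1.0f;
    return target;  // a bar without a body keeps the all-zero vector
}
```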

Finally, we display the training progress on the chart, refreshing it no more often than every 500 milliseconds, and move on to the next iteration of the loop.

```mql5
      //---
      if(GetTickCount() - ticks > 500)
        {
         double percent = double(iter + i - start) * 100.0 / (Iterations);
         string str = StringFormat("%-13s %6.2f%% -> Error %15.8f\n", "Encoder", percent, Encoder.getRecentAverageError());
         str += StringFormat("%-14s %6.2f%% -> Error %15.8f\n", "Actor", percent, Actor.getRecentAverageError());
         str += StringFormat("%-13s %6.2f%% -> Error %15.8f\n", "Probability", percent, Probability.getRecentAverageError());
         Comment(str);
         ticks = GetTickCount();
        }
     }
  }
```
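The progress display relies on two small pieces of logic: the percentage of completed iterations and a 500 ms refresh throttle based on `GetTickCount()`. Because `GetTickCount()` returns an unsigned 32-bit counter, the difference `GetTickCount() - ticks` stays correct even when the counter wraps around, provided `ticks` is also an unsigned 32-bit value. The hypothetical C++ helper below reproduces both parts, with the current time passed in explicitly so that the logic can be tested.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper mirroring the EA's progress display.
class ProgressThrottle {
public:
    explicit ProgressThrottle(std::uint32_t interval_ms) : interval_(interval_ms) {}

    // True when more than interval_ms has passed since the last refresh.
    // now_ms plays the role of GetTickCount().
    bool ShouldRefresh(std::uint32_t now_ms)
    {
        if (now_ms - last_ > interval_) {  // unsigned math survives wrap-around
            last_ = now_ms;
            return true;
        }
        return false;
    }

private:
    std::uint32_t interval_;
    std::uint32_t last_ = 0;
};

// Same formula as the EA: (iter + i - start) * 100 / Iterations.
double ProgressPercent(int iter, int i, int start, int iterations)
{
    assert(iterations > 0);
    return double(iter + i - start) * 100.0 / iterations;
}
```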

Once all training iterations are complete, we output the results to the log and initiate the shutdown process of the Expert Advisor.

```mql5
Comment("");
//---
PrintFormat("%s -> %d -> %-15s %10.7f", __FUNCTION__, __LINE__, "Actor", Actor.getRecentAverageError());
PrintFormat("%s -> %d -> %-15s %10.7f", __FUNCTION__, __LINE__, "Probability", Probability.getRecentAverageError());
ExpertRemove();
//---
}
```

The full source code of the EA is provided in the appendix and is available for independent study. The attachment also includes programs for interacting with the environment and testing the trained models.

Testing

After implementing the DADA framework concepts in MQL5 and integrating them into trainable models, the next critical step is evaluating their performance on real historical data. This allows us to assess their viability in practical trading conditions.

For training, we generated a dataset of random runs in the MetaTrader 5 Strategy Tester using historical EURUSD data (M1 timeframe) for 2024. All indicators were used with their default parameters, so that no additional tuning could influence the results.

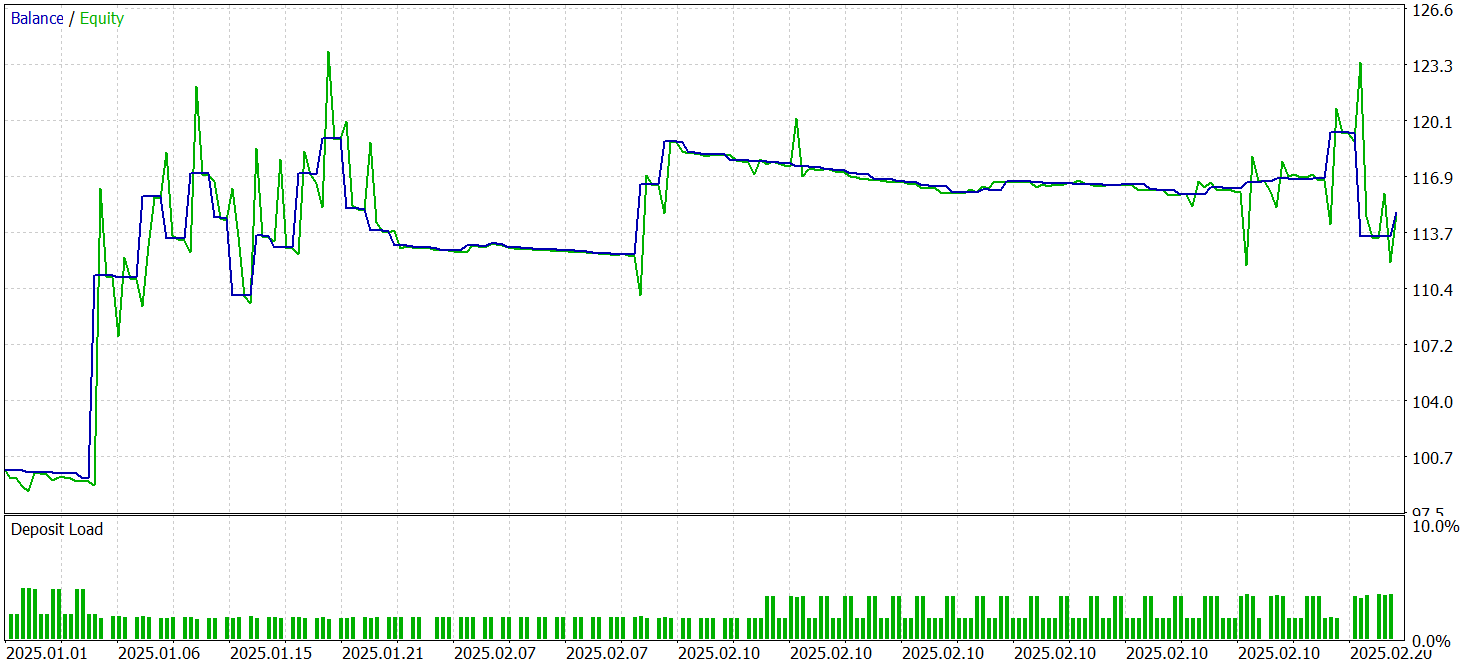

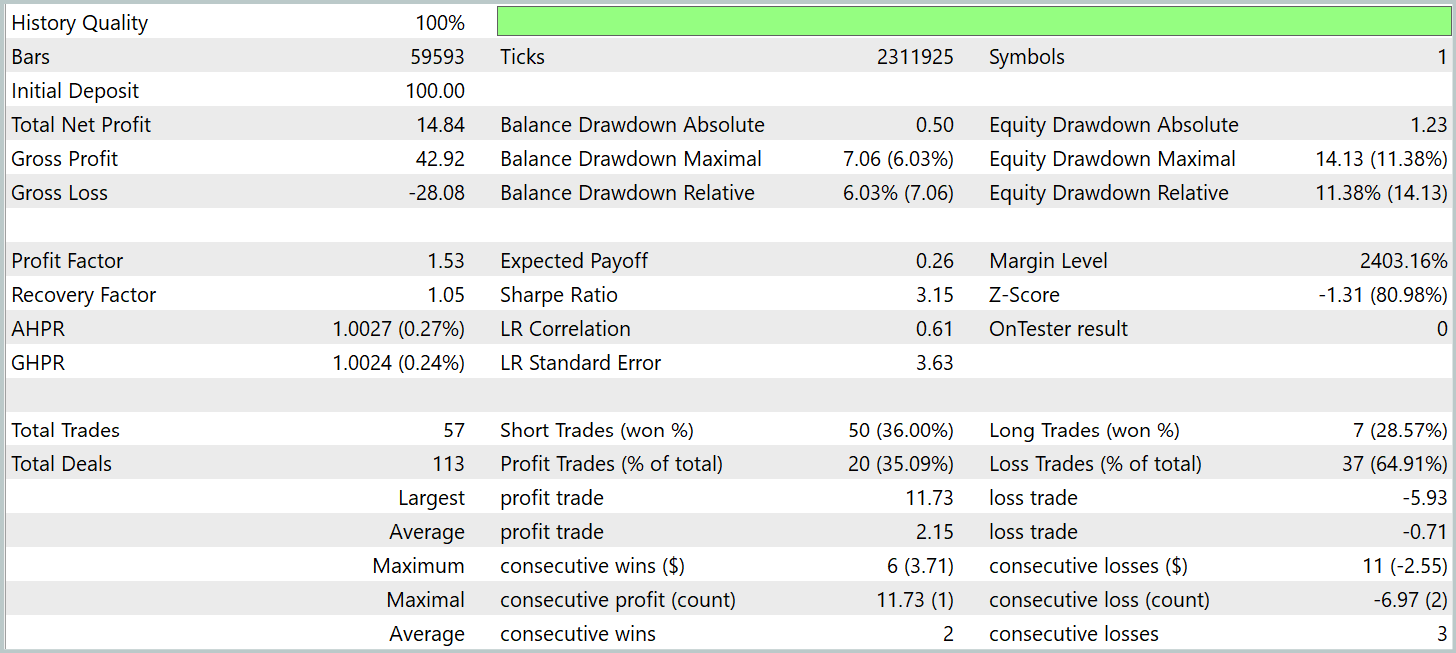

The trained models were then tested on historical data from January–February 2025. All experimental parameters were kept unchanged to ensure an objective evaluation of the Actor's learned behavior. Testing on data not used during training is a crucial validation step, as it reflects how the model performs under near-real conditions.

The testing results are presented below.

During the test period, the model executed 57 trades, with over 35% closed in profit. Despite this relatively modest win rate, the average profit per winning trade was roughly three times higher than the average loss, allowing the model to achieve an overall profit and a profit factor of 1.53.
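These figures are mutually consistent. Profit factor is gross profit divided by gross loss; with win rate p and payoff ratio R (average win divided by average loss), the number of trades cancels out and PF = p * R / (1 - p). The small C++ sketch below evaluates this relation in both directions using the rounded values from the text; the function names are illustrative.

```cpp
#include <cassert>

// Profit factor from win rate p and payoff ratio R:
// (p * N * avg_win) / ((1 - p) * N * avg_loss) = p * R / (1 - p).
double ProfitFactor(double win_rate, double payoff_ratio)
{
    assert(win_rate > 0.0 && win_rate < 1.0 && payoff_ratio > 0.0);
    return win_rate * payoff_ratio / (1.0 - win_rate);
}

// Inverse relation: the payoff ratio implied by a win rate and profit factor.
double ImpliedPayoffRatio(double win_rate, double profit_factor)
{
    assert(win_rate > 0.0 && win_rate < 1.0 && profit_factor > 0.0);
    return profit_factor * (1.0 - win_rate) / win_rate;
}
```

With p = 0.35 and PF = 1.53, the implied payoff ratio is about 2.84, in line with an average win "roughly three times" the average loss; conversely, an exact ratio of 3 would give a profit factor of about 1.62.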

However, it is important to note that most of the profit was generated in the first half of January. During the remainder of the test period, the equity curve fluctuated within a narrow range. This suggests that further optimization of the model may be necessary.

It should also be noted that our implementation introduces several modifications to the original DADA architecture, so these results apply only to this particular version of the framework.

Conclusion

In this work, we explored the DADA framework, which introduces an innovative approach by combining adaptive bottlenecks with dual adversarial decoders for more accurate time series analysis. A key advantage of this method is its ability to dynamically adapt to different data structures without requiring prior adjustment.

We implemented our own version of the proposed approach in MQL5 and integrated it into trainable models. These models were trained on real historical data and were profitable on the test period. However, the equity curve did not show a stable upward trend, indicating that further refinement and optimization of the strategy are needed.

Related Links

- Towards a General Time Series Anomaly Detector with Adaptive Bottlenecks and Dual Adversarial Decoders

- Other articles from this series

Programs Used in the Article

| # | Name | Type | Description |
|---|---|---|---|
| 1 | Research.mq5 | Expert Advisor | Expert Advisor for collecting datasets |
| 2 | ResearchRealORL.mq5 | Expert Advisor | Expert Advisor for collecting datasets using the Real-ORL method |
| 3 | Study.mq5 | Expert Advisor | Model Training Expert Advisor |
| 4 | Test.mq5 | Expert Advisor | Model Testing Expert Advisor |
| 5 | Trajectory.mqh | Class Library | System state and model architecture description structure |
| 6 | NeuroNet.mqh | Class Library | A library of classes for creating a neural network |
| 7 | NeuroNet.cl | Library | OpenCL program code |

Translated from Russian by MetaQuotes Ltd.

Original article: https://www.mql5.com/ru/articles/17577

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
