Chaos optimization algorithm (COA)


Introduction

In modern computing problems, especially in financial markets and algorithmic trading, efficient optimization methods play a crucial role. Among the many global optimization algorithms, approaches inspired by natural phenomena occupy a special place. Chaos Optimization Algorithm (COA) is one such innovative solution that combines chaos theory and global optimization methods.

In this article, I present an improved chaotic optimization algorithm that exploits fundamental properties of chaotic systems: ergodicity, sensitivity to initial conditions, and quasi-stochastic behavior. The algorithm uses several chaotic maps to explore the search space efficiently and to avoid getting trapped in local optima, a common problem in optimization methods.

A distinctive feature of the proposed algorithm is that it combines chaotic search with the weighted gradient method and with adaptive mechanisms that adjust the search parameters dynamically. By using several types of chaotic maps (logistic, sine, and tent), the algorithm is designed to resist stagnation and to find global optima of complex multimodal functions. As usual, we will analyze the entire algorithm and then test its capabilities on our now-standard test functions.

Implementation of the algorithm

The algorithm consists of three main phases:

- chaotic search with the first carrier wave: the chaotic variables are initialized, successive values are generated with the logistic map and mapped onto the ranges of the variables being optimized, and the best solution found is stored;

- search along the direction of the weighted gradient;

- chaotic search with the second carrier wave: a local search around the best solution, with a fractal approach to setting the step size.
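To make the first phase concrete, here is a minimal C++ sketch of a chaotic carrier-wave search. It is written for illustration and is not taken from the MQL5 implementation discussed below. Each coordinate keeps its own logistic-map variable, the chaotic values are mapped linearly onto [lo, hi], and the best point is kept (higher fitness is better, as in the rest of the article). The names `firstCarrierWave` and `logisticStep`, the fixed r = 3.99, and the seeding scheme are assumptions.

```cpp
#include <cstddef>
#include <functional>
#include <limits>
#include <vector>

// Hypothetical helper: one logistic-map step with a fixed r = 3.99 (chaotic regime).
// For x in [0, 1] the result stays in [0, 0.9975].
double logisticStep(double x) { return 3.99 * x * (1.0 - x); }

struct CarrierWaveResult {
    std::vector<double> bestX; // best point found
    double bestF;              // its fitness (maximization)
};

// First-carrier-wave sketch: iterate the chaotic variables, map them onto the
// search box [lo, hi] and remember the best point (higher f is better).
CarrierWaveResult firstCarrierWave(
    const std::function<double(const std::vector<double>&)>& f,
    const std::vector<double>& lo, const std::vector<double>& hi, int iterations) {
    const std::size_t n = lo.size();
    std::vector<double> gamma(n), x(n);

    // Distinct starting values per coordinate inside (0, 1); 0 itself is a fixed point
    for (std::size_t c = 0; c < n; ++c) gamma[c] = 0.11 + 0.77 * (c + 1) / (n + 1.0);

    CarrierWaveResult best{std::vector<double>(n, 0.0), std::numeric_limits<double>::lowest()};
    for (int k = 0; k < iterations; ++k) {
        for (std::size_t c = 0; c < n; ++c) {
            gamma[c] = logisticStep(gamma[c]);         // next chaotic value
            x[c] = lo[c] + gamma[c] * (hi[c] - lo[c]); // map [0, 1] onto [lo, hi]
        }
        const double fx = f(x);
        if (fx > best.bestF) { best.bestF = fx; best.bestX = x; }
    }
    return best;
}
```

Because the logistic map in its chaotic regime keeps visiting the whole interval, a long enough sequence comes close to any point of the box. That is why this phase works as a global scan, while the later phases refine the result.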

In the image below, I tried to illustrate the essence of the chaotic optimization algorithm. Its main idea is to use chaos as a useful optimization tool rather than as a purely random process. The global optimum (the bright yellow glow in the center) is the target we want to find. The blue glowing particles are search agents that move along chaotic trajectories. These trajectories are drawn as glowing lines that highlight the nonlinear nature of the movement.

The image demonstrates the key properties of chaotic behavior:

- determinism: trajectories are smooth, not random,
- ergodicity: particles explore the entire space,
- sensitivity to initial conditions: different particles follow different trajectories.

The glow of varying intensity shows the "energy" of the search in different areas. The concentric circles around the optimum symbolize regions of attraction, and blurring and gradients convey the continuity of the search space. The image also reflects the main stages of the algorithm:

- wide search away from the center (distant particles),

- gradual approach to promising zones (average trajectories),

- local search near the optimum (particles close to the center).

The resulting image can be seen as a "portrait" of chaotic optimization, where chaos is presented not as disorder, but as a controlled process of exploring the solution space.

Figure 1. Visualization of chaotic optimization

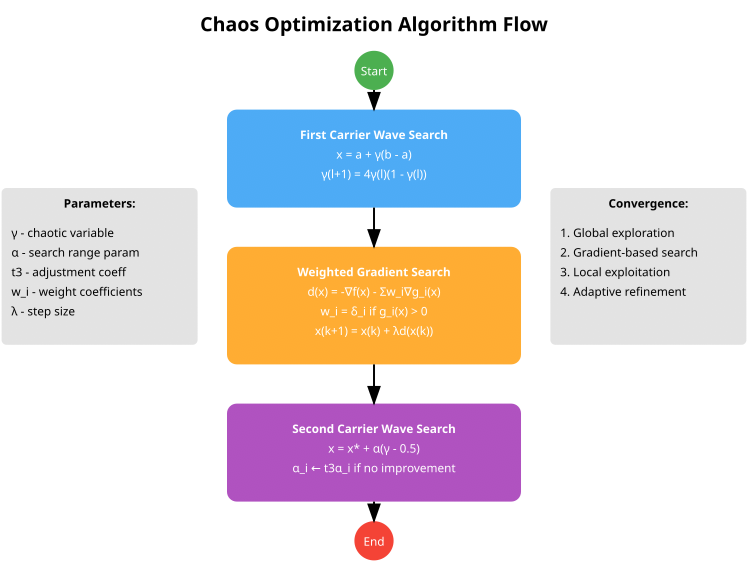

The visual diagram of the algorithm presented below reflects three main stages:

- First Carrier Wave Search (blue block) uses a chaotic map for global search and transforms the chaotic variables into the search space,

- Weighted Gradient Search (orange block) is a weighted gradient method that includes handling constraints via weight ratios,

- Second Carrier Wave Search (purple block) — local search around the best solution and adaptive tuning of the α parameter.

Figure 2. Operation scheme of the chaotic optimization algorithm

The diagram represents the basic three-stage structure of the COA (CHAOS) algorithm. Of the several implementations I tried, I settled on a more advanced and practical version. Its agent structure is extended with a velocity vector, a stagnation counter, and chaotic variables for each dimension.

Adding variety to the chaotic maps was also important. The authors relied only on the logistic map, while my version adds the sine and tent maps. This implementation also includes:

- automatic adaptation of the penalty parameter,
- adaptation of the search parameters depending on search success,
- inertia-based velocity updates,
- a more elaborate initialization using a Latin hypercube.

Let's start writing the pseudocode of the algorithm:

Algorithm initialization

- Setting algorithm parameters:
  - population size (popSize)
  - number of iterations of the first phase (S1)
  - number of iterations of the second phase (S2)
  - penalty parameter (sigma)
  - alpha adjustment ratio (t3)
  - small constant for weight ratios (eps)
  - inertia parameter (inertia)
  - social influence parameter (socialFactor)
  - mutation probability (mutationRate)

- Initializing agents:
  - for each agent in the population:
    - initialize chaotic variables (gamma) with different initial values
    - reset velocity vectors (velocity)
    - set the stagnation counter to 0

- Initializing search parameters (alpha):
  - adapt parameters depending on the size of the search space
  - alpha[c] = 0.1 * (rangeMax[c] - rangeMin[c]) / sqrt(coords)

Phase 1: Initial population with chaotic distribution

- Creating an initial population using a Latin hypercube:
  - generate values for each dimension
  - shuffle the values to ensure an even distribution
  - map the values onto the variable ranges

- Using various agent initialization strategies:
  - first quarter of agents: uniform distribution
  - second quarter: clustering around several points
  - third quarter: random positions shifted toward the boundaries
  - last quarter: chaotic positions generated with different maps

Phase 2: Chaotic search with the first carrier wave

- For each iteration up to S1:
  - for each agent in the population:
    - if the mutation probability is triggered, apply the mutation
    - otherwise, for each coordinate:
      - update the chaotic variable via the chosen map (logistic, sine, or tent)
      - choose the strategy (global or local search) based on the optimization phase
      - for global search: use chaotic values to determine the new position
      - for local search: combine attraction to the best solutions with a chaotic perturbation
      - update the velocity, taking inertia into account
      - apply velocity and position limits
      - check for constraint violations and apply corrections
  - evaluation and update:
    - calculate the penalty function for each agent
    - update the personal and global best solutions
    - dynamically update the penalty parameter
    - adapt the search parameter (alpha) based on success

Phase 3: Chaotic search with the second carrier wave

- For each iteration from S1 to S1+S2:
  - check for convergence
  - for each agent in the population:
    - if convergence is detected, apply a random mutation to some agents
    - otherwise, for each coordinate:
      - update the chaotic variable
      - adaptively narrow the search radius
      - select a base point, giving priority to the best solutions
      - add a chaotic perturbation and Lévy noise for occasional long jumps
      - update velocity and position with inertia
      - apply the position bounds
  - evaluation and update:
    - calculate the penalty function for each agent
    - update the personal and global best solutions
    - update the history of the global best solution to detect convergence
    - reset stuck agents if necessary

Auxiliary functions

- Chaotic maps:
  - LogisticMap(x): x[n+1] = r * x[n] * (1 - x[n]) with validation
  - SineMap(x): x[n+1] = sin(π * x[n]) with normalization
  - TentMap(x): x[n+1] = μ * min(x[n], 1 - x[n]) with validation
  - SelectChaosMap(x, type): select a map based on type

- Handling constraints and penalties:
  - CalculateConstraintValue(agent, coord): calculate the constraint violation
  - CalculateConstraintGradient(agent, coord): calculate the constraint gradient
  - CalculateWeightedGradient(agent, coord): calculate the weighted gradient
  - CalculatePenaltyFunction(agent): calculate the value of the penalty function

- Handling stagnation and convergence:
  - IsConverged(): check convergence based on the history of best solutions
  - ResetStagnatingAgents(): reset stagnating agents
  - ApplyMutation(agent): apply different types of mutations
  - UpdateSigma(): dynamically update the penalty parameter
  - UpdateBestHistory(newBest): update the history of best values

Now we can move on to the implementation of the COA (CHAOS) algorithm. In this article, we will examine all the key implementation methods. In the next one, we will move directly to testing and to the algorithm's performance results. Let's start with the S_COA_Agent structure and its fields:

- gamma [] — array of chaotic variables (one per coordinate) used to introduce quasi-random variety into the agent's behavior,

- velocity [] — array of velocities that lets the agent move through the space more dynamically, taking inertia into account,

- stagnationCounter — counter that increases when the agent fails to improve its solution; it supports restart mechanisms in case of stagnation.

The Init() method sizes and initializes the arrays. The gamma[] values are spread evenly between 0.1 and 0.9 across the coordinates to vary the initial conditions of the chaotic variables. The velocity[] array starts at zero, and the stagnation counter is reset to zero.

```mql5
//——————————————————————————————————————————————————————————————————————————————
// Improved agent structure with additional fields
struct S_COA_Agent
{
    double gamma    [];       // chaotic variables
    double velocity [];       // movement velocity (to increase inertia)
    int    stagnationCounter; // stagnation counter

    void Init (int coords)
    {
      ArrayResize (gamma,    coords);
      ArrayResize (velocity, coords);

      // Uniform distribution of gamma values
      for (int i = 0; i < coords; i++)
      {
        // Use different initial values for better variety
        gamma [i] = 0.1 + 0.8 * (i % coords) / (double)MathMax (1, coords - 1);

        // Initialize velocity with zeros
        velocity [i] = 0.0;
      }

      stagnationCounter = 0;
    }
};
//——————————————————————————————————————————————————————————————————————————————
```

The C_AO_COA_chaos class is derived from the C_AO base class and is an implementation of the COA(CHAOS) algorithm. It includes the methods and parameters required for operation, as well as additional functions for controlling the behavior of agents, based on the concepts of chaotic search. Class components:

- SetParams () — method for setting algorithm parameters,

- Init () — initialization method that accepts the search ranges and step, as well as the number of epochs,

- Moving () — method responsible for moving agents in the solution space,

- Revision () — method for revising the positions of agents,

- S1, S2 — numbers of iterations in the two phases of the algorithm,

- sigma, t3, eps, inertia, socialFactor, mutationRate — parameters that influence the behavior of agents and of the algorithm as a whole (the last three are internal and are set in the constructor),

- alpha [] — array of per-coordinate search parameters,

- agent [] — array of agents that make up the algorithm's population,

- methods for calculating gradients, constraint values and penalty functions, and for checking the feasibility of solutions (IsFeasible),

- methods for chaotic maps (LogisticMap, SineMap, TentMap, SelectChaosMap),

- variables storing the current epoch (epochNow) and the dynamic penalty value (currentSigma),

- globalBestHistory [] — fixed-size array (10 entries) storing recent global best values,

- historyIndex — the current position in the globalBestHistory array,

- methods for initializing the population (InitialPopulation), performing the search phases (FirstCarrierWaveSearch, SecondCarrierWaveSearch), mutating agents (ApplyMutation), updating the penalty (UpdateSigma), checking for convergence (IsConverged), and resetting stagnant agents (ResetStagnatingAgents).

So, the C_AO_COA_chaos class is a complex component of an optimization system that uses agents to find solutions. It integrates the parameters, methods, and logic needed to control agents within an algorithm, including both deterministic and chaotic strategies.

```mql5
//——————————————————————————————————————————————————————————————————————————————
class C_AO_COA_chaos : public C_AO
{
  public: //--------------------------------------------------------------------
  ~C_AO_COA_chaos () { }
  C_AO_COA_chaos ()
  {
    ao_name = "COA(CHAOS)";
    ao_desc = "Chaos Optimization Algorithm";
    ao_link = "https://www.mql5.com/en/articles/16729";

    // Internal parameters (not externally configurable)
    inertia      = 0.7;
    socialFactor = 1.5;
    mutationRate = 0.05;

    // Default parameters
    popSize = 50;
    S1      = 30;
    S2      = 20;
    sigma   = 2.0;
    t3      = 1.2;
    eps     = 0.0001;

    // Initialize the parameter array for the C_AO interface
    ArrayResize (params, 6);

    params [0].name = "popSize"; params [0].val = popSize;
    params [1].name = "S1";      params [1].val = S1;
    params [2].name = "S2";      params [2].val = S2;
    params [3].name = "sigma";   params [3].val = sigma;
    params [4].name = "t3";      params [4].val = t3;
    params [5].name = "eps";     params [5].val = eps;
  }

  void SetParams ()
  {
    // Update internal parameters from the params array
    popSize = (int)params [0].val;
    S1      = (int)params [1].val;
    S2      = (int)params [2].val;
    sigma   = params      [3].val;
    t3      = params      [4].val;
    eps     = params      [5].val;
  }

  bool Init (const double &rangeMinP  [],  // minimum search range
             const double &rangeMaxP  [],  // maximum search range
             const double &rangeStepP [],  // search step
             const int     epochsP = 0);   // number of epochs

  void Moving   ();
  void Revision ();

  //----------------------------------------------------------------------------
  // External algorithm parameters
  int    S1;            // first phase iterations
  int    S2;            // second phase iterations
  double sigma;         // penalty parameter
  double t3;            // alpha correction ratio
  double eps;           // small number for weighting ratios

  // Internal algorithm parameters
  double inertia;       // inertia parameter for movement (internal)
  double socialFactor;  // social influence parameter (internal)
  double mutationRate;  // mutation probability (internal)

  S_COA_Agent agent []; // array of agents

  private: //-------------------------------------------------------------------
  int    epochNow;
  double currentSigma;           // Dynamic penalty parameter
  double alpha [];               // search parameters
  double globalBestHistory [10]; // History of global best solution values
  int    historyIndex;

  // Auxiliary methods
  double CalculateWeightedGradient   (int agentIdx, int coordIdx);
  double CalculateConstraintValue    (int agentIdx, int coordIdx);
  double CalculateConstraintGradient (int agentIdx, int coordIdx);
  double CalculatePenaltyFunction    (int agentIdx);

  // Method for checking the solution feasibility
  bool IsFeasible (int agentIdx);

  // Chaotic maps
  double LogisticMap    (double x);
  double SineMap        (double x);
  double TentMap        (double x);
  double SelectChaosMap (double x, int type);

  void InitialPopulation       ();
  void FirstCarrierWaveSearch  ();
  void SecondCarrierWaveSearch ();
  void ApplyMutation           (int agentIdx);
  void UpdateSigma             ();
  void UpdateBestHistory       (double newBest);
  bool IsConverged             ();
  void ResetStagnatingAgents   ();
};
//——————————————————————————————————————————————————————————————————————————————
```

The Init method of the C_AO_COA_chaos class is responsible for the initial setup of the algorithm. First, it performs basic initialization using the StandardInit() method, which sets up ranges, steps, and other parameters. If that fails, Init returns false.

Next, the current epoch counter (epochNow), the dynamic penalty (currentSigma), and the history index are reset. The history of best solutions, which helps track progress, is filled with -DBL_MAX. The sizes of the minimum, maximum, and step arrays are then checked. If they are empty or do not match, initialization is aborted.

After that, the agent and alpha arrays are sized, and alpha is set for each coordinate as 0.1 * range / sqrt(coords). Each agent then receives an initial position, written to its personal-best coordinates a[i].cB, according to its quarter of the population:

- the first quarter is spread evenly across the range,

- the second quarter is clustered around three points located at 1/4, 1/2, and 3/4 of the range, with ±10% jitter,

- the third quarter is placed randomly within 10% of either boundary,

- the rest use chaotic maps to obtain starting positions.

Thus, Init validates the input data, sets up the search ranges and search parameters, and seeds every agent with a starting position. This prepares the data structures used by the subsequent iterative optimization.

```mql5
//——————————————————————————————————————————————————————————————————————————————
bool C_AO_COA_chaos::Init (const double &rangeMinP  [],
                           const double &rangeMaxP  [],
                           const double &rangeStepP [],
                           const int     epochsP = 0)
{
  if (!StandardInit (rangeMinP, rangeMaxP, rangeStepP)) return false;

  //----------------------------------------------------------------------------
  epochNow     = 0;
  currentSigma = sigma;
  historyIndex = 0;

  // Initialize the history of best values
  for (int i = 0; i < 10; i++) globalBestHistory [i] = -DBL_MAX;

  // Check and initialize the main arrays
  int arraySize = ArraySize (rangeMinP);
  if (arraySize <= 0 || arraySize != ArraySize (rangeMaxP) || arraySize != ArraySize (rangeStepP))
  {
    return false;
  }

  ArrayResize (agent, popSize);
  ArrayResize (alpha, coords);

  // Adaptive alpha initialization depending on the search range
  for (int c = 0; c < coords; c++)
  {
    // alpha depends on the size of the search space
    double range = rangeMax [c] - rangeMin [c];
    alpha [c] = 0.1 * range / MathSqrt (MathMax (1.0, (double)coords));
  }

  // Initialize agents with various strategies
  for (int i = 0; i < popSize; i++)
  {
    agent [i].Init (coords);

    for (int c = 0; c < coords; c++)
    {
      double position;

      // Different initialization strategies
      if (i < popSize / 4)
      {
        // Uniform distribution in space
        position = rangeMin [c] + (i * (rangeMax [c] - rangeMin [c])) / MathMax (1, popSize / 4);
      }
      else if (i < popSize / 2)
      {
        // Clustering around multiple points
        int cluster = (i - popSize / 4) % 3;
        double clusterCenter = rangeMin [c] + (cluster + 1) * (rangeMax [c] - rangeMin [c]) / 4.0;
        position = clusterCenter + u.RNDfromCI (-0.1, 0.1) * (rangeMax [c] - rangeMin [c]);
      }
      else if (i < 3 * popSize / 4)
      {
        // Random positions with an offset towards the boundaries
        double r = u.RNDprobab ();
        if (r < 0.5) position = rangeMin [c] + 0.2 * r * (rangeMax [c] - rangeMin [c]);
        else         position = rangeMax [c] - 0.2 * (1.0 - r) * (rangeMax [c] - rangeMin [c]);
      }
      else
      {
        // Chaotic positions using different maps
        int mapType = i % 3;
        double chaosValue = SelectChaosMap (agent [i].gamma [c], mapType);
        position = rangeMin [c] + chaosValue * (rangeMax [c] - rangeMin [c]);
      }

      a [i].cB [c] = u.SeInDiSp (position, rangeMin [c], rangeMax [c], rangeStep [c]);
    }
  }

  return true;
}
//——————————————————————————————————————————————————————————————————————————————
```
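The quarter-based seeding in Init() can be tried outside MetaTrader with the C++ sketch below, which computes the starting value of one coordinate for agent i. It is an illustration that rests on several assumptions:

- std::mt19937 replaces u.RNDprobab() and u.RNDfromCI(),
- the chaotic quarter applies a single logistic step (r = 3.99) to the given gamma instead of calling SelectChaosMap(),
- the result is clamped to [lo, hi] instead of being snapped to a step with u.SeInDiSp().

The function name `seedPosition` is hypothetical.

```cpp
#include <algorithm>
#include <random>

// Hypothetical sketch of the quarter-based seeding for ONE coordinate of agent i.
double seedPosition(int i, int popSize, double lo, double hi, double gamma, std::mt19937& rng) {
    std::uniform_real_distribution<double> unit(0.0, 1.0);
    const double range = hi - lo;
    double pos;
    if (i < popSize / 4) {
        // Quarter 1: evenly spaced across the range
        pos = lo + i * range / std::max(1, popSize / 4);
    } else if (i < popSize / 2) {
        // Quarter 2: three clusters centred at 1/4, 1/2 and 3/4 of the range, +/-10% jitter
        const int cluster = (i - popSize / 4) % 3;
        pos = lo + (cluster + 1) * range / 4.0 + (unit(rng) * 0.2 - 0.1) * range;
    } else if (i < 3 * popSize / 4) {
        // Quarter 3: random points within 10% of either boundary
        const double r = unit(rng);
        pos = (r < 0.5) ? lo + 0.2 * r * range : hi - 0.2 * (1.0 - r) * range;
    } else {
        // Quarter 4: one logistic step of the chaotic variable, mapped onto the range
        const double chaos = 3.99 * gamma * (1.0 - gamma);
        pos = lo + chaos * range;
    }
    // Keep the result inside the search box (no step rounding in this sketch)
    return std::min(std::max(pos, lo), hi);
}
```

With popSize = 20 and a range of [-5, 5], agents 0 to 4 start at -5, -3, -1, 1, and 3, agents 5 to 9 start near the cluster centres, and agents 10 to 14 start within 1.0 of a boundary.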

The LogisticMap method implements the logistic map used to generate chaotic sequences, which introduce variety into the search for solutions. It computes the next state from the current one with the equation x(n+1) = r * x(n) * (1 - x(n)). The parameter r is redrawn from [3.9, 4.0] on each call to avoid falling into cycles.

Before the calculation, the input is checked. If it is invalid (NaN or infinity) or lies outside [0, 1], it is replaced with a random number in [0.2, 0.8]. The result is checked the same way and, if necessary, replaced with a random number. As a result, the next state always stays within [0, 1].

```mql5
//——————————————————————————————————————————————————————————————————————————————
// Improved chaotic maps
double C_AO_COA_chaos::LogisticMap (double x)
{
  // Protection against incorrect inputs
  if (x < 0.0 || x > 1.0 || MathIsValidNumber (x) == false)
  {
    x = 0.2 + 0.6 * u.RNDprobab ();
  }

  // x(n+1) = r*x(n)*(1-x(n))
  double r = 3.9 + 0.1 * u.RNDprobab (); // Slightly randomized parameter to avoid loops
  double result = r * x * (1.0 - x);

  // Additional validation
  if (result < 0.0 || result > 1.0 || MathIsValidNumber (result) == false)
  {
    result = 0.2 + 0.6 * u.RNDprobab ();
  }

  return result;
}
//——————————————————————————————————————————————————————————————————————————————
```
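For experiments outside the terminal, the guarded logistic map can be reproduced in a few lines of C++. This is a sketch, not the article's code. The class name `GuardedLogistic` is hypothetical, and std::mt19937 with a uniform distribution on [0, 1) stands in for u.RNDprobab().

```cpp
#include <cmath>
#include <random>

// Sketch of a guarded logistic map in the spirit of LogisticMap():
// r is redrawn from [3.9, 4.0) on every call.
class GuardedLogistic {
public:
    explicit GuardedLogistic(unsigned seed) : rng_(seed), unit_(0.0, 1.0) {}

    double next(double x) {
        // Replace invalid or out-of-range input with a value in [0.2, 0.8)
        if (!std::isfinite(x) || x < 0.0 || x > 1.0) x = 0.2 + 0.6 * unit_(rng_);

        const double r = 3.9 + 0.1 * unit_(rng_); // slightly randomized parameter
        double result = r * x * (1.0 - x);        // x(n+1) = r*x(n)*(1 - x(n))

        // Same post-check as the MQL5 version
        if (!std::isfinite(result) || result < 0.0 || result > 1.0)
            result = 0.2 + 0.6 * unit_(rng_);
        return result;
    }

private:
    std::mt19937 rng_;
    std::uniform_real_distribution<double> unit_;
};
```

In this sketch r stays below 4, and x(1 - x) never exceeds 1/4 on [0, 1], so the post-check mainly guards against NaN. An input of exactly 0 is a fixed point of the map and passes both checks unchanged.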

The SineMap method implements a chaotic map based on the sine function. It takes the current state and checks it. If the state is invalid or lies outside [0, 1], it is replaced with a random value in [0.2, 0.8]. The method then computes sin(π * x) and applies the shift (result + 1) / 2. For x in [0, 1] the sine is already non-negative, so this shift keeps the output in [0.5, 1] rather than spreading it over [0, 1].

If the final value is out of bounds or invalid, it is replaced with a random number in the range [0.2, 0.8]. The method returns the new state obtained from the current one by the chaotic map.

```mql5
//——————————————————————————————————————————————————————————————————————————————
double C_AO_COA_chaos::SineMap (double x)
{
  // Protection against incorrect inputs
  if (x < 0.0 || x > 1.0 || MathIsValidNumber (x) == false)
  {
    x = 0.2 + 0.6 * u.RNDprobab ();
  }

  // x(n+1) = sin(π*x(n))
  double result = MathSin (M_PI * x);

  // Shift the result: for x in [0, 1], sin(π*x) is already in [0, 1],
  // so this step maps it into [0.5, 1]
  result = (result + 1.0) / 2.0;

  // Additional validation
  if (result < 0.0 || result > 1.0 || MathIsValidNumber (result) == false)
  {
    result = 0.2 + 0.6 * u.RNDprobab ();
  }

  return result;
}
//——————————————————————————————————————————————————————————————————————————————
```
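The effect of the (result + 1) / 2 step in SineMap() is easy to check numerically. The C++ sketch below iterates the plain map x → sin(πx) and the shifted variant, and records the smallest value each orbit visits. The helpers `sineStep`, `shiftedSineStep`, and `minOverOrbit` are hypothetical. `kPi` is computed as acos(-1) because M_PI is not part of standard C++.

```cpp
#include <algorithm>
#include <cmath>

// pi computed portably (M_PI is not guaranteed by standard C++)
const double kPi = std::acos(-1.0);

// Plain sine map: for x in [0, 1], sin(pi * x) already lies in [0, 1]
double sineStep(double x) { return std::sin(kPi * x); }

// Same step followed by the (result + 1) / 2 shift used in SineMap()
double shiftedSineStep(double x) { return (std::sin(kPi * x) + 1.0) / 2.0; }

// Iterate `step` n times from x0 and return the smallest iterate (x0 itself excluded)
template <typename Map>
double minOverOrbit(Map step, double x0, int n) {
    double x = x0;
    double lowest = 2.0; // larger than any value the maps can produce
    for (int k = 0; k < n; ++k) {
        x = step(x);
        lowest = std::min(lowest, x);
    }
    return lowest;
}
```

Starting from 0.3, the plain orbit drops below 0.5 within four steps (0.809, 0.565, 0.980, 0.064). The shifted orbit never goes below 0.5. If coverage of the whole [0, 1] interval is desired, one option would be to drop the shift, since sin(πx) already lies in [0, 1] for x in [0, 1]. This is only a suggestion; the implementation above keeps the shift.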

The TentMap method implements the tent map to generate a chaotic sequence. It takes an input value x, which should lie between 0 and 1. If x is invalid or out of range, it is replaced with a random value in [0.2, 0.8]. The new value is then calculated with the piecewise linear tent function μ * min(x, 1 - x), where μ = 1.99 is a value close to 2 that keeps the map chaotic.

After the calculation, the result is validated again and, if necessary, replaced with a random number. The method then returns the new state of the chaotic sequence.

```mql5
//——————————————————————————————————————————————————————————————————————————————
double C_AO_COA_chaos::TentMap (double x)
{
  // Protection against incorrect inputs
  if (x < 0.0 || x > 1.0 || MathIsValidNumber (x) == false)
  {
    x = 0.2 + 0.6 * u.RNDprobab ();
  }

  // Tent map: x(n+1) = μ*min(x(n), 1-x(n))
  double mu = 1.99; // Parameter close to 2 for chaotic behavior
  double result;

  if (x <= 0.5) result = mu * x;
  else          result = mu * (1.0 - x);

  // Additional validation
  if (result < 0.0 || result > 1.0 || MathIsValidNumber (result) == false)
  {
    result = 0.2 + 0.6 * u.RNDprobab ();
  }

  return result;
}
//——————————————————————————————————————————————————————————————————————————————
```
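Choosing μ = 1.99 rather than exactly 2 has a practical side in floating-point arithmetic, which a short C++ experiment can show. Whether this was the motivation behind the value is an assumption. With μ = 2, every step of the tent map is exact in binary floating point and discards one bit of the mantissa, so the orbit collapses to 0 after a few dozen iterations. With μ = 1.99 the orbit never reaches 0. The helpers `tentStep` and `iterateTent` are hypothetical.

```cpp
// Tent map step x -> mu * min(x, 1 - x) (no input guards)
double tentStep(double x, double mu) { return mu * (x <= 0.5 ? x : 1.0 - x); }

// Iterate the tent map n times from x0 and return the final value
double iterateTent(double x0, double mu, int n) {
    double x = x0;
    for (int k = 0; k < n; ++k) x = tentStep(x, mu);
    return x;
}
```

For x0 = 0.3, iterateTent(0.3, 2.0, 100) returns exactly 0.0, which is a fixed point. iterateTent(0.3, 1.99, 1000) stays strictly positive, because with μ = 1.99 the map never outputs more than 0.995 and therefore never feeds 1.0 back into the 1 - x branch.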

The SelectChaosMap method selects and applies a chaotic map according to the specified type. It takes a value x and a type parameter, and uses type % 3 to cycle between the three maps: 0 for logistic, 1 for sine, and 2 for tent. The corresponding function then transforms x into a new value using the selected chaotic dynamics.

The default branch, which applies the logistic map, is reached only when type is negative, because in that case type % 3 is negative as well. Each of these maps produces chaotic, hard-to-predict values that diversify the optimization.

```mql5
//——————————————————————————————————————————————————————————————————————————————
double C_AO_COA_chaos::SelectChaosMap (double x, int type)
{
  // Select a chaotic map based on type
  switch (type % 3)
  {
    case 0:  return LogisticMap (x);
    case 1:  return SineMap     (x);
    case 2:  return TentMap     (x);
    default: return LogisticMap (x);
  }
}
//——————————————————————————————————————————————————————————————————————————————
```

The InitialPopulation method is designed to initialize the initial population of the optimization algorithm using the Latin Hypercube Sampling (LHS) technique. LHS is a stratified sampling method that provides more uniform coverage of the multidimensional search space compared to random sampling, thereby improving the quality of the initial population.

The method starts by declaring arrays for the Latin hypercube values and for temporary values used during generation. It then allocates memory for these arrays. If allocation fails, it falls back to creating the initial population randomly, so the program does not crash due to lack of memory.

Next, the method generates the Latin hypercube. For each coordinate, an ordered array of values is created and then randomly shuffled, and the shuffled values are stored in the hypercube array. The hypercube values are then transformed into coordinates of individuals in the search space using the specified ranges. The results are snapped to the allowed range and step.

Finally, the resulting coordinates are written to the agents' current positions (a[i].c). The advantage of this approach is a more diverse starting population.

```mql5
//——————————————————————————————————————————————————————————————————————————————
void C_AO_COA_chaos::InitialPopulation ()
{
  // Create Latin Hypercube for the initial population
  double latinCube  []; // One-dimensional array for storing hypercube values
  double tempValues []; // Temporary array for storing and shuffling values

  // If memory allocation fails, fall back to a random initial population
  if (ArrayResize (latinCube,  popSize * coords) != popSize * coords ||
      ArrayResize (tempValues, popSize)          != popSize)
  {
    for (int i = 0; i < popSize; i++)
    {
      for (int c = 0; c < coords; c++)
      {
        a [i].c [c] = u.SeInDiSp (u.RNDfromCI (rangeMin [c], rangeMax [c]), rangeMin [c], rangeMax [c], rangeStep [c]);
      }
    }
    return;
  }

  // Generate a Latin hypercube
  for (int c = 0; c < coords; c++)
  {
    // Create ordered values
    for (int i = 0; i < popSize; i++)
    {
      tempValues [i] = (double)i / popSize;
    }

    // Shuffle the values (Fisher-Yates)
    for (int i = popSize - 1; i > 0; i--)
    {
      int j = (int)(u.RNDprobab () * (i + 1));
      if (j > i) j = i; // guard in case RNDprobab () returns exactly 1.0

      double temp    = tempValues [i];
      tempValues [i] = tempValues [j];
      tempValues [j] = temp;
    }

    // Assign the shuffled values
    for (int i = 0; i < popSize; i++)
    {
      latinCube [i * coords + c] = tempValues [i];
    }
  }

  // Convert the Latin hypercube values to coordinates
  for (int i = 0; i < popSize; i++)
  {
    for (int c = 0; c < coords; c++)
    {
      double x = rangeMin [c] + latinCube [i * coords + c] * (rangeMax [c] - rangeMin [c]);
      a [i].c [c] = u.SeInDiSp (x, rangeMin [c], rangeMax [c], rangeStep [c]);
    }
  }
}
//——————————————————————————————————————————————————————————————————————————————
```
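The same stratified sampling can be sketched in C++. This version illustrates the technique and is not a port of InitialPopulation(). It uses std::shuffle instead of a hand-written Fisher-Yates loop. It also adds a random offset inside each stratum instead of using the stratum's lower edge i / popSize; that change is an assumption. The function name `latinHypercube` is hypothetical.

```cpp
#include <algorithm>
#include <numeric>
#include <random>
#include <vector>

// Latin hypercube sketch: returns n points in `dims` dimensions on [0, 1).
// In every dimension, each stratum [k/n, (k+1)/n) receives exactly one point.
std::vector<std::vector<double>> latinHypercube(int n, int dims, std::mt19937& rng) {
    std::uniform_real_distribution<double> unit(0.0, 1.0);
    std::vector<std::vector<double>> pts(n, std::vector<double>(dims));
    std::vector<int> strata(n);
    for (int d = 0; d < dims; ++d) {
        std::iota(strata.begin(), strata.end(), 0);      // 0, 1, ..., n-1
        std::shuffle(strata.begin(), strata.end(), rng); // random stratum order per dimension
        for (int i = 0; i < n; ++i)
            pts[i][d] = (strata[i] + unit(rng)) / n;     // random offset inside the stratum
    }
    return pts;
}
```

To obtain agent coordinates, each value v is mapped as lo + v * (hi - lo), as in the method above.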

The FirstCarrierWaveSearch method implements a search stage that balances global exploration of the space against local exploitation of known good solutions. Its main task is to update the positions and velocities of agents. At the beginning, the method computes explorationRate = 1 - (epochNow / S1)^2. This rate decreases quadratically as the epochs progress, which gradually shifts the emphasis from global search to local refinement.

Then, for each agent, a mutation test is performed. With probability mutationRate * (1 + explorationRate), the agent is mutated to increase diversity and skips the rest of the update in this epoch. Otherwise, for each coordinate:

- the chaotic map is selected by cycling through the three maps with (i + c + epochNow) % 3, and the chaotic variable is updated,

- a global or local search strategy is chosen: global with probability explorationRate, local otherwise.

In global search, the agent targets the point obtained by mapping its chaotic variable onto the coordinate range. Its velocity blends the previous velocity, weighted by inertia, with the pull toward that point.

In local search, the agent is attracted to its personal best and to the global best, with the latter weighted by socialFactor. A chaotic perturbation with amplitude alpha[c] * explorationRate is added to prevent getting stuck; it shrinks over the phase.

In both cases, the velocity is limited to 10% of the coordinate range to avoid overly large steps. The new position is then snapped to the search space bounds and step. If a constraint violation above eps is detected, the position is corrected along the weighted gradient. The correction fades as the phase progresses, and the velocity is halved to smooth out subsequent steps.

```mql5
//——————————————————————————————————————————————————————————————————————————————
void C_AO_COA_chaos::FirstCarrierWaveSearch ()
{
  // Adaptive balance between exploration and exploitation
  double globalPhase     = (double)epochNow / S1;
  double explorationRate = 1.0 - globalPhase * globalPhase; // Quadratic decrease

  // For each agent
  for (int i = 0; i < popSize; i++)
  {
    // Apply mutations with some probability to increase diversity
    if (u.RNDprobab () < mutationRate * (1.0 + explorationRate))
    {
      ApplyMutation (i);
      continue;
    }

    for (int c = 0; c < coords; c++)
    {
      // Cycle through the three chaotic maps
      int mapType = ((i + c + epochNow) % 3);

      // Safely check access to the gamma array
      if (c < ArraySize (agent [i].gamma))
      {
        agent [i].gamma [c] = SelectChaosMap (agent [i].gamma [c], mapType);
      }
      else
      {
        continue; // Skip if the index is invalid
      }

      // Determine the relationship between global and local search
      double strategy = u.RNDprobab ();
      double x;

      if (strategy < explorationRate)
      {
        // Global search with a chaotic component
        x = rangeMin [c] + agent [i].gamma [c] * (rangeMax [c] - rangeMin [c]);

        // Add a velocity component to maintain the movement direction
        agent [i].velocity [c] = inertia * agent [i].velocity [c] + (1.0 - inertia) * (x - a [i].c [c]);
      }
      else
      {
        // Local search around the best solutions
        double personalAttraction = u.RNDprobab ();
        double globalAttraction   = u.RNDprobab ();

        // Balanced attraction to the best solutions
        double attractionTerm = //personalAttraction * (agent [i].cPrev [c] - a [i].c [c]) +
                                personalAttraction * (a [i].cB [c] - a [i].c [c]) +
                                socialFactor * globalAttraction * (cB [c] - a [i].c [c]);

        // Chaotic disturbance to prevent being stuck
        double chaosRange = alpha [c] * explorationRate;
        double chaosTerm  = chaosRange * (2.0 * agent [i].gamma [c] - 1.0);

        // Update velocity with inertia
        agent [i].velocity [c] = inertia * agent [i].velocity [c] + (1.0 - inertia) * (attractionTerm + chaosTerm);
      }

      // Limit the velocity to prevent too large steps
      double maxVelocity = 0.1 * (rangeMax [c] - rangeMin [c]);
      if (MathAbs (agent [i].velocity [c]) > maxVelocity)
      {
        agent [i].velocity [c] = maxVelocity * (agent [i].velocity [c] > 0 ? 1.0 : -1.0);
      }

      // Apply the velocity to the position
      x = a [i].c [c] + agent [i].velocity [c];

      // Apply search space restrictions
      a [i].c [c] = u.SeInDiSp (x, rangeMin [c], rangeMax [c], rangeStep [c]);

      // Check the constraints and apply a smooth correction
      double violation = CalculateConstraintValue (i, c);
      if (violation > eps)
      {
        double gradient   = CalculateWeightedGradient (i, c);
        double correction = -gradient * violation * (1.0 - globalPhase);
        a [i].c [c] = u.SeInDiSp (a [i].c [c] + correction, rangeMin [c], rangeMax [c], rangeStep [c]);

        // Halve the velocity when correcting violations
        agent [i].velocity [c] *= 0.5;
      }
    }
  }
}
//——————————————————————————————————————————————————————————————————————————————
```
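The exploration schedule and the global-search branch of FirstCarrierWaveSearch() can be sketched in C++ for a single coordinate. The helpers `explorationRate` and `globalMove` are hypothetical. The position is clamped to [lo, hi] rather than snapped to a step grid by u.SeInDiSp(), and the constraint correction is omitted.

```cpp
#include <algorithm>
#include <cmath>

// Exploration schedule used in FirstCarrierWaveSearch(): 1 - (epoch / S1)^2
double explorationRate(int epoch, int S1) {
    const double phase = static_cast<double>(epoch) / S1;
    return 1.0 - phase * phase;
}

struct Step { double position; double velocity; };

// Single-coordinate global-search move: blend the old velocity with the pull toward
// the chaotic target, limit the velocity to 10% of the range, then move and clamp.
Step globalMove(double pos, double vel, double gamma, double lo, double hi, double inertia) {
    const double target = lo + gamma * (hi - lo);                // chaotic target point
    double v = inertia * vel + (1.0 - inertia) * (target - pos); // inertia blend
    const double vMax = 0.1 * (hi - lo);
    v = std::min(std::max(v, -vMax), vMax);                      // velocity limit
    const double p = std::min(std::max(pos + v, lo), hi);        // position bound (no step rounding)
    return {p, v};
}
```

For example, with inertia = 0.7, an agent at 0 in [-10, 10] with gamma = 0.9 is pulled toward 8. The raw velocity 0.3 * 8 = 2.4 exceeds the limit of 2, so the agent moves only to 2. The velocity limit therefore dominates early in the phase, when chaotic targets can be far away.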

The SecondCarrierWaveSearch method is an optimization stage that develops after the initial search and is aimed at deepening and refining the solutions found. The main goal of this method is to improve the results achieved in the previous step by applying more sophisticated search strategies and parameter adaptation.

The method begins by computing the local search phase, localPhase = (epochNow - S1) / S2. Its square, intensificationRate, grows quadratically as the stage progresses. This lets the algorithm shift gradually from a broad search to a more detailed and precise exploration of the neighborhood of solutions already found. Before any positions are updated, the method checks whether the population has converged (IsConverged). If it has, every third agent (i % 3 == 0) is mutated instead of being moved, which restores diversity and helps the search escape local extrema.
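The schedule computed at the start of SecondCarrierWaveSearch can be checked with a small C++ sketch that mirrors the MQL5 arithmetic. It assumes, as the formula implies, that the second stage covers epochs S1 to S1 + S2. IntensificationRate, MutatedOnConvergence and CountMutated are hypothetical helper names, and IsConverged itself is not reproduced.

```cpp
#include <cassert>

// Mirrors the start of SecondCarrierWaveSearch: localPhase runs from 0 to 1
// over the S2 epochs that follow the first S1 epochs (assumed stage layout).
double IntensificationRate(int epochNow, int S1, int S2)
{
  assert(S2 > 0);
  double localPhase = (double)(epochNow - S1) / S2;
  return localPhase * localPhase;  // quadratic increase in intensification
}

// On convergence, the article mutates every third agent (i % 3 == 0).
bool MutatedOnConvergence(int i, bool isConverged)
{
  return isConverged && i % 3 == 0;
}

// Hypothetical helper: how many of popSize agents are sent to ApplyMutation.
int CountMutated(int popSize, bool isConverged)
{
  int n = 0;
  for (int i = 0; i < popSize; i++)
    if (MutatedOnConvergence(i, isConverged)) n++;
  return n;
}
```

For example, with S1 = 100 and S2 = 50, intensificationRate is only 0.25 halfway through the stage (epoch 125) and reaches 1 at epoch 150. The quadratic schedule therefore keeps the search relatively broad for much of the stage. When convergence is detected in a population of 50, the i % 3 == 0 rule sends 17 agents (i = 0, 3, ..., 48) to ApplyMutation in that epoch.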

The remaining agents are then updated coordinate by coordinate. For each coordinate, one of the three chaotic maps is applied to the agent's chaotic variable gamma, which injects quasi-random variation. The search radius, adaptiveAlpha, shrinks as the stage progresses, from alpha at the start to 0.2·alpha at the end, so the search focuses ever more narrowly on the current best solutions.

Each new position is built around a base point, chosen as follows:

- the agent's current position, if it is better than its personal best;
- otherwise, the global best found so far, with probability 0.7·(1 + intensificationRate);
- or else the agent's personal best.

This way, both individual results and those of the whole population are taken into account.
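The base-point rule and the shrinking radius can be isolated as pure functions. In this C++ sketch, the random number r, produced by u.RNDprobab () in the article, is passed in as a parameter so the branches can be tested deterministically. The function names are hypothetical, and r is assumed to lie in [0, 1). As the comment in the original code implies, a larger fitness value is treated as better.

```cpp
#include <cassert>

// Adaptive search radius: shrinks from alpha to 0.2 * alpha as intensificationRate goes 0 -> 1
double AdaptiveAlpha(double alpha, double intensificationRate)
{
  return alpha * (1.0 - 0.8 * intensificationRate);
}

// Base-point selection from SecondCarrierWaveSearch (hypothetical pure-function form).
// f and fB are the current and personal-best fitness; r replaces u.RNDprobab ().
double BasePoint(double f, double fB, double current, double globalBest,
                 double personalBest, double intensificationRate, double r)
{
  assert(r >= 0.0 && r < 1.0);
  if (f > fB) return current;                                  // current position is better
  if (r < 0.7 * (1.0 + intensificationRate)) return globalBest; // pull toward the global best
  return personalBest;
}
```

Because r < 1, the global-best branch becomes certain once 0.7 · (1 + intensificationRate) ≥ 1. That happens when intensificationRate ≥ 3/7 ≈ 0.43, which corresponds to localPhase ≈ 0.65. From that point on, an agent whose current position is not better than its personal best is always pulled toward the global best, never toward its own personal best.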

The position update combines a chaotic offset, adaptiveAlpha·(2·gamma - 1), with occasional random noise. With probability 0.1·(1 - intensificationRate), a simplified Lévy-type term is added to allow longer jumps. Both terms enter the velocity update, whose inertia coefficient is itself damped by the factor (1 - 0.5·intensificationRate); this smooths the changes and prevents abrupt moves. Finally, the new position (base point plus velocity) is clamped to the search range and snapped to the coordinate step by SeInDiSp, so the problem constraints are always respected.
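The noise term, the velocity update and the final clamping can be sketched the same way in C++. LevyNoise and UpdateVelocity reproduce the arithmetic of the MQL5 SecondCarrierWaveSearch code, with the random numbers passed in as parameters. ClampToGrid is a hypothetical stand-in for u.SeInDiSp, whose source is not shown in this article. It assumes that SeInDiSp clamps the value to [rangeMin, rangeMax] and snaps it to the rangeStep grid. The extra guard that keeps a snapped value from overshooting the upper bound is an addition of this sketch.

```cpp
#include <cassert>
#include <cmath>

// Simplified heavy-tailed step as written in the article: 0.01 * u1 / sqrt(u2),
// scaled by the adaptive radius and the coordinate range; zero when u2 <= 0.01.
double LevyNoise(double u1, double u2, double adaptiveAlpha, double range)
{
  if (u2 <= 0.01) return 0.0;  // protection against division by very small numbers
  return 0.01 * u1 / std::sqrt(u2) * adaptiveAlpha * range;
}

// Velocity update: the inertia term is damped by (1 - 0.5 * intensificationRate)
double UpdateVelocity(double v, double inertia, double intensificationRate,
                      double chaosOffset, double levyNoise)
{
  return inertia * (1.0 - 0.5 * intensificationRate) * v
         + (1.0 - inertia) * (chaosOffset + levyNoise);
}

// Hypothetical stand-in for u.SeInDiSp (assumed behavior, not the library source):
// clamp x to [lo, hi] and snap it to the grid lo + k * step (no snapping if step == 0).
double ClampToGrid(double x, double lo, double hi, double step)
{
  if (x <= lo) return lo;
  if (x >= hi) return hi;
  if (step == 0.0) return x;
  double snapped = lo + step * std::round((x - lo) / step);
  if (snapped > hi) snapped -= step;  // extra guard: stay inside the range, on the grid
  return snapped;
}
```

Two properties of the noise term follow directly from the formula, assuming RNDprobab returns values in [0, 1). First, the term is never negative. Second, because u2 > 0.01, it stays below 0.1 · adaptiveAlpha · (rangeMax - rangeMin). Note also that, unlike FirstCarrierWaveSearch, this stage does not cap the velocity; only the resulting position is clamped.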

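In short, as the second stage progresses, the search radius, the inertia and the chance of a Lévy jump all shrink, while the pull toward the global best grows. The agents therefore concentrate ever more tightly around the best solutions found.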

//——————————————————————————————————————————————————————————————————————————————
void C_AO_COA_chaos::SecondCarrierWaveSearch ()
{
  // Refining local search with adaptive parameters
  double localPhase          = (double)(epochNow - S1) / S2;
  double intensificationRate = localPhase * localPhase; // Quadratic increase in intensification

  // Check the algorithm convergence
  bool isConverged = IsConverged ();

  // For each agent
  for (int i = 0; i < popSize; i++)
  {
    // If convergence is detected, mutate every third agent
    if (isConverged && i % 3 == 0)
    {
      ApplyMutation (i);
      continue;
    }

    for (int c = 0; c < coords; c++)
    {
      // Rotate among the three chaotic maps
      // (for c = 0 the product i * c is 0, so all agents share one map in a given epoch)
      int mapType = ((i * c + epochNow) % 3);
      agent [i].gamma [c] = SelectChaosMap (agent [i].gamma [c], mapType);

      // Adaptive search radius, narrowing towards the end of optimization
      double adaptiveAlpha = alpha [c] * (1.0 - 0.8 * intensificationRate);

      // Select a base point with priority to the best solutions
      double basePoint;
      if (a [i].f > a [i].fB)
      {
        basePoint = a [i].c [c]; // The current position is better
      }
      else
      {
        double r = u.RNDprobab ();
        if (r < 0.7 * (1.0 + intensificationRate)) // Attraction to the global best grows over time
        {
          basePoint = cB [c];       // Global best
        }
        else
        {
          basePoint = a [i].cB [c]; // Personal best
        }
      }

      // Local search with a chaotic component
      double chaosOffset = adaptiveAlpha * (2.0 * agent [i].gamma [c] - 1.0);

      // Occasionally add Levy-like noise for longer jumps (heavy-tailed distribution)
      double levyNoise = 0.0;
      if (u.RNDprobab () < 0.1 * (1.0 - intensificationRate))
      {
        // Simplified approximation of Levy noise
        double u1 = u.RNDprobab ();
        double u2 = u.RNDprobab ();

        if (u2 > 0.01) // Protection against division by very small numbers
        {
          levyNoise = 0.01 * u1 / MathPow (u2, 0.5) * adaptiveAlpha * (rangeMax [c] - rangeMin [c]);
        }
      }

      // Update the velocity with inertia (the inertia weakens as intensification grows)
      agent [i].velocity [c] = inertia * (1.0 - 0.5 * intensificationRate) * agent [i].velocity [c] +
                               (1.0 - inertia) * (chaosOffset + levyNoise);

      // Apply the velocity to the base point
      double x = basePoint + agent [i].velocity [c];

      // Keep the position within the search range and on the coordinate step
      a [i].c [c] = u.SeInDiSp (x, rangeMin [c], rangeMax [c], rangeStep [c]);
    }
  }
}
//——————————————————————————————————————————————————————————————————————————————

In the next article, we will continue to examine the remaining methods of the algorithm, conduct tests and summarize the results.

Translated from Russian by MetaQuotes Ltd.

Original article: https://www.mql5.com/ru/articles/16729

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
