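Automating Trading Strategies in MQL5 (Part 21): Enhancing Neural Network Trading with Adaptive Learning Rates

Introduction

In the previous article (Part 20), we developed a multi-symbol trading strategy based on the Commodity Channel Index (CCI) and the Awesome Oscillator (AO), automating trend-reversal trades across several currency pairs in MetaQuotes Language 5 (MQL5) with signal generation and risk management. In Part 21, we turn to a neural-network-based trading strategy and introduce an adaptive learning rate mechanism to improve the accuracy of its market-movement predictions. We first explain the strategy and then implement it in MQL5.

By the end of this article, you will have a complete MQL5 trading system that uses a neural network with dynamic learning rate adjustment and is ready for further refinement. Let's get started!

Understanding the Adaptive Neural Network Learning Rate Strategy

In Part 20, we built a multi-symbol system that used the Commodity Channel Index and the Awesome Oscillator to automate trend-reversal trades across several currency pairs. In this part, we explore a dynamic neural network strategy. A neural network is a computational model loosely inspired by the interconnected neurons of the brain; here it processes several market indicators and adapts its learning process to market volatility in order to predict price movement more accurately. Our goal is a flexible, efficient trading system that uses the network to analyze complex market patterns and an adaptive learning rate to keep training stable while it trades on the resulting signals.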

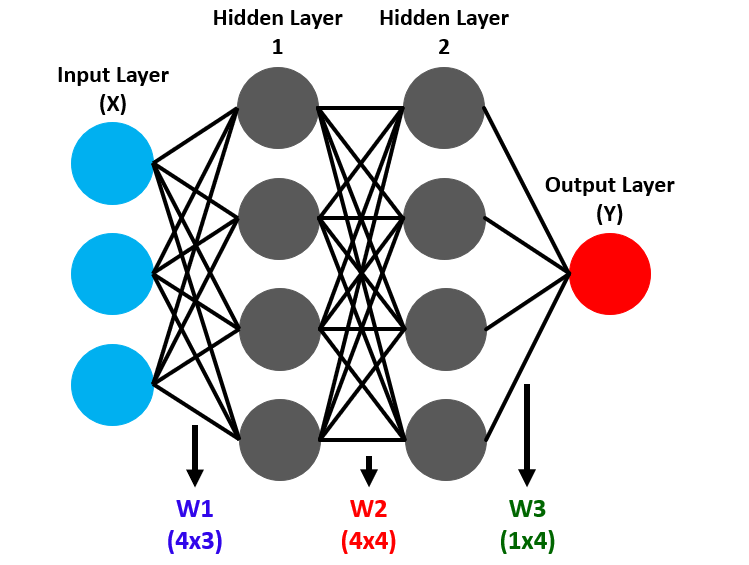

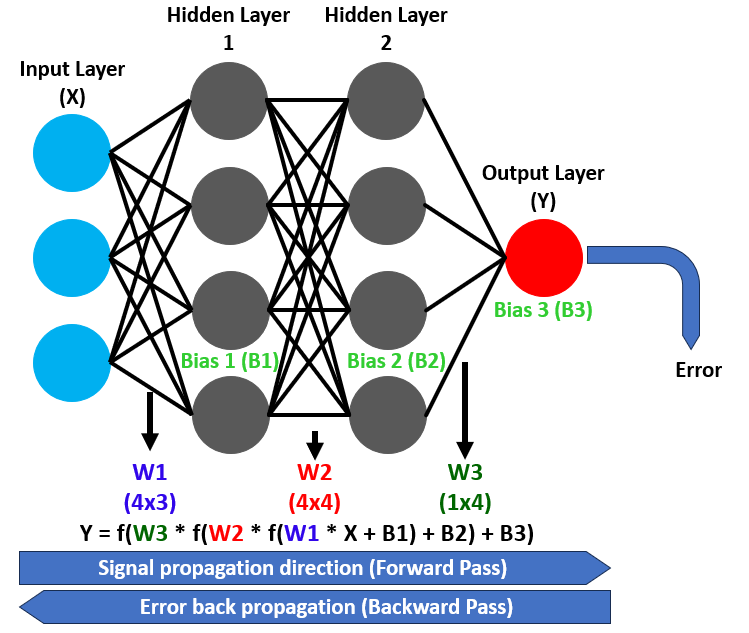

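A neural network operates through layers of nodes (neurons): an input layer that receives market data, hidden layers that extract complex patterns, and an output layer that produces trading signals, such as the predicted direction of price. Data flows through these layers by forward propagation: each neuron applies weights and a bias to its inputs and passes the result on, eventually producing a prediction. This is illustrated below: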

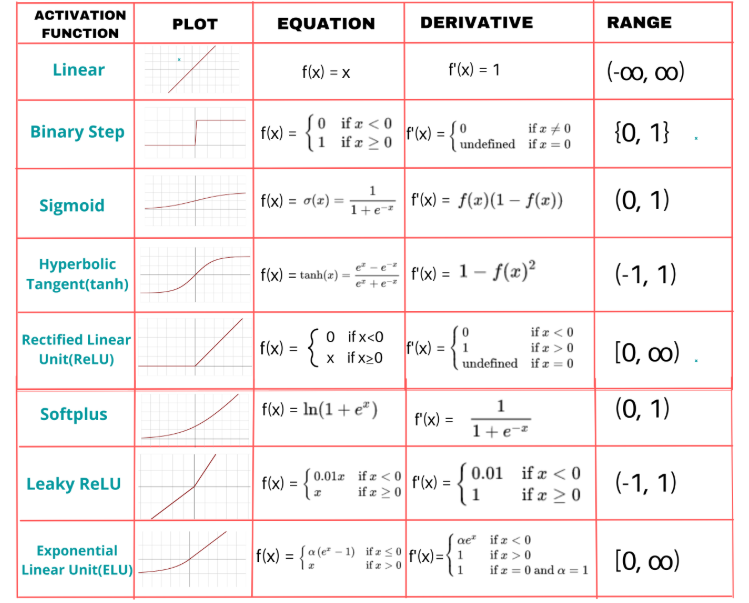

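The activation function is a key component: it introduces non-linearity into these transformations so that the network can model complex relationships. The sigmoid function, for example, maps any value into the range 0 to 1, which suits binary decisions such as buy or sell. Backpropagation refines the network by working backward from the prediction error and adjusting the weights and biases so that predictions improve over time.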
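As a compact summary of the two passes just described, the equations below write out a network with one hidden layer and sigmoid activations. The symbols are notation chosen for this sketch, not names from the article: $x_i$ are inputs, $h_j$ hidden activations, $o_k$ outputs, $t_k$ targets, $w_{ij}$ and $v_{jk}$ the input-to-hidden and hidden-to-output weights, $b_j$ and $c_k$ the biases, and $\eta$ the learning rate.

$$
\sigma(z) = \frac{1}{1 + e^{-z}}, \qquad \sigma'(z) = \sigma(z)\,\bigl(1 - \sigma(z)\bigr)
$$

$$
h_j = \sigma\Bigl(\sum_i x_i\, w_{ij} + b_j\Bigr), \qquad o_k = \sigma\Bigl(\sum_j h_j\, v_{jk} + c_k\Bigr)
$$

$$
\delta_k = o_k\,(1 - o_k)\,(t_k - o_k), \qquad \delta_j = h_j\,(1 - h_j) \sum_k \delta_k\, v_{jk}
$$

$$
v_{jk} \leftarrow v_{jk} + \eta\,\delta_k\, h_j, \qquad w_{ij} \leftarrow w_{ij} + \eta\,\delta_j\, x_i, \qquad c_k \leftarrow c_k + \eta\,\delta_k, \qquad b_j \leftarrow b_j + \eta\,\delta_j
$$

These updates correspond to gradient descent on the squared error (up to a constant factor). The factor $o_k(1 - o_k)$ is the sigmoid derivative, which is why it appears in every delta.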

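To make the network dynamic, we plan an adaptive learning rate strategy that changes how quickly weights are updated during backpropagation: learning speeds up while predictions are tracking the market (the training error is falling) and slows down when the error spikes, to preserve stability.

We plan a system that feeds market indicators such as moving averages and momentum measures into the input layer, processes them through a hidden layer to detect patterns, and produces trading signals at the output layer. Neuron outputs pass through the sigmoid activation function, which yields smooth, interpretable values between 0 and 1; we chose it because the network has two outputs, one for a buy signal and one for a sell signal. Other activation functions that could be used are shown below: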

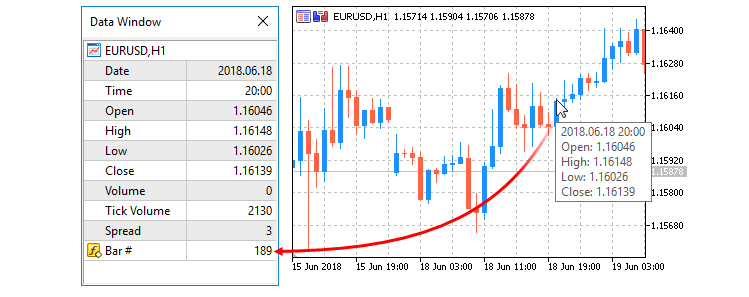

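In addition, we will adjust the learning rate according to training performance and the number of hidden neurons according to market volatility, giving a responsive system that balances complexity and efficiency. As inputs we will use two moving averages (MA), the Relative Strength Index (RSI), and the Average True Range (ATR); once a signal is generated, the program opens a position.

Implementation in MQL5

To create the program in MQL5, open MetaEditor, locate the "Experts" folder in the Navigator (the program is an Expert Advisor), click the "New" tab, and follow the wizard to create the file. With the coding environment ready, we begin by declaring the input variables, structures, and classes we need, following an object-oriented programming (OOP) approach.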

//+------------------------------------------------------------------+ //| Neural Networks Propagation EA.mq5 | //| Copyright 2025, Allan Munene Mutiiria. | //| https://t.me/Forex_Algo_Trader | //+------------------------------------------------------------------+ #property copyright "Copyright 2025, Allan Munene Mutiiria." #property link "https://t.me/Forex_Algo_Trader" #property version "1.00" #include <Trade/Trade.mqh> CTrade tradeObject; //--- Instantiate trade object for executing trades // Input parameters with clear, meaningful names input double LotSize = 0.1; // Lot Size input int StopLossPoints = 100; // Stop Loss (points) input int TakeProfitPoints = 100; // Take Profit (points) input int MinHiddenNeurons = 10; // Minimum Hidden Neurons input int MaxHiddenNeurons = 50; // Maximum Hidden Neurons input int TrainingBarCount = 1000; // Training Bars input double MinPredictionAccuracy = 0.7; // Minimum Prediction Accuracy input double MinLearningRate = 0.01; // Minimum Learning Rate input double MaxLearningRate = 0.5; // Maximum Learning Rate input string InputToHiddenWeights = "0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1"; // Input-to-Hidden Weights input string HiddenToOutputWeights = "0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1"; // Hidden-to-Output Weights input string HiddenBiases = "0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1,0.1,-0.1"; // Hidden Biases input string OutputBiases = "0.1,-0.1"; // Output Biases // Neural Network Structure Constants const int INPUT_NEURON_COUNT = 10; //--- Define number of input neurons 
const int OUTPUT_NEURON_COUNT = 2; //--- Define number of output neurons const int MAX_HISTORY_SIZE = 10; //--- Define maximum history size for accuracy and error tracking // Indicator handles int ma20IndicatorHandle; //--- Handle for 20-period moving average int ma50IndicatorHandle; //--- Handle for 50-period moving average int rsiIndicatorHandle; //--- Handle for RSI indicator int atrIndicatorHandle; //--- Handle for ATR indicator // Training related structures struct TrainingData { double inputValues[]; //--- Array to store input values for training double targetValues[]; //--- Array to store target values for training };

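Here we lay the foundation for the adaptive-learning-rate neural network strategy by setting up the components for trade execution and data handling. We include the "Trade.mqh" library and create "tradeObject", an instance of the "CTrade" class, to manage trade operations so that buy and sell orders can be placed according to the network's predictions.

We define input parameters to configure the strategy: "LotSize" controls the trade volume, "StopLossPoints" and "TakeProfitPoints" handle risk management, and "MinHiddenNeurons" and "MaxHiddenNeurons" bound the number of hidden-layer neurons. "TrainingBarCount" sets how many historical bars are used for training, "MinPredictionAccuracy" is the accuracy threshold, and "MinLearningRate" and "MaxLearningRate" bound the adaptive learning rate. The string inputs "InputToHiddenWeights", "HiddenToOutputWeights", "HiddenBiases", and "OutputBiases" supply initial weights and biases, so the network can start from a preset or default configuration. The default strings hold 100, 20, 10, and 2 values respectively, which matches a network of 10 inputs, 10 hidden neurons, and 2 outputs.

Next, we set constants for the network structure: "INPUT_NEURON_COUNT" is 10 (market data inputs), "OUTPUT_NEURON_COUNT" is 2 (buy and sell signal outputs), and "MAX_HISTORY_SIZE" is 10 (entries kept for tracking training accuracy and error). We create the indicator handles "ma20IndicatorHandle", "ma50IndicatorHandle", "rsiIndicatorHandle", and "atrIndicatorHandle" for the 20-period and 50-period MAs, the RSI, and the ATR, which feed market data into the network. Finally, we define the "TrainingData" structure with the arrays "inputValues" and "targetValues". Each training sample stores its input features in "inputValues" and its expected outputs in "targetValues", which keeps the data organized during learning.

Next, we define a class that encapsulates the member variables we will use most often.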

    // Neural Network Class
    class CNeuralNetwork {
    private:
       int    inputNeuronCount;         //--- Number of input neurons
       int    hiddenNeuronCount;        //--- Number of hidden neurons
       int    outputNeuronCount;        //--- Number of output neurons
       double inputLayer[];             //--- Array for input layer values
       double hiddenLayer[];            //--- Array for hidden layer values
       double outputLayer[];            //--- Array for output layer values
       double inputToHiddenWeights[];   //--- Weights between input and hidden layers
       double hiddenToOutputWeights[];  //--- Weights between hidden and output layers
       double hiddenLayerBiases[];      //--- Biases for hidden layer
       double outputLayerBiases[];      //--- Biases for output layer
       double outputDeltas[];           //--- Delta values for output layer
       double hiddenDeltas[];           //--- Delta values for hidden layer
       double trainingError;            //--- Current training error
       double currentLearningRate;      //--- Current learning rate
       double accuracyHistory[];        //--- History of training accuracy
       double errorHistory[];           //--- History of training errors
       int    historyRecordCount;       //--- Number of recorded history entries
    };

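Here we create the "CNeuralNetwork" class, the core structure of the neural network. Its private member variables manage the architecture and the training process. "inputNeuronCount", "hiddenNeuronCount", and "outputNeuronCount" hold the number of neurons in the input, hidden, and output layers, in line with the strategy's design of processing market data and producing trading signals.

Arrays store the values of each layer: "inputLayer" holds the market indicator inputs, "hiddenLayer" holds the intermediate pattern values, and "outputLayer" holds the buy/sell predictions. For the network computations, "inputToHiddenWeights" and "hiddenToOutputWeights" store the connection weights between layers, and "hiddenLayerBiases" and "outputLayerBiases" store the biases of the hidden and output layers. For backpropagation, "outputDeltas" and "hiddenDeltas" store the error gradients used to update the weights and biases during training.

We also add "trainingError" to track the current error, "currentLearningRate" to hold the adaptive learning rate, the "accuracyHistory" and "errorHistory" arrays to monitor training performance over time, and "historyRecordCount" to count the recorded entries, so that the network can adjust its learning according to performance trends. The class is the basis for the forward propagation, backpropagation, and learning-rate functions that follow. Within the private section, we can also define a method that parses a string input into an array.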

    // Parse comma-separated string to array
    bool ParseStringToArray(string inputString, double &output[], int expectedSize) {
       //--- Check if input string is empty
       if(inputString == "") return false;
       string values[];
       //--- Initialize array for parsed values
       ArrayResize(values, 0);
       //--- Split input string by comma (44 is the character code of ',')
       int count = StringSplit(inputString, 44, values);
       //--- Check if string splitting failed
       if(count <= 0) {
          Print("Error: StringSplit failed for input: ", inputString, ". Error code: ", GetLastError());
          return false;
       }
       //--- Verify correct number of values
       if(count != expectedSize) {
          Print("Error: Invalid number of values in input string. Expected: ", expectedSize, ", Got: ", count);
          return false;
       }
       //--- Resize output array to expected size
       ArrayResize(output, expectedSize);
       //--- Convert string values to doubles and normalize
       for(int i = 0; i < count; i++) {
          output[i] = StringToDouble(values[i]);
          //--- Clamp values between -1.0 and 1.0
          if(MathAbs(output[i]) > 1.0)
             output[i] = MathMax(-1.0, MathMin(1.0, output[i]));
       }
       return true;
    }

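The "ParseStringToArray" function in the "CNeuralNetwork" class handles the comma-separated strings that carry the network's weights and biases. It first checks whether "inputString" is empty and returns "false" if so, then uses StringSplit to split the string on commas into the "values" array. If the split fails, or the number of elements "count" does not match "expectedSize", it logs an error with Print and returns "false". Otherwise it resizes "output" with ArrayResize, converts each string to a double with StringToDouble, clamps each value to the range -1.0 to 1.0 with MathMax and MathMin, and returns "true". The remaining helper functions are declared in the public section as follows and defined later.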
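The parsing rule above can be tried outside MetaTrader. The following is a minimal standalone C++ sketch, not the article's MQL5 code; the function name "parseWeights" is hypothetical. It uses "std::strtod", which, like StringToDouble, yields 0 for a token that is not a number.

```cpp
#include <algorithm>
#include <cstdlib>
#include <sstream>
#include <string>
#include <vector>

// Parse a comma-separated list into `out`, clamping each value to [-1, 1].
// Returns false (leaving `out` untouched) if the string is empty or the
// number of values differs from expectedSize.
bool parseWeights(const std::string& text, std::size_t expectedSize,
                  std::vector<double>& out) {
    if (text.empty()) return false;
    std::vector<double> parsed;
    std::stringstream stream(text);
    std::string token;
    while (std::getline(stream, token, ',')) {
        const double value = std::strtod(token.c_str(), nullptr);
        parsed.push_back(std::max(-1.0, std::min(1.0, value)));
    }
    if (parsed.size() != expectedSize) return false;
    out = parsed;
    return true;
}
```

One consequence of the size check is worth keeping in mind: a weight string sized for 10 hidden neurons no longer matches once the hidden layer has a different size, so the caller needs a fallback in that case.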

    public:
       CNeuralNetwork(int inputs, int hidden, int outputs);  //--- Constructor
       void   InitializeWeights();                           //--- Initialize network weights
       double Sigmoid(double x);                             //--- Apply sigmoid activation function
       void   ForwardPropagate();                            //--- Perform forward propagation
       void   Backpropagate(const double &targets[]);        //--- Perform backpropagation
       void   SetInput(double &inputs[]);                    //--- Set input values
       void   GetOutput(double &outputs[]);                  //--- Retrieve output values
       double TrainOnHistoricalData(TrainingData &data[]);   //--- Train on historical data
       void   UpdateNetworkWithRecentData();                 //--- Update with recent data
       void   InitializeTraining();                          //--- Initialize training arrays
       void   ResizeNetwork(int newHiddenNeurons);           //--- Resize network
       void   AdjustLearningRate();                          //--- Adjust learning rate dynamically
       double GetRecentAccuracy();                           //--- Get recent training accuracy
       double GetRecentError();                              //--- Get recent training error
       bool   ShouldRetrain();                               //--- Check if retraining is needed
       double CalculateDynamicNeurons();                     //--- Calculate dynamic neuron count
       int    GetHiddenNeurons() {
          return hiddenNeuronCount; //--- Get current hidden neuron count
       }

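Here we define the public interface of the "CNeuralNetwork" class. The "CNeuralNetwork" constructor takes "inputs", "hidden", and "outputs" as parameters and sets up the network structure, and the "InitializeWeights" method configures the weights and biases. The "Sigmoid" method computes the activation function, "ForwardPropagate" runs the forward pass, and "Backpropagate" updates the network from the target values "targets". "SetInput" and "GetOutput" load the "inputs" and retrieve the "outputs".

"TrainOnHistoricalData" trains on historical data supplied as "TrainingData" structures, "UpdateNetworkWithRecentData" updates the network with the latest data, "InitializeTraining" sizes the training arrays, "ResizeNetwork" changes the number of hidden neurons to "newHiddenNeurons", and "AdjustLearningRate" adjusts the learning rate dynamically. "GetRecentAccuracy", "GetRecentError", "ShouldRetrain", "CalculateDynamicNeurons", and "GetHiddenNeurons" monitor training and support adjusting the hidden neuron count "hiddenNeuronCount" when needed. We now define these functions as follows: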

    // Constructor
    CNeuralNetwork::CNeuralNetwork(int inputs, int hidden, int outputs) {
       //--- Set input neuron count
       inputNeuronCount = inputs;
       //--- Set output neuron count
       outputNeuronCount = outputs;
       //--- Initialize learning rate to minimum
       currentLearningRate = MinLearningRate;
       //--- Set hidden neuron count
       hiddenNeuronCount = hidden;
       //--- Ensure hidden neurons within bounds
       if(hiddenNeuronCount < MinHiddenNeurons) hiddenNeuronCount = MinHiddenNeurons;
       if(hiddenNeuronCount > MaxHiddenNeurons) hiddenNeuronCount = MaxHiddenNeurons;
       //--- Resize input layer array
       ArrayResize(inputLayer, inputs);
       //--- Resize hidden layer array
       ArrayResize(hiddenLayer, hiddenNeuronCount);
       //--- Resize output layer array
       ArrayResize(outputLayer, outputs);
       //--- Resize input-to-hidden weights array
       ArrayResize(inputToHiddenWeights, inputs * hiddenNeuronCount);
       //--- Resize hidden-to-output weights array
       ArrayResize(hiddenToOutputWeights, hiddenNeuronCount * outputs);
       //--- Resize hidden biases array
       ArrayResize(hiddenLayerBiases, hiddenNeuronCount);
       //--- Resize output biases array
       ArrayResize(outputLayerBiases, outputs);
       //--- Resize accuracy history array
       ArrayResize(accuracyHistory, MAX_HISTORY_SIZE);
       //--- Resize error history array
       ArrayResize(errorHistory, MAX_HISTORY_SIZE);
       //--- Initialize history record count
       historyRecordCount = 0;
       //--- Initialize training error
       trainingError = 0.0;
       //--- Initialize network weights
       InitializeWeights();
       //--- Initialize training arrays
       InitializeTraining();
    }

    // Initialize training arrays
    void CNeuralNetwork::InitializeTraining() {
       //--- Resize output deltas array
       ArrayResize(outputDeltas, outputNeuronCount);
       //--- Resize hidden deltas array
       ArrayResize(hiddenDeltas, hiddenNeuronCount);
    }

    // Initialize weights
    void CNeuralNetwork::InitializeWeights() {
       //--- Track if weights and biases are set
       bool isInputToHiddenWeightsSet = false;
       bool isHiddenToOutputWeightsSet = false;
       bool isHiddenBiasesSet = false;
       bool isOutputBiasesSet = false;
       double tempInputToHiddenWeights[];
       double tempHiddenToOutputWeights[];
       double tempHiddenBiases[];
       double tempOutputBiases[];
       //--- Parse and set input-to-hidden weights if provided
       if(InputToHiddenWeights != "" && ParseStringToArray(InputToHiddenWeights, tempInputToHiddenWeights, inputNeuronCount * hiddenNeuronCount)) {
          //--- Copy parsed weights to main array
          ArrayCopy(inputToHiddenWeights, tempInputToHiddenWeights);
          isInputToHiddenWeightsSet = true;
          //--- Log weight initialization
          Print("Initialized input-to-hidden weights from input: ", InputToHiddenWeights);
       }
       //--- Parse and set hidden-to-output weights if provided
       if(HiddenToOutputWeights != "" && ParseStringToArray(HiddenToOutputWeights, tempHiddenToOutputWeights, hiddenNeuronCount * outputNeuronCount)) {
          //--- Copy parsed weights to main array
          ArrayCopy(hiddenToOutputWeights, tempHiddenToOutputWeights);
          isHiddenToOutputWeightsSet = true;
          //--- Log weight initialization
          Print("Initialized hidden-to-output weights from input: ", HiddenToOutputWeights);
       }
       //--- Parse and set hidden biases if provided
       if(HiddenBiases != "" && ParseStringToArray(HiddenBiases, tempHiddenBiases, hiddenNeuronCount)) {
          //--- Copy parsed biases to main array
          ArrayCopy(hiddenLayerBiases, tempHiddenBiases);
          isHiddenBiasesSet = true;
          //--- Log bias initialization
          Print("Initialized hidden biases from input: ", HiddenBiases);
       }
       //--- Parse and set output biases if provided
       if(OutputBiases != "" && ParseStringToArray(OutputBiases, tempOutputBiases, outputNeuronCount)) {
          //--- Copy parsed biases to main array
          ArrayCopy(outputLayerBiases, tempOutputBiases);
          isOutputBiasesSet = true;
          //--- Log bias initialization
          Print("Initialized output biases from input: ", OutputBiases);
       }
       //--- Initialize input-to-hidden weights randomly if not set
       if(!isInputToHiddenWeightsSet) {
          for(int i = 0; i < ArraySize(inputToHiddenWeights); i++)
             inputToHiddenWeights[i] = (MathRand() / 32767.0) * 2 - 1;
       }
       //--- Initialize hidden-to-output weights randomly if not set
       if(!isHiddenToOutputWeightsSet) {
          for(int i = 0; i < ArraySize(hiddenToOutputWeights); i++)
             hiddenToOutputWeights[i] = (MathRand() / 32767.0) * 2 - 1;
       }
       //--- Initialize hidden biases randomly if not set
       if(!isHiddenBiasesSet) {
          for(int i = 0; i < ArraySize(hiddenLayerBiases); i++)
             hiddenLayerBiases[i] = (MathRand() / 32767.0) * 2 - 1;
       }
       //--- Initialize output biases randomly if not set
       if(!isOutputBiasesSet) {
          for(int i = 0; i < ArraySize(outputLayerBiases); i++)
             outputLayerBiases[i] = (MathRand() / 32767.0) * 2 - 1;
       }
    }

    // Sigmoid activation function
    double CNeuralNetwork::Sigmoid(double x) {
       //--- Compute and return sigmoid value
       return 1.0 / (1.0 + MathExp(-x));
    }

    // Set input
    void CNeuralNetwork::SetInput(double &inputs[]) {
       //--- Check for input array size mismatch
       if(ArraySize(inputs) != inputNeuronCount) {
          Print("Error: Input array size mismatch. Expected: ", inputNeuronCount, ", Got: ", ArraySize(inputs));
          return;
       }
       //--- Copy inputs to input layer
       ArrayCopy(inputLayer, inputs);
    }

    // Forward propagation
    void CNeuralNetwork::ForwardPropagate() {
       //--- Compute hidden layer values
       for(int j = 0; j < hiddenNeuronCount; j++) {
          double sum = 0;
          //--- Calculate weighted sum for hidden neuron
          for(int i = 0; i < inputNeuronCount; i++)
             sum += inputLayer[i] * inputToHiddenWeights[i * hiddenNeuronCount + j];
          //--- Apply sigmoid activation
          hiddenLayer[j] = Sigmoid(sum + hiddenLayerBiases[j]);
       }
       //--- Compute output layer values
       for(int j = 0; j < outputNeuronCount; j++) {
          double sum = 0;
          //--- Calculate weighted sum for output neuron
          for(int i = 0; i < hiddenNeuronCount; i++)
             sum += hiddenLayer[i] * hiddenToOutputWeights[i * outputNeuronCount + j];
          //--- Apply sigmoid activation
          outputLayer[j] = Sigmoid(sum + outputLayerBiases[j]);
       }
    }

    // Get output
    void CNeuralNetwork::GetOutput(double &outputs[]) {
       //--- Resize output array
       ArrayResize(outputs, outputNeuronCount);
       //--- Copy output layer to outputs
       ArrayCopy(outputs, outputLayer);
    }

    // Backpropagation
    void CNeuralNetwork::Backpropagate(const double &targets[]) {
       //--- Calculate output layer deltas
       for(int i = 0; i < outputNeuronCount; i++) {
          double output = outputLayer[i];
          //--- Compute delta for output neuron
          outputDeltas[i] = output * (1 - output) * (targets[i] - output);
       }
       //--- Calculate hidden layer deltas
       for(int i = 0; i < hiddenNeuronCount; i++) {
          double error = 0;
          //--- Sum weighted errors from output layer
          for(int j = 0; j < outputNeuronCount; j++)
             error += outputDeltas[j] * hiddenToOutputWeights[i * outputNeuronCount + j];
          double output = hiddenLayer[i];
          //--- Compute delta for hidden neuron
          hiddenDeltas[i] = output * (1 - output) * error;
       }
       //--- Update hidden-to-output weights
       for(int i = 0; i < hiddenNeuronCount; i++) {
          for(int j = 0; j < outputNeuronCount; j++) {
             int idx = i * outputNeuronCount + j;
             //--- Adjust weight based on learning rate and delta
             hiddenToOutputWeights[idx] += currentLearningRate * outputDeltas[j] * hiddenLayer[i];
          }
       }
       //--- Update input-to-hidden weights
       for(int i = 0; i < inputNeuronCount; i++) {
          for(int j = 0; j < hiddenNeuronCount; j++) {
             int idx = i * hiddenNeuronCount + j;
             //--- Adjust weight based on learning rate and delta
             inputToHiddenWeights[idx] += currentLearningRate * hiddenDeltas[j] * inputLayer[i];
          }
       }
       //--- Update hidden biases
       for(int i = 0; i < hiddenNeuronCount; i++)
          //--- Adjust bias based on learning rate and delta
          hiddenLayerBiases[i] += currentLearningRate * hiddenDeltas[i];
       //--- Update output biases
       for(int i = 0; i < outputNeuronCount; i++)
          //--- Adjust bias based on learning rate and delta
          outputLayerBiases[i] += currentLearningRate * outputDeltas[i];
    }

    // Resize network (adjust hidden neurons)
    void CNeuralNetwork::ResizeNetwork(int newHiddenNeurons) {
       //--- Clamp new neuron count within bounds
       newHiddenNeurons = MathMax(MinHiddenNeurons, MathMin(newHiddenNeurons, MaxHiddenNeurons));
       //--- Check if resizing is necessary
       if(newHiddenNeurons == hiddenNeuronCount) return;
       //--- Log resizing information
       Print("Resizing network. New hidden neurons: ", newHiddenNeurons, ", Previous: ", hiddenNeuronCount);
       //--- Update hidden neuron count
       hiddenNeuronCount = newHiddenNeurons;
       //--- Resize hidden layer array
       ArrayResize(hiddenLayer, hiddenNeuronCount);
       //--- Resize input-to-hidden weights array
       ArrayResize(inputToHiddenWeights, inputNeuronCount * hiddenNeuronCount);
       //--- Resize hidden-to-output weights array
       ArrayResize(hiddenToOutputWeights, hiddenNeuronCount * outputNeuronCount);
       //--- Resize hidden biases array
       ArrayResize(hiddenLayerBiases, hiddenNeuronCount);
       //--- Resize hidden deltas array
       ArrayResize(hiddenDeltas, hiddenNeuronCount);
       //--- Reinitialize weights
       InitializeWeights();
    }

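Here we implement the key components of the neural network. The "CNeuralNetwork" constructor takes "inputs", "hidden", and "outputs" as parameters and sets "inputNeuronCount", "outputNeuronCount", and "hiddenNeuronCount", with the last clamped to the range from "MinHiddenNeurons" to "MaxHiddenNeurons". Because we explained a similar class prototype in detail in the previous part, we keep the explanation brief here.

Next, we initialize "currentLearningRate" to "MinLearningRate", use ArrayResize to size the "inputLayer", "hiddenLayer", "outputLayer", "inputToHiddenWeights", "hiddenToOutputWeights", "hiddenLayerBiases", "outputLayerBiases", "accuracyHistory", and "errorHistory" arrays, and call "InitializeWeights" and "InitializeTraining" to configure the network.

The "InitializeTraining" function sizes the "outputDeltas" and "hiddenDeltas" arrays so that backpropagation can store the error gradients. The "InitializeWeights" function sets the weights and biases. For each of the inputs such as "InputToHiddenWeights" that is non-empty and parses successfully with "ParseStringToArray", it loads the corresponding array ("inputToHiddenWeights", "hiddenToOutputWeights", "hiddenLayerBiases", or "outputLayerBiases") and logs the fact with "Print". Any array that was not loaded this way, including the case where the string holds the wrong number of values for the current network size, is filled with random values between -1 and 1 using MathRand. The "Sigmoid" function computes the sigmoid activation of its input "x" using MathExp.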

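The "SetInput" function first uses ArraySize to verify that the size of "inputs" matches the expected count, logging an error with "Print" and returning if it does not; otherwise it copies "inputs" into "inputLayer". The "ForwardPropagate" function computes the "hiddenLayer" and "outputLayer" values: for each neuron it forms the weighted sum of the previous layer, adds the bias from "hiddenLayerBiases" or "outputLayerBiases", and applies "Sigmoid". The weights are stored in flat arrays, so the weight from source neuron i to destination neuron j is found at index i times the destination layer size plus j. The "GetOutput" function resizes "outputs" with "ArrayResize" and then copies "outputLayer" into it with ArrayCopy.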
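The flat weight indexing is the part of the forward pass that is easiest to get wrong, so here is a minimal standalone C++ sketch of one layer, using hypothetical names ("denseLayer", "sigmoid") rather than the article's class. It assumes the same layout as above: the weight from source neuron i to destination neuron j lives at index i * nOut + j.

```cpp
#include <cmath>
#include <vector>

// Logistic sigmoid, mapping any real value into (0, 1).
double sigmoid(double x) { return 1.0 / (1.0 + std::exp(-x)); }

// One fully connected layer:
//   out[j] = sigmoid(sum_i in[i] * weights[i * nOut + j] + biases[j])
// The number of outputs is taken from the size of `biases`.
std::vector<double> denseLayer(const std::vector<double>& in,
                               const std::vector<double>& weights,
                               const std::vector<double>& biases) {
    const std::size_t nOut = biases.size();
    std::vector<double> out(nOut);
    for (std::size_t j = 0; j < nOut; ++j) {
        double sum = biases[j];
        for (std::size_t i = 0; i < in.size(); ++i)
            sum += in[i] * weights[i * nOut + j];
        out[j] = sigmoid(sum);
    }
    return out;
}
```

Calling "denseLayer" twice, first with the input-to-hidden weights and then with the hidden-to-output weights, reproduces the two loops of a one-hidden-layer forward pass.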

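The "Backpropagate" function computes "outputDeltas" and "hiddenDeltas" from the target values "targets" and then uses "currentLearningRate" to update "hiddenToOutputWeights", "inputToHiddenWeights", "hiddenLayerBiases", and "outputLayerBiases". Finally, the "ResizeNetwork" function clamps the requested hidden neuron count to the allowed range, resizes "hiddenLayer", "hiddenDeltas", and the related arrays, logs the change with "Print", and reinitializes the weights through "InitializeWeights". Note that resizing therefore discards what the network has learned so far. Next, we need to adjust the learning rate according to the error trend, so let us look at that closely.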
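To see the delta rule in isolation, the sketch below applies one update to a single sigmoid neuron in standalone C++. It is an illustration under simplifying assumptions (one neuron, no hidden layer), and "trainStep" is a hypothetical name; the delta has the same form as in "Backpropagate", namely output * (1 - output) * (target - output).

```cpp
#include <cmath>
#include <vector>

// One gradient step for a single sigmoid neuron.
// Updates `weights` and `bias` in place and returns the output computed
// BEFORE the update, so successive calls show how the prediction moves.
double trainStep(std::vector<double>& weights, double& bias,
                 const std::vector<double>& inputs, double target,
                 double learningRate) {
    double sum = bias;
    for (std::size_t i = 0; i < inputs.size(); ++i)
        sum += weights[i] * inputs[i];
    const double output = 1.0 / (1.0 + std::exp(-sum));
    // Error signal scaled by the sigmoid derivative output * (1 - output).
    const double delta = output * (1.0 - output) * (target - output);
    for (std::size_t i = 0; i < inputs.size(); ++i)
        weights[i] += learningRate * delta * inputs[i];
    bias += learningRate * delta;
    return output;
}
```

Starting from zero weights with inputs {1, 1}, target 1, and a learning rate of 0.5, the first call returns 0.5 and moves each weight and the bias by 0.5 * 0.125 = 0.0625, so the second call returns a value closer to the target.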

    // Adjust learning rate based on error trend
    void CNeuralNetwork::AdjustLearningRate() {
       //--- Check if enough history exists
       if(historyRecordCount < 2) return;
       //--- Get last and previous errors
       double lastError = errorHistory[historyRecordCount - 1];
       double prevError = errorHistory[historyRecordCount - 2];
       //--- Calculate error difference
       double errorDiff = lastError - prevError;
       //--- Increase learning rate if error decreased
       if(lastError < prevError)
          currentLearningRate = MathMin(currentLearningRate * 1.05, MaxLearningRate);
       //--- Decrease learning rate if error increased significantly
       else if(lastError > prevError * 1.2)
          currentLearningRate = MathMax(currentLearningRate * 0.9, MinLearningRate);
       //--- Slightly decrease learning rate otherwise
       else
          currentLearningRate = MathMax(currentLearningRate * 0.99, MinLearningRate);
       //--- Log learning rate adjustment
       Print("Adjusted learning rate to: ", currentLearningRate, ", Last Error: ", lastError, ", Prev Error: ", prevError, ", Error Diff: ", errorDiff);
    }

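The "AdjustLearningRate" function in the "CNeuralNetwork" class implements the adaptive learning rate mechanism. It first checks that "historyRecordCount" is at least 2, so that "errorHistory" holds enough data, and returns without any adjustment otherwise. It then reads the latest error from "errorHistory" as "lastError" and the one before it as "prevError", and stores their difference in "errorDiff" for logging.

If "lastError" is less than "prevError", we raise "currentLearningRate" by 5%, using MathMin to cap it at "MaxLearningRate". If "lastError" exceeds "prevError" by more than 20%, we lower "currentLearningRate" by 10%, using MathMax to keep it at or above "MinLearningRate". In all other cases we lower "currentLearningRate" by 1%, again bounded below with "MathMax". Finally, we log the adjusted "currentLearningRate" together with "lastError", "prevError", and "errorDiff" using "Print". Next, we use the ATR indicator to compute the number of hidden neurons dynamically, so that the network size follows market volatility.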

    // Calculate dynamic number of hidden neurons based on ATR
    double CNeuralNetwork::CalculateDynamicNeurons() {
       double atrValues[];
       //--- Set ATR array as series
       ArraySetAsSeries(atrValues, true);
       //--- Copy ATR buffer
       if(CopyBuffer(atrIndicatorHandle, 0, 0, 10, atrValues) < 10) {
          Print("Error: Failed to copy ATR for dynamic neurons. Using default: ", hiddenNeuronCount);
          return hiddenNeuronCount;
       }
       //--- Calculate average ATR
       //--- NOTE: avgATR is computed here but the ratio below uses only atrValues[0]
       double avgATR = 0;
       for(int i = 0; i < 10; i++)
          avgATR += atrValues[i];
       avgATR /= 10;
       //--- Get current close price
       double closePrice = iClose(_Symbol, PERIOD_CURRENT, 0);
       //--- Check for valid close price
       if(MathAbs(closePrice) < 0.000001) {
          Print("Error: Invalid close price for ATR ratio. Using default: ", hiddenNeuronCount);
          return hiddenNeuronCount;
       }
       //--- Calculate ATR ratio
       double atrRatio = atrValues[0] / closePrice;
       //--- Compute new neuron count
       int newNeurons = MinHiddenNeurons + (int)((MaxHiddenNeurons - MinHiddenNeurons) * MathMin(atrRatio * 100, 1.0));
       //--- Return clamped neuron count
       return MathMax(MinHiddenNeurons, MathMin(newNeurons, MaxHiddenNeurons));
    }

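To adjust the number of hidden neurons to market volatility, we declare an array named "atrValues", configure it as a time series with ArraySetAsSeries, and use CopyBuffer to copy the ATR values of the 10 most recent bars from "atrIndicatorHandle" into it. If "CopyBuffer" returns fewer than 10 values, we log an error with "Print" and return the current "hiddenNeuronCount". Setting the array as a series matters: the most recent value is then at index 0, the start of the array, so "atrValues[0]" always refers to the latest bar.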

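We sum the ATR values of the 10 most recent bars in "atrValues" and divide by 10 to obtain the average, stored in "avgATR". We then use iClose to get the close price of the current symbol _Symbol on the current timeframe "PERIOD_CURRENT" and store it in "closePrice". If "closePrice" is close to 0, we log an error with "Print" and return the current "hiddenNeuronCount". Next, we compute the ATR ratio by dividing the latest ATR value "atrValues[0]" by "closePrice" (note that "avgATR" itself is not used in this ratio). The ratio is multiplied by 100 and capped at 1.0 with MathMin, and the result scales the span between "MinHiddenNeurons" and "MaxHiddenNeurons" to give "newNeurons"; in other words, the neuron count reaches its maximum once the ATR is 1% of the price. Finally, we clamp the result with MathMax and "MathMin" so that it stays within bounds and return it. We can now define the remaining training and update functions as follows: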
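A worked example makes the scaling concrete. The standalone C++ sketch below reproduces the arithmetic with hypothetical names; "fallback" stands in for the current hidden neuron count that the EA returns when the price is invalid.

```cpp
#include <algorithm>
#include <cmath>

// Map the ATR-to-price ratio onto [minNeurons, maxNeurons].
// The ratio is scaled by 100 and capped at 1.0, so the maximum is reached
// once ATR is 1% of the price. The fractional part is truncated.
int dynamicHiddenNeurons(double atr, double closePrice,
                         int minNeurons, int maxNeurons, int fallback) {
    if (std::fabs(closePrice) < 1e-6) return fallback;  // invalid price
    const double atrRatio = atr / closePrice;
    const double scale = std::min(atrRatio * 100.0, 1.0);
    const int neurons =
        minNeurons + static_cast<int>((maxNeurons - minNeurons) * scale);
    return std::max(minNeurons, std::min(neurons, maxNeurons));
}
```

With the default bounds of 10 and 50, an ATR of 0.0020 at a price of 1.2500 gives a ratio of 0.0016, a scale of 0.16, and 10 + (int)(40 * 0.16) = 10 + 6 = 16 neurons. An ATR of 0.05 at the same price gives a ratio of 4%, which saturates the scale at 1.0 and yields 50 neurons.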

    // Train network on historical data
    double CNeuralNetwork::TrainOnHistoricalData(TrainingData &data[]) {
       const int maxEpochs = 100;        //--- Maximum training epochs
       const double targetError = 0.01;  //--- Target error threshold
       double accuracy = 0;              //--- Training accuracy
       //--- Guard against an empty data set (avoids division by zero below)
       if(ArraySize(data) == 0) return 0.0;
       //--- Reset learning rate
       currentLearningRate = MinLearningRate;
       //--- Iterate through epochs
       for(int epoch = 0; epoch < maxEpochs; epoch++) {
          double totalError = 0;         //--- Total error for epoch
          int correctPredictions = 0;    //--- Count of correct predictions
          //--- Process each training sample
          for(int i = 0; i < ArraySize(data); i++) {
             //--- Check target array size
             if(ArraySize(data[i].targetValues) != outputNeuronCount) {
                Print("Error: Mismatch in targets size for training data at index ", i);
                continue;
             }
             //--- Set input values
             SetInput(data[i].inputValues);
             //--- Perform forward propagation
             ForwardPropagate();
             double error = 0;
             //--- Calculate error
             for(int j = 0; j < outputNeuronCount; j++)
                error += MathPow(data[i].targetValues[j] - outputLayer[j], 2);
             totalError += error;
             //--- Check prediction correctness
             if((outputLayer[0] > outputLayer[1] && data[i].targetValues[0] > data[i].targetValues[1]) ||
                (outputLayer[0] < outputLayer[1] && data[i].targetValues[0] < data[i].targetValues[1]))
                correctPredictions++;
             //--- Perform backpropagation
             Backpropagate(data[i].targetValues);
          }
          //--- Calculate accuracy
          accuracy = (double)correctPredictions / ArraySize(data);
          //--- Update training error
          trainingError = totalError / ArraySize(data);
          //--- Update history
          if(historyRecordCount < MAX_HISTORY_SIZE) {
             accuracyHistory[historyRecordCount] = accuracy;
             errorHistory[historyRecordCount] = trainingError;
             historyRecordCount++;
          }
          else {
             //--- Shift history arrays
             for(int i = 1; i < MAX_HISTORY_SIZE; i++) {
                accuracyHistory[i - 1] = accuracyHistory[i];
                errorHistory[i - 1] = errorHistory[i];
             }
             //--- Add new values
             accuracyHistory[MAX_HISTORY_SIZE - 1] = accuracy;
             errorHistory[MAX_HISTORY_SIZE - 1] = trainingError;
          }
          //--- Log error history update
          Print("Error history updated: ", errorHistory[historyRecordCount - 1]);
          //--- Adjust learning rate
          AdjustLearningRate();
          //--- Log progress every 10 epochs
          if(epoch % 10 == 0)
             Print("Epoch ", epoch, ": Error = ", trainingError, ", Accuracy = ", accuracy);
          //--- Check for early stopping
          if(trainingError < targetError && accuracy >= MinPredictionAccuracy)
             break;
       }
       //--- Return final accuracy
       return accuracy;
    }

    // Update network with recent data
    void CNeuralNetwork::UpdateNetworkWithRecentData() {
       const int recentBarCount = 10; //--- Number of recent bars to process
       TrainingData recentData[];
       //--- Collect recent training data
       if(!CollectTrainingData(recentData, recentBarCount))
          return;
       //--- Process each recent data sample
       for(int i = 0; i < ArraySize(recentData); i++) {
          //--- Set input values
          SetInput(recentData[i].inputValues);
          //--- Perform forward propagation
          ForwardPropagate();
          //--- Perform backpropagation
          Backpropagate(recentData[i].targetValues);
       }
    }

    // Get recent accuracy
    double CNeuralNetwork::GetRecentAccuracy() {
       //--- Check if history exists
       if(historyRecordCount == 0) return 0.0;
       //--- Return most recent accuracy
       return accuracyHistory[historyRecordCount - 1];
    }

    // Get recent error
    double CNeuralNetwork::GetRecentError() {
       //--- Check if history exists
       if(historyRecordCount == 0) return 0.0;
       //--- Return most recent error
       return errorHistory[historyRecordCount - 1];
    }

    // Check if retraining is needed
    bool CNeuralNetwork::ShouldRetrain() {
       //--- Check if enough history exists
       if(historyRecordCount < 2) return false;
       //--- Get recent metrics
       double recentAccuracy = GetRecentAccuracy();
       double recentError = GetRecentError();
       double prevError = errorHistory[historyRecordCount - 2];
       //--- Determine if retraining is needed
       return (recentAccuracy < MinPredictionAccuracy || recentError > prevError * 1.5);
    }

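Here we implement the network's training and update mechanisms. The "TrainOnHistoricalData" function trains the network on an array of "TrainingData" structures, with the maximum number of epochs "maxEpochs" set to 100 and the target error "targetError" set to 0.01, and resets "currentLearningRate" to "MinLearningRate" before it starts. In each epoch we iterate over "data", verify that the size of "data[i].targetValues" matches the number of output neurons, load "data[i].inputValues" with "SetInput", and compute the prediction with "ForwardPropagate". We use MathPow to accumulate the squared error between "outputLayer" and "data[i].targetValues", count a prediction in "correctPredictions" when the larger of the two outputs corresponds to the larger of the two targets, and then call "Backpropagate".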

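At the end of each epoch we update "accuracy" and "trainingError" and record them in "accuracyHistory" and "errorHistory" at position "historyRecordCount". Once the arrays are full, the entries are shifted down by one so that the oldest value is dropped and the newest is stored last. We log the update with "Print", call "AdjustLearningRate" to adapt the learning rate, and stop training early if "trainingError" is below "targetError" and "accuracy" is at least "MinPredictionAccuracy".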
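The append-then-shift history is a small fixed-capacity buffer. Here is a minimal standalone C++ sketch of the same behavior on a "std::vector"; "pushHistory" is a hypothetical helper, not part of the article's class.

```cpp
#include <cstddef>
#include <vector>

// Append `value` to `history`, keeping at most `capacity` entries.
// When the buffer is full, the oldest entry (front) is dropped first,
// so the newest value is always the last element.
void pushHistory(std::vector<double>& history, double value,
                 std::size_t capacity) {
    if (capacity == 0) return;  // nothing can be stored
    if (history.size() >= capacity)
        history.erase(history.begin());
    history.push_back(value);
}
```

With a capacity of 3, pushing 1, 2, 3, and 4 leaves {2, 3, 4}. Erasing from the front costs time proportional to the buffer size, which is negligible for a 10-entry history.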

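The "UpdateNetworkWithRecentData" function sets the number of recent bars "recentBarCount" to 10, fills "recentData" using the "CollectTrainingData" function, and runs "SetInput", "ForwardPropagate", and "Backpropagate" on each sample. The "GetRecentAccuracy" function returns the latest value in "accuracyHistory", or 0.0 if nothing has been recorded, and "GetRecentError" does the same for "errorHistory".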

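The "ShouldRetrain" function checks that "historyRecordCount" is at least 2, obtains the latest accuracy "recentAccuracy" and error "recentError" through "GetRecentAccuracy" and "GetRecentError", and returns true if the accuracy is below "MinPredictionAccuracy" or the error exceeds 1.5 times the previous value in "errorHistory". We can now create the class instance that the program will use: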
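The retraining test reduces to two comparisons on the tail of the history. The standalone C++ sketch below expresses it as a pure function over two vectors; the name "shouldRetrain" and the vector-based signature are assumptions for this illustration.

```cpp
#include <vector>

// Decide whether retraining is needed from the recorded history.
// Retrain when the latest accuracy is below minAccuracy, or when the latest
// error is more than 1.5 times the previous one. With fewer than two error
// records there is no trend to judge, so the answer is false.
bool shouldRetrain(const std::vector<double>& accuracyHistory,
                   const std::vector<double>& errorHistory,
                   double minAccuracy) {
    if (accuracyHistory.empty() || errorHistory.size() < 2) return false;
    const double recentAccuracy = accuracyHistory.back();
    const double recentError = errorHistory.back();
    const double prevError = errorHistory[errorHistory.size() - 2];
    return recentAccuracy < minAccuracy || recentError > prevError * 1.5;
}
```

For instance, with a minimum accuracy of 0.7, an accuracy of 0.75 with errors 0.20 then 0.21 needs no retraining, while either an accuracy of 0.6 or an error jump from 0.20 to 0.31 triggers it.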

    // Global neural network instance
    CNeuralNetwork *neuralNetwork; //--- Global neural network object

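By declaring "neuralNetwork" as a pointer to a "CNeuralNetwork" instance at global scope, we give the whole Expert Advisor (EA) a single point of access to the network's functionality: training, forward propagation, backpropagation, and adaptive learning rate adjustment.

The global "neuralNetwork" can then be used in the initialization, tick-handling, and deinitialization functions to manage the strategy's prediction and trading. Next, we define modular functions to prepare the inputs and collect the training data: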

    // Prepare inputs from market data
    void PrepareInputs(double &inputs[]) {
       //--- Resize inputs array if necessary
       if(ArraySize(inputs) != INPUT_NEURON_COUNT) ArrayResize(inputs, INPUT_NEURON_COUNT);
       double ma20Values[], ma50Values[], rsiValues[], atrValues[];
       //--- Set arrays as series
       ArraySetAsSeries(ma20Values, true);
       ArraySetAsSeries(ma50Values, true);
       ArraySetAsSeries(rsiValues, true);
       ArraySetAsSeries(atrValues, true);
       //--- Copy MA20 buffer
       if(CopyBuffer(ma20IndicatorHandle, 0, 0, 2, ma20Values) <= 0) {
          Print("Error: Failed to copy MA20 buffer. Error code: ", GetLastError());
          return;
       }
       //--- Copy MA50 buffer
       if(CopyBuffer(ma50IndicatorHandle, 0, 0, 2, ma50Values) <= 0) {
          Print("Error: Failed to copy MA50 buffer. Error code: ", GetLastError());
          return;
       }
       //--- Copy RSI buffer
       if(CopyBuffer(rsiIndicatorHandle, 0, 0, 2, rsiValues) <= 0) {
          Print("Error: Failed to copy RSI buffer. Error code: ", GetLastError());
          return;
       }
       //--- Copy ATR buffer
       if(CopyBuffer(atrIndicatorHandle, 0, 0, 2, atrValues) <= 0) {
          Print("Error: Failed to copy ATR buffer. Error code: ", GetLastError());
          return;
       }
       //--- Check array sizes
       if(ArraySize(ma20Values) < 2 || ArraySize(ma50Values) < 2 || ArraySize(rsiValues) < 2 || ArraySize(atrValues) < 2) {
          Print("Error: Insufficient data in indicator arrays");
          return;
       }
       //--- Get current market prices
       double closePrice = iClose(_Symbol, PERIOD_CURRENT, 0);
       double openPrice = iOpen(_Symbol, PERIOD_CURRENT, 0);
       double highPrice = iHigh(_Symbol, PERIOD_CURRENT, 0);
       double lowPrice = iLow(_Symbol, PERIOD_CURRENT, 0);
       //--- Calculate input features
       inputs[0] = (MathAbs(openPrice) > 0.000001) ? (closePrice - openPrice) / openPrice : 0;
       inputs[1] = (MathAbs(lowPrice) > 0.000001) ? (highPrice - lowPrice) / lowPrice : 0;
       inputs[2] = (MathAbs(ma20Values[0]) > 0.000001) ? (closePrice - ma20Values[0]) / ma20Values[0] : 0;
       inputs[3] = (MathAbs(ma50Values[0]) > 0.000001) ? (ma20Values[0] - ma50Values[0]) / ma50Values[0] : 0;
       inputs[4] = rsiValues[0] / 100.0;
       double highLowRange = highPrice - lowPrice;
       if(MathAbs(highLowRange) > 0.000001) {
          inputs[5] = (closePrice - lowPrice) / highLowRange;
          inputs[7] = MathAbs(closePrice - openPrice) / highLowRange;
          inputs[8] = (highPrice - closePrice) / highLowRange;
          inputs[9] = (closePrice - lowPrice) / highLowRange;
       }
       else {
          inputs[5] = 0;
          inputs[7] = 0;
          inputs[8] = 0;
          inputs[9] = 0;
       }
       inputs[6] = (MathAbs(closePrice) > 0.000001) ? atrValues[0] / closePrice : 0;
       //--- Log input preparation
       Print("Prepared inputs. Size: ", ArraySize(inputs));
    }

    // Collect training data
    bool CollectTrainingData(TrainingData &data[], int barCount) {
       //--- Check output neuron count
       if(OUTPUT_NEURON_COUNT != 2) {
          Print("Error: OUTPUT_NEURON_COUNT must be 2 for binary classification.");
          return false;
       }
       //--- Resize data array
       ArrayResize(data, barCount);
       double ma20Values[], ma50Values[], rsiValues[], atrValues[];
       //--- Set arrays as series
       ArraySetAsSeries(ma20Values, true);
       ArraySetAsSeries(ma50Values, true);
       ArraySetAsSeries(rsiValues, true);
       ArraySetAsSeries(atrValues, true);
       //--- Copy MA20 buffer
       if(CopyBuffer(ma20IndicatorHandle, 0, 0, barCount + 1, ma20Values) < barCount + 1) {
          Print("Error: Failed to copy MA20 buffer for training. Error code: ", GetLastError());
          return false;
       }
       //--- Copy MA50 buffer
       if(CopyBuffer(ma50IndicatorHandle, 0, 0, barCount + 1, ma50Values) < barCount + 1) {
          Print("Error: Failed to copy MA50 buffer for training. Error code: ", GetLastError());
          return false;
       }
       //--- Copy RSI buffer
       if(CopyBuffer(rsiIndicatorHandle, 0, 0, barCount + 1, rsiValues) < barCount + 1) {
          Print("Error: Failed to copy RSI buffer for training. Error code: ", GetLastError());
          return false;
       }
       //--- Copy ATR buffer
       if(CopyBuffer(atrIndicatorHandle, 0, 0, barCount + 1, atrValues) < barCount + 1) {
          Print("Error: Failed to copy ATR buffer for training. Error code: ", GetLastError());
          return false;
       }
       MqlRates priceData[];
       //--- Set rates array as series
       ArraySetAsSeries(priceData, true);
       //--- Copy price data
       if(CopyRates(_Symbol, PERIOD_CURRENT, 0, barCount + 1, priceData) < barCount + 1) {
          Print("Error: Failed to copy rates for training. Error code: ", GetLastError());
          return false;
       }
       //--- Process each bar
       for(int i = 0; i < barCount; i++) {
          //--- Resize input and target arrays
          ArrayResize(data[i].inputValues, INPUT_NEURON_COUNT);
          ArrayResize(data[i].targetValues, OUTPUT_NEURON_COUNT);
          //--- Get price data
          double closePrice = priceData[i].close;
          double openPrice = priceData[i].open;
          double highPrice = priceData[i].high;
          double lowPrice = priceData[i].low;
          double highLowRange = highPrice - lowPrice;
          //--- Calculate input features
          data[i].inputValues[0] = (MathAbs(openPrice) > 0.000001) ? (closePrice - openPrice) / openPrice : 0;
          data[i].inputValues[1] = (MathAbs(lowPrice) > 0.000001) ? (highPrice - lowPrice) / lowPrice : 0;
          data[i].inputValues[2] = (MathAbs(ma20Values[i]) > 0.000001) ? (closePrice - ma20Values[i]) / ma20Values[i] : 0;
          data[i].inputValues[3] = (MathAbs(ma50Values[i]) > 0.000001) ? (ma20Values[i] - ma50Values[i]) / ma50Values[i] : 0;
          data[i].inputValues[4] = rsiValues[i] / 100.0;
          if(MathAbs(highLowRange) > 0.000001) {
             data[i].inputValues[5] = (closePrice - lowPrice) / highLowRange;
             data[i].inputValues[7] = MathAbs(closePrice - openPrice) / highLowRange;
             data[i].inputValues[8] = (highPrice - closePrice) / highLowRange;
             data[i].inputValues[9] = (closePrice - lowPrice) / highLowRange;
          }
          else {
             //--- Zero the range-based features for flat bars, as PrepareInputs does
             data[i].inputValues[5] = 0;
             data[i].inputValues[7] = 0;
             data[i].inputValues[8] = 0;
             data[i].inputValues[9] = 0;
          }
          data[i].inputValues[6] = (MathAbs(closePrice) > 0.000001) ? atrValues[i] / closePrice : 0;
          //--- Set target values based on price movement
          //--- NOTE: priceData is series-ordered (index 0 = newest bar), so index i + 1
          //--- is the bar BEFORE bar i in time; verify that this labelling matches the
          //--- intended prediction target
          if(i < barCount - 1) {
             double futureClose = priceData[i + 1].close;
             double priceChange = futureClose - closePrice;
             if(priceChange > 0) {
                data[i].targetValues[0] = 1;
                data[i].targetValues[1] = 0;
             }
             else {
                data[i].targetValues[0] = 0;
                data[i].targetValues[1] = 1;
             }
          }
          else {
             data[i].targetValues[0] = 0;
             data[i].targetValues[1] = 0;
          }
       }
       //--- Return success
       return true;
    }

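At this stage, we handle data preprocessing by building the "PrepareInputs" function, which generates the input features for the neural network. We resize the "inputs" array with the ArrayResize function so that it matches the predefined "INPUT_NEURON_COUNT", then declare the "ma20Values", "ma50Values", "rsiValues", and "atrValues" arrays and set them as time series with the ArraySetAsSeries function. We use the CopyBuffer function to retrieve two bars of data from the indicator handles "ma20IndicatorHandle", "ma50IndicatorHandle", "rsiIndicatorHandle", and "atrIndicatorHandle"; on failure, we log the error with the "Print" function and exit.

We verify the array sizes with ArraySize, logging an error via "Print" if they are insufficient, and use iClose, "iOpen", "iHigh", and "iLow" to get the close, open, high, and low prices of the current symbol _Symbol on the PERIOD_CURRENT timeframe, storing them in "closePrice", "openPrice", "highPrice", and "lowPrice". We then compute the input features for "inputs": normalized price differences, MA deviations, the RSI scaled to the 0 to 1 range, the ATR relative to "closePrice", and range features based on "highLowRange". Every division is guarded by a MathAbs check so that a near-zero denominator yields 0 instead of a division error. Note that "inputs[9]" uses the same formula as "inputs[5]", so the two features carry identical values. On success, we log the result with "Print" and "ArraySize".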
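The guarded divisions described above can be sketched outside MetaTrader in plain C++. This is a minimal illustration under stated assumptions, not the article's code: the "Bar" struct and the "SafeRatio" and "CandleFeatures" names are hypothetical, and only the price-based features (indices 0, 1, 5, 7, and 8 of the input vector) are reproduced.

```cpp
#include <array>
#include <cmath>

// Guarded division: returns 0 when the denominator is (almost) zero,
// mirroring the MathAbs(x) > 0.000001 checks in the article's code.
double SafeRatio(double numerator, double denominator) {
    return (std::fabs(denominator) > 0.000001) ? numerator / denominator : 0.0;
}

// Hypothetical container for one bar's prices (not part of the article's code).
struct Bar {
    double open;
    double high;
    double low;
    double close;
};

// Builds the price-only features of one bar.
std::array<double, 5> CandleFeatures(const Bar &bar) {
    const double range = bar.high - bar.low;
    std::array<double, 5> features = {{
        SafeRatio(bar.close - bar.open, bar.open),          // relative candle body
        SafeRatio(range, bar.low),                          // relative high-low range
        SafeRatio(bar.close - bar.low, range),              // close position inside the range
        SafeRatio(std::fabs(bar.close - bar.open), range),  // body as a share of the range
        SafeRatio(bar.high - bar.close, range)              // distance from close to high
    }};
    return features;
}
```

For a flat bar whose high equals its low, every range-based feature falls back to 0, which is the behaviour the else branch of "PrepareInputs" implements.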

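Next, we create the "CollectTrainingData" function, which prepares the training data for "barCount" bars in an array of "TrainingData" structures. We verify that "OUTPUT_NEURON_COUNT" is 2, resize the "data" array with "ArrayResize", fetch the indicator data from the handles with "CopyBuffer", and fetch the price data into "priceData" with "CopyRates", logging an error with "Print" on failure. For each bar, we size "data[i].inputValues" and "data[i].targetValues" with "ArrayResize", compute the input features in the same way as in "PrepareInputs", and set the targets in "data[i].targetValues" from the price movement in "priceData", returning "true" on success. One thing to be aware of: because "priceData" is set as a series, index 0 is the newest bar, so "priceData[i + 1]" is the older neighbour of bar "i" rather than the bar that follows it in time. Next, we need to train the network on the data we have collected.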
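To make the labelling idea concrete, here is a small C++ sketch of one-hot "up" or "not up" targets. It is an illustration rather than the article's implementation: it assumes the closes are stored oldest-first (chronological order, unlike the series-indexed arrays above), and the "Target" alias and "MakeTargets" name are hypothetical.

```cpp
#include <array>
#include <cstddef>
#include <vector>

// One-hot target: {1, 0} means the next close is higher,
// {0, 1} means the next close is not higher.
using Target = std::array<double, 2>;

// 'closes' is assumed to be ordered oldest-first. The last bar has no
// successor, so it keeps the neutral target {0, 0}.
std::vector<Target> MakeTargets(const std::vector<double> &closes) {
    std::vector<Target> targets(closes.size(), Target{{0.0, 0.0}});
    for (std::size_t i = 0; i + 1 < closes.size(); ++i) {
        if (closes[i + 1] > closes[i]) {
            targets[i] = Target{{1.0, 0.0}};
        } else {
            targets[i] = Target{{0.0, 1.0}};
        }
    }
    return targets;
}
```

With chronological storage the "next" bar is simply index i + 1. With series-indexed arrays the same bar would sit at index i - 1, which is why the indexing direction matters when building targets.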

// Train the neural network
bool TrainNetwork() {
   //--- Log training start
   Print("Starting neural network training...");
   TrainingData trainingData[];
   //--- Collect training data
   if(!CollectTrainingData(trainingData, TrainingBarCount)) {
      Print("Failed to collect training data");
      return false;
   }
   //--- Train network
   double accuracy = neuralNetwork.TrainOnHistoricalData(trainingData);
   //--- Log training completion
   Print("Training completed. Final accuracy: ", accuracy);
   //--- Return training success
   return (accuracy >= MinPredictionAccuracy);
}

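We create the "TrainNetwork" function, which logs the start of training with the "Print" function and then declares "trainingData", an array of "TrainingData" structures that holds the input and target values. We call the "CollectTrainingData" function to fill "trainingData" with data from "TrainingBarCount" bars. If data collection fails, we log the error with "Print" and return "false".

We train the network with the "TrainOnHistoricalData" function of the "neuralNetwork" object, store the result in "accuracy", and log completion, including the "accuracy" value, with "Print". The function returns "true" only when "accuracy" is greater than or equal to "MinPredictionAccuracy", so that the network is used for trading only once it is sufficiently trained. Finally, we can create a function to validate the trade levels as follows: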
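The accuracy gate can be illustrated with a short C++ sketch. The accuracy definition used here, the share of samples whose stronger output matches the stronger target, is an assumption made for illustration; how "TrainOnHistoricalData" actually computes its return value is not shown in this part, and the names "DirectionalAccuracy" and "TrainingAccepted" are hypothetical.

```cpp
#include <array>
#include <cstddef>
#include <vector>

using Pair = std::array<double, 2>;

// Fraction of samples whose stronger output matches the stronger target.
// Assumed definition; the article's class may compute accuracy differently.
double DirectionalAccuracy(const std::vector<Pair> &outputs,
                           const std::vector<Pair> &targets) {
    if (outputs.empty() || outputs.size() != targets.size()) return 0.0;
    std::size_t hits = 0;
    for (std::size_t i = 0; i < outputs.size(); ++i) {
        const bool predictedUp = outputs[i][0] > outputs[i][1];
        const bool actualUp = targets[i][0] > targets[i][1];
        if (predictedUp == actualUp) ++hits;
    }
    return static_cast<double>(hits) / static_cast<double>(outputs.size());
}

// Mirrors the final comparison in TrainNetwork: accept the training run
// only when accuracy reaches the configured minimum.
bool TrainingAccepted(double accuracy, double minAccuracy) {
    return accuracy >= minAccuracy;
}
```

With three correct directions out of four samples the accuracy is 0.75, which would pass the default "MinPredictionAccuracy" of 0.7.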

// Validate Stop Loss and Take Profit levels
bool CheckStopLossTakeprofit(ENUM_ORDER_TYPE orderType, double price, double stopLoss, double takeProfit) {
   //--- Get minimum stop level
   double stopLevel = SymbolInfoInteger(_Symbol, SYMBOL_TRADE_STOPS_LEVEL) * _Point;
   //--- Validate buy order
   if(orderType == ORDER_TYPE_BUY) {
      //--- Check stop loss distance
      if(MathAbs(price - stopLoss) < stopLevel) {
         Print("Buy Stop Loss too close. Minimum distance: ", stopLevel);
         return false;
      }
      //--- Check take profit distance
      if(MathAbs(takeProfit - price) < stopLevel) {
         Print("Buy Take Profit too close. Minimum distance: ", stopLevel);
         return false;
      }
   }
   //--- Validate sell order
   else if(orderType == ORDER_TYPE_SELL) {
      //--- Check stop loss distance
      if(MathAbs(stopLoss - price) < stopLevel) {
         Print("Sell Stop Loss too close. Minimum distance: ", stopLevel);
         return false;
      }
      //--- Check take profit distance
      if(MathAbs(price - takeProfit) < stopLevel) {
         Print("Sell Take Profit too close. Minimum distance: ", stopLevel);
         return false;
      }
   }
   //--- Return validation success
   return true;
}

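Here, we simply create a boolean function that validates the trade levels, to avoid errors caused by broker restrictions. It checks only the distance between the price and each level (via MathAbs), not which side of the price the level lies on. We can now initialize the program in the OnInit event handler, which means initializing the indicators and an instance of the neural network class that does the heavy lifting: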
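The distance rule can be sketched in plain C++ as follows. This is a simplified illustration: the stops level and point size are passed in as ordinary parameters instead of being read from the terminal, and the "StopsFarEnough" name is hypothetical.

```cpp
#include <cmath>

// Returns true when both the stop loss and the take profit are at least
// stopsLevelPoints * pointSize away from the entry price. Only distance is
// checked, as in the article's function; the side of the price is not.
bool StopsFarEnough(double price, double stopLoss, double takeProfit,
                    long stopsLevelPoints, double pointSize) {
    const double minDistance = static_cast<double>(stopsLevelPoints) * pointSize;
    return std::fabs(price - stopLoss) >= minDistance &&
           std::fabs(takeProfit - price) >= minDistance;
}
```

For example, with a price of 1.1000, levels 100 pips away, and a point size of 0.0001, a stops level of 50 points passes while a stops level of 200 points fails.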

// Expert initialization function
int OnInit() {
   //--- Initialize 20-period MA indicator
   ma20IndicatorHandle = iMA(_Symbol, PERIOD_CURRENT, 20, 0, MODE_SMA, PRICE_CLOSE);
   //--- Initialize 50-period MA indicator
   ma50IndicatorHandle = iMA(_Symbol, PERIOD_CURRENT, 50, 0, MODE_SMA, PRICE_CLOSE);
   //--- Initialize RSI indicator
   rsiIndicatorHandle = iRSI(_Symbol, PERIOD_CURRENT, 14, PRICE_CLOSE);
   //--- Initialize ATR indicator
   atrIndicatorHandle = iATR(_Symbol, PERIOD_CURRENT, 14);
   //--- Check MA20 handle
   if(ma20IndicatorHandle == INVALID_HANDLE)
      Print("Error: Failed to initialize MA20 handle. Error code: ", GetLastError());
   //--- Check MA50 handle
   if(ma50IndicatorHandle == INVALID_HANDLE)
      Print("Error: Failed to initialize MA50 handle. Error code: ", GetLastError());
   //--- Check RSI handle
   if(rsiIndicatorHandle == INVALID_HANDLE)
      Print("Error: Failed to initialize RSI handle. Error code: ", GetLastError());
   //--- Check ATR handle
   if(atrIndicatorHandle == INVALID_HANDLE)
      Print("Error: Failed to initialize ATR handle. Error code: ", GetLastError());
   //--- Check for any invalid handles
   if(ma20IndicatorHandle == INVALID_HANDLE || ma50IndicatorHandle == INVALID_HANDLE ||
      rsiIndicatorHandle == INVALID_HANDLE || atrIndicatorHandle == INVALID_HANDLE) {
      Print("Error initializing indicators");
      return INIT_FAILED;
   }
   //--- Create neural network instance
   neuralNetwork = new CNeuralNetwork(INPUT_NEURON_COUNT, MinHiddenNeurons, OUTPUT_NEURON_COUNT);
   //--- Check neural network creation
   if(neuralNetwork == NULL) {
      Print("Failed to create neural network");
      return INIT_FAILED;
   }
   //--- Log initialization
   Print("Initializing neural network...");
   //--- Return success
   return(INIT_SUCCEEDED);
}

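We implement the initialization logic in the OnInit function. We use the iMA function to initialize "ma20IndicatorHandle" and "ma50IndicatorHandle", which compute the 20-period and 50-period simple moving averages of the current symbol "_Symbol" on the PERIOD_CURRENT timeframe using "PRICE_CLOSE". Likewise, we use the "iRSI" and iATR functions to set "rsiIndicatorHandle" and "atrIndicatorHandle" for the 14-period RSI and ATR. Each handle is checked against "INVALID_HANDLE"; if initialization failed, we log the error with the "Print" function together with the code from "GetLastError", and if any indicator failed, we return INIT_FAILED.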

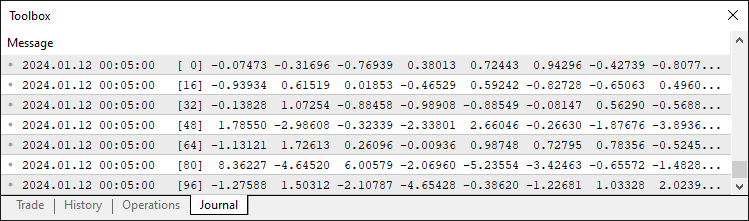

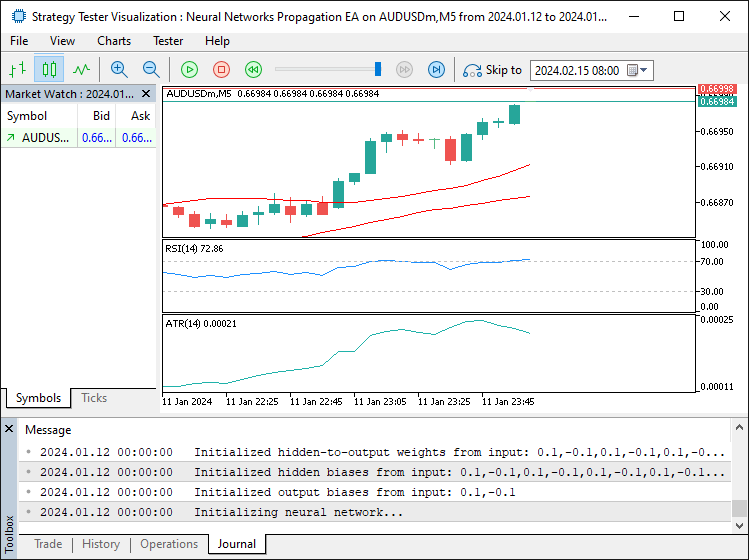

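We create a new instance of the "CNeuralNetwork" class for "neuralNetwork", passing "INPUT_NEURON_COUNT", "MinHiddenNeurons", and "OUTPUT_NEURON_COUNT" to configure the network structure. If the instance could not be created (the pointer is "NULL"), we log the error with the "Print" function and return "INIT_FAILED". Finally, we log an initialization message with "Print" and return INIT_SUCCEEDED, confirming that the Expert Advisor is configured and ready to trade. When we run the program, we get the following output: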

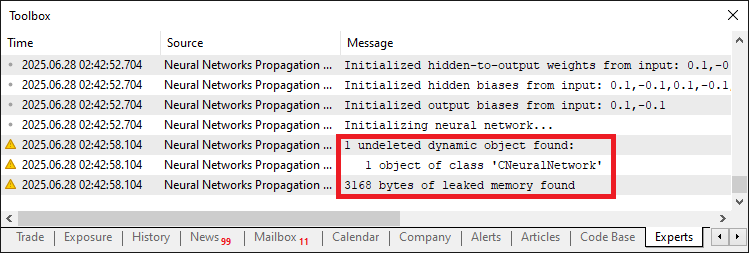

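From the image, we can see that the program initialized successfully. Since we created an instance of the neural network, we need to delete it when the program is removed in order to avoid memory leaks. Here is the logic we use for that: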

// Expert deinitialization function
void OnDeinit(const int reason) {
   //--- Release MA20 indicator handle
   if(ma20IndicatorHandle != INVALID_HANDLE) IndicatorRelease(ma20IndicatorHandle);
   //--- Release MA50 indicator handle
   if(ma50IndicatorHandle != INVALID_HANDLE) IndicatorRelease(ma50IndicatorHandle);
   //--- Release RSI indicator handle
   if(rsiIndicatorHandle != INVALID_HANDLE) IndicatorRelease(rsiIndicatorHandle);
   //--- Release ATR indicator handle
   if(atrIndicatorHandle != INVALID_HANDLE) IndicatorRelease(atrIndicatorHandle);
   //--- Delete neural network instance
   if(neuralNetwork != NULL) delete neuralNetwork;
   //--- Log deinitialization
   //--- Note: 'reason' holds a REASON_* deinitialization code, so the ENUM_INIT_RETCODE cast may print an unrelated label
   Print("Expert Advisor deinitialized - ", EnumToString((ENUM_INIT_RETCODE)reason));
}

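We implement resource cleanup in the OnDeinit function. Each of the indicator handles "ma20IndicatorHandle", "ma50IndicatorHandle", "rsiIndicatorHandle", and "atrIndicatorHandle" that is not "INVALID_HANDLE" is released with the IndicatorRelease function, which frees the MA, RSI, and ATR indicators. We then check that "neuralNetwork" is not "NULL" and, if it exists, free the "CNeuralNetwork" instance with the "delete" operator to clean up the network's memory. Finally, we log the deinitialization with the "Print" function combined with EnumToString. Keep in mind that "reason" carries one of the REASON_* deinitialization codes, so casting it to ENUM_INIT_RETCODE can print a label that does not describe the actual reason; printing the numeric code is a safer alternative. Failing to delete the class instance results in a memory leak, as in the example below:

With the memory leak taken care of, we can implement the core data collection, training, and live trading logic in the OnTick event handler.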

// Expert tick function
void OnTick() {
   static datetime lastBarTime = 0; //--- Track last processed bar time
   //--- Get current bar time
   datetime currentBarTime = iTime(_Symbol, PERIOD_CURRENT, 0);
   //--- Skip if same bar
   if(lastBarTime == currentBarTime) return;
   //--- Update last bar time
   lastBarTime = currentBarTime;
   //--- Calculate dynamic neuron count
   int newNeuronCount = (int)neuralNetwork.CalculateDynamicNeurons();
   //--- Resize network if necessary
   if(newNeuronCount != neuralNetwork.GetHiddenNeurons())
      neuralNetwork.ResizeNetwork(newNeuronCount);
   //--- Check if retraining is needed
   if(TimeCurrent() - iTime(_Symbol, PERIOD_CURRENT, TrainingBarCount) >= 12 * 3600 || neuralNetwork.ShouldRetrain()) {
      //--- Log training start
      Print("Starting network training...");
      //--- Train network
      if(!TrainNetwork()) {
         Print("Training failed or insufficient accuracy");
         return;
      }
   }
   //--- Update network with recent data
   neuralNetwork.UpdateNetworkWithRecentData();
   //--- Check for open positions
   if(PositionsTotal() > 0) {
      //--- Iterate through positions
      for(int i = PositionsTotal() - 1; i >= 0; i--) {
         //--- Skip if position is for current symbol
         if(PositionGetSymbol(i) == _Symbol) return;
      }
   }
   //--- Prepare input data
   double currentInputs[];
   ArrayResize(currentInputs, INPUT_NEURON_COUNT);
   PrepareInputs(currentInputs);
   //--- Verify input array size
   if(ArraySize(currentInputs) != INPUT_NEURON_COUNT) {
      Print("Error: Inputs array not properly initialized. Size: ", ArraySize(currentInputs));
      return;
   }
   //--- Set network inputs
   neuralNetwork.SetInput(currentInputs);
   //--- Perform forward propagation
   neuralNetwork.ForwardPropagate();
   double outputValues[];
   //--- Resize output array
   ArrayResize(outputValues, OUTPUT_NEURON_COUNT);
   //--- Get network outputs
   neuralNetwork.GetOutput(outputValues);
   //--- Verify output array size
   if(ArraySize(outputValues) != OUTPUT_NEURON_COUNT) {
      Print("Error: Outputs array not properly initialized. Size: ", ArraySize(outputValues));
      return;
   }
   //--- Get market prices
   double askPrice = SymbolInfoDouble(_Symbol, SYMBOL_ASK);
   double bidPrice = SymbolInfoDouble(_Symbol, SYMBOL_BID);
   //--- Calculate stop loss and take profit levels
   double buyStopLoss = NormalizeDouble(askPrice - StopLossPoints * _Point, _Digits);
   double buyTakeProfit = NormalizeDouble(askPrice + TakeProfitPoints * _Point, _Digits);
   double sellStopLoss = NormalizeDouble(bidPrice + StopLossPoints * _Point, _Digits);
   double sellTakeProfit = NormalizeDouble(bidPrice - TakeProfitPoints * _Point, _Digits);
   //--- Validate stop loss and take profit
   if(!CheckStopLossTakeprofit(ORDER_TYPE_BUY, askPrice, buyStopLoss, buyTakeProfit) ||
      !CheckStopLossTakeprofit(ORDER_TYPE_SELL, bidPrice, sellStopLoss, sellTakeProfit)) {
      return;
   }
   // Trading logic
   const double CONFIDENCE_THRESHOLD = 0.8; //--- Confidence threshold for trading
   //--- Check for buy signal
   if(outputValues[0] > CONFIDENCE_THRESHOLD && outputValues[1] < (1 - CONFIDENCE_THRESHOLD)) {
      //--- Set trade magic number
      tradeObject.SetExpertMagicNumber(123456);
      //--- Place buy order
      if(tradeObject.Buy(LotSize, _Symbol, askPrice, buyStopLoss, buyTakeProfit, "Neural Buy")) {
         //--- Log successful buy order
         Print("Buy order placed - Signal Strength: ", outputValues[0]);
      }
      else {
         //--- Log buy order failure
         Print("Buy order failed. Error: ", GetLastError());
      }
   }
   //--- Check for sell signal
   else if(outputValues[0] < (1 - CONFIDENCE_THRESHOLD) && outputValues[1] > CONFIDENCE_THRESHOLD) {
      //--- Set trade magic number
      tradeObject.SetExpertMagicNumber(123456);
      //--- Place sell order
      if(tradeObject.Sell(LotSize, _Symbol, bidPrice, sellStopLoss, sellTakeProfit, "Neural Sell")) {
         //--- Log successful sell order
         Print("Sell order placed - Signal Strength: ", outputValues[1]);
      }
      else {
         //--- Log sell order failure
         Print("Sell order failed. Error: ", GetLastError());
      }
   }
}
//+------------------------------------------------------------------+

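Here, we implement the core trading logic of the neural network strategy in the OnTick function. We declare the "lastBarTime" variable to remember the time of the last processed bar and use the iTime function to get "currentBarTime", the time of the current bar for "_Symbol" on PERIOD_CURRENT. If the time has not changed, we exit immediately, so that each new bar is processed only once. Otherwise, we update "lastBarTime" to the current bar time and compute the optimal neuron count "newNeuronCount" with the "CalculateDynamicNeurons" function. If "newNeuronCount" differs from the current hidden neuron count returned by "GetHiddenNeurons", we call the "ResizeNetwork" function to adjust the network structure.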
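The once-per-bar gate is a small, reusable pattern. The following C++ sketch shows the idea in isolation; the "NewBarDetector" class is hypothetical and takes the bar open time as a parameter instead of calling iTime.

```cpp
#include <ctime>

// Remembers the open time of the last processed bar and reports a new bar
// exactly once per distinct open time.
class NewBarDetector {
public:
    bool IsNewBar(std::time_t currentBarOpenTime) {
        if (currentBarOpenTime == lastBarOpenTime_) return false;
        lastBarOpenTime_ = currentBarOpenTime;
        return true;
    }

private:
    std::time_t lastBarOpenTime_ = 0;  // 0 means no bar has been processed yet
};
```

Calling "IsNewBar" repeatedly with the same open time returns true only on the first call, so the expensive per-bar work (resizing, retraining, and signal evaluation) does not run on every tick.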

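To decide whether retraining is needed, we check whether TimeCurrent minus the time of the bar "TrainingBarCount" bars back (obtained with "iTime") is at least 12 hours, or whether the "ShouldRetrain" function returns true. If so, we call the "TrainNetwork" function and exit if it fails. Note that with the default "TrainingBarCount" of 1000, that bar is at least 1000 minutes (about 16.7 hours) old even on the M1 timeframe, so the time condition holds on every new bar. We then call the "UpdateNetworkWithRecentData" function to refine the network. If PositionsTotal reports open positions, we use "PositionGetSymbol" to check whether any of them belongs to the current symbol _Symbol and skip trading if so. We declare the dynamic array "currentInputs", size it to "INPUT_NEURON_COUNT" with "ArrayResize", fill it by calling the "PrepareInputs" function, and verify its size with ArraySize, logging an error with "Print" on failure.

We load "currentInputs" with the "SetInput" function, call the "ForwardPropagate" function to generate the prediction, and read "outputValues" with the "GetOutput" function after sizing the array to "OUTPUT_NEURON_COUNT" with "ArrayResize"; if the size is invalid, we log an error with "Print". We get "askPrice" and "bidPrice" with SymbolInfoDouble, compute "buyStopLoss", "buyTakeProfit", "sellStopLoss", and "sellTakeProfit" using NormalizeDouble together with "StopLossPoints", "TakeProfitPoints", "_Point", and "_Digits", and validate them with the "CheckStopLossTakeprofit" function.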
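The stop loss and take profit arithmetic can be sketched in plain C++ as follows. This is an illustration under stated assumptions: "RoundToDigits" is a simple stand-in that is only similar in spirit to NormalizeDouble, and the "Levels" struct and function names are hypothetical.

```cpp
#include <cmath>

// Hypothetical pair of protective levels for one order.
struct Levels {
    double stopLoss;
    double takeProfit;
};

// Rounds a price to the given number of decimal places.
double RoundToDigits(double value, int digits) {
    const double scale = std::pow(10.0, digits);
    return std::round(value * scale) / scale;
}

// Buy orders open at the ask: stop loss below, take profit above.
Levels BuyLevels(double ask, int stopLossPoints, int takeProfitPoints,
                 double point, int digits) {
    Levels levels;
    levels.stopLoss = RoundToDigits(ask - stopLossPoints * point, digits);
    levels.takeProfit = RoundToDigits(ask + takeProfitPoints * point, digits);
    return levels;
}

// Sell orders open at the bid: stop loss above, take profit below.
Levels SellLevels(double bid, int stopLossPoints, int takeProfitPoints,
                  double point, int digits) {
    Levels levels;
    levels.stopLoss = RoundToDigits(bid + stopLossPoints * point, digits);
    levels.takeProfit = RoundToDigits(bid - takeProfitPoints * point, digits);
    return levels;
}
```

With an ask of 1.10000, 100 points on each side, and a point size of 0.00001, the buy stop loss lands at about 1.09900 and the take profit at about 1.10100.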

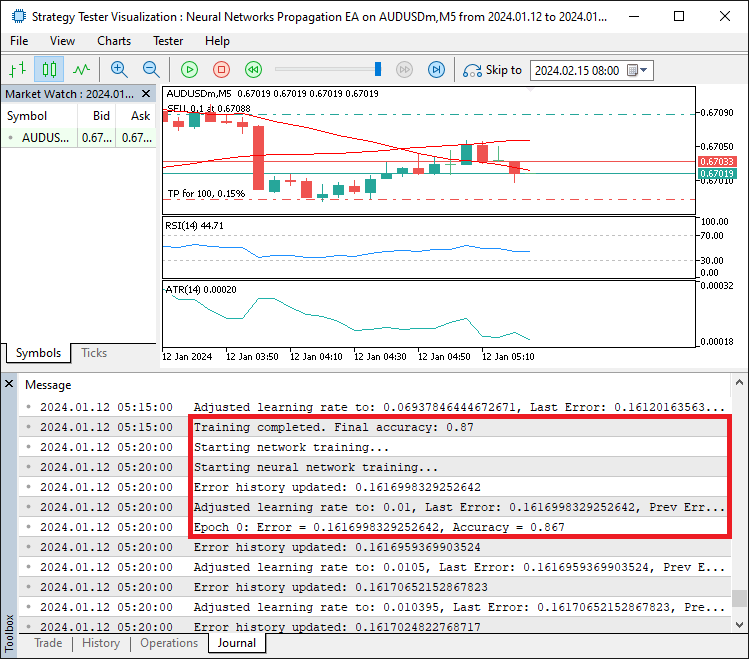

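For trading, we set "CONFIDENCE_THRESHOLD" to 0.8. If "outputValues[0]" exceeds the threshold and "outputValues[1]" is below its complement (1 minus the threshold), we set the magic number with "SetExpertMagicNumber", call the "tradeObject.Buy" function to open a buy position, and log success or failure with "Print" and "GetLastError". The sell signal is handled the same way with the "tradeObject.Sell" function, which keeps trade execution robust. After compiling, we obtain the output discussed below.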
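The confidence rule from this paragraph can be isolated in a few lines of C++. The "Signal" enum and "DecideSignal" function are hypothetical names used for illustration; the two comparisons follow the conditions in the OnTick code.

```cpp
// Possible outcomes of evaluating the two network outputs.
enum class Signal { None, Buy, Sell };

// upScore corresponds to outputValues[0], downScore to outputValues[1].
// A trade is signalled only when one output is above the threshold and
// the other is below its complement (1 - threshold).
Signal DecideSignal(double upScore, double downScore, double threshold) {
    if (upScore > threshold && downScore < 1.0 - threshold) return Signal::Buy;
    if (upScore < 1.0 - threshold && downScore > threshold) return Signal::Sell;
    return Signal::None;
}
```

Requiring both conditions means that ambiguous outputs, such as 0.9 and 0.5, produce no trade even though one output is above the threshold.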

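From the image, we can see that we train the neural network to obtain the errors, propagate them in each training epoch, and then adjust the learning rate according to the resulting accuracy. What remains is backtesting the program, which is covered in the next section.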
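One simple way to express this kind of adjustment is sketched below in C++. It is not the article's implementation: the 5% step sizes and the "AdjustRate" name are assumptions chosen for illustration, and the bounds play the role of the "MinLearningRate" and "MaxLearningRate" inputs.

```cpp
#include <algorithm>

// Hypothetical rule: grow the learning rate by 5% when accuracy improved
// since the previous epoch, shrink it by 5% otherwise, and keep the result
// inside [minRate, maxRate].
double AdjustRate(double rate, double accuracy, double previousAccuracy,
                  double minRate, double maxRate) {
    const double factor = (accuracy > previousAccuracy) ? 1.05 : 0.95;
    const double adjusted = rate * factor;
    return std::max(minRate, std::min(maxRate, adjusted));
}
```

Clamping matters at the edges: a rate already at the maximum stays there when accuracy improves, and a rate at the minimum is not pushed lower when accuracy drops.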

Testing and Optimizing Learning Rate Adjustments

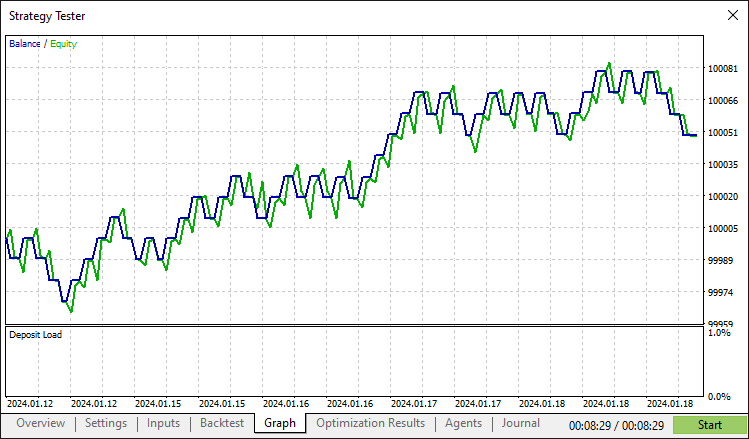

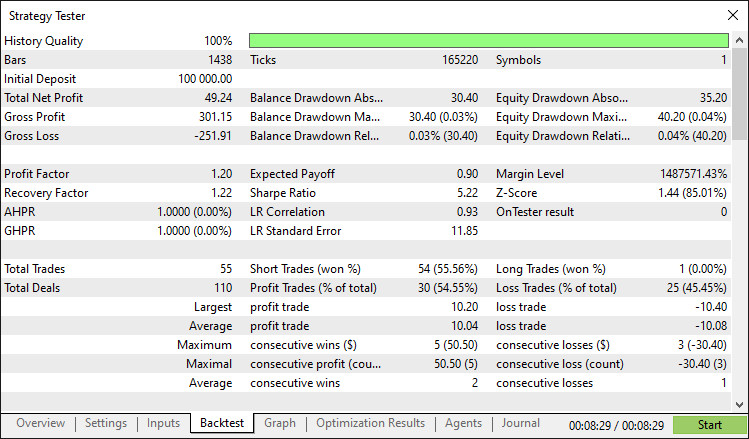

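After thorough backtesting, we have the following results:

Backtest graph:

Backtest report:

Conclusion

In conclusion, we have developed an MQL5 program that implements a neural network trading strategy with an adaptive learning rate. It uses the "CNeuralNetwork" class to process market indicators and execute trades, adjusting the learning speed and network size dynamically. With modular components such as the "TrainingData" structure and the "AdjustLearningRate" and "TrainNetwork" functions, the system provides a flexible framework. You can enhance it by fine-tuning its parameters or integrating additional market indicators to suit your trading preferences.

Disclaimer: This article is for educational purposes only. Trading involves significant financial risk, and sharp market volatility may result in losses. Thorough backtesting and strict risk management are essential before deploying this program in live markets.

By building on the concepts and implementation presented here, you can optimize this neural network trading system or adapt its architecture to create new strategies as you continue your algorithmic trading journey. Happy trading!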

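Translated from English by MetaQuotes Ltd.

Original article: https://www.mql5.com/en/articles/18660

Attention: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.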
