Neural networks made easy (Part 47): Continuous action space

In this article, we expand the range of tasks of our agent. The training process will include some aspects of money and risk management, which are an integral part of any trading strategy.

Neural Networks in Trading: Enhancing Transformer Efficiency by Reducing Sharpness (Final Part)

SAMformer offers a solution to the key drawbacks of Transformer models in long-term time series forecasting, such as training complexity and poor generalization on small datasets. Its shallow architecture and sharpness-aware optimization help avoid suboptimal local minima. In this article, we will continue to implement approaches using MQL5 and evaluate their practical value.

Trading Insights Through Volume: Moving Beyond OHLC Charts

Algorithmic trading system that combines volume analysis with machine learning techniques, specifically LSTM neural networks. Unlike traditional trading approaches that primarily focus on price movements, this system emphasizes volume patterns and their derivatives to predict market movements. The methodology incorporates three main components: volume derivatives analysis (first and second derivatives), LSTM predictions for volume patterns, and traditional technical indicators.

Neural Networks in Trading: A Multi-Agent Self-Adaptive Model (Final Part)

In the previous article, we introduced the multi-agent self-adaptive framework MASA, which combines reinforcement learning approaches and self-adaptive strategies, providing a harmonious balance between profitability and risk in turbulent market conditions. We have built the functionality of individual agents within this framework. In this article, we will continue the work we started, bringing it to its logical conclusion.

Neural Networks in Trading: Using Language Models for Time Series Forecasting

We continue to study time series forecasting models. In this article, we get acquainted with a complex algorithm built on the use of a pre-trained language model.

Category Theory in MQL5 (Part 20): A detour to Self-Attention and the Transformer

We digress in our series by pondering at part of the algorithm to chatGPT. Are there any similarities or concepts borrowed from natural transformations? We attempt to answer these and other questions in a fun piece, with our code in a signal class format.

Population optimization algorithms: Nelder–Mead, or simplex search (NM) method

The article presents a complete exploration of the Nelder-Mead method, explaining how the simplex (function parameter space) is modified and rearranged at each iteration to achieve an optimal solution, and describes how the method can be improved.

Neural Networks in Trading: Transformer with Relative Encoding

Self-supervised learning can be an effective way to analyze large amounts of unlabeled data. The efficiency is provided by the adaptation of models to the specific features of financial markets, which helps improve the effectiveness of traditional methods. This article introduces an alternative attention mechanism that takes into account the relative dependencies and relationships between inputs.

Gain An Edge Over Any Market (Part II): Forecasting Technical Indicators

Did you know that we can gain more accuracy forecasting certain technical indicators than predicting the underlying price of a traded symbol? Join us to explore how to leverage this insight for better trading strategies.

Billiards Optimization Algorithm (BOA)

The BOA method is inspired by the classic game of billiards and simulates the search for optimal solutions as a game with balls trying to fall into pockets representing the best results. In this article, we will consider the basics of BOA, its mathematical model, and its efficiency in solving various optimization problems.

Reimagining Classic Strategies (Part III): Forecasting Higher Highs And Lower Lows

In this series article, we will empirically analyze classic trading strategies to see if we can improve them using AI. In today's discussion, we tried to predict higher highs and lower lows using the Linear Discriminant Analysis model.

Data Science and ML (Part 29): Essential Tips for Selecting the Best Forex Data for AI Training Purposes

In this article, we dive deep into the crucial aspects of choosing the most relevant and high-quality Forex data to enhance the performance of AI models.

Using association rules in Forex data analysis

How to apply predictive rules of supermarket retail analytics to the real Forex market? How are purchases of cookies, milk and bread related to stock exchange transactions? The article discusses an innovative approach to algorithmic trading based on the use of association rules.

Reimagining Classic Strategies (Part VI): Multiple Time-Frame Analysis

In this series of articles, we revisit classic strategies to see if we can improve them using AI. In today's article, we will examine the popular strategy of multiple time-frame analysis to judge if the strategy would be enhanced with AI.

Category Theory in MQL5 (Part 2)

Category Theory is a diverse and expanding branch of Mathematics which as of yet is relatively uncovered in the MQL5 community. These series of articles look to introduce and examine some of its concepts with the overall goal of establishing an open library that attracts comments and discussion while hopefully furthering the use of this remarkable field in Traders' strategy development.

Population optimization algorithms: Saplings Sowing and Growing up (SSG)

Saplings Sowing and Growing up (SSG) algorithm is inspired by one of the most resilient organisms on the planet demonstrating outstanding capability for survival in a wide variety of conditions.

Integrate Your Own LLM into EA (Part 4): Training Your Own LLM with GPU

With the rapid development of artificial intelligence today, language models (LLMs) are an important part of artificial intelligence, so we should think about how to integrate powerful LLMs into our algorithmic trading. For most people, it is difficult to fine-tune these powerful models according to their needs, deploy them locally, and then apply them to algorithmic trading. This series of articles will take a step-by-step approach to achieve this goal.

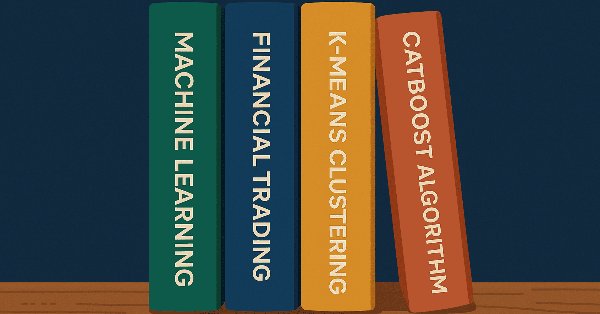

Quantization in machine learning (Part 1): Theory, sample code, analysis of implementation in CatBoost

The article considers the theoretical application of quantization in the construction of tree models and showcases the implemented quantization methods in CatBoost. No complex mathematical equations are used.

ARIMA Forecasting Indicator in MQL5

In this article we are implementing ARIMA forecasting indicator in MQL5. It examines how the ARIMA model generates forecasts, its applicability to the Forex market and the stock market in general. It also explains what AR autoregression is, how autoregressive models are used for forecasting, and how the autoregression mechanism works.

Neural Networks Made Easy (Part 92): Adaptive Forecasting in Frequency and Time Domains

The authors of the FreDF method experimentally confirmed the advantage of combined forecasting in the frequency and time domains. However, the use of the weight hyperparameter is not optimal for non-stationary time series. In this article, we will get acquainted with the method of adaptive combination of forecasts in frequency and time domains.

Neural Networks in Trading: Dual Clustering of Multivariate Time Series (Final Part)

We continue to implement approaches proposed vy the authors of the DUET framework, which offers an innovative approach to time series analysis, combining temporal and channel clustering to uncover hidden patterns in the analyzed data.

Self Optimizing Expert Advisor With MQL5 And Python (Part IV): Stacking Models

Today, we will demonstrate how you can build AI-powered trading applications capable of learning from their own mistakes. We will demonstrate a technique known as stacking, whereby we use 2 models to make 1 prediction. The first model is typically a weaker learner, and the second model is typically a more powerful model that learns the residuals of our weaker learner. Our goal is to create an ensemble of models, to hopefully attain higher accuracy.

Build a Remote Forex Risk Management System in Python

We are making a remote professional risk manager for Forex in Python, deploying it on the server step by step. In the course of the article, we will understand how to programmatically manage Forex risks, and how not to waste a Forex deposit any more.

Population optimization algorithms: Bat algorithm (BA)

In this article, I will consider the Bat Algorithm (BA), which shows good convergence on smooth functions.

Neural Networks in Trading: Two-Dimensional Connection Space Models (Final Part)

We continue to explore the innovative Chimera framework – a two-dimensional state-space model that uses neural network technologies to analyze multidimensional time series. This method provides high forecasting accuracy with low computational cost.

Neural networks made easy (Part 34): Fully Parameterized Quantile Function

We continue studying distributed Q-learning algorithms. In previous articles, we have considered distributed and quantile Q-learning algorithms. In the first algorithm, we trained the probabilities of given ranges of values. In the second algorithm, we trained ranges with a given probability. In both of them, we used a priori knowledge of one distribution and trained another one. In this article, we will consider an algorithm which allows the model to train for both distributions.

Neural Networks in Trading: Multi-Task Learning Based on the ResNeXt Model

A multi-task learning framework based on ResNeXt optimizes the analysis of financial data, taking into account its high dimensionality, nonlinearity, and time dependencies. The use of group convolution and specialized heads allows the model to effectively extract key features from the input data.

Neural networks made easy (Part 80): Graph Transformer Generative Adversarial Model (GTGAN)

In this article, I will get acquainted with the GTGAN algorithm, which was introduced in January 2024 to solve complex problems of generation architectural layouts with graph constraints.

Atomic Orbital Search (AOS) algorithm: Modification

In the second part of the article, we will continue developing a modified version of the AOS (Atomic Orbital Search) algorithm focusing on specific operators to improve its efficiency and adaptability. After analyzing the fundamentals and mechanics of the algorithm, we will discuss ideas for improving its performance and the ability to analyze complex solution spaces, proposing new approaches to extend its functionality as an optimization tool.

Integrate Your Own LLM into EA (Part 3): Training Your Own LLM with CPU

With the rapid development of artificial intelligence today, language models (LLMs) are an important part of artificial intelligence, so we should think about how to integrate powerful LLMs into our algorithmic trading. For most people, it is difficult to fine-tune these powerful models according to their needs, deploy them locally, and then apply them to algorithmic trading. This series of articles will take a step-by-step approach to achieve this goal.

Neural Networks in Trading: State Space Models

A large number of the models we have reviewed so far are based on the Transformer architecture. However, they may be inefficient when dealing with long sequences. And in this article, we will get acquainted with an alternative direction of time series forecasting based on state space models.

Neural Networks Made Easy (Part 84): Reversible Normalization (RevIN)

We already know that pre-processing of the input data plays a major role in the stability of model training. To process "raw" input data online, we often use a batch normalization layer. But sometimes we need a reverse procedure. In this article, we discuss one of the possible approaches to solving this problem.

Brain Storm Optimization algorithm (Part II): Multimodality

In the second part of the article, we will move on to the practical implementation of the BSO algorithm, conduct tests on test functions and compare the efficiency of BSO with other optimization methods.

MQL5 Wizard Techniques you should know (Part 61): Using Patterns of ADX and CCI with Supervised Learning

The ADX Oscillator and CCI oscillator are trend following and momentum indicators that can be paired when developing an Expert Advisor. We look at how this can be systemized by using all the 3 main training modes of Machine Learning. Wizard Assembled Expert Advisors allow us to evaluate the patterns presented by these two indicators, and we start by looking at how Supervised-Learning can be applied with these Patterns.

MQL5 Wizard Techniques you should know (Part 64): Using Patterns of DeMarker and Envelope Channels with the White-Noise Kernel

The DeMarker Oscillator and the Envelopes' indicator are momentum and support/ resistance tools that can be paired when developing an Expert Advisor. We continue from our last article that introduced these pair of indicators by adding machine learning to the mix. We are using a recurrent neural network that uses the white-noise kernel to process vectorized signals from these two indicators. This is done in a custom signal class file that works with the MQL5 wizard to assemble an Expert Advisor.

Neural Networks in Trading: Practical Results of the TEMPO Method

We continue our acquaintance with the TEMPO method. In this article we will evaluate the actual effectiveness of the proposed approaches on real historical data.

Algorithmic Trading Strategies: AI and Its Road to Golden Pinnacles

This article demonstrates an approach to creating trading strategies for gold using machine learning. Considering the proposed approach to the analysis and forecasting of time series from different angles, it is possible to determine its advantages and disadvantages in comparison with other ways of creating trading systems which are based solely on the analysis and forecasting of financial time series.

Price Action Analysis Toolkit Development (Part 34): Turning Raw Market Data into Predictive Models Using an Advanced Ingestion Pipeline

Have you ever missed a sudden market spike or been caught off‑guard when one occurred? The best way to anticipate live events is to learn from historical patterns. Intending to train an ML model, this article begins by showing you how to create a script in MetaTrader 5 that ingests historical data and sends it to Python for storage—laying the foundation for your spike‑detection system. Read on to see each step in action.

Implementing the Truncated Newton Conjugate-Gradient Algorithm in MQL5

This article implements a box‑constrained Truncated Newton Conjugate‑Gradient (TNC) optimizer in MQL5 and details its core components: scaling, projection to bounds, line search, and Hessian‑vector products via finite differences. It provides an objective wrapper supporting analytic or numerical derivatives and validates the solver on the Rosenbrock benchmark. A logistic regression example shows how to use TNC as a drop‑in alternative to LBFGS.

Forex Arbitrage Trading: A Matrix Trading System for Return to Fair Value with Risk Control

The article contains a detailed description of the cross-rate calculation algorithm, a visualization of the imbalance matrix, and recommendations for optimally setting the MinDiscrepancy and MaxRisk parameters for efficient trading. The system automatically calculates the "fair value" of each currency pair using cross rates, generating buy signals in case of negative deviations and sell signals in case of positive ones.