MetaTrader 5 Machine Learning Blueprint (Part 8): Bayesian Hyperparameter Optimization with Purged Cross-Validation and Trial Pruning

Table of Contents

- The Problem with Standard HPO in Financial ML

- Optuna — Architecture and Core Concepts

- The Data Contract and _WeightedEstimator

- FinancialModelSuggester

- Objective Function — Purged K-Fold with Pruning

- Financial-Aware Pruning

- Orchestration and Storage

- From Optuna Study to Scikit-Learn cv_results_

- Visualization

- Practical Considerations

- Conclusion

- Attached Files

Introduction

You are building a financial ML classifier on triple‑barrier or meta‑labels and need to select hyperparameters fairly — without turning the search itself into an overfitting factory. Standard tools fail on three concrete counts for this use case. GridSearchCV and RandomizedSearchCV do not learn from past trials, so they waste budget revisiting regions of the hyperparameter space already shown to be poor. They cannot stop an unpromising configuration after the first expensive PurgedKFold fold, so every trial pays the full cost of all folds even when the first fold already signals failure. And they do not integrate naturally with the financial data contract — PurgedKFold as the only valid splitter, separate weights for fitting and scoring, and persistent storage so that a long search survives crashes and supports parallel workers. The measurable symptoms are wasted compute, biased out‑of‑sample comparisons caused by information leakage across the purge boundary, and fragile experiments that must restart from scratch after any interruption.

This article shows a practical replacement: Optuna (TPE sampler + pruning + SQLite storage) wired natively to the financial cross‑validation and weighting conventions established in earlier parts of this series. After reading you will have a concrete, runnable HPO workflow composed of five components:

- an objective function that runs PurgedKFold cross‑validation with return‑attribution weighted scoring and reports fold‑level results for pruning;

- FinancialModelSuggester, a parameter translation layer that converts scikit‑learn distribution specs into trial.suggest_*() calls and simultaneously optimizes the sample weighting scheme and decay;

- a financially‑aware pruner (TradingModelPruner) that enforces an entropy‑based economic baseline and regime‑scaled volatility tolerance;

- an orchestrator (optimize_trading_model) with SQLite storage for resumption and parallel workers;

- a study‑to‑cv_results_ converter for compatibility with existing scikit‑learn analytics.

The output artifacts are a persisted Study, a refit best_estimator_ (_WeightedEstimator wrapping a tuned base model), and a cv_results_ DataFrame with per‑fold scores — ready for the same downstream diagnostics used in the rest of the pipeline.

This article is Part 8.1 of the MetaTrader 5 Machine Learning Blueprint series. The preceding articles built the components this system depends on: Part 1 fixed data leakage at the bar level; Part 2 introduced triple‑barrier and meta‑labels; Part 3 added trend‑scanning labels; Part 4 addressed label concurrency and introduced average uniqueness weights; Part 5 introduced sequential bootstrapping and established the convention of separate training and scoring weights; Part 6 built the caching infrastructure; and Part 7 assembled everything into a reproducible production pipeline. The production pipeline integration of this article's components — including the clf_hyper_fit wrapper and caching — is covered in Part 8.2.

1. The Problem with Standard HPO in Financial ML

1.1 Why Hyperparameter Optimization Matters More in Finance

In most ML domains, a reasonably tuned model with good features produces acceptable results. In finance, the signal-to-noise ratio is low, non-stationarity is the norm, and the cost of overfitting is measured in capital. As López de Prado emphasises, every degree of freedom in a pipeline is an opportunity to overfit. Hyperparameters are degrees of freedom, and the method used to search them determines whether the resulting configuration generalizes or memorizes.

The problem is compounded by the structure of financial data. As established in Part 4, triple-barrier labels overlap in time. The resulting label concurrency means that standard k-fold CV will leak information across the train-test boundary, and any score produced by it is optimistically biased. The HPO tool must work natively with PurgedKFold to produce valid comparisons across trials.
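To make the leakage mechanism concrete, here is a minimal sketch of purging with an embargo. It assumes integer timestamps, and the names `purged_train_indices`, `t0`, and `t1` are hypothetical. It is not the PurgedKFold implementation used in this series, only an illustration of the rule that a training label is dropped when its time span touches the test window.

```python
def purged_train_indices(t0, t1, test_idx, embargo=0):
    """Return training indices whose label intervals do not touch the test window.

    t0[i], t1[i] : start and end time of label i (hypothetical integer timestamps)
    test_idx     : indices assigned to the test fold
    embargo      : extra time after the test window in which training labels are dropped
    """
    test_set = set(test_idx)
    test_start = min(t0[i] for i in test_idx)
    test_end = max(t1[i] for i in test_idx)

    keep = []
    for i in range(len(t0)):
        if i in test_set:
            continue
        # A label leaks if its [t0, t1] span intersects the test window
        overlaps = t0[i] <= test_end and t1[i] >= test_start
        # The embargo removes labels that start right after the test window
        embargoed = test_end < t0[i] <= test_end + embargo
        if not (overlaps or embargoed):
            keep.append(i)
    return keep


# Ten labels, each spanning two time units, so neighbouring labels overlap
t0 = list(range(10))
t1 = [t + 2 for t in t0]

train_plain = [i for i in range(10) if i not in (4, 5)]          # standard k-fold
train_purged = purged_train_indices(t0, t1, test_idx=[4, 5])      # purged
train_embargoed = purged_train_indices(t0, t1, test_idx=[4, 5], embargo=1)
```

In this toy setup, standard k-fold keeps labels 2, 3, 6, and 7 in the training set even though their spans overlap the test window. Purging removes them, and the embargo additionally removes label 8.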

1.2 Scikit-Learn's Limitations

GridSearchCV scales exponentially: a random forest with five values each across four hyperparameters produces 625 combinations, which is 3,125 model fits at 5-fold CV. RandomizedSearchCV reduces cost but remains uninformed — each trial is independent. Neither supports early stopping, inter-trial learning, or persistent storage.

| # | Limitation | Impact on Financial ML |
|---|---|---|
| 1 | No inter-trial learning | Compute wasted on poor regions of the hyperparameter space |
| 2 | No early stopping of bad trials | All folds run even when early folds already show poor scores |
| 3 | No persistent storage | Interrupted searches cannot be resumed; cross-experiment comparison requires manual bookkeeping |
| 4 | Rigid CV integration | Fold-level reporting for pruning requires workarounds that break the standard CV interface |
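The grid-size arithmetic quoted at the start of this subsection can be checked with a few lines. The helper name `grid_search_fits` is hypothetical and exists only for this illustration.

```python
from math import prod


def grid_search_fits(values_per_param, n_folds):
    """Return (number of grid combinations, number of model fits under k-fold CV)."""
    combos = prod(values_per_param)
    return combos, combos * n_folds


# Four hyperparameters with five candidate values each, 5-fold CV
combos, fits = grid_search_fits([5, 5, 5, 5], n_folds=5)

# Adding a fifth hyperparameter multiplies the cost by five again
combos5, fits5 = grid_search_fits([5, 5, 5, 5, 5], n_folds=5)
```

Each additional hyperparameter multiplies the total cost by the number of values it takes, which is why an exhaustive grid becomes impractical so quickly.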

1.3 A Critical Boundary: HPO vs Strategy Optimization

Before proceeding, one boundary must be drawn clearly. Intelligent search is appropriate for HPO and is harmful for strategy parameter optimization. Timothy Masters identifies this precisely: a TPE sampler or genetic algorithm finds the global optimum of the in-sample fitness surface efficiently — but in financial data that surface is mostly noise. The more effectively you search it, the more overfit the result.

The rule is simple: if your objective function is a statistical measure on labelled data (cross-validated accuracy, log-loss on triple-barrier labels), use Optuna. If it is a financial measure on historical equity curves (Sharpe ratio, drawdown), do not. Optuna's advantage in HPO is the same property that makes it dangerous for strategy optimization — it finds the optimum too reliably.

2. Optuna — Architecture and Core Concepts

2.1 How Optuna Works

Optuna is built around three concepts:

- Study: A complete optimization session with a direction (maximize or minimize) that stores all results. With SQLite storage, a study persists across Python sessions — exactly the kind of time-aware reproducibility established in Part 6.

- Trial: A single evaluation of one hyperparameter configuration. Each trial suggests values, evaluates the objective function, and reports the result.

- Sampler: The algorithm that selects which values to try next. The default is TPE (Tree-structured Parzen Estimator), a Bayesian optimization method that learns from completed trials.

The key difference from scikit-learn's tools: Optuna learns from completed trials. The TPE sampler models the relationship between hyperparameters and the objective, progressively focusing on promising regions. Each subsequent trial is more likely to explore configurations near known good results.

2.2 Pruning: HyperbandPruner

Pruning stops a trial before completion if intermediate results indicate it will not be competitive. In a 5-fold purged CV setup, if the first fold produces a poor score, the remaining folds can be skipped — directly reducing total compute.

HyperbandPruner is the right default choice for financial CV. With only 5–10 folds per trial, pruners that judge a trial step by step against its peers have very few steps to work with. Hyperband frames pruning differently. Rather than asking "is this trial performing badly compared to all others at this step?", it asks a resource-allocation question: "given a fixed compute budget, which trials deserve more folds?"

How the brackets work

Using the exact parameters from the code — min_resource=1, max_resource=5 (for cv=5), reduction_factor=3 (η=3) — the number of brackets is:

s_max = ⌊log₃(5/1)⌋ = ⌊1.46⌋ = 1

So there are two brackets, s=0 and s=1, running simultaneously across the trial budget. Think of them as two parallel races.

Bracket s=1 — the aggressive bracket: Three trials enter. Each is evaluated at fold 1 only. The top ⌈3/3⌉ = 1 survivor continues to folds 2–5. The other two are pruned after a single fold evaluation. Total fold evaluations: 3×1 + 1×4 = 7.

Bracket s=0 — the conservative bracket: Two trials enter and both run unconditionally to fold 5. No pruning fires in this bracket regardless of intermediate scores. Total fold evaluations: 2×5 = 10.

The conservative bracket is not a fallback; it is a hedge that runs in parallel. Every trial assigned to it receives a full five-fold evaluation even if fold 1 looks bad (perhaps because that fold covers a crisis period), so part of the search budget is always immune to early-fold noise. This is what distinguishes Hyperband from SuccessiveHalvingPruner, which is simply bracket s=1 in isolation and prunes on early folds in every case.

| | Bracket s=1 (aggressive) | Bracket s=0 (conservative) |
|---|---|---|
| Trials started | 3 | 2 |
| Pruned after fold 1 | 2 | 0 |
| Completes all folds | 1 | 2 |
| Total fold evaluations | 7 | 10 |
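The bracket count and the fold totals in the table can be reproduced with simple accounting. This sketch follows the worked example only; the function names are hypothetical and it does not model how Optuna schedules trials internally.

```python
import math


def n_brackets(min_resource, max_resource, reduction_factor):
    """floor(log_eta(max/min)) + 1, computed with integers to avoid float rounding."""
    s_max = 0
    while min_resource * reduction_factor ** (s_max + 1) <= max_resource:
        s_max += 1
    return s_max + 1


def aggressive_bracket_cost(n_trials, n_folds, reduction_factor, first_rung=1):
    """All trials run `first_rung` folds; the top 1/eta continue to the last fold."""
    survivors = math.ceil(n_trials / reduction_factor)
    folds = n_trials * first_rung + survivors * (n_folds - first_rung)
    return survivors, folds


def conservative_bracket_cost(n_trials, n_folds):
    """No pruning: every trial runs every fold."""
    return n_trials * n_folds


brackets = n_brackets(min_resource=1, max_resource=5, reduction_factor=3)
survivors, aggressive_folds = aggressive_bracket_cost(3, n_folds=5, reduction_factor=3)
conservative_folds = conservative_bracket_cost(2, n_folds=5)
unpruned_folds = (3 + 2) * 5  # the same five trials without any pruning
```

Under these assumptions the five trials cost 17 fold evaluations instead of 25.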

![HyperbandPruner Bracket Structure](https://c.mql5.com/2/200/6490.png)

Fig. 1. HyperbandPruner Bracket Structure

Should you build a custom pruner on top of HyperbandPruner?

No, and wrapping it would actively break it. HyperbandPruner manages internal state through bracket assignments made at trial creation time. When trial.should_prune() is called, Hyperband checks which bracket this trial belongs to, which rung it is at, and whether it falls below the top 1/η of trials at that rung. This is a stateful tournament management system, not a simple threshold check. Subclassing it and adding financial rules in prune() creates two failure modes. First, your rules might kill a trial that Hyperband had assigned to its conservative bracket, breaking the full-evaluation guarantee. Second, Hyperband's rung records become inconsistent because a trial pruned by your rule was never compared at its scheduled rung, which distorts the top-1/η comparisons for all subsequent trials.

TradingModelPruner works precisely because it subclasses MedianPruner, which has no bracket state to corrupt. The correct way to add financial domain logic when using HyperbandPruner is to place the checks inside the objective function, before calling trial.report() and trial.should_prune():

```python
for fold_idx, (train_idx, val_idx) in enumerate(cv.split(X, y)):
    # ... fit and score ...
    fold_scores.append(score)

    # 1. Financial domain check fires first, before Hyperband's bracket logic
    if score < min_score_threshold:
        trial.set_user_attr("pruned_reason", "below_baseline")
        raise TrialPruned(f"Below economic baseline at fold {fold_idx}")

    # 2. Hyperband bracket management: rung comparison across trials
    trial.report(score, step=fold_idx)
    if trial.should_prune():
        raise TrialPruned()
```

The financial logic fires before reporting to Hyperband, so the trial simply does not appear at that rung and Hyperband's internal state remains consistent. The two mechanisms are cleanly separated.

```python
from optuna.pruners import HyperbandPruner

# n_splits is the number of PurgedKFold folds (5 in the worked example above)
pruner = HyperbandPruner(
    min_resource=1,
    max_resource=n_splits,
    reduction_factor=3,
)
```

2.3 Define-by-Run API

Optuna specifies the search space inside the objective function. This enables conditional hyperparameter spaces and is what allows FinancialModelSuggester to add weight_scheme, weight_decay, and weight_linear alongside the base model parameters, optimizing the weighting strategy jointly with the model.
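The following sketch shows what a conditional, define-by-run search space looks like. So that it runs without Optuna installed, it uses `StubTrial`, a hypothetical stand-in that mimics only the three `suggest_*` method names of `optuna.Trial` with simplified signatures. The conditional `weight_decay` branch is an illustration of the mechanism; the FinancialModelSuggester in this article always samples all three weight keys.

```python
import random


class StubTrial:
    """Hypothetical stand-in for optuna.Trial; records every suggested value."""

    def __init__(self, seed):
        self._rng = random.Random(seed)
        self.params = {}

    def suggest_categorical(self, name, choices):
        self.params[name] = self._rng.choice(choices)
        return self.params[name]

    def suggest_float(self, name, low, high):
        self.params[name] = self._rng.uniform(low, high)
        return self.params[name]

    def suggest_int(self, name, low, high):
        # Both bounds inclusive, matching Optuna's suggest_int convention
        self.params[name] = self._rng.randint(low, high)
        return self.params[name]


def define_space(trial):
    """The search space is built while the code runs, so it can branch."""
    scheme = trial.suggest_categorical(
        "weight_scheme", ["unweighted", "uniqueness", "return"]
    )
    if scheme != "unweighted":
        # Only weighted schemes have a decay to tune
        trial.suggest_float("weight_decay", 0.1, 1.0)
    trial.suggest_int("max_depth", 3, 6)
    return trial.params


sampled_spaces = [define_space(StubTrial(seed)) for seed in range(20)]
```

With a real `optuna.Trial` the body of `define_space` would be unchanged; only the object passed in differs.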

2.4 Persistent Storage and Resumability

Optuna stores study results in SQLite (or PostgreSQL, MySQL). A crashed or timed-out run resumes from the last completed trial via load_if_exists=True. This aligns with the reproducibility principle established in Part 7: every experiment must be resumable, auditable, and produce identical results when re-run. Multiple workers can run trials in parallel against the same study; the 30-second SQLite timeout makes a worker wait for a locked database instead of failing immediately.
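The role of the timeout is easiest to see with the standard-library `sqlite3` module. This sketch is not Optuna code: the `trials` table and file name are hypothetical, and it only demonstrates the SQLite locking behaviour that a generous timeout is meant to absorb.

```python
import os
import sqlite3
import tempfile

db_file = os.path.join(tempfile.mkdtemp(), "study_demo.db")

# isolation_level=None selects autocommit mode, so BEGIN/COMMIT are explicit
writer = sqlite3.connect(db_file, isolation_level=None)
writer.execute("CREATE TABLE trials (id INTEGER PRIMARY KEY, value REAL)")
writer.execute("BEGIN IMMEDIATE")  # first worker takes the write lock
writer.execute("INSERT INTO trials (value) VALUES (-0.61)")

# A second worker with a very short timeout gives up while the lock is held
impatient = sqlite3.connect(db_file, timeout=0.1, isolation_level=None)
try:
    impatient.execute("BEGIN IMMEDIATE")
    lock_error = None
except sqlite3.OperationalError as exc:
    lock_error = str(exc)

writer.execute("COMMIT")  # first worker releases the lock

# Once the lock is free, the same worker succeeds
impatient.execute("BEGIN IMMEDIATE")
impatient.execute("INSERT INTO trials (value) VALUES (-0.58)")
impatient.execute("COMMIT")

n_rows = impatient.execute("SELECT COUNT(*) FROM trials").fetchone()[0]
writer.close()
impatient.close()
```

A longer timeout turns the failure in the middle of this script into a short wait, which is the behaviour wanted when several workers write trial results to one file.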

3. The Data Contract and _WeightedEstimator

All components in this system share a common data contract inherited from the production pipeline established in Part 7:

- X: Feature DataFrame with a DatetimeIndex, produced by the feature engineering pipeline.

- y: Target Series aligned with X — triple-barrier or trend-scanning labels from Part 2 and Part 3.

- events: DataFrame indexed by event time, containing at minimum: t1 (barrier touch time), w (return-attribution weight |return|), and tW (uniqueness-based weight from Part 4).

- data_index: The DatetimeIndex of the full bar dataset — used by _WeightedEstimator to compute weights over the correct universe of bars.

3.1 Two Distinct Weight Roles

Before describing the code, weight handling requires explicit treatment because López de Prado's examples conflate two quantities that serve different purposes. This distinction was foreshadowed in Part 5, which introduced ml_cross_val_scores_all with separate sample_weight_train and sample_weight_score parameters.

Average uniqueness weights (events['tW']) answer: "how much independent information does this observation contribute?" They belong in estimator.fit() to prevent the model from implicitly overweighting redundant overlapping observations — the concurrency problem identified in Part 4.

Return-attribution weights (events['w']) answer: "how much does a correct or incorrect prediction on this observation matter to P&L?" They belong in the validation scorer. Without them, the CV metric treats a prediction on a flat day as equally important as one on the day of a large move, silently distorting comparisons across trials.

```python
# Fitting: _WeightedEstimator applies tW internally via scheme='uniqueness'
fit = clone(model).fit(X_train, y_train)

# Scoring: return-attribution weights from events['w']
y_prob = fit.predict_proba(X_val)
w_val = events['w'].iloc[val_idx].to_numpy()
score = -log_loss(y_val, y_prob, sample_weight=w_val)
```

López de Prado computes a single combined weight (uniqueness × |return|) and uses it for both fitting and scoring. That is a reasonable approximation. Using separate weights as above is more principled because each weight does the job it is suited for. Furthermore, the weight scheme itself (unweighted, uniqueness, or return) is a hyperparameter that FinancialModelSuggester optimizes jointly with the model parameters.
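A small numeric sketch shows why the scoring weight matters. It implements binary log loss as a weighted average in pure Python, which mirrors how scikit-learn's log_loss treats sample_weight. The labels, probabilities, and absolute returns are made-up illustration values.

```python
import math


def weighted_log_loss(y_true, p_pos, weights=None):
    """Binary log loss as a weighted average; p_pos is the predicted P(y=1)."""
    if weights is None:
        weights = [1.0] * len(y_true)
    total = 0.0
    for y, p, w in zip(y_true, p_pos, weights):
        p_true = p if y == 1 else 1.0 - p  # probability assigned to the realised class
        total += w * -math.log(p_true)
    return total / sum(weights)


y_true = [1, 0, 1, 0]
p_pos = [0.9, 0.1, 0.3, 0.2]              # the third prediction is badly wrong
abs_returns = [0.001, 0.001, 0.020, 0.001]  # and it falls on the large move

plain = weighted_log_loss(y_true, p_pos)
weighted = weighted_log_loss(y_true, p_pos, abs_returns)
```

The unweighted score averages the one bad call away, while the return-weighted score is dominated by it. That is the distortion the scoring weights are meant to remove when trials are compared.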

One important note on strategy type: trend-following models may benefit from return-attribution weights for fitting rather than uniqueness weights. Average uniqueness penalises label overlap. In trending markets, labels are long and heavily overlapping by design, so a trend model trained with uniqueness weights is taught to distrust the exact conditions it is supposed to exploit. Return-attribution weights have no opinion about overlap — they simply up-weight large moves. The weight_scheme hyperparameter makes this a searchable dimension.

3.2 _WeightedEstimator

_WeightedEstimator is a sklearn-compatible wrapper (from afml.production.model_development) that encapsulates weight computation entirely. It accepts the base estimator, the events DataFrame, the full bar data_index, and the weight scheme parameters sampled by Optuna. When fit(X_train, y_train) is called with no explicit sample_weight argument, _WeightedEstimator internally computes the appropriate weights using data_index and events, then passes them to the base estimator. This design keeps weight computation out of the objective function loop and makes the weight scheme a first-class hyperparameter.

4. FinancialModelSuggester

FinancialModelSuggester translates scikit-learn-style parameter distributions into trial.suggest_*() calls and configures a _WeightedEstimator with the sampled parameters. It exposes two methods that form a dual pair: (1) suggest_and_apply for stochastic use inside the objective, and (2) apply_from_params for deterministic reconstruction from study.best_params. A central WEIGHT_KEYS registry separates weight hyperparameters from model hyperparameters in both methods, so the validation step in apply_from_params checks only the model keys against the base model's accepted parameters and never rejects a weight key.
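The split that WEIGHT_KEYS performs in apply_from_params can be sketched in isolation. The helper `split_params`, the example `best_params` dictionary, and the reduced key set `rf_keys` are hypothetical. In the real class the valid keys come from base_model.get_params().

```python
WEIGHT_KEYS = frozenset({"weight_scheme", "weight_decay", "weight_linear"})


def split_params(params, valid_model_keys):
    """Separate weight keys from model keys and validate only the model keys."""
    weight_params = {k: v for k, v in params.items() if k in WEIGHT_KEYS}
    model_params = {k: v for k, v in params.items() if k not in WEIGHT_KEYS}
    invalid = set(model_params) - set(valid_model_keys)
    if invalid:
        raise ValueError(f"Unknown model parameters: {sorted(invalid)}")
    return weight_params, model_params


# Hypothetical study.best_params for a random forest study
best_params = {
    "weight_scheme": "uniqueness",
    "weight_decay": 0.7,
    "weight_linear": True,
    "n_estimators": 400,
    "max_depth": 5,
}
rf_keys = {"n_estimators", "max_depth", "max_features", "ccp_alpha"}

weight_params, model_params = split_params(best_params, rf_keys)

# A parameter from another model family is caught before the refit
try:
    split_params({**best_params, "learning_rate": 0.05}, rf_keys)
    rejected = False
except ValueError:
    rejected = True
```

Without the registry, the weight keys would fail validation because no scikit-learn estimator accepts a `weight_scheme` argument.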

```python
import optuna
import pandas as pd
from scipy import stats
from sklearn.base import clone

from afml.production.model_development import _WeightedEstimator


class FinancialModelSuggester:
    """
    Translates Scikit-Learn style distribution dictionaries into Optuna
    trial suggestions for rigorous statistical HPT.

    Two core methods form a dual pair:
        suggest_and_apply : Trial -> params -> model (stochastic, used in objective)
        apply_from_params : params -> model (deterministic, used for refit)
    """

    # Central registry: separates weight keys from model keys in both methods
    WEIGHT_KEYS = frozenset({"weight_scheme", "weight_decay", "weight_linear"})

    @classmethod
    def suggest_and_apply(
        cls,
        trial: optuna.Trial,
        base_model,
        param_distributions: dict,
        events: pd.DataFrame,
        data_index: pd.DatetimeIndex,
    ):
        # 1. Suggest weight hyperparameters, optimised jointly with the model
        scheme = trial.suggest_categorical(
            "weight_scheme", ["unweighted", "uniqueness", "return"]
        )
        decay = trial.suggest_float("weight_decay", 0.1, 1.0)
        linear = trial.suggest_categorical("weight_linear", [True, False])

        # 2. Suggest base model hyperparameters from scikit-learn-style distributions
        sampled_params = {}
        for name, dist in param_distributions.items():
            if isinstance(dist, list):
                sampled_params[name] = trial.suggest_categorical(name, dist)
            elif hasattr(dist, 'ppf'):  # frozen scipy.stats distribution
                low, high = dist.support()
                if dist.dist.name == "randint":
                    sampled_params[name] = trial.suggest_int(name, int(low), int(high))
                else:
                    is_log = dist.dist.name in ['reciprocal', 'loguniform']
                    sampled_params[name] = trial.suggest_float(name, low, high, log=is_log)
            elif isinstance(dist, range):
                # range.stop is exclusive, suggest_int bounds are inclusive
                sampled_params[name] = trial.suggest_int(name, dist.start, dist.stop - 1)
            else:
                # Anything else is treated as a fixed value
                sampled_params[name] = dist

        # 3. Clone and configure; _WeightedEstimator handles weight computation internally
        new_base = clone(base_model)
        new_base.set_params(**sampled_params)
        return _WeightedEstimator(
            base_estimator=new_base,
            events=events,
            data_index=data_index,
            scheme=scheme,
            decay=decay,
            linear=linear,
        )

    @classmethod
    def apply_from_params(
        cls,
        params: dict,
        base_model,
        events: pd.DataFrame,
        data_index: pd.DatetimeIndex,
    ) -> "_WeightedEstimator":
        """
        Reconstruct a _WeightedEstimator from a flat params dict
        (e.g. study.best_params). Validates model params against the
        base model's accepted parameter set.
        """
        weight_params = {k: params[k] for k in cls.WEIGHT_KEYS if k in params}
        model_params = {k: v for k, v in params.items() if k not in cls.WEIGHT_KEYS}

        # Defensive validation: catch invalid params before refit
        valid_keys = set(base_model.get_params().keys())
        invalid = set(model_params) - valid_keys
        if invalid:
            raise ValueError(
                f"Parameters {invalid} are not valid for "
                f"{type(base_model).__name__}. Valid: {sorted(valid_keys)}"
            )

        new_base = clone(base_model)
        new_base.set_params(**model_params)
        return _WeightedEstimator(
            base_estimator=new_base,
            events=events,
            data_index=data_index,
            scheme=weight_params.get("weight_scheme", "unweighted"),
            decay=weight_params.get("weight_decay", 1.0),
            linear=weight_params.get("weight_linear", False),
        )

    @classmethod
    def get_search_space(cls, model_name: str):
        """
        Returns curated parameter distributions for financial models.

        Note min_weight_fraction_leaf for random forests: requires each leaf
        to account for at least a fraction of total sample weight. This
        interacts directly with return-attribution weights and is a stronger
        regulariser than min_samples_leaf when weights vary widely across
        market regimes.
        """
        spaces = {
            "random_forest": {
                "n_estimators": range(100, 1000),
                "max_depth": range(3, 7),
                # stats.uniform(loc, scale) covers [loc, loc + scale] = [0.025, 0.125]
                "min_weight_fraction_leaf": stats.uniform(0.025, 0.1),
                "max_features": ["sqrt", "log2", 0.5, 1.0],
                "ccp_alpha": stats.loguniform(1e-5, 1e-2),
            },
            "xgboost": {
                "n_estimators": range(100, 1000),
                "learning_rate": stats.loguniform(1e-3, 0.1),
                "max_depth": range(2, 8),
                "subsample": stats.uniform(0.6, 0.4),         # [0.6, 1.0]
                "colsample_bytree": stats.uniform(0.6, 0.4),  # [0.6, 1.0]
                "gamma": stats.uniform(0, 5),
            },
        }
        return spaces.get(model_name.lower(), {})
```

5. Objective Function — Purged K-Fold with Pruning

The objective function is the integration point. It runs PurgedKFold cross-validation (introduced in Part 4), applies return-attribution weights to the validation metric, and reports fold-level scores to HyperbandPruner after each fold via trial.report(). This direct fold-level reporting is the key capability that scikit-learn's GridSearchCV cannot provide without significant workarounds.

Two details are worth noting. First, clone(model).fit(X_train, y_train) with no explicit sample_weight argument is correct — _WeightedEstimator computes and applies the training weights internally based on the scheme sampled for this trial. Second, w_val from events['w'] is always the return-attribution weight for scoring regardless of which training scheme was selected, because we always evaluate predictions by economic significance. This separation mirrors the sample_weight_train / sample_weight_score convention from ml_cross_val_scores_all in Part 5.

```python
import numpy as np
import optuna
from optuna import TrialPruned
from sklearn.base import clone
from sklearn.metrics import f1_score, log_loss

# PurgedKFold and FinancialModelSuggester come from the afml package of this series


def optimize_trading_model_with_pruning(
    trial: optuna.Trial,
    X,
    y,
    events,
    data_index,
    classifier,
    param_distributions: dict,
    n_splits: int = 5,
    metric: str = "neg_log_loss",
):
    """
    Objective function for tuning models using Purged K-Fold cross-validation.

    Uses separate weights for fitting (_WeightedEstimator) and scoring
    (events['w']), mirroring the sample_weight_train / sample_weight_score
    convention from Part 5.
    """
    model = FinancialModelSuggester.suggest_and_apply(
        trial, classifier, param_distributions, events, data_index
    )

    t1 = events['t1']
    cv = PurgedKFold(n_splits=n_splits, t1=t1, pct_embargo=0.01)

    # Convert once before the loop: avoids repeated pandas overhead per fold
    # and ensures val_idx (numpy integer array from PurgedKFold) indexes correctly
    w_score = events['w'].to_numpy()

    fold_scores = []
    for fold_idx, (train_idx, val_idx) in enumerate(cv.split(X, y)):
        X_train, X_val = X.iloc[train_idx], X.iloc[val_idx]
        y_train, y_val = y.iloc[train_idx], y.iloc[val_idx]

        # _WeightedEstimator applies training weights (uniqueness or return) internally
        fit = clone(model).fit(X_train, y_train)

        # Slice the pre-converted numpy array: no pandas overhead inside the hot loop
        w_val = w_score[val_idx]

        if metric == "neg_log_loss":
            y_prob = fit.predict_proba(X_val)
            score = -log_loss(y_val, y_prob, sample_weight=w_val)
        else:
            # Any other metric name falls back to weighted F1
            y_pred = fit.predict(X_val)
            score = f1_score(y_val, y_pred, sample_weight=w_val)

        fold_scores.append(score)

        # An optional absolute-baseline check (see Section 2.2) belongs here,
        # before trial.report(), when TradingModelPruner is not the pruner
        trial.report(score, step=fold_idx)
        if trial.should_prune():
            avg_score_so_far = np.mean(fold_scores)
            trial.set_user_attr("pruned_at_fold", fold_idx)
            trial.set_user_attr("score_when_pruned", avg_score_so_far)
            trial.set_user_attr("total_folds_attempted", len(fold_scores))
            raise TrialPruned(
                f"Pruned at fold {fold_idx}. Avg: {avg_score_so_far:.4f}"
            )

    final_score = np.mean(fold_scores)
    trial.set_user_attr("fold_scores", fold_scores)
    trial.set_user_attr("score_std", np.std(fold_scores))
    return final_score
```

The fold scores stored in trial.user_attrs["fold_scores"] are not just diagnostic metadata. They power the check_for_overfitting callback and the fold-level columns in the cv_results_ DataFrame. A high score_std across a 5-fold purged split often indicates a model capturing regime-specific patterns rather than genuinely generalizable signal — exactly the kind of regime sensitivity that the multi-regime bar sampling discussed in Part 7 is designed to surface.

6. Financial-Aware Pruning

TradingModelPruner extends MedianPruner into a three-rule pruner: an economic baseline check, a fold-score volatility check, and the inherited median rule. It is not a universal replacement for HyperbandPruner; it has a specific viability window. The median rule it inherits is completely silent for the first n_startup_trials=10 completed trials and only produces reliable comparisons after 20–30 more. In the implementation below, the two financial rules are gated separately: they apply from trial number 5 onward and only once a trial has reported at least three folds. On a short study of fewer than 30 trials it provides less savings than HyperbandPruner. The four conditions under which it becomes the better choice are as follows.

Large trial budget on a well-understood instrument. Running 100 or more trials on an instrument with known characteristics gives you a calibrated expectation of what a reasonable log-loss floor looks like. A trial scoring far below that floor after fold 1 is not a regime-sensitive underperformer — it is a broken configuration. The entropy baseline kills it immediately and unconditionally. HyperbandPruner cannot make this determination because it compares relative rank across trials, not against an absolute economic floor. Example: on EURUSD triple-barrier labels calibrated to a 3:1 barrier ratio, log-loss consistently sits near −0.65. A trial returning −0.92 after fold 1 warrants immediate termination regardless of what other trials are doing.

Trending instrument with high return-weight variance. On a strongly trending instrument, events['w'] has high coefficient of variation — a few large-move observations carry weights an order of magnitude above the rest. TradingModelPruner scales volatility_tolerance proportionally to this CV, meaning it accepts large fold-score swings without pruning a trend model that happens to look poor on a mean-reverting fold. HyperbandPruner prunes by relative rank regardless of whether the swing reflects model quality or market structure. Example: a GBPJPY model trained on weekly triple-barrier labels during a trending period will show high fold-score variance simply because each fold covers a distinct momentum phase. TradingModelPruner accommodates this; HyperbandPruner may discard it.

CV window spanning a known regime break. If the training window covers a structural break — a central bank policy shift, a volatility regime change, a microstructure change — one fold will look categorically different from the others. The model that performs best across both regimes will not necessarily look best after fold 1. TradingModelPruner's n_warmup_steps=2 grants a two-fold grace period before variance-based pruning fires. Example: a study whose CV window spans 2019–2022 will have one fold covering COVID-era volatility. Allowing two folds before variance-based pruning fires prevents that fold from eliminating configurations that generalize well across regimes.

Follow-up study after an initial HyperbandPruner run. A practical workflow is to run an initial 50-trial study with HyperbandPruner to establish the score landscape, then run a focused 150-trial follow-up with TradingModelPruner. The first study gives you a calibrated score range to set multiplier precisely. The second study prevents the TPE sampler — which now has a prior from the first run — from revisiting configurations already shown to be near the noise floor.

The baseline entropy threshold uses the return-attribution weighted label distribution — not the raw distribution. This is the correct baseline because a naive classifier that always predicts the dominant class achieves this entropy when classes are weighted by their economic significance. The volatility tolerance is proportional to the coefficient of variation of the return-attribution weights: trending markets (high CV) receive a wider tolerance; mean-reverting markets (low CV) receive a tighter one.

```python
import numpy as np
import pandas as pd
from optuna.pruners import MedianPruner


class TradingModelPruner(MedianPruner):
    """
    Financial-aware pruner that adjusts thresholds based on label entropy
    and return-attribution weighted volatility.
    """

    def __init__(
        self,
        y,
        sample_weight,  # Return-attribution weights: events['w']
        n_startup_trials: int = 10,
        n_warmup_steps: int = 2,
        multiplier: float = 1.15,
    ):
        super().__init__(n_startup_trials=n_startup_trials, n_warmup_steps=n_warmup_steps)

        # Baseline entropy from return-attribution weighted label distribution
        weighted_counts = pd.Series(sample_weight).groupby(y.values).sum()
        probs = weighted_counts / weighted_counts.sum()

        if set(y.unique()) != {0, 1}:
            # Negative-entropy floor, on the same scale as neg_log_loss scores
            self.baseline_entropy = -np.sum(probs * np.log(probs))
            self.min_score_threshold = -self.baseline_entropy * multiplier
        else:
            # Binary {0, 1} labels: positive floor from the weighted majority share.
            # Note: this value is only comparable to positive metrics such as F1,
            # not to neg_log_loss scores, which are always negative.
            majority_ratio = probs.max()
            self.min_score_threshold = majority_ratio / multiplier

        # Volatility tolerance scales with CV of weights:
        # trending regimes (high CV) -> higher fold-score variance is acceptable
        weight_cv = np.std(sample_weight) / np.mean(sample_weight)
        self.volatility_tolerance = 0.1 * (1 + weight_cv)

    def prune(self, study, trial) -> bool:
        step = trial.last_step
        if step is None:
            return False

        # Financial rules apply from trial number 5 and after three reported folds
        if trial.number >= 5 and len(trial.intermediate_values) >= 3:
            # Rule 1: Worse than economically-weighted naive baseline?
            current_score = trial.intermediate_values.get(step)
            if current_score < self.min_score_threshold:
                return True

            # Rule 2: Unstable across recent folds?
            recent_scores = list(trial.intermediate_values.values())[-3:]
            if np.std(recent_scores) > self.volatility_tolerance:
                return True

        # Rule 3: Standard median pruning
        return super().prune(study, trial)
```
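The two thresholds can be worked through by hand on a toy sample. This sketch uses only the standard library, with made-up labels and weights, and follows the formulas in the class above: weighted label entropy times the multiplier for the score floor, and 0.1 × (1 + CV of the weights) for the tolerance.

```python
import math
from collections import defaultdict
from statistics import mean, pstdev


def weighted_entropy(labels, weights):
    """Shannon entropy (natural log) of the weight-summed label distribution."""
    totals = defaultdict(float)
    for label, w in zip(labels, weights):
        totals[label] += w
    grand_total = sum(totals.values())
    return -sum((t / grand_total) * math.log(t / grand_total) for t in totals.values())


labels = [1, 1, -1, -1, 1]             # hypothetical triple-barrier sides
weights = [0.5, 0.5, 3.0, 2.0, 1.0]    # hypothetical return-attribution weights
multiplier = 1.15

raw_entropy = weighted_entropy(labels, [1.0] * len(labels))
baseline_entropy = weighted_entropy(labels, weights)
min_score_threshold = -baseline_entropy * multiplier

# pstdev is the population standard deviation, the same convention as np.std
weight_cv = pstdev(weights) / mean(weights)
volatility_tolerance = 0.1 * (1 + weight_cv)
```

In this sample the weighted distribution is more lopsided than the raw one, so the weighted entropy is lower and the score floor is stricter than a count-based baseline would give.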

Summary decision rule: n_trials < 30 → use HyperbandPruner; n_trials ≥ 100 and at least one of the above conditions applies → use TradingModelPruner; first study on an unknown instrument → use HyperbandPruner.

7. Orchestration and Storage

optimize_trading_model creates the study, wires up the pruner and sampler, connects to SQLite storage, and handles the refit. HyperbandPruner is the default pruner_type. The function sets n_jobs=1 on the classifier before the study and requests n_jobs=-1 again for the final refit. This prevents oversubscription: when several workers run trials against the same study, each worker already occupies a core, and an inner classifier that also requests multiple cores causes nested parallelism that degrades rather than improves throughput. The same principle applies in the clf_hyper_fit wrapper from Part 7.
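One way to keep the n_jobs switch from leaking out of the search is a small context manager. This is a sketch of an alternative pattern, not the article's implementation: `single_threaded` and `StubForest` are hypothetical names, and the stub only imitates the scikit-learn get_params/set_params protocol so the example runs without scikit-learn.

```python
from contextlib import contextmanager


@contextmanager
def single_threaded(estimator):
    """Temporarily force n_jobs=1 and restore the original value afterwards."""
    if "n_jobs" not in estimator.get_params():
        yield estimator  # nothing to change for estimators without n_jobs
        return
    original = estimator.get_params()["n_jobs"]
    estimator.set_params(n_jobs=1)
    try:
        yield estimator
    finally:
        estimator.set_params(n_jobs=original)


class StubForest:
    """Hypothetical estimator exposing the get_params/set_params protocol."""

    def __init__(self, n_jobs=-1):
        self.n_jobs = n_jobs

    def get_params(self, deep=True):
        return {"n_jobs": self.n_jobs}

    def set_params(self, **params):
        for key, value in params.items():
            setattr(self, key, value)
        return self


clf = StubForest(n_jobs=-1)
with single_threaded(clf) as inner:
    n_jobs_during_search = inner.n_jobs  # the study would run here
n_jobs_after_search = clf.n_jobs
```

The `finally` clause restores the caller's setting even if the search raises, so the classifier object passed in is left as it was found.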

load_if_exists=True means a timed-out run resumes from the last completed trial when called with the same study_name and db_path. Combined with the caching architecture from Part 6, this means a full pipeline re-run after interruption reloads all cached preprocessing results and resumes exactly where the Optuna study left off — with no wasted compute.

```python
import logging

import optuna
import pandas as pd
from optuna.exceptions import StorageInternalError
from optuna.pruners import HyperbandPruner, SuccessiveHalvingPruner
from optuna.samplers import TPESampler

logger = logging.getLogger(__name__)


def optimize_trading_model(
    classifier,
    X: pd.DataFrame,
    y: pd.Series,
    events: pd.DataFrame,
    data_index: pd.DatetimeIndex,
    param_distributions: dict,
    n_trials: int = 100,
    timeout: int = 3600,
    n_splits: int = 5,
    pruner_type: str = "hyperband",
    metric: str = "neg_log_loss",
    study_name: str = None,
    db_path: str = None,
    random_state: int = 42,
    refit: bool = True,
):
    if pruner_type == "median":
        pruner = TradingModelPruner(y, sample_weight=events.loc[X.index, 'w'])
    elif pruner_type == "hyperband":
        pruner = HyperbandPruner(min_resource=1, max_resource=n_splits, reduction_factor=3)
    else:
        pruner = SuccessiveHalvingPruner()

    sampler = TPESampler(seed=random_state)

    # 30s timeout for parallel workers; without db_path, use in-memory storage
    storage_url = f"sqlite:///{db_path}.db?timeout=30" if db_path else None

    try:
        study = optuna.create_study(
            direction="maximize",
            sampler=sampler,
            pruner=pruner,
            study_name=study_name,
            storage=storage_url,
            load_if_exists=True,  # resume from last completed trial
        )

        if hasattr(classifier, 'n_jobs'):
            classifier.set_params(n_jobs=1)  # prevent oversubscription
        if hasattr(classifier, 'random_state'):
            classifier.set_params(random_state=random_state)

        def objective(trial):
            return optimize_trading_model_with_pruning(
                trial, X, y, events, data_index,
                classifier, param_distributions, n_splits, metric
            )

        study.optimize(
            objective,
            n_trials=n_trials,
            timeout=timeout,
            callbacks=[print_best_trial, save_intermediate_results, check_for_overfitting],
        )

        if refit:
            best_model = FinancialModelSuggester.apply_from_params(
                study.best_trial.params, classifier, events, data_index
            )
            if hasattr(best_model, 'n_jobs'):
                best_model.set_params(n_jobs=-1)  # use all cores for the single refit
            best_model.fit(X, y)
            study.best_estimator_ = best_model

        cv_results = optuna_to_cv_results(study)
        return study, cv_results

    except StorageInternalError as e:
        logger.error(f"Storage Error: {db_path} is locked or unreachable. {e}")
        raise  # re-raise so the caller does not receive None silently
```

The three callbacks provide observability without modifying the objective. print_best_trial logs score improvements to the console. save_intermediate_results writes each completed trial to disk as JSON in an optuna_results/ directory — a lightweight audit trail analogous to the logging infrastructure from Part 7. check_for_overfitting emits a warning when score_std exceeds 0.3, flagging models that are sensitive to which market regime a fold covers.
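Optuna invokes each callback as `callback(study, trial)` after a trial finishes, passing a frozen snapshot of the trial. The sketch below shows what two of the callbacks might look like under that contract. The function bodies are assumptions rather than the code shipped in the attached module. The directory name and the 0.3 threshold come from the text, and the standard library `warnings` module stands in for the article's logger to keep the example self-contained.

```python
import json
import tempfile
import warnings
from pathlib import Path
from types import SimpleNamespace

RESULTS_DIR = Path("optuna_results")  # directory name taken from the text
STD_THRESHOLD = 0.3                   # threshold taken from the text


def save_intermediate_results(study, trial, out_dir=RESULTS_DIR):
    """Write one JSON file per completed trial (sketch, not the shipped code)."""
    if trial.state.name != "COMPLETE":
        return None
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    payload = {
        "number": trial.number,
        "value": trial.value,
        "params": trial.params,
        "user_attrs": trial.user_attrs,
    }
    path = out_dir / f"trial_{trial.number:04d}.json"
    path.write_text(json.dumps(payload, default=str, indent=2))
    return path


def check_for_overfitting(study, trial, threshold=STD_THRESHOLD):
    """Warn when the fold-to-fold score dispersion exceeds the threshold."""
    score_std = trial.user_attrs.get("score_std")
    if score_std is not None and score_std > threshold:
        warnings.warn(
            f"Trial {trial.number}: score_std={score_std:.3f} > {threshold}; "
            "scores vary strongly across folds."
        )
        return True
    return False


# Stand-in for the frozen trial Optuna passes to callbacks (assumption).
demo_trial = SimpleNamespace(
    number=7,
    value=-0.61,
    params={"max_depth": 5},
    user_attrs={"score_std": 0.42, "fold_scores": [-0.2, -1.1, -0.5]},
    state=SimpleNamespace(name="COMPLETE"),
)

with tempfile.TemporaryDirectory() as tmp:
    saved = save_intermediate_results(None, demo_trial, out_dir=tmp)
    saved_payload = json.loads(saved.read_text())

with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    flagged = check_for_overfitting(None, demo_trial)
```

Optuna ignores callback return values. They are returned here only to make the behaviour easy to inspect.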

8. From Optuna Study to Scikit-Learn cv_results_

Existing analytics code in the pipeline, including the hyperparameter analysis functions in afml.cross_validation.hyper_fit_analysis, expects a DataFrame laid out like scikit-learn's cv_results_. Conditional search spaces produce sparse rows: the XGBoost search space includes learning_rate and gamma, which do not exist in random forest trials. optuna_to_cv_results handles this by building a DataFrame with one row per completed trial and leaving absent parameters as NaN. The per-fold scores stored in trial.user_attrs["fold_scores"] are expanded into split0_test_score, split1_test_score, and so on, enabling the same fold-level consistency analysis available with scikit-learn's native CV results. Note that mean_fit_time records the wall-clock duration of the whole trial (all folds), not a per-fold average.

```python
import optuna
import pandas as pd


def optuna_to_cv_results(study):
    """Converts an Optuna study into a Scikit-Learn style cv_results_ DataFrame."""
    rows = []
    for trial in study.trials:
        if trial.state != optuna.trial.TrialState.COMPLETE:
            continue  # exclude pruned trials from ranking

        res = {
            "mean_test_score": trial.value,
            "std_test_score": trial.user_attrs.get("score_std", 0),
            # Wall-clock duration of the whole trial (all folds)
            "mean_fit_time": (trial.datetime_complete - trial.datetime_start).total_seconds(),
            "params": trial.params,
        }

        # One param_<name> column per sampled hyperparameter
        for k, v in trial.params.items():
            res[f"param_{k}"] = v

        # One split<i>_test_score column per fold
        fold_scores = trial.user_attrs.get("fold_scores", [])
        for i, score in enumerate(fold_scores):
            res[f"split{i}_test_score"] = score

        rows.append(res)

    # Keys missing from a row (conditional parameters) become NaN
    return pd.DataFrame(rows)
```
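scikit-learn's own cv_results_ also carries a rank_test_score column (1 is best, tied scores share the lowest rank), which the converter above does not produce. If downstream code relies on it, the column could be added to the row dictionaries before the DataFrame is built. The helper below is a hypothetical sketch, not part of the attached module, and it works on plain dictionaries so it runs without Optuna or pandas.

```python
def add_rank_test_score(rows):
    """Add a scikit-learn style rank_test_score (1 = best, ties share a rank).

    `rows` is a list of dicts with a "mean_test_score" key, higher is better.
    """
    scores = [r["mean_test_score"] for r in rows]
    for r in rows:
        # "min" ranking: rank = 1 + number of strictly better scores
        r["rank_test_score"] = 1 + sum(s > r["mean_test_score"] for s in scores)
    return rows


# Illustrative rows with the sparse parameter layout of a conditional search space
rows = [
    {"mean_test_score": -0.65, "param_max_depth": 3},
    {"mean_test_score": -0.58, "param_max_depth": 5, "param_gamma": 0.1},
    {"mean_test_score": -0.65, "param_max_depth": 7},
    {"mean_test_score": -0.71, "param_learning_rate": 0.05},
]

ranked = add_rank_test_score(rows)
print([r["rank_test_score"] for r in ranked])  # [2, 1, 2, 4]
```

The quadratic loop is acceptable for the few hundred trials of a typical study. A vectorised rank would be the natural choice once the rows are in a DataFrame.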

9. Visualization

After a study completes, Optuna's visualization suite provides diagnostic insight into the search process. All of the functions below live in optuna.visualization and return Plotly figures. The diagrams are representative examples generated from a synthetic financial ML study. These plots are particularly valuable because they surface information about the hyperparameter landscape that the cv_results_ DataFrame alone cannot convey.

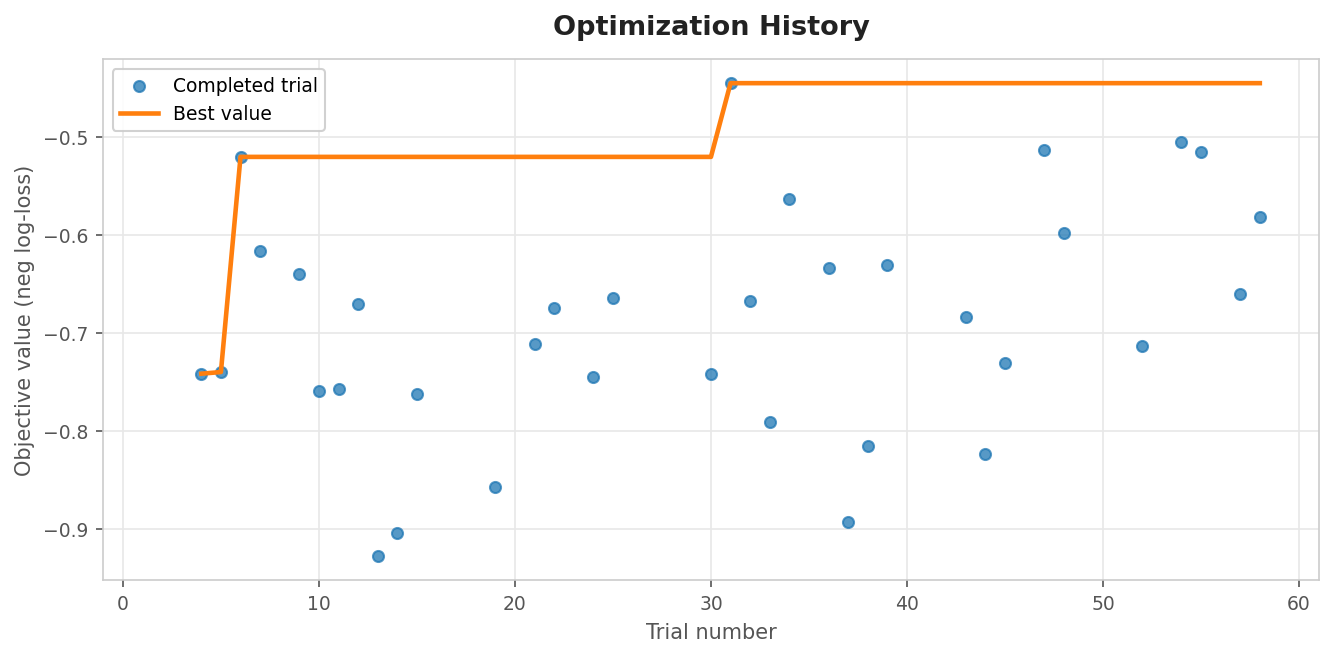

plot_optimization_history(study)

Shows objective value per trial (dots) and the running best (line). The gap between individual trial scores and the running best narrows as TPE builds its model of the objective surface. A flat running best indicates convergence; a still-improving best suggests more trials are warranted. In practice, TPE typically converges within 30–60 trials for a 4–5 parameter random forest space.

Fig. 2 — Optimization History
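The convergence reading described above can also be checked numerically. The sketch below computes the running best and counts how many trailing trials failed to improve on it. The function names and the sample scores are illustrative assumptions. With a real study, the input would be the list of completed trial values in trial order.

```python
def running_best(values):
    """Cumulative maximum of trial scores (direction='maximize')."""
    best, out = float("-inf"), []
    for v in values:
        best = max(best, v)
        out.append(best)
    return out


def trials_since_improvement(values, min_delta=0.0):
    """Number of trailing trials that failed to beat the best by min_delta."""
    best, since = float("-inf"), 0
    for v in values:
        if v > best + min_delta:
            best, since = v, 0
        else:
            since += 1
    return since


# Illustrative negative log-loss scores, one per trial
scores = [-0.70, -0.66, -0.68, -0.61, -0.63, -0.62, -0.61, -0.64]

best_line = running_best(scores)
stalled = trials_since_improvement(scores)
print(best_line)
print(stalled)  # 4
```

A long stalled count relative to the trial budget corresponds to the flat running-best line in the plot. How many stalled trials count as converged is a judgment call that depends on the size of the search space.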

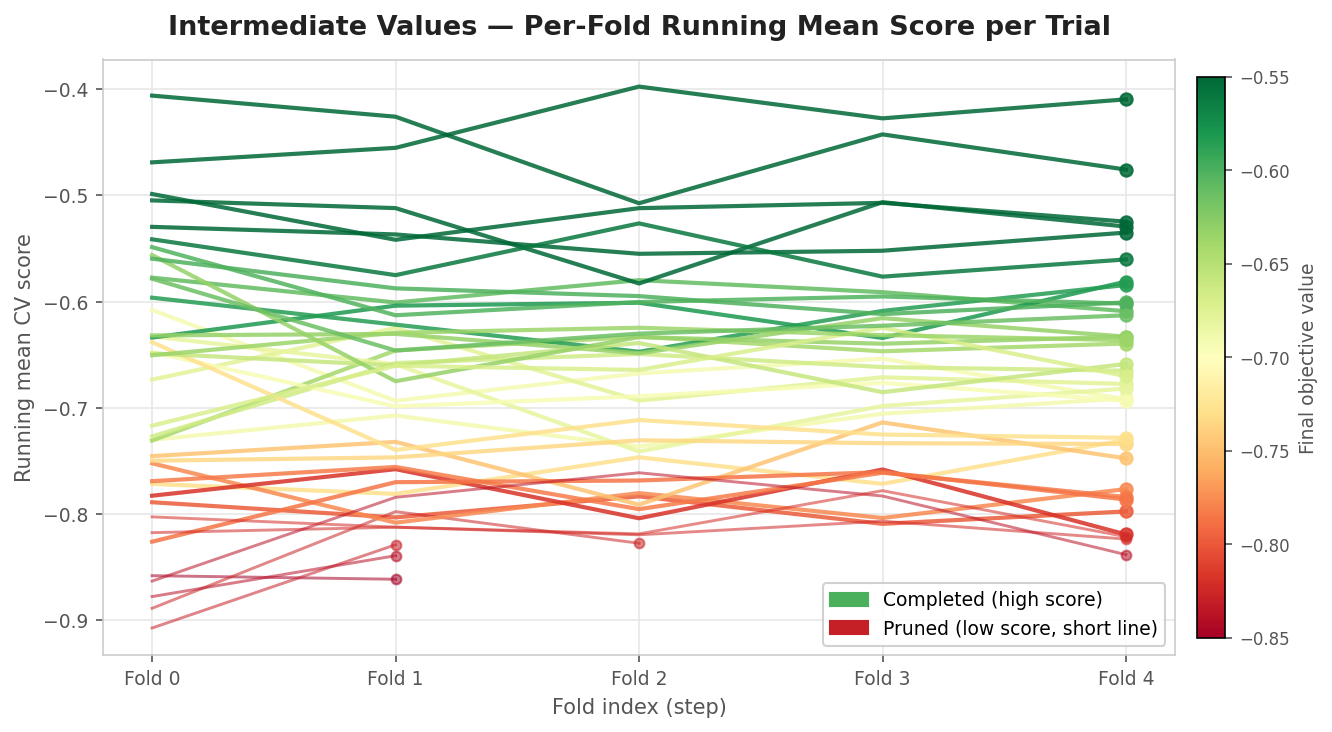

plot_intermediate_values(study)

Shows the per-fold running mean for every trial, with pruned trials as short lines terminating early. This is the primary diagnostic for HyperbandPruner. Lines colored green complete all folds and tend to cluster at the top of the score range; lines colored red are pruned early and terminate at fold 1. If no lines are pruned, reduction_factor may need adjustment, or the objective surface is too flat for fold-1 scores to be informative.

Fig. 3 — Intermediate Values (Pruning Diagnostic)
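The same diagnostic can be summarised as two numbers: the share of finished trials that were pruned, and the fold at which pruning happened. The sketch below assumes each trial exposes `state.name` and an `intermediate_values` mapping of step to value, as Optuna's frozen trials do, and that steps are 1-based fold indices (adjust if the objective reports 0-based steps). `pruning_summary` is a hypothetical helper name.

```python
from collections import Counter
from types import SimpleNamespace


def pruning_summary(trials):
    """Return (pruned_fraction, Counter of last reported step for pruned trials)."""
    finished = [t for t in trials if t.state.name in ("COMPLETE", "PRUNED")]
    pruned = [t for t in finished if t.state.name == "PRUNED"]
    last_steps = Counter(
        max(t.intermediate_values) for t in pruned if t.intermediate_values
    )
    fraction = len(pruned) / len(finished) if finished else 0.0
    return fraction, last_steps


def _trial(state, values):
    # Stand-in for a frozen trial: intermediate_values maps step -> value
    return SimpleNamespace(
        state=SimpleNamespace(name=state),
        intermediate_values=dict(enumerate(values, start=1)),
    )


# Illustrative study: two trials run all five folds, three are pruned
demo = [
    _trial("COMPLETE", [-0.62, -0.60, -0.61, -0.63, -0.60]),
    _trial("PRUNED", [-0.75]),
    _trial("PRUNED", [-0.71]),
    _trial("PRUNED", [-0.66, -0.67, -0.69]),
    _trial("COMPLETE", [-0.61, -0.59, -0.62, -0.60, -0.61]),
]

fraction, last_steps = pruning_summary(demo)
print(fraction)    # 0.6
print(last_steps)  # Counter({1: 2, 3: 1})
```

A fraction near zero matches the "no lines are pruned" case discussed above, while a distribution concentrated on the first step shows that most of the saving comes from fold 1.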

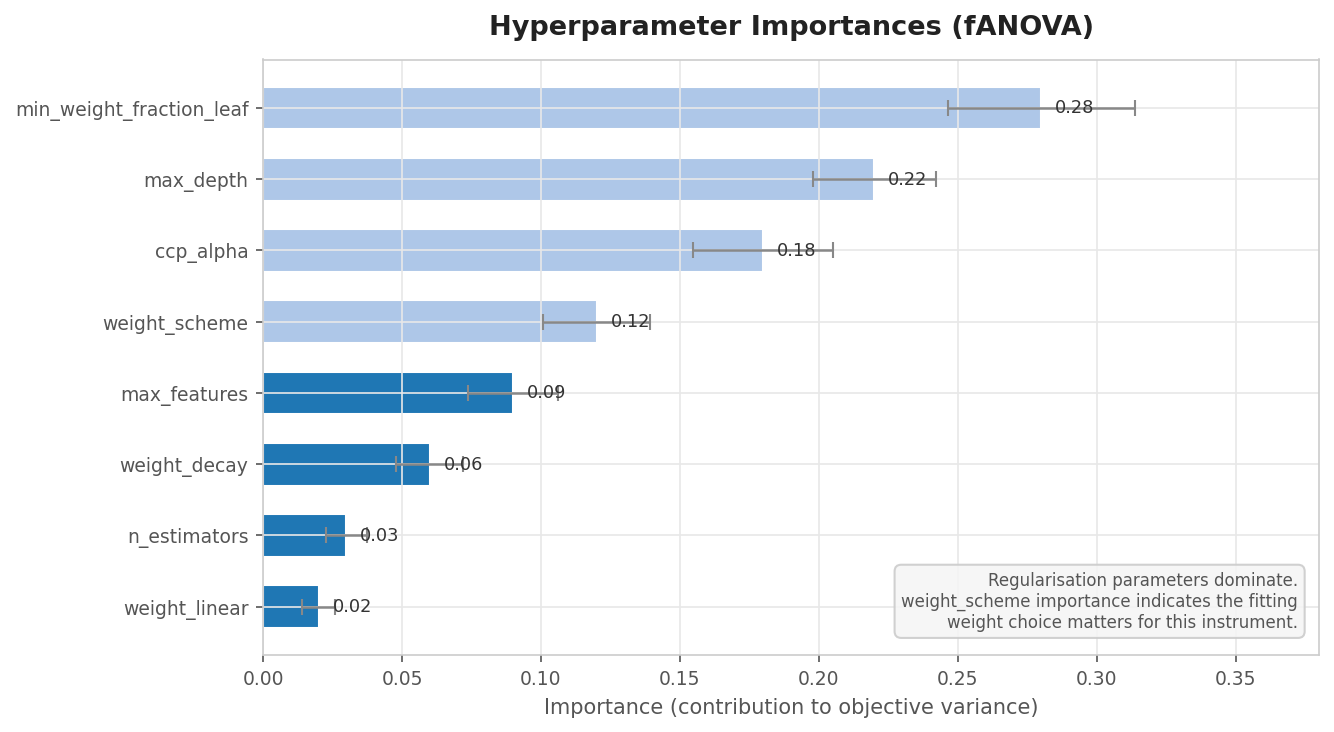

plot_param_importances(study)

Uses fANOVA to estimate each hyperparameter's contribution to objective variance. In financial ML, regularization parameters (min_weight_fraction_leaf, ccp_alpha, max_depth) typically dominate capacity parameters (n_estimators), reflecting that overfitting to the training regime is the primary failure mode. The weight_scheme importance is particularly informative: a high importance suggests that the choice between uniqueness and return-attribution training makes a measurable difference for the specific instrument and strategy type.

Fig. 4 — Parameter Importances (fANOVA)

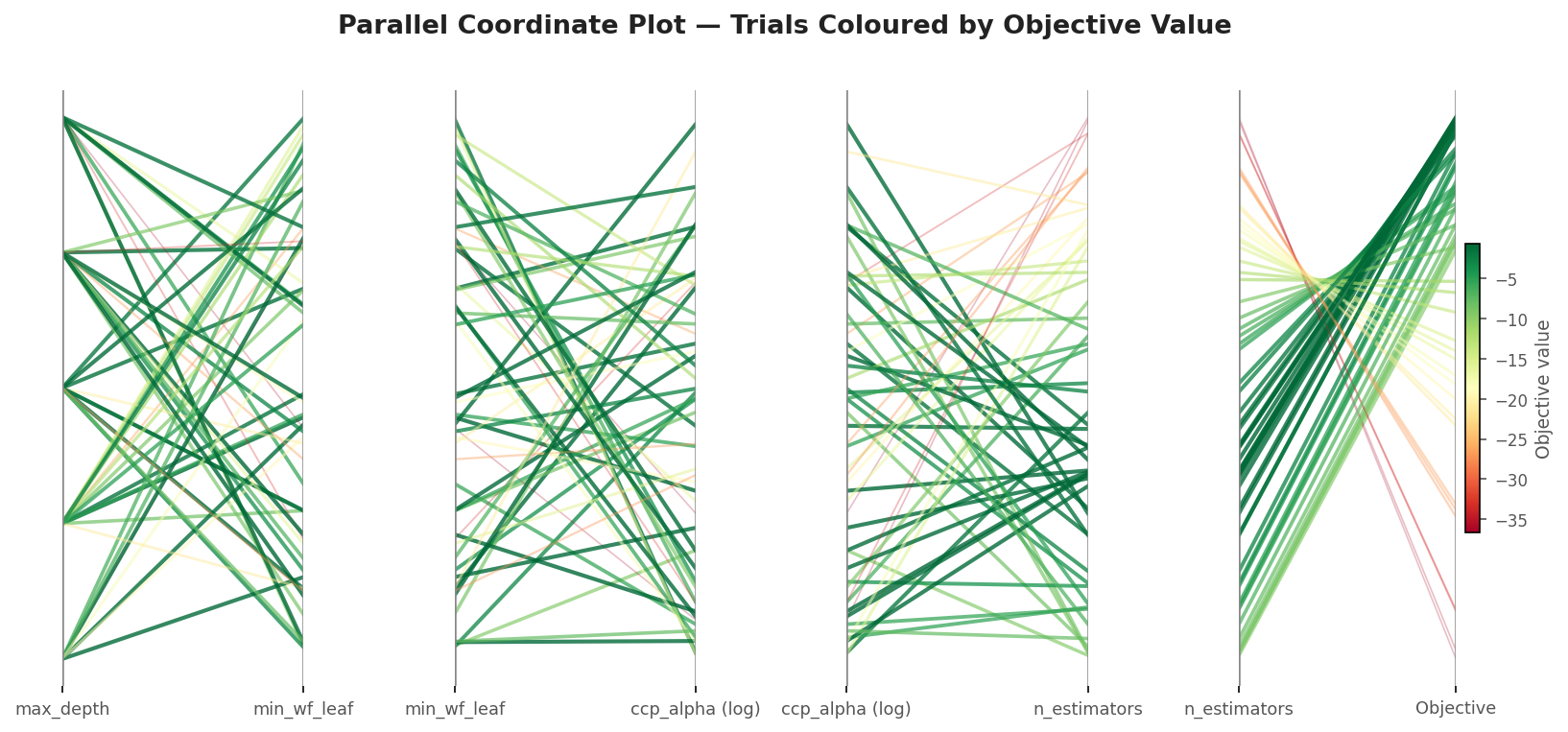

plot_parallel_coordinate(study)

Displays each trial as a line across parallel axes colored by objective value. High-performing trials (green) cluster in visible bands and reveal joint parameter interactions — for example, that a deep tree only improves performance when paired with strong cost-complexity pruning via ccp_alpha. This is the best plot for identifying which parameter combinations work together rather than individually.

Fig. 5 Parallel Coordinate Plots

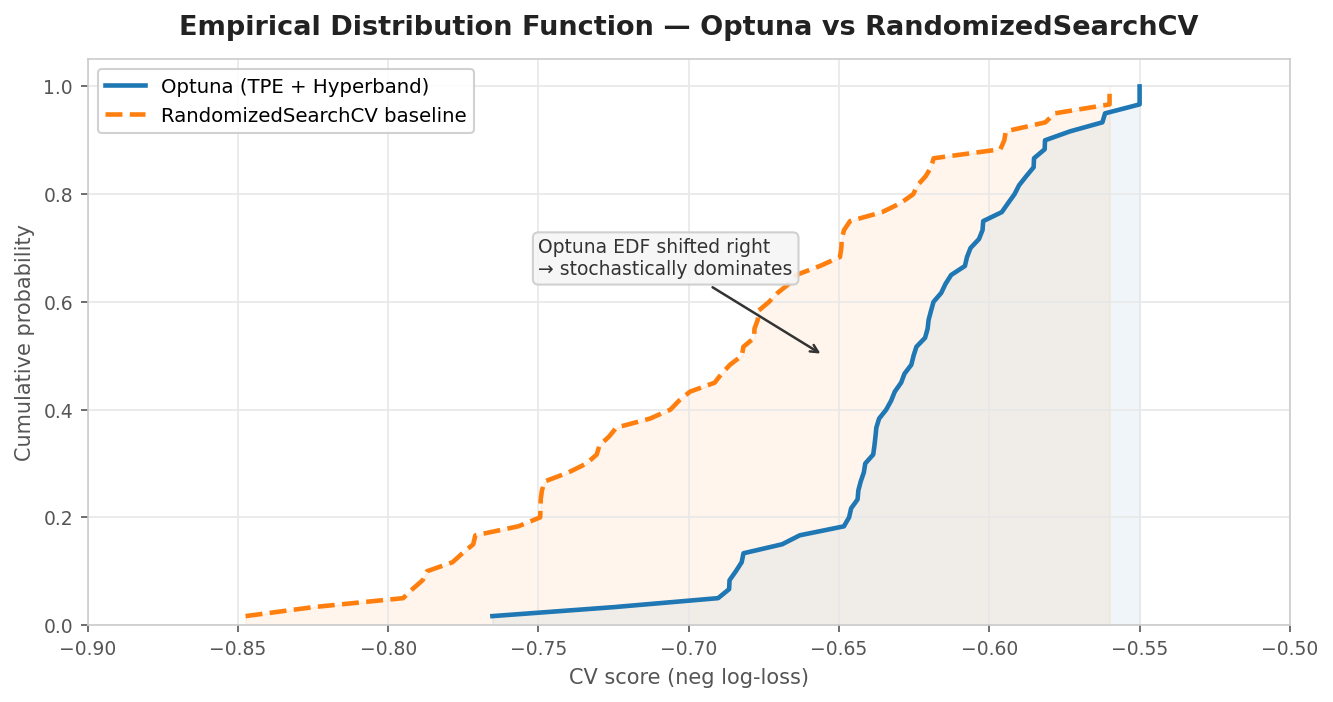

plot_edf([study_optuna, study_random])

Plots the empirical CDF of objective values across all trials for multiple studies. A study whose EDF is shifted right stochastically dominates the other across the full trial distribution. This is the correct way to demonstrate that Optuna outperforms RandomizedSearchCV at equal trial counts. A single best_score comparison is sensitive to lucky draws and should never be used as the sole benchmark — the EDF shows the full picture.

vis.plot_edf requires a list of optuna.Study objects. Since RandomizedSearchCV produces a cv_results_ dictionary rather than a study, a converter is needed. randomized_search_to_study wraps each row of cv_results_ in a completed trial built with optuna.trial.create_trial, using single-choice CategoricalDistribution stubs. plot_edf only reads trial.value, so the distribution type does not matter. The plot_edf_comparison convenience function handles the conversion and trace renaming in one call.

Fig. 6 — EDF Comparison: Optuna vs RandomizedSearchCV
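The "shifted right" reading of the EDF can be turned into an explicit check of first-order stochastic dominance. The sketch below compares two score samples on the grid of all observed values. The function names and the score lists are illustrative assumptions, and exact fractions are used to avoid floating-point ties.

```python
from fractions import Fraction


def edf(values, x):
    """Empirical CDF: share of trial scores less than or equal to x."""
    return Fraction(sum(v <= x for v in values), len(values))


def dominates(a, b):
    """True if sample `a` first-order stochastically dominates `b`.

    Higher scores are better, so `a` dominates when EDF_a(x) <= EDF_b(x)
    at every observed score, with strict inequality somewhere.
    """
    grid = sorted(set(a) | set(b))
    diffs = [edf(b, x) - edf(a, x) for x in grid]
    return all(d >= 0 for d in diffs) and any(d > 0 for d in diffs)


optuna_scores = [-0.66, -0.63, -0.61, -0.60, -0.59]  # illustrative numbers
random_scores = [-0.72, -0.69, -0.65, -0.64, -0.60]  # illustrative numbers

print(dominates(optuna_scores, random_scores))  # True
print(dominates(random_scores, optuna_scores))  # False
```

When the two curves cross, neither sample dominates and both calls return False, which is itself a useful result. It is also worth checking how each sample was filtered: if pruned trials are dropped from one study only, the comparison can flatter that study.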

```python
import optuna
import optuna.visualization as vis
import pandas as pd
from optuna.distributions import CategoricalDistribution

fig = vis.plot_optimization_history(study)
fig.show()

fig = vis.plot_intermediate_values(study)  # primary HyperbandPruner diagnostic
fig.show()

fig = vis.plot_param_importances(study)  # weight_scheme importance is informative
fig.show()

fig = vis.plot_parallel_coordinate(study)
fig.show()


def randomized_search_to_study(
    gs,
    study_name: str = "randomized_search_baseline",
) -> optuna.Study:
    """
    Convert a fitted RandomizedSearchCV into an Optuna Study for use
    with vis.plot_edf.

    vis.plot_edf requires optuna.Study objects and reads only trial.value.
    CategoricalDistribution stubs satisfy the API without affecting the plot.
    NaN mean_test_score rows (failed fits) are skipped.
    """
    study = optuna.create_study(direction="maximize", study_name=study_name)
    cv_df = pd.DataFrame(gs.cv_results_)
    param_cols = [c for c in cv_df.columns if c.startswith("param_")]

    for _, row in cv_df.iterrows():
        score = row["mean_test_score"]
        if pd.isna(score):
            continue

        params = {}
        for col in param_cols:
            val = row[col]
            # Skip NaN params from conditional search spaces
            if isinstance(val, str) or not pd.isna(val):
                params[col[len("param_"):]] = val  # strip only the prefix

        distributions = {
            k: CategoricalDistribution([v]) for k, v in params.items()
        }
        trial = optuna.trial.create_trial(
            params=params,
            distributions=distributions,
            value=float(score),
        )
        study.add_trial(trial)

    return study


def plot_edf_comparison(study_optuna, gs_random):
    """
    Compare an Optuna study against a fitted RandomizedSearchCV via EDF.
    Handles the conversion and trace renaming in one call.
    """
    study_random = randomized_search_to_study(gs_random)
    fig = vis.plot_edf([study_optuna, study_random])

    # Traces are named after the studies; give them readable legend labels
    for trace in fig.data:
        if "random" in trace.name.lower():
            trace.name = "RandomizedSearchCV"
        else:
            trace.name = f"Optuna ({study_optuna.study_name})"

    fig.update_layout(
        title="EDF Comparison — Optuna (TPE + Hyperband) vs RandomizedSearchCV"
    )
    return fig


# Usage:
# study, cv_results = optimize_trading_model(...)
# gs = RandomizedSearchCV(...); gs.fit(X, y, sample_weight=events['w'])
# fig = plot_edf_comparison(study, gs)
# fig.show()
```

10. Practical Considerations

Fix the random seed in the base estimator. Without it, performance differences between trials reflect randomness rather than hyperparameter quality. optimize_trading_model sets random_state on the classifier before the study begins. This is especially important for the comparison between trials that differ only in weight_scheme — the difference must be attributable to the weight scheme, not to random forest stochasticity.

Use informed search space bounds. Ranges like n_estimators in [1, 10000] waste early trials on extreme values. The get_search_space factory provides curated distributions as a starting point. Widen the range only if plot_contour shows that the best region is at the boundary of the current range.

Use log scale for multiplicative parameters. learning_rate and ccp_alpha span orders of magnitude. FinancialModelSuggester handles this automatically by checking dist.dist.name for loguniform and reciprocal distributions and passing log=True to trial.suggest_float.
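A short calculation shows why the scale matters. The range below is an illustrative ccp_alpha-style interval chosen for the example, not the bounds used by get_search_space. The function computes the probability that a single draw lands in the lower two decades of the range under linear and under logarithmic sampling.

```python
import math


def share_below(cut, low, high, log=False):
    """Probability that a draw from [low, high] falls below `cut`."""
    if log:
        return (math.log(cut) - math.log(low)) / (math.log(high) - math.log(low))
    return (cut - low) / (high - low)


low, high, cut = 1e-5, 1e-1, 1e-3  # illustrative range spanning four decades

linear = share_below(cut, low, high)                 # about 0.0099
logarithmic = share_below(cut, low, high, log=True)  # 0.5: two of four decades
print(f"{linear:.4f} {logarithmic:.2f}")
```

Under linear sampling roughly one trial in a hundred explores values below 1e-3, so the sampler sees almost no evidence about the small-regularization region. Log sampling spends half of the trials there.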

Allow at least one grace fold before pruning. Financial CV folds cover different market regimes. TradingModelPruner enforces n_warmup_steps=2 before applying variance-based rules. HyperbandPruner's conservative bracket provides the same protection structurally — at least one trial always runs to completion per bracket.
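To see where Hyperband can first stop a trial with the settings used in optimize_trading_model (min_resource=1, max_resource=n_splits=5, reduction_factor=3), the rung schedule can be enumerated by hand. The sketch below assumes the standard schedule in which bracket b begins checking at min_resource * reduction_factor**b and multiplies by reduction_factor at each rung. This is my reading of the algorithm rather than code taken from Optuna, so it is worth verifying against the library documentation for the installed version.

```python
def hyperband_rungs(min_resource, max_resource, reduction_factor):
    """Map bracket id -> steps at which a promotion check happens.

    Assumed schedule: bracket b starts checking at
    min_resource * reduction_factor**b and multiplies by reduction_factor
    at each rung, stopping once the step exceeds max_resource.
    """
    brackets = {}
    b = 0
    while min_resource * reduction_factor**b <= max_resource:
        step = min_resource * reduction_factor**b
        rungs = []
        while step <= max_resource:
            rungs.append(step)
            step *= reduction_factor
        brackets[b] = rungs
        b += 1
    return brackets


schedule = hyperband_rungs(min_resource=1, max_resource=5, reduction_factor=3)
print(schedule)  # {0: [1, 3], 1: [3]}
```

Under this schedule, trials assigned to the first bracket can be stopped after fold 1, while trials in the second bracket are not evaluated for pruning before fold 3. The second bracket therefore gives those trials a built-in grace window across several market regimes.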

Interpret weight_scheme importance carefully. If plot_param_importances shows weight_scheme as the most important parameter, it does not necessarily mean the scheme matters most for model quality — it may mean that the other hyperparameters were already well-constrained by prior knowledge and the search space needs refinement.

Conclusion

Running optimize_trading_model yields a reproducible Optuna study and three immediate, auditable artifacts: the Study itself (persisted to SQLite, resumable via load_if_exists=True, and compatible with parallel workers); a refit best_estimator_ (_WeightedEstimator wrapping a tuned base model, ready for prediction); and a scikit‑learn‑compatible cv_results_ DataFrame with per‑fold scores and fold‑stability metadata, suitable for the same downstream diagnostics used throughout the pipeline. The practical benefits close the financial bottlenecks identified in the introduction: early pruning (HyperbandPruner or, for large studies with calibrated baselines, TradingModelPruner) materially reduces total compute by terminating hopeless trials after one or a few folds; persistent SQLite storage and the caching architecture from Part 6 let long experiments resume without redoing expensive preprocessing; and the split handling of training versus scoring weights ensures economic significance is respected in both model fitting and evaluation.

Key design takeaways you can act on immediately:

- Use PurgedKFold as the only inner CV for triple‑barrier labels and always report fold‑level scores for pruning — CPCV is a backtesting tool and produces no scalar output suitable for ranking hyperparameter combinations.

- Prefer HyperbandPruner as the default for small‑to‑moderate studies (fewer than ~30 trials) or first studies on unknown instruments; use TradingModelPruner when you have a large trial budget (100+) and a calibrated economic baseline.

- Treat the training weight scheme (unweighted, uniqueness, return) as a hyperparameter and always use return‑attribution weights (events['w']) for scoring, following the dual‑weight convention from Part 5.

- Fix random seeds, use informed search bounds (log scale for multiplicative parameters like ccp_alpha and learning_rate), and allow a grace window before pruning to respect regime heterogeneity across folds.

Finally, respect the boundary: Optuna's directed search is the right tool for statistical HPO on labelled data — it finds the optimum reliably because the objective (cross‑validated log‑loss on triple‑barrier labels) is a valid measure of generalisation. It is not a safe substitute for strategy optimization on historical P&L, where that same reliability makes it more dangerous than random search, not less. Part 8.2 will demonstrate integrating this HPO contour into the production pipeline via the clf_hyper_fit wrapper, connecting it to the existing caching infrastructure, and the ONNX export path for deployment in MetaTrader 5.

Attached Files

The table below describes each file attached to this article and its role in the HPO system. Files from the production module and cross-validation module are shared with Part 7; files prefixed optuna_hyper_fit are introduced here.

| # | File | Module | Role in this article | Key dependencies |
|---|---|---|---|---|
| 1. | optuna_hyper_fit.py | afml.cross_validation | Main HPO module. Contains FinancialModelSuggester, optimize_trading_model_with_pruning, TradingModelPruner, optimize_trading_model, optuna_to_cv_results, and the EDF comparison utilities introduced in this article. | cross_validation.py (PurgedKFold), model_development.py (_WeightedEstimator), optuna ≥ 3.0, scikit-learn ≥ 1.3, loguru |
| 2. | model_development.py | afml.production | Provides _WeightedEstimator, the sklearn-compatible wrapper that computes and applies training sample weights (uniqueness or return-attribution) internally so the objective function loop does not need to handle them explicitly. Also provides TickDataLoader and the broader pipeline infrastructure from Part 7. | utils.py (date_conversion), MetaTrader5 Python package, scikit-learn, pandas, numpy |
| 3. | utils.py | afml.production | Utility functions shared across the production module, including date conversion helpers used by TickDataLoader and data validation routines called at pipeline entry points. | pandas, numpy, MetaTrader5 Python package |
| 4. | cross_validation.py | afml.cross_validation | Provides PurgedKFold, the only valid inner CV splitter for financial HPO. Called directly inside optimize_trading_model_with_pruning with t1 from the events DataFrame and a 1% embargo. Also provides CombinatorialPurgedCV for the outer backtesting loop, which is distinct from HPO and not used inside this article's functions. | scikit-learn (BaseCrossValidator), pandas, numpy |
| 5. | hyper_fit_analysis.py | afml.cross_validation | Post-study analysis functions that consume the cv_results_ DataFrame produced by optuna_to_cv_results. Includes fold-level consistency plots, parameter interaction summaries, and the ml_cross_val_scores_all function from Part 5 that established the dual-weight scoring convention this article builds on. | cross_validation.py (PurgedKFold), pandas, numpy, scikit-learn, matplotlib |

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
