MetaTrader 5 Machine Learning Blueprint (Part 12): Probability Calibration for Financial Machine Learning

Table of Contents

- Why Classifiers Are Not Calibrated

- Calibration Metrics

- Calibration Methods

- The Financial Data Contract: Calibrating Without Leakage

- The afml.calibration Module

- The Diagnostic Pipeline

- Conclusion

- Attached Files

Introduction

The bet sizing methods in Part 10 accept a predicted probability and return a position size. The Kelly multiplier in Part 11 takes the same probability and adjusts the position for payoff asymmetry. Both calculations are only as good as the probability they receive.

A Random Forest that predicts a 0.68 probability when the true win rate is actually 0.55 is not just inaccurate. It is systematically overconfident, and that overconfidence flows straight into every sizing calculation that follows. The get_signal function maps the inflated probability to a larger z-score and therefore a larger position. The Kelly fraction grows with the predicted probability. Betting above the Kelly fraction lowers long-run geometric growth on every trade, and once the fraction exceeds roughly twice the optimum, expected log-growth turns negative, so the bankroll decays on trades it would have survived with correct sizing.

The symptoms only show up in the equity curve. A strategy that looks well-sized in backtesting with raw probabilities will systematically take positions that are larger than the evidence actually supports. This produces deeper drawdowns and lower geometric growth than a correctly calibrated version of the same strategy.

In this article, we cover the afml.calibration module and its role in the pipeline. You will learn (1) why tree-based classifiers tend to be overconfident and how this appears in a reliability diagram; (2) what Brier score, ECE, and MCE measure; (3) when to use isotonic regression versus Platt scaling; (4) how to calibrate without temporal leakage using out-of-fold predictions from PurgedKFold; and (5) how miscalibration propagates from probabilities to position sizes, P&L, and CPCV path Sharpe distributions.

This article is Part 12 of the MetaTrader 5 Machine Learning Blueprint series. Part 10 and Part 11 built the bet sizing toolkit that this article's calibration step feeds into. The Unified Validation Pipeline article established PurgedKFold as the correct CV splitter and CPCV as the correct backtesting framework — both are used here for calibration fitting and for the final diagnostic figures. Part 8 and Part 9 built the HPO infrastructure that produced the model whose output this article calibrates.

Why Classifiers Are Not Calibrated

The Overconfidence Problem

A classifier is well-calibrated if, among all observations it assigns a predicted probability of 0.70 to, roughly 70% are actually in the positive class. The predicted probability and the observed frequency agree. This property is called calibration, and it is distinct from accuracy. A classifier can rank observations correctly while still being systematically overconfident or underconfident in the absolute probability values it assigns.

Tree-based classifiers such as Random Forests and gradient-boosted trees are structurally prone to overconfidence. The reason is in how they generate probability estimates. A Random Forest averages the vote fractions across its constituent trees. Each tree, trained to minimize impurity, tends to produce leaf-level estimates that cluster near 0 and 1. The ensemble average of these extreme estimates ends up biased toward the tails. A true win rate of 0.55 often gets mapped to a predicted probability of 0.65 or 0.70. Gradient-boosted trees have a related but slightly different issue. They optimize a log-odds objective, which is calibrated in log-odds space rather than probability space. The final sigmoid transform then produces probabilities that are too extreme when the number of boosting rounds is large relative to the actual signal in the data.

In financial machine learning, this problem gets worse. The signal-to-noise ratio is low, models are trained on limited independent observations because label concurrency reduces the effective sample size, and out-of-sample accuracy usually sits in the 0.52–0.65 range where the gap between predicted and true probability is widest. A model with genuine but modest edge (accuracy around 0.57) frequently assigns probabilities in the 0.60–0.80 range, implying much stronger confidence than the data actually supports.

The Reliability Diagram

The reliability diagram makes this overconfidence visible. The x-axis shows predicted probability, grouped into bins. The y-axis shows the observed fraction of positive outcomes in each bin. A perfectly calibrated classifier produces a straight diagonal line from (0,0) to (1,1). An overconfident classifier produces a curve that bows below the diagonal. Observations assigned a predicted probability of 0.70 actually win closer to 55% of the time. The gap between the diagonal and the calibration curve is a direct visualization of how much confidence the model claims beyond what the data supports.

Bootstrap confidence intervals around the curve are essential in financial applications. With a small number of independent observations due to label concurrency, the reliability curve is noisy, and a single point estimate of calibration quality is unreliable. The bootstrap_reliability_ci function generates 95% confidence bands by resampling the observation-prediction pairs with replacement. This provides a statistically honest picture of where the calibration curve is well-estimated and where it is not.
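The binning and resampling described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the module's bootstrap_reliability_ci: the function names reliability_curve and bootstrap_band, the uniform binning, and the synthetic "stretched" probabilities are all assumptions made for the example.

```python
import numpy as np

def reliability_curve(y_true, y_prob, n_bins=10):
    """Mean predicted probability, observed frequency and count per uniform bin."""
    y_true = np.asarray(y_true, dtype=float)
    y_prob = np.asarray(y_prob, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Interior edges only, so p == 1.0 lands in the last bin.
    idx = np.digitize(y_prob, edges[1:-1])
    counts = np.bincount(idx, minlength=n_bins)
    mean_pred = np.full(n_bins, np.nan)
    obs_freq = np.full(n_bins, np.nan)
    for b in range(n_bins):
        mask = idx == b
        if mask.any():
            mean_pred[b] = y_prob[mask].mean()
            obs_freq[b] = y_true[mask].mean()
    return mean_pred, obs_freq, counts

def bootstrap_band(y_true, y_prob, n_bins=10, n_boot=200, alpha=0.05, seed=0):
    """Percentile band for the observed frequency in each bin."""
    rng = np.random.default_rng(seed)
    y_true = np.asarray(y_true, dtype=float)
    y_prob = np.asarray(y_prob, dtype=float)
    n = len(y_true)
    curves = np.full((n_boot, n_bins), np.nan)
    for i in range(n_boot):
        sample = rng.integers(0, n, size=n)  # resample pairs with replacement
        _, curves[i], _ = reliability_curve(y_true[sample], y_prob[sample], n_bins)
    lower = np.full(n_bins, np.nan)
    upper = np.full(n_bins, np.nan)
    for b in range(n_bins):
        col = curves[:, b]
        col = col[~np.isnan(col)]  # skip replicates where the bin was empty
        if col.size:
            lower[b], upper[b] = np.percentile(
                col, [100 * alpha / 2, 100 * (1 - alpha / 2)]
            )
    return lower, upper

# Synthetic overconfident model: raw probabilities are stretched away from 0.5.
rng = np.random.default_rng(42)
true_p = rng.uniform(0.40, 0.65, size=2000)
y = (rng.uniform(size=2000) < true_p).astype(float)
p_raw = np.clip(0.5 + 2.0 * (true_p - 0.5), 0.0, 1.0)

mean_pred, obs_freq, counts = reliability_curve(y, p_raw)
lower, upper = bootstrap_band(y, p_raw)
for b in np.flatnonzero(counts):
    print(f"bin {b}: predicted={mean_pred[b]:.3f} observed={obs_freq[b]:.3f} "
          f"band=[{lower[b]:.3f}, {upper[b]:.3f}] n={counts[b]}")
```

Note that resampling individual pairs treats observations as independent. With overlapping labels the resulting bands are probably too narrow, so they are best read as a lower bound on the true uncertainty.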

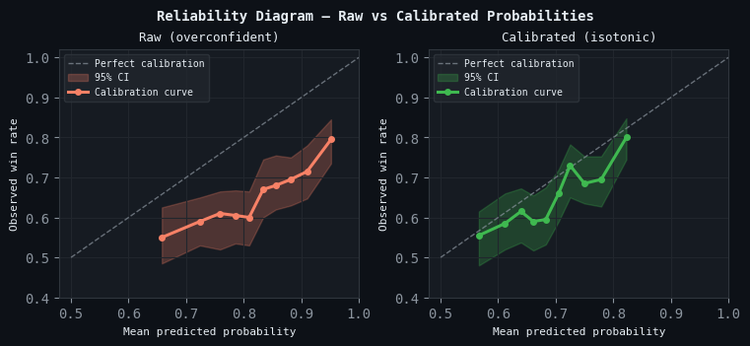

Figure 1. 2-panel illustration of calibration reliability before and after isotonic calibration

- Left — Raw probabilities: The Random Forest calibration curve bows below the diagonal throughout. Observations assigned a predicted probability of 0.70 achieve an actual win rate closer to 0.55. Bootstrap 95% confidence bands are shown as a shaded region around the curve.

- Right — Calibrated probabilities (isotonic): After isotonic calibration using out-of-fold predictions through PurgedKFold, the curve tracks the diagonal closely at all probability levels. The confidence bands narrow in the moderate-probability range where observations are densest.

Calibration Metrics

Three metrics quantify different aspects of calibration quality. They complement each other. A model can score well on one while failing on another.

Brier Score

The Brier score is the mean squared error between predicted probabilities and binary outcomes:

Brier = (1/N) * sum((p_i - y_i)**2)

It rewards sharpness (probabilities close to 0 or 1) and penalizes miscalibration. A model that predicts 0.5 for every observation scores exactly 0.25, whatever the class balance. A model that is both well-calibrated and confident achieves a Brier score below that baseline. The Brier score decomposes into a reliability (calibration) term, a resolution term, and an uncertainty term that depends only on the base rate. Isotonic calibration directly improves the reliability term while leaving resolution essentially unchanged, apart from ties created when neighbouring predictions are pooled.
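A small worked example makes the decomposition concrete. The sketch below uses the standard Murphy decomposition (Brier = reliability - resolution + uncertainty), grouping observations by distinct forecast value so the identity holds exactly. The function names and the ten-observation toy data are illustrative assumptions, not part of afml.calibration.

```python
import numpy as np

def brier_score(y_true, y_prob):
    y_true = np.asarray(y_true, dtype=float)
    y_prob = np.asarray(y_prob, dtype=float)
    return float(np.mean((y_prob - y_true) ** 2))

def murphy_decomposition(y_true, y_prob):
    """Return (reliability, resolution, uncertainty), grouping by forecast value."""
    y_true = np.asarray(y_true, dtype=float)
    y_prob = np.asarray(y_prob, dtype=float)
    base_rate = y_true.mean()
    reliability = 0.0
    resolution = 0.0
    for p in np.unique(y_prob):
        mask = y_prob == p
        weight = mask.mean()          # share of observations with this forecast
        freq = y_true[mask].mean()    # observed win rate for this forecast
        reliability += weight * (p - freq) ** 2
        resolution += weight * (freq - base_rate) ** 2
    uncertainty = base_rate * (1.0 - base_rate)
    return reliability, resolution, uncertainty

# Toy data: the model says 0.7 / 0.3, the realised win rates are 0.6 / 0.4.
y = np.array([1, 0, 1, 1, 0, 0, 1, 0, 1, 0])
p_raw = np.array([0.7] * 5 + [0.3] * 5)
p_cal = np.array([0.6] * 5 + [0.4] * 5)   # what a perfect calibrator would output

for name, p in [("raw", p_raw), ("calibrated", p_cal)]:
    rel, res, unc = murphy_decomposition(y, p)
    print(f"{name}: brier={brier_score(y, p):.4f} "
          f"reliability={rel:.4f} resolution={res:.4f} uncertainty={unc:.4f}")
```

In this toy case the overconfident forecasts score 0.25, no better than a constant 0.5, while the calibrated forecasts score 0.24. The whole improvement comes from the reliability term; resolution stays at 0.01 in both cases.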

Expected Calibration Error

ECE measures the bin-size-weighted average of the absolute difference between the mean predicted probability and the observed frequency in each bin:

ECE = sum_b (|B_b| / N) * |mean_pred_b - mean_true_b|

where B_b is the set of observations in bin b. ECE is weighted by bin size, so bins with more observations contribute more to the score. For financial applications, quantile binning is often preferable to uniform binning. The predicted probability distribution is typically concentrated near the base rate, and uniform bins leave most bins empty while crowding everything into two or three central bins.

Maximum Calibration Error

MCE measures the worst-case absolute difference across all bins:

MCE = max_b |mean_pred_b - mean_true_b|

MCE is a conservative metric. It captures the worst region of the probability range rather than the average. In financial applications, the worst-performing probability region is usually the high-confidence tail, where the model assigns 0.75–0.90 but delivers win rates of 0.58–0.65. This is exactly the region that produces the largest position sizes through get_signal and the highest Kelly fractions. MCE flags problems in this tail that ECE may obscure through averaging.
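The two formulas above share the same per-bin gap and differ only in how they aggregate it. The following sketch computes both with either uniform or quantile bins. It is an illustration under stated assumptions: the function name calibration_errors and its strategy argument are hypothetical and may not match the signatures of expected_calibration_error and maximum_calibration_error in the module.

```python
import numpy as np

def calibration_errors(y_true, y_prob, n_bins=10, strategy="uniform"):
    """Return (ECE, MCE) using uniform or quantile bin edges."""
    y_true = np.asarray(y_true, dtype=float)
    y_prob = np.asarray(y_prob, dtype=float)
    if strategy == "quantile":
        edges = np.quantile(y_prob, np.linspace(0.0, 1.0, n_bins + 1))
    else:
        edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Bin index per observation; the maximum value is clipped into the last bin.
    idx = np.clip(np.searchsorted(edges, y_prob, side="right") - 1, 0, n_bins - 1)
    ece = 0.0
    mce = 0.0
    for b in range(n_bins):
        mask = idx == b
        if not mask.any():
            continue  # empty bins contribute nothing
        gap = abs(y_prob[mask].mean() - y_true[mask].mean())
        ece += mask.mean() * gap   # weighted by the bin's share of observations
        mce = max(mce, gap)        # worst bin, regardless of its size
    return ece, mce

# 90 trades near the base rate that are well calibrated, plus a small
# high-confidence tail of 10 trades that wins only 60% of the time.
y_prob = np.array([0.55] * 90 + [0.85] * 10)
y_true = np.array([1] * 50 + [0] * 40 + [1] * 6 + [0] * 4)

ece, mce = calibration_errors(y_true, y_prob)
print(f"ECE={ece:.3f}  MCE={mce:.3f}")
```

Here the average gap is about 0.03 while the worst bin is off by 0.25. The tail holds only 10% of the observations, so it barely moves ECE, yet it is the region that would receive the largest positions.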

Using the Metrics Together

The three metrics answer different questions. Brier score answers whether the model's probability output is useful for decision-making overall. ECE answers how far off the probabilities are from the observed frequencies on average. MCE answers what the worst-case probability region is and how badly it is miscalibrated. A production pipeline should monitor all three. A model with good ECE but poor MCE has a specific region of overconfidence that will produce badly-sized positions when it fires. A model with good MCE but poor Brier score is well-calibrated but unsharp: its probabilities are accurate but uninformative.

Calibration Methods

Isotonic Regression

Isotonic regression fits a non-decreasing step function mapping raw predicted probabilities to calibrated probabilities. It minimizes the sum of squared deviations between the step function and the true labels, subject to the constraint that the function is monotonically non-decreasing. The result is a piecewise-constant mapping that is fully non-parametric. It makes no assumption about the functional form of the miscalibration and can correct arbitrary calibration curves as long as they are monotone in the raw probabilities.

Isotonic regression is the preferred method for financial ML for three reasons. First, it makes no distributional assumption. The miscalibration structure of a Random Forest trained on financial data is not log-linear. Isotonic regression adapts to whatever shape the miscalibration takes. Second, it preserves the rank ordering of predictions. If observation A has a higher raw probability than observation B, its calibrated probability will be at least as high. Because the mapping is a step function, neighbouring raw values can be pooled into the same calibrated value, so ties are possible but reversals are not. The calibrator does not change which observations the model is most confident about; it only changes the absolute probability values. Third, the step function produced by isotonic regression is auditable. Every calibration point maps a specific raw probability range to a specific calibrated value, and that mapping can be inspected.

Isotonic regression requires more calibration data than Platt scaling to produce stable estimates. With fewer than a few hundred observations in the calibration set, the step function can become irregular and may overfit the calibration data. This is the primary practical limitation in financial applications where the effective sample size is reduced by label concurrency.
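To show what the fitted step function looks like, here is a compact pool-adjacent-violators (PAVA) sketch in NumPy. It is a teaching implementation under stated assumptions, not the code CalibratorCV runs: fit_isotonic and apply_isotonic are hypothetical names, weights are assumed strictly positive, and prediction between knots uses linear interpolation with clamping at the ends.

```python
import numpy as np

def fit_isotonic(raw_prob, y_true, sample_weight=None):
    """Weighted PAVA. Returns (knots, fitted) with fitted non-decreasing."""
    x = np.asarray(raw_prob, dtype=float)
    y = np.asarray(y_true, dtype=float)
    w = np.ones_like(x) if sample_weight is None else np.asarray(sample_weight, dtype=float)
    # Pool observations that share the same raw probability.
    knots, inverse = np.unique(x, return_inverse=True)
    weight_sum = np.bincount(inverse, weights=w)
    label_sum = np.bincount(inverse, weights=w * y)
    blocks = []  # each block: [weighted label sum, weight sum, number of knots]
    for s, n in zip(label_sum, weight_sum):
        blocks.append([s, n, 1])
        # Merge backwards while the previous block's mean exceeds the current one.
        while len(blocks) > 1 and blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]:
            s2, n2, k2 = blocks.pop()
            blocks[-1][0] += s2
            blocks[-1][1] += n2
            blocks[-1][2] += k2
    fitted = np.concatenate([np.full(k, s / n) for s, n, k in blocks])
    return knots, fitted

def apply_isotonic(knots, fitted, raw_prob):
    """Map new raw probabilities; values outside the knot range are clamped."""
    return np.interp(raw_prob, knots, fitted)

raw = np.array([0.1, 0.2, 0.3, 0.4, 0.5, 0.6])
wins = np.array([0, 1, 0, 1, 1, 1])
knots, fitted = fit_isotonic(raw, wins)
print(dict(zip(knots.tolist(), fitted.tolist())))
print(apply_isotonic(knots, fitted, np.array([0.05, 0.25, 0.35])))
```

The raw values 0.2 and 0.3 violate monotonicity (a win followed by a loss), so they are pooled to a shared value of 0.5. This is the tie behaviour mentioned above, and with only a handful of observations per block it is also where the irregular, overfit steps come from.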

Platt Scaling

Platt scaling fits a logistic regression to the raw scores, mapping them to calibrated probabilities via a sigmoid transform:

p_calibrated = 1 / (1 + exp(-(A * score + B)))

where A and B are fitted by maximum likelihood on the calibration set. Platt scaling assumes the miscalibration is log-linear, meaning the log-odds of the true probability are a linear function of the raw score. This assumption works reasonably for Support Vector Machines and some gradient boosted trees, but it is a poor fit for Random Forest vote fractions. For a Random Forest whose calibration curve deviates from the diagonal in a shape that a single two-parameter sigmoid cannot match, Platt scaling will correct the middle range but leave the tails partially miscalibrated.

Platt scaling should be preferred over isotonic regression only when the calibration dataset is small (fewer than 200 observations) and the miscalibration is approximately log-linear. In all other cases encountered in financial ML, isotonic regression produces a more accurate and more interpretable calibrator.
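For comparison with the isotonic approach, the two-parameter fit can be written out directly. The sketch below estimates A and B by Newton-Raphson on the logistic log-likelihood. The names fit_platt and apply_platt are hypothetical, the tiny ridge term is an added numerical safeguard, and the module's fit_platt_scaling may well be implemented differently (for example via sklearn's LogisticRegression).

```python
import numpy as np

def fit_platt(scores, y_true, n_iter=50, ridge=1e-8):
    """Maximum-likelihood fit of p = sigmoid(A * score + B). Returns (A, B)."""
    s = np.asarray(scores, dtype=float)
    y = np.asarray(y_true, dtype=float)
    X = np.column_stack([s, np.ones_like(s)])  # columns: score, intercept
    theta = np.zeros(2)                        # theta = (A, B)
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-X @ theta))
        grad = X.T @ (p - y)
        # Hessian of the negative log-likelihood, with a small ridge for stability.
        hess = X.T @ (X * (p * (1.0 - p))[:, None]) + ridge * np.eye(2)
        step = np.linalg.solve(hess, grad)
        theta -= step
        if np.max(np.abs(step)) < 1e-10:
            break
    return float(theta[0]), float(theta[1])

def apply_platt(scores, A, B):
    s = np.asarray(scores, dtype=float)
    return 1.0 / (1.0 + np.exp(-(A * s + B)))

# Toy data: raw scores of 0.3 / 0.7 with realised win rates of 0.4 / 0.6.
scores = np.array([0.3] * 10 + [0.7] * 10)
wins = np.array([1] * 4 + [0] * 6 + [1] * 6 + [0] * 4)

A, B = fit_platt(scores, wins)
print(f"A={A:.4f}  B={B:.4f}")
print(apply_platt(np.array([0.3, 0.7]), A, B))
```

With only two distinct score values the sigmoid can match both win rates exactly, which is why the calibrated outputs land on 0.4 and 0.6. With many distinct scores the two parameters impose a single global shape, which is the source of both the low variance and the tail misfit discussed above.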

Comparing the Two Methods

| | Isotonic Regression | Platt Scaling |
|---|---|---|
| Functional form | Non-parametric step function | Logistic (sigmoid) |
| Assumption | Monotone raw probabilities | Log-linear miscalibration |
| Minimum calibration data | Several hundred observations | Tens of observations |
| Preferred for Random Forest | Yes | No |
| Rank-ordering preserved | Yes | Yes |
| Overfitting risk | Moderate (step function can be noisy) | Low (only two parameters) |

The Financial Data Contract: Calibrating Without Leakage

Why Standard Calibration Procedures Fail on Financial Data

The standard calibration procedure (train the model on a training set, generate predictions on a separate calibration set, fit the calibrator on those predictions) assumes that the calibration set is drawn from the same distribution as the training data but is independent of it. In financial data with overlapping triple-barrier labels, this assumption fails in two ways.

First, a random train/calibration split leaks information across the purge boundary. Labels that span the split point are attributed to both sides. The training set contains some bars of the label's holding period, and the calibration set contains others. The model has already seen the market conditions that will determine whether the calibration-set prediction is correct. Calibration fitted on these predictions will be optimistically biased.

Second, a time-ordered split on its own applies neither purging nor an embargo. The last observations in the training set share return attribution with the first observations in the calibration set. Unless overlapping labels are purged and a sufficient embargo is applied at the boundary, the calibration set is contaminated by events the model implicitly learned through those overlapping labels.

The Correct Procedure: Out-of-Fold Calibration Through PurgedKFold

The correct approach uses out-of-fold (OOF) predictions from PurgedKFold. For each fold, train on the training split, predict on the validation split, and concatenate the validation predictions into a full-length OOF array. This OOF array has three properties. (1) Each prediction is produced by a model that was not trained on that observation. (2) Purging prevents label leakage across fold boundaries. (3) The embargo reduces temporal contamination from adjacent bars. The calibrator is then fit on these OOF predictions against the true labels.

```python
from afml.calibration.calibration import CalibratorCV
from afml.cross_validation.cross_validation import PurgedKFold

cv = PurgedKFold(n_splits=5, t1=events['t1'], pct_embargo=0.01)

# Wrap the base classifier: CalibratorCV is itself an sklearn estimator
calibrated_clf = CalibratorCV(estimator=clf, cv=cv)

# fit() does three things in sequence:
#   1. generates OOF predictions via PurgedKFold
#   2. fits a calibration map (isotonic by default; Platt via method='platt')
#   3. refits the base estimator on the full dataset
calibrated_clf.fit(X, y, sample_weight=sample_weight)

# OOF probabilities are stored for diagnostics (used in The Diagnostic Pipeline)
oof_probs_raw = calibrated_clf.oof_probs_

# At inference time: predict_proba applies the fitted calibration map automatically
calibrated_probs = calibrated_clf.predict_proba(X_new)[:, 1]
```
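To make the purge and embargo logic tangible, the following is a simplified, self-contained sketch of how OOF predictions can be assembled. It is not the PurgedKFold implementation from the series. It assumes integer positions instead of timestamps, an embargo expressed as a number of observations rather than a fraction, and a stand-in "model" that simply predicts the training base rate; purged_kfold_indices and oof_predictions are hypothetical names.

```python
import numpy as np

def purged_kfold_indices(t1, n_splits=5, embargo=0):
    """Yield (train_idx, test_idx). t1[i] is the position where label i ends."""
    t1 = np.asarray(t1)
    positions = np.arange(len(t1))
    for test_idx in np.array_split(positions, n_splits):
        start = test_idx[0]
        end = t1[test_idx].max()   # last bar touched by any test label
        # Purge: drop labels whose span [i, t1[i]] overlaps the test span.
        overlaps = (positions <= end) & (t1 >= start)
        # Embargo: also drop the observations immediately after the test span.
        embargoed = (positions > end) & (positions <= end + embargo)
        yield positions[~overlaps & ~embargoed], test_idx

def oof_predictions(n_obs, t1, fit_predict, n_splits=5, embargo=0):
    """Concatenate per-fold validation predictions into one full-length array."""
    oof = np.full(n_obs, np.nan)
    for train_idx, test_idx in purged_kfold_indices(t1, n_splits, embargo):
        oof[test_idx] = fit_predict(train_idx, test_idx)
    return oof

# 20 observations, each label spanning the next 3 bars.
n = 20
t1 = np.minimum(np.arange(n) + 3, n - 1)
y = (np.arange(n) % 3 == 0).astype(float)

# Stand-in model: predict the base rate of the purged training set.
base_rate_model = lambda train_idx, test_idx: np.full(len(test_idx), y[train_idx].mean())
oof = oof_predictions(n, t1, base_rate_model, n_splits=4, embargo=1)

for train_idx, test_idx in purged_kfold_indices(t1, n_splits=4, embargo=1):
    print(f"test {test_idx[0]:2d}-{test_idx[-1]:2d}  train {train_idx.tolist()}")
```

For the second fold (test positions 5 to 9) the training set shrinks to positions 0, 1 and 14 to 19: positions 2 to 4 are purged because their labels run into the test window, 10 to 12 because the test labels run into them, and 13 is embargoed. A calibrator would then be fit on oof against y.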

Sample Weights in Calibration

CalibratorCV passes sample_weight to both the base estimator's fit() call and to the calibration map's fit(). The weighted calibration fit matters for financial data because the OOF observations are not equally informative. An observation from a high-uniqueness bar and one from a heavily-overlapping label should not contribute equally to the calibration curve. Passing the same AFML-style uniqueness or time-decay weights that were used for model training ensures the calibration curve is fitted on the same information weighting as the model itself. If sample_weight is omitted, the calibrator treats all OOF observations as equally informative, which is internally inconsistent with a weighted base classifier.

The afml.calibration Module

The module has two layers. The primary interface is CalibratorCV, an sklearn-compatible estimator. It (1) generates OOF predictions via PurgedKFold, (2) fits a calibration map (isotonic by default; Platt via method='platt'), and (3) refits the base estimator on the full dataset. Because it implements the standard estimator interface, it is composable with any sklearn pipeline, grid search, or cross-validation loop. The metrics and visualization layer provides standalone functions — brier_score, expected_calibration_error, maximum_calibration_error, calibration_report, plot_reliability, and plot_reliability_with_ci — that accept numpy arrays and return scalar values or figures. fit_platt_scaling is available as a low-level constructor for Platt scaling when called outside the CalibratorCV interface.

```python
from afml.calibration.calibration import (
    CalibratorCV,
    brier_score,
    expected_calibration_error,
    maximum_calibration_error,
    calibration_report,
    plot_reliability_with_ci,
)
from afml.cross_validation.cross_validation import PurgedKFold
import matplotlib.pyplot as plt

cv = PurgedKFold(n_splits=5, t1=events['t1'], pct_embargo=0.01)

# Step 1: Fit (OOF generation, calibration map fitting, and base refit in one call)
calibrated_clf = CalibratorCV(estimator=clf, cv=cv)
calibrated_clf.fit(X, y, sample_weight=sample_weight)

# Step 2: Collect raw OOF probs (stored by fit()) and calibrated equivalents.
# For method='isotonic' (default), calibrator_ is an IsotonicRegression: use .predict().
# For method='platt', calibrator_ is a LogisticRegression:
#   use .predict_proba(raw.reshape(-1, 1))[:, 1].
oof_probs_raw = calibrated_clf.oof_probs_
oof_probs_cal = calibrated_clf.calibrator_.predict(oof_probs_raw)

# Step 3: Evaluate
report = calibration_report(y, oof_probs_raw, oof_probs_cal)
print(report)

# Step 4: Plot reliability diagram with bootstrap CI
fig, axes = plt.subplots(1, 2, figsize=(14, 6))
plot_reliability_with_ci(y, oof_probs_raw, ax=axes[0],
                         title="Raw Probabilities", random_state=42)
plot_reliability_with_ci(y, oof_probs_cal, ax=axes[1],
                         title="Calibrated Probabilities", random_state=42)
```

Pipeline Integration

Within the production pipeline established in Part 9, calibration is activated by passing calibrate=True to ModelDevelopmentPipeline.run(). After clf_hyper_fit returns the best estimator, calibrate_model() is called automatically. It constructs a CalibratorCV wrapping that estimator, using a PurgedKFold configured with the same n_splits and pct_embargo as the HPO step, then calls fit() on the full training dataset. From that point, self.best_model is the fitted CalibratorCV instance, so all downstream calls to predict_proba() return calibrated probabilities with no change to the calling code.

```python
import numpy as np
from afml.calibration.calibration import plot_reliability_with_ci

# Run the full pipeline with calibration enabled
model, features, metrics, config = pipeline.run(
    calibrate=True,
)

# pipeline.calibrator holds the fitted CalibratorCV for post-hoc diagnostics.
# Mask out observations that never received an OOF prediction (NaN entries)
# and apply the same mask to the labels so both arrays stay aligned.
oof_raw = pipeline.calibrator.oof_probs_
valid = ~np.isnan(oof_raw)
y_valid = y.values[valid]
oof_brier = np.mean((oof_raw[valid] - y_valid) ** 2)

# Reliability diagram directly from OOF predictions
plot_reliability_with_ci(
    y_valid,
    oof_raw[valid],
    title="OOF - Raw Probabilities",
)
plot_reliability_with_ci(
    y_valid,
    pipeline.calibrator.calibrator_.predict(oof_raw[valid]),
    title="OOF - Calibrated Probabilities",
)
```

Two attributes are populated after pipeline.run() completes. pipeline.best_model is the CalibratorCV instance used for all subsequent inference. pipeline.calibrator holds the same object for diagnostic access, including reliability diagrams, Brier score comparisons, and the raw OOF probabilities available through calibrator.oof_probs_.

One ONNX consideration applies when export_onnx=True is set on the pipeline. CalibratorCV has no ONNX operator mapping, so _save_all_artifacts() unwraps the calibrator and exports the inner sklearn estimator as the ONNX source. The isotonic map is saved separately as a pickled IsotonicRegression object. At deployment time in MetaTrader 5, the ONNX model produces raw probabilities; those are passed through calibrator.calibrator_.predict() as a post-processing step before the probabilities reach the bet sizing layer.
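Because the isotonic map is only a set of monotone knots, the post-processing step can also be expressed without unpickling an sklearn object. The sketch below assumes the knots have been exported to JSON beforehand; sklearn's fitted IsotonicRegression exposes them as X_thresholds_ and y_thresholds_, but the JSON layout, the numeric values, and the function names here are purely illustrative and are not what _save_all_artifacts() writes.

```python
import json
import numpy as np

# Hypothetical exported knots: raw probability -> calibrated probability.
exported = json.dumps({
    "x": [0.30, 0.55, 0.70, 0.90],
    "y": [0.35, 0.50, 0.56, 0.62],
})

def load_calibration_map(payload):
    """Parse and validate the knots of a monotone calibration map."""
    data = json.loads(payload)
    x = np.asarray(data["x"], dtype=float)
    y = np.asarray(data["y"], dtype=float)
    if x.shape != y.shape or x.size < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    if np.any(np.diff(x) <= 0) or np.any(np.diff(y) < 0):
        raise ValueError("map must be increasing in x and non-decreasing in y")
    return x, y

def calibrate(raw_prob, x, y):
    """Linear interpolation between knots; inputs outside the range are clamped."""
    return np.interp(raw_prob, x, y)

x_knots, y_knots = load_calibration_map(exported)
raw_from_onnx = np.array([0.10, 0.625, 0.95])   # stand-in for ONNX model output
calibrated = calibrate(raw_from_onnx, x_knots, y_knots)
print(calibrated)
```

A lookup of this form is small enough to port to any runtime that consumes the ONNX output. The clamping at the ends mirrors the out_of_bounds='clip' behaviour, which is an assumption about how the calibrator was configured.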

The Diagnostic Pipeline

Running the calibration step in isolation establishes that the raw probabilities are miscalibrated and that isotonic regression corrects them. That is necessary but not sufficient. The argument becomes conclusive only when the effect is traced through the full chain from probabilities to position sizes to P&L and finally to path Sharpe distributions.

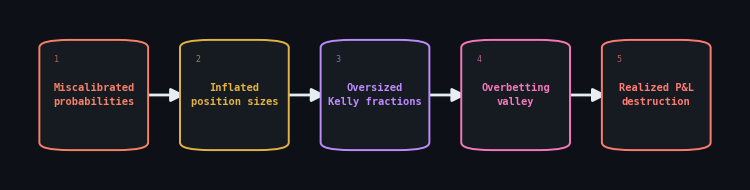

Miscalibration propagation chain from raw probabilities to path Sharpe

The following six figures make this chain explicit.

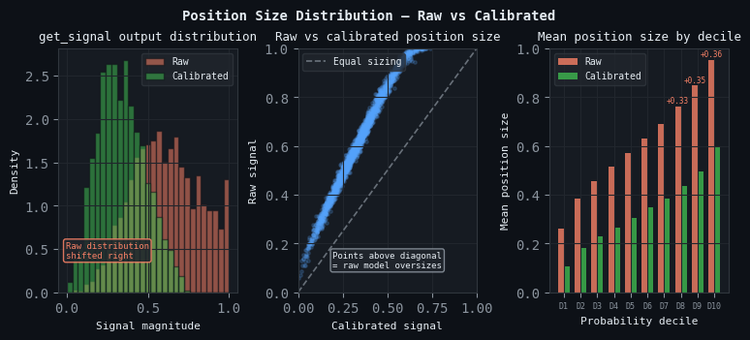

Position Size Distribution

Because get_signal is a monotone function of probability, a systematic shift in the probability distribution produces a predictable shift in the position size distribution. Overconfident probabilities concentrated in the 0.60–0.80 range map to get_signal outputs in the 0.20–0.60 range. The scatter plot of raw versus calibrated position sizes shows that almost every observation sits above the equal-sizing diagonal, confirming that the raw model consistently oversizes relative to calibration.
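The size of this effect is easy to check by hand. The sketch below assumes that get_signal follows the two-class bet sizing formula from Advances in Financial Machine Learning, m = 2Φ(z) - 1 with z = (p - 0.5) / sqrt(p(1 - p)), and ignores any averaging or discretization the Part 10 implementation may apply. The function name bet_size is hypothetical.

```python
import math

def bet_size(prob):
    """Two-class bet size in [-1, 1] from a predicted probability."""
    z = (prob - 0.5) / math.sqrt(prob * (1.0 - prob))
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))  # standard normal CDF
    return 2.0 * cdf - 1.0

raw_prob = 0.70      # what the overconfident model reports
true_prob = 0.55     # what the observed win rate supports

size_raw = bet_size(raw_prob)
size_cal = bet_size(true_prob)
print(f"raw size={size_raw:.3f}  calibrated size={size_cal:.3f}  "
      f"ratio={size_raw / size_cal:.1f}x")
```

Under these assumptions a raw probability of 0.70 maps to a position of roughly 0.34, while the calibrated 0.55 maps to roughly 0.08. A 15-point probability error therefore becomes a position about four times larger than the evidence supports.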

Figure 2. 3-panel illustration of position size distribution

- Left — Histogram: get_signal output distribution for raw (orange) and calibrated (green) probabilities. The raw distribution is shifted rightward throughout; calibration pulls it back toward the center.

- Center — Scatter: Raw versus calibrated position size per observation. Points above the diagonal represent observations the raw model oversizes; almost all observations fall above it.

- Right — Per-decile mean position size: Mean position size in each probability decile for raw and calibrated models. The excess sizing in the upper deciles — where overconfidence is greatest — is annotated.

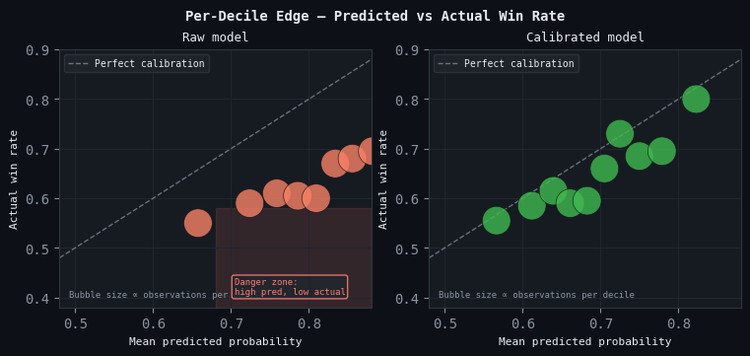

Per-Decile Edge Analysis

Oversizing becomes dangerous when combined with the fact that the raw model's high-probability predictions do not correspond to proportionally high actual win rates. The per-decile edge analysis divides observations into ten bins by predicted probability and plots the mean predicted probability against the actual win rate in each bin. A well-calibrated model produces a scatter plot where the bins track the diagonal. An overconfident model produces bins in the upper deciles that sit materially above it: the model predicts 0.70 while delivering 0.57.

Figure 3. Per-decile edge analysis (bubble size proportional to observations per decile)

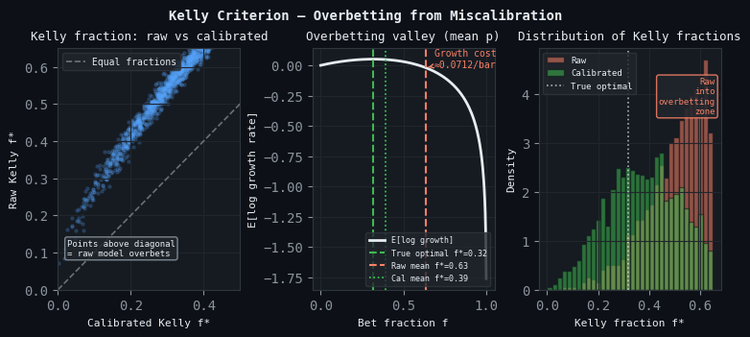

The Overbetting Valley

Kelly theory provides the analytical mechanism connecting overconfident probabilities to P&L destruction. The expected log-growth rate of a betting strategy peaks at the true Kelly optimal fraction and falls on both sides: steeply on the right (overbetting) and gradually on the left (underbetting). Mild overbetting gives up growth, and beyond roughly twice the optimal fraction, expected log-growth turns negative and compounding drives the account toward ruin. The right descending slope is therefore the dangerous region. An overconfident model recommends Kelly fractions consistently above the optimum, placing the strategy on this slope at almost every trade.
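The shape of the growth curve can be verified with a few lines of arithmetic. The sketch below assumes a simple binary bet with symmetric payoff (win or lose the staked fraction, b = 1), which is a simplification of the asymmetric payoffs handled in Part 11. The function names are illustrative.

```python
import math

def kelly_fraction(p, b=1.0):
    """Kelly-optimal fraction for win probability p and payoff ratio b."""
    return p - (1.0 - p) / b

def log_growth(f, p_true, b=1.0):
    """Expected log-growth per bet when staking fraction f at true win rate p_true."""
    return p_true * math.log(1.0 + b * f) + (1.0 - p_true) * math.log(1.0 - f)

p_true, p_raw = 0.55, 0.70
f_opt = kelly_fraction(p_true)   # fraction justified by the true win rate
f_raw = kelly_fraction(p_raw)    # fraction implied by the overconfident model

candidates = [
    ("true Kelly", f_opt),
    ("slightly above Kelly", 0.12),
    ("half-Kelly on raw probability", 0.5 * f_raw),
    ("full Kelly on raw probability", f_raw),
]
for label, f in candidates:
    print(f"{label:32s} f={f:.2f}  growth={log_growth(f, p_true):+.5f}")
```

In this example the true optimum is a 10% stake with growth of about +0.0050 per bet. A 12% stake still grows, only slightly slower. Full Kelly on the raw 0.70 probability stakes 40% and loses about 0.045 per bet in expectation, and even half-Kelly on the raw probability (20%, twice the true optimum) has growth marginally below zero.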

Figure 4. The overbetting valley

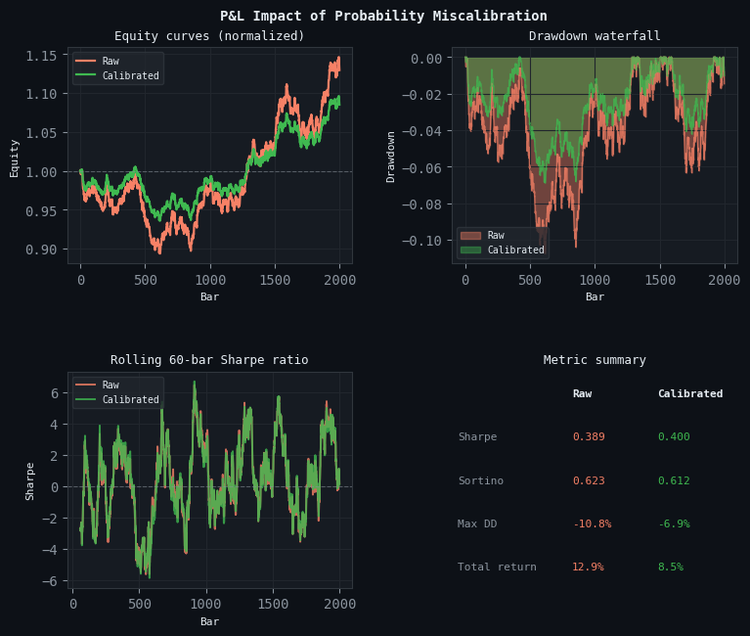

P&L Impact

Equity simulations run from both raw and calibrated probabilities using half-Kelly sizing translate the probability-space argument into account-level outcomes. Each simulation applies the same trade sequence with position sizes derived from raw and calibrated probabilities respectively. The calibrated equity curve is smoother, reaches higher terminal wealth, and produces shallower drawdowns, not because the signals are different, but because the position sizes are correct.

Figure 5. P&L impact

Robustness Across CPCV Paths

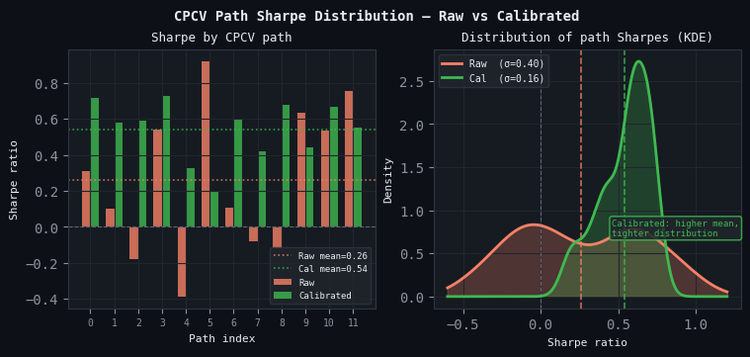

A single equity simulation is subject to path dependence. The result reflects the specific sequence of model predictions, not the general behavior of the calibrated versus uncalibrated strategy. The CPCV path distribution from the Unified Validation Pipeline article resolves this. φ[N, k] independent paths are simulated, each with a fresh sequence of OOF predictions from a different training fold combination. The Sharpe ratio distribution across paths is the appropriate evidence base for evaluating whether calibration produces a genuine structural improvement or merely shifts one lucky path.
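As a quick reference for how many paths a given configuration yields, the count follows the CPCV formula from Advances in Financial Machine Learning: with N groups and k test groups per split there are C(N, k) splits and φ[N, k] = (k / N) · C(N, k) backtest paths. The helper name below is hypothetical.

```python
from math import comb

def cpcv_counts(n_groups, k_test):
    """Return (number of train/test splits, number of backtest paths)."""
    if not 0 < k_test < n_groups:
        raise ValueError("k_test must satisfy 0 < k_test < n_groups")
    n_splits = comb(n_groups, k_test)
    # k * C(N, k) is always divisible by N, so integer division is exact.
    n_paths = n_splits * k_test // n_groups
    return n_splits, n_paths

for n_groups, k_test in [(6, 2), (10, 2), (6, 3)]:
    splits, paths = cpcv_counts(n_groups, k_test)
    print(f"N={n_groups}, k={k_test}: {splits} splits, {paths} paths")
```

With N = 6 and k = 2, for example, there are 15 splits and 5 paths, so the Sharpe distribution in that configuration rests on five values per strategy. Larger N gives a smoother distribution at the cost of more model fits.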

Calibration does not just shift the mean Sharpe upward. It also tightens the standard deviation across paths. A wide distribution of path Sharpes signals fragility. Calibration reduces that path-dependence because overconfident position sizes are the primary mechanism by which a single bad sequence of trades produces a disproportionately large drawdown on one path but not others.

Figure 6. 2-panel illustration of the CPCV path Sharpe distribution

- Left — Per-path Sharpe: Sharpe ratio by CPCV path for raw (orange) and calibrated (green) probabilities. Calibrated paths are higher on average and less variable.

- Right — Distribution: Kernel density of path Sharpes across all φ[N, k] paths. Calibration shifts the mean, tightens the standard deviation, and raises the fraction of paths with positive Sharpe. The PBO computed from the calibrated return matrix is lower, confirming that the improvement is not an artifact of path selection.

Conclusion

The argument for calibration in this pipeline is not that uncalibrated models produce bad rankings. Random Forests with decent features can rank observations well. The real issue is that the absolute probability values they assign do not correspond to the win rates those probabilities imply. Every sizing method in Part 10 and Part 11 converts those absolute values directly into position sizes and Kelly fractions. The Diagnostic Pipeline section quantifies the cost of that mismatch at each stage of the chain: probability space, position space, P&L, and path Sharpe distribution. The correction (isotonic calibration on out-of-fold predictions through PurgedKFold) is a single fit() call that leaves the base model's parameters untouched and produces a monotone mapping that can be fully audited and re-estimated periodically as the data distribution evolves.

Key takeaways:

- Calibration and discrimination are distinct properties. A model that ranks observations correctly can still be overconfident. ECE and MCE measure calibration, the Brier score blends calibration with sharpness, and AUC measures discrimination. Both properties matter, and a high AUC does not excuse poor calibration when absolute probability values are used for sizing.

- Use method='isotonic' for tree-based classifiers. Platt scaling assumes log-linear miscalibration, which does not hold for Random Forests. Isotonic regression is non-parametric and adapts to the actual miscalibration shape. Use method='platt' only when the effective OOF sample size after purging and embargo falls below approximately 200 observations.

- Calibrate through PurgedKFold, not a random held-out set. A random calibration split leaks information across the purge boundary. OOF predictions through PurgedKFold with the correct embargo are the only valid calibration data source for financial data with overlapping labels.

- Re-estimate the calibrator periodically. The mapping from raw to calibrated probabilities reflects the model's miscalibration structure on the training data distribution. After a significant regime shift, or after retraining the model on a substantially different dataset, the calibrator should be re-fitted. Stale calibration is better than no calibration, but it is not indefinitely reliable.

- Monitor all three metrics. ECE measures the average gap; MCE measures the worst-case region; Brier score measures the overall usefulness of the probabilities for decision-making. A strategy that exhibits good ECE but high MCE has a specific probability region producing badly-sized positions that averaged metrics will not reveal.

Attached Files

| File | Description |
|---|---|
| __init__.py | Package initializer: exports the public API from calibration.py, making the calibration toolkit directly importable from the package level. |
| calibration.py | Primary interface: CalibratorCV, an sklearn-compatible estimator that (1) generates OOF predictions via PurgedKFold, (2) fits a calibration map (isotonic by default; Platt via method='platt'), and (3) refits the base estimator on the full dataset. Metrics and visualization functions: brier_score, expected_calibration_error, maximum_calibration_error, compute_reliability, bootstrap_reliability_ci, plot_reliability, plot_reliability_with_ci, calibration_report, fit_platt_scaling. |
| README.md | User documentation: overview, key features, quick-start examples, API references, and usage guidelines for the calibration module. |

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
