Neural Networks in Trading: Dual Clustering of Multivariate Time Series (DUET)

Introduction

Multivariate time series are sequences of data in which each timestamp contains several interrelated variables describing complex processes. They are widely used in economic analysis, risk management, and other domains that require forecasting multivariate data. Unlike univariate time series, multivariate series make it possible to account for correlations between variables and thus enable the creation of more accurate forecasting models.

In financial markets, the analysis of multivariate time series is applied to asset price forecasting, volatility estimation, trend detection, and the development of trading strategies. For example, when forecasting stock prices, factors such as trading volume, interest rates, macroeconomic indicators, and news are taken into account. All of these parameters are interrelated, and their joint analysis makes it possible to identify patterns that cannot be detected when each variable is considered separately.

A key challenge in processing multivariate time series is the development of methods capable of identifying both temporal and cross-channel dependencies. However, in practice, difficulties arise due to data variability. During periods of economic crises, correlation structures between assets change, which complicates the use of traditional models.

Existing data processing methods can be divided into three categories. The first approach involves analyzing each channel independently, but this ignores relationships between variables. The second approach combines all channels; however, this may introduce redundant information and reduce accuracy. The third approach is variable clustering, but it limits the flexibility of the model.

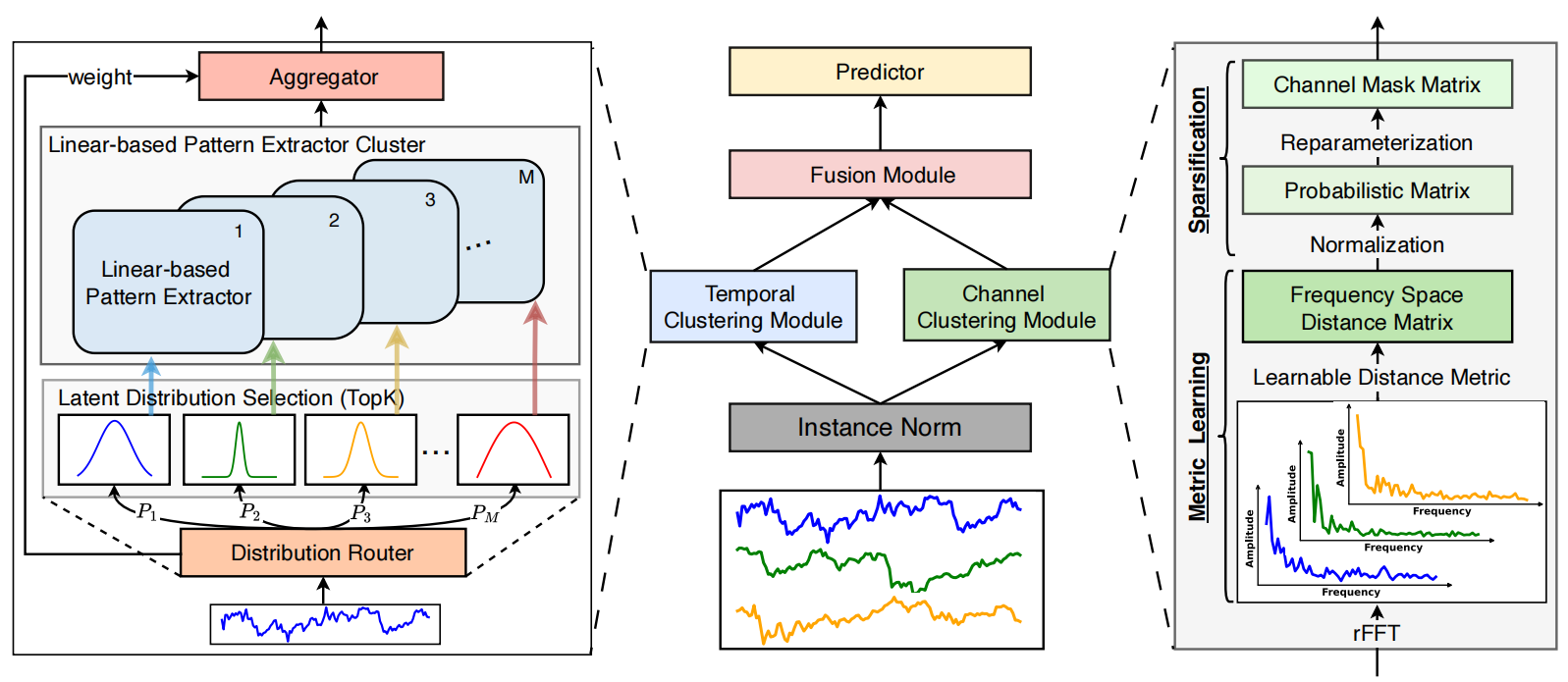

To address these issues, the authors of the paper "DUET: Dual Clustering Enhanced Multivariate Time Series Forecasting" proposed the DUET method, which combines two types of clustering: temporal and channel clustering. Temporal clustering (TCM) groups data based on similar characteristics and allows models to adapt to changes over time. In financial market analysis, this makes it possible to account for different phases of economic cycles. Channel clustering (CCM) identifies key variables, removing noise and improving forecasting accuracy. It reveals stable relationships between assets, which is particularly important for constructing diversified investment portfolios.

Afterward, the results are integrated by the Fusion Module (FM), which synchronizes information about temporal patterns and cross-channel dependencies. This approach enables more accurate forecasting of complex systems such as financial markets. Experiments conducted by the authors of the framework demonstrated that DUET outperforms existing methods, providing more accurate forecasts. It accounts for heterogeneous temporal patterns and the dynamics of cross-channel relationships, adapting to data variability.

DUET Algorithm

The DUET framework architecture represents an innovative approach to forecasting multivariate time series that uses dual clustering of the input data along temporal and channel dimensions. This improves the performance of the model and makes its results more interpretable. The approach can be compared to the work of an experienced analyst who divides a complex data system into separate blocks, analyzing them first individually and then collectively to obtain a more detailed understanding. The DUET framework includes several key modules, each performing a specialized role in the data analysis process:

- Instance Normalization

- Temporal Clustering Module — TCM

- Channel Clustering Module — CCM

- Fusion Module — FM

- Prediction Module

Instance normalization brings each input sequence to a comparable scale (typically zero mean and unit variance per sequence), which makes the model more robust to distribution differences between training and test datasets. This is particularly important in financial data analysis, where price levels and volatility drift over time. Normalization also aligns the statistical characteristics of individual time sequences originating from different sources and reduces the influence of anomalous values.
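
The article does not spell out the normalization formula, so the following is only a minimal sketch of one common variant: per-channel standardization with the statistics saved for the inverse step applied to the forecasts. It is written in plain C++ rather than MQL5, and the names `NormStats`, `instance_normalize`, `instance_denormalize` and the `eps` constant are illustrative assumptions, not part of the article's library.

```cpp
#include <cmath>
#include <vector>

// Mean and standard deviation of one channel, kept for the inverse step.
struct NormStats {
    double mean;
    double stddev;
};

// Normalizes a single channel in place and returns its statistics.
// eps (an assumed constant) guards against division by zero
// when the series is constant.
NormStats instance_normalize(std::vector<double>& series, double eps = 1e-8) {
    if (series.empty()) return NormStats{0.0, 1.0};
    const double n = static_cast<double>(series.size());

    double mean = 0.0;
    for (double v : series) mean += v;
    mean /= n;

    double var = 0.0;
    for (double v : series) var += (v - mean) * (v - mean);
    var /= n;

    NormStats stats{mean, std::sqrt(var + eps)};
    for (double& v : series) v = (v - stats.mean) / stats.stddev;
    return stats;
}

// Maps normalized values (for example, forecasts) back to the original scale.
void instance_denormalize(std::vector<double>& series, const NormStats& stats) {
    for (double& v : series) v = v * stats.stddev + stats.mean;
}
```

In such a scheme, the statistics computed on the input window are reused to rescale the model output, so forecasts are expressed in the units of the original series.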

The Temporal Clustering Module (TCM) analyzes temporal dependencies and groups sequences into clusters, much like financial analysts classify assets according to their volatility, liquidity, and historical characteristics. At the core of TCM is an architecture composed of several parallel encoders (Mixture of Experts — MoE), which dynamically selects the most suitable encoders for each analyzed segment depending on the prior clustering of the time series. This ensures accurate representation of temporal sequences, since different groups of data may require unique processing methods. The MoE mechanism adaptively switches between encoders, allowing the model to efficiently work with time series of different nature, including high-frequency market data.

The encoders analyze time series represented as hidden features, which are then decomposed into long-term and short-term trends. This makes it possible to identify hidden patterns that improve the forecasting of future price movements in financial markets.

The Channel Clustering Module (CCM) performs channel clustering using the frequency characteristics of signals. This module evaluates correlations between channels, identifying key dependencies and excluding redundant or insignificant components. Similar to a financial analyst selecting meaningful macroeconomic and technical indicators while filtering out random market fluctuations, CCM helps isolate the most informative signals.

Analyzing the distances between amplitude vectors of the channels' frequency characteristics makes it possible to identify correlated signals and eliminate noise phenomena. This is particularly useful in financial markets, where hidden relationships between assets can be used to construct arbitrage strategies or identify systematic risks.
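
To make the idea of comparing channels by their frequency content more concrete, here is a small plain C++ sketch. It computes amplitude spectra with a naive discrete Fourier transform and compares them with a Euclidean distance. Both choices are assumptions made for illustration: the text above only says that distances between amplitude vectors are analyzed and does not fix the metric. The function names are hypothetical.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Amplitude spectrum of a real series via a naive O(n^2) DFT.
// Only the first n/2 + 1 bins are returned (the rest mirror them).
std::vector<double> amplitude_spectrum(const std::vector<double>& x) {
    const std::size_t n = x.size();
    const double pi = std::acos(-1.0);
    std::vector<double> amp(n / 2 + 1, 0.0);
    for (std::size_t f = 0; f < amp.size(); ++f) {
        double re = 0.0, im = 0.0;
        for (std::size_t t = 0; t < n; ++t) {
            const double angle = 2.0 * pi * double(f) * double(t) / double(n);
            re += x[t] * std::cos(angle);
            im -= x[t] * std::sin(angle);
        }
        amp[f] = std::sqrt(re * re + im * im);
    }
    return amp;
}

// Euclidean distance between two amplitude vectors (assumed metric).
double spectral_distance(const std::vector<double>& a,
                         const std::vector<double>& b) {
    double sum = 0.0;
    for (std::size_t i = 0; i < a.size() && i < b.size(); ++i)
        sum += (a[i] - b[i]) * (a[i] - b[i]);
    return std::sqrt(sum);
}
```

Two channels that oscillate at the same frequency but with a phase shift have nearly identical amplitude spectra, so their distance is close to zero, while channels dominated by different frequencies end up far apart.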

The Fusion Module (FM) integrates temporal and channel representations using a masked attention mechanism. This process resembles the analysis of complex relationships between various market factors, when an analyst synthesizes information from different sources to obtain a holistic view. FM identifies the most significant clusters and filters out irrelevant signals, thereby improving forecasting accuracy. The use of masked attention allows the importance of different data components to be dynamically adjusted, making the processing more adaptive. This is critically important in financial applications, where the dependency structure between assets can change under the influence of macroeconomic events.

At the final stage, the Prediction Module uses aggregated features to forecast future values of the time series. This process can be compared to the work of a professional investor who makes informed predictions about future price changes based on historical market data. The Prediction Module employs neural network methods capable of capturing complex nonlinear relationships and adapting to potential structural changes in the data. The final forecasts undergo inverse normalization, which allows them to be interpreted on the scale of the original data.

By applying advanced machine learning techniques such as masked attention mechanisms, frequency-domain analysis, and clustering of latent representations, DUET provides high forecasting accuracy and interpretability. It helps uncover hidden patterns in complex temporal sequences and apply these insights to optimize trading strategies, where traditional approaches often prove insufficiently effective. Compared with traditional methods that require extensive manual tuning and expert intervention, DUET automatically detects structural characteristics of the data and adapts to them in real time. This makes it particularly useful for analyzing high-frequency time series and operating in rapidly changing market environments.

The original visualization of the DUET framework is provided below.

Implementation Using MQL5

After a detailed examination of the theoretical aspects of the DUET framework, we move on to the practical part of our work, where we implement our own interpretation of the proposed approaches using MQL5.

The modular architecture of DUET makes it convenient for step-by-step development: each functional block can be considered as an independent element of the system. Dividing the architecture into autonomous modules simplifies debugging, testing, and subsequent optimization. We begin by implementing the Temporal Clustering Module.

Temporal Clustering Module

As mentioned earlier, the temporal clustering module includes several encoders operating in parallel. In this work, we build a deliberately simple encoder: two sequential fully connected layers with an activation function between them to introduce nonlinearity. Note, however, that each encoder processes independent segments using its own trainable parameters. To organize such processing, we use convolutional layers. We feed the entire input sequence into the layer and set both the analysis window and the stride equal to the segment size. As a result, the parameters of the convolutional layer act as the parameters of the encoder's fully connected layer, and all segments of the sequence are processed in parallel. To increase the number of encoders operating in parallel, it is sufficient to proportionally increase the number of filters in the convolutional layer.

Thus, the organization of parallel encoder operation is determined. However, it is important to note that the authors of the DUET framework propose using only the most relevant encoders. It is assumed that time series follow a latent normal distribution. As is well known, a normal distribution is characterized by its mean and variance. To select the k most probable latent distributions, the authors use the Noisy Gating method, which can be represented as follows:

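$$Z = \mu + \varepsilon \odot \mathrm{Softplus}(\sigma), \qquad \varepsilon \sim \mathcal{N}(0, 1)$$

where $\mu$ and $\sigma$ are the projected mean and variance parameters of the segment, and $\varepsilon$ is the added noise.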

Adding normally distributed noise (ε) stabilizes training, while the Softplus function ensures that the variance remains positive.

Next, we select the k most probable latent distributions and compute their weights using the SoftMax function. In this way, time series belonging to the same k most probable latent distributions are processed by a shared group of encoders. Multiplying the resulting mask by the outputs of the encoders produces a weighted result and eliminates the influence of irrelevant filters.
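
The gating steps described above (noisy logits, keeping the k largest, SoftMax over the survivors) can be sketched on the CPU as follows. This is a plain C++ illustration under stated assumptions, not the article's OpenCL implementation: the function names are hypothetical, and `std::partial_sort` is used for the selection purely for brevity.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Numerically stable Softplus: log(1 + exp(x)).
double softplus(double x) {
    return x > 0.0 ? x + std::log1p(std::exp(-x)) : std::log1p(std::exp(x));
}

// Returns gate weights: SoftMax over the k largest noisy logits, 0 elsewhere.
// mean, raw_std and eps hold one value per encoder and must have equal size;
// eps is N(0,1) noise (all zeros outside training).
std::vector<double> noisy_top_k_gates(const std::vector<double>& mean,
                                      const std::vector<double>& raw_std,
                                      const std::vector<double>& eps,
                                      std::size_t k) {
    const std::size_t n = mean.size();
    std::vector<double> logit(n);
    for (std::size_t i = 0; i < n; ++i)
        logit[i] = mean[i] + eps[i] * softplus(raw_std[i]);

    // After partial_sort, the indices of the k largest logits come first.
    std::vector<std::size_t> order(n);
    for (std::size_t i = 0; i < n; ++i) order[i] = i;
    k = std::min(k, n);
    std::partial_sort(order.begin(),
                      order.begin() + static_cast<std::ptrdiff_t>(k),
                      order.end(),
                      [&logit](std::size_t a, std::size_t b) {
                          return logit[a] > logit[b];
                      });

    std::vector<double> gates(n, 0.0);
    if (k == 0) return gates;
    const double max_logit = logit[order[0]];   // subtracted for stability
    double sum = 0.0;
    for (std::size_t j = 0; j < k; ++j) {
        gates[order[j]] = std::exp(logit[order[j]] - max_logit);
        sum += gates[order[j]];
    }
    for (std::size_t j = 0; j < k; ++j) gates[order[j]] /= sum;
    return gates;
}
```

Encoders outside the top k receive a weight of exactly zero, so their outputs do not contribute to the weighted result.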

Having determined the architectural solution, we proceed with the implementation. First, we implement the algorithm for selecting the k most relevant encoders. Parameterization of the distribution parameters for individual segments is organized using a convolutional layer. The algorithm for selecting the k most relevant encoders, however, is implemented on the OpenCL context side. For this purpose, we create the TopKgates kernel.

    __kernel void TopKgates(__global const float *inputs,
                            __global const float *noises,
                            __global float *gates,
                            const uint k
                           )
      {
       size_t idx = get_local_id(0);
       size_t var = get_global_id(1);
       size_t window = get_local_size(0);
       size_t vars = get_global_size(1);

The kernel parameters include pointers to three data buffers (input data, noise, and results) and the number of elements to be selected.

In the kernel body, as usual, we first identify the current thread in the task space. In this case, a two-dimensional task space is used, with grouping into local groups along the first dimension. This dimension groups together threads related to the same segment and corresponds to the number of encoders used by the model.

Next, we determine the offsets within the global data buffers.

       const int shift_logit = var * 2 * window + idx;
       const int shift_std = shift_logit + window;
       const int shift_gate = var * window + idx;

And load the corresponding input data.

       float logit = IsNaNOrInf(inputs[shift_logit], MIN_VALUE);
       float noise = IsNaNOrInf(noises[shift_gate], 0);
       if(noise != 0)
         {
          noise *= Activation(inputs[shift_std], 3);
          logit += IsNaNOrInf(noise, 0);
         }

If the noise value is not equal to 0, we adjust the value of the logit variable according to the variance and noise.

Next, we must determine the k largest logit values within a single workgroup. To accomplish this, we create an array in local memory to serve as a data exchange medium between threads in the workgroup, and we declare auxiliary local variables.

       __local float temp[LOCAL_ARRAY_SIZE];
    //---
       const uint ls = min((uint)window, (uint)LOCAL_ARRAY_SIZE);
       uint bigger = 0;
       float max_logit = logit;

We then define a loop that iterates over the elements of the workgroup with a step equal to the size of the local array.

    //--- Top K
    #pragma unroll
       for(int i = 0; i < window; i += ls)
         {
          if(idx >= i && idx < (i + ls))
             temp[idx % ls] = logit;
          barrier(CLK_LOCAL_MEM_FENCE);

Inside the loop, the elements of the current window store their values in the local array, followed by mandatory synchronization of the threads within the workgroup.

After that, we create a nested loop. During its iterations, each thread calculates how many elements in the local array are greater than the logit value of the current thread.

          for(int i1 = 0; (i1 < min((int)ls, (int)(window - i)) && bigger <= k); i1++)
            {
             if(temp[i1] > logit)
                bigger++;
             if(temp[i1] > max_logit)
                max_logit = temp[i1];
            }
          barrier(CLK_LOCAL_MEM_FENCE);
         }

At the same time, we search for the maximum value within the local group.

After completing all iterations of the nested loop, we again synchronize the threads of the workgroup and only then proceed to the next iteration of the outer loop.

It is easy to see that only k threads with the largest logit values do not exceed the threshold number of greater elements. These values are stored in the result buffer.

       // Keep a value only if fewer than k values are larger (exactly k survivors without ties)
       if(bigger < k)
          gates[shift_gate] = logit - max_logit;
       else
          gates[shift_gate] = MIN_VALUE;
      }

In all other cases, a constant representing the minimum value is written to the result buffer. During the subsequent application of the SoftMax function, this value results in a zero influence coefficient.
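
The rank-counting idea used by the kernel can be checked on the host with a sequential sketch in plain C++. Each loop iteration plays the role of one work-item: it counts how many values in the segment are strictly larger than its own. In this sketch a value is kept when fewer than k values are larger, which gives exactly k survivors when there are no ties. The function name is hypothetical, and `std::numeric_limits<float>::lowest()` is only a stand-in for the MIN_VALUE constant, whose actual value is not shown here.

```cpp
#include <cstddef>
#include <limits>
#include <vector>

// Marks the k largest logits of one segment by rank counting.
// Survivors are shifted by the segment maximum; all other positions
// receive a very low value (stand-in for the kernel's MIN_VALUE).
std::vector<float> top_k_by_rank(const std::vector<float>& logits, unsigned k) {
    const float min_value = std::numeric_limits<float>::lowest();
    std::vector<float> gates(logits.size(), min_value);
    for (std::size_t i = 0; i < logits.size(); ++i) {
        unsigned bigger = 0;            // values strictly larger than logits[i]
        float max_logit = logits[i];    // running maximum of the segment
        for (std::size_t j = 0; j < logits.size(); ++j) {
            if (logits[j] > logits[i]) ++bigger;
            if (logits[j] > max_logit) max_logit = logits[j];
        }
        if (bigger < k) gates[i] = logits[i] - max_logit;
    }
    return gates;
}
```

Subtracting the maximum keeps the surviving values non-positive, which avoids overflow in the exponent during the subsequent SoftMax.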

The kernel described above organizes the forward pass of the process for selecting the k most relevant encoders in each specific case. However, in order to build a truly adaptive model, we must also organize the training process for encoder selection. Naturally, the kernel described above does not contain trainable parameters. Nevertheless, such parameters are used to generate the input data used by the kernel. Therefore, we must propagate the error gradient back to the level of the input data. This process is implemented in the TopKgatesGrad kernel. In its parameter structure, we add pointers to the buffers containing the corresponding error gradients.

    __kernel void TopKgatesGrad(__global const float *inputs,
                                __global float *grad_inputs,
                                __global const float *noises,
                                __global const float *gates,
                                __global float *grad_gates
                               )
      {
       size_t idx = get_global_id(0);
       size_t var = get_global_id(1);
       size_t window = get_global_size(0);
       size_t vars = get_global_size(1);

Inside the kernel body, we identify the current execution thread in the two-dimensional task space. The task space structure is inherited from the feed-forward kernel, except that in this case the threads are not grouped into workgroups.

Next, we determine the offset in the global data buffers, analogous to the forward-pass algorithm.

       const int shift_logit = var * 2 * window + idx;
       const int shift_std = shift_logit + window;
       const int shift_gate = var * window + idx;

The first step is to load the feed-forward pass result corresponding to the current thread.

       const float gate = IsNaNOrInf(gates[shift_gate], MIN_VALUE);
       if(gate <= MIN_VALUE)
         {
          grad_inputs[shift_logit] = 0;
          grad_inputs[shift_std] = 0;
          return;
         }

If the loaded value equals the minimum constant, the encoder was excluded from subsequent operations, so we immediately write zeros to the input gradient buffer and exit.

Otherwise, we load the error gradient value at the output level and immediately propagate it to the corresponding element of the input data gradient buffer (the logit error).

       float grad = IsNaNOrInf(grad_gates[shift_gate], 0);
       grad_inputs[shift_logit] = grad;

The error gradient is, of course, not propagated to the noise level. However, we still need to determine the error value at the variance level. During the feed-forward pass, the variance was multiplied by the noise. Therefore, the next step is to retrieve the noise value.

       float noise = IsNaNOrInf(noises[shift_gate], 0);
       if(noise == 0)
         {
          grad_inputs[shift_std] = 0;
          return;
         }

Obviously, if the noise is equal to 0, the variance does not participate in the feed-forward operations. Consequently, in such cases, we simply store a zero gradient without performing further operations.

Finally, in the remaining case, we adjust the error gradient value by the noise coefficient and the derivative of the activation function.

       grad *= noise;
       grad_inputs[shift_std] = Deactivation(grad, Activation(inputs[shift_std], 3), 3);
      }

The resulting value is written to the global data buffer, after which the kernel execution is completed.
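
The chain rule behind this backward step can be written out as a plain C++ sketch for a single selected encoder. It assumes the forward relation z = mean + noise * Softplus(raw_std) described earlier and uses the fact that the derivative of Softplus is the logistic sigmoid. The names `GateGrad` and `noisy_logit_backward` are illustrative and do not appear in the article's code.

```cpp
#include <cmath>

// Gradients of the noisy logit z = mean + noise * softplus(raw_std).
struct GateGrad {
    double d_mean;     // dL/d(mean)
    double d_raw_std;  // dL/d(raw_std)
};

// Logistic sigmoid, which is the derivative of Softplus.
double sigmoid(double x) { return 1.0 / (1.0 + std::exp(-x)); }

// upstream is dL/dz arriving from the SoftMax stage.
GateGrad noisy_logit_backward(double upstream, double raw_std, double noise) {
    GateGrad g;
    g.d_mean = upstream;                                // dz/d(mean) = 1
    g.d_raw_std = upstream * noise * sigmoid(raw_std);  // chain rule
    return g;
}
```

With zero noise the second gradient vanishes, which corresponds to the early exit in the kernel. Encoders that were not selected receive zero for both gradients.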

The complete source code for both kernels described above can be found in the attachment to the article.

The next stage of our work is to organize this process on the main program side. First, we create the CNeuronTopKGates object, in which we implement the algorithm for selecting the k most relevant encoders. The structure of the new object is presented below.

    class CNeuronTopKGates : public CNeuronSoftMaxOCL
      {
    protected:
       int               iK;
       CBufferFloat      cbNoise;
       CNeuronConvOCL    cProjection;
       CNeuronBaseOCL    cGates;
       //---
       virtual bool      TopKgates(void);
       virtual bool      TopKgatesGradient(void);
       //---
       virtual bool      feedForward(CNeuronBaseOCL *NeuronOCL) override;
       virtual bool      updateInputWeights(CNeuronBaseOCL *NeuronOCL) override;
       virtual bool      calcInputGradients(CNeuronBaseOCL *NeuronOCL) override;
    public:
                         CNeuronTopKGates(void) {};
                        ~CNeuronTopKGates(void) {};
       //---
       virtual bool      Init(uint numOutputs, uint myIndex, COpenCLMy *open_cl,
                              uint window, uint units_count, uint gates, uint top_k,
                              ENUM_OPTIMIZATION optimization_type, uint batch);
       //---
       virtual int       Type(void) override const { return defNeuronTopKGates; }
       //---
       virtual bool      Save(int const file_handle) override;
       virtual bool      Load(int const file_handle) override;
       //---
       virtual bool      WeightsUpdate(CNeuronBaseOCL *source, float tau) override;
       virtual void      SetOpenCL(COpenCLMy *obj) override;
       //---
       virtual uint      GetGates(void) const { return cProjection.GetFilters() / 2; }
       virtual uint      GetUnits(void) const { return cProjection.GetUnits(); }
      };

In the presented structure, we see that in addition to the usual set of overridden virtual methods, TopKgates and TopKgatesGradient are added. These are wrapper methods for the kernels described earlier that were created on the OpenCL program side. Their implementation follows an algorithm already familiar to you, so we will not dwell on it in detail here.

The few internal objects are declared statically, which allows us to leave the class constructor and destructor empty. Initialization of all declared and inherited objects is performed in the Init method, whose parameters receive constants that allow unambiguous interpretation of the architecture of the created object.

    bool CNeuronTopKGates::Init(uint numOutputs, uint myIndex, COpenCLMy *open_cl,
                                uint window, uint units_count, uint gates, uint top_k,
                                ENUM_OPTIMIZATION optimization_type, uint batch)
      {
       if(!CNeuronSoftMaxOCL::Init(numOutputs, myIndex, open_cl, gates * units_count, optimization_type, batch))
          return false;
       SetHeads(units_count);
       iK = (int)top_k;   // store k for the TopKgates kernel call

The operations of the initialization method begin with a call to the method of the same name in the parent class, where the minimally required checks and initialization of inherited objects have already been organized.

Note that in this case we use the SoftMax function object as the parent class. This allows us to convert the results of selecting the k most relevant encoders into a probabilistic representation without creating an additional internal object. It is sufficient simply to use the functionality of the parent class.

After successfully executing the operations of the parent class method, we proceed to construct the initialization algorithm for the newly declared objects. Here we first initialize the convolutional layer that projects the distribution parameters of the analyzed segments.

       if(!cProjection.Init(0, 0, OpenCL, window, window, 2 * gates, units_count, 1, optimization, iBatch))
          return false;
       cProjection.SetActivationFunction(None);

At the output of this layer, we expect to obtain the mean values and variances for each encoder in our model. Consequently, the number of filters in the convolutional layer is twice the specified number of encoders.

Here we also add a data buffer in which the noise will be generated.

       if(!cbNoise.BufferInit(Neurons(), 0) ||
          !cbNoise.BufferCreate(OpenCL))
          return false;

Finally, we initialize a fully connected layer that will store the results produced by the previously created TopKgates feed-forward kernel.

       if(!cGates.Init(0, 1, OpenCL, Neurons(), optimization, iBatch))
          return false;
       cGates.SetActivationFunction(None);
    //---
       return true;
      }

After that, we return the logical result of the operations to the calling program and terminate the method.

Note that in this case we do not create objects for storing the Top-K encoder probability distribution. We intend to convert the absolute logit values into probability space using the functionality of the parent class. Therefore, all objects required to support this process have already been created and initialized in the parent class.

The next step is to construct the feed-forward method for selecting the k most relevant encoders, implemented in CNeuronTopKGates::feedForward. As usual, the parameters of this method include a pointer to the input data object, which is immediately passed to the method of the same name of the object responsible for generating the statistical characteristics of the distribution of the analyzed segments.

    bool CNeuronTopKGates::feedForward(CNeuronBaseOCL *NeuronOCL)
      {
       if(!cProjection.FeedForward(NeuronOCL))
          return false;

Next, it is important to note that the authors of the DUET framework propose adding noise to the logit values only during training. Therefore, we check the model's operating mode and generate noise if necessary.

       if(bTrain)
         {
          double random[];
          if(!Math::MathRandomNormal(0, 1, Neurons(), random))
             return false;
          if(!cbNoise.AssignArray(random))
             return false;
          if(!cbNoise.BufferWrite())
             return false;
         }
       else
          if(!cbNoise.Fill(0))
             return false;

Otherwise, the noise buffer is filled with zeros.
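
The same training/inference switch can be expressed in plain C++ with the standard `<random>` facilities. This is only a host-side sketch of the behavior described above. The function name `make_gate_noise` is hypothetical and is unrelated to the MQL5 buffer classes.

```cpp
#include <cstddef>
#include <random>
#include <vector>

// Fills the noise buffer: N(0,1) samples while training, zeros otherwise.
std::vector<float> make_gate_noise(std::size_t count, bool training,
                                   std::mt19937& rng) {
    std::vector<float> noise(count, 0.0f);
    if (training) {
        std::normal_distribution<float> dist(0.0f, 1.0f);
        for (float& v : noise) v = dist(rng);
    }
    return noise;
}
```

Zero noise makes the gating deterministic during inference, because the variance term is multiplied by zero and drops out of the logit.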

We then call the wrapper method responsible for selecting the k most relevant encoders.

       if(!TopKgates())
          return false;
    //---
       return CNeuronSoftMaxOCL::feedForward(cGates.AsObject());
      }

The obtained results are passed to the method of the same name in the parent class, which converts the absolute values into probability space.

We return the logical result of the performed operations to the calling program and complete the execution of the method.

As you may have noticed, the forward-pass method has a linear algorithm. Accordingly, the backpropagation methods also follow a linear structure. Therefore, I suggest leaving their detailed examination for independent study. The full code of this object and all its methods can be found in the attachment to the article.

At this stage, we have implemented the algorithms for selecting the k most relevant encoders, both on the main program side and in the OpenCL context. We can now proceed to building the Mixture of Experts (MoE) architecture, which we implement within the CNeuronMoE object. The structure of the new object is presented below.

    class CNeuronMoE : public CNeuronBaseOCL
      {
    protected:
       CNeuronTopKGates  cGates;
       CLayer            cExperts;
       //---
       virtual bool      feedForward(CNeuronBaseOCL *NeuronOCL) override;
       virtual bool      updateInputWeights(CNeuronBaseOCL *NeuronOCL) override;
       virtual bool      calcInputGradients(CNeuronBaseOCL *NeuronOCL) override;
    public:
                         CNeuronMoE(void) {};
                        ~CNeuronMoE(void) {};
       //---
       virtual bool      Init(uint numOutputs, uint myIndex, COpenCLMy *open_cl,
                              uint window, uint window_out, uint units_count,
                              uint experts, uint top_k,
                              ENUM_OPTIMIZATION optimization_type, uint batch);
       //---
       virtual int       Type(void) override const { return defNeuronMoE; }
       //---
       virtual bool      Save(int const file_handle) override;
       virtual bool      Load(int const file_handle) override;
       //---
       virtual bool      WeightsUpdate(CNeuronBaseOCL *source, float tau) override;
       virtual void      SetOpenCL(COpenCLMy *obj) override;
       //---
       virtual void      TrainMode(bool flag) { bTrain = flag; cGates.TrainMode(bTrain); }
      };

In the presented structure, we see only two internal objects. One of them is the previously created object responsible for selecting the k most relevant encoders. The second one is a dynamic array for storing pointers to the encoder objects. Both objects are declared statically, which allows us to keep the constructor and destructor empty. All initialization work for these objects is organized in the Init method.

The parameters of the initialization method pass constants that provide an unambiguous description of the architecture of the created object. At the same time, the possibility of changing the dimensionality of the output data is allowed.

    bool CNeuronMoE::Init(uint numOutputs, uint myIndex, COpenCLMy *open_cl,
                          uint window, uint window_out, uint units_count,
                          uint experts, uint top_k,
                          ENUM_OPTIMIZATION optimization_type, uint batch)
      {
       if(!CNeuronBaseOCL::Init(numOutputs, myIndex, open_cl, window_out * units_count, optimization_type, batch))
          return false;

The algorithm begins with a call to the method of the same name in the parent class, where the initialization of inherited objects and validation of input data have already been organized.

Next, we initialize the object responsible for selecting the most relevant encoders.

       int index = 0;
       if(!cGates.Init(0, index, OpenCL, window, units_count, experts, top_k, optimization, iBatch))
          return false;

After that, we proceed to the direct initialization of the encoder objects. First, we prepare a dynamic array and local variables for temporarily storing pointers to the created objects.

       cExperts.Clear();
       cExperts.SetOpenCL(OpenCL);
       CNeuronConvOCL *conv = NULL;
       CNeuronTransposeRCDOCL *transp = NULL;

The first component created is a convolutional layer, which serves as the first layer of the encoders. The input to this object will be the input data tensor, common to all encoders. The number of filters in this layer equals the product of the result tensor size of one encoder and the total number of encoders in the model. This approach allows us to compute values for all encoders in parallel.

       index++;
       conv = new CNeuronConvOCL();
       if(!conv ||
          !conv.Init(0, index, OpenCL, window, window, window_out * experts, units_count, 1, optimization, iBatch) ||
          !cExperts.Add(conv))
         {
          delete conv;
          return false;
         }
       conv.SetActivationFunction(SoftPlus);

To introduce nonlinearity between the encoder layers, we use SoftPlus as the activation function.

Next, we need to add the second layer of the encoders. Each encoder must have its own set of parameters, and this can also be implemented with a convolutional layer: we simply specify the number of encoders in the parameter that sets the number of independent analyzed sequences. Note, however, that the output of the first layer is a three-dimensional tensor with dimensions { Units, Encoders, Dimension }, which does not match the data layout expected by the convolutional layer we created earlier.

To ensure correct processing, we must swap the first two dimensions. This task is performed by a data transposition layer.

       transp = new CNeuronTransposeRCDOCL();
       index++;
       if(!transp ||
          !transp.Init(0, index, OpenCL, units_count, experts, window_out, optimization, iBatch) ||
          !cExperts.Add(transp))
         {
          delete transp;
          return false;
         }
       transp.SetActivationFunction((ENUM_ACTIVATION)conv.Activation());
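
What swapping the first two dimensions means for a flat buffer can be illustrated with a short plain C++ sketch. It assumes a row-major layout of the { rows, cols, dim } tensor. The function `transpose_first_two` is a hypothetical helper and says nothing about how CNeuronTransposeRCDOCL is implemented internally.

```cpp
#include <cstddef>
#include <vector>

// Reorders a flat row-major {rows, cols, dim} tensor into {cols, rows, dim}.
// src.size() is expected to equal rows * cols * dim.
std::vector<float> transpose_first_two(const std::vector<float>& src,
                                       std::size_t rows, std::size_t cols,
                                       std::size_t dim) {
    std::vector<float> dst(src.size());
    for (std::size_t r = 0; r < rows; ++r)
        for (std::size_t c = 0; c < cols; ++c)
            for (std::size_t d = 0; d < dim; ++d)
                dst[(c * rows + r) * dim + d] = src[(r * cols + c) * dim + d];
    return dst;
}
```

Applying the function twice with the dimensions swapped restores the original order, which is what the reverse transposition layer relies on.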

After that, we can initialize the convolutional layer that will serve as the second layer of our independent encoders.

       index++;
       conv = new CNeuronConvOCL();
       if(!conv ||
          !conv.Init(0, index, OpenCL, window_out, window_out, window_out, units_count, experts, optimization, iBatch) ||
          !cExperts.Add(conv))
         {
          delete conv;
          return false;
         }
       conv.SetActivationFunction(None);

Finally, we add a reverse data transposition layer.

       transp = new CNeuronTransposeRCDOCL();
       index++;
       if(!transp ||
          !transp.Init(0, index, OpenCL, experts, units_count, window_out, optimization, iBatch) ||
          !cExperts.Add(transp))
         {
          delete transp;
          return false;
         }
       transp.SetActivationFunction((ENUM_ACTIVATION)conv.Activation());
    //---
       return true;
      }

At this point, the initialization algorithm for the internal objects is complete. Then we return the logical result of the operation to the caller and complete the method execution.

After completing the initialization stage, we proceed to implement the forward-pass algorithm within the CNeuronMoE::feedForward method.

    bool CNeuronMoE::feedForward(CNeuronBaseOCL *NeuronOCL)
      {
       if(!cGates.FeedForward(NeuronOCL))
          return false;

The method parameters include a pointer to the input data object, which is immediately passed to the corresponding method of the encoder selection object.

Next, we proceed to working with the encoders. Note that they use the same input data object received in the method parameters. We first store this pointer in a local variable.

       CNeuronBaseOCL *prev = NeuronOCL;
       int total = cExperts.Total();
       for(int i = 0; i < total; i++)
         {
          CNeuronBaseOCL *neuron = cExperts[i];
          if(!neuron ||
             !neuron.FeedForward(prev))
             return false;
          prev = neuron;
         }

Then we organize a loop that sequentially iterates over the encoder layers, calling their feed-forward methods.

After completing all iterations of the loop, we obtain the full set of outputs from all encoders. Recall that earlier we already obtained the probability mask of the most relevant encoders for each segment of the input data. Therefore, to obtain the weighted sum for each segment, we simply multiply the row vector of encoder relevance probabilities for the segment by the matrix of encoder outputs corresponding to that segment.

       if(!MatMul(cGates.getOutput(), prev.getOutput(), getOutput(),
                  1, cGates.GetGates(), Neurons() / cGates.GetUnits(), cGates.GetUnits()))
          return false;
    //---
       return true;
      }

The resulting values are stored in the result buffer of our object. The method ends by returning a logical result to the calling program.

At this point, we conclude our examination of the algorithms used to construct the methods of the encoder set object. I suggest leaving the backpropagation methods of this object for independent study. As always, the complete source code of this object and all its methods is available in the attachment to the article.

Today we have accomplished a substantial amount of work and have practically exhausted the scope of this article. However, our work is not yet finished. We will take a short break and continue implementing our interpretation of the approaches proposed by the authors of the DUET framework in the next article.

Conclusion

Today we examined the DUET framework, which combines temporal clustering (TCM) and channel clustering (CCM) for multivariate time series in order to improve the accuracy of their analysis and forecasting. TCM adapts models to temporal changes, while CCM identifies key variables and reduces noise.

In the practical part of the article, we presented an implementation of the Temporal Clustering Module (TCM). In the next article, we will continue the implementation we have started. We will present our own interpretation of the approaches proposed by the framework authors and bring the work to its logical conclusion by testing the model on real historical data.


Programs Used in the Article

| # | Name | Type | Description |
|---|---|---|---|
| 1 | Research.mq5 | Expert Advisor | Expert Advisor for collecting samples |
| 2 | ResearchRealORL.mq5 | Expert Advisor | Expert Advisor for collecting samples using the Real-ORL method |
| 3 | Study.mq5 | Expert Advisor | Model training Expert Advisor |
| 4 | Test.mq5 | Expert Advisor | Model testing Expert Advisor |
| 5 | Trajectory.mqh | Class library | System state and model architecture description structure |
| 6 | NeuroNet.mqh | Class library | A library of classes for creating a neural network |
| 7 | NeuroNet.cl | Code library | OpenCL program code |

Translated from Russian by MetaQuotes Ltd.

Original article: https://www.mql5.com/ru/articles/17459

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
