MetaTrader 5 Machine Learning Blueprint (Part 10): Bet Sizing for Financial Machine Learning

Table of Contents

- Introduction

- Why Naive Position Sizing Fails

- The AFML Bet Sizing Toolkit

- Probability-Based Sizing (bet_size_probability)

- Dynamic Sizing with Forecast Prices (bet_size_dynamic)

- Budget-Constrained Sizing (bet_size_budget)

- Reserve Sizing via Mixture of Gaussians (bet_size_reserve)

- Conclusion

- Attached Files

Introduction

You have probability estimates from the classifier pipeline built across this series, and you need to translate them into position sizes a trading system can act on. Standard approaches fail in four concrete ways for this use case. A fixed fractional rule treats a 51% prediction identically to a 91% prediction, discarding the confidence information the model worked to produce. Using the raw probability as the position size ignores concurrency. If ten triple‑barrier labels are active and each is sized at 10% of capital, total exposure reaches 100% without any deliberate decision to lever up.

Continuous resizing of an averaged signal generates micro‑adjustments whose transaction cost exceeds their expected P&L contribution. None of these rules incorporate payoff asymmetry. A strategy that wins three dollars for every one it loses warrants a different allocation than a symmetric bet at the same probability. The Kelly criterion captures this; probability‑only methods do not. The measurable symptoms are systematic overexposure during periods of high signal density, erosion of edge by transaction costs, and position sizes that are either recklessly large or unnecessarily small depending on the payoff structure of each trade.

This article presents the full bet sizing toolkit from Chapter 10 of Marcos López de Prado's Advances in Financial Machine Learning, implemented in the afml.bet_sizing module. After reading, you will have a complete, runnable sizing system composed of four components:

- a probability‑based first‑stage sizer (bet_size_probability / get_signal) that converts classifier output into a confidence‑weighted signal. It corrects for label concurrency by averaging all active bets over time and discretizes updates to prevent overtrading;

- a dynamic forecast‑price sizer (bet_size_dynamic) with sigmoid and power functional forms, a closed‑form calibration procedure that sets the aggressiveness parameter from a target divergence and bet size, and a limit‑price output for execution systems;

- a budget‑constrained sizer (bet_size_budget) for strategies producing only directional signals, which sizes positions from the normalized imbalance of concurrent long and short bets;

- a data‑driven reserve sizer (bet_size_reserve), also for direction‑only strategies. The reserve method learns its sizing curve from the empirical distribution of concurrent position imbalance using the EF3M algorithm. The output is a signed position series in [−1, 1] with concurrency adjustment and per‑bar diagnostics.

The companion article, Part 11, takes these signals and applies the Kelly criterion through a two‑stage hybrid architecture that adds payoff‑ratio awareness. It also adds a prop firm risk integration layer and a CPCV‑based dynamic backtest. Calibration of the probability estimates themselves, and its measurable impact on position sizes and P&L, is addressed in a separate upcoming article.

This article is Part 10 of the MetaTrader 5 Machine Learning Blueprint series. The preceding articles built the components this system depends on:

- Part 1 fixed data leakage at the bar level

- Part 2 introduced triple‑barrier and meta‑labels

- Part 3 added trend‑scanning labels

- Part 4 addressed label concurrency and introduced average uniqueness weights

- Part 5 introduced sequential bootstrapping and established the convention of separate training and scoring weights

- Part 6 built the caching infrastructure

- Part 7 and Part 9 assembled everything into a reproducible production pipeline

- Part 8 covered intelligent hyperparameter optimization

Why Naive Position Sizing Fails

To understand why the AFML bet sizing methods exist, it helps to understand precisely how simpler alternatives break down. There are three distinct failure modes, and financial ML suffers from all three simultaneously.

The Fixed Fraction Fallacy

The simplest sizing rule is to allocate a fixed fraction of capital — say, 2% — to every signal the model generates, regardless of its confidence. This rule treats a 51% probability prediction identically to a 95% probability prediction. In almost any reasonable model of optimal decision‑making under uncertainty, this is wrong. The expected value of a bet grows with the confidence of the prediction; the appropriate stake should too.

The fixed fraction rule also ignores the payoff structure. A bet that wins $2 for every $1 lost deserves a different allocation than a symmetric bet, even at the same probability. And it ignores drawdown history: a strategy that has already consumed 80% of its daily risk budget has less capacity for new positions than one starting the day fresh, even if the signal has identical strength in both cases.

The Concurrency Explosion Problem

The problem worsens dramatically when labels overlap in time, which is essentially universal with triple‑barrier labeling. Suppose a strategy generates signals with a typical holding period of ten days. Under normal market conditions, five or six of these signals may be active simultaneously. If each is sized at 10% of capital, total exposure is 50–60% — manageable. But during a period of unusually dense signal generation, fifteen or twenty signals may activate in quick succession, each of which will be active for the same ten days. If each is still sized at 10%, total exposure reaches 150–200% of capital — roughly three times the manageable baseline — without any model change or deliberate decision.

The Overtrading Trap

Even if confidence‑aware and concurrency‑aware, a continuous sizing signal that updates every bar creates a third problem: overtrading. If the bet size shifts from 10.2% to 10.5% of capital at each new bar, the strategy must rebalance continuously. Each adjustment incurs a spread and a commission. In a liquid futures market with a spread of 0.5 ticks and a commission of $5 per lot, micro‑adjustments of this kind can absorb the entire expected profit of the strategy before a single position is profitably closed. A practical sizing system must discretize position changes to increments that justify their transaction cost.

The AFML Bet Sizing Toolkit

The afml.bet_sizing module implements four distinct approaches. The user‑level functions in bet_sizing.py orchestrate the full pipeline. The underlying mathematics is implemented in ch10_snippets.py, preserving a direct correspondence with the numbered snippets in AFML Chapter 10. The EF3M algorithm — required by the most sophisticated method — lives in its own module, ef3m.py.

| Method | User Function | Primary Input | Core Mechanism |
|---|---|---|---|
| Probability‑based | bet_size_probability | Classifier probabilities | Z‑score through normal CDF, with optional active‑bet averaging and discretization |
| Dynamic (forecast price) | bet_size_dynamic | Forecast price vs market price | Sigmoid or power function of price divergence, calibrated to a target bet size at a target divergence; returns limit price |
| Budget‑constrained | bet_size_budget | Bet sides and timestamps | Ratio of active long minus active short positions to their respective historical maxima; no probability required |
| Reserve (EF3M mixture) | bet_size_reserve | Bet sides and timestamps | CDF of a mixture of two Gaussians fitted to the empirical distribution of concurrent position imbalance |

Each method occupies a different niche. They are not interchangeable alternatives to the same problem but responses to genuinely different problem structures. The correct choice depends on what your model outputs, whether labels overlap, how much historical data you have, and whether you can characterize your payoff structure.

Probability-Based Sizing (bet_size_probability)

This is the most commonly applicable method and the natural starting point for any classifier‑based strategy. Its core logic is implemented in get_signal (AFML Snippet 10.1).

The Statistical Mechanics of get_signal

The input is a predicted probability p from a classifier trained on a problem with num_classes possible outcomes. The null hypothesis is that the model has no skill — its predictions are no better than random guessing. Under the null, the expected probability for any single class is the base rate 1/num_classes. For binary classification, this is 0.5.

The method measures how statistically surprising the predicted probability is relative to this null, normalizing by the natural scale of uncertainty in a Bernoulli variable:

z = (p - 1/num_classes) / sqrt(p * (1 - p))

The denominator sqrt(p * (1 - p)) is the standard deviation of a Bernoulli(p) variable — the fundamental unit of uncertainty for a binary outcome. It is maximized at p = 0.5 (maximum uncertainty) and collapses toward zero as p approaches 0 or 1 (high certainty). Dividing by this quantity means the z‑score is not just the raw distance from the base rate, but that distance normalized by how uncertain the prediction itself is. This z‑score is then passed through the standard normal CDF and mapped to the interval [−1, 1]:

signal = 2 * norm.cdf(z) - 1

When a predicted side pred (+1 for long, −1 for short) is provided, the final signed bet size becomes pred × signal. Otherwise, only the unsigned magnitude is returned. The resulting signal is zero when the prediction equals the base rate (no edge, no position), monotonically increasing in confidence, and saturates — approaching ±1 asymptotically — rather than growing without bound.
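The transformation described above can be sketched in a few lines of standard-library Python. This is a minimal illustration of the formulas in this section, not the afml implementation; the function name signal_from_probability and its arguments are hypothetical.

```python
from statistics import NormalDist


def signal_from_probability(p: float, num_classes: int = 2, side: int = 1) -> float:
    """Map a predicted probability to a signed bet size in (-1, 1).

    Hypothetical helper that mirrors the formulas in the text.
    """
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    base_rate = 1.0 / num_classes
    # Distance from the no-skill base rate, scaled by the Bernoulli std. dev.
    z = (p - base_rate) / (p * (1.0 - p)) ** 0.5
    # 2 * Phi(z) - 1 maps the z-score into (-1, 1)
    magnitude = 2.0 * NormalDist().cdf(z) - 1.0
    return side * magnitude


for p in (0.50, 0.52, 0.70, 0.92):
    print(f"p={p:.2f} -> long signal {signal_from_probability(p):+.3f}")
```

Passing side=-1 flips the sign of the same magnitude, and num_classes=3 moves the zero point of the curve to p = 1/3.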

Why use the normal CDF? Under the assumption that the predicted probability is drawn from a normal distribution of prediction errors around the true probability, the z‑score follows a standard normal distribution. The CDF of that distribution is therefore the natural mapping from "how many standard deviations above the null is this prediction" to "what fraction of predictions with this z‑score are genuinely informative." It provides a continuous, differentiable, bounded mapping that is stable under the kinds of probability estimates real models produce, and it has the correct qualitative shape — steep near the null and flat near the extremes.

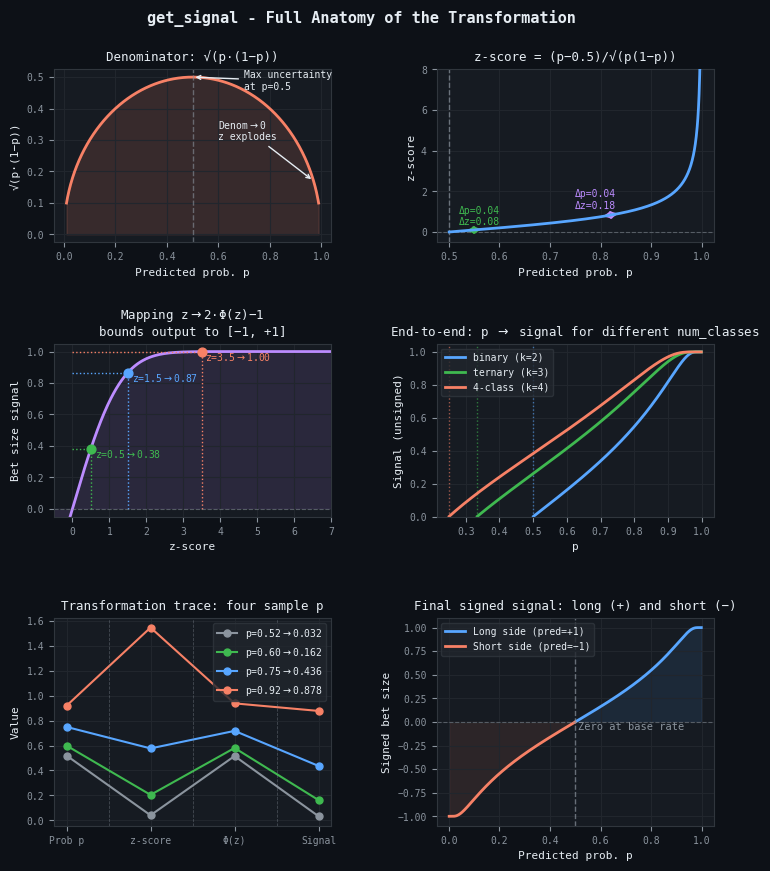

Figure 1. Full anatomy of the get_signal transformation

- Top-left: The Bernoulli standard deviation denominator is maximized at p=0.5 and collapses toward zero near p=0 and p=1. As the denominator approaches zero, the z‑score grows without bound.

- Top-right: The same absolute increment Δp=0.04 produces a much larger Δz near p=0.9 than near p=0.5, because the denominator is smaller at higher probabilities. The z‑score is a confidence-normalized distance from the base rate.

- Middle-left: The standard normal CDF compresses the unbounded z‑score into the interval [−1, +1]. Three sample points illustrate the non-linear mapping: the same Δz produces a larger change in signal near z=0 than near z=3.

- Middle-right: The complete transformation for binary (k=2), ternary (k=3), and 4-class (k=4) problems. Each curve is zero at its respective base rate 1/k and approaches 1 asymptotically.

- Bottom-left: The value at each stage of the pipeline (p → z-score → Φ(z) → signal) for four representative predicted probabilities, illustrating how p=0.92 maps to a near-maximum signal while p=0.52 maps to a near-zero signal.

- Bottom-right: The signed bet size for the long side (predicted class +1) and the short side (predicted class −1). Both are zero at the base rate p=0.5 and saturate toward ±1 at the extremes.

Averaging Active Signals: The Concurrency Fix

The z‑score transformation addresses the confidence problem. The averaging layer — implemented in avg_active_signals (AFML Snippet 10.2) — addresses the concurrency problem.

Consider a strategy whose triple‑barrier labels have a typical duration of ten bars. At any given moment, between four and twelve signals from the past ten bars are still open — their labels have been assigned but their holding periods have not expired. If you simply use each signal's get_signal output as that signal's position size, the strategy is implicitly holding multiple overlapping positions simultaneously, each sized as if it were the only open trade.

avg_active_signals corrects this by computing, at every point in time, the average bet size across all concurrently active signals. It takes a DataFrame containing a signal column (the per‑observation bet sizes from get_signal) and a t1 column (the end time of each bet). For each timestamp in the combined set of start and end times, it computes the mean signal across all bets active at that time. The result is a continuous‑time exposure profile: at each moment, the portfolio holds a single averaged position that reflects the collective conviction of all simultaneously active signals. The implementation uses a Numba‑accelerated inner loop with @njit(parallel=True) to make this computation feasible at production scale.
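The averaging step can be illustrated without pandas or Numba. The sketch below is a simplified stand-in for avg_active_signals, assuming integer bar times and treating a bet as active on the half-open interval from its start up to (but not including) its end; the function name average_active and the sample bets are made up for illustration.

```python
from typing import List, Tuple

# (start_bar, end_bar, signal): a bet is treated as active on start <= t < end
Bet = Tuple[int, int, float]


def average_active(bets: List[Bet]) -> List[Tuple[int, float, float]]:
    """Return (time, mean_signal, summed_signal) at every bet start or end time."""
    change_points = sorted({t for start, end, _ in bets for t in (start, end)})
    profile = []
    for t in change_points:
        active = [s for start, end, s in bets if start <= t < end]
        total = sum(active)
        mean = total / len(active) if active else 0.0
        profile.append((t, mean, total))
    return profile


# Hypothetical overlapping bets: three longs and one weak short
bets = [(0, 10, 0.6), (2, 12, 0.8), (4, 14, 0.7), (6, 16, -0.2)]
for t, mean, total in average_active(bets):
    print(f"t={t:>2}  mean={mean:+.2f}  naive sum={total:+.2f}")
```

The mean column always stays within [−1, 1] because it averages values that are themselves in that range, while the naive sum exceeds 1 as soon as enough same-side bets overlap.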

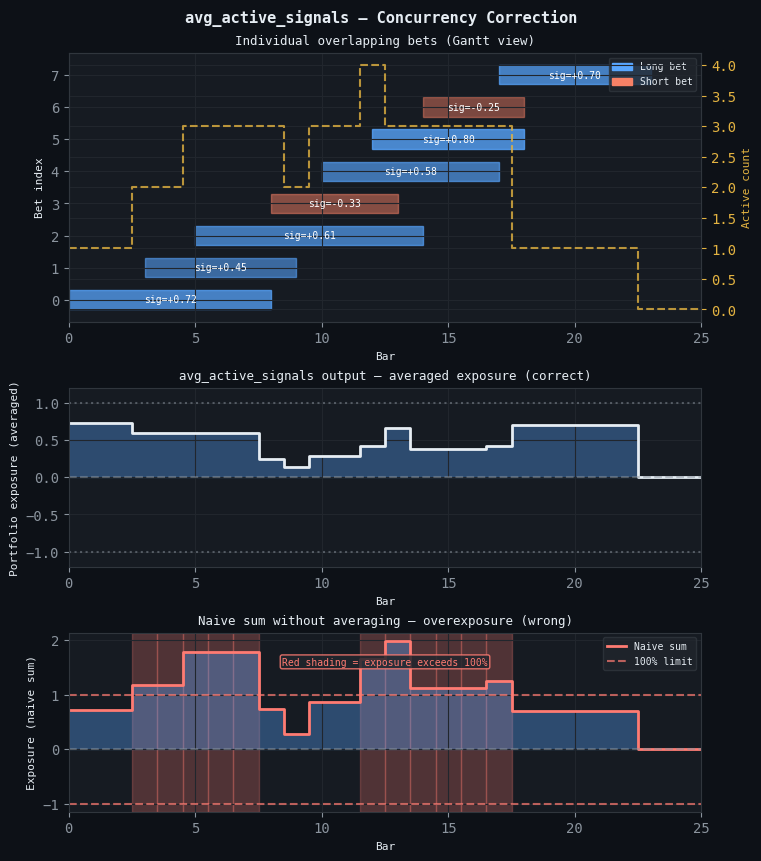

Figure 2. Three-panel illustration of avg_active_signals.

- Top: A Gantt chart of eight overlapping bets, color-coded by side (blue = long, orange = short) and opacity-scaled by signal strength. The yellow dashed step line on the right axis shows the number of concurrently active bets at each bar.

- Center: The output of avg_active_signals — the mean of all simultaneously active signals at each bar. Portfolio exposure is bounded within ±1 at all times.

- Bottom: The raw sum of all active signals at each bar without averaging. Red-shaded columns mark every point where total exposure exceeds 100% of capital; at peak signal density the naive sum reaches 1.78 — 78% above the intended maximum.

Discretization to Prevent Overtrading

After averaging, the signal is still a continuous value that may shift marginally at every new bar. AFML Snippet 10.3 provides a simple but essential discretization:

```python
signal1 = (signal0 / step_size).round() * step_size
signal1[signal1 > 1] = 1    # Cap
signal1[signal1 < -1] = -1  # Floor
```

A step_size of 0.05 means the strategy will only adjust its position when the required change exceeds 5% of maximum. This filters out micro‑adjustments whose expected P&L contribution is dominated by transaction costs. For a strategy trading standard FX lots with a $5/lot commission, this threshold typically prevents roughly 60–80% of would‑be position adjustments without meaningfully reducing signal fidelity.
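A small worked example shows how the rounding suppresses micro-adjustments. This is a scalar sketch of the same rounding rule, assuming a hypothetical helper named discretize and an invented sequence of averaged signals; it does not reproduce the 60–80% figure, which depends on the strategy.

```python
def discretize(signal: float, step_size: float) -> float:
    """Round a signal to the nearest multiple of step_size and clip to [-1, 1]."""
    stepped = round(signal / step_size) * step_size
    return max(-1.0, min(1.0, stepped))


# Hypothetical averaged signal drifting slowly upward bar by bar
raw = [0.102, 0.105, 0.109, 0.121, 0.118, 0.131, 0.127]
stepped = [discretize(s, 0.05) for s in raw]

# Count how many bar-to-bar transitions would require a position change
raw_changes = sum(1 for a, b in zip(raw, raw[1:]) if a != b)
stepped_changes = sum(1 for a, b in zip(stepped, stepped[1:]) if abs(a - b) > 1e-12)
print(stepped)
print(f"adjustments: raw={raw_changes}, discretized={stepped_changes}")
```

Every bar changes the raw signal, but the discretized signal moves only when the signal crosses the midpoint between two 0.05 steps.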

Complete Usage Example

```python
from afml.bet_sizing.bet_sizing import bet_size_probability

# events: pd.DataFrame with datetime index and 't1' column (label end times)
# prob: pd.Series of predicted probabilities from your classifier
# sides: pd.Series of +1 (long) / -1 (short) primary model predictions
bet_sizes = bet_size_probability(
    events=events,
    prob=prob,
    num_classes=2,
    pred=sides,
    step_size=0.05,       # 5% position increments
    average_active=True,  # handle overlapping triple-barrier labels
)

# bet_sizes: pd.Series indexed by bar time, values in [-1, 1]
# Positive = long, Negative = short, 0 = flat
# Evaluated at both the open and close of every active bet
```

When to Use

Use this method whenever the model outputs class probabilities, which is the case for virtually all classifiers in scikit‑learn. The average_active=True option should be treated as the default for any strategy built on triple‑barrier labels — omitting it is equivalent to assuming your labels never overlap, which is almost never true. The method works with any number of classes via the num_classes parameter and is the natural first‑stage sizer in a two‑stage architecture where a Kelly multiplier is applied as a second stage, as described in Part 11.

Dynamic Sizing with Forecast Prices (bet_size_dynamic)

Not all models output class probabilities. A mean‑reversion model might predict the price level at which a spread will revert. A fundamental value model might estimate a fair price. A pairs trading model might predict the equilibrium ratio between two assets. In these cases, the natural model output is a continuous forecast price rather than a probability, and the sizing question becomes: as the market price diverges from the forecast, how large a position is warranted?

AFML Snippet 10.4 provides two functional forms: sigmoid and power. Both are governed by a single calibration parameter w.

The Sigmoid Form and Its Geometry

Let price_div = forecast_price − market_price. The sigmoid bet size is:

bet_size = price_div / sqrt(w + price_div**2)

This function is zero at price_div = 0, bounded in (−1, +1), and odd‑symmetric. The parameter w controls the slope: small w produces a steep, step‑like function (aggressive sizing); large w produces a gradual function (conservative sizing). Critically, the sigmoid always has the same qualitative S‑shape — w controls only the width of the transition, not the fundamental curvature of the response.
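The effect of w on the sigmoid can be checked numerically. The snippet below is a minimal sketch of the formula above, with a hypothetical function name and arbitrary sample values of w and divergence.

```python
import math


def bet_size_sigmoid(w: float, price_div: float) -> float:
    """Sigmoid bet size: price_div / sqrt(w + price_div**2), with w > 0."""
    return price_div / math.sqrt(w + price_div ** 2)


# Same divergences, three illustrative values of w
for w in (1.0, 25.0, 400.0):
    row = ", ".join(f"{bet_size_sigmoid(w, x):+.2f}" for x in (-10, -2, 0, 2, 10))
    print(f"w={w:>5}: {row}")
```

At any fixed divergence the bet size shrinks as w grows, and each row is antisymmetric around zero divergence.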

The Power Form and When Expected Value Is Non-Linear

The power form uses a structurally different functional shape:

bet_size = sign(price_div) * abs(price_div)**w

This requires |price_div| ≤ 1 — the divergence must be normalized. The qualitative shape changes fundamentally with w: for w < 1, the function is concave — it rises steeply near zero (positions build rapidly at small divergences); for w = 1, it is exactly linear; for w > 1, it is convex — it stays low at small divergences and accelerates at large ones. This is the structural difference that makes the power form applicable where the sigmoid is not.

The power form is particularly useful when the expected value of the trade increases non‑linearly with divergence. For a mean‑reversion strategy, the relationship between divergence and expected profit is typically convex. A small deviation from fair value could easily be noise, so the probability of a genuine, profitable reversion starts low and then grows faster than the divergence itself as the mispricing becomes statistically significant.

The optimal sizing function in this regime should therefore also be convex — holding back capital at small, noisy divergences and deploying aggressively when the signal is statistically significant. The power form with w > 1 achieves exactly this shape, which the sigmoid cannot produce regardless of how w is calibrated. For a momentum or breakout strategy, the relationship reverses: the initial breakout carries most of the information, and as divergence grows the trade becomes more crowded and mean‑reversion forces grow. The power form with w < 1 matches this concave EV curve.
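The three curvature regimes of the power form are easy to see with a few sample points. This is a sketch of the formula above only; the function name bet_size_power and the chosen values of w are illustrative.

```python
import math


def bet_size_power(w: float, price_div: float) -> float:
    """Power bet size: sign(x) * |x|**w for a normalized divergence |x| <= 1."""
    if abs(price_div) > 1.0:
        raise ValueError("price_div must be normalized to [-1, 1]")
    return math.copysign(abs(price_div) ** w, price_div)


# Concave (w < 1), linear (w = 1), and convex (w > 1) responses
for w in (0.5, 1.0, 2.5):
    row = ", ".join(f"{bet_size_power(w, x):.3f}" for x in (0.1, 0.5, 0.9))
    print(f"w={w}: {row}")
```

At a small divergence such as 0.1, the concave form already commits a sizable position while the convex form stays close to flat, which is the behavior the mean-reversion and momentum arguments above rely on.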

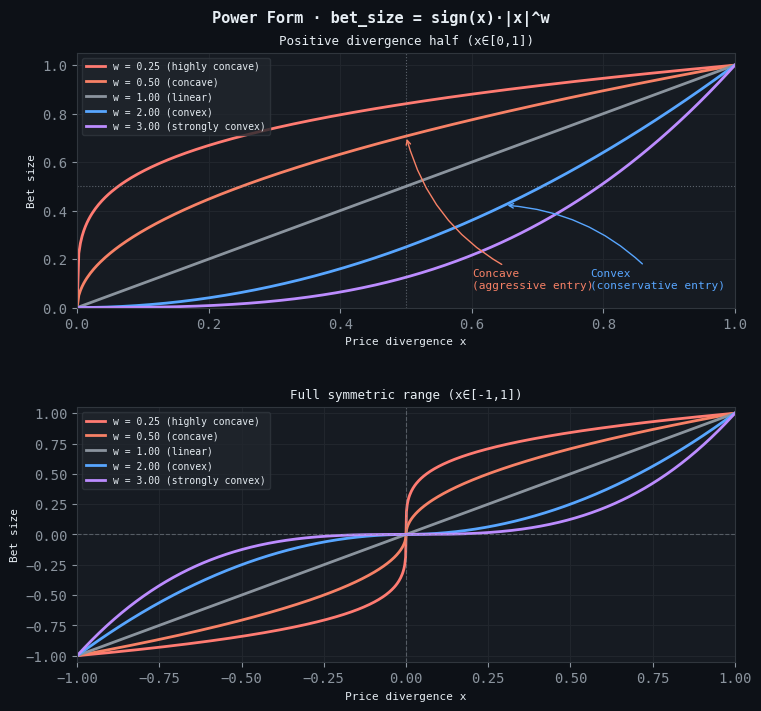

Figure 3. Power form curvature taxonomy

- Top: Positive divergence half (x∈[0,1]), showing the five qualitative families across w. w < 1 is concave — bet size builds rapidly near zero (aggressive early entry). w = 1 is exactly linear. w > 1 is convex — bet size is suppressed at small divergences and accelerates at large ones.

- Bottom: Full symmetric range (x∈[−1,1]). The same five curves extended to negative divergence, confirming odd symmetry. The sigmoid form, by contrast, always produces the same S-shape regardless of its calibration parameter.

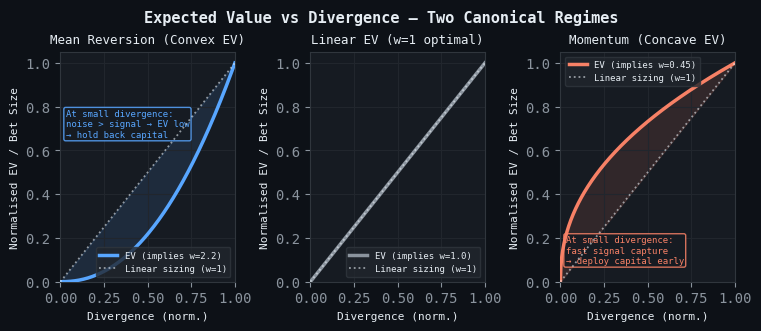

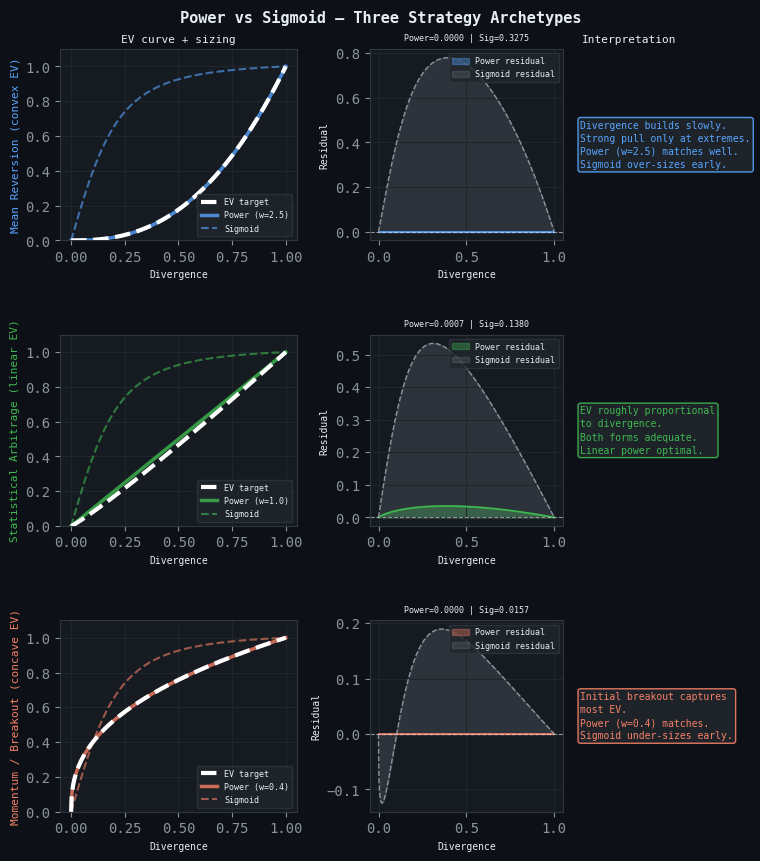

Figure 4. Two canonical EV-divergence regimes

- Left — Mean Reversion (Convex EV): EV accelerates at large divergences, where the mispricing is statistically significant and the probability of genuine reversion grows faster than the divergence itself. The implied optimal w is 2.2. The shaded region is capital misallocated by a linear sizing rule.

- Center — Linear EV (w=1 optimal): EV is proportional to divergence throughout. The dotted white reference coincides with the EV curve; no systematic misallocation from a linear sizing rule.

- Right — Momentum / Breakout (Concave EV): EV is captured largely at the initial signal and decelerates as the trade becomes crowded. The implied optimal w is 0.45. The shaded region is capital left on the table by under-sizing the initial breakout.

Figure 5. Power versus sigmoid sizing for three strategy archetypes

- Left column — EV curve + sizing: The EV target (white dashed), the best-fit power sizing (solid), and the sigmoid sizing (dashed, same color) for each archetype.

- Center column — Residual (sizing − EV): The signed difference between each sizing curve and the EV target. A residual above zero means the sizing function over-allocates relative to EV; below zero means it under-allocates.

- Right column — Interpretation: A concise summary of which form fits the archetype and why.

- Top row (Mean Reversion): Convex EV — power w=2.5 tracks throughout; sigmoid over-sizes at small divergences where EV is lowest.

- Middle row (Statistical Arbitrage): Linear EV — both forms adequate; linear power is the correct default.

- Bottom row (Momentum / Breakout): Concave EV — power w=0.4 matches the rapid early capture; sigmoid under-sizes the critical initial breakout.

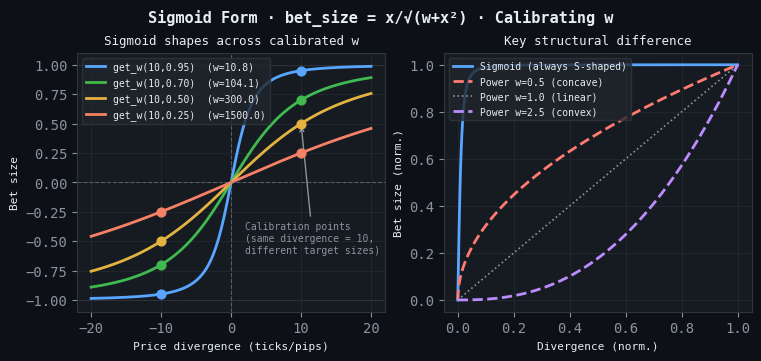

Calibrating w

Rather than guessing w, the function get_w computes it from a calibration target: "I want a bet size of cal_bet_size when the divergence is cal_divergence." For the sigmoid form, this inverts the formula analytically. For the power form, an optimal w can be found by fitting it to an empirical EV‑divergence curve estimated from the backtest.
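Both calibration routes can be sketched briefly. Solving the sigmoid formula m = x / sqrt(w + x²) for w gives w = x² (m⁻² − 1). For the power form, the sketch uses a simple grid search over candidate values of w against a target curve. The helper names are hypothetical, and the convex target x² is synthetic, standing in for an EV-divergence curve that would in practice be estimated from a backtest.

```python
import math


def w_sigmoid(cal_divergence: float, cal_bet_size: float) -> float:
    """Solve m = x / sqrt(w + x**2) for w, given 0 < |m| < 1."""
    return cal_divergence ** 2 * (cal_bet_size ** -2 - 1.0)


def fit_power_w(divs, ev_targets, grid):
    """Pick the w in grid whose curve |x|**w has the lowest MSE against the target."""
    def mse(w):
        return sum((abs(x) ** w - ev) ** 2 for x, ev in zip(divs, ev_targets)) / len(divs)
    return min(grid, key=mse)


# Sigmoid: round-trip check that the calibrated w reproduces the target bet size
w = w_sigmoid(10.0, 0.95)
round_trip = 10.0 / math.sqrt(w + 10.0 ** 2)
print(f"sigmoid w={w:.4f}, bet size at divergence 10 = {round_trip:.4f}")

# Power: synthetic convex EV curve on normalized divergences in (0, 1]
divs = [i / 20 for i in range(1, 21)]
ev = [x ** 2 for x in divs]
best_w = fit_power_w(divs, ev, grid=[0.5, 1.0, 1.5, 2.0, 2.5, 3.0])
print(f"best-fit power w = {best_w}")
```

A finer grid or a numerical optimizer could replace the coarse grid; the coarse version is used here only to keep the example short.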

```python
from afml.bet_sizing.ch10_snippets import get_w

# Calibrate sigmoid: at 10 pips divergence, bet size should be 0.95
w = get_w(price_div=10, m_bet_size=0.95, func='sigmoid')
# w = 10**2 * (0.95**-2 - 1) ≈ 10.8 (smaller w: steeper function, aggressive sizing)

# Calibrate sigmoid: at 10 pips divergence, bet size should be 0.50
w = get_w(price_div=10, m_bet_size=0.50, func='sigmoid')
# w = 10**2 * (0.50**-2 - 1) = 300 (larger w: shallower function, conservative sizing)
```

Figure 6. Sigmoid form · bet_size = x/√(w+x²) · Calibrating w

- Left — Sigmoid shapes across calibrated w: Four sigmoid curves, each passing through a calibration point (circle) where bet_size_sigmoid(w, 10) = cal_bet_size. All share the same S-shape; w controls only the width of the transition zone.

- Right — Key structural difference: The sigmoid compared with three power-form curves on the same normalized scale. The sigmoid cannot produce the purely convex shape of w=2.5 nor the purely concave shape of w=0.5, regardless of how it is calibrated.

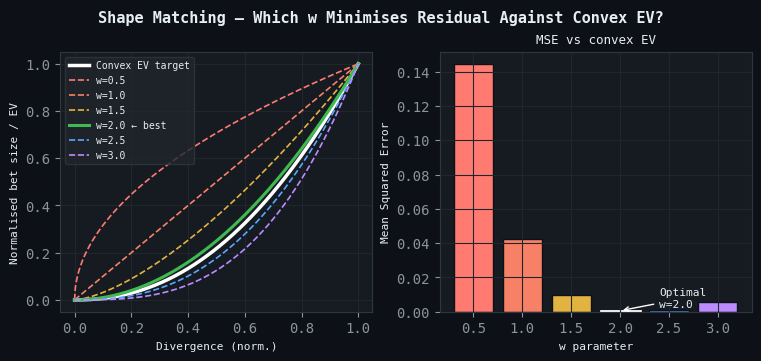

Figure 7. Shape matching — which w minimizes residual against convex EV?

- Left — Curve overlay: Power-form curves at six values of w plotted against the convex EV target (white). The best-fit curve (solid) visibly tracks the target most closely; all others are dashed.

- Right — MSE bar chart: Mean squared error between each power curve and the EV target. The optimal w (2.0) is highlighted with a white border. This calibration procedure can be applied to any empirical EV-divergence curve estimated from a backtest.

Target Position and Limit Price

bet_size_dynamic returns not just the normalized bet size but also the target integer position (t_pos) and a limit price (l_p). The limit price is computed by integrating the inverse of the bet‑size function across each discrete position unit from the current position to the target, yielding the average price at which execution is economically break‑even given the forecast. A trader or execution algorithm can use this as the upper bound for limit orders.
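The target position and limit price logic can be sketched for the sigmoid form as follows. This follows the description above (truncate the scaled bet size to an integer, then average the inverse price over each unit traded), but it is a simplified illustration, not the bet_size_dynamic code. All function names and the sample inputs are assumptions.

```python
import math


def w_sigmoid(cal_divergence, cal_bet_size):
    """Calibrate w so the sigmoid returns cal_bet_size at cal_divergence."""
    return cal_divergence ** 2 * (cal_bet_size ** -2 - 1.0)


def bet_size_sigmoid(w, price_div):
    return price_div / math.sqrt(w + price_div ** 2)


def target_position(w, forecast, market, max_pos):
    """Integer target position: bet size scaled by max_pos, truncated toward zero."""
    return int(bet_size_sigmoid(w, forecast - market) * max_pos)


def inverse_price(forecast, w, m):
    """Market price at which the sigmoid bet size equals m (|m| < 1)."""
    return forecast - m * math.sqrt(w / (1.0 - m ** 2))


def limit_price(t_pos, pos, forecast, w, max_pos):
    """Average of the inverse prices over each unit traded between pos and t_pos."""
    if t_pos == pos:
        return float("nan")  # nothing to trade
    sgn = 1 if t_pos > pos else -1
    units = range(pos + sgn, t_pos + sgn, sgn)
    total = sum(inverse_price(forecast, w, j / max_pos) for j in units)
    return total / abs(t_pos - pos)


# Hypothetical inputs: flat book, market at 100, forecast at 115
w = w_sigmoid(10.0, 0.95)
t_pos = target_position(w, forecast=115.0, market=100.0, max_pos=100)
l_p = limit_price(t_pos, pos=0, forecast=115.0, w=w, max_pos=100)
print(f"target position = {t_pos}, limit price = {l_p:.2f}")
```

For a buy, the resulting limit price sits between the current market price and the forecast: paying more than this on average would mean holding a larger position than the sizing function justifies at those prices.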

Code Example

```python
from afml.bet_sizing.bet_sizing import bet_size_dynamic

result = bet_size_dynamic(
    current_pos=current_positions,
    max_pos=100,
    market_price=prices,
    forecast_price=forecasts,
    cal_divergence=10,  # calibrate: at 10 pips divergence...
    cal_bet_size=0.95,  # ...bet size is 0.95
    func='sigmoid',
)

# result: DataFrame with columns bet_size, t_pos, l_p
```

When to Use

Use this method when the model outputs a forecast price rather than a class probability: regression models, fair‑value frameworks, mean‑reversion spread strategies, and any approach where the position should grow continuously as the market moves away from fair value. Choose the sigmoid when the EV‑divergence relationship is approximately linear or unknown. Choose the power form when you have enough historical data to characterize the EV‑divergence curve and it is demonstrably convex or concave.

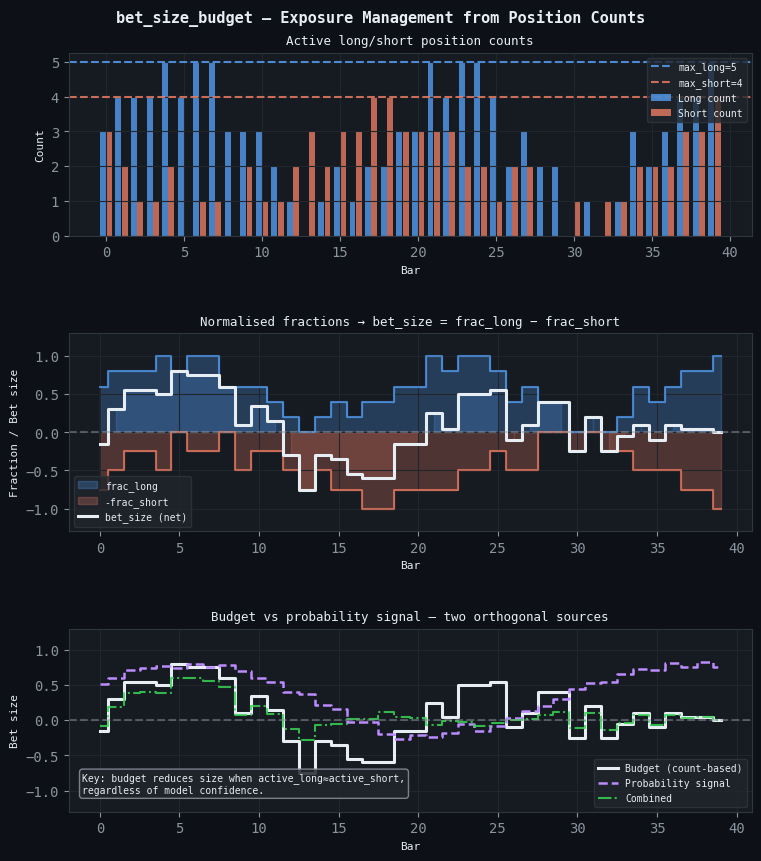

Budget-Constrained Sizing (bet_size_budget)

Some strategies provide only a binary direction without a confidence score. A simple trend‑following rule based on a moving average crossover, a momentum rank model, or a clustering‑based regime signal may generate only a buy or sell signal and a timestamp. In these cases, neither get_signal nor bet_size_dynamic is applicable.

bet_size_budget addresses this by treating position size as a function of the current state of the strategy's position book, not of any individual signal's confidence. It computes, at each timestamp, the number of concurrently active long bets and short bets using a Numba‑parallelized inner loop. The bet size for each observation is then the normalized long‑short imbalance:

bet_size = (active_long / max_long) - (active_short / max_short)

where max_long and max_short are the historical maxima of concurrent long and short bets respectively. When the strategy has its maximum historical long concentration with no active shorts, the bet size is +1. When both sides are equally concentrated relative to their maxima, the bet size is zero.

The economic intuition is that of a capacity constraint. The strategy knows how busy it typically gets. When it is unusually long‑biased relative to its own history, the portfolio should be long. When the long and short signals roughly balance, the portfolio should be close to flat. In live trading, the historical maxima should be updated with a rolling window rather than the full backtest, since the maximum concurrent positions observed historically may understate or overstate future activity.
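The budget calculation reduces to counting and normalizing. Below is a plain-Python sketch under the assumption that a bet is active from its start bar up to (but not including) its end bar; the function name budget_bet_sizes and the sample bets are illustrative and are not part of the afml module.

```python
from typing import List, Tuple

# (start_bar, end_bar, side): side is +1 for long, -1 for short
Bet = Tuple[int, int, int]


def budget_bet_sizes(bets: List[Bet]) -> List[float]:
    """Bet size at each bet's start time from normalized long/short concurrency."""
    active_long, active_short = [], []
    for t, _, _ in bets:
        active_long.append(sum(1 for s, e, side in bets if side > 0 and s <= t < e))
        active_short.append(sum(1 for s, e, side in bets if side < 0 and s <= t < e))
    max_long, max_short = max(active_long), max(active_short)
    sizes = []
    for n_long, n_short in zip(active_long, active_short):
        # Guard against a side that never appears in the history
        frac_long = n_long / max_long if max_long > 0 else 0.0
        frac_short = n_short / max_short if max_short > 0 else 0.0
        sizes.append(frac_long - frac_short)
    return sizes


# Hypothetical book: a cluster of longs followed by a cluster of shorts
bets = [(0, 5, 1), (1, 6, 1), (2, 7, 1), (3, 8, -1), (6, 9, -1), (7, 10, 1)]
sizes = budget_bet_sizes(bets)
for (start, _, side), size in zip(bets, sizes):
    print(f"t={start} side={side:+d} bet_size={size:+.2f}")
```

In this sample the size reaches +1 when all three longs overlap with no shorts, then turns negative once the shorts dominate relative to their own maximum.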

Figure 8. Three panels illustrating bet_size_budget

- Top: Raw counts of concurrently active long and short bets at each bar. Horizontal dashed lines mark the historical maxima (max_long, max_short) used as normalization denominators.

- Center: frac_long and −frac_short as filled step areas; the white step line is the net bet size (frac_long − frac_short), bounded within [−1, +1] by construction.

- Bottom: The budget signal (white, count-based, no probability), a synthetic probability signal (purple dashed, no concurrency awareness), and their product (green dash-dot). The budget method knows the portfolio regime; the probability signal knows model confidence. Their product captures both dimensions simultaneously.

When to Use

Use this method when the model generates only directional signals without confidence scores, or as a secondary overlay on top of a probability‑based primary sizer. Applied as an overlay, it scales the signal‑level size by a factor that ensures total exposure never exceeds historical norms, even when the primary sizer is unaware of the concurrent position count.

Reserve Sizing via Mixture of Gaussians (bet_size_reserve)

The budget‑constrained method uses the long‑short imbalance as an input but maps it through a linear function. The reserve method replaces that linear map with a data‑driven shape learned from the empirical distribution of the imbalance itself. It is the most statistically sophisticated of the four approaches.

The Two-Regime Intuition

Consider the distribution of the concurrent position imbalance c_t = active_long − active_short over a long history of a typical trend‑following strategy. This distribution tends to be bimodal. There are many observations where longs and shorts roughly cancel (a period of indecision or regime transition), clustering the distribution near zero. And there are many observations where the strategy has taken a strong directional view, clustering the distribution at large positive or negative values. A unimodal Gaussian would fit this distribution poorly. A mixture of two Gaussians — one centered near zero for the neutral regime, one centered away from zero for the directional regime — captures the bimodality accurately.

This is the premise of bet_size_reserve. The fitted mixture CDF becomes the sizing function: its output is zero when c_t = 0, approaches ±1 as c_t becomes extreme, and has whatever intermediate shape the empirical distribution imposes.

The EF3M Algorithm

The parameters of the mixture, namely the means (μ₁, μ₂), standard deviations (σ₁, σ₂), and mixing probability (p₁), are estimated using the EF3M algorithm (Exact Fit of the first 3 Moments), developed by López de Prado and Foreman (2014). As the name indicates, the algorithm matches the first three moments of the empirical distribution exactly; the fourth and fifth moments are then used to guide the search over the remaining degrees of freedom of the five‑parameter mixture. The implementation in ef3m.py runs n_runs independent fitting attempts (default 100) from different starting conditions and selects the most likely parameter set via a kernel density estimate over the results. EF3M requires a substantial history (several hundred bets at minimum, preferably several thousand) for stable parameter estimates.

The Mixture CDF Sizing Formula

Once the parameters are estimated, the bet size for a given c_t is:

```python
# F(x) = p1*norm.cdf(x, mu1, s1) + (1-p1)*norm.cdf(x, mu2, s2)
if c_t >= 0:
    bet_size = (F(c_t) - F(0)) / (1 - F(0))
else:
    bet_size = (F(c_t) - F(0)) / F(0)
```

This formula maps the CDF conditional on the sign of c_t, producing a value in [−1, 1] that is zero at c_t = 0 and approaches ±1 as c_t becomes extreme. The shape is determined by the fitted mixture, not by a pre‑specified formula, and produces a customized sizing function.
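A runnable version of this formula needs only the mixture CDF. The sketch below uses the standard library's NormalDist and a made-up parameter set (a neutral component at zero and a directional component at +6); in practice the parameters would come from the EF3M fit, and the function names here are hypothetical.

```python
from statistics import NormalDist


def mixture_cdf(x, mu1, sigma1, mu2, sigma2, p1):
    """CDF of a two-component Gaussian mixture with weight p1 on the first component."""
    return p1 * NormalDist(mu1, sigma1).cdf(x) + (1.0 - p1) * NormalDist(mu2, sigma2).cdf(x)


def reserve_bet_size(c_t, params):
    """Map the concurrent imbalance c_t to [-1, 1] through the mixture CDF."""
    f_c = mixture_cdf(c_t, *params)
    f_0 = mixture_cdf(0.0, *params)
    if c_t >= 0:
        return (f_c - f_0) / (1.0 - f_0)
    return (f_c - f_0) / f_0


# Illustrative parameters only: (mu1, sigma1, mu2, sigma2, p1)
params = (0.0, 1.5, 6.0, 2.0, 0.6)
for c_t in (-6, -2, 0, 2, 6, 10):
    print(f"c_t={c_t:>3} -> bet_size={reserve_bet_size(c_t, params):+.3f}")
```

Because F(0) is not 0.5 for an asymmetric mixture, the positive and negative branches are rescaled separately, which is what lets the curve be asymmetric around zero.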

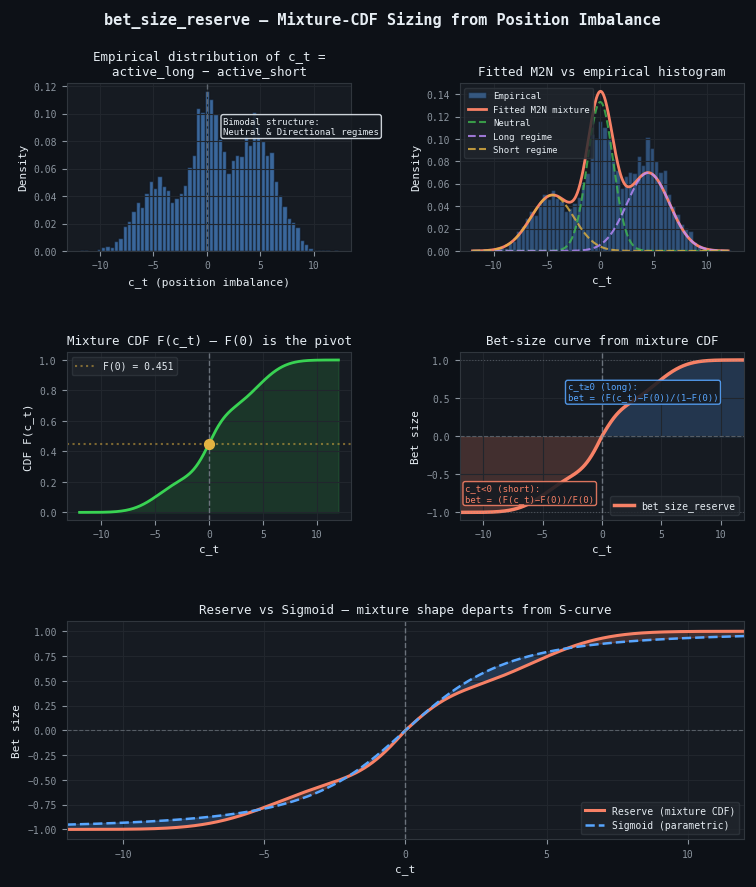

Figure 9. Five panels illustrating the full EF3M pipeline

- Top-left: Histogram of the concurrent position imbalance c_t = active_long − active_short. The bimodal structure — one cluster near zero (neutral / transitional regime) and one at larger values (directional regime) — motivates the mixture-of-Gaussians model.

- Top-right: The fitted mixture PDF (orange) overlaid on the histogram, with the two individual Gaussian components shown as dashed lines: neutral (green) and directional regime (purple).

- Middle-left: The cumulative distribution function of the fitted mixture. The pivot point F(0) is marked; this value normalizes the CDF into a bet size on each side of zero.

- Middle-right: The sizing function derived from the mixture CDF. For c_t ≥ 0: bet = (F(c_t)−F(0))/(1−F(0)). For c_t < 0: bet = (F(c_t)−F(0))/F(0). The curve is zero at c_t=0 and approaches ±1 asymptotically.

- Bottom: The data-driven reserve curve (orange) compared with a sigmoid calibrated to the same extreme value (blue dashed). Shaded regions show where the two methods diverge; the asymmetry and non-S shape of the reserve curve reflects the empirical position distribution rather than a pre-specified functional form.

Code Example

```python
from afml.bet_sizing.bet_sizing import bet_size_reserve

result, params = bet_size_reserve(
    events_t1=events['t1'],
    sides=sides,
    fit_runs=100,
    variant=2,  # EF3M with first 5 moments
    return_parameters=True,
)

# result: DataFrame with t1, side, active_long, active_short, c_t, bet_size
# params: dict with mu_1, mu_2, sigma_1, sigma_2, p_1
```

When to Use

Use this method when you have sufficient historical bets for EF3M to produce stable parameter estimates, when the distribution of concurrent positions is demonstrably bimodal, and when you want the sizing function to be fully data‑driven. Re‑estimate the mixture parameters periodically — quarterly or whenever a significant regime shift has occurred — as the parameters depend on the joint distribution of signal activity, which itself reflects market structure.

Conclusion

The four methods in this article address each of the failure modes identified in the section "Why Naive Position Sizing Fails". bet_size_probability with average_active=True handles confidence‑aware, concurrency‑corrected signal sizing for classifier‑based strategies. bet_size_dynamic with its sigmoid and power forms handles forecast‑price strategies, with the power form's flexible curvature matching the EV‑divergence relationship when it is demonstrably non‑linear. bet_size_budget provides exposure management when no confidence score is available. bet_size_reserve learns the sizing function from the data itself when the distribution of concurrent positions is bimodal and the history is sufficient to characterize it.

Key takeaways:

- Always account for label overlap. Set average_active=True unless your labels are provably non‑overlapping. The concurrency explosion problem is not hypothetical — it is a systematic risk in every triple‑barrier strategy.

- Match the sizing function shape to the EV‑divergence relationship. For mean‑reversion strategies with convex EV, use the power form with w > 1. For momentum strategies with concave EV, use w < 1. For unknown or linear EV, the sigmoid is the robust default.

- Calibrate w explicitly. Use get_w with a target divergence and bet size that corresponds to a genuine economic assumption. Never guess w.

- Be aware that sizing quality depends on probability quality. These methods propagate whatever confidence estimates they receive directly into position sizes. The impact of miscalibration on position sizes and P&L is addressed in the upcoming calibration article.

The companion article (Part 11) applies the Kelly criterion to the sizing signal produced here. It uses a two‑stage architecture that adds payoff‑ratio awareness without disrupting concurrency correction. It also integrates prop‑firm risk constraints and runs a CPCV dynamic backtest across φ(N, k) combinatorial paths.

Attached Files

| # | File | Module | Description |
|---|---|---|---|
| 1. | bet_sizing.py | afml.bet_sizing | User‑level functions: bet_size_probability, bet_size_dynamic, bet_size_budget, bet_size_reserve. |
| 2. | ch10_snippets.py | afml.bet_sizing | Low‑level implementations: get_signal, avg_active_signals, discrete_signal, sigmoid and power families, get_w. Numba‑accelerated. |
| 3. | ef3m.py | afml.bet_sizing | EF3M algorithm. M2N class with mp_fit parallel fitting. Required by bet_size_reserve. |

Further Reading

- López de Prado, M. (2018). Advances in Financial Machine Learning. John Wiley & Sons.

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
