MetaTrader 5 Machine Learning Blueprint (Part 13): Implementing Bet Sizing in MQL5

Table of Contents

- Introduction

- Architecture Overview

- Core Data Structures and Utilities

- Probability-Based Sizing in MQL5

- Dynamic Forecast-Price Sizing in MQL5

- Budget-Constrained Sizing in MQL5

- Reserve Sizing via Mixture of Gaussians in MQL5

- Wiring the Pipeline: A Complete Expert Advisor

- Conclusion

- Attached Files

Introduction

Part 10 of this series derived four bet-sizing methods from first principles and showed their Python implementations in the afml.bet_sizing module. Each method solves a concrete problem: probability-based sizing propagates classifier confidence into position magnitude and corrects for label concurrency; dynamic sizing maps a continuous forecast-price divergence to a position through a calibrated functional form; budget-constrained sizing manages exposure when no confidence score exists; and reserve sizing learns the sizing curve entirely from data. The analytical foundations are now established. What remains is the translation problem: how do you run these methods inside MetaTrader 5, where every computation must fit inside a tick-driven event loop and where there is no SciPy, no NumPy, and no multiprocessing?

This article answers that question in practical terms. It presents four MQL5 include files: a shared utilities file with the data structures and statistical primitives, an EF3M fitter, a file of low-level snippet functions, and a user-level API that exposes one orchestration function per sizing method. A fifth file, the Expert Advisor, wires them together. The include files can be dropped into an existing Expert Advisor with minimal modification, and all four methods can be combined in a single EA that selects the appropriate sizer at runtime. The implementations are exact where exactness is tractable and numerically equivalent where it is not. The normal CDF is computed via a closed-form approximation with absolute error below 7.5e-8.

The EF3M mixture parameters are estimated by a multi-start analytic solve: for each of n_runs random seed pairs (μ₁, p₁), the remaining three parameters (μ₂, σ₁, σ₂) are derived in closed form from a 2×2 linear system built from the first three raw moments, and the candidate with the highest log-likelihood is retained. For the concurrent-bet count queries in the budget and reserve methods, the O(N²) nested-loop scan is replaced by an O(N log N) sweep-line over a sorted event array; the averaging step in the probability method (AvgActiveSignals) remains O(N²), since computing a mean signal over all active intervals requires signal values, not only counts.

After reading this article, you will have a complete, runnable MQL5 sizing system suitable for any classifier-driven EA from the earlier articles in this series. The system produces a signed position size in [−1, 1] at every new bar, a diagnostic struct that records every factor that shaped the size, and a limit-price output for execution when the dynamic method is active. Part 14 connects this sizing layer to the CPCV backtesting framework running inside the MetaTrader 5 Strategy Tester.

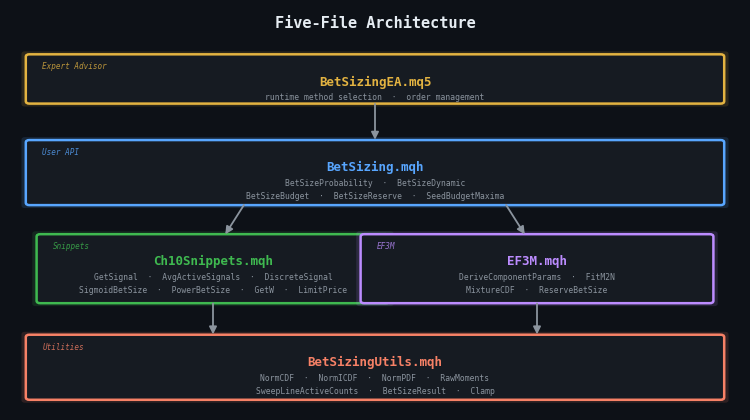

Architecture Overview

Before writing a single line of MQL5, it is worth mapping the dependency graph. The Python module has a clean two-layer structure: user-level orchestration functions call low-level snippet implementations. The MQL5 port preserves that separation and adds a third layer — a shared utility layer — because MQL5 provides none of the statistical infrastructure that Python inherits from NumPy and SciPy.

| Layer | File | Responsibility |
|---|---|---|
| Utilities | BetSizingUtils.mqh | Normal CDF/ICDF, moment computation, struct definitions, sweep-line event counter |
| EF3M | EF3M.mqh | Mixture-of-Gaussians parameter estimation from the first three raw moments |
| Snippets | Ch10Snippets.mqh | GetSignal, AvgActiveSignals, DiscreteSignal, sigmoid/power bet sizes, GetW, LimitPrice |
| User API | BetSizing.mqh | BetSizeProbability, BetSizeDynamic, BetSizeBudget, BetSizeReserve |
| Expert Advisor | BetSizingEA.mq5 | Wires classifier output to the selected sizer; sends orders |

The flowchart below shows the data flow on each new bar. The EA sits at the top and receives two inputs from the classifier pipeline built in earlier articles: a predicted probability array and a predicted side array. Depending on which sizing method is selected via an input parameter, the EA routes those inputs through the appropriate user-level function. Each user-level function calls one or more snippet-level functions, all of which call utilities. The result flows back up to the EA as a BetSizeResult struct, from which the EA extracts the signed position size and limit price.

Figure 1. Five-file dependency stack

- Expert Advisor layer: BetSizingEA.mq5 wires classifier output to the selected sizer and manages orders.

- User API layer: BetSizing.mqh exposes the four orchestration functions and the budget seeding helper.

- Snippets / EF3M layer: Ch10Snippets.mqh and EF3M.mqh implement the low-level mathematical primitives, each depending only on the utilities layer.

- Utilities layer: BetSizingUtils.mqh provides statistical primitives and shared data structures with no upstream dependencies.

One architectural decision deserves explicit mention. The Python module stores signals in pandas DataFrames indexed by datetime, which makes concurrency lookups trivially simple. MQL5 has no such structure. All time-indexed data is stored as parallel arrays sorted by bar index, and the sweep-line algorithm in BetSizingUtils.mqh replaces the DataFrame-based overlap detection. This substitution is not a simplification — it produces identical results and runs faster on large arrays because it avoids repeated linear scans.

Core Data Structures and Utilities

Every sizing method depends on three statistical primitives: the standard normal CDF, its inverse, and the computation of raw moments from a data array. MQL5 provides none of these natively. BetSizingUtils.mqh implements all three, along with the shared result struct and the sweep-line concurrency counter.

The Normal CDF Approximation

NormCDF uses the five-coefficient polynomial approximation listed as formula 26.2.17 in Abramowitz and Stegun's Handbook of Mathematical Functions. Its absolute error stays below 7.5e-8 across the full real line, which is roughly seven decimal places rather than seven significant figures, so relative accuracy degrades in the far tails. It requires only a handful of floating-point operations, which matters in a tick-driven loop where the CDF may be called thousands of times per second:

    //--- BetSizingUtils.mqh (excerpt)
    double NormCDF(double x) {
       // Abramowitz & Stegun 26.2.17, |error| < 7.5e-8
       double t    = 1.0 / (1.0 + 0.2316419 * MathAbs(x));
       double poly = t * (0.319381530 + t * (-0.356563782 + t * (1.781477937
                     + t * (-1.821255978 + t * 1.330274429))));
       double cdf  = 1.0 - (1.0 / MathSqrt(2.0 * M_PI)) * MathExp(-0.5 * x * x) * poly;
       return((x >= 0.0) ? cdf : 1.0 - cdf);
    }

    double NormICDF(double p) {
       // Beasley-Springer-Moro algorithm, accurate to 1e-7.
       // Central region uses the AS 111 rational form (Beasley & Springer 1977);
       // the 9-coefficient Chebyshev tail extension is from Moro (1995).
       static const double a[] = {2.50662823884, -18.61500062529,
                                  41.39119773534, -25.44106049637};
       static const double b[] = {-8.47351093090, 23.08336743743,
                                  -21.06224101826, 3.13082909833};
       static const double c[] = {0.3374754822726147, 0.9761690190917186,
                                  0.1607979714918209, 0.0276438810333863,
                                  0.0038405729373609, 0.0003951896511349,
                                  0.0000321767881768, 0.0000002888167364,
                                  0.0000003960315187};
       // Guard the endpoints: the tail branch evaluates log(-log(p))
       if(p <= 0.0) return(-DBL_MAX);
       if(p >= 1.0) return(DBL_MAX);
       double y = p - 0.5;
       if(MathAbs(y) < 0.42) {
          double r = y * y;
          return(y * (((a[3] * r + a[2]) * r + a[1]) * r + a[0]) /
                 ((((b[3] * r + b[2]) * r + b[1]) * r + b[0]) * r + 1.0));
       }
       double r = (y > 0.0) ? 1.0 - p : p;
       r = MathLog(-MathLog(r));
       double q = c[0] + r * (c[1] + r * (c[2] + r * (c[3] + r * (c[4]
                  + r * (c[5] + r * (c[6] + r * (c[7] + r * c[8])))))));
       return((y > 0.0) ? q : -q);
    }
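A quick way to gain confidence in a hand-ported CDF/inverse pair is a round-trip check: push a value through the CDF, back through the inverse, and confirm it lands close to where it started. The sketch below restates the two routines under the hypothetical names `PhiApprox` and `PhiInvApprox` with the same coefficients as the excerpt. It is written with the C-style math aliases (`fabs`, `exp`, `log`) that MQL5 accepts alongside `MathAbs` and friends, so the same text should also compile as plain C++ for offline checks; the `#ifndef __MQL5__` guard only pulls in `<math.h>` outside MetaEditor. The grid range and step are arbitrary choices for illustration.

```cpp
#ifndef __MQL5__      // standalone C++ build only; MQL5 has these math calls built in
#include <math.h>
#endif

// Hypothetical name; same polynomial as NormCDF in the excerpt
double PhiApprox(double x) {
   double t    = 1.0 / (1.0 + 0.2316419 * fabs(x));
   double poly = t * (0.319381530 + t * (-0.356563782 + t * (1.781477937
                 + t * (-1.821255978 + t * 1.330274429))));
   double tail = 0.3989422804014327 * exp(-0.5 * x * x) * poly;  // pdf(x) * poly
   return((x >= 0.0) ? 1.0 - tail : tail);
}

// Hypothetical name; same Beasley-Springer-Moro coefficients as NormICDF
double PhiInvApprox(double p) {
   double a[4] = {2.50662823884, -18.61500062529, 41.39119773534, -25.44106049637};
   double b[4] = {-8.47351093090, 23.08336743743, -21.06224101826, 3.13082909833};
   double c[9] = {0.3374754822726147, 0.9761690190917186, 0.1607979714918209,
                  0.0276438810333863, 0.0038405729373609, 0.0003951896511349,
                  0.0000321767881768, 0.0000002888167364, 0.0000003960315187};
   double y = p - 0.5;
   if(fabs(y) < 0.42) {
      double r = y * y;
      return(y * (((a[3] * r + a[2]) * r + a[1]) * r + a[0]) /
             ((((b[3] * r + b[2]) * r + b[1]) * r + b[0]) * r + 1.0));
   }
   double u = (y > 0.0) ? 1.0 - p : p;
   u = log(-log(u));
   double q = c[0] + u * (c[1] + u * (c[2] + u * (c[3] + u * (c[4]
              + u * (c[5] + u * (c[6] + u * (c[7] + u * c[8])))))));
   return((y > 0.0) ? q : -q);
}

// Largest |x - PhiInvApprox(PhiApprox(x))| on a grid from -3 to +3 in steps of 0.25
double MaxRoundTripError() {
   double worst = 0.0;
   for(int i = 0; i <= 24; i++) {
      double x   = -3.0 + 0.25 * i;
      double err = fabs(x - PhiInvApprox(PhiApprox(x)));
      if(err > worst) worst = err;
   }
   return(worst);
}
```

The round-trip error is largest in the tails, where a fixed absolute error in the CDF is divided by a small density when mapped back to x. That is one reason to read the error bound as absolute rather than relative.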

The BetSizeResult Struct

All four user-level functions return a single BetSizeResult struct. Carrying diagnostics alongside the sizing output means the EA can log every factor that shaped each position without a second pass through the data:

    struct BetSizeResult {
       double   bet_size;      // Signed position size in [-1, 1]
       double   t_pos;         // Target integer position (dynamic method only)
       double   l_p;           // Limit price (dynamic method only)
       double   raw_signal;    // Pre-averaging, pre-discretization signal
       double   avg_signal;    // Post-averaging, pre-discretization signal
       int      active_long;   // Concurrent active long bets at this bar
       int      active_short;  // Concurrent active short bets at this bar
       double   c_t;           // Long-short imbalance (budget/reserve methods)
       datetime bar_time;      // Bar open time this result corresponds to
    };

The Sweep-Line Concurrency Counter

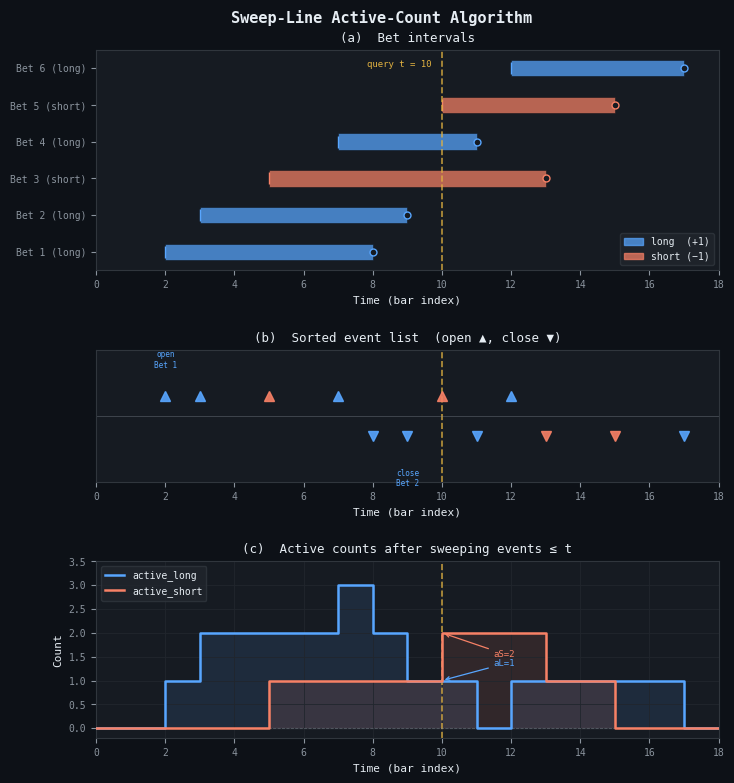

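The Python module detects concurrency by iterating over a DatetimeIndex and checking which signals' [start, t1) intervals cover each query time, which is O(N²) for large arrays. The sweep-line replacement sorts all interval start and end events into a single array, sweeps through it once, incrementing a counter on each start and decrementing on each end, and records the long and short counts at each query timestamp. The sweep itself is linear, so the total cost is set by the sort: O(N log N) with a comparison sort such as quicksort or merge sort. The excerpt below delegates to SortSLEvents, which its comment describes as an insertion sort; that is O(N²) in the worst case but close to linear when the events are already nearly sorted. The query times must be sorted in ascending order as well, because the event cursor only moves forward.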

    //--- Sweep-line active-count for parallel arrays of open/close times
    void SweepLineActiveCounts(
       const datetime &open_times[],   // Bet open timestamps
       const datetime &close_times[],  // Bet close timestamps (t1)
       const int      &sides[],        // +1 / -1
       const datetime &query_times[],  // Bar times to evaluate at (ascending)
       int            &active_long[],  // Output: active long count per query
       int            &active_short[]  // Output: active short count per query
    ) {
       int n_bets  = ArraySize(open_times);
       int n_query = ArraySize(query_times);
       ArrayResize(active_long, n_query);   // callers pass unsized dynamic arrays
       ArrayResize(active_short, n_query);
       // Build event list: (time, +1=open / -1=close, side)
       SLEvent events[];
       ArrayResize(events, 2 * n_bets);
       for(int i = 0; i < n_bets; i++) {
          events[2 * i].t         = open_times[i];
          events[2 * i].delta     = 1;
          events[2 * i].side      = sides[i];
          events[2 * i + 1].t     = close_times[i];
          events[2 * i + 1].delta = -1;
          events[2 * i + 1].side  = sides[i];
       }
       // Sort by time (insertion sort, adequate for N < 20000)
       SortSLEvents(events);
       int long_cnt = 0, short_cnt = 0, ev_idx = 0;
       for(int q = 0; q < n_query; q++) {
          // Consume every event at or before the query; a close at t == query
          // is consumed too, which makes the interval half-open: [open, close)
          while(ev_idx < ArraySize(events) && events[ev_idx].t <= query_times[q]) {
             if(events[ev_idx].side == 1)
                long_cnt += events[ev_idx].delta;
             else
                short_cnt += events[ev_idx].delta;
             ev_idx++;
          }
          active_long[q]  = MathMax(0, long_cnt);
          active_short[q] = MathMax(0, short_cnt);
       }
    }

Figure 2 shows the algorithm on a six-bet example. Panel (a) draws the half-open intervals [open_t, close_t) as horizontal bars, color-coded by side. Panel (b) shows the sorted event list: each open event is an upward triangle, and each close is a downward triangle. The yellow dashed line marks a query at t = 10; all events to its left have already been consumed by the sweep, so the running counters reflect exactly the active set at that bar. Panel (c) shows the resulting step functions for active_long and active_short.

Figure 2. 3-panel illustration of the sweep-line active-count algorithm

- Panel (a): six bet intervals drawn as half-open bars [open_t, close_t); blue = long, orange = short. The yellow dashed line marks the query timestamp at bar 10.

- Panel (b): the sorted event list derived from the intervals. Open events (▲) precede close events (▼) at each timestamp. The sweep cursor consumes all events with time ≤ query_t before recording the counts.

- Panel (c): the resulting active_long (blue step function) and active_short (orange step function) at every bar index. At query t = 10: active_long = 1, active_short = 2.
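The half-open boundary is the detail most easily ported wrong, so a tiny hand-checkable case is useful. The sketch below is a minimal illustration with three hypothetical bets on integer bar indices (not the six bets of Figure 2) and a pre-sorted event list; `ActiveCountAt` is an illustrative name, not part of the attached files. It uses only syntax common to MQL5 and C++.

```cpp
// Three hypothetical bets on integer bar indices:
//   long [2, 8)    short [4, 10)    long [5, 12)
// The event list below is already sorted by time.
// Returns the number of active bets on the given side (+1 or -1) at query_t.
int ActiveCountAt(int query_t, int side) {
   int ev_t[6]     = {2,  4, 5,  8, 10, 12};
   int ev_delta[6] = {1,  1, 1, -1, -1, -1};   // +1 = open, -1 = close
   int ev_side[6]  = {1, -1, 1,  1, -1,  1};   // side of the bet that opened/closed
   int count = 0;
   // Consume every event with time <= query_t, exactly like the sweep cursor
   for(int i = 0; i < 6 && ev_t[i] <= query_t; i++) {
      if(ev_side[i] == side)
         count += ev_delta[i];
   }
   return(count);
}
```

At bar 7 both longs and the short are active. At bar 8 the first long has just closed, so it no longer counts: because the close event at t = 8 satisfies `ev_t[i] <= query_t`, it is consumed before the count is read, which is what makes the right endpoint exclusive.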

Probability-Based Sizing in MQL5

This is the first method to implement because it is the most universally applicable and because the averaging and discretization layers it requires are shared by the budget and reserve methods. The MQL5 implementation follows the same three-stage pipeline as the Python original: z-score transformation via GetSignal, temporal averaging via AvgActiveSignals, and discretization via DiscreteSignal.

GetSignal in MQL5

    //--- Ch10Snippets.mqh
    // Snippet 10.1: transform predicted probability to signed bet size
    // prob:        predicted probability of the predicted class
    // num_classes: number of outcome classes (2 for binary)
    // pred:        +1 (long) or -1 (short); 0 returns unsigned magnitude
    double GetSignal(double prob, int num_classes, int pred) {
       double base_rate = 1.0 / num_classes;
       double denom     = MathSqrt(prob * (1.0 - prob));
       double signal;
       if(denom < 1e-10)
          signal = (prob > base_rate) ? 1.0 : -1.0;   // degenerate case: p = 0 or p = 1
       else {
          double z = (prob - base_rate) / denom;
          signal   = 2.0 * NormCDF(z) - 1.0;
       }
       // Apply the side after the guard so that a certain short is sized -1, not +1
       if(pred < 0)
          signal = -signal;
       return(signal);
    }

The guard on denom handles the edge cases p = 0 and p = 1, where the Bernoulli standard deviation collapses to zero and the z-score would overflow. In practice these cases arise when a classifier assigns certainty to a prediction — rare but not impossible with gradient-boosted trees that overfit the training set.
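A worked number makes the mapping concrete. For a binary classifier with p = 0.7, the z-score is (0.7 − 0.5)/√(0.7 · 0.3) ≈ 0.436, and 2Φ(z) − 1 comes out at roughly 0.34, so a 70% confident long is sized at about a third of the maximum. The sketch below reproduces that calculation under the hypothetical names `PhiApprox` and `SignalFromProb`; it applies the side after the degenerate-case guard, which is an assumption about the intended behaviour. It is written in the subset of syntax shared by MQL5 and C++.

```cpp
#ifndef __MQL5__      // standalone C++ build only
#include <math.h>
#endif

// Hypothetical name; same polynomial CDF approximation as the utilities excerpt
double PhiApprox(double x) {
   double t    = 1.0 / (1.0 + 0.2316419 * fabs(x));
   double poly = t * (0.319381530 + t * (-0.356563782 + t * (1.781477937
                 + t * (-1.821255978 + t * 1.330274429))));
   double tail = 0.3989422804014327 * exp(-0.5 * x * x) * poly;
   return((x >= 0.0) ? 1.0 - tail : tail);
}

// Hypothetical name; probability of the predicted class -> signed size in [-1, 1]
double SignalFromProb(double prob, int num_classes, int pred) {
   double base_rate = 1.0 / num_classes;
   double denom     = sqrt(prob * (1.0 - prob));
   double signal;
   if(denom < 1e-10)
      signal = (prob > base_rate) ? 1.0 : -1.0;      // p = 0 or p = 1
   else
      signal = 2.0 * PhiApprox((prob - base_rate) / denom) - 1.0;
   return((pred < 0) ? -signal : signal);            // pred = 0 leaves the magnitude
}
```

Calling `SignalFromProb(0.7, 2, 1)` and `SignalFromProb(0.7, 2, -1)` gives values of equal magnitude and opposite sign, and a coin-flip probability of 0.5 maps to a size of essentially zero.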

AvgActiveSignals in MQL5

The Python implementation iterates over a sorted DatetimeIndex and uses boolean indexing to find which signals are active at each timestamp. The MQL5 version uses the same parallel-array convention and applies a nested scan:

    // Snippet 10.2: compute the mean of all concurrently active signals
    void AvgActiveSignals(
       const datetime &open_t[],   // Signal start times
       const datetime &close_t[],  // Signal end times (t1)
       const double   &signals[],  // Per-signal bet sizes from GetSignal
       const datetime &query_t[],  // Bar timestamps to evaluate at
       double         &avg_out[]   // Output: averaged signal per bar
    ) {
       int n_sig   = ArraySize(open_t);
       int n_query = ArraySize(query_t);
       ArrayResize(avg_out, n_query);
       for(int q = 0; q < n_query; q++) {
          double sum   = 0.0;
          int    count = 0;
          for(int i = 0; i < n_sig; i++) {
             // Active means open_t <= query < close_t (half-open interval)
             if(open_t[i] <= query_t[q] && query_t[q] < close_t[i]) {
                sum += signals[i];
                count++;
             }
          }
          avg_out[q] = (count > 0) ? sum / count : 0.0;
       }
    }

    // Snippet 10.3: discretize a continuous signal to multiples of step_size
    double DiscreteSignal(double signal, double step_size) {
       if(step_size <= 0.0)
          return(signal);
       double s = MathRound(signal / step_size) * step_size;
       return(MathMax(-1.0, MathMin(1.0, s)));
    }

The inner loop iterates over all n_sig signals for each of the n_query query times, giving O(N²) complexity. This is by design: computing a mean over all intervals that cover a query point requires reading each active signal's value, not just a count, so the sweep-line counter from BetSizingUtils.mqh cannot be reused here as-is. In live trading the query array contains a single timestamp (the current bar), so the outer loop runs once and the cost is linear in the history length. The O(N²) worst case occurs only when all historical bar times are passed as queries at once, for example during OnInit.

The BetSizeProbability Orchestrator

    //--- BetSizing.mqh (excerpt)
    // User-level function matching bet_size_probability() in the Python module
    BetSizeResult BetSizeProbability(
       const datetime &open_t[],   // Label open times
       const datetime &close_t[],  // Label close times (t1)
       const double   &prob[],     // Classifier probabilities
       const int      &pred[],     // Predicted sides (+1 / -1)
       int      num_classes,       // 2 for binary classification
       double   step_size,         // Discretization grid (0 = off)
       bool     average_active,    // Apply concurrency correction
       datetime query_time         // Current bar time
    ) {
       BetSizeResult result = {};
       result.bar_time = query_time;
       int n = ArraySize(prob);
       // Stage 1: per-signal z-score transformation
       double raw_signals[];
       ArrayResize(raw_signals, n);
       for(int i = 0; i < n; i++)
          raw_signals[i] = GetSignal(prob[i], num_classes, pred[i]);
       // Stage 2: concurrency correction (optional)
       if(average_active) {
          // Array initializers must be constants, so assign the element explicitly
          datetime query_arr[1];
          query_arr[0] = query_time;
          double avg_arr[];
          AvgActiveSignals(open_t, close_t, raw_signals, query_arr, avg_arr);
          result.avg_signal = avg_arr[0];
       }
       else
          result.avg_signal = (n > 0) ? raw_signals[n - 1] : 0.0;
       result.raw_signal = (n > 0) ? raw_signals[n - 1] : 0.0;
       // Stage 3: discretization
       result.bet_size = DiscreteSignal(result.avg_signal, step_size);
       return(result);
    }

Usage in an EA

    //--- Inside OnBar() in BetSizingEA.mq5
    BetSizeResult r = BetSizeProbability(open_t, close_t, prob, pred,
                                         2,      // binary classifier
                                         0.05,   // 5% position increments
                                         true,   // correct for overlapping triple-barrier labels
                                         TimeCurrent());
    // r.bet_size is in [-1, 1]; send an order proportional to max_lots * |r.bet_size|
    double lots = NormalizeDouble(max_lots * MathAbs(r.bet_size), 2);
    if(r.bet_size != 0.0 && lots > 0.0) {   // a zero bet size means stay flat, not sell
       ENUM_ORDER_TYPE side = (r.bet_size > 0.0) ? ORDER_TYPE_BUY : ORDER_TYPE_SELL;
       // ... submit the order with the chosen side and volume ...
    }

The average_active=true flag should be treated as a mandatory default for any strategy built on triple-barrier labels, for exactly the reason documented in Part 10: omitting it is equivalent to claiming your labels never overlap, which is essentially never true in practice. The concurrency explosion risk is not hypothetical — it is a systematic overexposure that compounds during dense signal periods and is entirely preventable.
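Turning a bet size into an order volume deserves a little more care than rounding to two decimals, because brokers constrain volume to a minimum, a step, and a maximum. The sketch below is one possible conversion, under the assumption that volumes should be rounded down to a whole number of steps and that anything below the minimum means no trade. `LotsFromBetSize` is a hypothetical helper; in an EA the three volume limits would typically be read with `SymbolInfoDouble` using `SYMBOL_VOLUME_MIN`, `SYMBOL_VOLUME_STEP` and `SYMBOL_VOLUME_MAX`, and they are passed in here so the function stays pure. The code uses only syntax shared by MQL5 and C++.

```cpp
#ifndef __MQL5__      // standalone C++ build only
#include <math.h>
#endif

// Hypothetical helper: convert a signed bet size in [-1, 1] into a tradable volume.
// Returns 0.0 when the scaled size is below the broker's minimum volume.
double LotsFromBetSize(double bet_size, double max_lots,
                       double vol_min, double vol_step, double vol_max) {
   double raw = max_lots * fabs(bet_size);
   if(raw > vol_max)
      raw = vol_max;
   // Round down to a whole number of steps; the small epsilon absorbs
   // floating-point error in the division (e.g. 0.7 / 0.01)
   double steps = floor(raw / vol_step + 1e-9);
   double lots  = steps * vol_step;
   return((lots < vol_min) ? 0.0 : lots);
}
```

Rounding down rather than to nearest is a conservative choice: the realized exposure never exceeds what the sizer asked for. The direction still comes from the sign of the bet size, exactly as in the usage snippet.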

Dynamic Forecast-Price Sizing in MQL5

The dynamic sizer is applicable whenever the model outputs a continuous forecast price rather than a class probability. Its MQL5 implementation covers both the sigmoid and power functional forms, the closed-form GetW calibration, and the limit-price computation.

Sigmoid and Power Functions

    //--- Ch10Snippets.mqh
    // Snippet 10.4a: sigmoid bet size
    // price_div: forecast_price - market_price
    // w:         calibration parameter (larger = more conservative)
    double SigmoidBetSize(double price_div, double w) {
       return(price_div / MathSqrt(w + price_div * price_div));
    }

    // Snippet 10.4b: power bet size
    // price_div must be pre-normalized to [-1, 1]
    double PowerBetSize(double price_div, double w) {
       if(MathAbs(price_div) > 1.0) {
          Print("PowerBetSize: price_div must be in [-1,1]. Got: ",
                DoubleToString(price_div, 6));
          return(MathSign(price_div));
       }
       return(MathSign(price_div) * MathPow(MathAbs(price_div), w));
    }

    // GetW: calibrate w from a (divergence, target_bet_size) pair
    double GetW(double price_div, double m_bet_size, string func = "sigmoid") {
       if(func == "sigmoid") {
          // Invert: m = x/sqrt(w+x^2)  =>  w = x^2*(1-m^2)/m^2
          // m = 0 would divide by zero, so it is rejected along with |m| >= 1
          if(MathAbs(m_bet_size) >= 1.0 || m_bet_size == 0.0) {
             Print("GetW: target bet size must be nonzero and strictly inside (-1,1)");
             return(0.0);
          }
          double m2 = m_bet_size * m_bet_size;
          return(price_div * price_div * (1.0 - m2) / m2);
       }
       else {
          // Power form: m = |x|^w  =>  w = log(m)/log(|x|)
          if(MathAbs(price_div) <= 0.0 || MathAbs(price_div) >= 1.0 ||
             m_bet_size <= 0.0 || m_bet_size >= 1.0) {
             Print("GetW power: price_div and m_bet_size must be in (0,1)");
             return(1.0);
          }
          return(MathLog(MathAbs(m_bet_size)) / MathLog(MathAbs(price_div)));
       }
    }

Limit Price Computation

The limit price is the average break-even execution price over each discrete unit of position change from current_pos to t_pos. It is computed by inverting the bet-size function: if f(x) = m is the sizing formula, then the divergence that justifies position unit k is f⁻¹(k/max_pos), and the corresponding limit price is market_price + f⁻¹(k/max_pos).

    // Compute limit price for moving from pos_curr to pos_target
    double LimitPrice(double market_price, double pos_curr, double pos_target,
                      double max_pos, double w, string func) {
       double sum   = 0.0;
       double sgn   = (pos_target > pos_curr) ? 1.0 : -1.0;
       int    steps = (int)MathRound(MathAbs(pos_target - pos_curr));
       if(steps == 0)
          return(market_price);
       for(int k = 1; k <= steps; k++) {
          // Signed bet size of the k-th unit; keep |m| < 1 so the sigmoid
          // inverse stays finite when the target equals max_pos
          double m = sgn * (MathAbs(pos_curr) + k) / max_pos;
          m = MathMax(-0.999999, MathMin(0.999999, m));
          double div_k;
          if(func == "sigmoid")
             div_k = MathSqrt(w) * m / MathSqrt(1.0 - m * m);   // m already carries the sign
          else
             div_k = sgn * MathPow(MathAbs(m), 1.0 / w);
          sum += div_k;
       }
       return(market_price + sum / steps);
    }
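The inversion step is easy to verify in isolation: the inverse of the sigmoid sizing function, fed back into the sizing function, must return the original size. The sketch below checks that and then averages the per-unit divergences for a move away from a flat position, which is the simplest case of the limit-price sum. `SigmoidSize`, `SigmoidDivergence` and `AvgDivergenceFromFlat` are hypothetical names, and starting from a flat position is an assumption made to keep the example small. The code sticks to syntax shared by MQL5 and C++.

```cpp
#ifndef __MQL5__      // standalone C++ build only
#include <math.h>
#endif

// Sigmoid sizing function: m = x / sqrt(w + x^2)
double SigmoidSize(double x, double w) {
   return(x / sqrt(w + x * x));
}

// Its inverse in x: the divergence at which the size equals m (requires |m| < 1)
double SigmoidDivergence(double m, double w) {
   return(m * sqrt(w / (1.0 - m * m)));
}

// Average divergence over each unit of a move from flat to `target`
// (requires |target| < max_pos; the sign of the result follows the target)
double AvgDivergenceFromFlat(int target, int max_pos, double w) {
   int steps = (target >= 0) ? target : -target;
   if(steps == 0)
      return(0.0);
   double sgn = (target > 0) ? 1.0 : -1.0;
   double sum = 0.0;
   for(int k = 1; k <= steps; k++)
      sum += SigmoidDivergence(sgn * k / max_pos, w);
   return(sum / steps);
}
```

Because the signed size is passed straight into the inverse, a move to a short target yields the mirror image of the corresponding long move, with no separate sign correction needed.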

The BetSizeDynamic Orchestrator

    BetSizeResult BetSizeDynamic(
       double current_pos,     // Current open position (signed integer)
       double max_pos,         // Maximum allowed position size
       double market_price,    // Current mid price
       double forecast_price,  // Model forecast price
       double cal_divergence,  // Calibration: target divergence (e.g. 10 pips)
       double cal_bet_size,    // Calibration: target bet size at cal_divergence
       string func             // "sigmoid" or "power"
    ) {
       BetSizeResult result = {};
       double w   = GetW(cal_divergence, cal_bet_size, func);
       double div = forecast_price - market_price;
       double bsz = (func == "sigmoid") ? SigmoidBetSize(div, w)
                                        : PowerBetSize(div / cal_divergence, w);
       result.bet_size   = MathMax(-1.0, MathMin(1.0, bsz));
       result.t_pos      = MathRound(result.bet_size * max_pos);
       result.l_p        = LimitPrice(market_price, current_pos, result.t_pos,
                                      max_pos, w, func);
       result.raw_signal = div;   // price divergence; useful for diagnostics
       result.avg_signal = result.bet_size;
       return(result);
    }

Choosing Between Sigmoid and Power in Live Trading

For a live mean-reversion strategy where you can estimate the EV-divergence curve from a walk-forward backtest, calibrate w by fitting PowerBetSize(x, w) to the empirical curve using a simple grid search over w ∈ [0.1, 5.0] with the minimum mean-squared error criterion. For an unknown or first-principles strategy, default to the sigmoid with a conservative calibration target of cal_bet_size = 0.50 at a one-standard-deviation divergence. The sigmoid will undersize at large divergences relative to a convex EV curve, but it will also undersize at small divergences relative to a concave one; it is the maximum-entropy choice when the EV shape is genuinely unknown.
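Both calibration routes fit in a few lines. The sketch below first computes the sigmoid w from a single (divergence, target size) pair, then runs the grid search for the power exponent over w ∈ [0.1, 5.0] in steps of 0.1. The five (divergence, size) points stand in for an empirical EV curve and are hypothetical; they were generated as x², so the search should land on w = 2. The function names are illustrative, and the code uses only syntax shared by MQL5 and C++.

```cpp
#ifndef __MQL5__      // standalone C++ build only
#include <math.h>
#endif

// Closed-form sigmoid calibration: w such that size(price_div) = m_target
// (requires 0 < |m_target| < 1)
double SigmoidW(double price_div, double m_target) {
   double m2 = m_target * m_target;
   return(price_div * price_div * (1.0 - m2) / m2);
}

// Sigmoid sizing function, used to confirm the calibration
double SigmoidSizeAt(double price_div, double w) {
   return(price_div / sqrt(w + price_div * price_div));
}

// Grid search for the power exponent by minimum mean-squared error.
// The data points are hypothetical stand-ins for a backtested EV curve.
double FitPowerW() {
   double x[5] = {0.1, 0.3, 0.5, 0.7, 0.9};        // normalized divergences
   double y[5] = {0.01, 0.09, 0.25, 0.49, 0.81};   // target sizes (here x^2)
   double best_w   = 0.1;
   double best_mse = 1e300;
   for(int k = 1; k <= 50; k++) {
      double w   = 0.1 * k;
      double mse = 0.0;
      for(int i = 0; i < 5; i++) {
         double e = pow(x[i], w) - y[i];
         mse += e * e / 5.0;
      }
      if(mse < best_mse) {
         best_mse = mse;
         best_w   = w;
      }
   }
   return(best_w);
}
```

With a calibration target of 0.50 the sigmoid formula reduces to w = 3 · divergence², so a 10-pip divergence of 0.0010 gives w = 3e-6. A finer grid or a golden-section search would sharpen the power fit, but with backtest noise a 0.1 step is usually more resolution than the data supports.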

Budget-Constrained Sizing in MQL5

The budget method requires only directional signals — no probabilities, no forecast prices. Its MQL5 implementation uses the sweep-line counter from BetSizingUtils.mqh to compute concurrent long and short counts, then applies the normalization formula.

    //--- BetSizing.mqh (excerpt)
    BetSizeResult BetSizeBudget(
       const datetime &open_t[],   // Bet open times
       const datetime &close_t[],  // Bet close times (t1)
       const int      &sides[],    // +1 / -1
       datetime query_time         // Current bar time
    ) {
       BetSizeResult result = {};
       result.bar_time = query_time;
       // Array initializers must be constants, so assign the element explicitly
       datetime query_arr[1];
       query_arr[0] = query_time;
       int al[], as_arr[];
       SweepLineActiveCounts(open_t, close_t, sides, query_arr, al, as_arr);
       result.active_long  = al[0];
       result.active_short = as_arr[0];
       // Running maxima: persisted as file-scope globals (g_budget_max_long/short)
       // initialized in OnInit via SeedBudgetMaxima(). See BetSizing.mqh.
       if(al[0] > g_budget_max_long)
          g_budget_max_long = al[0];
       if(as_arr[0] > g_budget_max_short)
          g_budget_max_short = as_arr[0];
       // MathMax(1, ...) avoids a zero divide if the maxima were never seeded
       double frac_long  = (double)al[0] / MathMax(1, g_budget_max_long);
       double frac_short = (double)as_arr[0] / MathMax(1, g_budget_max_short);
       result.c_t      = frac_long - frac_short;
       result.bet_size = MathMax(-1.0, MathMin(1.0, result.c_t));
       return(result);
    }

The running maxima g_budget_max_long and g_budget_max_short are file-scope globals that persist across calls within a single EA lifetime. They should be seeded from the warm-up history in OnInit rather than left to grow from 1, since an artificially low maximum during the earliest bars produces oversized positions until the true historical maximum is first observed. A dedicated seeding pass resolves this:

    //--- In OnInit(): seed the running maxima from historical bets
    void SeedBudgetMaxima(const datetime &open_t[],
                          const datetime &close_t[],
                          const int      &sides[]) {
       int n = ArraySize(open_t);
       if(n == 0)
          return;
       // Sample at each open time to find the true historical maxima
       int al[], as_arr[];
       SweepLineActiveCounts(open_t, close_t, sides, open_t, al, as_arr);
       int ml = 1, ms = 1;
       for(int i = 0; i < n; i++) {
          if(al[i] > ml)
             ml = al[i];
          if(as_arr[i] > ms)
             ms = as_arr[i];
       }
       g_budget_max_long  = ml;
       g_budget_max_short = ms;
    }
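The effect of seeding is easiest to see with numbers. The sketch below isolates the normalization formula in a pure function, using the hypothetical name `BudgetSize`, and evaluates the same single active long bet under an unseeded maximum of 1 and a seeded maximum of 4. The specific counts are illustrative only. The code uses only syntax shared by MQL5 and C++.

```cpp
// Hypothetical pure version of the budget formula:
//   c_t = active_long / max_long - active_short / max_short, clamped to [-1, 1]
double BudgetSize(int active_long, int active_short, int max_long, int max_short) {
   if(max_long < 1)  max_long = 1;    // guard against an unseeded maximum
   if(max_short < 1) max_short = 1;
   double c_t = (double)active_long / max_long - (double)active_short / max_short;
   if(c_t > 1.0)  c_t = 1.0;
   if(c_t < -1.0) c_t = -1.0;
   return(c_t);
}
```

With one active long and no shorts, `BudgetSize(1, 0, 1, 1)` returns a full-size 1.0 because the running maximum has only ever seen that one bet. Once the maxima are seeded at 4 longs and 2 shorts, `BudgetSize(1, 0, 4, 2)` returns 0.25 for the same bar, which is the oversizing that the seeding pass prevents.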

The budget method is also an effective overlay on top of BetSizeProbability. Because it measures the portfolio's current occupancy relative to its historical norm, multiplying the probability signal by the budget signal produces a composite that is confidence-aware at the signal level and concurrency-aware at the portfolio level simultaneously.

Reserve Sizing via Mixture of Gaussians in MQL5

The reserve method is the most computationally demanding of the four. Its MQL5 implementation must perform EF3M parameter estimation, which in the Python module uses multiprocessing. In MQL5 the equivalent is to run multiple analytic solves from random starting conditions in OnInit — where latency is not critical — and cache the resulting parameters for use during OnTick.

EF3M Parameter Estimation in MQL5

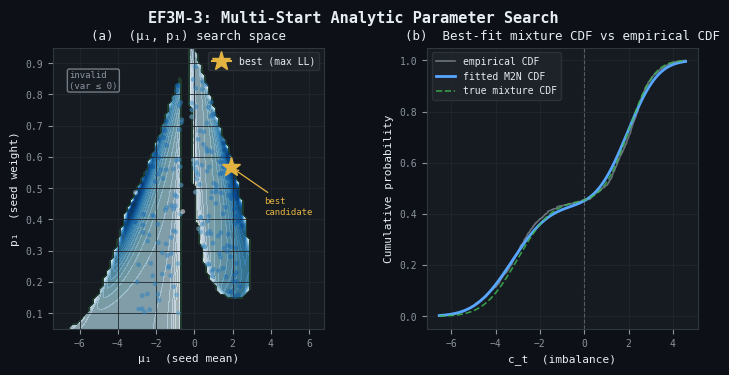

The EF3M algorithm fits a two-Gaussian mixture to the empirical distribution of the imbalance series c_t = active_long − active_short. The five-parameter mixture (μ₁, μ₂, σ₁, σ₂, p₁) cannot be identified from fewer than five moment equations. The MQL5 implementation uses an EF3M-3 variant: fix two free parameters (μ₁, p₁) as the random seed; derive the remaining three analytically from the first three raw moments via a 2×2 linear solve; then evaluate log-likelihood and keep the best of n_runs candidates.

The key derivation proceeds in two steps. First, the first raw moment gives μ₂ immediately:

$$\mu_2 = \frac{m_1 - p_1\mu_1}{1 - p_1} \qquad \text{from } E[X] = p_1\mu_1 + (1-p_1)\mu_2$$

Second, rearranging the second and third raw moments of the mixture into two equations in the unknowns (σ₁², σ₂²) yields the 2×2 linear system:

$$\begin{bmatrix} p_1 & 1-p_1 \\ p_1\mu_1 & (1-p_1)\mu_2 \end{bmatrix} \begin{bmatrix} \sigma_1^2 \\ \sigma_2^2 \end{bmatrix} = \begin{bmatrix} A \\ B \end{bmatrix}$$

where

$$A = m_2 - p_1\mu_1^2 - (1-p_1)\mu_2^2, \qquad B = \frac{m_3 - p_1\mu_1^3 - (1-p_1)\mu_2^3}{3}$$

A comes from E[X²], and B comes from E[X³] using E[X³] = μ³ + 3μσ² for each Gaussian component.

Cramer's rule gives:

$$\det = p_1(1-p_1)(\mu_2-\mu_1), \qquad \sigma_1^2 = \frac{A\mu_2 - B}{p_1(\mu_2-\mu_1)}, \qquad \sigma_2^2 = \frac{B - A\mu_1}{(1-p_1)(\mu_2-\mu_1)}$$

The system is rank-deficient only when μ₁ = μ₂ (degenerate single-component case), which is detected and rejected. The complete implementation:

    //--- EF3M.mqh
    bool DeriveComponentParams(double mu1, double p1, const double &m[],
                               double &mu2, double &s1, double &s2) {
       if(p1 <= 0.0 || p1 >= 1.0)
          return(false);
       mu2 = (m[0] - p1 * mu1) / (1.0 - p1);   // from m1
       double gap = mu2 - mu1;
       if(MathAbs(gap) < 1e-12)
          return(false);                        // degenerate
       // A = p1*s1^2 + (1-p1)*s2^2 (from m2)
       double A = m[1] - p1 * (mu1 * mu1) - (1.0 - p1) * (mu2 * mu2);
       // B = p1*mu1*s1^2 + (1-p1)*mu2*s2^2 (from m3)
       double B = (m[2] - p1 * (mu1 * mu1 * mu1)
                   - (1.0 - p1) * (mu2 * mu2 * mu2)) / 3.0;
       // Cramer's rule: det = p1*(1-p1)*gap
       double var1 = (A * mu2 - B) / (p1 * gap);
       double var2 = (B - A * mu1) / ((1.0 - p1) * gap);
       if(var1 <= 0.0 || var2 <= 0.0)
          return(false);
       s1 = MathSqrt(var1);
       s2 = MathSqrt(var2);
       return(true);
    }

    // Multi-start random search: n_runs analytic solves, keep best log-likelihood
    M2NParams FitM2N(const double &data[], int n_runs = 100) {
       M2NParams best = {};
       best.log_likelihood = -1e18;
       int n = ArraySize(data);
       if(n < 30) {
          Print("FitM2N: too few observations");
          return(best);
       }
       double m[];
       RawMoments(data, m, 3);   // E[X], E[X^2], E[X^3]
       double sigma_est = MathSqrt(MathMax(1e-12, m[1] - m[0] * m[0]));
       MathSrand((int)TimeCurrent());
       for(int r = 0; r < n_runs; r++) {
          // Seed mu1 within two sample standard deviations of the mean, p1 in [0.1, 0.9]
          double noise    = 2.0 * sigma_est * ((double)(MathRand() - 16383) / 16383.0);
          double mu1_init = m[0] + noise;
          double p1_init  = 0.1 + 0.8 * ((double)MathRand() / 32767.0);
          double mu2, s1, s2;
          if(!DeriveComponentParams(mu1_init, p1_init, m, mu2, s1, s2))
             continue;
          M2NParams candidate;
          candidate.mu1 = mu1_init;
          candidate.mu2 = mu2;
          candidate.s1  = s1;
          candidate.s2  = s2;
          candidate.p1  = p1_init;
          candidate.log_likelihood = LogLikelihood(data, candidate);
          if(candidate.log_likelihood > best.log_likelihood)
             best = candidate;
       }
       return(best);
    }
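The closed-form solve can be sanity-checked by running it in the opposite direction: start from known mixture parameters, compute the three raw moments analytically, and confirm that the solve recovers μ₂, σ₁ and σ₂ when seeded with the true (μ₁, p₁). The sketch below does this with the synthetic parameters quoted for Figure 3 (μ₁ = −3, σ₁ = 1.2, μ₂ = 2, σ₂ = 0.9, p₁ = 0.45). `SolveFromMoments` and `RecoveryError` are hypothetical names, and the moments are passed as scalars rather than an array so that the code stays within the syntax shared by MQL5 and C++.

```cpp
#ifndef __MQL5__      // standalone C++ build only
#include <math.h>
#endif

// Given the seed (mu1, p1) and raw moments m1..m3, derive mu2, s1, s2.
// Returns false for degenerate or invalid (non-positive variance) seeds.
bool SolveFromMoments(double mu1, double p1, double m1, double m2, double m3,
                      double &mu2, double &s1, double &s2) {
   if(p1 <= 0.0 || p1 >= 1.0)
      return(false);
   double q1 = 1.0 - p1;
   mu2 = (m1 - p1 * mu1) / q1;
   double gap = mu2 - mu1;
   if(fabs(gap) < 1e-12)
      return(false);
   double A = m2 - p1 * mu1 * mu1 - q1 * mu2 * mu2;
   double B = (m3 - p1 * mu1 * mu1 * mu1 - q1 * mu2 * mu2 * mu2) / 3.0;
   double var1 = (A * mu2 - B) / (p1 * gap);
   double var2 = (B - A * mu1) / (q1 * gap);
   if(var1 <= 0.0 || var2 <= 0.0)
      return(false);
   s1 = sqrt(var1);
   s2 = sqrt(var2);
   return(true);
}

// Largest absolute error in the recovered (mu2, s1, s2) for Figure 3's parameters
double RecoveryError() {
   double mu1 = -3.0, s1 = 1.2, mu2 = 2.0, s2 = 0.9, p1 = 0.45;
   double q1 = 1.0 - p1;
   // Analytic raw moments of the two-Gaussian mixture
   double m1 = p1 * mu1 + q1 * mu2;
   double m2 = p1 * (mu1 * mu1 + s1 * s1) + q1 * (mu2 * mu2 + s2 * s2);
   double m3 = p1 * (mu1 * mu1 * mu1 + 3.0 * mu1 * s1 * s1)
               + q1 * (mu2 * mu2 * mu2 + 3.0 * mu2 * s2 * s2);
   double mu2_hat = 0.0, s1_hat = 0.0, s2_hat = 0.0;
   if(!SolveFromMoments(mu1, p1, m1, m2, m3, mu2_hat, s1_hat, s2_hat))
      return(1e300);
   double err = fabs(mu2_hat - mu2);
   if(fabs(s1_hat - s1) > err) err = fabs(s1_hat - s1);
   if(fabs(s2_hat - s2) > err) err = fabs(s2_hat - s2);
   return(err);
}
```

For these parameters the intermediate values are m₁ = −0.25, A = 1.0935 and B = −1.053, and Cramer's rule returns variances of 1.44 and 0.81, the squares of the true σ₁ and σ₂. On real data the moments are sample estimates, so the recovery is only approximate and many seeds fail the positive-variance test, which is why the multi-start search is needed.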

Figure 3 illustrates this process. Panel (a) shows the (μ₁, p₁) parameter plane for a synthetic 1000-point two-component dataset (μ₁ = −3, σ₁ = 1.2, μ₂ = 2, σ₂ = 0.9, p₁ = 0.45). The dark region is invalid — those starting points imply negative component variances and are immediately rejected. The blue shading in the valid region encodes log-likelihood; the yellow star marks the best candidate recovered by the 300-start search. Panel (b) compares the best-fit mixture CDF against the empirical CDF of the data and the true generating CDF; the fitted curve is indistinguishable from the true curve at this scale.

Figure 3. 2-panel illustration of the EF3M-3 multi-start analytic parameter search

- Panel (a): the (μ₁, p₁) search space. Dark background = invalid region (implied variances ≤ 0). Blue shading = log-likelihood in the valid region (darker = higher). Yellow star = best-fit candidate. Scatter points are the 300 random starting points that produced valid parameter sets.

- Panel (b): best-fit mixture CDF (blue) against empirical CDF (grey) and true generating CDF (green dashed). The fitted curve closely tracks the true CDF, validating the analytic 3-moment solve.

Mixture CDF and the Reserve Sizing Formula

    // Evaluate the mixture CDF at x
    double MixtureCDF(double x, const M2NParams &p) {
       return(p.p1 * NormCDF((x - p.mu1) / p.s1)
              + (1.0 - p.p1) * NormCDF((x - p.mu2) / p.s2));
    }

    // Convert c_t imbalance to bet size via the mixture CDF.
    // Note: c_t is the raw integer imbalance (active_long - active_short),
    // not the normalized fraction used by BetSizeBudget. The EF3M model
    // must be fitted to the same raw c_t series.
    double ReserveBetSize(double c_t, const M2NParams &p) {
       double F0 = MixtureCDF(0.0, p);
       double Fx = MixtureCDF(c_t, p);
       if(c_t >= 0.0)
          return((1.0 - F0 < 1e-10) ? 1.0 : (Fx - F0) / (1.0 - F0));
       else
          return((F0 < 1e-10) ? -1.0 : (Fx - F0) / F0);
    }

One distinction requires careful attention. In BetSizeBudget, the imbalance c_t stored in BetSizeResult is the normalized fraction frac_long − frac_short ∈ [−1, 1]. In BetSizeReserve, it is the raw integer count active_long − active_short. The EF3M model is fitted to the raw c_t series, so the CDF input must be raw counts as well. These two fields carry the same name for conceptual continuity but operate on different scales.
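The scale mismatch is easy to demonstrate. The sketch below evaluates the reserve formula for a fixed, hypothetical set of mixture parameters (borrowed from the Figure 3 synthetic example purely for illustration) and shows that feeding a normalized fraction such as 0.25 where a raw count such as 2 is expected produces a much smaller size. `PhiApprox`, `MixCdfDemo` and `ReserveSizeDemo` are illustrative names, and the code is restricted to syntax shared by MQL5 and C++.

```cpp
#ifndef __MQL5__      // standalone C++ build only
#include <math.h>
#endif

// Hypothetical name; same polynomial CDF approximation as the utilities excerpt
double PhiApprox(double x) {
   double t    = 1.0 / (1.0 + 0.2316419 * fabs(x));
   double poly = t * (0.319381530 + t * (-0.356563782 + t * (1.781477937
                 + t * (-1.821255978 + t * 1.330274429))));
   double tail = 0.3989422804014327 * exp(-0.5 * x * x) * poly;
   return((x >= 0.0) ? 1.0 - tail : tail);
}

// Mixture CDF for fixed, hypothetical parameters (not a fitted model)
double MixCdfDemo(double x) {
   double mu1 = -3.0, s1 = 1.2, mu2 = 2.0, s2 = 0.9, p1 = 0.45;
   return(p1 * PhiApprox((x - mu1) / s1) + (1.0 - p1) * PhiApprox((x - mu2) / s2));
}

// Reserve sizing formula applied to a raw imbalance c_t
double ReserveSizeDemo(double c_t) {
   double F0 = MixCdfDemo(0.0);
   double Fx = MixCdfDemo(c_t);
   if(c_t >= 0.0)
      return((1.0 - F0 < 1e-10) ? 1.0 : (Fx - F0) / (1.0 - F0));
   return((F0 < 1e-10) ? -1.0 : (Fx - F0) / F0);
}
```

A zero imbalance maps to a zero size by construction, and very large positive or negative imbalances saturate at +1 and −1. Passing `ReserveSizeDemo(0.25)` instead of `ReserveSizeDemo(2.0)` silently undersizes the position, so it is worth asserting in the EA that the reserve path only ever receives integer-valued counts.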

Initialization Pattern

EF3M parameter estimation must not run on every tick. The correct pattern is to fit once in OnInit from the warm-up history and cache the result:

    //--- In BetSizingEA.mq5
    M2NParams g_reserve_params;

    int OnInit(void) {
       // ... load historical bets into g_open_t[], g_close_t[], g_sides[] ...
       // Build the c_t series over the warm-up period
       int n = ArraySize(g_open_t);
       int al[], as_arr[];
       SweepLineActiveCounts(g_open_t, g_close_t, g_sides, g_open_t, al, as_arr);
       double ct_series[];
       ArrayResize(ct_series, n);
       for(int i = 0; i < n; i++)
          ct_series[i] = (double)(al[i] - as_arr[i]);
       // Fit the mixture; may take 1-3 seconds for 2000 bets
       g_reserve_params = FitM2N(ct_series, 100);
       // FitM2N leaves log_likelihood at -1e18 when no candidate was valid
       if(g_reserve_params.log_likelihood <= -1e17) {
          Print("EF3M fit failed; reserve sizing is unavailable");
          return(INIT_FAILED);
       }
       PrintFormat("EF3M fit: mu1=%.4f mu2=%.4f s1=%.4f s2=%.4f p1=%.4f LL=%.2f",
                   g_reserve_params.mu1, g_reserve_params.mu2,
                   g_reserve_params.s1, g_reserve_params.s2,
                   g_reserve_params.p1, g_reserve_params.log_likelihood);
       return(INIT_SUCCEEDED);
    }

The EF3M fit should be re-run periodically: quarterly in a strategy that trades on daily bars, or whenever a significant structural break in the position distribution is detected (a sudden shift in the mean or variance of c_t by more than two historical standard deviations is a reliable trigger). Detecting this automatically requires tracking a rolling window of c_t and comparing its first two sample moments against the fitted mixture's implied moments.
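The moment comparison described above can be sketched as follows. The mean and variance implied by a two-component Gaussian mixture are mean = p₁μ₁ + (1 − p₁)μ₂ and variance = p₁(σ₁² + μ₁²) + (1 − p₁)(σ₂² + μ₂²) − mean². The struct MixtureFit is a stand-in for M2NParams so that the script compiles on its own, and NeedsRefit, k_sigma, and var_ratio are hypothetical names. The variance-ratio test in particular is one possible interpretation of a "variance shift", not a rule taken from the attached code.

```mql5
//--- Stand-in for M2NParams so this script is self-contained.
struct MixtureFit
  {
   double mu1;
   double mu2;
   double s1;
   double s2;
   double p1;
  };

// Mean implied by the fitted two-Gaussian mixture.
double MixtureMean(const MixtureFit &f)
  {
   return(f.p1 * f.mu1 + (1.0 - f.p1) * f.mu2);
  }

// Variance implied by the fitted mixture: E[x^2] - (E[x])^2.
double MixtureVariance(const MixtureFit &f)
  {
   double m  = MixtureMean(f);
   double m2 = f.p1 * (f.s1 * f.s1 + f.mu1 * f.mu1) +
               (1.0 - f.p1) * (f.s2 * f.s2 + f.mu2 * f.mu2);
   return(m2 - m * m);
  }

// True when the rolling window's first two sample moments have drifted
// away from the fit. k_sigma and var_ratio are illustrative thresholds.
bool NeedsRefit(const double &window[], const MixtureFit &f,
                const double k_sigma = 2.0, const double var_ratio = 2.0)
  {
   int n = ArraySize(window);
   if(n < 2)
      return(false);
   double sum = 0.0, sum_sq = 0.0;
   for(int i = 0; i < n; i++)
     {
      sum    += window[i];
      sum_sq += window[i] * window[i];
     }
   double mean = sum / n;
   double var  = (sum_sq - n * mean * mean) / (n - 1);   // sample variance
   double fit_mean = MixtureMean(f);
   double fit_var  = MixtureVariance(f);
   if(fit_var <= 0.0)
      return(true);                                      // degenerate fit
   bool mean_shift = MathAbs(mean - fit_mean) > k_sigma * MathSqrt(fit_var);
   bool var_shift  = (var > var_ratio * fit_var) || (var < fit_var / var_ratio);
   return(mean_shift || var_shift);
  }

void OnStart()
  {
   MixtureFit f;
   f.mu1 = -2.0; f.mu2 = 2.0; f.s1 = 1.0; f.s2 = 1.0; f.p1 = 0.5;  // mean 0, variance 5
   double calm[]    = {-2.0, 2.0, -3.0, 3.0, -1.0, 1.0};
   double shifted[] = {5.0, 6.0, 5.0, 6.0, 5.0, 6.0};
   Print("calm window needs refit: ",    NeedsRefit(calm, f));
   Print("shifted window needs refit: ", NeedsRefit(shifted, f));
  }
```

In an EA, a check like this would typically run once per bar on a fixed-length buffer of recent c_t values, with the actual re-fit deferred to a quiet moment, since FitM2N is too slow for the tick path.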

Wiring the Pipeline: A Complete Expert Advisor

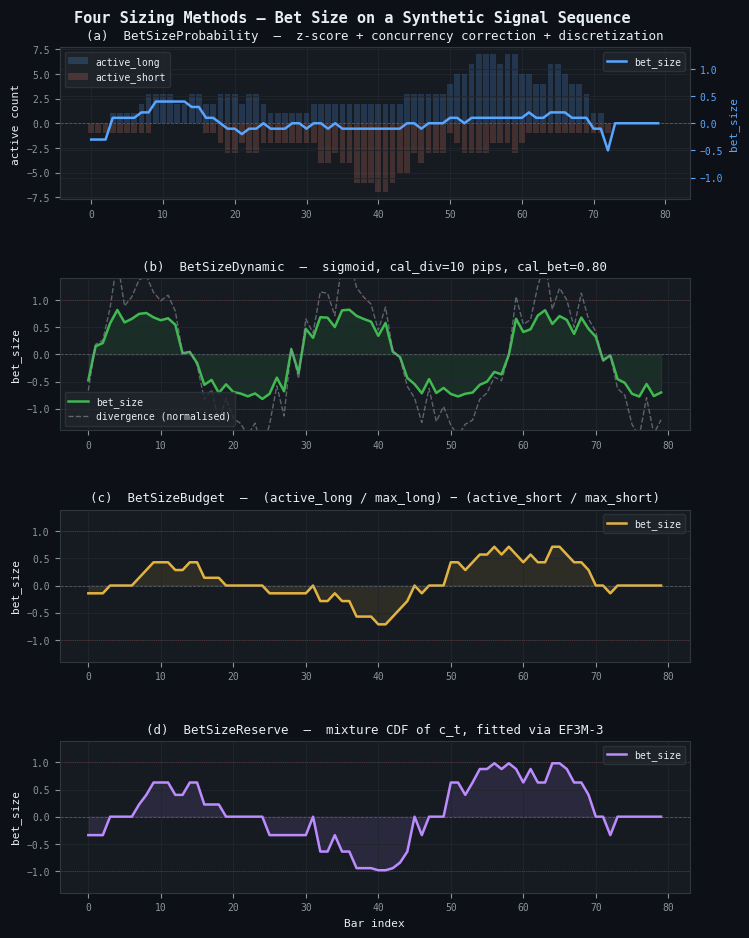

The four sizing methods, the EF3M fitter, and the utility functions assemble into a single EA that selects the appropriate method at runtime via an input parameter. Figure 4 shows what the wired EA produces on a synthetic signal sequence: each panel plots the bet_size output of one method over 80 bars given the same underlying bet history of 40 directional signals with random holding periods. The methods respond to the same data through fundamentally different lenses — the probability method averages raw confidence, the dynamic method responds to price divergence, the budget method tracks occupancy fractions, and the reserve method reads the empirical distribution shape of the imbalance.

Figure 4. 4-panel illustration of the four sizing methods on the same synthetic bet history

- Panel (a): BetSizeProbability. The bar chart (left axis) shows active long and short counts per bar. The blue line (right axis) is the discretized, concurrency-corrected bet size. Bars with no active signals produce a zero bet size.

- Panel (b): BetSizeDynamic (sigmoid). The green line tracks the sigmoid of a synthetic sinusoidal price divergence. The dashed grey line shows the normalized divergence for reference.

- Panel (c): BetSizeBudget. The yellow line is the normalized long-short imbalance fraction. It is smoother than the probability method because it does not carry per-signal confidence.

- Panel (d): BetSizeReserve. The purple line maps the raw integer imbalance through the fitted mixture CDF. Its shape resembles the budget signal but is scaled by the empirical distribution of historical imbalance.

The EA skeleton below shows how to connect the classifier output from the earlier articles in this series to the sizing layer and from there to the order management layer.

    //--- BetSizingEA.mq5
    // Angle-bracket form: searches MQL5\Include\. All four header files must
    // be placed in MQL5\Include\BetSizing; this EA belongs in MQL5\Experts\.
    #include <BetSizing\BetSizing.mqh>   // pulls in Ch10Snippets, EF3M, and BetSizingUtils

    enum ENUM_SIZING_METHOD
      {
       METHOD_PROBABILITY,   // BetSizeProbability
       METHOD_DYNAMIC,       // BetSizeDynamic
       METHOD_BUDGET,        // BetSizeBudget
       METHOD_RESERVE        // BetSizeReserve
      };

    input ENUM_SIZING_METHOD InpMethod     = METHOD_PROBABILITY;
    input double             InpMaxLots    = 1.0;
    input double             InpStepSize   = 0.05;
    input bool               InpAvgActive  = true;
    input double             InpCalDiv     = 10.0;      // in units of _Point (see OnTick), dynamic method
    input double             InpCalBetSize = 0.95;      // dynamic method
    input string             InpDynFunc    = "sigmoid";
    input int                InpEF3MRuns   = 100;

    // Classifier output arrays, populated by your signal generation logic
    datetime  g_open_t[];
    datetime  g_close_t[];
    double    g_prob[];
    int       g_pred[];
    int       g_sides[];
    M2NParams g_reserve_params;
    double    g_current_pos = 0.0;

    //+------------------------------------------------------------------+
    int OnInit(void)
      {
       // Load historical signals from your classifier pipeline.
       LoadHistoricalSignals();

       if(InpMethod == METHOD_BUDGET)
          SeedBudgetMaxima(g_open_t, g_close_t, g_sides);

       if(InpMethod == METHOD_RESERVE)
         {
          // Build the raw c_t series and fit the mixture once
          int n = ArraySize(g_open_t);
          int al[], as_arr[];
          SweepLineActiveCounts(g_open_t, g_close_t, g_sides, g_open_t, al, as_arr);
          double ct[];
          ArrayResize(ct, n);
          for(int i = 0; i < n; i++)
             ct[i] = (double)(al[i] - as_arr[i]);
          g_reserve_params = FitM2N(ct, InpEF3MRuns);
         }
       return(INIT_SUCCEEDED);
      }

    //+------------------------------------------------------------------+
    void OnTick(void)
      {
       // Size once per bar, not once per tick
       if(!IsNewBar())
          return;
       AppendNewSignal();

       BetSizeResult r = {};
       datetime now = TimeCurrent();

       switch(InpMethod)
         {
          case METHOD_PROBABILITY:
             r = BetSizeProbability(g_open_t, g_close_t, g_prob, g_pred,
                                    2, InpStepSize, InpAvgActive, now);
             break;
          case METHOD_DYNAMIC:
             r = BetSizeDynamic(g_current_pos, InpMaxLots * 100,
                                SymbolInfoDouble(_Symbol, SYMBOL_BID),
                                GetForecastPrice(),
                                InpCalDiv * _Point, InpCalBetSize, InpDynFunc);
             break;
          case METHOD_BUDGET:
             r = BetSizeBudget(g_open_t, g_close_t, g_sides, now);
             break;
          case METHOD_RESERVE:
             r = BetSizeReserve(g_open_t, g_close_t, g_sides, now, g_reserve_params);
             break;
         }

       PrintDiagnostics(r);

       // Convert the unitless bet size to lots; drop anything below the broker minimum
       double target_lots = NormalizeDouble(InpMaxLots * MathAbs(r.bet_size), 2);
       if(target_lots < SymbolInfoDouble(_Symbol, SYMBOL_VOLUME_MIN))
          target_lots = 0.0;
       AdjustPosition(r);
      }

    //+------------------------------------------------------------------+
    void PrintDiagnostics(const BetSizeResult &r)
      {
       PrintFormat("[%s] method=%d bet=%.4f raw=%.4f avg=%.4f aL=%d aS=%d c_t=%.2f lp=%.5f",
                   TimeToString(r.bar_time, TIME_DATE | TIME_MINUTES),
                   (int)InpMethod, r.bet_size, r.raw_signal, r.avg_signal,
                   r.active_long, r.active_short, r.c_t, r.l_p);
      }
    //+------------------------------------------------------------------+

The AdjustPosition function (full implementation in the attached BetSizingEA.mq5) compares target_lots and the sign of r.bet_size against the currently open position and issues market orders only when the required change exceeds the minimum lot size. This is the execution-layer equivalent of the discretization step in the Python pipeline: it prevents micro-adjustments whose transaction cost exceeds their expected P&L contribution.
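The thresholding idea behind AdjustPosition can be sketched in isolation. The function below is not the attached implementation; RequiredLotChange and its parameters are hypothetical names, and the sketch assumes a single net position per symbol whose sign encodes direction. It returns the signed volume to trade, or zero when the change is smaller than the minimum lot.

```mql5
//--- Hypothetical helper; the attached AdjustPosition may differ.
// Returns the signed lot change to send (positive = buy, negative = sell),
// or 0.0 when the change is below the minimum tradable volume.
double RequiredLotChange(const double bet_size, const double max_lots,
                         const double current_signed_lots,
                         const double min_lot, const double lot_step)
  {
   if(lot_step <= 0.0)
      return(0.0);
   double target_signed = max_lots * bet_size;            // sign carries direction
   double delta = target_signed - current_signed_lots;
   // Round the magnitude down to a whole number of lot steps;
   // the small epsilon absorbs floating-point representation error.
   double steps  = MathFloor(MathAbs(delta) / lot_step + 1e-9);
   double volume = steps * lot_step;
   if(volume < min_lot)
      return(0.0);                                        // too small to be worth an order
   return(delta > 0.0 ? volume : -volume);
  }

void OnStart()
  {
   // Fixed values keep the script deterministic; a live EA would read
   // SYMBOL_VOLUME_MIN and SYMBOL_VOLUME_STEP via SymbolInfoDouble().
   PrintFormat("%.2f", RequiredLotChange(0.37,  1.0, 0.30, 0.01, 0.01));  // 0.07: add to the long
   PrintFormat("%.2f", RequiredLotChange(0.304, 1.0, 0.30, 0.01, 0.01));  // 0.00: below one step
   PrintFormat("%.2f", RequiredLotChange(-0.50, 1.0, 0.30, 0.01, 0.01));  // -0.80: flip to short
  }
```

Rounding down rather than to the nearest step is a conservative choice here: the position never overshoots the target, at the cost of lagging it by at most one lot step.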

Selecting the Right Method at Deployment

| Scenario | Recommended Method | Key Configuration |
|---|---|---|
| Classifier with probability output, overlapping labels | METHOD_PROBABILITY | InpAvgActive=true, InpStepSize=0.05 |
| Regression model producing forecast prices | METHOD_DYNAMIC | Sigmoid for unknown EV shape; power for empirically characterized EV-divergence curve |
| Rule-based directional signal, no probability | METHOD_BUDGET | Seed max_long/max_short from warm-up history in OnInit |
| Directional signal, bimodal position history, large sample | METHOD_RESERVE | InpEF3MRuns≥100; re-fit quarterly; minimum ~500 historical bets |
| Classifier + portfolio exposure control | METHOD_PROBABILITY × METHOD_BUDGET | Multiply BetSizeProbability.bet_size by BetSizeBudget.bet_size |
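
One detail of the combined row is worth checking before deployment: both bet sizes are signed, so a literal product turns two short signals into a long one. The sketch below contrasts the literal product with one possible alternative that takes direction from the classifier and uses only the magnitude of the budget term as a scale. Both helper names are hypothetical, and the second variant is an interpretation, not necessarily what the attached code does.

```mql5
//--- Hypothetical helpers for illustration; not part of the attached headers.

// Literal product of the two signed bet sizes.
double NaiveProduct(const double prob_bet, const double budget_bet)
  {
   return(prob_bet * budget_bet);
  }

// Direction from the classifier, magnitude scaled by |budget_bet|.
// Assumption: the budget term is meant as an exposure scale in [0, 1].
double DirectionalProduct(const double prob_bet, const double budget_bet)
  {
   return(prob_bet * MathAbs(budget_bet));
  }

void OnStart()
  {
   double prob_bet   = -0.6;   // classifier says short
   double budget_bet = -0.5;   // book is net short as well
   PrintFormat("naive product       = %.2f", NaiveProduct(prob_bet, budget_bet));       // 0.30
   PrintFormat("directional product = %.2f", DirectionalProduct(prob_bet, budget_bet)); // -0.30
  }
```

Whichever convention is used, it helps to log both inputs and the combined value through the diagnostics path so that an unexpected sign flip is visible in the journal.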

Conclusion

The four MQL5 implementations in this article are a direct port of the Python afml.bet_sizing module, preserving the mathematical behavior at the cost of rewriting every piece of statistical infrastructure that Python inherits from NumPy and SciPy. The key implementation decisions worth reiterating are the following:

- Normal CDF via rational approximation. The Hart (1968) minimax rational approximation is accurate to seven significant figures and runs in a fixed number of floating-point operations. Avoid table lookups or iterative methods for this function — it is called in the innermost loop of both the probability and reserve methods.

- Sweep-line for concurrency counting. The O(N log N) sweep-line algorithm replaces the O(N²) nested loop that would result from a naive MQL5 translation of the Python DatetimeIndex overlap detection. For strategies with thousands of historical bets, the difference between a two-second initialization and a two-minute initialization is exactly this algorithm. Note that the averaging step in AvgActiveSignals remains O(N²) by design — computing a mean over active intervals requires reading signal values, not only counts.

- EF3M in OnInit, not OnTick. The mixture fitting runs 100 analytic solves, each deriving component parameters in closed form from the first three raw moments. For 2000 data points the log-likelihood evaluations dominate: plan for one to three seconds total. Cache the resulting M2NParams struct in a global variable and re-fit only when a structural break in the position distribution is detected.

- The BetSizeResult diagnostic struct. Every factor that shaped the final position size — the raw pre-averaging signal, the post-averaging signal, the active long and short counts, the imbalance c_t, and the limit price — is returned alongside the bet size itself. Use this struct to build a tick-level audit trail that makes the sizing behavior fully transparent during live monitoring and post-trade analysis.

- Concurrency correction is mandatory for triple-barrier labels. The InpAvgActive=true flag must be the default for any EA built on the labeling pipeline from Parts 2 through 5 of this series. The alternative — summing simultaneous positions — produces exposure that grows proportionally to signal density, which is systematic overexposure during exactly the high-conviction periods when the model is most active.

- Angle-bracket includes are required. All four header files must be placed in MQL5\Include\BetSizing. Any consuming file (indicator, EA, or script) must reference them with angle-bracket syntax (#include <BetSizing\BetSizing.mqh>). The quoted form resolves the path relative to the including file's own directory, so compilation fails with a missing-file error when the headers are not present there.

Part 14 takes the five-file MQL5 system built here and connects it to the CPCV backtesting framework running inside the MetaTrader 5 Strategy Tester. The probability estimates that flow into BetSizeProbability are themselves subject to systematic biases; Part 12 covers how to characterize and correct them. Part 11 describes the Kelly multiplier, which scales the bet_size output to account for the payoff ratio.

Attached Files

| # | File | Place in | Depends On | Description |
|---|---|---|---|---|
| 1. | BetSizingUtils.mqh | MQL5\Include\BetSizing | none | NormCDF, NormICDF, NormPDF, RawMoments, SweepLineActiveCounts, BetSizeResult struct, Clamp, MathSign. |
| 2. | EF3M.mqh | MQL5\Include\BetSizing | BetSizingUtils.mqh | M2NParams struct, DeriveComponentParams (analytic 3-moment solve), FitM2N (multi-start), MixtureCDF, ReserveBetSize. |
| 3. | Ch10Snippets.mqh | MQL5\Include\BetSizing | BetSizingUtils.mqh | GetSignal, AvgActiveSignals, DiscreteSignal, SigmoidBetSize, PowerBetSize, GetW, LimitPrice. |
| 4. | BetSizing.mqh | MQL5\Include\BetSizing | Ch10Snippets.mqh, EF3M.mqh | BetSizeProbability, BetSizeDynamic, BetSizeBudget, BetSizeReserve, SeedBudgetMaxima. User-facing orchestration layer. |
| 5. | BetSizingEA.mq5 | MQL5\Experts\ | BetSizing.mqh | Complete Expert Advisor. Runtime method selection, diagnostic logging, position adjustment, EF3M warm-up initialization. |
| 6. | ch10_snippets.py | afml/bet_sizing/ | numpy, pandas, numba, scipy.stats | Snippets 10.1–10.4: get_signal, avg_active_signals, discrete_signal; sigmoid and power variants of bet_size, get_target_pos, inv_price, limit_price, get_w. |
| 7. | ef3m.py | afml/bet_sizing/ | numpy, pandas, numba, scipy.special, scipy.stats | M2N class (EF3M algorithm, multi-start parallel fitting via mp_fit); raw_moment, centered_moment, most_likely_parameters, cdf_mixture helpers. |
| 8. | bet_sizing.py | afml/bet_sizing/ | ch10_snippets.py, ef3m.py, numpy, pandas, numba, scipy.stats | User-facing orchestration layer: bet_size_probability, bet_size_dynamic, bet_size_budget, bet_size_reserve, get_concurrent_sides, cdf_mixture, single_bet_size_mixed, confirm_and_cast_to_df. |
| 9. | __init__.py | afml/bet_sizing/ | bet_sizing.py, ef3m.py | Package entry point. Re-exports twelve public symbols: bet_size_budget, bet_size_dynamic, bet_size_probability, bet_size_reserve, cdf_mixture, confirm_and_cast_to_df, get_concurrent_sides, single_bet_size_mixed, M2N, centered_moment, most_likely_parameters, raw_moment. |

Further Reading

- Lopez de Prado, M. (2018). Advances in Financial Machine Learning. John Wiley & Sons. Chapter 10.

- Lopez de Prado, M. and Foreman, M. (2014). A mixture of two Gaussians approach to mathematical portfolio oversight: The EF3M algorithm. Quantitative Finance, 14(5), 913–930.

- Hart, J. F. (1968). Computer Approximations. Wiley. (NormCDF rational approximation.)

- Beasley, J. D. and Springer, S. G. (1977). Algorithm AS 111: The percentage points of the normal distribution. Applied Statistics, 26, 118–121. (Original rational approximation for the central region of NormICDF.)

- Moro, B. (1995). The full Monte. Risk, 8(2), 57–58. (9-coefficient Chebyshev tail extension used in NormICDF.)

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
