MetaTrader 5 Machine Learning Blueprint (Part 11): Kelly Criterion, Prop Firm Integration, and CPCV Dynamic Backtesting

Table of Contents

- The Kelly Criterion: Foundation, Crossover, and Limitations

- Five Conditions That Determine Method Choice

- The Two-Stage Hybrid Architecture

- Prop Firm Integration: Dynamic w from Drawdown Budgets

- Backtesting with CPCV and Dynamic Sizing

- Conclusion

- Attached Files

Introduction

You have a bet-sizing signal from the toolkit introduced in Part 10. The signal is confidence-aware, concurrency-corrected, and discretized. What it is not yet is payoff-aware, budget-constrained, or validated across combinatorial paths. Three concrete gaps remain.

First, the sizing methods in Part 10 treat wins and losses symmetrically. A strategy that wins three dollars for every one it loses warrants a fundamentally different allocation than a symmetric bet at the same probability, and get_signal has no mechanism to express this. Second, none of the AFML methods incorporate a hard drawdown limit. When a prop firm account has consumed 70% of its daily loss capacity, the same model signal should produce a much smaller position than it would at the start of the day, and a static sizing function cannot respond to that. Third, a single backtest of a dynamically-sized strategy is a misleading performance summary. The position sizes at each bar depend on the P&L history up to that bar, which depends on every prior sizing decision, so the result is as much a function of the specific historical path as of the strategy's genuine edge.

All three gaps are addressed here. After reading, you will have:

- A precise account of when the Kelly criterion should replace or supplement get_signal, including the numerical crossover point at which the two methods diverge and the five structural conditions Kelly cannot satisfy in live financial markets.

- A two-stage hybrid architecture in which get_signal handles confidence-aware, concurrency-corrected signal sizing and a Kelly payoff multiplier applies asymmetric win/loss adjustment as a second stage, preserving the concurrency correction while adding what Kelly alone can express.

- A prop firm risk integration layer (PropFirmAwareSizer) in which the sigmoid w parameter is calibrated continuously from the remaining drawdown budget under the FundedNext Stellar 2-Step rules, so that as daily or overall loss capacity is consumed, the sizing function flattens automatically without threshold logic or manual override.

- A CPCV dynamic backtest framework that simulates a fresh account state bar-by-bar through each of the φ[N, k] combinatorial paths, producing a distribution of equity curves and a PBO audit rather than the single path-dependent result that standard backtesting provides.

Each component comes with practical limitations that the relevant sections make explicit.

This article is Part 11 of the MetaTrader 5 Machine Learning Blueprint series. Part 10 introduced the four AFML bet-sizing methods. Readers who have not completed Part 10 should do so before proceeding, as this article assumes familiarity with get_signal, avg_active_signals, bet_size_dynamic, and the concurrency correction framework. The Unified Validation Pipeline article established the V-in-V, CPCV, and CSCV infrastructure that this article draws on directly: the φ[N, k] combinatorial path structure used in Section 5 comes from CPCV; the PBO audit at the end of that section is the CSCV procedure described in detail there; and the V-in-V three-layer partitioning governs how the win/loss ratio estimate and Kelly fraction should be derived from historical data without overfitting to the same equity curves that the sizing system will be evaluated on. Parts 8 and 9 built the HPO and production pipeline infrastructure whose _WeightedEstimator and clf_hyper_fit components produce the model whose probabilities the sizing methods in Part 10 and this article consume.

The Kelly Criterion: Foundation, Crossover, and Limitations

The Kelly criterion occupies a special place in the bet-sizing literature. It is one of the few sizing rules with a rigorous theoretical optimality property: for a sequence of independent bets with known payoffs and probabilities, the Kelly fraction maximizes the expected logarithm of terminal wealth, and no alternative fractional betting strategy does better asymptotically. Understanding how it relates to the AFML methods, and where the relationship breaks down, is essential for constructing a defensible production sizer.

The Theoretical Foundation

Kelly (1956) derived the optimal bet fraction by maximizing the expected log-growth rate over many repeated bets. For a bet that wins amount b with probability p and loses 1 unit with probability q = 1 − p, the formula is:

f* = (p*b - q) / b

For symmetric payoffs (b = 1), this simplifies to:

f* = 2p - 1

This is linear in p: a probability of 0.60 produces a Kelly fraction of 0.20, a probability of 0.70 produces 0.40, and so on. The formula is zero at p = 0.5 (no edge, no position), positive for p > 0.5, and negative for p < 0.5. Importantly, it naturally produces negative fractions for strategies with negative edge, which can be interpreted as betting the other side.
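The formula is short enough to check by hand, but a small helper makes the sign behavior easy to see. The following is a minimal sketch; the function name `kelly_fraction` is an illustrative choice, not part of the Part 10 toolkit.

```python
def kelly_fraction(p: float, b: float = 1.0) -> float:
    """Full Kelly fraction f* = (p*b - q) / b for win probability p and payoff ratio b."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    if b <= 0.0:
        raise ValueError("b must be positive")
    q = 1.0 - p
    return (p * b - q) / b


# Symmetric payoffs (b = 1): the fraction is 2p - 1, so it changes sign at p = 0.5.
for p in (0.40, 0.50, 0.60, 0.70):
    print(f"p={p:.2f}  f*={kelly_fraction(p):+.2f}")
```

A negative result is the formula's way of saying the edge lies on the other side of the bet.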

The Numerical Crossover with get_signal

For binary classification with symmetric payoffs, the Kelly fraction and the get_signal output can be directly compared at the same probability level:

| p | get_signal | Kelly f* | Relative Difference |
|---|---|---|---|
| 0.50 | 0.000 | 0.000 | n/a |
| 0.55 | 0.080 | 0.100 | Kelly 25% larger |
| 0.60 | 0.162 | 0.200 | Kelly 24% larger |
| 0.65 | 0.247 | 0.300 | Kelly 22% larger |
| 0.70 | 0.337 | 0.400 | Kelly 19% larger |
| 0.75 | 0.436 | 0.500 | Kelly 15% larger |
| 0.80 | 0.547 | 0.600 | Kelly 10% larger |
| 0.87 | 0.729 | 0.740 | Kelly 2% larger; near crossover |
| 0.90 | 0.818 | 0.800 | get_signal 2% larger |
| 0.95 | 0.961 | 0.900 | get_signal 7% larger |
| 0.99 | 1.000 | 0.980 | get_signal 2% larger |

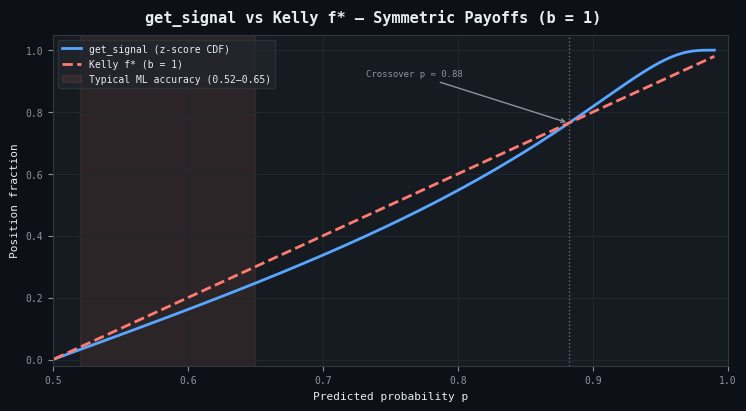

Figure 1. The structural disagreement between get_signal and the Kelly criterion under symmetric payoffs (b = 1)

Kelly is linear in p; get_signal passes through a z-score transform whose denominator √(p(1−p)) collapses as p approaches 1, causing the CDF output to saturate faster. The two curves cross near p ≈ 0.88. The shaded band marks the 0.52–0.65 range where production financial ML classifiers typically operate. Throughout this range Kelly recommends 20–25% more exposure at every probability level, and this is precisely the region where probability estimates are least reliable and overconfidence most dangerous. Above the crossover the relationship inverts: get_signal saturates toward 1.0 while Kelly remains linear, so at p = 0.95 get_signal allocates 0.96 versus Kelly's 0.90. The crossover is not a tuning parameter; it is a fixed consequence of the two functional forms and holds regardless of the asset, timeframe, or model.

The two methods cross over near p ≈ 0.88. Below that level, Kelly is consistently more aggressive. Above it, get_signal is more aggressive. This crossover is a structural consequence of the different mathematical forms: Kelly is linear in p, while the z-score denominator sqrt(p*(1−p)) collapses toward zero as p approaches 1, causing the z-score to grow super-linearly and the normal CDF to saturate rapidly.
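The crossover can be located numerically rather than read off a chart. The sketch below uses only the standard library and assumes the two-class form of get_signal described above, m = 2Φ(z) − 1 with z = (p − 0.5) / √(p(1 − p)); the helper names are illustrative.

```python
import math


def get_signal_binary(p: float) -> float:
    """Two-class signal: 2 * Phi(z) - 1 with z = (p - 0.5) / sqrt(p * (1 - p))."""
    z = (p - 0.5) / math.sqrt(p * (1.0 - p))
    phi = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))  # standard normal CDF
    return 2.0 * phi - 1.0


def kelly_symmetric(p: float) -> float:
    """Kelly fraction for symmetric payoffs (b = 1)."""
    return 2.0 * p - 1.0


def crossover(lo: float = 0.60, hi: float = 0.95, tol: float = 1e-10) -> float:
    """Bisection on (Kelly - get_signal), which is positive at lo and negative at hi."""
    def gap(p: float) -> float:
        return kelly_symmetric(p) - get_signal_binary(p)

    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if gap(mid) > 0.0:
            lo = mid  # Kelly still larger: crossover lies above mid
        else:
            hi = mid  # get_signal larger: crossover lies below mid
    return 0.5 * (lo + hi)


for p in (0.55, 0.65, 0.80, 0.90, 0.95):
    print(f"p={p:.2f}  get_signal={get_signal_binary(p):.3f}  kelly={kelly_symmetric(p):.3f}")
print(f"crossover near p = {crossover():.3f}")
```

Because neither function has a free parameter, the root does not depend on the asset, the timeframe, or the model.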

This has a practical consequence for financial ML practitioners. Real out-of-sample model accuracy for financial prediction tasks typically lies in the range of 0.52 to 0.65. In precisely this range, Kelly is substantially more aggressive than get_signal: at p = 0.55, Kelly recommends about 25% more exposure. At the same time, probability estimation in this range is most subject to overfitting and model error. Kelly's aggressiveness in exactly the zone of highest uncertainty is its most significant practical liability.

The Payoff Ratio: Kelly's Decisive Structural Advantage

The comparison above assumes symmetric payoffs. In practice, this assumption is rarely satisfied. A strategy with a defined stop-loss and profit target has asymmetric payoffs by construction. A long position entered at 1.1000 with a stop at 1.0980 and a target at 1.1060 has a payoff ratio of b = 3. Kelly's full formula accounts for this directly:

f* = (p*b - q) / b = (0.55 * 3 - 0.45) / 3 = 0.40

Without the payoff ratio term, using the symmetric formula at p = 0.55 gives f* = 0.10. The payoff-aware formula recommends four times the position. get_signal cannot perform this adjustment because it takes only the probability as input, not the payoff structure. For strategies with significant payoff asymmetry, this is Kelly's definitive advantage.

Five Structural Requirements Kelly Cannot Satisfy in Financial Markets

Despite its theoretical elegance, Kelly faces five structural challenges in financial market applications.

Independence. Kelly requires i.i.d. bets. Financial labels are almost never independent; overlapping triple-barrier labels share bars of return attribution. Applying Kelly to correlated bets without correction produces systematic over-sizing proportional to the average label overlap.

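True probability. Kelly requires the true probability, not an estimate. Any probability estimate from a finite sample is subject to model error, and the growth curve is asymmetric around f*: underbetting gives up growth gradually, whereas overbetting lowers growth while raising volatility, and a stake beyond roughly twice the Kelly fraction turns expected log-growth negative, which leads to eventual ruin. Because f* is linear in p, a modest overestimate is enough to get there: believing p = 0.60 when the true value is 0.55 doubles the symmetric Kelly stake. Masters (1995) showed that under realistic estimation uncertainty, fractional Kelly (typically 0.25 to 0.5 of the full Kelly fraction) is strongly preferred to the full theoretical optimum.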

Constant payoff ratio. Kelly requires a known, constant b. In live trading, the realized win/loss ratio drifts with execution quality, market liquidity, and regime changes.

No concurrency model. Kelly sizes each bet independently as if it were the only open position. Applied naively to overlapping labels, it produces total portfolio exposure far exceeding any reasonable risk limit.

No execution output. Kelly produces no limit price, no target position, and no discretized output. It is a formula for a fraction, not an execution-ready signal.
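The "true probability" requirement above can be made concrete with the expected log-growth per bet, g(f) = p·ln(1 + b·f) + q·ln(1 − f), which is the quantity Kelly maximizes. The sketch below evaluates it at half, full, and double Kelly for a symmetric bet with p = 0.55; the function name and the chosen example values are illustrative.

```python
import math


def log_growth(f: float, p: float, b: float = 1.0) -> float:
    """Expected log-growth per bet when staking fraction f of wealth."""
    if not 0.0 <= f < 1.0:
        raise ValueError("f must lie in [0, 1)")
    return p * math.log1p(b * f) + (1.0 - p) * math.log1p(-f)


p, b = 0.55, 1.0
f_star = (p * b - (1.0 - p)) / b  # full Kelly fraction for this bet

for label, f in (("half Kelly", 0.5 * f_star),
                 ("full Kelly", f_star),
                 ("double Kelly", 2.0 * f_star)):
    print(f"{label:12s} f={f:.2f}  growth per bet={log_growth(f, p, b):+.5f}")
```

Half Kelly keeps most of the growth of the full fraction, while double Kelly, which is what a model reporting p = 0.60 would stake here, has slightly negative expected growth. That asymmetry is the usual argument for fractional Kelly.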

Five Conditions That Determine Method Choice

Given the landscape above, the choice of sizing method is determined by the structure of the strategy, the model output, data availability, and the execution environment. The following five conditions provide a systematic decision framework.

Condition 1: What does the model output?

If the model outputs class probabilities, begin with bet_size_probability. If it outputs a continuous forecast price, use bet_size_dynamic. If it outputs only a binary direction with no confidence, use bet_size_budget. These three method families address three structurally different problem types and are not interchangeable.

Condition 2: Do labels overlap?

For any strategy using triple-barrier labels with a non-trivial holding period, labels almost certainly overlap. In this case, average_active=True in bet_size_probability is not optional; it is the correct implementation. Omitting it is equivalent to assuming your labels never overlap, which will produce systematic over-sizing during periods of high signal density.

Condition 3: Are payoffs asymmetric?

If the strategy has a well-characterized stop-loss and target, and the average win-to-loss ratio deviates materially from 1.0, Kelly's payoff-ratio term deserves to be incorporated. Use get_signal for the first-stage signal sizing (which handles concurrency) and Kelly as a second-stage multiplier that adjusts for payoff asymmetry. This is the two-stage hybrid described in the next section.

Condition 4: How much historical data is available?

The EF3M reserve method requires several hundred bets at minimum, preferably several thousand, to produce stable mixture parameter estimates. For shorter histories, the budget method or probability method will be more robust. Use bet_size_reserve when the strategy has a long, stable production history whose position-imbalance distribution can be characterized reliably.

Condition 5: Is there a hard risk budget constraint?

Prop firm challenges, risk-budgeted accounts, and institutional mandates impose hard limits on drawdown. None of the four AFML methods directly incorporates such constraints. In these contexts, the w parameter of bet_size_dynamic provides a natural control lever: by calibrating w from the remaining drawdown budget rather than from a fixed target divergence, the sigmoid function flattens as the budget is consumed, automatically reducing position sizes in proportion to available risk capacity. This connection is developed in Section 4.
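The five conditions can be read as a checklist. The sketch below encodes them as a small helper; `StrategyProfile`, `recommend_sizing`, and both thresholds (`asymmetry_tol`, `min_bets_for_reserve`) are hypothetical names and values chosen for illustration, since the text only says "materially" and "several hundred ... preferably several thousand".

```python
from dataclasses import dataclass


@dataclass
class StrategyProfile:
    model_output: str          # "probability", "forecast_price", or "direction"
    labels_overlap: bool
    avg_win_loss_ratio: float
    n_historical_bets: int
    hard_risk_budget: bool


def recommend_sizing(profile, asymmetry_tol=0.2, min_bets_for_reserve=1000):
    """Return the sizing components suggested by the five conditions (thresholds assumed)."""
    base = {
        "probability": "bet_size_probability",
        "forecast_price": "bet_size_dynamic",
        "direction": "bet_size_budget",
    }
    if profile.model_output not in base:
        raise ValueError(f"unknown model output: {profile.model_output!r}")
    plan = [base[profile.model_output]]                                   # Condition 1
    if profile.model_output == "probability" and profile.labels_overlap:  # Condition 2
        plan.append("average_active=True")
    if abs(profile.avg_win_loss_ratio - 1.0) > asymmetry_tol:             # Condition 3
        plan.append("Kelly payoff multiplier (Stage 2)")
    if profile.n_historical_bets >= min_bets_for_reserve:                 # Condition 4
        plan.append("bet_size_reserve is an option")
    if profile.hard_risk_budget:                                          # Condition 5
        plan.append("calibrate w from the remaining drawdown budget")
    return plan


profile = StrategyProfile("probability", True, 1.5, 300, True)
print(recommend_sizing(profile))
```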

The Two-Stage Hybrid Architecture

The analysis above points toward a natural two-stage architecture that captures the strengths of each approach while avoiding their respective blind spots. Stage 1 handles signal-level sizing with correct concurrency adjustment. Stage 2 handles portfolio-level adjustment for payoff asymmetry.

Stage 1: Signal-Level Sizing with get_signal

The first stage uses bet_size_probability with average_active=True to produce a signal that is proportional to prediction confidence, adjusted for concurrent label overlap, and discretized to prevent overtrading. Call this signal s. It captures everything the probability and timestamp information can tell us about the appropriate position size.

Stage 2: Kelly Payoff Multiplier

The second stage computes a Kelly multiplier that adjusts s for payoff asymmetry. The multiplier is the ratio of the fractional Kelly fraction to the get_signal output at the same probability:

```python
from scipy.stats import norm
import numpy as np


def kelly_payoff_multiplier(prob, avg_win_loss_ratio=1.0, kelly_fraction=0.5,
                            max_amplification=1.5):
    """
    Kelly multiplier relative to get_signal at the same probability.

    b = 1 (symmetric)         → multiplier ≈ kelly_fraction (≈ 1.0 only at full Kelly)
    b sufficiently above 1    → multiplier > 1.0 at moderate probabilities
    Kelly f* ≤ 0              → multiplier = 0.0 (no edge; close position)
    """
    b = avg_win_loss_ratio
    q = 1.0 - prob
    f_star = (prob * b - q) / b
    if f_star <= 0:
        return 0.0  # Kelly says no edge: close position
    # get_signal at the same probability (two-class form)
    z = (prob - 0.5) / np.sqrt(prob * (1 - prob))
    signal = max(2 * norm.cdf(z) - 1, 1e-6)  # floor avoids division by zero near p = 0.5
    raw = (kelly_fraction * f_star) / signal
    return float(np.clip(raw, 0.0, max_amplification))
```

When payoffs are symmetric (b = 1) and full Kelly is used (kelly_fraction = 1.0), the multiplier stays close to 1.0, from about 1.25 at p = 0.55 to about 0.98 at p = 0.90, because Kelly and get_signal nearly agree. At the default half-Kelly it is half that (roughly 0.5 to 0.6), so with symmetric payoffs the second stage scales the Stage 1 signal down rather than leaving it unchanged. When b is sufficiently above 1 (favorable payoffs), the multiplier exceeds 1.0 at moderate probabilities, amplifying the Stage 1 signal to reflect the better payoff structure. When Kelly's formula produces a non-positive fraction (the bet is economically unattractive despite a probability above 0.5, which happens when b ≤ q/p, that is, when the average win is too small relative to the average loss for the probability edge to offset), the multiplier returns 0.0, closing the position regardless of the Stage 1 signal. The max_amplification parameter (default 1.5) prevents Kelly from suggesting more than 50% above the Stage 1 signal at any single observation, absorbing errors in the payoff ratio estimate.

The Combined Signal

The final position size is the product of the Stage 1 signal, the payoff multiplier, and any additional portfolio-level constraint factors:

final_size = stage1_signal × kelly_multiplier × constraint_factors

This separates the concerns cleanly. The probability model and concurrency adjustment live in Stage 1. The payoff structure lives in Stage 2. Risk constraints (drawdown limits, position caps, news-window adjustments) live in additional multiplicative factors applied after Stage 2.
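The product can be sketched end to end with the standard library. This is a simplified illustration, not the attached implementation: Stage 1 here is the plain two-class signal without concurrency averaging, and the helper names and the final discretization step are assumptions.

```python
import math


def stage1_signal(p: float) -> float:
    """Two-class get_signal form; 2*Phi(z) - 1 equals erf(z / sqrt(2))."""
    z = (p - 0.5) / math.sqrt(p * (1.0 - p))
    return math.erf(z / math.sqrt(2.0))


def kelly_multiplier(p, b=1.0, kelly_fraction=0.5, max_amplification=1.5):
    """Fractional Kelly divided by the Stage 1 signal, clipped to [0, max_amplification]."""
    f_star = (p * b - (1.0 - p)) / b
    if f_star <= 0.0:
        return 0.0  # no edge after accounting for the payoff ratio
    signal = max(stage1_signal(p), 1e-6)
    return min(max(kelly_fraction * f_star / signal, 0.0), max_amplification)


def discretize(size: float, step: float = 0.05) -> float:
    """Round to the nearest step and keep the result inside [-1, 1]."""
    return max(-1.0, min(1.0, round(size / step) * step))


def final_size(p, side, b, constraint_factors=(), step=0.05):
    """stage1_signal x kelly_multiplier x constraint_factors, then discretized."""
    size = side * stage1_signal(p) * kelly_multiplier(p, b)
    for factor in constraint_factors:  # e.g. drawdown, profit-target, news-window factors
        size *= factor
    return discretize(size, step)


print(final_size(0.60, +1, b=1.0))
print(final_size(0.60, +1, b=3.0))
print(final_size(0.60, +1, b=3.0, constraint_factors=(0.40,)))
print(final_size(0.55, +1, b=0.5))  # Kelly fraction is negative, so the position is closed
```

In this stripped-down form, whenever the multiplier is not clipped the product reduces to kelly_fraction × f*. What Stage 1 contributes beyond that in the full pipeline is the concurrency averaging and discretization, which this sketch leaves out.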

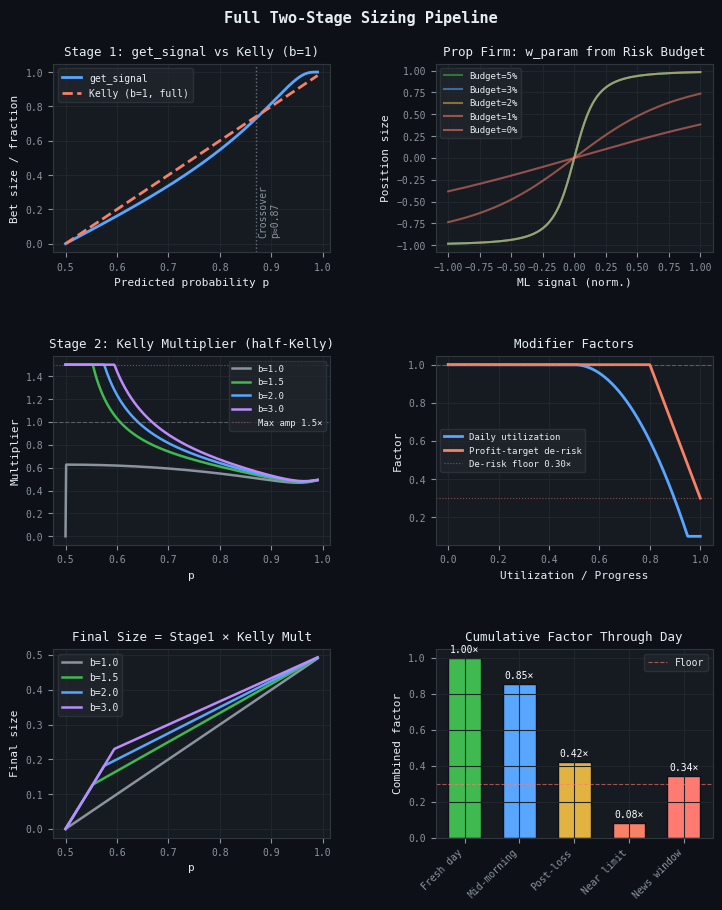

Figure 2. Full two-stage pipeline visualization

- Top-left, get_signal vs Kelly (b=1): The two methods agree near p≈0.88 and diverge on either side. Kelly is more aggressive below the crossover; get_signal is more aggressive above it.

- Center-left, Kelly Multiplier (half-Kelly): The multiplier as a function of p for four payoff ratios b=1.0, 1.5, 2.0, 3.0. At symmetric payoffs (b=1) the multiplier is roughly flat in p; at higher b it amplifies the Stage 1 signal materially at moderate probabilities.

- Bottom-left, Final Size = Stage1 × Kelly Mult: The combined two-stage position size after applying the payoff multiplier to the get_signal output, for the same four payoff ratios.

- Top-right, Prop Firm w_param from Risk Budget: Sigmoid sizing curves produced by calibrating w from the remaining drawdown budget. As the budget narrows, w increases and the function flattens, reducing position sizes automatically.

- Center-right, Modifier Factors: Daily utilization and profit-target de-risking factors as a function of progress. Both are multiplicative; neither requires threshold logic.

- Bottom-right, Cumulative Factor Through Day: The product of all modifier factors at five representative points in a simulated trading day, illustrating how the combined factor compounds as conditions change.

Prop Firm Integration: Dynamic w from Drawdown Budgets

The two-stage architecture operates independently of any external risk constraint. In prop firm trading, however, the account operates under hard drawdown limits that change continuously as the day progresses. A sizing system that ignores this dynamic is gambling with a fixed stake at a table whose limits are shifting.

The w parameter of bet_size_sigmoid provides the connection. Rather than calibrating w once from a fixed target divergence, we calibrate it continuously from the remaining drawdown budget. As the budget shrinks, w increases, flattening the sigmoid function and causing the same ML signal to produce a smaller position. The strategy automatically de-risks as its cushion narrows.

The FundedNext Stellar 2-Step Rules

To make this concrete, consider the FundedNext Stellar 2-Step Challenge, a two-phase evaluation in which a trader must demonstrate consistent profitability under hard daily and overall drawdown limits before receiving a funded account.

| Rule | Value | Key Detail |
|---|---|---|
| Daily loss limit | 5% of initial balance | Expands by intraday realized profit; includes swaps and commissions |
| Overall maximum loss | 10% of initial balance | Balance/equity floor fixed at 90% of initial; floor does not move |
| Phase 1 target | 8% profit | No time limit; minimum 5 trading days |
| Phase 2 target | 5% profit | No time limit; minimum 5 trading days |
| News window (funded) | ±5 minutes | Only 40% of profits within this window count toward balance |
| Max leverage | 1:100 | Commission: $5/lot, counts against daily drawdown |

The daily limit is dynamic and commonly misunderstood. On a $100,000 account, the base daily limit is $5,000, but if you have made $2,000 by noon, the limit expands to $7,000: your intraday realized profit provides additional cushion. Swaps, commissions, and fees all count against it. The overall floor is permanently anchored at $90,000; making profits increases your buffer above the floor but does not move the floor itself.
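The arithmetic of the two limits can be written down directly from the table. The sketch below is an illustration of those rules as stated here; the function name and the returned keys are hypothetical.

```python
def remaining_risk_budget(initial_balance, current_equity, intraday_realized_pnl,
                          daily_loss_used, daily_limit_pct=0.05, overall_limit_pct=0.10):
    """Dollar room left under the daily and overall limits, and whichever binds first."""
    # Daily limit: 5% of initial balance, expanded by today's realized profit (never reduced).
    daily_limit = initial_balance * daily_limit_pct + max(0.0, intraday_realized_pnl)
    daily_remaining = max(0.0, daily_limit - daily_loss_used)
    # Overall floor: fixed at 90% of the initial balance; profits do not move it.
    overall_floor = initial_balance * (1.0 - overall_limit_pct)
    overall_remaining = max(0.0, current_equity - overall_floor)
    return {
        "daily_limit": daily_limit,
        "daily_remaining": daily_remaining,
        "overall_remaining": overall_remaining,
        "binding": min(daily_remaining, overall_remaining),
    }


# The example from the text: $2,000 realized by noon on a $100,000 account.
noon = remaining_risk_budget(100_000.0, 102_000.0, 2_000.0, 0.0)
print(noon)

# An account already close to the overall floor: the overall limit binds, not the daily one.
late = remaining_risk_budget(100_000.0, 93_000.0, 0.0, 1_000.0)
print(late)
```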

The w-param Calibration Chain

The chain connecting the remaining risk budget to the w-param is:

```python
# Excerpt from inside the sizing routine (hence the bare return in Step 2).

# Step 1: remaining risk budget from account state
daily_limit = initial_balance * 0.05 + max(0, intraday_realized_pnl)
daily_remaining = max(0, daily_limit - daily_loss_used)
overall_remaining = max(0, current_equity - overall_floor)
risk_budget_pct = min(daily_remaining, overall_remaining) / initial_balance

# Step 2: maximum allowable position at full signal strength
# position × stop_loss ≤ risk_budget × safety_factor
cal_bet_size = (risk_budget_pct * safety_factor) / stop_loss_pct
cal_bet_size = min(0.98, max(0.0, cal_bet_size))
if cal_bet_size < 0.02:
    return 0.0  # budget exhausted; no new positions

# Step 3: define the signal level at which the sizer should output
# cal_bet_size. Typically set to 0.95 (near-full conviction).
cal_divergence = 0.95

# Step 4: calibrate w so that at cal_divergence signal strength,
# bet_size_sigmoid(w, cal_divergence) = cal_bet_size
w_param = get_w(price_div=cal_divergence, m_bet_size=cal_bet_size, func='sigmoid')

# Step 5: apply to the ML signal from Stage 1
prop_sized = bet_size_sigmoid(w_param, ml_signal)
```

The safety_factor (default 0.70) reserves 30% of the calculated budget for frictions: the $5/lot commission, overnight swaps, and gap risk that prevents guaranteed exit at the stop-loss price. This buffer absorbs the costs that FundedNext counts against the daily limit before the trader has made any sizing decision.

The cal_divergence parameter (default 0.95) defines the signal strength at which the sizer should output its maximum allowed position. Setting it to 0.95 rather than 1.0 means that the sizer reaches near-full allocation at high but not perfect conviction, leaving a small margin for signals that exceed the calibration point.
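To see the flattening without the toolkit installed, Steps 2 to 5 can be reproduced with the AFML sigmoid form m = x / √(w + x²) and its inverse w = x²(m⁻² − 1). The functions below are standalone stand-ins written for this illustration (`sigmoid_w`, `sigmoid_bet_size`, `prop_sized` are assumed names); the toolkit's get_w and bet_size_sigmoid may differ in detail.

```python
import math


def sigmoid_w(price_div: float, m_bet_size: float) -> float:
    """Solve m = x / sqrt(w + x^2) for w at the calibration point (x, m)."""
    return price_div ** 2 * (m_bet_size ** -2 - 1.0)


def sigmoid_bet_size(w_param: float, price_div: float) -> float:
    """Sigmoid sizing function m = x / sqrt(w + x^2)."""
    return price_div / math.sqrt(w_param + price_div ** 2)


def prop_sized(ml_signal, risk_budget_pct, stop_loss_pct=0.01,
               safety_factor=0.70, cal_divergence=0.95):
    """Steps 2-5 of the chain: budget -> max position -> w -> sized signal."""
    cal_bet_size = min(0.98, max(0.0, risk_budget_pct * safety_factor / stop_loss_pct))
    if cal_bet_size < 0.02:
        return 0.0  # budget exhausted; no new positions
    w_param = sigmoid_w(cal_divergence, cal_bet_size)
    return sigmoid_bet_size(w_param, ml_signal)


# Same ML signal, shrinking budget: the position shrinks with it.
for budget in (0.05, 0.01, 0.005, 0.001, 0.0002):
    print(f"budget={budget:.4f}  size={prop_sized(0.60, budget):.3f}")
```

A larger w means a flatter curve, so the recalibration alone is what reduces the position; no explicit threshold is involved until the budget falls below the 0.02 cutoff. The exact sizes depend on the stop-loss and safety-factor values assumed here.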

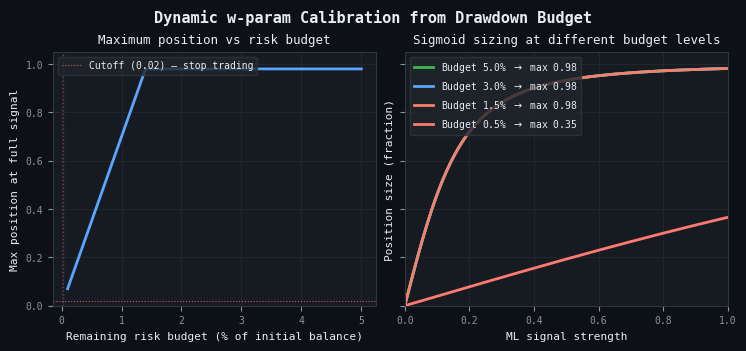

Figure 3. Dynamic w-parameter calibration from the remaining drawdown budget

- Left panel, maximum position size vs. budget: The maximum position at full signal strength declines linearly as the risk budget shrinks, with a hard cutoff once the calibrated maximum falls below 0.02 (a remaining budget of roughly $29 on a $100,000 account at a 1% stop and the default 0.70 safety factor), below which the sizer refuses new entries.

- Right panel, sigmoid curves at different budget levels: The same ML signal of 0.6 maps to a position size of roughly 0.58 when the budget is intact (green, 5.0%) but only 0.19 when the budget is nearly exhausted (red, 0.5%). The flattening is continuous, not threshold-based, ensuring that as drawdown capacity is consumed, every subsequent signal automatically produces a smaller position through a single recalibrated w parameter.

Additional Modifiers

Two additional multiplicative modifiers address rules the w-param calibration does not capture.

The profit-target proximity de-risking factor begins reducing position sizes at 80% of the phase target, scaling linearly to 30% at the target itself. FundedNext has no consistency rule, but a strategy that has earned 7% of an 8% Phase 1 target and then gives back 3% in a single bad day has wasted all time already invested in the phase. The 30% floor at 100% progress allows continued trading to satisfy the minimum trading days rule without material drawdown risk.
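The de-risking rule is a piecewise-linear function of progress toward the target. A minimal sketch follows, with `profit_target_factor` as an illustrative name and progress defined as current profit divided by the phase target.

```python
def profit_target_factor(progress: float, start: float = 0.80, floor: float = 0.30) -> float:
    """1.0 until `start`, then linear down to `floor` at 100% of the phase target."""
    if progress <= start:
        return 1.0
    if progress >= 1.0:
        return floor
    return 1.0 - (1.0 - floor) * (progress - start) / (1.0 - start)


# 7% earned against an 8% Phase 1 target is 87.5% progress.
for progress in (0.50, 0.80, 0.875, 0.90, 1.00):
    print(f"progress={progress:.3f}  factor={profit_target_factor(progress):.4f}")
```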

The news-window adjustment (funded stage only) multiplies position sizes by 0.40 during the ±5 minute window around high-impact news events. FundedNext credits only 40% of profits made in this window. If sized as if it will contribute the full P&L, the strategy will systematically fall short of payout targets. The 0.40 factor sizes positions to deliver their expected contribution to the account balance after the profit-credit haircut. Note that this adjustment is conservative: losses within the news window are counted at their full value against the drawdown limit, while only 40% of profits are credited. The 0.40 sizing factor addresses the profit asymmetry but does not eliminate the risk that a full loss within the window consumes more drawdown budget than the corresponding profit would replenish. Traders should consider whether to avoid the news window entirely rather than trade at reduced size.

Figure 4. News window profit-credit asymmetry under FundedNext rules

- Normal conditions (blue): With payoff ratio b = 1.5, the expected contribution per unit risk grows linearly with win probability; the strategy breaks even at p = 0.40.

- News window, full size (red dashed): Only 40% of profits are credited, shifting the breakeven point to approximately p = 0.63 and creating a zone (shaded red) where a strategy with genuine edge under normal conditions has negative expected contribution inside the window.

- News window, reduced size (orange dotted): Scaling position size to 40% reduces the magnitude of both gains and losses but cannot eliminate the structural asymmetry. Traders whose model accuracy falls inside the shaded region are better served by avoiding the news window entirely.
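The breakeven shift described above follows from crediting wins at a fraction c while counting losses in full: the expected contribution per unit of risk is p·b·c − (1 − p), which is zero at p = 1 / (1 + b·c). The sketch below checks both breakeven points; the function names are illustrative.

```python
def expected_contribution(p, b, profit_credit=1.0, size=1.0):
    """Expected credited P&L per unit of risk; wins are haircut, losses count in full."""
    return size * (p * b * profit_credit - (1.0 - p))


def breakeven_probability(b, profit_credit=1.0):
    """Win probability at which the expected credited contribution is zero."""
    return 1.0 / (1.0 + b * profit_credit)


b = 1.5
print(f"normal breakeven:      p = {breakeven_probability(b):.3f}")
print(f"news-window breakeven: p = {breakeven_probability(b, 0.40):.3f}")

# A model with p = 0.55 has edge normally but not inside the window, at any size.
for size in (1.0, 0.40):
    print(f"size={size:.2f}  in-window contribution={expected_contribution(0.55, b, 0.40, size):+.3f}")
```

Scaling the position changes the magnitude of the expected contribution but not its sign, which is why reduced size cannot repair a probability that sits inside the shaded region.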

Usage

```python
from datetime import datetime

from prop_firm_sizer import PropFirmAccountState, Phase, make_stellar_2step_sizer

# Initialize once per account
state = PropFirmAccountState(
    initial_balance=100_000.0,
    phase=Phase.CHALLENGE_PHASE_1,
)
sizer = make_stellar_2step_sizer(
    stop_loss_pct=0.01,        # 1% account risk per trade at the stop
    safety_factor=0.70,        # use 70% of available budget
    avg_win_loss_ratio=1.2,    # from live trade log; update monthly
    kelly_fraction=0.5,        # half-Kelly
    step_size=0.05,
)

# On every bar
state.update(
    realized_pnl_delta=last_closed_trade_pnl,
    unrealized_pnl=total_floating_pnl,
    fees_and_swaps=today_swap_and_commission,
)
result = sizer.size(
    events=events_df,
    prob=calibrated_probs,     # probabilities from your classifier
    pred=primary_sides,
    state=state,
    news_times=today_news_events,
    current_time=datetime.utcnow(),
    average_active=True,
)
position_sizes = result['final_size']
```

The output DataFrame contains a complete diagnostic record: the Stage 1 ML signal, the calibrated w-param, the maximum allowed position at full signal strength, each modifier factor individually, and the final discretized position size. This transparency is essential in a prop firm context where a single limit breach has severe consequences. Every factor that shaped the final position can be audited.

Backtesting with CPCV and Dynamic Sizing

A natural question arises when designing the backtest for a dynamically-sized strategy: how do you evaluate it without reintroducing the overfitting you have worked to eliminate? Standard backtesting applies a fixed sizing function to a fixed set of historical predictions. Dynamic sizing is stateful: the position size on bar t depends on the entire P&L history up to bar t, which depends on every previous sizing decision. You cannot decouple the backtest from the sizer without misrepresenting how the strategy would actually behave.

The Core Challenge

CPCV produces φ paths, each covering the full timeline once with OOF predictions. On path 1, bar t's prediction came from split S3. On path 2, the same bar's prediction came from split S7. The model that produced the prediction is different because it was trained on a different subset of data. This means the probability at bar t is path-dependent, and therefore so is the bet size, and therefore so is the account state. You cannot pre-compute a single sizing grid and apply it to all paths.
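The number of paths follows from the CPCV construction: with N folds and k test folds there are C(N, k) train/test splits, and every fold appears in the test set of (k/N)·C(N, k) of them, which is φ[N, k]. A minimal sketch (the function names are illustrative):

```python
from math import comb


def n_splits(n_folds: int, n_test_folds: int) -> int:
    """Number of train/test splits: C(N, k)."""
    return comb(n_folds, n_test_folds)


def n_backtest_paths(n_folds: int, n_test_folds: int) -> int:
    """phi[N, k] = (k / N) * C(N, k), the number of full-timeline backtest paths."""
    if not 0 < n_test_folds < n_folds:
        raise ValueError("need 0 < k < N")
    return comb(n_folds, n_test_folds) * n_test_folds // n_folds


for n, k in ((6, 2), (10, 2), (6, 3)):
    print(f"N={n}, k={k}: {n_splits(n, k)} splits, {n_backtest_paths(n, k)} paths")
```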

Architecture: Fresh State Per Path

The solution is to embed the stateful simulation inside the CPCV path framework. For each of the φ[N, k] combinatorial backtest paths, a fresh PropFirmAccountState is initialized and run bar-by-bar through the simulation loop. Each path begins with the same initial balance and drawdown limits but receives a different sequence of OOF predictions, because each path's predictions come from a different combination of training splits. Paths are simulated in parallel using joblib.Parallel.

```python
from cpcv_dynamic_backtest import CPCVDynamicBacktest, BacktestConfig
from prop_firm_sizer import Phase
from afml.cross_validation.combinatorial import (
    CombinatorialPurgedCV, optimal_folds_number
)

N, k = optimal_folds_number(
    n_observations=len(X),
    target_train_size=int(len(X) * 0.60),
    target_n_test_paths=5,
)
cv_gen = CombinatorialPurgedCV(
    n_folds=N, n_test_folds=k, t1=t1, pct_embargo=0.01
)
cfg = BacktestConfig(
    initial_balance=100_000.0,
    phase=Phase.CHALLENGE_PHASE_1,
    pip_value=10.0,
    lot_size=0.01,
    n_jobs=-1,  # all available cores
)
backtest = CPCVDynamicBacktest(
    cv_gen=cv_gen,
    estimator=estimator,
    sizer=sizer,
    cfg=cfg,
    close_prices=close_prices,
    primary_sides=sides,
)
backtest.run(X=X, y=y, events=events, price_returns=returns)
backtest.distribution_report()
backtest.pbo_audit(n_folds=8)
backtest.plot_equity_distribution()
```
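The "fresh state per path" idea can be shown in isolation with a toy loop. Everything in this sketch is a simplified assumption made for illustration: `ToyAccountState` stands in for PropFirmAccountState, `toy_sizer` for the real sizer, only the overall floor is enforced, and paths run sequentially rather than through joblib.

```python
from dataclasses import dataclass


@dataclass
class ToyAccountState:
    """Hypothetical, stripped-down stand-in for PropFirmAccountState."""
    initial_balance: float
    equity: float
    breached: bool = False


def toy_sizer(prob, state, overall_limit_pct=0.10):
    """Symmetric Kelly scaled by the share of the overall loss buffer still left."""
    buffer_total = state.initial_balance * overall_limit_pct
    buffer_left = max(0.0, state.equity - (state.initial_balance - buffer_total))
    return max(0.0, 2.0 * prob - 1.0) * min(1.0, buffer_left / buffer_total)


def simulate_path(probs, bar_returns, sizer=toy_sizer,
                  initial_balance=100_000.0, overall_limit_pct=0.10):
    """Run one path bar by bar from a fresh state; return (equity curve, breached)."""
    state = ToyAccountState(initial_balance, initial_balance)  # nothing carries over
    floor = initial_balance * (1.0 - overall_limit_pct)
    curve = []
    for prob, ret in zip(probs, bar_returns):
        size = sizer(prob, state)                     # depends on the P&L so far
        state.equity += size * ret * initial_balance  # P&L of this bar
        curve.append(state.equity)
        if state.equity <= floor:
            state.breached = True                     # overall limit hit: path ends
            break
    return curve, state.breached


# Two paths over the same bars, differing only in their OOF probabilities.
bar_returns = [0.010, -0.020, 0.015, -0.010]
path_probs = {
    "path_1": [0.60, 0.55, 0.65, 0.58],
    "path_2": [0.57, 0.62, 0.54, 0.61],
}
results = {name: simulate_path(p, bar_returns) for name, p in path_probs.items()}
for name, (curve, breached) in results.items():
    print(name, [round(x, 2) for x in curve], "breached" if breached else "ok")
```

Because the state is created inside `simulate_path`, a breach or drawdown on one path cannot influence the sizing on another, which is the property the full framework relies on.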

What the Distribution Reveals

The distribution of φ equity curves is what makes CPCV genuinely more informative than a single backtest. A strategy whose Sharpe ratio distribution across paths is tight and positive has demonstrated robustness across many different temporal configurations of the training data. A strategy whose performance varies widely across paths is fragile: its historical result is as much a function of which data it was trained on as of any genuine edge. The dynamic sizing adds another dimension: some paths will breach the daily limit more frequently than others, revealing how the sizing system interacts with the sequential dependence of the P&L. The distribution_report method surfaces the fraction of paths that breach the daily limit, the fraction that breach the overall limit, and the median number of trading days to the phase target across successful paths.

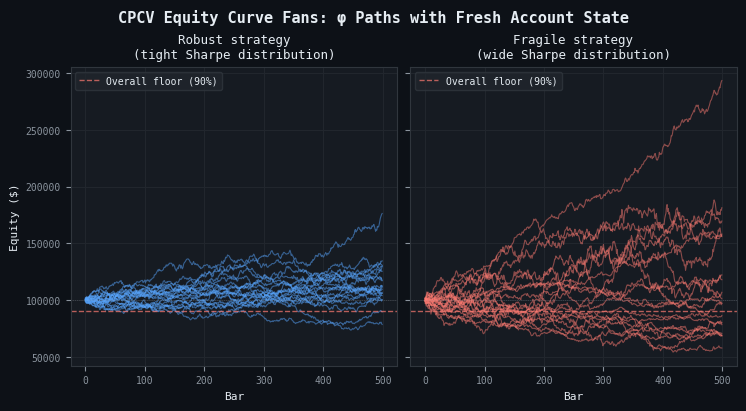

Figure 5. CPCV equity curve fans comparing robust versus fragile strategies

- Robust strategy (left, blue): Consistent drift and volatility across all 20 combinatorial paths; terminal equity tightly clustered between $110,000 and $130,000, and no path approaches the $90,000 overall floor.

- Fragile strategy (right, red): Wide dispersion in outcomes; some paths reach $160,000 while others breach the floor, demonstrating strong path dependence. The width of the fan is the visual signature of fragility—a tight fan indicates performance robust to the data partition, while a wide fan reveals that a single historical backtest could be drawn from anywhere in that spread.

The PBO Audit

The PBO (Probability of Backtest Overfitting) audit from the Unified Validation Pipeline article applies here without modification. A returns matrix is constructed from the φ path strategy returns and passed to compute_pbo. A PBO near zero means the sizing approach is not overfit to the training data: the selection of sizing parameters reliably identifies genuinely better-performing configurations. A PBO near 0.5 means the backtest result is consistent with chance. This is the quantitative audit that distinguishes a robust sizing framework from one that happened to perform well on the particular historical path that standard backtesting evaluated.
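For readers who want to see the mechanics behind the number, the sketch below is a compact, standard-library version of the CSCV procedure. It is not the series' compute_pbo: the function names are illustrative, and it assumes the returns matrix has one row per time step and one column per candidate sizing configuration, with the Sharpe ratio as the performance metric.

```python
import math
from itertools import combinations
from statistics import mean, pstdev


def sharpe(xs):
    """Unannualized Sharpe ratio; 0.0 when the series has no variation."""
    sd = pstdev(xs)
    return mean(xs) / sd if sd > 0 else 0.0


def pbo_cscv(returns, n_blocks=8):
    """Share of IS/OOS splits in which the in-sample winner ranks at or below the OOS median."""
    if n_blocks % 2 or n_blocks < 2:
        raise ValueError("n_blocks must be a positive even number")
    n_cfg = len(returns[0])
    block = len(returns) // n_blocks  # trailing rows that do not fill a block are dropped
    blocks = [range(i * block, (i + 1) * block) for i in range(n_blocks)]
    logits = []
    for is_ids in combinations(range(n_blocks), n_blocks // 2):
        is_rows = [r for b in is_ids for r in blocks[b]]
        oos_rows = [r for b in range(n_blocks) if b not in is_ids for r in blocks[b]]
        is_perf = [sharpe([returns[r][c] for r in is_rows]) for c in range(n_cfg)]
        oos_perf = [sharpe([returns[r][c] for r in oos_rows]) for c in range(n_cfg)]
        best = max(range(n_cfg), key=is_perf.__getitem__)      # in-sample winner
        rank = sum(1 for v in oos_perf if v <= oos_perf[best])  # its OOS rank, 1..n_cfg
        omega = rank / (n_cfg + 1)                              # relative rank in (0, 1)
        logits.append(math.log(omega / (1.0 - omega)))
    return sum(1 for x in logits if x <= 0.0) / len(logits)


# Toy matrix: configuration 0 is better in every block, so selection never fails.
toy = [[0.02, 0.001, -0.01] if t % 2 == 0 else [0.01, -0.001, -0.02] for t in range(16)]
print(pbo_cscv(toy, n_blocks=4))
```

On real data the columns would be the return series of the competing sizing configurations, and the interesting cases are those where the in-sample winner changes from split to split.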

Conclusion

This article has addressed the three gaps left open in Part 10. The Kelly criterion provides the payoff-ratio adjustment that get_signal cannot, but its theoretical guarantees require conditions (independence, known true probabilities, constant payoffs) that financial markets do not provide. The two-stage hybrid architecture captures Kelly's payoff awareness as a multiplier on get_signal, preserving the concurrency correction from Stage 1 while adding what Kelly alone can express. The w-param calibration chain connects the remaining drawdown budget to the sigmoid sizing function, producing a prop firm sizer that de-risks automatically as budget is consumed without threshold logic or manual override. And the CPCV dynamic backtest framework evaluates the complete pipeline (model and sizer) across φ[N, k] combinatorial paths with a fresh account state on each, replacing a single path-dependent backtest with a distribution of equity curves and a PBO audit.

None of these components is a complete solution on its own. The Kelly multiplier depends on an estimated win/loss ratio that drifts in production; the prop firm sizer encodes one specific firm's rule set; and the CPCV dynamic backtest, while structurally sound, has not been validated with empirical results in this article. Each component narrows the gap between the AFML sizing toolkit and production requirements, but the practitioner must still monitor, recalibrate, and validate continuously.

Key takeaways:

- Kelly and get_signal agree at moderate probabilities but diverge structurally. In the 0.52–0.65 range that characterizes most financial ML models, Kelly is more aggressive in exactly the zone where probability estimation errors are largest. Use fractional Kelly.

- Payoff asymmetry is Kelly's domain. For strategies with characterized stop-loss and target levels, incorporate the win/loss ratio through a Kelly multiplier. Do not ignore it if b deviates materially from 1.

- Connect the sizer to the risk budget. In constrained accounts, dynamic w-param calibration produces a position sizer that responds to the risk budget in real time without threshold logic or manual override.

- Validate through CPCV paths, not a single backtest. The distribution of φ[N, k] equity curves, each simulated with a fresh account state, reveals the fragility or robustness of the combined model-and-sizer system. The PBO audit provides a single, defensible number that summarizes the reliability of the entire framework.
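The budget-to-w link in the third takeaway can be sketched as follows. This is an illustration under stated assumptions, not the WParamCalibrator from prop_firm_sizer.py: it uses the AFML sigmoid m(x, w) = x / sqrt(w + x^2) and its inverse w = x^2 (m^-2 - 1), and it assumes a hypothetical linear mapping from the remaining budget fraction to the target size at a reference input x_ref. The names sigmoid_size, calibrate_w, and w_from_budget, and the defaults m_full and m_floor, are invented for this example.

```python
import math


def sigmoid_size(x: float, w: float) -> float:
    """AFML sigmoid bet size: m = x / sqrt(w + x^2), bounded in (-1, 1)."""
    return x / math.sqrt(w + x * x)


def calibrate_w(x_ref: float, m_ref: float) -> float:
    """Solve for w so that sigmoid_size(x_ref, w) == m_ref, with 0 < m_ref < 1."""
    return x_ref ** 2 * (m_ref ** -2 - 1.0)


def w_from_budget(remaining_fraction: float, x_ref: float = 0.1,
                  m_full: float = 0.95, m_floor: float = 0.05) -> float:
    """Hypothetical mapping: less remaining budget -> smaller target size -> larger w."""
    remaining = min(max(remaining_fraction, 0.0), 1.0)  # clamp to [0, 1]
    m_ref = m_floor + (m_full - m_floor) * remaining     # assumed linear schedule
    return calibrate_w(x_ref, m_ref)


if __name__ == "__main__":
    x = 0.1  # same input signal at every budget level
    for remaining in (1.0, 0.7, 0.3, 0.0):
        w = w_from_budget(remaining)
        print(f"remaining={remaining:.1f}  w={w:.5f}  size={sigmoid_size(x, w):.3f}")
```

Because w grows as the budget shrinks, the same input x produces a smaller size with no explicit threshold, which is the behavior the takeaway describes. The linear schedule is only one possible choice; a convex schedule would de-risk faster near the limit.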

Attached Files

| # | File | Description |
|---|---|---|
| 1 | prop_firm_sizer.py | Two-stage hybrid sizer with FundedNext Stellar 2-Step rule integration. Includes PropFirmAccountState, WParamCalibrator, PropFirmAwareSizer, modifier functions, and make_stellar_2step_sizer factory. |
| 2 | cpcv_dynamic_backtest.py | CPCV dynamic backtest orchestrator. Simulates fresh account state bar-by-bar across all φ[N, k] paths in parallel. Includes CPCVDynamicBacktest, BacktestConfig, simulate_path, and PBO audit integration. |
| 3 | README_prop_firm_sizer.md | Full documentation for the prop firm sizer module: parameter guide, FundedNext rule encoding, integration template, and design rationale for each component. |

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.

Great article, really enjoyed it.

Curious though—why did you go with CSCV/PBO instead of DSR for handling selection bias?

Would be interested to hear your thinking here.

That’s an excellent question, and I’m glad you’re digging into the validation layer—it’s where most production systems quietly fail.

Short answer: For evaluating a single, dynamically sized, path-dependent strategy across varying historical contexts, CPCV + PBO was the more direct and appropriate diagnostic tool in this article (rather than DSR).

DSR’s Core Strength: Correcting for Multiple Testing

The Deflated Sharpe Ratio (DSR) is designed to address selection bias when you run many trials—such as thousands of hyperparameter combinations or model variants—and then select the best-performing one. It deflates the observed Sharpe ratio by accounting for the number of independent trials, the variance of the Sharpe estimator, non-normality, and sample length, giving you a more realistic probability that the reported performance is statistically significant rather than the result of luck.

The article’s primary focus was not hyperparameter optimization of the sizing logic. Instead, the pipeline was presented as a fixed, integrated system:

The central question was: “Is this particular sizer robust across different historical contexts and path realizations?” — not “Which of 5,000 sizers performs best?”

Why CPCV/PBO Fits a Stateful, Path-Dependent Strategy

A dynamically sized strategy is inherently stateful. Tuesday’s position size depends on Monday’s P&L, which in turn depends on all prior sizing decisions and the evolving account state (PropFirmAccountState). A single historical backtest is therefore just one random draw from the full distribution of possible equity paths.

PBO is the natural companion to CPCV because it directly leverages the same combinatorial path structure to audit a single model/sizer, rather than correcting for selection bias across many competing models.

They Are Complementary, Not Competitors

I deliberately chose CPCV/PBO for this article because it matched the immediate validation task. In a full production pipeline that does involve large-scale hyperparameter optimization (as covered in earlier parts of the series), DSR remains the essential final gatekeeper after model selection.

A robust workflow would typically combine both tools:

You’ve hit exactly the right nuance. If the workflow involved grid-searching kelly_fraction, safety_factor, or max_amplification over hundreds of points, DSR would become critical to avoid being fooled by the luckiest configuration. But to answer the concrete question, “Will this specific sizer blow up a FundedNext account in 20% of parallel universes?”, PBO computed on CPCV paths is the sharper, more targeted instrument.

Thanks for taking the time to share your thoughts; I appreciate it. I went back and reread AFML more carefully, and together with your explanation it helped me understand this part much better.