Feature Engineering for ML (Part 1): Fractional Differentiation — Stationarity Without Memory Loss

Table of Contents

- Introduction

- The Problem: Integer Differentiation Destroys Memory

- The Stationarity vs. Memory Dilemma

- The Mathematics of Fractional Differentiation

- Weight Generation and Convergence

- Two Window Strategies: Expanding vs. Fixed-Width (FFD)

- Stationarity Testing with the Augmented Dickey-Fuller Test

- Finding the Optimal d*

- The afml Implementation

- Pipeline Integration

- Conclusion

- References

Introduction

Every machine learning pipeline for financial time series faces a preprocessing decision that most practitioners make without thinking: how to transform raw prices into features. The standard answer is to compute returns as first differences of log-prices. Returns are stationary, which satisfies the assumptions of most ML algorithms. But returns have a critical flaw: they erase memory. A return series contains no information about what price level the asset has visited, how far it has drifted from its long-term mean, or how its current level relates to historical support and resistance zones. Every value depends on exactly one predecessor, and everything before that predecessor is discarded.

This is not merely a theoretical concern. Equilibrium models need memory to assess how far a price process has drifted from its expected value. Mean-reversion strategies need memory to identify the mean. Trend-following strategies need memory to distinguish a true trend from noise. When integer differencing erases this memory, the ML algorithm must reconstruct it via feature engineering (lagged returns, rolling statistics, technical indicators). These features are imperfect proxies for information lost during preprocessing.

Fractional differentiation resolves this dilemma. Instead of differencing by an integer (0 for prices, 1 for returns), we difference by a real number d between 0 and 1. The result is a series that is stationary — satisfying ML assumptions — while preserving as much memory as possible from the original price series. López de Prado introduced this technique to the financial ML community in Chapter 5 of AFML, building on foundational work by Hosking (1981). This article develops the theory, explains the two implementation strategies (expanding window and fixed-width window), and walks through the production-grade Python implementation in the afml library. The next article in the series translates this engine into MQL5 for deployment on live MetaTrader 5 feeds.

The Problem: Integer Differentiation Destroys Memory

To understand what fractional differentiation solves, we need to see what integer differentiation destroys. Consider a price series $P_t$. We can represent any differentiation operation using the backshift operator $B$, defined by $B^k X_t = X_{t-k}$. First-order differencing is:

$$(1 - B)\,P_t = P_t - P_{t-1}$$

When $P_t$ is a log-price, this is the familiar return series. The operation uses a weight vector ω = {1, −1, 0, 0, …}: it looks at exactly one previous value and discards everything else. All the information encoded in $P_{t-2}, P_{t-3}, \dots, P_1$ is gone.
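To make the weight-vector view concrete, here is a minimal sketch that applies ω = {1, −1} as a dot product and checks it against NumPy's built-in first difference. The price values are made up for illustration and do not come from the article's data.

```python
import numpy as np

# Hypothetical prices, chosen only for the example.
prices = np.array([100.0, 101.0, 99.5, 102.0, 103.5])
log_p = np.log(prices)

# First-order differencing as a dot product with weights {1, -1}:
# the newest observation gets +1, the one before it gets -1.
omega = np.array([1.0, -1.0])
returns = np.array(
    [omega @ log_p[t - 1:t + 1][::-1] for t in range(1, len(log_p))]
)

# Nothing older than one lag enters the result.
assert np.allclose(returns, np.diff(log_p))
```

Every weight beyond lag 1 is zero, which is exactly the memory cut-off described above.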

Zero-order differentiation — using the raw price — preserves all memory, but prices are non-stationary. Their mean and variance drift over time. An ML algorithm trained on features at price level 100 cannot generalize to features at price level 200 because the mapping between feature space and label space has shifted.

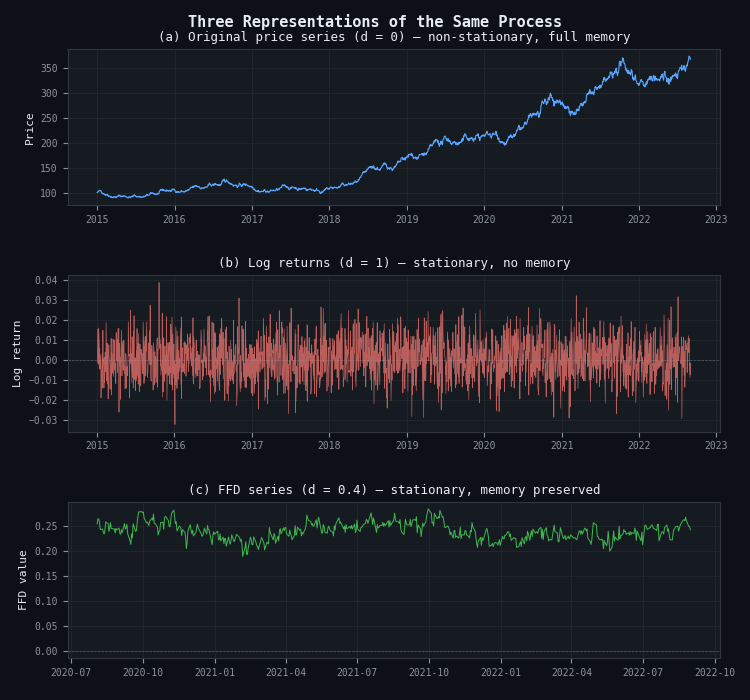

The visual impact is striking. Panel (a) of Figure 1 shows a synthetic price series generated from geometric Brownian motion. It is non-stationary: the ADF test cannot reject the unit root null hypothesis. Panel (b) shows log returns (d = 1): stationary, but each return is an independent observation with no connection to the price level from which it originated. Panel (c) shows the FFD-transformed series with d = 0.4: stationary, yet the broad shape and level information of the original series are visibly preserved.

Figure 1. Three representations of the same underlying process

- Panel (a): Raw prices (d = 0), non-stationary with full memory. The series drifts freely — its mean and variance shift over time, violating ML stationarity assumptions.

- Panel (b): Log returns (d = 1), stationary with zero memory. Each return depends on exactly one predecessor; all price-level information is erased.

- Panel (c): FFD series (d = 0.4), stationary with memory preserved. The broad structure of the original series is visible, yet the process fluctuates around a stable mean.

The Stationarity vs. Memory Dilemma

The core tension is simple: stationarity and memory pull in opposite directions. Stationarity is a necessary condition for supervised learning — we need to map unseen observations to labeled training examples, and that mapping only works when the feature distribution is stable. But stationarity alone is not sufficient for prediction. A white noise series is perfectly stationary but contains zero signal. Predictive power comes from memory — the persistence of past information in current observations.

Consider a mean-reverting asset. Its price encodes the fact that it has drifted above or below its long-term equilibrium. That distance-from-equilibrium is the signal a mean-reversion model exploits. When we compute returns, we destroy precisely that information. The return tells us how much the price moved between two adjacent bars, but not where the price is relative to equilibrium.

This is not just a feature-engineering oversight — it is a fundamental information-theoretic loss. López de Prado frames it as a dilemma between two traditional econometric paradigms:

- Box-Jenkins: work with returns (stationary, memory-less).

- Engle-Granger: work with prices via cointegration (preserves memory, but cointegrating vectors are unstable and the number of cointegrated variables is limited).

Fractional differentiation opens a third path. Between the extremes of d = 0 (prices) and d = 1 (returns), there exists a continuum of transformations that trade off stationarity and memory at a granularity controlled by the real-valued parameter d. The question becomes: what is the minimum d that achieves stationarity? That minimum d* is the optimal operating point — any differentiation beyond it removes memory unnecessarily.

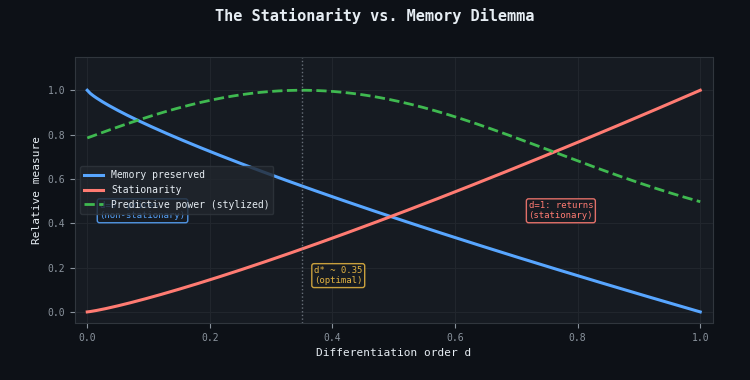

Figure 2. The stationarity vs. memory dilemma

- Blue curve: Memory preserved — decreases monotonically as d increases. At d = 0 (raw prices), all memory is intact. At d = 1 (returns), memory is effectively zero.

- Red curve: Stationarity — increases monotonically with d. At d = 0, the series is non-stationary. At d = 1, full stationarity is achieved.

- Green dashed: Stylized predictive power — peaks near the optimal d* ≈ 0.35, where stationarity is satisfied and maximum memory is retained.

The Mathematics of Fractional Differentiation

Standard integer differentiation uses the binomial expansion $(1 - B)^n$, where n is a positive integer. For n = 1, $(1 - B)^1 = 1 - B$, giving $X_t - X_{t-1}$. For n = 2, $(1 - B)^2 = 1 - 2B + B^2$, giving $X_t - 2X_{t-1} + X_{t-2}$. In every integer case the binomial coefficient is zero for k > n, which truncates the weight vector and erases memory beyond lag n.

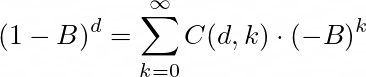

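Fractional differentiation generalizes the exponent n to a real number d using the binomial series expansion. For any real d:

$$(1 - B)^d = \sum_{k=0}^{\infty} \binom{d}{k} (-B)^k$$

where the generalized binomial coefficient is:

$$\binom{d}{k} = \frac{\prod_{i=0}^{k-1} (d - i)}{k!}$$

The fractionally differentiated value is then a dot product between a weight vector ω and the historical values X:

$$\tilde{X}_t = \sum_{k=0}^{\infty} \omega_k X_{t-k}, \qquad \omega_k = (-1)^k \frac{\prod_{i=0}^{k-1} (d - i)}{k!}$$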

The weight sequence is ω = {1, −d, d(d−1)/2!, −d(d−1)(d−2)/3!, …}. When d is a positive integer, the product ∏(d−i) becomes zero for k > d, and all subsequent weights vanish — memory is cut off. When d is a positive non-integer (say 0.4), the product never becomes zero, and the weights form an infinite series that decays toward zero asymptotically. This is the mechanism by which fractional differentiation preserves memory: old observations receive small but nonzero weights.
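The contrast between integer and fractional d can be checked directly from the closed form. The sketch below is illustrative only; the helper names `binomial_weight` and `weight_sequence` are hypothetical and not part of afml.

```python
from math import factorial, prod


def binomial_weight(d: float, k: int) -> float:
    """omega_k = (-1)^k * prod_{i=0}^{k-1} (d - i) / k!"""
    return (-1) ** k * prod(d - i for i in range(k)) / factorial(k)


def weight_sequence(d: float, size: int) -> list[float]:
    """First `size` weights omega_0 .. omega_{size-1}."""
    return [binomial_weight(d, k) for k in range(size)]


integer_case = weight_sequence(1, 5)        # the factor (d - 1) zeroes every k >= 2
fractional_case = weight_sequence(0.4, 5)   # no factor (d - i) is ever zero

print(integer_case)
print(fractional_case)
```

For d = 1 every term with k ≥ 2 contains the factor (d − 1) = 0, while for d = 0.4 no factor vanishes, so the tail shrinks but never hits zero.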

Iterative Weight Estimation

Computing the generalized binomial coefficient from scratch for each k is unnecessary. The weights satisfy a recurrence relation that makes computation efficient. Starting from $\omega_0 = 1$:

$$\omega_k = -\omega_{k-1}\,\frac{d - k + 1}{k}, \qquad k = 1, 2, \dots$$

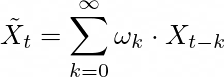

This single formula generates the entire weight sequence iteratively. Each weight depends only on the previous weight and the current index — a constant-time operation. Figure 3 shows these weight sequences for d ∈ [0, 1] and d ∈ [1, 2].
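For readers who want the missing step, the recurrence follows from dividing two consecutive closed-form weights. All but one factor cancel:

$$\frac{\omega_k}{\omega_{k-1}} = \frac{(-1)^k \prod_{i=0}^{k-1}(d-i)\,/\,k!}{(-1)^{k-1} \prod_{i=0}^{k-2}(d-i)\,/\,(k-1)!} = -\frac{d-(k-1)}{k} \quad\Longrightarrow\quad \omega_k = -\omega_{k-1}\,\frac{d-k+1}{k}$$

As a worked example, d = 0.4 gives:

$$\omega_0 = 1,\quad \omega_1 = -0.4,\quad \omega_2 = -(-0.4)\,\frac{0.4-1}{2} = -0.12,\quad \omega_3 = -(-0.12)\,\frac{0.4-2}{3} = -0.064,\quad \omega_4 = -(-0.064)\,\frac{0.4-3}{4} = -0.0416$$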

Figure 3. Fractional differentiation weights ωk for varying d

- Panel (a): For d ∈ (0, 1), all weights after $\omega_0 = 1$ are negative and lie within (−1, 0), decaying toward zero. At the endpoints, d = 0 leaves only $\omega_0 = 1$ nonzero (identity) and d = 1 gives ω = {1, −1, 0, 0, …} (standard returns).

- Panel (b): For d ∈ (1, 2), $\omega_1 < -1$ and the weights for k ≥ 2 are positive, so second-difference behavior emerges.
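The sign pattern in both panels can be verified numerically. This is a small pure-Python sketch of the recurrence, not the afml code; `weights_by_recurrence` is a hypothetical helper and the two d values are arbitrary picks from each range.

```python
def weights_by_recurrence(d: float, size: int) -> list[float]:
    """omega_0 = 1, omega_k = -omega_{k-1} * (d - k + 1) / k."""
    w = [1.0]
    for k in range(1, size):
        w.append(-w[-1] * (d - k + 1) / k)
    return w


w_frac = weights_by_recurrence(0.4, 50)   # a value with 0 < d < 1
w_over = weights_by_recurrence(1.5, 50)   # a value with 1 < d < 2

# 0 < d < 1: every weight after omega_0 lies strictly inside (-1, 0).
assert all(-1.0 < w < 0.0 for w in w_frac[1:])

# 1 < d < 2: omega_1 is below -1, and the weights from k = 2 onward are positive.
assert w_over[1] < -1.0 and all(w > 0.0 for w in w_over[2:])
```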

Weight Generation and Convergence

The recurrence relation is simple to implement. In Python, it maps directly to a Numba-compiled function for speed:

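    import numpy as np
    from numba import njit


    @njit(cache=True)
    def get_weights(d, size):
        """Expanding window weights (AFML Section 5.4.2, page 79)."""
        weights = [1.0]
        for k in range(1, size):
            # Recurrence: w_k = -w_{k-1} * (d - k + 1) / k
            weights_ = -weights[-1] * (d - k + 1) / k
            weights.append(weights_)
        # Reverse so the oldest lag comes first, then shape as a column vector
        weights = np.array(weights[::-1]).reshape(-1, 1)
        return weights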

Two things to notice. First, the weights are reversed before returning — the function stores them with the oldest weight first and the newest weight (ω0 = 1) last. This ordering aligns with the convention that prices[0] is the oldest and prices[-1] is the newest, so the dot product directly yields the fractionally differentiated value. Second, the @njit(cache=True) decorator compiles this to machine code via Numba, which matters because this function can be called thousands of times during hyperparameter search over d.

For the fixed-width window variant, we add a threshold-based termination criterion. The weights decay asymptotically toward zero; eventually, a weight becomes so small that including it adds negligible precision while expanding the required lookback window. The FFD weight generator stops when $|\omega_k|$ falls below a threshold τ (typically $10^{-5}$):

    @njit(cache=True)
    def get_weights_ffd(d, thres, lim):
        """Fixed-width window weights (AFML Section 5.4.2, page 83)."""
        weights = [1.0]
        k = 1
        ctr = 0
        while True:
            weights_ = -weights[-1] * (d - k + 1) / k
            if abs(weights_) < thres:
                break  # remaining weights are negligible
            weights.append(weights_)
            k += 1
            ctr += 1
            if ctr == lim - 1:
                break  # never use more weights than there are observations
        weights = np.array(weights[::-1]).reshape(-1, 1)
        return weights

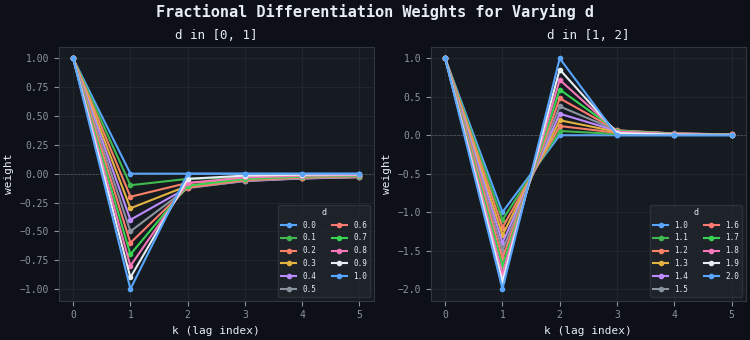

The number of weights generated — the window width — depends on both d and τ. Smaller d values produce weights that decay more slowly, requiring longer windows to reach the threshold. Figure 4 shows this relationship.

Figure 4. FFD weight vectors at $\tau = 10^{-5}$

- Lower d values: Produce longer weight vectors because the weights decay more slowly. At d = 0.2 and $\tau = 10^{-5}$, the window spans more than a thousand bars, so each FFD observation integrates a long history.

- Higher d values: Produce shorter vectors. At d = 1.0, the vector is simply {−1, 1} — standard returns with a window width of 1.
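The dependence of window width on d and τ can be explored without Numba. The sketch below counts how many weights survive the threshold; `ffd_window_width` and its `max_len` safety cap are hypothetical names introduced here, mirroring the stopping rule of `get_weights_ffd`.

```python
def ffd_window_width(d: float, thres: float = 1e-5, max_len: int = 1_000_000) -> int:
    """Number of weights kept before |omega_k| first drops below `thres`."""
    w, k = 1.0, 1
    while k < max_len:
        w = -w * (d - k + 1) / k      # recurrence for omega_k
        if abs(w) < thres:
            break
        k += 1
    return k                           # omega_0 .. omega_{k-1} are kept


for d in (0.2, 0.4, 0.6, 0.8, 1.0):
    print(f"d = {d:.1f}: {ffd_window_width(d)} weights")
```

Running it with a looser threshold such as `thres=1e-3` shows how strongly τ drives the lookback that a given d requires.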

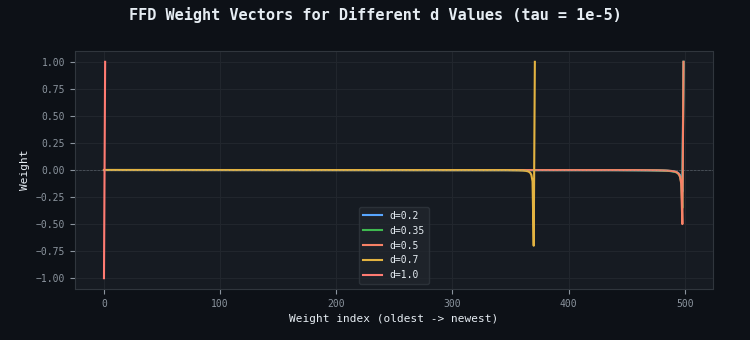

Figure 5. FFD window width and weight convergence

- Panel (a): Window width (number of weights) as a function of d. Lower d values require proportionally more historical data to fill the lookback.

- Panel (b): Cumulative weight magnitude for d = 0.4. The weights are front-loaded: roughly 90% of the total absolute weight is captured within the first ~30 lags. The remaining weights contribute diminishing information but are retained for precision.
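How quickly the cumulative weight builds up can be computed rather than read off the plot. This is a rough sketch: it assumes "total weight" means the sum of absolute weights (including $\omega_0$) of the truncated vector at τ = 10⁻⁵, and the helper names are hypothetical.

```python
import numpy as np


def ffd_weights(d: float, thres: float = 1e-5) -> np.ndarray:
    """Weights omega_0, omega_1, ... kept while |omega_k| >= thres."""
    w, k = [1.0], 1
    while True:
        nxt = -w[-1] * (d - k + 1) / k
        if abs(nxt) < thres:
            break
        w.append(nxt)
        k += 1
    return np.array(w)


w = ffd_weights(0.4)
# Share of the total absolute weight held by lags 0..k, for every k.
cum_share = np.cumsum(np.abs(w)) / np.abs(w).sum()


def lags_needed(share: float) -> int:
    """Smallest lag k such that weights 0..k hold at least `share` of the total."""
    return int(np.searchsorted(cum_share, share))


share_at_30 = float(cum_share[30])
print(f"share within 30 lags: {share_at_30:.3f}")
print(f"lags needed for 99%:  {lags_needed(0.99)}")
```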

Two Window Strategies: Expanding vs. Fixed-Width (FFD)

There are two ways to apply the weight vector to a finite time series. The choice is significant for both statistical properties and computational cost.

Expanding Window

The expanding window approach uses all available history for each point. For the last observation $\tilde{X}_T$, it uses weights $\{\omega_k\}$ for k = 0, …, T−1. For $\tilde{X}_{T-l}$, it uses k = 0, …, T−l−1. Early observations therefore use fewer weights than later ones, creating lookback asymmetry.

The practical consequence is negative drift. As the window expands, the growing number of negative weights (recall that all weights after ω0 are negative for d ∈ (0,1)) accumulates additional negative contributions. The fractionally differentiated series drifts downward over time; not because the underlying price is trending down, but as an artifact of the expanding window.

López de Prado mitigates this by defining a weight-loss threshold τ. For each observation, the relative weight loss is computed as the fraction of total weight magnitude that falls outside the available window. Observations where this loss exceeds τ are discarded. This improves the situation but does not eliminate the drift entirely, because observations still use windows of different lengths.
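The weight-loss rule can be sketched in plain NumPy. This is an illustration in the spirit of the description above, not the afml or AFML code: the function names are hypothetical, and the relative loss is measured against the total absolute weight available for the finite series.

```python
import numpy as np


def expanding_weights(d: float, size: int) -> np.ndarray:
    """omega_0 .. omega_{size-1} via the recurrence (newest lag first)."""
    w = np.empty(size)
    w[0] = 1.0
    for k in range(1, size):
        w[k] = -w[k - 1] * (d - k + 1) / k
    return w


def frac_diff_expanding(x: np.ndarray, d: float, thres: float = 0.01) -> np.ndarray:
    """Expanding-window fractional difference; NaN where weight loss > thres."""
    n = len(x)
    w = expanding_weights(d, n)
    # Relative weight loss at position t: share of |omega| beyond lag t.
    cum = np.cumsum(np.abs(w))
    loss = 1.0 - cum / cum[-1]
    out = np.full(n, np.nan)
    for t in range(n):
        if loss[t] > thres:
            continue                     # too much weight falls outside the window
        out[t] = w[:t + 1] @ x[t::-1]    # sum of omega_k * x[t-k] for k = 0..t
    return out


# Sanity check: d = 1 must reproduce ordinary first differences.
x = np.array([1.0, 3.0, 6.0, 10.0, 15.0])
print(frac_diff_expanding(x, d=1.0))
```

Note that each surviving observation still uses a different number of weights, which is the lookback asymmetry that the fixed-width variant removes.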

Fixed-Width Window (FFD)

The fixed-width window (FFD) approach uses the same weight vector for every observation. The weight sequence is truncated at the first index $l^*$ where $|\omega_{l^*+1}| < \tau$, and this identical truncated vector is applied across all $\tilde{X}_t$ for $t = l^*, \dots, T$. The first $l^*$ observations are discarded because insufficient history exists to fill the window.

This approach eliminates negative drift entirely. Every observation is computed on the same basis — the same number of weights, applied with the same lookback depth. The result is a driftless blend of level and noise. López de Prado recommends the FFD approach for practical applications, and it is the default in our afml implementation.

The trade-off is distributional: the FFD series is no longer Gaussian. Truncating the weight vector can introduce skewness and excess kurtosis. For ML applications, this is acceptable — most tree-based algorithms are distribution-agnostic — but it matters for any downstream analysis that assumes normality.
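Because the FFD weight vector is constant, the whole transform is a single convolution. The following plain-NumPy sketch is an assumption-laden stand-in for the Numba implementation presented later in the article; `ffd_weights` and `frac_diff_ffd_np` are hypothetical names, and no log transform is applied.

```python
import numpy as np


def ffd_weights(d: float, thres: float = 1e-5) -> np.ndarray:
    """Weights omega_0, omega_1, ... kept while |omega_k| >= thres."""
    w, k = [1.0], 1
    while True:
        nxt = -w[-1] * (d - k + 1) / k
        if abs(nxt) < thres:
            break
        w.append(nxt)
        k += 1
    return np.array(w)


def frac_diff_ffd_np(x: np.ndarray, d: float, thres: float = 1e-5) -> np.ndarray:
    """Fixed-width fractional difference of a 1-D array."""
    w = ffd_weights(d, thres)
    if len(x) < len(w):
        return np.empty(0)             # not enough history to fill one window
    # 'valid' convolution yields sum_k w[k] * x[t-k] for every t >= len(w) - 1.
    return np.convolve(x, w, mode="valid")


x = np.array([1.0, 3.0, 6.0, 10.0])
print(frac_diff_ffd_np(x, d=1.0))      # d = 1 reduces to first differences
```

Every output value uses exactly `len(w)` inputs, so the first `len(w) - 1` observations are dropped rather than computed on a shorter window.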

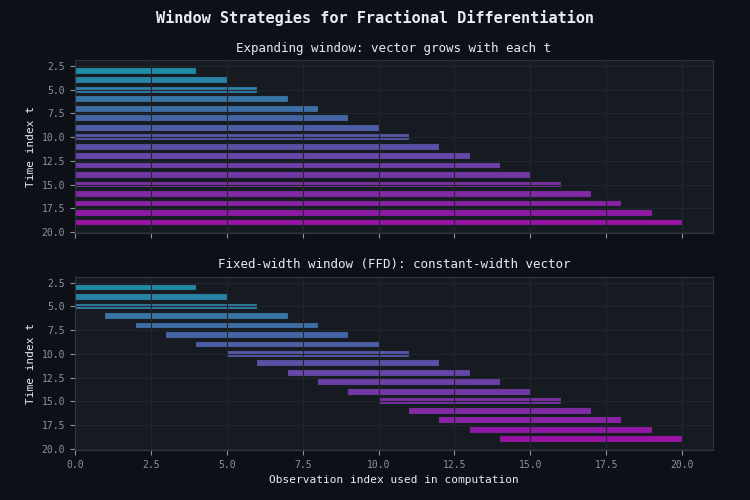

Figure 6. Window strategies for fractional differentiation

- Panel (a) — Expanding window: The weight vector grows with each observation. Later bars integrate more history than earlier ones, causing negative drift from the accumulation of negative weights.

- Panel (b) — Fixed-width window (FFD): Every observation uses the same window width. Memory depth is consistent across the entire series. The first few observations are discarded because the window cannot be filled.

Stationarity Testing with the Augmented Dickey-Fuller Test

Fractional differentiation is only useful if we can determine whether the result is stationary. The Augmented Dickey-Fuller (ADF) test is the standard tool. It tests the null hypothesis that a series contains a unit root (is non-stationary) against the alternative that it is stationary. The test returns two values we care about: the ADF statistic (more negative = more evidence for stationarity) and the p-value (the probability, under the null, of a statistic at least as negative as the one observed).
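To see where the statistic comes from, here is a stripped-down sketch of the underlying regression. It is the plain Dickey-Fuller version with a constant and no lagged-difference terms, so it is not a replacement for `statsmodels.tsa.stattools.adfuller`; `dickey_fuller_tstat` is a hypothetical helper and the data are simulated.

```python
import numpy as np


def dickey_fuller_tstat(x: np.ndarray) -> float:
    """t-statistic of gamma in  dx_t = alpha + gamma * x_{t-1} + e_t."""
    x = np.asarray(x, dtype=float)
    dx = np.diff(x)
    X = np.column_stack([np.ones(len(dx)), x[:-1]])   # constant + lagged level
    beta, *_ = np.linalg.lstsq(X, dx, rcond=None)
    resid = dx - X @ beta
    sigma2 = resid @ resid / (len(dx) - X.shape[1])   # residual variance
    cov = sigma2 * np.linalg.inv(X.T @ X)             # OLS covariance matrix
    return float(beta[1] / np.sqrt(cov[1, 1]))


rng = np.random.default_rng(0)
noise = rng.standard_normal(1000)

stat_white_noise = dickey_fuller_tstat(noise)             # stationary input
stat_random_walk = dickey_fuller_tstat(np.cumsum(noise))  # unit-root input
print(stat_white_noise, stat_random_walk)
```

The statistic must be compared with Dickey-Fuller critical values rather than the usual t-table, which is why the library call is preferable in practice.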

At the 5% level, the series is considered stationary if (1) the ADF statistic is below the 5% critical value and (2) the p-value is below 0.05. The afml library wraps both checks into a single function:

    import numpy as np
    import pandas as pd
    from statsmodels.tsa.stattools import adfuller


    def adf_data(df1, df2, d=0, out_df=None, alpha=0.05):
        """ADF statistics and correlation between original and differenced series."""
        corr = np.corrcoef(df1.loc[df2.index], df2)[0, 1]
        adf = adfuller(df2, maxlag=1, regression="c", autolag=None)
        tc_col = f"{1 - alpha:.0%} conf"

        # Build results row
        columns = ["adfStat", "pVal", "lags", "nObs", "window", tc_col, "corr", "stationary"]
        vals = (
            list(adf[:4])
            + [df1.shape[0] - adf[3]]
            + [adf[4][f"{alpha:.0%}"]]
            + [corr]
            + [False]
        )

        if out_df is None or out_df.empty:
            out_df = pd.DataFrame.from_dict(
                {d: {k: v for k, v in zip(columns, vals)}}, orient="index"
            )
        else:
            out_df.loc[d, columns] = vals

        # Stationary only if both the statistic and the p-value pass
        stationary = (out_df.loc[d, "adfStat"] < out_df.loc[d, tc_col]) and (
            out_df.loc[d, "pVal"] < alpha
        )
        out_df.loc[d, "stationary"] = stationary
        out_df.index.name = "d"
        return out_df

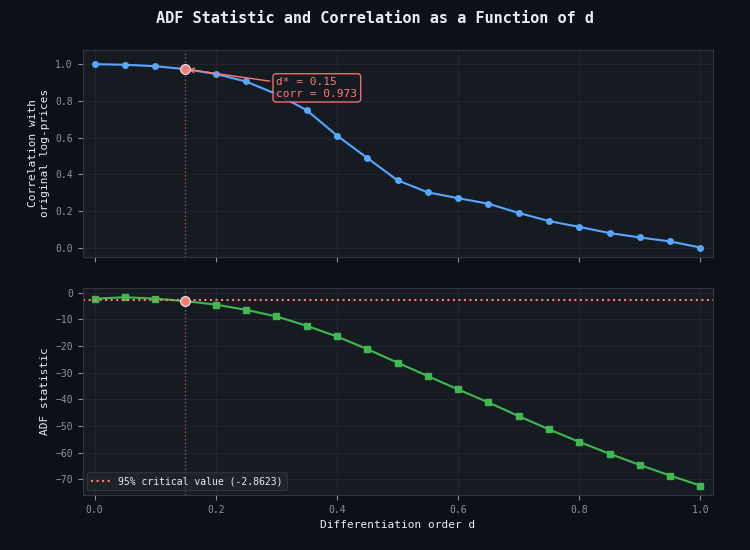

The function also computes the correlation between the original series and the fractionally differentiated series. This correlation measures memory preservation — the closer to 1.0, the more of the original series' structure survives the transformation. Figure 7 shows both quantities plotted against d.

Figure 7. ADF statistic and correlation as a function of d

- Blue (upper panel): Correlation between the FFD series and the original log-prices. Starts near 1.0 at low d and drops toward zero at d = 1 — quantifying memory loss.

- Green (lower panel): ADF statistic. Becomes increasingly negative (more stationary) as d increases. The red dotted line marks the 95% critical value (−2.8623).

- Crossing point: The ADF statistic crosses the critical value near d* ≈ 0.15, where correlation with the original series remains high.

López de Prado reports that across 87 of the most liquid futures worldwide, stationarity was achieved with d < 0.6 in every case, confirming that standard integer differentiation (d = 1) is systematic over-differentiation.

Finding the Optimal d*

If the ADF statistic decreases monotonically in d (more differentiation makes the series more stationary, which generally holds in practice but is an assumption rather than a guarantee), the optimal d* is the smallest value that crosses the stationarity threshold. This is a root-finding problem on a monotone function, and binary search (bisection) is the natural algorithm.

The search starts with the interval [0, max_d] (default: [0, 1]). At each iteration, it computes the FFD series at the midpoint, runs the ADF test, and narrows the interval: if the midpoint is stationary, move the upper bound down (try less differentiation); if non-stationary, move the lower bound up (need more differentiation). Convergence to tolerance tol requires $\lceil \log_2(\text{max\_d} / \text{tol}) \rceil$ iterations, which is about 10 for tol = $10^{-3}$.

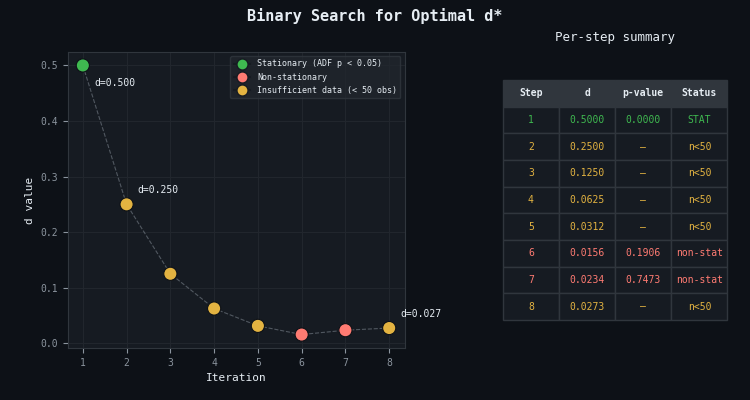

Figure 8. Binary search for optimal d*

- Green markers: Values of d where the ADF test passes (stationary).

- Red markers: Values of d where the series remains non-stationary.

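- Convergence: The interval halves at each step. By step 7 its width is $2^{-7} \approx 0.008$ and the search in the figure terminates, which implies a tolerance near $10^{-2}$; at the default tol = $10^{-3}$, about 10 steps would be needed.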
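The halving logic is independent of the ADF machinery, so it can be sketched with a stand-in predicate. In the block below, `bisect_min_d` is a hypothetical helper and the lambda pretends that the series becomes stationary at d = 0.35; a real predicate would run the transform and the test.

```python
from typing import Callable, Tuple


def bisect_min_d(
    is_stationary_at: Callable[[float], bool],
    max_d: float = 1.0,
    tol: float = 1e-3,
    max_iter: int = 20,
) -> Tuple[float, int]:
    """Smallest passing d (within tol), assuming the predicate is monotone in d
    and true at max_d. Returns (d, number of steps used)."""
    low, high = 0.0, max_d
    steps = 0
    for steps in range(1, max_iter + 1):
        mid = (low + high) / 2
        if is_stationary_at(mid):
            high = mid          # passes: try less differentiation
        else:
            low = mid           # fails: need more differentiation
        if high - low < tol:
            break
    return high, steps


# Stand-in predicate: pretend stationarity is reached from d = 0.35 upward.
d_star, n_steps = bisect_min_d(lambda d: d >= 0.35)
print(d_star, n_steps)
```

The step count depends only on `max_d` and `tol`, not on where the threshold lies.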

The afml implementation adds two optimizations: (1) caching of previously evaluated d values, and (2) a minimum sample-size check (default: 100 observations). If the FFD series has fewer than 100 non-NaN values, the search moves to a smaller d.

    def fracdiff_optimal(
        series,
        fixed_width=True,
        alpha=0.05,
        max_d=1.0,
        tol=1e-3,
        use_log=True,
        verbose=False,
    ):
        """
        Binary search for minimum d that achieves stationarity.
        Returns (ffd_series, d_optimal, adf_dataframe).
        """
        low, high = 0.0, max_d
        best_d = None
        best_series = None  # stays None if no tested d passes the ADF test
        diff_adf = None
        frac_diff_cache = {}

        def frac_diff_cached(series, d, use_log):
            # Compute only on a cache miss; dict.setdefault would evaluate
            # its default argument (the full transform) on every call.
            cache_key = (d, use_log)
            if cache_key not in frac_diff_cache:
                func = frac_diff_ffd if fixed_width else frac_diff
                frac_diff_cache[cache_key] = func(series, d, use_log=use_log)
            return frac_diff_cache[cache_key]

        for i in range(20):  # max 20 iterations
            mid = (low + high) / 2
            diff = frac_diff_cached(series, mid, use_log)

            if len(diff) < 100:  # too few observations for a reliable ADF test
                high = mid
                continue

            diff_adf = adf_data(series, diff, d=mid, out_df=diff_adf, alpha=alpha)

            if diff_adf.loc[mid, "stationary"]:
                best_series = diff.copy()
                best_d = mid
                high = mid
            else:
                low = mid

            if high - low < tol:
                break

        d = round(best_d, 4) if best_d is not None else max_d
        return best_series, d, diff_adf

The use_log Parameter

An important detail: the use_log parameter controls whether a log transformation is applied before differencing. For price series, this should be True — log transformation converts the multiplicative dynamics of prices (returns are ratios) into additive dynamics (log-returns are differences), making the fractional weights operate in the correct domain. For series that are already additive (returns, spreads, log-prices), set use_log=False to avoid a double transformation.
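A two-line experiment shows why the log matters for prices. The numbers are invented for illustration: the same percentage moves are applied at two price levels.

```python
import numpy as np

prices = np.array([100.0, 110.0, 121.0])   # +10% per step (hypothetical)
scaled = 2.0 * prices                       # same asset quoted at twice the level

raw_diff = np.diff(prices)                  # depends on the price level
raw_diff_scaled = np.diff(scaled)

log_diff = np.diff(np.log(prices))          # depends only on the ratios
log_diff_scaled = np.diff(np.log(scaled))

print(raw_diff, raw_diff_scaled)
print(log_diff, log_diff_scaled)
```

Differences of raw prices double when the level doubles, while differences of log-prices are unchanged, which is the additive behavior the fractional weights rely on.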

The afml Implementation

The production implementation in afml.features.fracdiff uses Numba-compiled inner loops for both window strategies. The outer logic handles pandas indexing, column iteration for DataFrames, NaN propagation, and forward-filling. The inner loop — the dot product between weights and values — runs as compiled machine code.

Expanding Window: frac_diff

The expanding window function computes each observation using all available history up to that point. The Numba core function runs in parallel across observations:

    from numba import njit, prange


    @njit(parallel=True, cache=True)
    def _frac_diff_numba_core(series_values, weights, skip):
        """Numba-optimized core for expanding-window fractional differencing."""
        N = len(series_values)
        output_values = np.empty(N, dtype=np.float64)
        output_values[:] = np.nan  # the first `skip` entries remain NaN

        for iloc in prange(skip, N):
            # Use the last (iloc + 1) weights against all history up to iloc
            output_values[iloc] = np.dot(
                weights[-(iloc + 1):, :].T,
                series_values[:iloc + 1].reshape(-1, 1),
            )[0, 0]

        return output_values

The prange directive tells Numba to distribute iterations across CPU cores. Each iteration is independent — the dot product for observation t does not depend on the result for observation t−1 — so parallelization is safe. The skip parameter controls how many initial observations are left as NaN based on the weight-loss threshold.

Fixed-Width Window: frac_diff_ffd

The FFD function applies the same truncated weight vector to every observation. Because the weight vector is constant, the dot product for each observation is a fixed-size operation:

    @njit(parallel=True, cache=True)
    def _frac_diff_ffd_numba_core(series_values, weights, skip):
        """Numba-optimized core for fixed-width window fractional differencing."""
        N = len(series_values)
        weights = weights.T  # transpose once, outside the loop
        arr = np.empty(N, dtype=np.float64)

        for i in prange(skip, N):
            # Same-length slice for every i: the last (skip + 1) values
            arr[i] = np.dot(weights, series_values[i - skip:i + 1])[0, 0]

        return arr[skip:]  # the first `skip` entries are never filled

This function is both simpler and faster than the expanding-window version. The weight vector is transposed once before the loop (avoiding repeated transposition inside), and the slice series_values[i - skip:i + 1] always has the same length. For a typical window width of ~100 and a series of ~10,000 bars, this completes in under a millisecond once the function has been compiled.

The Outer Wrapper

The public frac_diff_ffd function handles preprocessing — log transformation, NaN propagation, DataFrame/Series dispatch — before calling the Numba core:

    def frac_diff_ffd(series, d, thres=1e-5, use_log=True):
        """Fixed-width window fractional differentiation (AFML Section 5.5, page 83)."""
        if isinstance(series, pd.Series):
            series = series.copy().to_frame()

        if use_log:
            # Clip first so zero or negative values cannot yield -inf or NaN
            series_processed = np.log(series.clip(lower=1e-8))
        else:
            series_processed = series.copy()
        series_processed = series_processed.astype("float64")

        weights = get_weights_ffd(d, thres, series_processed.shape[0])
        width = len(weights) - 1

        df = {}
        for name in series_processed.columns:
            series_f = series_processed[[name]].ffill().dropna()
            ffd = _frac_diff_ffd_numba_core(series_f.values, weights, width)
            df[name] = pd.Series(ffd, index=series_f.index[width:])
        df = pd.concat(df, axis=1)

        if len(series_processed.columns) == 1:
            return df.squeeze()
        return df

The clip(lower=1e-8) before the log ensures numerical stability: if any price is zero or negative (possible with spreads or synthetic series), the log does not produce −∞ or NaN. The ffill().dropna() chain fills gaps before differentiation, then drops any leading NaN rows, so the Numba core receives a clean contiguous array.
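The effect of the clip is easy to reproduce. The sketch below uses NumPy's `np.clip` as a stand-in for the pandas `clip(lower=...)` call in the wrapper, on made-up spread-like values.

```python
import numpy as np

raw = np.array([1.5, 0.0, -0.2, 2.0])   # hypothetical values, including 0 and a negative

# Without the clip: log(0) is -inf and log of a negative number is NaN.
with np.errstate(divide="ignore", invalid="ignore"):
    unsafe = np.log(raw)

# With the clip: every entry is finite.
safe = np.log(np.clip(raw, 1e-8, None))

print(unsafe)
print(safe)
```

Clipped entries all map to log(1e-8) ≈ −18.4, which is finite but a large outlier. For series that legitimately cross zero, `use_log=False` is therefore likely the better choice.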

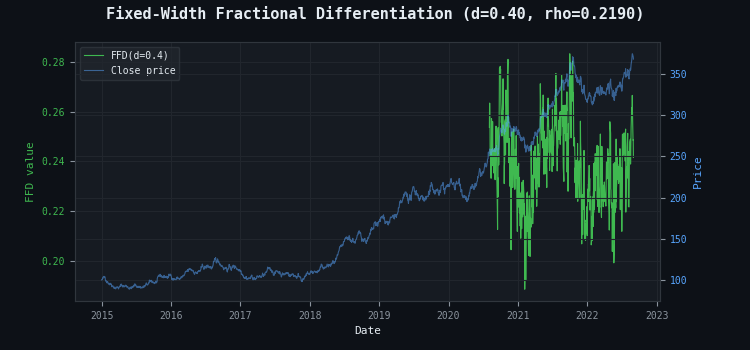

Figure 9. Fixed-width fractional differentiation overlaid on prices

- Green (left axis): The FFD series (d = 0.40) — stationary, oscillating around a stable mean.

- Blue (right axis): The original price series. Trends, regime changes, and relative levels are visibly tracked by the FFD series.

- Correlation ρ: Quantifies how much of the original structure survives the transformation.

Pipeline Integration

In the afml pipeline, fractional differentiation sits at the feature engineering stage — after raw features are computed and before they are passed to the model. The typical workflow is:

- Compute raw features from bar data (close price, VWAP, volume, microstructural features, etc.).

- For each feature that is non-stationary (prices, cumulative volumes, running sums), apply fracdiff_optimal to find the minimum d* and generate the FFD-transformed feature.

- Verify stationarity of the full feature matrix using is_stationary.

- Pass the stationary, memory-preserving features to the model training pipeline.

The is_stationary utility iterates over all columns of a DataFrame and reports which ones fail the ADF test:

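    import pandas as pd
    from statsmodels.tsa.stattools import adfuller


    def is_stationary(df: pd.DataFrame, alpha: float = 0.05, verbose: bool = True):
        """Return the names of columns that fail the ADF test at level `alpha`."""
        not_stationary = []
        for col in df:
            adf = adfuller(df[col], maxlag=1, regression="c", autolag=None)
            # Stationary only if both the statistic and the p-value pass
            if not (adf[0] < adf[4][f"{alpha:.0%}"] and adf[1] < alpha):
                not_stationary.append(col)
        return not_stationary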

López de Prado's Recommended Workflow

For practitioners starting fresh, López de Prado recommends a four-step procedure that guarantees the FFD approach is applicable regardless of the feature's starting form:

- Compute the cumulative sum of the time series. This ensures that some order of differentiation is needed, even if the original series is already stationary — the cumsum introduces a unit root.

- Compute FFD(d) for various d ∈ [0, 1].

- Determine the minimum d such that the ADF p-value falls below 5%.

- Use the FFD(d) series as the predictive feature.

This procedure is automated by fracdiff_optimal. The binary search replaces the manual grid in step 2, and the function returns both the optimal series and the full ADF results table for inspection.
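For comparison, the manual grid version of the four steps can be sketched in plain NumPy. Everything here is a simplified assumption: the helper names are hypothetical, the stationarity check is a plain Dickey-Fuller t-statistic compared with an approximate 5% critical value of −2.86 instead of an ADF p-value, the threshold is loosened to 10⁻⁴ to keep windows short, and the input is simulated noise.

```python
import numpy as np


def ffd(x: np.ndarray, d: float, thres: float = 1e-4) -> np.ndarray:
    """Fixed-width fractional difference via a 'valid' convolution."""
    w, k = [1.0], 1
    while True:
        nxt = -w[-1] * (d - k + 1) / k
        if abs(nxt) < thres:
            break
        w.append(nxt)
        k += 1
    w = np.array(w)
    return np.convolve(x, w, mode="valid") if len(x) >= len(w) else np.empty(0)


def df_tstat(x: np.ndarray) -> float:
    """Dickey-Fuller t-statistic with a constant and no lag terms."""
    dx = np.diff(x)
    X = np.column_stack([np.ones(len(dx)), x[:-1]])
    beta, *_ = np.linalg.lstsq(X, dx, rcond=None)
    resid = dx - X @ beta
    sigma2 = resid @ resid / (len(dx) - 2)
    return float(beta[1] / np.sqrt(sigma2 * np.linalg.inv(X.T @ X)[1, 1]))


def min_d_on_grid(series, grid, crit=-2.86, thres=1e-4, min_obs=100):
    cum = np.cumsum(series)                              # step 1: cumulative sum
    stats = {}
    for d in grid:                                       # step 2: FFD(d) over the grid
        y = ffd(cum, d, thres)
        if len(y) >= min_obs:
            stats[d] = df_tstat(y)
    passing = [d for d, s in stats.items() if s < crit]  # step 3: smallest passing d
    return (min(passing) if passing else None), stats


rng = np.random.default_rng(1)
series = rng.standard_normal(3000)                       # simulated stationary input
grid = [round(0.1 * i, 1) for i in range(11)]
d_star, stats = min_d_on_grid(series, grid)
feature = ffd(np.cumsum(series), d_star)                 # step 4: the model feature
print(d_star, len(feature))
```

A grid only resolves d* to the grid spacing, which is the gap the binary search closes.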

Caching Considerations

The d* value is stable for a given asset and timeframe — it changes only when the memory structure of the price process shifts materially (regime changes, structural breaks). In the afml caching system, d* can be persisted and reused across runs without rerunning the binary search, provided the underlying data has not changed significantly. When the cache stores intermediate FFD series, the auto_versioning=False flag should be set for fracdiff_optimal since its source code is stable and does not warrant automatic cache invalidation on every edit.

Conclusion

Fractional differentiation resolves the tension between stationarity and memory that plagues every financial ML pipeline. The fixed-width window (FFD) method is the production-grade variant: it applies a constant, precomputed weight vector across the entire series, producing a driftless, stationary output that preserves the maximum possible memory from the original price process. The binary search in fracdiff_optimal automates the selection of the minimum differentiation order d*, balancing statistical rigor (ADF test at a chosen significance level) with memory preservation (correlation with the original series).

Three properties make FFD particularly well-suited to production pipelines:

- The weight vector is fixed — it can be precomputed once and reused indefinitely for the same d and τ.

- Each observation depends only on a bounded lookback window, making it compatible with streaming architectures.

- The computation is a simple dot product — O(l*) per bar, trivially parallelizable, and hardware-friendly.

The follow-up article uses these properties to implement a live FFD engine in MQL5 for MetaTrader 5. The weight vector is stored as a static array in OnInit(). The lookback window is obtained via CopyClose() for exactly l*+1 bars. The dot product is implemented as a tight loop that runs in microseconds.
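The same bounded-lookback idea can be sketched in Python before moving to MQL5. The class below is a hypothetical illustration, not the follow-up article's engine: it precomputes the weights once, keeps only the last l*+1 values in a ring buffer, and emits one dot product per incoming bar. Inputs are assumed to be already log-transformed where appropriate.

```python
from collections import deque
from typing import Optional

import numpy as np


class StreamingFFD:
    """Bar-by-bar fixed-width fractional differencing (illustrative sketch)."""

    def __init__(self, d: float, thres: float = 1e-5):
        w, k = [1.0], 1
        while True:
            nxt = -w[-1] * (d - k + 1) / k
            if abs(nxt) < thres:
                break
            w.append(nxt)
            k += 1
        self.weights = np.array(w)            # omega_0 (newest) .. omega_l* (oldest)
        self.window = deque(maxlen=len(w))    # holds at most l* + 1 values

    def update(self, value: float) -> Optional[float]:
        """Feed one new observation; return the FFD value once the window is full."""
        self.window.appendleft(value)         # newest first; the oldest falls off
        if len(self.window) < len(self.weights):
            return None
        return float(self.weights @ np.fromiter(self.window, dtype=float))


stream = StreamingFFD(d=1.0)                  # d = 1 reduces to first differences
outputs = [stream.update(v) for v in [1.0, 3.0, 6.0, 10.0]]
print(outputs)
```

Memory use and per-bar cost are both fixed by the window length, regardless of how long the feed runs.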

References

- López de Prado, M. (2018). Advances in Financial Machine Learning. Wiley. Chapter 5: Fractionally Differentiated Features.

- Hosking, J. R. M. (1981). "Fractional differencing." Biometrika, 68(1), 165–176.

- Jensen, A. N. and M. Ø. Nielsen (2014). "A fast fractional difference algorithm." Journal of Time Series Analysis, 35(5), 428–436.

- Hamilton, J. D. (1994). Time Series Analysis. Princeton University Press.

- Alexander, C. (2001). Market Models. Wiley. Chapter 11.

Attached Files

| File | Description |
|---|---|
| fracdiff.py | Full fractional differentiation module: get_weights, get_weights_ffd, frac_diff, frac_diff_ffd, fracdiff_optimal, adf_data |
| stationary.py | Stationarity checking utility: is_stationary |

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
