MetaTrader 5 Machine Learning Blueprint (Part 15): How to Calibrate Profit-Taking and Stop-Loss Targets from Synthetic Data

Table of Contents

- Introduction

- The Calibration Trap

- The O-U Process: A Model for How Price Moves After Entry

- A Note on Units: Pips vs. Returns

- Directional vs. Mean-Reversion: The Single Decision That Changes Everything

- The Five-Step Algorithm

- Implementation

- Results: Reading the Sharpe Heatmaps

- From Optimal Rules to Practice

- Conclusion

- Attached Files

Introduction

You are operating a mechanical trading strategy (an EA) that ultimately needs two deployment numbers: a profit-taking (PT) and a stop-loss (SL) target. The common practice — running a historical grid search over PT/SL and choosing the pair with the highest in-sample Sharpe — is fast but statistically dangerous: it typically selects the pair that best exploited idiosyncratic noise in that particular historical path, not the pair that is optimal for the underlying return-generating process. To make the problem concrete, assume you have (a) a cost-calibrated series of per-trade P&L, (b) accounting and execution constraints (e.g., prop-firm daily loss ceiling, lot size, maximum concurrent positions), and (c) a decision to run triple-barrier exits in an EA.

This article implements an alternative: estimate a discrete Ornstein–Uhlenbeck (O‑U) process from your trade P&L, fix the forecast (the single modeling choice that defines whether the strategy is mean-reverting or directional), and then derive PT and SL by applying the Optimal Trading Rule (OTR) procedure to 100,000 synthetic paths. The SL dimension is capped by your risk ceiling (account size, lot, concurrent positions); the PT is driven by the forecast you choose (forecast = 0 for contra/mean‑reversion, forecast = average P&L for conservative directional, forecast = average winning trade for optimistic directional signals). The attached code implements the pipeline and uses Numba to make 100,000-path sweeps practical on a single machine.

This is the second article in a two-part series. The preceding article built a transaction cost model that derives a cost-calibrated per-trade P&L series and a labeling threshold (min_ret) from measured broker costs. If you have not read that article, read it first — the P&L series this article operates on should come from that pipeline, not from a hardcoded spread constant. The quality of the O‑U parameter estimates, and therefore the quality of the PT and SL derived here, depends directly on the accuracy of the cost deduction applied to each trade outcome. The E[P&L] and average winning trade values that become the Case B and Case C forecasts are only as reliable as the spread, slippage, and commission deductions behind them.

One distinction is worth establishing before going further. The min_ret threshold from the preceding article is a labeling parameter: it determines which historical trade outcomes are treated as genuine signal versus execution friction when constructing a training set. The optimal PT derived in this article is an execution parameter: it tells the EA where to place its profit order. The two are related — the execution PT should exceed min_ret, or you are deploying a rule that would have been labeled zero in the training data — but they are derived by different procedures and should not be confused with each other. This article handles the execution side; the preceding one handles the labeling side.

The Calibration Trap

To see why calibrating a stop-loss and profit target on historical data is risky, consider what a grid search actually optimizes. You have a sample of I historical opportunities. For each candidate pair (stop-loss, profit-target) in a grid of N combinations, you compute the Sharpe ratio of the strategy applied to those I opportunities and select the pair with the highest score. The selected pair is the one that exploited the particular sequence of prices in your sample most effectively. It is not necessarily the pair that is optimal for future sequences drawn from the same process.

López de Prado formalizes this as an overfitting definition: a selected rule R* is overfit if it is expected to underperform the median alternative rule out-of-sample. This is not a theoretical concern — it is the typical outcome when two free parameters are calibrated on a finite sample. The profit target and stop-loss can each target specific outlier observations that will not repeat. The more combinations you test, the more likely you are to find one that fits the noise.

The alternative López de Prado proposes in Advances in Financial Machine Learning (AFML, referred to below as "the book") is to separate the calibration from the historical path entirely. Instead of asking which (PT, SL) worked best in-sample, ask which (PT, SL) is optimal for the estimated process. That question can be answered without a historical simulation, and its answer cannot latch onto individual outliers in the sample because it is derived from a handful of estimated process parameters rather than from trade-by-trade outcomes.
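The selection effect described above can be reproduced in a few lines. This is a minimal sketch under a deliberately extreme assumption: every candidate rule produces pure zero-mean noise, so no rule has any edge. The grid size, the trade count, and the independence between rules are illustrative choices (real PT/SL rules applied to the same trades are correlated), not values from the article.

```python
import numpy as np

rng = np.random.default_rng(42)
n_rules, n_trades = 400, 250   # e.g. a 20x20 grid and 250 historical trades (assumed)

# Each row is the per-trade P&L of one candidate rule: pure noise, no edge.
in_sample = rng.standard_normal((n_rules, n_trades))
out_sample = rng.standard_normal((n_rules, n_trades))

def sharpe(pnl):
    """Per-trade Sharpe ratio along the last axis."""
    return pnl.mean(axis=-1) / pnl.std(axis=-1, ddof=1)

sr_in = sharpe(in_sample)
best = int(np.argmax(sr_in))   # the rule a historical grid search would select

print(f"best in-sample Sharpe       : {sr_in[best]:+.3f}")
print(f"same rule, fresh sample     : {sharpe(out_sample[best]):+.3f}")
print(f"median in-sample Sharpe     : {np.median(sr_in):+.3f}")
```

The winning rule shows a clearly positive in-sample Sharpe even though its true Sharpe is zero by construction; on a fresh sample it falls back toward zero.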

The O-U Process: A Model for How Price Moves After Entry

The mathematical engine of the framework is the discrete Ornstein-Uhlenbeck (O-U) process. The name sounds technical; the concept is not. Think of a rubber band attached at one end to a fixed anchor point and at the other end to a ball. The ball can be pulled away from the anchor by random noise at each step, but the rubber band always pulls it back. The strength of the rubber band controls how quickly the ball returns.

In the O-U process, the "ball" is the current price (or P&L), and the "anchor" is the forecast — the level the process is expected to converge to. The equation that governs each step is:

$$P_t = (1 - \varphi)\cdot\text{forecast} + \varphi\cdot P_{t-1} + \sigma\cdot\varepsilon_t$$

where $\varepsilon_t$ is a random shock drawn from a standard normal distribution, σ controls the size of each shock, and φ controls the strength of the pull toward the forecast. When φ is close to 1, the rubber band is weak: price drifts slowly toward the forecast over many steps. When φ is close to 0, the rubber band is strong: price snaps back to the forecast almost immediately after each deviation.

The quantity φ is directly related to a more intuitive measure called the half-life: the number of steps it takes for a deviation from the forecast to decay by half. A half-life of 25 steps means price takes 25 bars to close half the distance to its target. A half-life of 2 steps means it takes 2 bars. The relationship is $\varphi = 2^{-1/\mathrm{hl}}$.
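A short numerical check of the half-life relationship, assuming φ = 2^(−1/hl) and ignoring the noise term so that a deviation simply shrinks by a factor φ at every step. The function names are illustrative and do not come from the attached code.

```python
import math

def phi_from_half_life(hl: float) -> float:
    """Autoregressive coefficient implied by a half-life measured in steps."""
    return 2.0 ** (-1.0 / hl)

def half_life_from_phi(phi: float) -> float:
    """Inverse mapping; only meaningful for 0 < phi < 1."""
    return -math.log(2.0) / math.log(phi)

for hl in (2, 25):
    phi = phi_from_half_life(hl)
    remaining = phi ** hl   # share of an initial deviation left after hl noise-free steps
    print(f"hl={hl:>2}  phi={phi:.4f}  deviation left after hl steps={remaining:.2f}")
```

For both half-lives the remaining deviation is one half, which is the definition the text relies on.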

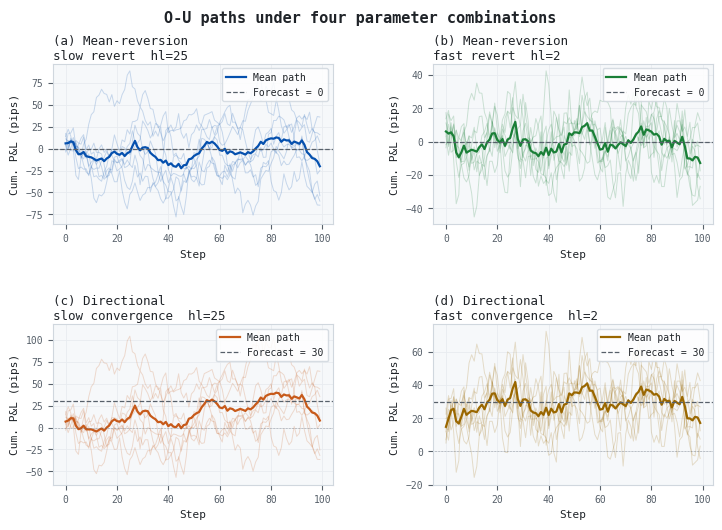

Figure 1. 4-panel illustration of O-U paths under different half-life and forecast values

- Panel (a): Mean-reversion with a slow half-life (25 steps). Paths wander widely around zero with no persistent direction.

- Panel (b): Mean-reversion with a fast half-life (2 steps). Paths snap back to zero within a few steps of each deviation.

- Panel (c): Directional with a slow half-life (25 steps). The mean path drifts gradually toward the forecast of 30 pips, with wide individual path spread.

- Panel (d): Directional with a fast half-life (2 steps). The mean path converges toward 30 pips quickly, but individual paths fluctuate around it with high noise.

Two things are worth noticing in Figure 1. First, the forecast entirely determines where the mean path goes. Without a forecast (panels a and b), the mean path stays near zero. With a positive forecast (panels c and d), it trends upward. Second, the half-life controls how tightly individual paths cluster around the mean. A short half-life does not produce a calmer strategy; it produces a strategy that oscillates rapidly around the forecast rather than drifting toward it smoothly.
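Both observations can be checked numerically. The sketch below simulates the four panel configurations from an entry P&L of zero; the shock size σ = 5 pips, the path count, and the function name are assumptions made for illustration, since the figure's own settings are not stated in the text.

```python
import numpy as np

def simulate_ou(forecast, half_life, sigma, n_paths=5_000, n_steps=100, seed=0):
    """Simulate P_t = (1 - phi) * forecast + phi * P_{t-1} + sigma * eps_t, with P_0 = 0."""
    phi = 2.0 ** (-1.0 / half_life)
    rng = np.random.default_rng(seed)
    shocks = rng.standard_normal((n_paths, n_steps))
    paths = np.empty((n_paths, n_steps))
    p = np.zeros(n_paths)
    for t in range(n_steps):            # vectorized across paths, sequential in time
        p = (1.0 - phi) * forecast + phi * p + sigma * shocks[:, t]
        paths[:, t] = p
    return paths

for forecast in (0.0, 30.0):
    for hl in (25, 2):
        end = simulate_ou(forecast, hl, sigma=5.0)[:, -1]
        print(f"forecast={forecast:>4.0f}  hl={hl:>2}  "
              f"mean at step 100={end.mean():6.2f}  spread (std)={end.std():5.2f}")
```

With σ held fixed, the mean at the final step is governed by the forecast, while the cross-sectional spread is governed by the half-life: the short half-life keeps paths in a narrower band around the mean.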

One parameter requires a careful reading of the estimation output. The σ displayed in the results — 12.4 pips in the sample run — is the residual standard deviation of the OLS regression, not the raw standard deviation of the P&L series. The estimation regresses each P&L observation on the previous one, subtracts the predictable AR(1) component, and computes the standard deviation of what remains. When there is meaningful autocorrelation in the P&L (i.e., φ is noticeably above zero), the residual is smaller than the raw series. A reader who checks pnl_series.std() will therefore obtain a larger number than the σ printed by the estimator. This is correct behavior — the mesh is built on the residual volatility of the fitted process, not the unconditional volatility of the raw series — but it is worth flagging to avoid confusion when inspecting intermediate outputs.
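The gap between the two standard deviations is easy to reproduce on a synthetic AR(1) series. In this sketch the true φ of 0.6 and the series length are arbitrary illustrative values, and the forecast is set to zero so no intercept is needed.

```python
import numpy as np

rng = np.random.default_rng(0)
phi_true, sigma_true, n = 0.6, 12.4, 50_000   # illustrative values

# Simulate an AR(1) series around a forecast of zero.
pnl = np.zeros(n)
shocks = rng.standard_normal(n)
for t in range(1, n):
    pnl[t] = phi_true * pnl[t - 1] + sigma_true * shocks[t]

x, y = pnl[:-1], pnl[1:]
phi_hat = np.cov(y, x)[0, 1] / np.var(x, ddof=1)   # OLS slope
resid_std = np.std(y - phi_hat * x)                # what the estimator reports as sigma
raw_std = pnl.std()                                # what pnl_series.std() would show

print(f"phi_hat={phi_hat:.3f}  residual std={resid_std:.2f}  raw std={raw_std:.2f}")
# For a stationary AR(1): raw std = sigma / sqrt(1 - phi^2)
print(f"theoretical raw std={sigma_true / np.sqrt(1 - phi_true**2):.2f}")
```

The residual standard deviation recovers the shock size, while the raw standard deviation is inflated by the factor 1/√(1 − φ²).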

A Note on Units: Pips vs. Returns

The framework above uses pips as the unit of P&L, because the final goal is a profit‑target and a stop‑loss the EA can place directly in the terminal. If your own P&L series is denominated in returns — for example, from the book's getEvents / getBins pipeline, or because you used the fractional-return output of min_ret_for_symbol() from the preceding article to build a consistent return-based label set — the O‑U machinery is identical. Only a linear re-scaling separates the two representations, and that scaling cancels exactly when the optimal (PT, SL) pair is converted back to price distances.

Converting a trade outcome

Let PIP be the pip size (0.0001 for most EURUSD quotes) and S the entry price. For a long trade, the two directions of conversion are:

$$\text{return} = \frac{\text{pnl}_{\text{pips}} \times \text{PIP}}{S}$$

$$\text{pnl}_{\text{pips}} = \frac{\text{return} \times S}{\text{PIP}}$$

How the O‑U parameters scale

Feeding return-denominated P&L into the O‑U estimator instead of pip-denominated P&L affects two of the three model quantities. The third — the mean-reversion speed — is unaffected.

- φ (mean‑reversion coefficient): unchanged. The OLS slope from regressing $P_t$ on $P_{t-1}$ is unit‑free: dividing both sides by the same constant leaves the slope, and therefore the half‑life, identical.

- σ (shock standard deviation): scales proportionally with the unit. At an entry of S = 1.0800, a pip-denominated σ of 12.4 pips becomes a return-denominated σ of 0.115%. The conversion follows the same ratio as the P&L itself:

$$\sigma_{\text{returns}} = \sigma_{\text{pips}} \times \frac{\text{PIP}}{S}$$

$$\sigma_{\text{pips}} = \sigma_{\text{returns}} \times \frac{S}{\text{PIP}}$$

- Forecast: likewise scales linearly. A forecast of +30 pips at an entry of 1.0800 is a return forecast of (30 × 0.0001) / 1.0800 ≈ 0.278%. The same proportionality holds for the three cases discussed in the next section.
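The conversions above amount to one multiplication each way. The helper names below are illustrative and not part of the attached code; the sketch uses the article's entry price of 1.0800 and a pip size of 0.0001.

```python
PIP = 0.0001   # pip size for EURUSD
S = 1.0800     # entry price used in the article's examples

def pips_to_return(pips: float, entry: float = S, pip: float = PIP) -> float:
    """Convert a pip distance into a fractional return at the given entry price."""
    return pips * pip / entry

def return_to_pips(ret: float, entry: float = S, pip: float = PIP) -> float:
    """Convert a fractional return back into a pip distance."""
    return ret * entry / pip

for label, pips in [("sigma", 12.4), ("forecast", 30.0), ("SL ceiling", 125.0)]:
    r = pips_to_return(pips)
    print(f"{label:<10} {pips:>6.1f} pips = {100 * r:.3f}%  "
          f"(round trip: {return_to_pips(r):.1f} pips)")
```

The same factor PIP / S applies to σ, to the forecast, and to every barrier, which is why φ and the half-life are untouched by the change of unit.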

Why the optimal rule is invariant to the choice of unit

Because the entire (PT, SL) mesh is constructed as multiples of σ, and because both the barriers and the simulated P&L are always expressed in the same unit, the Sharpe‑ratio heatmap is identical up to numerical rounding regardless of which unit is used. The optimal PT and SL expressed in return units will, when multiplied by S / PIP, reproduce the same pip values and therefore the same triple‑barrier parameters for the EA. The table below lists both representations of the five key quantities from the sample run.

| Quantity | In pips (S = 1.0800) | In returns (S = 1.0800) |
|---|---|---|
| Forecast (Case A) | 0 pips | 0.000% |
| Forecast (Case C) | +35.6 pips | +0.330% |
| σ (residual, OLS) | 12.4 pips | 0.115% |
| Optimal PT (Case A) | 51 pips | 0.472% |
| SL ceiling (prop firm) | 125 pips | 1.157% |

Table 1. Key quantities in pip and return units at an average entry price of 1.0800. σ is the OLS residual standard deviation, and the optimal PT comes from a sample run of the attached code.

The choice between pips and returns is therefore purely a matter of pipeline convenience. If you already have a triple‑barrier workflow that produces return labels (including one built around min_ret_for_symbol() from the preceding article, which operates entirely in fractional returns), feed those returns directly into the O‑U estimation and the mesh sweep, then multiply the resulting (PT*, SL*) by S / PIP to recover the pip distances. The choice does not affect the statistical validity of the OTR or the ranking of candidate rules; it only determines whether the intermediate outputs are expressed as percentages or pip figures.

Directional vs. Mean-Reversion: The Single Decision That Changes Everything

The O-U process is a general model. It can describe a mean-reverting market-making strategy, a directional momentum strategy, or a strategy that is expected to lose money. The forecast parameter is what distinguishes these three cases — and choosing it correctly is the single most important modeling decision in the entire framework. The book covers it in two paragraphs. It deserves considerably more.

What the forecast parameter represents

The forecast, written $E_0[P_T]$ in the book's notation (the expectation, formed at entry, of the price at exit time T; not to be confused with the profit target PT), is the price level that the strategy's signal predicts the market will reach before the position is closed. It is not a free parameter to be tuned. It is the statement you are making about your strategy's edge, expressed in price terms. Before fitting the O-U process to anything, you must answer one question: what does the signal predict will happen to price after entry? The answer to that question is the forecast.

All three forecast values below are computed from the post-cost P&L series — that is, after deducting spread, slippage, and commission from each trade outcome using the cost model from the preceding article. A forecast derived from a gross P&L series will be more optimistic than the realized distribution warrants, and the resulting PT will be set too wide.

Case A — Mean-reversion: forecast = 0

Set the forecast to zero when the strategy's signal predicts that price will return to roughly where it started. This is the correct specification for any counter-trend strategy: a market-making system that buys the bid expecting a bounce back to mid, a statistical arbitrage that buys a spread expecting it to converge, or a reversal strategy that buys an oversold reading expecting price to recover to the recent range midpoint.

With forecast = 0, the O-U process anchors at the entry price. Any excursion in either direction is modeled as noise around a zero expected gain. The framework then asks: given that the expected outcome is zero, which profit-target and stop-loss pair optimizes the risk-adjusted distribution of exit P&L? The answer depends entirely on the half-life and volatility — the noise structure, not the directional bias.

A common mistake is setting forecast = 0 for an RSI reversal strategy solely because RSI triggers at extremes. This conflates the signal's entry logic with the within-trade price dynamic. The RSI fires when the market is oversold; it does not predict that price will simply stay where it was at entry. If the signal has any genuine edge, price should move away from the entry level in the direction of the signal, and that expected movement is the forecast.

Case B — Directional realistic: forecast = E[P&L]

Set the forecast to the historical average post-cost P&L per trade when you believe the signal has modest or uncertain directional edge. This is the conservative directional specification. For an RSI strategy on EURUSD H1 that produced −3.3 pips per trade on average across the sample — after deducting a p95 spread of 1.2 pips and 0.4 pips of slippage on each trade — this means forecast = −3.3 pips. The O-U process then models a strategy that is slightly against you after costs, and the framework asks: given a headwind of 3.3 pips per trade, which (PT, SL) pair mitigates damage most effectively?

This case is appropriate when you are uncertain whether the signal has genuine edge, when the historical sample covers mixed market regimes, or when you want a conservative lower bound on the optimal rule parameters.

Case C — Directional optimistic: forecast = E[win]

Set the forecast to the average post-cost winning trade when you believe the signal fires correctly at the moments where a meaningful price move follows. For the RSI strategy, winning trades averaged +35.6 pips after costs. Setting forecast = +35.6 pips models a position that, when the signal fires correctly, converges toward that gain. The framework then optimizes the (PT, SL) pair for that convergence process, naturally producing a wider profit target.

This case is appropriate when the meta-labeling classifier has already filtered the signal to high-confidence events — the trades that actually reach the MetaLabeling Enhancer EA's execution threshold. For those filtered trades, the conditional expected post-cost gain is considerably higher than the raw average across all signals. Note that if the cost model changes — for example, because you re-run the MQL5 script after a broker change and the p95 spread has widened — the E[win] figure will shift and the Case C forecast must be recomputed before re-running the OTR search.

The forecast as a prior belief

The three cases are not interchangeable alternatives to the same problem. They are three different problem statements about three different strategy variants. Choosing between them requires stating clearly which variant the trading rule is being calibrated for. A rule calibrated on forecast = 0 will set a smaller profit target than one calibrated on forecast = +35.6, and will perform differently in every market condition. The framework does not make this choice for you — it enforces the consequences of the choice you make.

| Case | Forecast value (pips) | Appropriate when… | Optimal PT (pips) |
|---|---|---|---|
| A — Contra | 0 | Signal predicts reversion to entry; no assumed directional edge | 51 |
| B — Directional, realistic | −3.3 | Signal has uncertain or marginal edge; conservative specification | 45 |
| C — Directional, optimistic | +35.6 | Filtered high-confidence signals where average post-cost win is credible | 85 |

In all three cases, the stop-loss was capped at 125 pips by the prop-firm's daily loss ceiling for a $25,000 account trading 0.5 lot with up to two concurrent positions. The SL dimension is constrained by the prop-firm rule, not by the O-U process. The PT dimension is controlled by the forecast. This distinction matters practically: the SL is set by your risk management rules; the PT is set by your belief about the signal's edge.

The Five-Step Algorithm

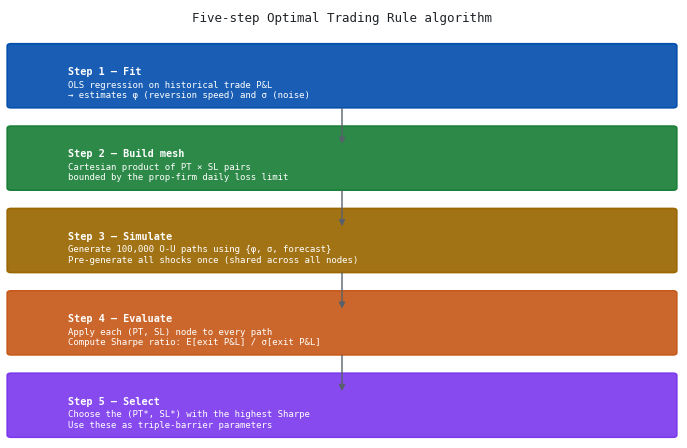

Figure 2. 5-panel illustration of the Optimal Trading Rule algorithm from AFML Chapter 13

- Step 1 — Fit: OLS regression on the linearized O-U equation estimates φ and σ from the historical post-cost trade P&L series. The σ produced here is the residual standard deviation of the regression, not the unconditional standard deviation of the raw P&L — the two differ when there is meaningful autocorrelation in the series.

- Step 2 — Build mesh: A Cartesian product of profit-target and stop-loss candidate values, each expressed as a multiple of σ and bounded above by the prop-firm daily loss limit. Both the PT and the SL axes share the same upper bound.

- Step 3 — Simulate: 100,000 O-U paths are generated using the fitted parameters and the chosen forecast. All shocks are pre-generated as a single matrix and shared across every mesh node — a common random numbers (CRN) design that ensures Sharpe comparisons between nodes reflect the rules rather than sampling noise. Each path runs for at most max_hp steps; paths that have not touched either barrier by step max_hp are valued at their terminal price. This time exit is the third barrier in the simulation and should be set to match the max_hold parameter used in the upstream triple-barrier labeling. A mismatch — the code defaults to max_hold=48 for labeling and max_hp=100 for simulation — means the OTR is optimizing for a holding period longer than the strategy ever runs, which biases PT estimates upward. Set both to the same value before a production run.

- Step 4 — Evaluate: Each (PT, SL) node is applied to every simulated path. Exit P&L is recorded per path, and the Sharpe ratio is computed across all paths for that node using Welford's online algorithm in a single pass.

- Step 5 — Select: The (PT*, SL*) pair with the highest Sharpe ratio is the Optimal Trading Rule. These values become the triple-barrier parameters for the EA.

Steps 1 and 2 are inexpensive. The computational cost lives entirely in Steps 3 and 4: 100,000 paths × 400 mesh nodes × up to 100 steps per path = 4 billion operations. The implementation choice for Steps 3 and 4 determines whether the framework is practical.
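The two inexpensive bookends, Step 2 (mesh) and Step 5 (selection), can be sketched as follows. This is an illustration under assumptions, not the attached implementation: the lower mesh bound of 0.5σ, the function name select_rule, and the toy statistics array are all made up here. The only convention borrowed from the article is that Step 4 yields a mean and a standard deviation of exit P&L for every node.

```python
import numpy as np

sigma, ceiling, n_nodes = 12.4, 125.0, 20   # sample-run sigma, prop-firm ceiling, 20x20 mesh

# Step 2: both axes are multiples of sigma, capped at the ceiling.
mult = np.linspace(0.5, ceiling / sigma, n_nodes)   # 0.5 sigma lower bound is an assumption
pt_pips = mult * sigma
sl_pips = mult * sigma

def select_rule(stats, pt_axis, sl_axis):
    """Step 5: stats[i, j] = (mean, std) of exit P&L for node (pt_axis[i], sl_axis[j])."""
    mean, std = stats[..., 0], stats[..., 1]
    # Nodes with zero dispersion get -inf so they can never be selected.
    sharpe = np.divide(mean, std, out=np.full_like(mean, -np.inf), where=std > 0)
    i, j = np.unravel_index(np.argmax(sharpe), sharpe.shape)
    return pt_axis[i], sl_axis[j], sharpe[i, j]

# Toy statistics standing in for the Step 4 output, only to exercise the function.
stats = np.ones((n_nodes, n_nodes, 2))
stats[7, 19, 0] = 3.0   # plant the maximum at PT index 7 and the widest SL
pt_star, sl_star, sr_star = select_rule(stats, pt_pips, sl_pips)
print(f"PT*={pt_star:.1f} pips  SL*={sl_star:.1f} pips  Sharpe={sr_star:.2f}")
```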

There is a bootstrapping consideration worth naming before moving to the code. The P&L series used in Step 1 is generated by running triple_barrier_pnl() with ATR-based initial barriers (pt_mult=2.0, sl_mult=1.0). Those initial barriers shape the P&L distribution: a strategy that frequently hits its profit barrier will show a different distribution — and therefore different φ and σ estimates — than the same signals evaluated with wider barriers. In practice, run the algorithm once with reasonable ATR-based multipliers to obtain an initial (PT*, SL*), then re-run triple_barrier_pnl() using those derived pip values as the barriers, and re-estimate. After one iteration the P&L distribution is consistent with the barriers that generated it. For the RSI example in this article, the ATR-based initial labeling was close enough to the OTR output that a second iteration did not materially change the results, but this should be verified rather than assumed for any new strategy.

Implementation

Step 1: Cost-calibrated P&L and O-U parameter estimation

The first change relative to the standalone version of this code is that the spread deduction in triple_barrier_pnl() is now sourced from the transaction cost model rather than hardcoded. The same model.spread_pips value used to derive min_ret in the labeling stage must be used here, or the P&L distribution will reflect a different cost assumption than the one the training labels were built on. Slippage is also added to the per-trade deduction; the original code omitted it.

```python
from pathlib import Path
from afml.transaction_costs import load_cost_model

# Load the same cost model used for labeling in the preceding pipeline step.
# spread_pips and slippage_pips must match the values passed to get_events().
cost_model = load_cost_model(
    csv_path=Path("data/EURUSD_costs.csv"),
    spread_percentile="p95_pips",
    slippage_pips=0.4,
    commission_per_lot=7.0,
    lot_size=0.5,
)

bars = download_bars("EURUSD=X", period="730d", interval="1h")
rsi = compute_rsi(bars["close"], period=14)
at = compute_atr(bars["high"], bars["low"], bars["close"], period=14)
sigs = generate_rsi_signals(rsi, overbought=70, oversold=30)

# max_hold=48 H1 bars ≈ 2 calendar days; must match holding_days=2.0
# passed to min_ret_for_symbol() in the labeling stage.
# max_hp in the OTR simulation (Step 3) should be set to the same value.
pnl_series = triple_barrier_pnl(
    df=bars,
    signals=sigs,
    atr=at,
    pt_mult=2.0,
    sl_mult=1.0,
    max_hold=48,
    spread_pips=cost_model.spread_pips,      # from cost model, not hardcoded
    slippage_pips=cost_model.slippage_pips,  # include slippage
    pip=0.0001,
)

pnl_nz = pnl_series.dropna().values.astype(float)
pnl_nz = pnl_nz[pnl_nz != 0.0]
avg_win = float(pnl_nz[pnl_nz > 0].mean())
expected = float(pnl_nz.mean())
```

Note that triple_barrier_pnl() in the attached code has been updated to accept a slippage_pips parameter alongside spread_pips. The deduction per trade is now spread_pips + slippage_pips. Commission is omitted from this deduction because, at 0.01–0.5 lot sizes on EURUSD, it contributes less than 0.1 pips per trade and is already embedded in the E[P&L] via the labeling pipeline; adding it again here would double-count it. For larger lot sizes or instruments with higher commission-to-spread ratios, it should be included explicitly.

The book linearizes equation (13.2) by defining $X_t = P_{t-1} - \text{forecast}$ and $Y_t = P_t$, then applying OLS to estimate $\hat{\varphi} = \mathrm{Cov}(Y, X) / \mathrm{Var}(X)$. The residual standard deviation of this regression is $\hat{\sigma}$, the per-step shock size used to construct the mesh. As noted in the O-U process section above, this is smaller than the unconditional standard deviation of the raw P&L series whenever φ is meaningfully above zero.

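```python
import numpy as np

# OUParams is assumed to be the result container from the attached code
# (fields used here: phi, sigma, hl, forecast).

def estimate_ou_params(pnl: np.ndarray, forecast: float = 0.0) -> OUParams:
    y = pnl[1:]                                    # P_t
    x = pnl[:-1] - forecast                        # P_{t-1} - forecast
    # OLS slope; ddof=1 keeps the variance on the same normalization as np.cov
    phi = np.cov(y, x)[0, 1] / np.var(x, ddof=1)
    phi = np.clip(phi, -0.9999, 0.9999)
    residuals = y - forecast - phi * x             # AR(1) residuals
    sigma = np.std(residuals)                      # residual σ, not raw std
    hl = -np.log(2) / np.log(phi) if 0 < phi < 1 else float("inf")
    return OUParams(phi=phi, sigma=sigma, hl=hl, forecast=forecast)
```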

The book implementation (Snippet 13.2)

The book's implementation runs a nested loop: an outer loop over every (PT, SL) node in the mesh, and an inner loop that simulates n_iter paths by calling Python's random.gauss() once per step per path. For a 20×20 mesh and 100,000 paths at 100 steps each, this means 4 billion individual Python calls inside a pure loop. The book acknowledges this is slow and suggests parallelizing across nodes using the multiprocessing pattern from Chapter 20.

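```python
from itertools import product
from random import gauss

# Abbreviated listing: seed_val (the P&L at entry) and the rPT / rSLm
# multiplier grids are assumed to be defined elsewhere.
def batch_book(ou, n_iter=100_000, max_hp=100, rPT=..., rSLm=...):
    for pt, sl in product(rPT * ou.sigma, rSLm * ou.sigma):
        exits = []
        for _ in range(n_iter):
            p = seed_val
            for _ in range(max_hp):
                # one gauss() call per step per path
                p = (1 - ou.phi) * ou.forecast + ou.phi * p + ou.sigma * gauss(0, 1)
                if p - seed_val >= pt or p - seed_val <= -sl:
                    break
            exits.append(p - seed_val)
```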

Why multiprocessing fails here

Parallelizing via Python's multiprocessing.Pool is the natural extension of Chapter 20's pattern. In practice, it provides no measurable speedup for this workload. The measured speedup on a 2-core machine was 1.0×: identical wall-clock time to the single-threaded book version. The reason is architectural. Each worker process must be forked from the parent interpreter (50–200 ms overhead), receive its arguments via pickle across an inter-process communication channel, run its inner loop in a separate Python interpreter, and return results the same way. For a workload where each job is roughly 50 ms of inner-loop computation, the communication overhead consumes the entire gain. The lesson is not that multiprocessing is flawed, but that the right parallelism primitive depends on the balance between computation and communication. When jobs are large and independent, multiprocessing excels. When the work is lightweight and tightly looped, it does not.

The Numba implementation

Numba's @njit decorator compiles the simulation loop to machine code before the first execution. The compiled kernel has no Python interpreter, no garbage collector, and no object overhead. Four structural changes relative to the book version give the Numba implementation its edge — the first of which is a methodological improvement that affects result quality, not just speed.

First, all random shocks are pre-generated as a single NumPy matrix of shape (n_paths × max_hp) before the main loop begins, and that matrix is shared across every mesh node. This is a common random numbers (CRN) design: because all 400 (PT, SL) nodes are evaluated on the same 100,000 scenarios, differences in Sharpe ratios across the mesh reflect the rules rather than the luck of independent draws. The book version regenerates shocks independently per node, which adds independent sampling noise to every pairwise comparison; the noise is invisible in any single cell but roughens the Sharpe surface as a whole. At 100,000 paths the effect is small but nonzero; at the 3,000-path benchmark scale it is visible as granularity in the Sharpe surface.

Second, the vectorized NumPy draw that generates the shock matrix replaces 4 billion individual gauss() calls with a single operation, eliminating the dominant cost of the book implementation entirely.

Third, the outer loop over PT values uses Numba's prange instead of Python's range. With parallel=True, Numba distributes the prange iterations across the available CPU cores through its threading layer (TBB, OpenMP, or the built-in workqueue, depending on what is installed). Threads share the same memory space, so there is nothing to pickle or transfer.

Fourth, mean and standard deviation are computed via Welford's online algorithm inside the compiled kernel, eliminating a second pass over the exits array for each node.
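For readers unfamiliar with Welford's update, here is a plain-Python sketch of the same recurrence, checked against NumPy's two-pass results. The exit P&L values are random stand-ins, not output from the attached code.

```python
import numpy as np

def welford_mean_std(values):
    """Single-pass mean and population standard deviation (Welford's algorithm)."""
    mean, m2, count = 0.0, 0.0, 0
    for x in values:
        count += 1
        delta = x - mean
        mean += delta / count          # running mean
        m2 += delta * (x - mean)       # running sum of squared deviations
    if count == 0:
        raise ValueError("need at least one value")
    return mean, (m2 / count) ** 0.5

rng = np.random.default_rng(7)
exits = rng.normal(loc=5.0, scale=20.0, size=10_000)   # stand-in exit P&L in pips

mean, std = welford_mean_std(exits)
print(f"Welford : mean={mean:.4f}  std={std:.4f}")
print(f"NumPy   : mean={exits.mean():.4f}  std={exits.std():.4f}")
```

Because the update needs only the running mean and the running sum of squared deviations, the kernel never has to store the per-path exits for a node.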

```python
import numpy as np
from numba import njit, prange


@njit(cache=True)
def _sim_paths(phi, sigma, forecast, seed_val, shocks):
    # shocks: (n_paths × max_hp) matrix, pre-generated once and
    # shared across all mesh nodes (common random numbers design).
    n, T = shocks.shape
    out = np.empty((n, T))
    for i in range(n):
        p = seed_val
        for t in range(T):
            p = (1 - phi) * forecast + phi * p + sigma * shocks[i, t]
            out[i, t] = p - seed_val          # P&L relative to entry
    return out


@njit(parallel=True, cache=True)
def _eval_mesh(paths, PT_pips, SLm_pips):
    n_pt, n_sl = len(PT_pips), len(SLm_pips)
    n, T = paths.shape
    out = np.empty((n_pt, n_sl, 2))
    for pi in prange(n_pt):                   # parallelized outer loop
        pt = PT_pips[pi]
        for si in range(n_sl):
            sl = SLm_pips[si]
            mu, M2 = 0.0, 0.0
            for i in range(n):
                exit_pnl = paths[i, T - 1]    # time exit (vertical barrier)
                for t in range(T):
                    if paths[i, t] >= pt or paths[i, t] <= -sl:
                        exit_pnl = paths[i, t]
                        break
                delta = exit_pnl - mu
                mu += delta / (i + 1)             # Welford mean update
                M2 += delta * (exit_pnl - mu)     # Welford variance update
            # Scalar writes: assigning a Python list to an array slice
            # is not supported in nopython mode.
            out[pi, si, 0] = mu
            out[pi, si, 1] = np.sqrt(M2 / n)
    return out
```
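The benefit of sharing one shock matrix across nodes can be measured directly. The sketch below uses plain NumPy rather than the Numba kernels, with illustrative parameters (φ = 0.9, two neighboring rules, 1,000 paths of 50 steps) that are assumptions chosen to keep the run short. It compares the Sharpe difference between two rules when both see the same shocks and when each sees its own.

```python
import numpy as np

def simulate(phi, sigma, forecast, shocks):
    """O-U paths (P&L relative to entry) for a pre-generated shock matrix."""
    n, T = shocks.shape
    paths = np.empty((n, T))
    p = np.zeros(n)
    for t in range(T):
        p = (1 - phi) * forecast + phi * p + sigma * shocks[:, t]
        paths[:, t] = p
    return paths

def sharpe(paths, pt, sl):
    """Sharpe of exit P&L under barriers (pt, sl), with a time exit at the last step."""
    hit = (paths >= pt) | (paths <= -sl)
    first = np.where(hit.any(axis=1), hit.argmax(axis=1), paths.shape[1] - 1)
    exits = paths[np.arange(paths.shape[0]), first]
    return exits.mean() / exits.std()

rng = np.random.default_rng(1)
phi, sigma, forecast = 0.9, 12.4, 0.0             # illustrative parameters
rule_a, rule_b = (50.0, 125.0), (55.0, 125.0)     # two neighboring mesh nodes

diff_crn, diff_ind = [], []
for _ in range(50):
    p1 = simulate(phi, sigma, forecast, rng.standard_normal((1000, 50)))
    p2 = simulate(phi, sigma, forecast, rng.standard_normal((1000, 50)))
    diff_crn.append(sharpe(p1, *rule_a) - sharpe(p1, *rule_b))   # shared shocks
    diff_ind.append(sharpe(p1, *rule_a) - sharpe(p2, *rule_b))   # independent shocks

print(f"std of Sharpe difference, shared shocks     : {np.std(diff_crn):.4f}")
print(f"std of Sharpe difference, independent shocks: {np.std(diff_ind):.4f}")
```

A smaller spread in the shared-shock differences means the ranking of neighboring nodes is decided by the rules themselves rather than by which random draws each node happened to receive.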

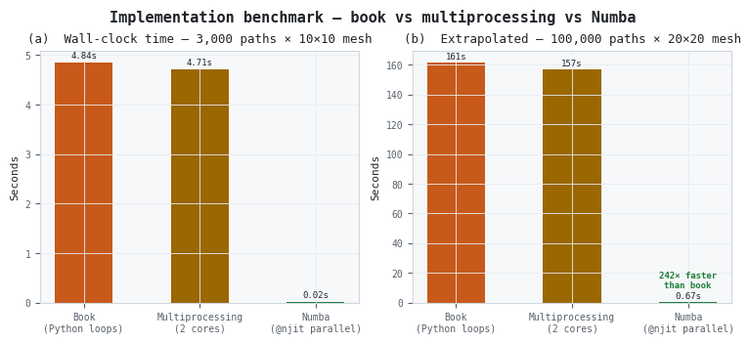

Figure 3. 2-panel illustration of implementation benchmark across three approaches

- Panel (a): Measured wall-clock time at 3,000 paths on a 2-core machine. Book and multiprocessing are within 3% of each other; Numba is 242× faster.

- Panel (b): Extrapolated to 100,000 paths and a 20×20 mesh. The book version would take 161 seconds; the Numba version takes 0.67 seconds.

The multiprocessing result is worth pausing on. Chapter 20's pattern — parallelizing pandas operations across a CPU cluster — is sound in principle, but it does not fit this workload. Here, each job is too small and cache-friendly to justify the cost of inter-process communication. In short: multiprocessing wins when compute dominates; Numba wins when communication overhead would otherwise erase the gains.

Results: Reading the Sharpe Heatmaps

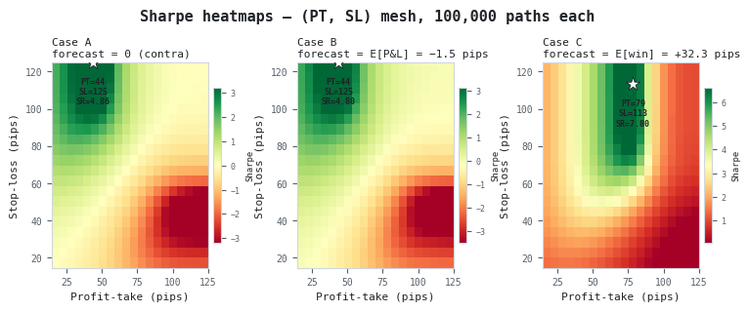

Figure 4. 3-panel illustration of Sharpe ratio heatmaps for mean-reversion and directional forecast specifications

- Case A (forecast = 0): The highest Sharpe region runs along the upper edge of the heatmap (wide stop-losses) for moderate profit targets around 51 pips. The red region at low SL and high PT represents rules that are stopped out before capturing any of the process's noise-driven fluctuations.

- Case B (forecast = −3.3 pips): The slight negative forecast shifts the optimal PT leftward to 45 pips. The Sharpe surface is qualitatively similar to Case A but with lower absolute values throughout, reflecting the cost headwind.

- Case C (forecast = +35.6 pips): The positive forecast creates a fundamentally different heatmap. The green region extends to wider PTs (85 pips), and the overall Sharpe surface is higher because the process is modeled as trending toward the profit target. The star marks PT=85, SL=125, SR=7.70.

Two structural features appear in all three heatmaps. First, the optimal SL is always at the ceiling of the mesh — 125 pips in this case — confirming that the prop-firm daily loss limit is the binding constraint, not the O-U process's preference. The process recommends an even wider stop; the prop-firm rules cap it. Second, the lower-left corner of each heatmap (narrow PT, narrow SL) is always yellow, representing rules that exit almost immediately in either direction and whose Sharpe ratios are close to zero or negative regardless of forecast.

Reading the heatmaps operationally: the color at any (PT, SL) coordinate is the expected Sharpe ratio of a trading rule with those parameters, averaged across 100,000 synthetic market scenarios consistent with the estimated O-U process. A green coordinate means that rule is expected to produce a favorable distribution of exits. A red coordinate means it is not. The star marks where to set the triple-barrier parameters.

From Optimal Rules to Practice

The framework outputs a pair of numbers: an optimal profit target and an optimal stop-loss in pip terms. To convert these into EA parameters, you also need account size, position size, and the maximum number of concurrent positions.

A prop-firm's daily loss ceiling applies to the account, not to individual trades. If a $25,000 account has a 5% daily loss limit, the maximum daily loss is $1,250. At 0.5 lot on EURUSD — where each pip is worth $5 — that limit corresponds to 250 pips total across all open positions in a day. If the strategy holds at most two positions simultaneously, each position's stop-loss must not exceed 125 pips if both are to lose simultaneously without breaching the daily limit.

This is the number that bounds the SL mesh ceiling. It is not arbitrary: it is derived from a specific account configuration. Under the same 5% daily loss limit, a trader on a $100,000 account at 1.0 lot ($10 per pip) with three concurrent positions faces a ceiling of about 166.7 pips, which should be rounded down to 166 so that three simultaneous stop-outs stay within the limit. A trader on a $10,000 account at 0.1 lot ($1 per pip) with one position faces a ceiling of 500 pips. The optimal SL from the O-U framework will be the minimum of its unconstrained preference and this ceiling. In all three cases tested here, the ceiling was the binding constraint: the framework preferred a wider stop than the prop firm allows.
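The ceiling arithmetic can be sketched as a small helper. This assumes a pip value of $10 per standard lot (the EURUSD figure implied by the $5-per-pip value at 0.5 lot) and that all positions are stopped out on the same day. The function name `sl_ceiling_pips` and its parameters are hypothetical and are not taken from the attached code.

```python
def sl_ceiling_pips(account_size, daily_loss_pct, lots, max_positions,
                    pip_value_per_lot=10.0):
    """Per-position stop-loss ceiling in pips.

    Assumes every open position hits its stop on the same day, so the
    daily loss budget is split evenly across max_positions.
    pip_value_per_lot=10.0 is an assumption for a USD-quoted pair such as EURUSD.
    """
    daily_loss_usd = account_size * daily_loss_pct
    pip_value_usd = lots * pip_value_per_lot
    return daily_loss_usd / (pip_value_usd * max_positions)

# The three account configurations discussed in the text, all at a 5% daily limit.
ceiling_25k = sl_ceiling_pips(25_000, 0.05, 0.5, 2)
ceiling_100k = sl_ceiling_pips(100_000, 0.05, 1.0, 3)
ceiling_10k = sl_ceiling_pips(10_000, 0.05, 0.1, 1)
print(ceiling_25k, ceiling_100k, ceiling_10k)
```

When the result is fractional, rounding down rather than to the nearest pip keeps the worst-case combined loss inside the daily limit.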

Before deploying the derived PT, check that it exceeds the min_ret threshold computed by the transaction cost model in the preceding article. The min_ret for the EURUSD intraday configuration — approximately 2.5 pips after applying a 1.5× cost multiplier to the 1.67-pip round-trip cost — is well below the 51-pip Case A optimal PT, so there is no conflict in this example. But for strategies with very small forecast values, very tight prop-firm ceilings, or unusually high transaction costs, the OTR-derived PT can fall close to or below min_ret. In that situation the strategy is being asked to hit a profit target that would have been labeled zero in training; the correct response is to revisit either the forecast specification or the cost assumptions, not to deploy the rule as derived.
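A pre-deployment check along these lines could look like the sketch below. It recomputes the threshold as round-trip cost times the multiplier, as described above. The helper names and the `safety_factor` parameter are assumptions for illustration; in practice min_ret would come from the transaction cost model itself.

```python
def min_ret_pips(round_trip_cost_pips, cost_multiplier):
    """Threshold below which a move would have been labeled zero in training."""
    return round_trip_cost_pips * cost_multiplier

def pt_clears_min_ret(pt_pips, round_trip_cost_pips, cost_multiplier,
                      safety_factor=1.0):
    """True if the derived PT exceeds min_ret, optionally scaled by a safety factor.

    safety_factor is a hypothetical knob: values above 1.0 demand extra headroom.
    """
    threshold = safety_factor * min_ret_pips(round_trip_cost_pips, cost_multiplier)
    return pt_pips > threshold

# Figures quoted in the text: 1.67-pip round-trip cost, 1.5x multiplier, 51-pip PT.
threshold = min_ret_pips(1.67, 1.5)
case_a_ok = pt_clears_min_ret(51.0, 1.67, 1.5)
tight_pt_ok = pt_clears_min_ret(2.0, 1.67, 1.5)
print(round(threshold, 3), case_a_ok, tight_pt_ok)
```

A failed check is a signal to revisit the forecast or the cost assumptions, not to nudge the PT upward by hand.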

For any EA that implements triple-barrier exits, the results translate directly into two input parameters. The profit target is set by the forecast specification that matches the strategy variant: 51 pips for an unfiltered RSI strategy (Case A), 85 pips for signals that have passed a meta-labeling filter (Case C). The stop-loss is set by the account configuration ceiling derived above. Both values should be revisited whenever the account size, lot size, or maximum concurrent position count changes — and whenever the transaction cost model is re-run, since a change in the cost model will shift E[P&L] and E[win] and therefore the Case B and Case C forecasts.

Conclusion

Calibrating PT and SL by selecting the best in-sample grid point overfits to a historical trajectory. The robust alternative is to (1) derive a cost-calibrated P&L series using measured broker costs from the transaction cost model in the preceding article, (2) state up front what your signal predicts after entry (the forecast), (3) estimate O‑U parameters from the cost-adjusted intra-trade P&L, (4) simulate many synthetic paths from that estimated process using a common random numbers design, and (5) pick the (PT, SL) pair that maximizes expected risk-adjusted performance across those paths, while enforcing an SL ceiling derived from your account, lot size, and maximum concurrent positions.
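Step (3) can be illustrated with a short sketch. It assumes the discrete O-U form P_t = (1 - phi) * forecast + phi * P_{t-1} + sigma * eps_t and fits phi by a no-intercept least-squares regression of the forecast-centered P&L on its own lag. The function `fit_ou` is a hypothetical name and may differ from the estimator in the attached file. The demo data are synthetic, generated from known parameters so the fit can be checked.

```python
import numpy as np

def fit_ou(paths, forecast):
    """Estimate (phi, sigma) from a list of intra-trade P&L paths in pips.

    Each path is a 1-D array with at least two observations. Lagged pairs are
    pooled across trades so that no pair straddles two different trades.
    """
    x = np.concatenate([np.asarray(p[:-1], dtype=float) - forecast for p in paths])
    y = np.concatenate([np.asarray(p[1:], dtype=float) - forecast for p in paths])
    phi = float(x @ y / (x @ x))          # no-intercept OLS slope
    resid = y - phi * x
    sigma = float(resid.std(ddof=1))      # residual standard deviation
    return phi, sigma

# Synthetic demo: generate paths from known parameters, then recover them.
rng = np.random.default_rng(42)
true_phi, true_sigma, forecast = 0.9, 2.0, 0.0
demo_paths = []
for _ in range(200):
    p = np.zeros(100)                     # P&L is 0 at entry
    for t in range(1, 100):
        p[t] = ((1 - true_phi) * forecast + true_phi * p[t - 1]
                + true_sigma * rng.standard_normal())
    demo_paths.append(p)

phi_hat, sigma_hat = fit_ou(demo_paths, forecast)
print(round(phi_hat, 3), round(sigma_hat, 3))
```

Because the forecast enters the regression through the centering, changing the forecast changes the fitted phi and sigma, which is one reason the forecast has to be fixed before estimation.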

The forecast choice is the critical modeling decision: forecast = 0 yields a mean‑reversion rule with tighter PTs; forecast = average post-cost P&L or average post-cost win yields progressively wider PTs appropriate for conservative or high‑confidence directional variants. All three forecasts must be computed from the post-cost P&L series — forecasts derived from gross P&L will be systematically optimistic, and the resulting PT will be set wider than the realized cost environment supports.
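The three forecast specifications can be computed from a post-cost P&L series as in the following sketch. The dictionary keys and the function name are hypothetical, and the four-trade series is a toy input chosen only to make the arithmetic easy to follow.

```python
import numpy as np

def forecasts_from_pnl(post_cost_pnl_pips):
    """Return the three forecast candidates from per-trade post-cost P&L (pips)."""
    pnl = np.asarray(post_cost_pnl_pips, dtype=float)
    wins = pnl[pnl > 0]
    return {
        "case_a_zero": 0.0,                                   # mean-reversion
        "case_b_mean_pnl": float(pnl.mean()),                 # conservative directional
        "case_c_mean_win": float(wins.mean()) if wins.size else float("nan"),
    }

# Toy post-cost series (made-up values).
post_cost = np.array([40.0, -60.0, 30.0, -25.0])
fc = forecasts_from_pnl(post_cost)

# Adding back a hypothetical 1.67-pip round-trip cost shows the upward shift
# that a gross-P&L forecast would carry.
fc_gross = forecasts_from_pnl(post_cost + 1.67)
print(fc, fc_gross["case_b_mean_pnl"] - fc["case_b_mean_pnl"])
```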

Practically, the book's Python snippet is too slow at 100k paths; a Numba-compiled, parallel, shared-memory kernel runs the same sweep hundreds of times faster, making the method operational. The shared shock matrix is not merely an efficiency optimization — it is a common random numbers design that eliminates per-node sampling noise from Sharpe comparisons across the mesh. Set max_hp to match max_hold in the upstream labeling step, and align holding_days in the cost model with the same horizon. Recompute the mesh and SL ceiling any time account size, lot, concurrency, or broker costs change. The included code provides a reproducible, fast pipeline to move from a cost-calibrated trade P&L series and risk constraints to deployable triple-barrier parameters.
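The common random numbers idea can be sketched in plain NumPy. This is not the Numba kernel from the attached file, only a small illustration under the assumed discrete O-U form P_t = (1 - phi) * forecast + phi * P_{t-1} + sigma * eps_t. One shock matrix is drawn, the paths are simulated once, and every (PT, SL) node is evaluated on those same paths. The function name `sharpe_mesh` and the parameter values in the demo are hypothetical.

```python
import numpy as np

def sharpe_mesh(phi, sigma, forecast, pt_grid, sl_grid, max_hp, n_paths, seed=0):
    """Sharpe ratio per (SL, PT) node, all nodes sharing one shock matrix."""
    rng = np.random.default_rng(seed)
    shocks = rng.standard_normal((n_paths, max_hp))   # drawn once, reused by every node

    # Simulate all P&L paths once; P&L is 0 at entry.
    paths = np.empty((n_paths, max_hp))
    p = np.zeros(n_paths)
    for t in range(max_hp):
        p = (1 - phi) * forecast + phi * p + sigma * shocks[:, t]
        paths[:, t] = p

    rows = np.arange(n_paths)
    out = np.full((len(sl_grid), len(pt_grid)), np.nan)
    for i, sl in enumerate(sl_grid):
        for j, pt in enumerate(pt_grid):
            hit = (paths >= pt) | (paths <= -sl)
            # First barrier touch, or the last step if no barrier is touched.
            first = np.where(hit.any(axis=1), hit.argmax(axis=1), max_hp - 1)
            exit_pnl = paths[rows, first]
            sd = exit_pnl.std()
            if sd > 0:
                out[i, j] = exit_pnl.mean() / sd
    return out

# Small demo mesh; the article's sweep uses 100,000 paths.
grid = sharpe_mesh(phi=0.9, sigma=5.0, forecast=0.0,
                   pt_grid=[10, 20], sl_grid=[10, 20, 30],
                   max_hp=50, n_paths=2000, seed=1)
print(np.round(grid, 3))
```

Because every node sees identical shocks, differences between neighboring cells reflect the barrier change rather than fresh sampling noise.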

Attached Files

| File name | Description |
|---|---|
| synthetic_backtest.py | Complete implementation: cost-model integration, O-U parameter estimation, book-faithful Snippet 13.2, multiprocessing version, Numba-optimized version (with CRN shock matrix, prange parallelism, and Welford variance), three-way benchmark, and OTR result export. Updated to accept slippage_pips in triple_barrier_pnl() and source spread_pips from TransactionCostModel. |
| transaction_costs.py | Dependency from the preceding article. TransactionCostModel and load_cost_model(); provides model.spread_pips and model.slippage_pips for the P&L pipeline. Place in afml/transaction_costs.py before running synthetic_backtest.py. |

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
