Neural Networks in Trading: Integrating Chaos Theory into Time Series Forecasting (Attraos)

Introduction

Financial market time series represent sequences of price data, trading volumes, and other economic indicators that evolve over time under the influence of numerous factors. They reflect complex dynamic processes, including market trends, cycles, and short-term fluctuations driven by economic news, participant behavior, and macroeconomic conditions. Accurate forecasting of financial time series is crucial for risk management, trading strategy development, portfolio optimization, and algorithmic trading. Forecast errors can lead to significant financial losses, making the development of more precise time series analysis methods a priority for analysts, traders, and financial institutions.

Modern approaches to forecasting financial time series widely employ machine learning, including neural networks and deep learning models. However, most traditional methods are based on statistical techniques and linear models, which struggle when analyzing highly volatile and chaotic data characteristic of financial markets. Market processes often exhibit nonlinear dependencies, sensitivity to initial conditions, and complex dynamics, making prediction a challenging task. Traditional models also find it difficult to account for sudden market events, such as crises, abrupt liquidity shifts, or mass asset sell-offs triggered by investor panic. Therefore, developing approaches capable of adapting to the complex dynamics of financial markets is a critical research direction.

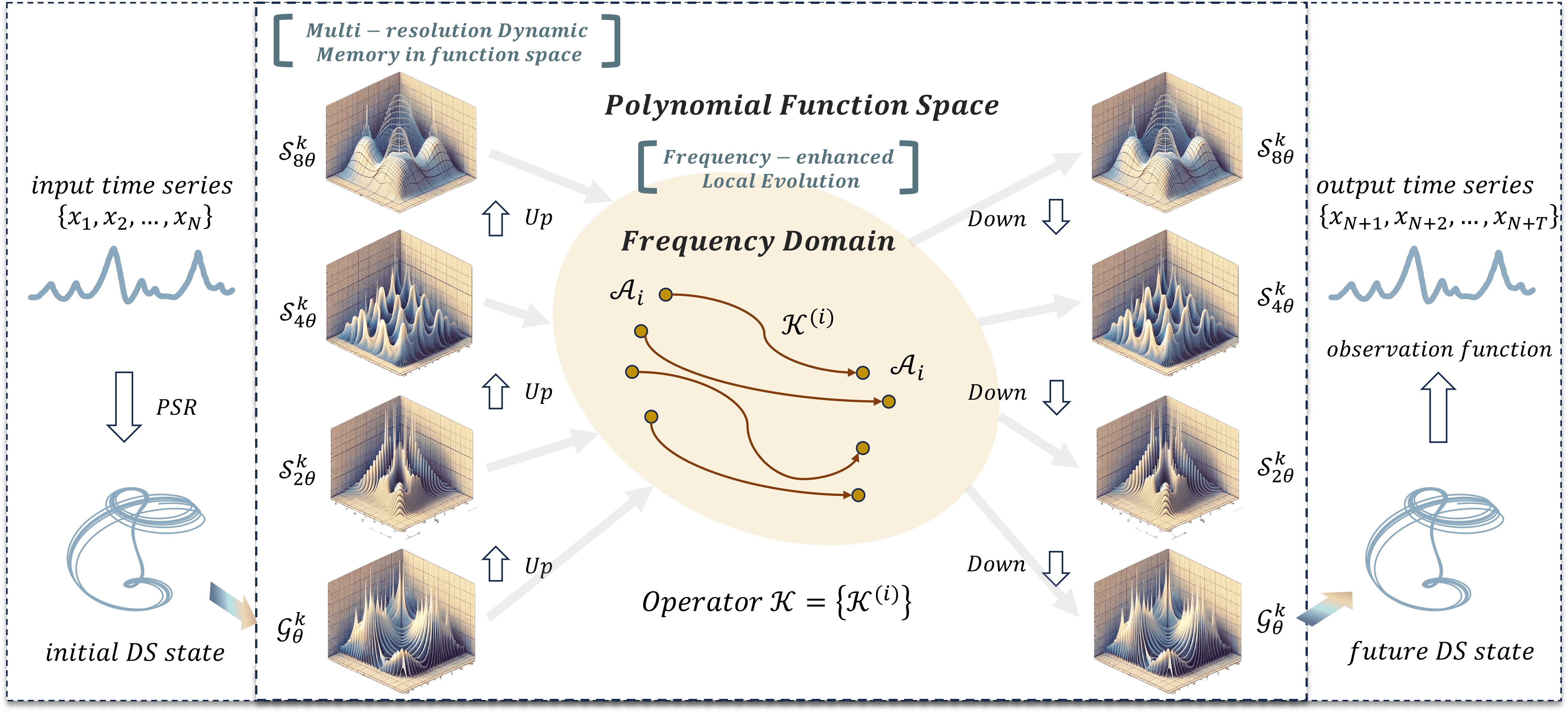

To address these challenges, the authors of the Attraos framework, proposed in "Attractor Memory for Long-Term Time Series Forecasting: A Chaos Perspective", integrate principles of chaos theory, treating time series as low-dimensional projections of multidimensional chaotic dynamical systems. This approach allows hidden nonlinear dependencies between market variables to be captured, improving forecasting accuracy. Applying chaotic dynamics methods in time series analysis enables the identification of persistent structures in market data and their incorporation into predictive models.

The Attraos framework addresses two key problems. First, it models hidden dynamic processes using phase space reconstruction methods. This allows it to identify latent patterns and consider nonlinear interactions among market variables, such as correlations between assets, macroeconomic indicators, and market liquidity. Second, Attraos uses a strategy of local evolution in the frequency domain, enabling adaptation to changing market conditions and enhancing attractor differentiation. Unlike traditional models based on fixed assumptions about data distributions, Attraos dynamically adapts to evolving market structures, providing more accurate forecasts across various time horizons.

Additionally, the model includes a multi-resolution dynamic memory module, allowing it to remember and incorporate historical price movement patterns while adapting to changing market conditions. This is especially important in financial markets, where the same patterns may recur over different time intervals but with varying amplitudes and intensities. The model's ability to learn from data at multiple levels of granularity offers a significant advantage over traditional approaches, which often neglect the multi-scale nature of market processes.

Experiments conducted by the framework authors demonstrate that Attraos outperforms traditional time series forecasting methods, providing more accurate predictions with fewer trainable parameters. Notably, leveraging chaotic dynamics allows the model to better capture long-term dependencies and mitigate the impact of cumulative errors, which is critical for medium- and long-term investment strategies.

Attraos Algorithm

In chaos theory, multidimensional dynamical systems evolving over time can exhibit behavior that appears random and complex. However, detailed analysis reveals hidden regularities governing their dynamics.

According to Takens' theorem, if a system is deterministic, its phase space can be reconstructed from observations of a single variable. This theorem is based on the idea that with a sufficiently long time series, the multidimensional trajectory of the system can be recovered, even if only one-dimensional data are available. This approach allows identifying hidden attractors, which represent regions in phase space toward which system trajectories converge over time.

In financial markets, time series such as stock prices, currency pairs, and other asset data contain information about the internal dynamics of the market system. Despite apparent external chaos, market behavior may follow deterministic laws that can be uncovered through phase space reconstruction methods. Applying these methods enables analysts to identify characteristic attractors, which can then serve as the basis for forecasting future price movements.

The Attraos framework builds upon chaos theory and phase space reconstruction principles, enabling the creation of topologically equivalent models of dynamical systems without prior knowledge of their underlying structure. The central idea is to represent a time series in a multidimensional phase space to uncover hidden patterns. This is achieved by selecting two key parameters, the embedding dimension d and the time delay τ, which form the multidimensional representation of the time series:

$$X_t = \left(x_t,\; x_{t-\tau},\; x_{t-2\tau},\; \ldots,\; x_{t-(d-1)\tau}\right)$$

This approach reduces noise influence, highlights structural elements of the process, and enhances forecasting accuracy. Phase space reconstruction allows the creation of a stable phase portrait reflecting system dynamics over time, enabling analysis and modeling.
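As an illustration, the delay-coordinate representation built from d and τ can be sketched in a few lines of Python. This is a minimal sketch, not the Attraos PSR module: the function name `delay_embed` is hypothetical, the lags are assumed to point into the past, and d and τ are passed in by hand rather than selected automatically.

```python
def delay_embed(series, d, tau):
    """Return delay vectors (x_t, x_{t-tau}, ..., x_{t-(d-1)tau}) for every valid t."""
    start = (d - 1) * tau              # first index that has a full history
    return [
        [series[t - k * tau] for k in range(d)]
        for t in range(start, len(series))
    ]

# Toy series 0, 1, ..., 9 embedded with d = 3 and tau = 2
points = delay_embed(list(range(10)), d=3, tau=2)
print(points[0])    # [4, 2, 0]
print(len(points))  # 6
```

Each row is one point of the reconstructed phase space; a series of length N yields N - (d - 1)τ such points.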

Data processing in Attraos begins with the Phase Space Reconstruction (PSR) module, responsible for selecting optimal d and τ values to correctly represent system dynamics. This step is critical, as improperly chosen parameters can significantly distort the reconstructed phase portrait. Subsequently, the Multi-resolution Dynamic Memory Unit (MDMU) partitions data into non-overlapping segments using multidimensional tensors. This reduces computational complexity, accelerates model convergence, and forms the system's dynamic memory by capturing key evolutionary patterns. Consequently, forecasts become more stable and robust against potential data degradation. MDMU additionally implements an adaptive selection of significant time series components, dynamically excluding uninformative elements and emphasizing key factors that most strongly influence system evolution.

A crucial element of the Attraos architecture is the Linear Matrix Approximation (LMA) module. It uses polynomial projections to extract the principal features of system dynamics, employing parameterized diagonal matrices that define "measurement windows". This allows precise extraction of local dynamic characteristics and adaptive model correction as input data change. Using diagonal matrices reduces computational complexity, enabling efficient operation with multidimensional data structures. System evolution in this module is formalized as:

![]()

where M is the parameterized diagonal state matrix and ϵ represents random modeling error. Various evolution variants, including adaptive nonlinear projections, are employed to capture complex system transformations and improve forecast accuracy.

For processing dynamic processes, the Discrete Projection (DP) module discretizes the system using combined methods. In particular, an exponential matrix representation is applied, providing high accuracy in sequential data analysis. This approach ensures proper approximation of system evolution and mitigates cumulative error effects, which is especially important for long time series. The module also incorporates adaptive data quantization, optimizing approximation precision without significant computational overhead.
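One common way to discretize a linear system with a matrix exponential is the zero-order hold, and for a diagonal state matrix the exponential reduces to an elementwise `exp`. The sketch below shows that scheme only; whether the DP module uses exactly this form is an assumption, and the names `discretize_diagonal` and `step` are hypothetical.

```python
import math

def discretize_diagonal(a, b, dt):
    """Zero-order-hold discretization of x' = a*x + b*u, elementwise for diagonal a.

    Assumes every a[i] is nonzero.
    """
    a_bar = [math.exp(dt * ai) for ai in a]
    b_bar = [(abar - 1.0) / ai * bi for abar, ai, bi in zip(a_bar, a, b)]
    return a_bar, b_bar

def step(x, u, a_bar, b_bar):
    """One discrete update x_{k+1} = a_bar * x_k + b_bar * u_k."""
    return [ab * xi + bb * u for xi, ab, bb in zip(x, a_bar, b_bar)]

# For a constant input the discrete trajectory follows the continuous solution:
# x' = -x + 1 with x(0) = 0 gives x(1) = 1 - exp(-1).
a_bar, b_bar = discretize_diagonal(a=[-1.0], b=[1.0], dt=0.1)
x = [0.0]
for _ in range(10):
    x = step(x, 1.0, a_bar, b_bar)
print(abs(x[0] - (1.0 - math.exp(-1.0))) < 1e-12)  # True
```

Because the update is exact for piecewise-constant inputs, the discretization itself adds no error that could accumulate over long sequences.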

Additional adaptive mechanisms embedded in Attraos include a local evolution strategy with frequency amplification, which regulates the time series' spectral characteristics and compensates for distortions caused by stochastic processes. This is achieved through high-frequency component filtering and control of data spectral density. Multi-level representation of dynamic structure is also implemented, where the analysis window gradually expands, reducing projection errors and improving approximation accuracy. This allows the framework to capture complex topological organization of attractors at different scales, which is crucial for complex dynamical systems. Hybrid learning methods, combining classical numerical techniques with modern machine learning algorithms, further enhance model adaptability and generalization.
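To make the idea of high-frequency filtering concrete, here is a minimal sketch of a plain low-pass filter built on a naive discrete Fourier transform. It is a generic textbook filter, not the learned frequency-domain evolution used in Attraos; the function names and the `cutoff` parameter are hypothetical.

```python
import cmath

def dft(x):
    """Naive O(n^2) discrete Fourier transform."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def low_pass(x, cutoff):
    """Zero every frequency bin above `cutoff` and transform back."""
    n = len(x)
    spectrum = dft(x)
    # Bin k and bin n - k carry the same frequency, so both are tested.
    kept = [c if min(k, n - k) <= cutoff else 0.0 for k, c in enumerate(spectrum)]
    return [sum(kept[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)).real / n
            for t in range(n)]

# A constant level of 1 plus the fastest possible oscillation: 2, 0, 2, 0, ...
signal = [1 + (-1) ** t for t in range(8)]
smooth = low_pass(signal, cutoff=1)
print(all(abs(v - 1.0) < 1e-9 for v in smooth))  # True
```

The oscillation sits entirely in the highest bin, so removing it leaves only the constant level.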

Attraos stability is ensured through error minimization and attractor deviation control. Distances between attractors are calculated and monitored, and system trajectories are corrected when significant deviations are detected. These measures stabilize forecasts and maintain high model accuracy even under unstable dynamics. Statistical monitoring methods automatically identify unstable regions and adjust the model in real time. Active parameter control mechanisms dynamically modify optimization parameters based on system state, further improving adaptability and forecast accuracy.

The original visualization of the Attraos framework is provided below.

Implementation Using MQL5

After discussing the theoretical aspects of the Attraos framework, we now move on to the practical part of the article, where we implement our interpretation of the proposed approaches using MQL5.

We begin by preparing the key processes necessary for effective functioning of the Attraos algorithm. The algorithm relies heavily on diagonal matrices, which play a critical role in computations, simplifying data handling and reducing memory usage.

A diagonal matrix is a square matrix in which all elements off the main diagonal are zero. In traditional linear algebra, such matrices are stored as n × n two-dimensional arrays. However, this is inefficient, as the vast majority of elements are zero. A more efficient solution is to store only the diagonal elements as a one-dimensional array of length n. This reduces memory usage from n² values to n and accelerates computation by avoiding operations on zero elements.

Using a vector representation of diagonal matrices requires a specialized algorithm for linear algebra operations, particularly for multiplying a diagonal matrix by an arbitrary matrix. Standard matrix multiplication algorithms assume explicit storage of all elements, resulting in unnecessary computations. In this case, it suffices to multiply each element of the diagonal vector by the corresponding row of the other matrix. This simplifies the algorithm and increases efficiency.
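The row-scaling rule is easy to check against an ordinary dense product. The following minimal sketch does so on nested Python lists; the helper names are hypothetical and the dense product is included only as a reference.

```python
def diag_times_matrix(diag, matrix):
    """Multiply diag(diag) by matrix: row i of the result is diag[i] * matrix[i]."""
    return [[d * value for value in row] for d, row in zip(diag, matrix)]

def dense_matmul(a, b):
    """Textbook triple-loop product, used here only as a reference."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

diag = [2.0, -1.0, 0.5]
matrix = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]

# Explicit 3 x 3 diagonal matrix built from the same vector
dense = [[diag[i] if i == j else 0.0 for j in range(3)] for i in range(3)]

print(diag_times_matrix(diag, matrix))  # [[2.0, 4.0], [-3.0, -4.0], [2.5, 3.0]]
print(diag_times_matrix(diag, matrix) == dense_matmul(dense, matrix))  # True
```

The vector form performs one multiplication per output element, while the dense product performs n of them, most of which are multiplications by zero.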

To maximize performance, this process is implemented in OpenCL, using the parallel computation capabilities of GPUs. The core idea is that each element of the resulting matrix is computed independently, using only the relevant diagonal vector element. This reduces computational complexity and speeds up execution.

Diagonal Matrix Multiplication

The multiplication algorithm is implemented in the DiagMatMult kernel, operating in a three-dimensional task space. The first two dimensions correspond to the dimensions of the second matrix, while the third dimension reflects the number of independent matrices used to process projections of unit sequences from the analyzed multidimensional time series.

As noted above, the operation of multiplying the vector representation of a diagonal matrix by an arbitrary matrix involves multiplying each element of the diagonal vector by the corresponding row of the second matrix. To optimize GPU execution, we group computation threads into workgroups. Within each workgroup, only one thread accesses global memory to fetch the required diagonal vector element and stores it in local memory. The remaining threads use this value from local memory to perform the necessary calculations without additional global memory access. This significantly improves algorithm execution speed and reduces overhead from data transfers between global memory and processing units.

The DiagMatMult kernel receives pointers to three data buffers as parameters. Two of these contain input data, while the third stores the operation results. We have also included an option to apply an activation function to the multiplication results.

    __kernel void DiagMatMult(__global const float *diag,
                              __global const float *matr,
                              __global float *result,
                              int activation)
    {
        size_t row  = get_global_id(0);
        size_t col  = get_local_id(1);
        size_t var  = get_global_id(2);
        size_t rows = get_global_size(0);
        size_t cols = get_local_size(1);

Within the kernel body, the first step is to identify the current thread across all three dimensions of the task space. Then, the first thread of the workgroup stores the diagonal element in local memory, and the threads are synchronized using a barrier.

        __local float local_diag[1];
        // Only the first work-item of the group reads the diagonal element
        if(col == 0)
            local_diag[0] = diag[row + var * rows];
        barrier(CLK_LOCAL_MEM_FENCE);

Next, we calculate the offset in the arbitrary matrix buffer to the required element.

int shift = (row + var * rows) * cols + col;

It is important to note that the arbitrary matrix and the result matrix have identical dimensions. Therefore, the calculated offset is also valid for the result matrix.

We then extract the required element from the arbitrary matrix buffer and multiply it by the diagonal element previously stored in local memory. The product is passed through the designated activation function, and the output is saved in the result matrix buffer.

        float res = local_diag[0] * matr[shift];
        //---
        result[shift] = Activation(res, activation);
    }
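When debugging a kernel like this, it helps to have a CPU reference that uses the same flat-buffer indexing. The sketch below is such a reference under two assumptions: the activation step is left out (identity), and the function and parameter names are hypothetical. Each innermost loop iteration plays the role of one work-item.

```python
def diag_mat_mult(diag, matr, rows, cols, variables):
    """CPU reference for the forward kernel, without the activation step."""
    result = [0.0] * (rows * cols * variables)
    for var in range(variables):            # get_global_id(2)
        for row in range(rows):             # get_global_id(0)
            d = diag[row + var * rows]      # value shared by the whole work-group
            for col in range(cols):         # get_local_id(1)
                shift = (row + var * rows) * cols + col
                result[shift] = d * matr[shift]
    return result

# Two independent 2 x 3 matrices stored back to back in one buffer
diag = [1.0, 2.0, 3.0, 4.0]
matr = [float(v) for v in range(1, 13)]
print(diag_mat_mult(diag, matr, rows=2, cols=3, variables=2))
# [1.0, 2.0, 3.0, 8.0, 10.0, 12.0, 21.0, 24.0, 27.0, 40.0, 44.0, 48.0]
```

Comparing this output with the buffer read back from the GPU on a small input is a quick way to catch indexing mistakes.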

The kernel described above implements the forward pass for multiplying a diagonal matrix by an arbitrary matrix. However, this is only half of the process. During model training, we also need to propagate the error gradient through this operation. To achieve this, we create an additional kernel DiagMatMultGrad which involves a slightly more complex algorithm.

In this kernel, buffers for storing the corresponding error gradients are added to the parameters, while the activation function identifier is dropped: the result gradients are assumed to have already been corrected by the derivative of the relevant activation function.

    __kernel void DiagMatMultGrad(__global const float *diag,
                                  __global float *grad_diag,
                                  __global const float *matr,
                                  __global float *grad_matr,
                                  __global const float *grad_result)
    {
        size_t row  = get_global_id(0);
        size_t col  = get_local_id(1);
        size_t var  = get_global_id(2);
        size_t rows = get_global_size(0);
        size_t cols = get_local_size(1);
        size_t vars = get_global_size(2);

In the kernel body, we identify the current thread in the three-dimensional task space, using the same approach as in the forward pass kernel.

Similar to the feed-forward kernel, only one thread per workgroup retrieves the required diagonal matrix element into a local array.

        __local float local_diag[LOCAL_ARRAY_SIZE];
        // Only the first work-item of the group reads the diagonal element
        if(col == 0)
            local_diag[0] = diag[row + var * rows];
        barrier(CLK_LOCAL_MEM_FENCE);

We also synchronize threads within the workgroup.

Next, we calculate the offsets in the arbitrary and result matrix buffers.

        int shift = (row + var * rows) * cols + col;
        //---
        float grad = grad_result[shift];
        float inp  = matr[shift];

We save the required matrix elements in local variables.

At this point, preparation is complete. We can compute the error gradient with respect to the arbitrary matrix. This is done by multiplying the error gradient of the corresponding element in the result matrix by the diagonal element previously stored in the local array.

        grad_matr[shift] = IsNaNOrInf(local_diag[0] * grad, 0);
        barrier(CLK_LOCAL_MEM_FENCE);

The resulting gradient is stored in the appropriate global memory buffer, and threads are synchronized within the workgroup.

Next, we determine the error gradient for the diagonal matrix elements. Here, we must aggregate values from all elements of the corresponding row in the result buffer. This requires summing values from all threads in the workgroup.

To achieve this, we implement a parallel reduction loop on the local array elements.

        int loc = col % LOCAL_ARRAY_SIZE;
        #pragma unroll
        for(int c = 0; c < cols; c += LOCAL_ARRAY_SIZE)
        {
            if(c <= col && (c + LOCAL_ARRAY_SIZE) > col)
            {
                if(c == 0)
                    local_diag[loc] = IsNaNOrInf(grad * inp, 0);
                else
                    local_diag[loc] += IsNaNOrInf(grad * inp, 0);
            }
            barrier(CLK_LOCAL_MEM_FENCE);
        }

The maximum number of active threads per iteration is limited to the number of elements in the local array. After each iteration, we make sure to synchronize threads of the workgroup before proceeding to the next loop iteration for the next batch of active threads.

Next, we add a loop for parallel summation of the elements of the local array.

        int count = min(LOCAL_ARRAY_SIZE, (int)cols);
        int ls = count;
        #pragma unroll
        do
        {
            count = (count + 1) / 2;
            if((col + count) < ls)
            {
                local_diag[col] += local_diag[col + count];
                local_diag[col + count] = 0;
            }
            barrier(CLK_LOCAL_MEM_FENCE);
        }
        while(count > 1);

The number of active threads is reduced in each iteration. However, synchronization is maintained at every step to ensure correct computation.
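The halving pattern can be traced on the CPU with a short emulation. This is a sketch under one stated assumption: within a barrier-separated step, every work-item is taken to finish its reads before any work-item writes, which the emulation enforces by collecting all sums first. The function name `tree_sum` is hypothetical.

```python
def tree_sum(values):
    """Emulate the halving loop; each pass stands for one barrier-separated step."""
    local = list(values)
    ls = len(local)
    count = ls
    while True:
        count = (count + 1) // 2
        # Assumption: all reads of a step happen before any write of that step.
        updates = {col: local[col] + local[col + count]
                   for col in range(ls) if col + count < ls}
        for col, total in updates.items():
            local[col] = total
        for col in updates:
            local[col + count] = 0.0
        if count <= 1:
            break
    return local[0]

print(tree_sum([1.0, 2.0, 3.0, 4.0, 5.0]))  # 15.0
```

Five values are folded in three steps (count = 3, 2, 1) instead of four sequential additions, and the gap widens as the array grows.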

To store the final aggregated value in global memory, only one thread per workgroup is needed.

        if(col == 0)
            grad_diag[row + var * rows] = IsNaNOrInf(local_diag[0], 0);
    }

This approach minimizes costly global memory accesses and maximizes parallel execution across threads, reducing overall training overhead.
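The two gradients produced by the kernel follow directly from result[r][c] = diag[r] * matr[r][c]. A minimal CPU sketch for a single matrix (the third task-space dimension is omitted, and the function name is hypothetical) makes the row-wise sum explicit:

```python
def diag_mat_mult_grad(diag, matr, grad_result, rows, cols):
    """Gradients of result[r][c] = diag[r] * matr[r][c] for one matrix."""
    grad_matr = [0.0] * (rows * cols)
    grad_diag = [0.0] * rows
    for row in range(rows):
        for col in range(cols):
            shift = row * cols + col
            # Each matrix element only sees its own diagonal value
            grad_matr[shift] = diag[row] * grad_result[shift]
            # Each diagonal value touches a whole row, so its gradient is a sum
            grad_diag[row] += grad_result[shift] * matr[shift]
    return grad_diag, grad_matr

# Loss = sum of all outputs, so every upstream gradient equals 1
grad_diag, grad_matr = diag_mat_mult_grad(
    diag=[2.0, 3.0], matr=[1.0, 2.0, 3.0, 4.0],
    grad_result=[1.0, 1.0, 1.0, 1.0], rows=2, cols=2)
print(grad_diag)  # [3.0, 7.0]
print(grad_matr)  # [2.0, 2.0, 3.0, 3.0]
```

The inner accumulation into `grad_diag[row]` is the part the kernel distributes across the work-group with the local-memory reduction.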

Parallel Scan Algorithm

Continuing work on the OpenCL side, we now move to implementing the Attraos framework algorithms. The next task is the parallel scan algorithm, which efficiently updates the input data array X, taking into account the interaction coefficients A and the normalization factors H. The main idea is to compute prefix sums iteratively using binary decomposition, which reduces the number of sequential steps from O(L), typical of sequential methods, to O(log L).

Array X = {x0, x1, ..., xL-1} is updated using neighboring elements according to the following recurrence:

![]()

where θ1 and θ2 are determined iteratively during the algorithm. The vector A represents the matrix of interaction coefficients between neighboring elements, and H normalizes the computed results. These parameters define an adaptive weight distribution, allowing the algorithm to account for the structure of the data and model complex dependencies effectively.

This process is implemented in the PScan kernel. Kernel parameters include pointers to four data buffers: three for input and one for writing results.

    __kernel void PScan(__global const float *A,
                        __global const float *X,
                        __global const float *H,
                        __global float *X_out)
    {
        const size_t idx = get_local_id(0);
        const size_t dim = get_global_id(1);
        const size_t L   = get_local_size(0);
        const size_t D   = get_global_size(1);

The kernel executes in a two-dimensional task space, with threads grouped along the first dimension. Thread identification and task-space dimensions are determined within the kernel.

The number of iterations num_steps is calculated as the binary logarithm of the sequence length:

const int num_steps = (int)log2((float)L);

Using the binary logarithm ensures the minimal number of iterations for a complete pass over the data, providing optimal utilization of computational resources.

To reduce costly global memory accesses, local arrays are created to temporarily store values.

        __local float local_A[1024];
        __local float local_X[1024];
        __local float local_H[1024];

Each thread loads data from global memory into local memory, reducing latency for subsequent operations and improving overall performance.

        //--- Load data to local memory
        int offset = dim + idx * D;
        local_A[idx] = A[offset];
        local_X[idx] = X[offset];
        local_H[idx] = H[offset];
        barrier(CLK_LOCAL_MEM_FENCE);

After loading the data, we synchronize the threads within the workgroup, ensuring that the data is read and written correctly before continuing the calculations.

Next, the main stage of operations is performed. It involves the parallel summation of values. The number of active threads is halved at each iteration of the loop.

        //--- Scan
        #pragma unroll
        for(int step = 0; step < num_steps; step++)
        {
            int halfT = L >> (step + 1);
            if(idx < halfT)
            {
                int base = idx * 2;
                local_X[base + 1] += local_A[base + 1] * local_X[base];
                local_X[base + 1] *= local_H[base + 1];
                local_A[base + 1] *= local_A[base];
            }
            barrier(CLK_LOCAL_MEM_FENCE);
        }

Within the loop, input array values are summed and normalized using H, and the interaction coefficients A are updated, preserving the order of computation across iterations. The #pragma unroll directive hints to the compiler that the loop may be unrolled, which can reduce branching overhead.

After the loop iterations are completed, the resulting values are transferred from local memory to the global result buffer.

        //--- Save result
        X_out[offset] = local_X[idx];
    }

This approach significantly accelerates data processing, enabling parallel scanning with optimized use of computational resources.
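For small inputs, the loop of the PScan listing can be replayed on the CPU to cross-check the GPU output. The sketch below is a line-by-line emulation of the loop as listed for a single feature column, not an independent derivation of the scan; the function name `pscan_forward` is hypothetical. Because each work-item owns its own pair of elements, a sequential inner loop gives the same result as the parallel one.

```python
import math

def pscan_forward(a, x, h):
    """Emulate the listed PScan loop for one feature column (one work-group)."""
    a, x = list(a), list(x)               # stand-ins for local_A and local_X
    length = len(x)
    num_steps = int(math.log2(length))
    for step in range(num_steps):
        half = length >> (step + 1)       # number of active work-items
        for idx in range(half):           # each idx owns the pair (base, base + 1)
            base = idx * 2
            x[base + 1] += a[base + 1] * x[base]
            x[base + 1] *= h[base + 1]
            a[base + 1] *= a[base]
    return x

# Two elements: x_out[1] = (x[1] + a[1] * x[0]) * h[1]
print(pscan_forward(a=[1.0, 0.5], x=[2.0, 4.0], h=[1.0, 2.0]))  # [2.0, 10.0]
```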

The next step is to construct the backpropagation algorithm for the parallel scan. We create a new kernel, PScan_CalcHiddenGradient, whose primary task is to differentiate parameters via reverse scanning.

Kernel parameters include pointers to buffers for storing corresponding error gradients.

    __kernel void PScan_CalcHiddenGradient(__global const float *A,
                                           __global float *grad_A,
                                           __global const float *X,
                                           __global float *grad_X,
                                           __global const float *H,
                                           __global float *grad_H,
                                           __global const float *grad_X_out)
    {
        const size_t idx = get_local_id(0);
        const size_t dim = get_global_id(1);
        const size_t L   = get_local_size(0);
        const size_t D   = get_global_size(1);
        const int num_steps = (int)log2((float)L);

The algorithm starts by identifying threads in a task space that is similar to that used in the feed-forward pass. Since parallel scanning is performed in multiple iterations, the number of steps is calculated as the binary logarithm of the sequence length, which allows the process to be broken down into a hierarchical structure with sequential merging of values.

An important aspect of this implementation is the minimization of access to global memory, which is achieved by using local memory arrays. This significantly improves performance because local memory provides faster access to data than global memory. To store the original data and intermediate values of the error gradients, we declare corresponding buffers.

        __local float local_A[1024];
        __local float local_X[1024];
        __local float local_H[1024];
        __local float local_grad_X[1024];
        __local float local_grad_A[1024];
        __local float local_grad_H[1024];

After declaring local arrays, we transfer the source data from global buffers into them. This allows each thread to load the element that corresponds to it. Then, we synchronize threads within the local group, preventing access conflicts.

        //--- Load data to local memory
        int offset = idx * D + dim;
        local_A[idx] = A[offset];
        local_X[idx] = X[offset];
        local_H[idx] = H[offset];
        local_grad_X[idx] = grad_X_out[offset];
        local_grad_A[idx] = 0.0f;
        local_grad_H[idx] = 0.0f;
        barrier(CLK_LOCAL_MEM_FENCE);

Next comes the key stage of the algorithm - reverse scanning. This stage is implemented as an iterative process. At each iteration, the size of the array being processed is reduced.

        //--- Reverse Scan (Backward)
        #pragma unroll
        for(int step = num_steps - 1; step >= 0; step--)
        {
            int halfT = L >> (step + 1);
            if(idx < halfT)
            {
                int base = idx * 2;
                // Compute gradients
                float grad_next = local_grad_X[base + 1] * local_H[base + 1];
                local_grad_H[base + 1] = local_grad_X[base + 1] * local_X[base];
                local_grad_A[base + 1] = local_grad_X[base + 1] * local_X[base];
                local_grad_X[base] += local_A[base + 1] * grad_next;
            }
            barrier(CLK_LOCAL_MEM_FENCE);
        }

In the loop body, we first calculate the number of active threads participating in the current iteration (halfT). We then compute the error gradient grad_next by multiplying the current gradient value by the corresponding normalization coefficient H. Next, we compute the derivatives with respect to the normalization coefficients H and the interaction coefficients A, using the current value of X. To propagate the error backward correctly, the gradient with respect to X is adjusted by the interaction coefficient. As before, the threads of the workgroup are synchronized at each iteration of the loop.

At the end of kernel operations, the updated error gradients must be transferred back into global memory.

        //--- Save gradients
        grad_A[offset] = local_grad_A[idx];
        grad_X[offset] = local_grad_X[idx];
        grad_H[offset] = local_grad_H[idx];
    }

This data processing method provides high computational efficiency due to the use of local memory and a minimal number of global memory accesses.

This concludes our work on the OpenCL-side implementation. The full OpenCL program code can be found in the attachment.

The next stage of our work is the construction of algorithms on the main program side. However, since we are nearing the article format limit, we will take a short break and continue building the Attraos framework in the next part.

Conclusion

In this article, we explored the Attraos framework, which proposes a chaos-theory-based time series forecasting algorithm. Time series are interpreted as projections of multidimensional chaotic dynamical systems, enabling identification of hidden patterns inaccessible to traditional statistical or regression models. Attraos implements phase space reconstruction and dynamic memory mechanisms, allowing detection of stable nonlinear dependencies in market data and improving forecast accuracy.

Unlike traditional linear models, which do not account for complex multidimensional interactions between variables, Attraos operates on the internal structure of chaotic attractors, ensuring high forecasting accuracy and adaptability to changing market conditions. This approach allows detection of deterministic components in processes that initially appear random, which is particularly important for high-frequency data analysis and short-term financial forecasting.

In the practical section, we began implementing our vision of the proposed methods using MQL5 with OpenCL technology, significantly accelerating computations through parallel data processing on GPUs. This enables application of the Attraos method in real-world trading systems and automated analytical platforms, providing high-speed processing of large datasets and rapid adaptation to evolving market conditions.

In the next article, we will continue the implementation of these approaches, bringing it to completion with validation on real historical data.

List of references

- Attractor Memory for Long-Term Time Series Forecasting: A Chaos Perspective

- Other articles from this series

Programs Used in the Article

| # | Name | Type | Description |
|---|---|---|---|
| 1 | Research.mq5 | Expert Advisor | Expert Advisor for collecting samples |
| 2 | ResearchRealORL.mq5 | Expert Advisor | Expert Advisor for collecting samples using the Real-ORL method |
| 3 | Study.mq5 | Expert Advisor | Model training Expert Advisor |
| 4 | Test.mq5 | Expert Advisor | Model testing Expert Advisor |
| 5 | Trajectory.mqh | Class library | System state and model architecture description structure |
| 6 | NeuroNet.mqh | Class library | A library of classes for creating a neural network |
| 7 | NeuroNet.cl | Code library | OpenCL program code |

Translated from Russian by MetaQuotes Ltd.

Original article: https://www.mql5.com/ru/articles/17351

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.

Author: Dmitriy Gizlyk