Implementing the Truncated Newton Conjugate-Gradient Algorithm in MQL5

Introduction

When minimizing real-valued functions, MQL5 developers often rely on the ALGLIB library. Its minLBFGS module offers a robust implementation of the limited-memory Broyden-Fletcher-Goldfarb-Shanno (LBFGS) method. Unfortunately, the bound-constrained version of this method is missing from the MQL5 port of ALGLIB. This omission often forces users to turn to other nonlinear optimizers within the library, which can be overkill for simple box-constrained tasks. We need a practical solver for box-constrained problems that can be integrated into a project as easily as ALGLIB's minLBFGS and that supports both analytical gradients and stable numerical approximations near the boundaries.

In this article, we present an implementation of the Truncated Newton Conjugate-Gradient (TNC) algorithm. In addition to covering the underlying theory, we test its ability to find the global minimum of the Rosenbrock function and demonstrate a practical application by using it to fit a logistic regression model as an alternative to the LBFGS optimizer.

What is TNC and how does it differ from LBFGS?

The Truncated Newton Conjugate-Gradient (TNC) method is an optimization algorithm that uses second-order (curvature) information to approximate the behavior of Newton's Method. In the context of optimization, Newton's Method is a procedure used to find a function's minimum. It starts from an initial guess, computes the function's gradient and Hessian matrix at that point, and uses them to update the current estimate of the solution. This process is repeated until convergence.
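In symbols, one Newton iteration can be written as below. The notation is ours rather than the library's: $x_k$ is the current estimate, $g_k = \nabla f(x_k)$ the gradient, $H_k = \nabla^2 f(x_k)$ the Hessian, and $p_k$ the resulting step.

```latex
H_k \, p_k = -\,g_k, \qquad x_{k+1} = x_k + p_k
```

Practical implementations usually scale $p_k$ with a line search instead of taking the full step.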

The gradient of a function (the first derivative) provides information about the slope, indicating the direction of the steepest ascent. The Hessian (the second derivative) provides information about the shape, specifically the curvature of the function at a given point. At a point where the gradient is zero, positive curvature in all directions indicates a local minimum (a bottom). If the curvature is negative in all directions, the point is a local maximum (a summit). If the curvature is positive in some directions and negative in others, the point is a saddle point.
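The curvature test can be made concrete for two variables, where the eigenvalues of a symmetric Hessian have a closed form. The script below is a minimal sketch; ClassifyStationaryPoint is a hypothetical helper written for this illustration and is not part of tnc.mqh.

```mql5
//--- Sketch only: classify a stationary point from a symmetric 2x2 Hessian
//--- [[hxx, hxy], [hxy, hyy]]. The helper name is hypothetical.
string ClassifyStationaryPoint(const double hxx, const double hxy, const double hyy)
  {
   // Eigenvalues of a symmetric 2x2 matrix: mean +/- radius
   double mean   = 0.5*(hxx+hyy);
   double diff   = 0.5*(hxx-hyy);
   double radius = MathSqrt(diff*diff + hxy*hxy);
   double lambda_min = mean - radius;
   double lambda_max = mean + radius;
   if(lambda_min > 0.0)
      return "local minimum";      // positive curvature in every direction
   if(lambda_max < 0.0)
      return "local maximum";      // negative curvature in every direction
   if(lambda_min < 0.0 && lambda_max > 0.0)
      return "saddle point";       // mixed curvature
   return "inconclusive (zero curvature in some direction)";
  }
//+------------------------------------------------------------------+
void OnStart()
  {
   // Hessian of the Rosenbrock function at (1,1): [[802,-400],[-400,200]]
   Print(ClassifyStationaryPoint(802.0,-400.0,200.0));
   // Hessian of f(x,y) = x^2 - y^2 at the origin: [[2,0],[0,-2]]
   Print(ClassifyStationaryPoint(2.0,0.0,-2.0));
  }
```

The first call reports a local minimum and the second a saddle point. In higher dimensions the same test needs a general eigenvalue routine or a factorization.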

In a pure Newton’s Method, the optimizer requires the calculation of a Hessian matrix, which contains the second-order derivatives describing the function's curvature. For problems with a high number of variables, calculating and storing this matrix is computationally expensive. TNC addresses this by truncating the Newton process. Instead of solving the Newton equation exactly, it uses an inner loop of the Conjugate Gradient (CG) algorithm to find an approximate search direction. This inner loop only requires Hessian-vector products, which can be efficiently estimated using finite differences of the gradient. This allows the algorithm to capture curvature information without ever explicitly forming a massive Hessian matrix.
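The idea can be sketched in a few dozen lines. The script below is an illustration under stated assumptions, not the code found in tnc.mqh: RosenGradient, Dot, HessVec and TruncatedNewtonDirection are hypothetical helpers, the fixed step h is a simplification (a production solver would scale it), and bounds, preconditioning and the line search are left out.

```mql5
//--- Sketch only: truncated CG with finite-difference Hessian-vector products.
//--- All helper names are hypothetical and not taken from tnc.mqh.

//--- Analytical gradient of the 2-D Rosenbrock function
vector RosenGradient(const vector &x)
  {
   vector g = vector::Zeros(2);
   g[0] = -2.0*(1.0-x[0]) - 400.0*x[0]*(x[1]-x[0]*x[0]);
   g[1] = 200.0*(x[1]-x[0]*x[0]);
   return g;
  }
//--- Plain dot product
double Dot(const vector &a, const vector &b)
  {
   double s = 0.0;
   for(ulong i=0; i<a.Size(); ++i)
      s += a[i]*b[i];
   return s;
  }
//--- Forward-difference estimate of H(x)*v from two gradient evaluations
vector HessVec(const vector &x, const vector &v, const double h=1.0e-6)
  {
   vector shifted = x + v*h;
   return (RosenGradient(shifted) - RosenGradient(x))/h;
  }
//--- Approximately solve H p = -g with at most max_cg_iters CG iterations
vector TruncatedNewtonDirection(const vector &x, const int max_cg_iters, const double tol)
  {
   vector g = RosenGradient(x);
   vector p = vector::Zeros(x.Size());   // current approximation of the step
   vector r = g*(-1.0);                  // residual -g - H p, with p = 0
   vector d = r;                         // CG search direction
   double rr = Dot(r,r);
   for(int k=0; k<max_cg_iters; ++k)
     {
      if(MathSqrt(rr) <= tol)
         break;                          // inner problem solved accurately enough
      vector Hd = HessVec(x,d);
      double curvature = Dot(d,Hd);
      if(curvature <= 0.0)               // non-positive curvature: stop the inner loop
        {
         if(k==0)
            p = r;                       // fall back to the steepest-descent direction
         break;
        }
      double alpha = rr/curvature;
      p = p + d*alpha;
      r = r - Hd*alpha;
      double rr_new = Dot(r,r);
      d = r + d*(rr_new/rr);
      rr = rr_new;
     }
   return p;
  }
//+------------------------------------------------------------------+
void OnStart()
  {
   vector x = {-1.2, 1.0};
   vector g = RosenGradient(x);
   vector p = TruncatedNewtonDirection(x, 2, 1.0e-10);
   Print("gradient: ", g);
   Print("approximate Newton direction: ", p);
   Print("p.g (negative for a descent direction): ", Dot(p,g));
  }
```

Capping the number of inner iterations is what "truncated" refers to: the direction only has to be good enough for the outer iteration to make progress, so the Hessian is never formed and each inner iteration costs one extra gradient evaluation.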

LBFGS, by contrast, is a quasi-Newton method and operates on a fundamentally different philosophy. It maintains a running history of gradients and positions from the last several iterations to build an implicit approximation of the inverse Hessian. Because it reuses information from previous steps, LBFGS is often very fast and computationally cheap per iteration, which has made it a common default for smooth, well-behaved functions in machine learning.

The TNC API Overview

The code that implements the TNC optimizer can be integrated into any MQL5 program by including the tnc.mqh header file. Using the TNC API is a four-step process:

- We first set up the objective function, its Jacobian and any box constraints.

- Second, we configure the optimizer itself.

- In the third step we run the optimizer.

- The last step is the retrieval of the results.

The API consists of two classes. The CFunctor class handles all aspects of the objective function, and CTruncNewtonCG represents the TNC optimizer.

Specification of the objective function is done by defining a class derived from the CFunctor base. The objective function is meant to be defined by overriding CFunctor's orig_fun method. If the gradient or Jacobian is known it can be explicitly set by overriding the grad_fun method.

class CScalarFunc:public CFunctor
  {
public:
                     CScalarFunc(void)
     {
     }
                    ~CScalarFunc(void)
     {
     }
   virtual double    orig_fun(vector& x)
     {
      return np::rosen(x);
     }
   virtual vector    grad_fun(vector& x)
     {
      return np::rosen_gradient(x);
     }
  };

The CFunctor-derived instance then provides the interface through which users can configure various aspects of the objective function and its derivative. This includes the specification of box constraints via the setBounds method, which expects a matrix in which each row represents a dimension and the two columns hold the lower and upper limits, in that order. If the grad_fun method has been overridden, it should be enabled by calling setGradOption with the GRAD_POINT_CALLABLE option. Otherwise the gradient is calculated by finite differencing. The options of the ENUM_DIFF_POINTS enumeration let users select the finite-differencing method that is applied when the gradient function is either not set or not enabled. Further details of these options are provided in later sections of this article.

CScalarFunc sf;
matrix bd = {{-2, 2}, {-1, 3}};
sf.setBounds(bd);
sf.setGradOption(GRAD_POINT_CALLABLE);
if(!sf.initialize(init_params))
   return;

Setup of the objective function is completed by invoking the initialize method, which takes a vector containing the initial guess of the solution and returns true on success. If the method returns false, something has not been set up correctly and the optimization process should not be continued.
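This section does not list what initialize checks internally. As a hedged illustration of the bounds layout, the sketch below validates a bounds matrix and projects a starting point into the box before it is handed to the functor. ClipToBounds is a hypothetical helper, not part of the CFunctor interface.

```mql5
//--- Sketch only: ClipToBounds is a hypothetical helper, not part of CFunctor.
//--- Each row of 'bounds' is {lower, upper} for one dimension.
bool ClipToBounds(const matrix &bounds, vector &x)
  {
   if(bounds.Cols()!=2 || bounds.Rows()!=x.Size())
      return false;                       // one {lower, upper} row is expected per dimension
   for(ulong i=0; i<bounds.Rows(); ++i)
     {
      double lower = bounds[i][0];
      double upper = bounds[i][1];
      if(lower > upper)
         return false;                    // infeasible box
      x[i] = MathMin(MathMax(x[i],lower),upper);
     }
   return true;
  }
//+------------------------------------------------------------------+
void OnStart()
  {
   matrix bd = {{-2, 2}, {-1, 3}};
   vector x0 = {-2.5, 1.0};               // first coordinate lies below its lower bound
   if(ClipToBounds(bd,x0))
      Print("feasible starting point: ", x0);
   else
      Print("bounds are inconsistent with the starting point");
  }
```

Here the first coordinate is moved from -2.5 to the lower bound -2, while the second is left unchanged.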

tnc::CTruncNewtonCG tnc_minim;
tnc_minim.SetLoglevel(tnc::TNC_MSG_ALL);
tnc_minim.SetMaxCGit(in_maxCGit);
tnc_minim.SetMaxFunCalls(in_maxnfeval);
tnc_minim.SetEta(in_eta);
tnc_minim.SetStepMax(in_stepmx);
tnc_minim.SetAccuracy(in_accuracy);
tnc_minim.SetFmin(in_fmin);
tnc_minim.SetFtol(in_ftol);
tnc_minim.SetXtol(in_xtol);
tnc_minim.SetPGtol(in_pgtol);
tnc_minim.SetRescaleFactor(in_rescale);

With the objective function set up, the TNC optimizer can be configured as shown above. The methods used to tune its parameters are summarized below.

- The SetLoglevel method accepts an ENUM_TNC_MESSAGE enumeration to control terminal output, ranging from a single line per iteration to detailed scaling info or just the final termination reason.

- The SetMaxCGit method sets the limit for the number of internal Conjugate Gradient iterations to ensure the inner loop remains efficient.

- The SetMaxFunCalls method defines a hard cap on the total number of objective function evaluations.

- The SetFtol and SetXtol methods set the convergence thresholds for changes in the function value and the position vector, respectively. The ftol parameter (function tolerance) monitors the relative change in the objective value between successive iterations. If the decrease in the function value falls below this threshold, the optimizer assumes it has reached a plateau where further effort will yield negligible improvement. Setting ftol too high results in premature convergence, where the algorithm stops before reaching the true bottom, while setting it too low can lead to unnecessary computations that only fight numerical noise. The xtol parameter (step tolerance) focuses on movement in the search space rather than the function value. It measures the Euclidean distance between the current and previous positions; if the optimizer is making only microscopic adjustments to the variables, it stops. This is particularly useful in flat regions of a function, where the function value barely changes and the algorithm might otherwise wander for many iterations without improving the solution.

- The SetPGtol method defines the threshold for the projected gradient magnitude: the algorithm stops once the projected gradient falls below this level. The pgtol parameter (projected gradient tolerance) is perhaps the most mathematically rigorous stopping condition. It measures the steepness of the local slope after accounting for the constraints; a projected gradient of zero indicates a stationary point, which is a necessary condition for a local minimum. A very small pgtol forces the algorithm to pinpoint the exact bottom of a valley, whereas a larger value allows the solver to exit once it is close enough to the optimal region.

- The SetAccuracy method specifies the machine precision or estimated function error to guide gradient stepping.

- The SetEta method accepts a value between 0 and 1 for the line search, balancing function decrease against step efficiency.
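The three tolerance-based stopping tests described above can be sketched as follows. This is an illustration of the ideas only: the helper names are hypothetical, and the exact scaling and ordering of the tests inside tnc.mqh are not shown in this section and may differ.

```mql5
//--- Sketch only: hypothetical helpers illustrating the pgtol, ftol and xtol tests.
double Norm2(const vector &v)
  {
   double s = 0.0;
   for(ulong i=0; i<v.Size(); ++i)
      s += v[i]*v[i];
   return MathSqrt(s);
  }
//--- Zero the gradient components whose descent direction (-g) leaves the box
vector ProjectedGradient(const vector &x, const vector &g, const matrix &bounds)
  {
   vector pg = g;
   for(ulong i=0; i<x.Size(); ++i)
     {
      if(x[i] <= bounds[i][0] && g[i] > 0.0)
         pg[i] = 0.0;                    // at the lower bound, -g points further down
      if(x[i] >= bounds[i][1] && g[i] < 0.0)
         pg[i] = 0.0;                    // at the upper bound, -g points further up
     }
   return pg;
  }
//--- Returns the name of the first satisfied test, or "" to continue iterating
string StopReason(const double f_prev, const double f_curr,
                  const vector &x_prev, const vector &x_curr,
                  const vector &pg,
                  const double ftol, const double xtol, const double pgtol)
  {
   if(Norm2(pg) <= pgtol)
      return "pgtol";
   if(MathAbs(f_prev-f_curr) <= ftol*MathMax(1.0,MathAbs(f_curr)))
      return "ftol";
   vector step = x_curr - x_prev;
   if(Norm2(step) <= xtol)
      return "xtol";
   return "";
  }
//+------------------------------------------------------------------+
void OnStart()
  {
   matrix bd = {{-2, 2}, {-1, 3}};
   vector x_prev = {1.9, 0.5};
   vector x_curr = {2.0, 0.5};            // first coordinate sits on its upper bound
   vector g      = {-3.0, 0.0};           // descent would push the first coordinate past the bound
   vector pg     = ProjectedGradient(x_curr,g,bd);
   Print("projected gradient: ", pg);
   Print("stop reason: ", StopReason(1.0,0.5,x_prev,x_curr,pg,1.0e-8,1.0e-8,1.0e-8));
  }
```

In this example the raw gradient is far from zero, yet the projected gradient vanishes because the only descent direction is blocked by the upper bound, so the pgtol test fires.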

Running the minimizer is done by invoking CTruncNewtonCG's Minimize method.

int return_code = tnc_minim.Minimize(sf);

Minimize requires an instance of a CFunctor-derived object as its sole argument. The results of the optimization process can then be retrieved through the following CTruncNewtonCG methods:

- The Solution method returns the variables of the solution found by the optimizer.

- The ObjectiveResult method returns the objective function's value at the solution.

- The ObjectiveGradient method returns the gradient at the solution.

- The NumFeval method returns the number of times the objective function was evaluated, as an integer.

- The NumIters method returns the number of iterations of the optimization process, as an integer.

In the next section we put our implementation to the test.

Constrained Optimization Test

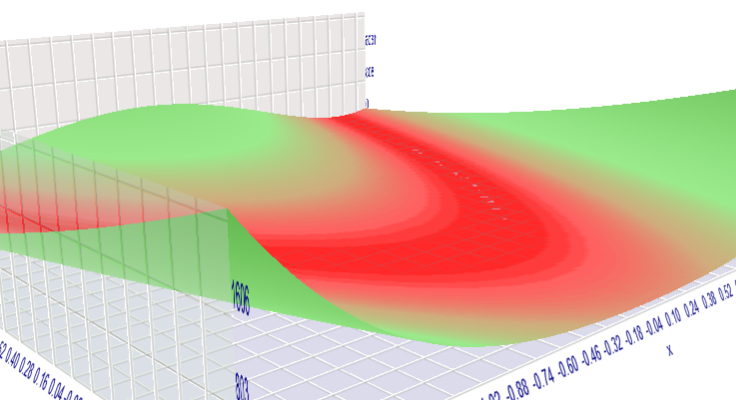

In standard optimization literature, the Rosenbrock function is a common benchmark for testing the performance of optimization algorithms. It is considered a challenging minimization problem because it features a flat, narrow valley containing the global minimum, which can make it difficult for many algorithms to converge efficiently. Functions with similar geometries are prevalent in fields such as economics and machine learning.
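For reference, the two-variable form of the function used in this article, together with its gradient, can be written as follows. The expression matches the one evaluated in RosenBrock.mq5.

```latex
f(x, y) = (1 - x)^2 + 100\,(y - x^2)^2

\frac{\partial f}{\partial x} = -2\,(1 - x) - 400\,x\,(y - x^2), \qquad
\frac{\partial f}{\partial y} = 200\,(y - x^2)
```

Both partial derivatives vanish at $(x, y) = (1, 1)$, where $f = 0$. At the commonly used starting point $(-1.2, 1)$ the function value is $4.84 + 19.36 = 24.2$.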

We can visualize this function within the MetaTrader 5 strategy tester using its math function evaluation feature. The Expert Advisor RosenBrock.mq5 implements the logic for this evaluation.

//+------------------------------------------------------------------+
//|                                                   RosenBrock.mq5 |
//|                                  Copyright 2025, MetaQuotes Ltd. |
//|                                             https://www.mql5.com |
//+------------------------------------------------------------------+
#property copyright "Copyright 2025, MetaQuotes Ltd."
#property link      "https://www.mql5.com"
#property version   "1.00"
//--- input parameters
input double x=-1.2;// start=-2, step=0.01, stop=2
input double y=1.0;// start=-1, step=0.01, stop=3
//+------------------------------------------------------------------+
//| Tester function                                                  |
//+------------------------------------------------------------------+
double OnTester()
  {
//---
   double ret= pow(1.-x,2.0)+100.*pow(y-pow(x,2.),2.0);
//---
   return(ret);
  }
//+------------------------------------------------------------------+

Running the EA in the tester reveals the global minimum at [1,1].

A three-dimensional visualization highlights the characteristic "narrow valley" that hides the minimum—a property that often causes optimizers to oscillate or stall.

To see how closely the TNC minimizer can approach the true global minimum, we use the script TestTNC.mq5.

//+------------------------------------------------------------------+
//|                                                      TestTNC.mq5 |
//|                                  Copyright 2025, MetaQuotes Ltd. |
//|                                             https://www.mql5.com |
//+------------------------------------------------------------------+
#property copyright "Copyright 2025, MetaQuotes Ltd."
#property link      "https://www.mql5.com"
#property version   "1.00"
#property script_show_inputs
#include<tnc/tnc.mqh>
#include<np.mqh>
//---
input double in_eta = -1.0;
input double in_stepmx = 0.0;
input double in_accuracy = 0.0;
input double in_fmin = 0.0;
input double in_ftol = -1.0;
input double in_xtol = -1.0;
input double in_pgtol = -1.0;
input double in_rescale = -1.0;
input int in_maxCGit = -1;
input int in_maxnfeval = 100;
//+------------------------------------------------------------------+
//| Script program start function                                    |
//+------------------------------------------------------------------+
void OnStart()
  {
//---
   vector init_params = {-1.2, 1.0};//vector::Zeros(2);
   CScalarFunc sf;
   matrix bd = {{-2, 2}, {-1, 3}};
   sf.setBounds(bd);
   sf.setGradOption(GRAD_POINT_CALLABLE);
   if(!sf.initialize(init_params))
      return;
   tnc::CTruncNewtonCG tnc_minim;
   tnc_minim.SetLoglevel(tnc::TNC_MSG_ALL);
   tnc_minim.SetMaxCGit(in_maxCGit);
   tnc_minim.SetMaxFunCalls(in_maxnfeval);
   tnc_minim.SetEta(in_eta);
   tnc_minim.SetStepMax(in_stepmx);
   tnc_minim.SetAccuracy(in_accuracy);
   tnc_minim.SetFmin(in_fmin);
   tnc_minim.SetFtol(in_ftol);
   tnc_minim.SetXtol(in_xtol);
   tnc_minim.SetPGtol(in_pgtol);
   tnc_minim.SetRescaleFactor(in_rescale);
   int return_code = tnc_minim.Minimize(sf);
//--- tnc_rc_string starts at the entry for TNC_MINRC (-3), so the code is shifted before indexing
   Print(" Optimization return code ", tnc::tnc_rc_string[return_code - tnc::TNC_MINRC]);
   Print(" optimization solution ", tnc_minim.Solution());
  }
//+------------------------------------------------------------------+
class CScalarFunc:public CFunctor
  {
public:
                     CScalarFunc(void)
     {
     }
                    ~CScalarFunc(void)
     {
     }
   virtual double    orig_fun(vector& x)
     {
      return np::rosen(x);
     }
   virtual vector    grad_fun(vector& x)
     {
      return np::rosen_gradient(x);
     }
  };
//+------------------------------------------------------------------+

Upon running the script, we receive the following output.

QO 0 19:06:52.209 TestTNC (GBPUSD,D1) NIT NF F GTG
GJ 0 19:06:52.209 TestTNC (GBPUSD,D1) 0 1 2.420000000000000E+01 5.42273600E+04
MQ 0 19:06:52.209 TestTNC (GBPUSD,D1) tnc: fscale = 0.00107357
DE 0 19:06:52.209 TestTNC (GBPUSD,D1) 1 3 4.567781791360708E+00 9.57591179E+02
RH 0 19:06:52.209 TestTNC (GBPUSD,D1) 2 5 4.127793942693432E+00 3.78238779E+00
LO 0 19:06:52.209 TestTNC (GBPUSD,D1) tnc: fscale = 0.128546
GK 0 19:06:52.209 TestTNC (GBPUSD,D1) 3 7 4.116988513105550E+00 1.81606916E+01
MO 0 19:06:52.209 TestTNC (GBPUSD,D1) 4 16 3.317053801700717E+00 2.94434047E+02
MS 0 19:06:52.209 TestTNC (GBPUSD,D1) 5 20 3.172067249923803E+00 5.34629683E+02
HD 0 19:06:52.209 TestTNC (GBPUSD,D1) 6 26 1.770939721007689E+00 6.64565256E+01
LI 0 19:06:52.209 TestTNC (GBPUSD,D1) 7 28 1.651272391609239E+00 4.99232721E+00
GR 0 19:06:52.210 TestTNC (GBPUSD,D1) 8 38 1.269069338899967E+00 4.60590202E+01
JF 0 19:06:52.210 TestTNC (GBPUSD,D1) 9 42 1.110240253054296E+00 7.40799184E+01
MJ 0 19:06:52.210 TestTNC (GBPUSD,D1) 10 44 7.993902318805092E-01 3.13860496E+00
LM 0 19:06:52.210 TestTNC (GBPUSD,D1) 11 48 6.601238685636209E-01 3.15085702E+01
EP 0 19:06:52.210 TestTNC (GBPUSD,D1) 12 53 3.391820074593478E-01 5.23994236E+01
DD 0 19:06:52.210 TestTNC (GBPUSD,D1) 13 55 2.644309665366499E-01 7.39869479E-01
NO 0 19:06:52.210 TestTNC (GBPUSD,D1) 14 61 1.675328075835125E-01 1.16689434E+01
ER 0 19:06:52.210 TestTNC (GBPUSD,D1) 15 65 1.224744553458436E-01 3.21610219E+01
LE 0 19:06:52.210 TestTNC (GBPUSD,D1) 16 67 6.925326451315299E-02 6.87199782E-01
JI 0 19:06:52.210 TestTNC (GBPUSD,D1) 17 73 2.930633981879897E-02 1.06326643E+01
GL 0 19:06:52.210 TestTNC (GBPUSD,D1) 18 77 1.341804114201537E-03 2.64590552E+00
DG 0 19:06:52.210 TestTNC (GBPUSD,D1) 19 79 2.447262820598820E-04 6.40221664E-04
DE 0 19:06:52.210 TestTNC (GBPUSD,D1) tnc: fscale = 9.88041
FR 0 19:06:52.210 TestTNC (GBPUSD,D1) 20 81 2.442825206410282E-04 2.70915012E-04
EE 0 19:06:52.210 TestTNC (GBPUSD,D1) 21 83 5.876599419779381E-06 1.17530118E-02
FI 0 19:06:52.210 TestTNC (GBPUSD,D1) 22 85 3.385708330669465E-11 1.17585414E-10
QS 0 19:06:52.210 TestTNC (GBPUSD,D1) tnc: fscale = 23054.9
NG 0 19:06:52.210 TestTNC (GBPUSD,D1) tnc: |fn-fn-1] = 7.61917e-14 -> convergence
FN 0 19:06:52.210 TestTNC (GBPUSD,D1) 23 87 3.378089160219990E-11 3.50223471E-11
DN 0 19:06:52.210 TestTNC (GBPUSD,D1) tnc: Converged (|f_n-f_(n-1)| ~= 0)
MK 0 19:06:52.210 TestTNC (GBPUSD,D1) Optimization return code 1
LS 0 19:06:52.210 TestTNC (GBPUSD,D1) optimization solution [0.9999941953936243,0.9999883612512912]

The results indicate that the optimizer manages to get close to the global minimum, though the final values may not be exactly equal to 1.0 due to the specified tolerances. To demonstrate a more practical use case, we will now swap out the LBFGS minimizer in a logistic regression implementation for our TNC solver.

TNC-Based Logistic Regression Implementation

The Clogit class for logistic regression previously relied on ALGLIB's LBFGS implementation as its sole optimization engine. In this section, we augment the class with the TNC optimizer to provide a robust alternative for building logistic models. This is achieved by modifying the existing Fit method to allow the optimizer to be selected.

//+------------------------------------------------------------------+
//| fit a model                                                      |
//+------------------------------------------------------------------+
bool Clogit::Fit(matrix &predictors, vector &targets, ENUM_MINIM_METHOD minimizer=MINIM_LBFGS,
                 tnc::ENUM_TNC_MESSAGE in_display = tnc::TNC_MSG_NONE,
                 double in_eta = -1.0,
                 double in_stepmx = 0.0,
                 double in_accuracy = 0.0,
                 double in_fmin = 0.0,
                 double in_ftol = -1.0,
                 double in_xtol = -1.0,
                 double in_pgtol = -1.0,
                 double in_rescale = -1.0,
                 int in_maxCGit = -1,
                 int in_maxnfeval = 100)
  {
   switch(minimizer)
     {
      case MINIM_LBFGS:
         return fit_lbfgs(predictors,targets);
      case MINIM_TNC:
         return fit_tnc(predictors,targets,in_display,in_eta,in_stepmx,in_accuracy,in_fmin,in_ftol,in_xtol,in_pgtol,in_rescale,in_maxCGit,in_maxnfeval);
     }
   return false;
  }

To enable this, the code in logistic.mqh is updated by defining the ENUM_MINIM_METHOD enumeration, which encapsulates the available optimization algorithms.

//+------------------------------------------------------------------+
//|minimizer used                                                    |
//+------------------------------------------------------------------+
enum ENUM_MINIM_METHOD
  {
   MINIM_LBFGS=0,//LBFGS
   MINIM_TNC//TNC
  };

Additionally, the CLogitFunctor class, derived from CFunctor, has been added to the header file. Two private member functions, fit_lbfgs and fit_tnc, handle the execution logic for their respective optimizers.

bool fit_lbfgs(matrix &predictors, vector &targets);
bool fit_tnc(matrix &predictors, vector &targets,
             tnc::ENUM_TNC_MESSAGE in_display = tnc::TNC_MSG_NONE,
             double in_eta = -1.0,
             double in_stepmx = 0.0,
             double in_accuracy = 0.0,
             double in_fmin = 0.0,
             double in_ftol = -1.0,
             double in_xtol = -1.0,
             double in_pgtol = -1.0,
             double in_rescale = -1.0,
             int in_maxCGit = -1,
             int in_maxnfeval = 100);
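The body of CLogitFunctor is not shown in this section. As a rough sketch of what an objective and gradient for binary logistic regression typically look like, the script below implements the mean negative log-likelihood and its gradient. Sigmoid, LogLoss and LogLossGradient are hypothetical names, and the parameter layout (weights followed by the bias) is an assumption made for this example.

```mql5
//--- Sketch only: binary logistic loss and gradient. All names are hypothetical
//--- and the layout params = [w_0 ... w_(d-1), bias] is an assumption.
double Sigmoid(const double z)
  {
   return 1.0/(1.0+MathExp(-z));
  }
//--- Linear score for one sample
double Score(const vector &params, const matrix &X, const ulong row)
  {
   ulong d = X.Cols();
   double z = params[d];                          // bias term
   for(ulong j=0; j<d; ++j)
      z += X[row][j]*params[j];
   return z;
  }
//--- Mean negative log-likelihood
double LogLoss(const vector &params, const matrix &X, const vector &y)
  {
   ulong n = X.Rows();
   double loss = 0.0;
   for(ulong i=0; i<n; ++i)
     {
      double p = Sigmoid(Score(params,X,i));
      p = MathMin(MathMax(p,1.0e-12),1.0-1.0e-12); // keep the logarithms finite
      loss -= y[i]*MathLog(p) + (1.0-y[i])*MathLog(1.0-p);
     }
   return loss/double(n);
  }
//--- Gradient of the mean negative log-likelihood
vector LogLossGradient(const vector &params, const matrix &X, const vector &y)
  {
   ulong n = X.Rows();
   ulong d = X.Cols();
   vector g = vector::Zeros(d+1);
   for(ulong i=0; i<n; ++i)
     {
      double err = Sigmoid(Score(params,X,i)) - y[i];
      for(ulong j=0; j<d; ++j)
         g[j] += err*X[i][j];
      g[d] += err;                                // derivative with respect to the bias
     }
   return g/double(n);
  }
//+------------------------------------------------------------------+
void OnStart()
  {
   matrix X = {{0.5, 1.0}, {1.5, -0.5}, {-1.0, 2.0}, {2.0, 0.0}};
   vector y = {0, 1, 0, 1};
   vector params = vector::Zeros(3);
   Print("loss at zero parameters (equals ln 2): ", LogLoss(params,X,y));
   Print("gradient at zero parameters: ", LogLossGradient(params,X,y));
  }
```

In a CFunctor-derived class, functions of this kind would be the natural candidates for orig_fun and grad_fun, with the training data held as class members.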

The script LogisticRegression.mq5 tests the Clogit class using the classic Iris dataset. The program fits a portion of the data using both optimizers, and the resulting model parameters are output to the terminal's journal.

void OnStart()
  {
//---
   CHighQualityRandStateShell rngstate;
   CHighQualityRand::HQRndSeed(Random_Seed,Random_Seed+Random_Seed,rngstate.GetInnerObj());
//---
   Print(iris_data);
   string lines[],cells[];
   int ncells,nlines;
//---
   nlines = StringSplit(iris_data,StringGetCharacter("\n",0),lines);
   matrix data = matrix::Zeros(0,0);
   for(int i = 1; i<nlines-2; ++i)
     {
      ncells = StringSplit(lines[i],StringGetCharacter(",",0),cells);
      if(!data.Rows())
         data.Resize(nlines-2,ncells-1);
      for(int k = 1; k<(ncells); ++k)
         data[i-1,k-1] = StringToDouble(cells[k]);
     }
//---
   COneHotEncoder enc;
   ulong colum[1] = {4};
   if(!enc.fit(data,colum))
     {
      Print(" failed to encode data ");
      return;
     }
//---
   data = enc.transform(data);
//---
   long rindices[],trainset[],testset[];
   np::arange(rindices,int(data.Rows()));
//---
   np::shuffleArray(rindices,GetPointer(rngstate));
   ArrayCopy(trainset,rindices,0,0,int(ceil(Tra_Test_Split*rindices.Size())));
   ArraySort(trainset);
//---
   CSortedSet<long> test_set(rindices);
//---
   test_set.ExceptWith(trainset);
//---
   test_set.CopyTo(testset);
//---
   matrix testdata = np::selectMatrixRows(data,testset);
   matrix test_predictors = np::sliceMatrixCols(testdata,0,4);
   vector test_targets = testdata.Col(4);
   matrix traindata = np::selectMatrixRows(data,trainset);
   matrix tra_preditors = np::sliceMatrixCols(traindata,0,4);
   vector tra_targets = traindata.Col(4);
//---
   logistic::Clogit logit;
   if(!logit.Fit(tra_preditors,tra_targets))
     {
      Print(" failed to fit data with lbfgs");
      return;
     }
//---
   Print(" LBFGS logit results ");
   Print(" coefs ", logit.Get_Coefs());
   Print(" bias ", logit.Get_Bias());
//---
   if(!logit.Fit(tra_preditors,tra_targets,logistic::MINIM_TNC,0,_eta_,_stepmx_,_accuracy_,_fmin_,_ftol_,_xtol_,_pgtol_,_rescale_,_maxCGit_,_maxnfeval_))
     {
      Print(" failed to fit data with tnc ");
      return;
     }
//---
   Print(" TNC logit results ");
   Print(" coefs ", logit.Get_Coefs());
   Print(" bias ", logit.Get_Bias());
  }

The output below shows that both optimizers converge to similar parameters, confirming that TNC can serve as an alternative optimizer for logistic regression.

CG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) index,sl,sw,pl,pw,target
GS 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 0,5.1,3.5,1.4,0.2,0
HK 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 1,4.9,3.0,1.4,0.2,0
LR 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 2,4.7,3.2,1.3,0.2,0
CM 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 3,4.6,3.1,1.5,0.2,0
ED 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 4,5.0,3.6,1.4,0.2,0
DL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 5,5.4,3.9,1.7,0.4,0
OG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 6,4.6,3.4,1.4,0.3,0
IN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 7,5.0,3.4,1.5,0.2,0
HI 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 8,4.4,2.9,1.4,0.2,0
QQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 9,4.9,3.1,1.5,0.1,0
NK 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 10,5.4,3.7,1.5,0.2,0
HP 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 11,4.8,3.4,1.6,0.2,0
PI 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 12,4.8,3.0,1.4,0.1,0
CF 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 13,4.3,3.0,1.1,0.1,0
OO 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 14,5.8,4.0,1.2,0.2,0
HD 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 15,5.7,4.4,1.5,0.4,0
JL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 16,5.4,3.9,1.3,0.4,0
PE 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 17,5.1,3.5,1.4,0.3,0
OR 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 18,5.7,3.8,1.7,0.3,0
NK 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 19,5.1,3.8,1.5,0.3,0
HP 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 20,5.4,3.4,1.7,0.2,0
GI 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 21,5.1,3.7,1.5,0.4,0
HF 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 22,4.6,3.6,1.0,0.2,0
HO 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 23,5.1,3.3,1.7,0.5,0
MG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 24,4.8,3.4,1.9,0.2,0
NL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 25,5.0,3.0,1.6,0.2,0
OE 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 26,5.0,3.4,1.6,0.4,0
LR 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 27,5.2,3.5,1.5,0.2,0
KK 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 28,5.2,3.4,1.4,0.2,0
NP 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 29,4.7,3.2,1.6,0.2,0
LI 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 30,4.8,3.1,1.6,0.2,0
JF 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 31,5.4,3.4,1.5,0.4,0
NN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 32,5.2,4.1,1.5,0.1,0
QG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 33,5.5,4.2,1.4,0.2,0
JL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 34,4.9,3.1,1.5,0.2,0
KE 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 35,5.0,3.2,1.2,0.2,0
IR 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 36,5.5,3.5,1.3,0.2,0
RK 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 37,4.9,3.6,1.4,0.1,0
LP 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 38,4.4,3.0,1.3,0.2,0
OI 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 39,5.1,3.4,1.5,0.2,0
NQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 40,5.0,3.5,1.3,0.3,0
NN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 41,4.5,2.3,1.3,0.3,0
QG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 42,4.4,3.2,1.3,0.2,0
QL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 43,5.0,3.5,1.6,0.6,0
IE 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 44,5.1,3.8,1.9,0.4,0
RR 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 45,4.8,3.0,1.4,0.3,0
LK 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 46,5.1,3.8,1.6,0.2,0
GP 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 47,4.6,3.2,1.4,0.2,0
HH 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 48,5.3,3.7,1.5,0.2,0
CQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 49,5.0,3.3,1.4,0.2,0
PN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 50,7.0,3.2,4.7,1.4,1
KG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 51,6.4,3.2,4.5,1.5,1
JL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 52,6.9,3.1,4.9,1.5,1
JE 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 53,5.5,2.3,4.0,1.3,1
QR 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 54,6.5,2.8,4.6,1.5,1
LK 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 55,5.7,2.8,4.5,1.3,1
QS 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 56,6.3,3.3,4.7,1.6,1
IH 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 57,4.9,2.4,3.3,1.0,1
QQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 58,6.6,2.9,4.6,1.3,1
NN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 59,5.2,2.7,3.9,1.4,1
EG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 60,5.0,2.0,3.5,1.0,1
OL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 61,5.9,3.0,4.2,1.5,1
JE 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 62,6.0,2.2,4.0,1.0,1
PR 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 63,6.1,2.9,4.7,1.4,1
DJ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 64,5.6,2.9,3.6,1.3,1
NS 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 65,6.7,3.1,4.4,1.4,1
PH 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 66,5.6,3.0,4.5,1.5,1
JQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 67,5.8,2.7,4.1,1.0,1
NN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 68,6.2,2.2,4.5,1.5,1
JG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 69,5.6,2.5,3.9,1.1,1
RL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 70,5.9,3.2,4.8,1.8,1
RE 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 71,6.1,2.8,4.0,1.3,1
EM 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 72,6.3,2.5,4.9,1.5,1
JJ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 73,6.1,2.8,4.7,1.2,1
JS 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 74,6.4,2.9,4.3,1.3,1
QH 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 75,6.6,3.0,4.4,1.4,1
KQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 76,6.8,2.8,4.8,1.4,1
JN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 77,6.7,3.0,5.0,1.7,1
JG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 78,6.0,2.9,4.5,1.5,1
PL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 79,5.7,2.6,3.5,1.0,1
LD 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 80,5.5,2.4,3.8,1.1,1
GM 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 81,5.5,2.4,3.7,1.0,1
FJ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 82,5.8,2.7,3.9,1.2,1
DS 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 83,6.0,2.7,5.1,1.6,1
RH 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 84,5.4,3.0,4.5,1.5,1
OQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 85,6.0,3.4,4.5,1.6,1
MN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 86,6.7,3.1,4.7,1.5,1
NG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 87,6.3,2.3,4.4,1.3,1
NO 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 88,5.6,3.0,4.1,1.3,1
QD 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 89,5.5,2.5,4.0,1.3,1
IM 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 90,5.5,2.6,4.4,1.2,1
DJ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 91,6.1,3.0,4.6,1.4,1
DS 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 92,5.8,2.6,4.0,1.2,1
LH 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 93,5.0,2.3,3.3,1.0,1
RQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 94,5.6,2.7,4.2,1.3,1
MN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 95,5.7,3.0,4.2,1.2,1
QF 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 96,5.7,2.9,4.2,1.3,1
MO 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 97,6.2,2.9,4.3,1.3,1
LD 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 98,5.1,2.5,3.0,1.1,1
HM 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 99,5.7,2.8,4.1,1.3,1
EI 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 100,6.3,3.3,6.0,2.5,2
FP 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 101,5.8,2.7,5.1,1.9,2
QK 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 102,7.1,3.0,5.9,2.1,2
DR 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 103,6.3,2.9,5.6,1.8,2
RM 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 104,6.5,3.0,5.8,2.2,2
ED 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 105,7.6,3.0,6.6,2.1,2
PO 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 106,4.9,2.5,4.5,1.7,2
EF 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 107,7.3,2.9,6.3,1.8,2
OQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 108,6.7,2.5,5.8,1.8,2
RH 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 109,7.2,3.6,6.1,2.5,2
FS 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 110,6.5,3.2,5.1,2.0,2
RJ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 111,6.4,2.7,5.3,1.9,2
NE 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 112,6.8,3.0,5.5,2.1,2
IL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 113,5.7,2.5,5.0,2.0,2
IG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 114,5.8,2.8,5.1,2.4,2
IN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 115,6.4,3.2,5.3,2.3,2
KI 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 116,6.5,3.0,5.5,1.8,2
MP 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 117,7.7,3.8,6.7,2.2,2
HK 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 118,7.7,2.6,6.9,2.3,2
LR 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 119,6.0,2.2,5.0,1.5,2
LM 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 120,6.9,3.2,5.7,2.3,2
FD 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 121,5.6,2.8,4.9,2.0,2
RO 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 122,7.7,2.8,6.7,2.0,2
FF 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 123,6.3,2.7,4.9,1.8,2
CQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 124,6.7,3.3,5.7,2.1,2
MH 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 125,7.2,3.2,6.0,1.8,2
LS 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 126,6.2,2.8,4.8,1.8,2
FJ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 127,6.1,3.0,4.9,1.8,2
GE 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 128,6.4,2.8,5.6,2.1,2
DL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 129,7.2,3.0,5.8,1.6,2
FG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 130,7.4,2.8,6.1,1.9,2
DN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 131,7.9,3.8,6.4,2.0,2
OI 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 132,6.4,2.8,5.6,2.2,2
LP 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 133,6.3,2.8,5.1,1.5,2
GK 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 134,6.1,2.6,5.6,1.4,2
HR 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 135,7.7,3.0,6.1,2.3,2
GM 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 136,6.3,3.4,5.6,2.4,2
ND 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 137,6.4,3.1,5.5,1.8,2
PO 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 138,6.0,3.0,4.8,1.8,2
FF 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 139,6.9,3.1,5.4,2.1,2
GQ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 140,6.7,3.1,5.6,2.4,2
NH 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 141,6.9,3.1,5.1,2.3,2
GS 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 142,5.8,2.7,5.1,1.9,2
FJ 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 143,6.8,3.2,5.9,2.3,2
EE 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 144,6.7,3.3,5.7,2.5,2
FL 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 145,6.7,3.0,5.2,2.3,2
NG 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 146,6.3,2.5,5.0,1.9,2
CN 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 147,6.5,3.0,5.2,2.0,2
LI 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 148,6.2,3.4,5.4,2.3,2
LP 0 19:11:15.136 LogisticRegression (GBPUSD,D1) 149,5.9,3.0,5.1,1.8,2
LI 0 19:11:15.136 LogisticRegression (GBPUSD,D1)
EN 0 19:11:15.138 LogisticRegression (GBPUSD,D1) LBFGS logit results
QF 0 19:11:15.138 LogisticRegression (GBPUSD,D1) coefs [[-0.3544914154418004,0.7640895553785291,-2.029702076841132,-0.8297514969607693]]
EK 0 19:11:15.138 LogisticRegression (GBPUSD,D1) bias [5.587065638186691]
RJ 0 19:11:15.141 LogisticRegression (GBPUSD,D1) TNC logit results
FR 0 19:11:15.141 LogisticRegression (GBPUSD,D1) coefs [[-0.3543682771949164,0.7643878803868314,-2.029620459724486,-0.8298337657974659]]
IN 0 19:11:15.141 LogisticRegression (GBPUSD,D1) bias [5.585350403781237]

The following sections describe the implementation of the TNC optimizer in finer detail: how the code works and what selected variables mean.

Core TNC Implementation

The TNC optimization implementation consists of two parts: the core logic contained in tnc.mqh and a generic secondary component that defines wrappers for the objective function, its derivative, and the Hessian. We will first focus on tnc.mqh, as it handles the core algorithm. The code in tnc.mqh begins by defining several enumerations that serve as the structural backbone for internal communication.

enum ENUM_TNC_MESSAGE
{
   TNC_MSG_NONE = 0, /* No messages */
   TNC_MSG_ITER = 1, /* One line per iteration */
   TNC_MSG_INFO = 2, /* Informational messages */
   TNC_MSG_EXIT = 8, /* Exit reasons */
   TNC_MSG_ALL = TNC_MSG_ITER | TNC_MSG_INFO | TNC_MSG_EXIT /* All messages */
};

enum ENUM_TNC_RC
{
   TNC_MINRC = -3,       /* Lowest return code; subtract it from rc to index tnc_rc_string */
   TNC_ENOMEM = -3,      /* Memory allocation failed */
   TNC_EINVAL = -2,      /* Invalid parameters (n<0) */
   TNC_INFEASIBLE = -1,  /* Infeasible (low bound > up bound) */
   TNC_LOCALMINIMUM = 0, /* Local minimum reached (|pg| ~= 0) */
   TNC_FCONVERGED = 1,   /* Converged (|f_n-f_(n-1)| ~= 0) */
   TNC_XCONVERGED = 2,   /* Converged (|x_n-x_(n-1)| ~= 0) */
   TNC_MAXFUN = 3,       /* Max. number of function evaluations reached */
   TNC_LSFAIL = 4,       /* Line search failed */
   TNC_CONSTANT = 5,     /* All lower bounds are equal to the upper bounds */
   TNC_NOPROGRESS = 6,   /* Unable to progress */
   TNC_USERABORT = 7     /* User requested end of minimization */
};

- The ENUM_TNC_MESSAGE enumeration defines bitmask flags to control output verbosity.

- The ENUM_TNC_RC enumeration provides a set of return codes indicating the reason for termination.

- The tnc_rc_string variable is a string array that maps these return codes to human-readable messages.

const string tnc_rc_string[11] =
{
   "Memory allocation failed",
   "Invalid parameters (n<0)",
   "Infeasible (low bound > up bound)",
   "Local minima reach (|pg| ~= 0)",
   "Converged (|f_n-f_(n-1)| ~= 0)",
   "Converged (|x_n-x_(n-1)| ~= 0)",
   "Maximum number of function evaluations reached",
   "Linear search failed",
   "All lower bounds are equal to the upper bounds",
   "Unable to progress",
   "User requested end of minimization"
};
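To make the relationship between the return codes and the message table concrete, here is a minimal sketch in standard C++ rather than MQL5, so that it compiles outside MetaTrader. The names rc_message and message_enabled are hypothetical helpers that do not exist in tnc.mqh; they only illustrate the rc - TNC_MINRC indexing and the bitmask test that the solver performs inline.

```cpp
#include <string>

// Verbosity flags mirroring ENUM_TNC_MESSAGE: each flag occupies its own bit.
enum TncMessage {
    MSG_NONE = 0,
    MSG_ITER = 1,
    MSG_INFO = 2,
    MSG_EXIT = 8,
    MSG_ALL  = MSG_ITER | MSG_INFO | MSG_EXIT
};

// Lowest return code (mirrors TNC_MINRC). Subtracting it maps a code in
// [-3, 7] onto an array index in [0, 10].
const int MIN_RC = -3;
const int RC_COUNT = 11;

const char* const RC_STRING[RC_COUNT] = {
    "Memory allocation failed",
    "Invalid parameters (n<0)",
    "Infeasible (low bound > up bound)",
    "Local minima reach (|pg| ~= 0)",
    "Converged (|f_n-f_(n-1)| ~= 0)",
    "Converged (|x_n-x_(n-1)| ~= 0)",
    "Maximum number of function evaluations reached",
    "Linear search failed",
    "All lower bounds are equal to the upper bounds",
    "Unable to progress",
    "User requested end of minimization"
};

// Hypothetical helper: text for a return code, with a range check that the
// original inline lookup does not perform.
std::string rc_message(int rc) {
    const int idx = rc - MIN_RC;
    if (idx < 0 || idx >= RC_COUNT) return "Unknown return code";
    return RC_STRING[idx];
}

// Hypothetical helper: true when a given flag is set in the verbosity bitmask.
bool message_enabled(int messages, TncMessage flag) {
    return (messages & flag) != 0;
}
```

Because the flags are powers of two, a caller can combine them freely, for example MSG_ITER | MSG_EXIT to print per-iteration lines and the exit reason but no informational messages.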

Additionally, separate enumerations define the return states of the line search (linearSearch) and of its point-finding subroutine (getptc), so the algorithm can track its progress through each nested loop.

/*
 * getptc return codes
 */
enum ENUM_GETPTC_RC
{
   GETPTC_OK = 0,     /* Suitable point found */
   GETPTC_EVAL = 1,   /* Function evaluation required */
   GETPTC_EINVAL = 2, /* Bad input values */
   GETPTC_FAIL = 3    /* No suitable point found */
};

/*
 * linearSearch return codes
 */
enum ENUM_LS_RC
{
   LS_OK = 0,        /* Suitable point found */
   LS_MAXFUN = 1,    /* Max. number of function evaluations reached */
   LS_FAIL = 2,      /* No suitable point found */
   LS_USERABORT = 3, /* User requested end of minimization */
   LS_ENOMEM = 4     /* Memory allocation failed */
};

The CObjective class serves as a state manager for the function being optimized. It encapsulates metadata such as the dimensionality of the problem, whether a failure has occurred, and pointers to the actual objective function logic.

//+------------------------------------------------------------------+
//|objective function state                                          |
//+------------------------------------------------------------------+
class CObjective
{
protected:
   bool        m_failed, m_fupdated, m_gupdated, m_hupdated;
   double      m_lowestx, m_lowest_f;
   ulong       m_size;
   IObjective *m_objective;

public:
   CObjective(void): m_failed(false), m_size(0)
   {
   }
   ~CObjective(void)
   {
   }
   void set_objective(IObjective* &fun_obj)
   {
      m_objective = fun_obj;
   }
   void has_failed(bool yes)
   {
      m_failed = yes;
   }
   void set_dim(ulong size)
   {
      m_size = size;
   }
   bool failed(void)
   {
      return m_failed;
   }
   bool abort(void)
   {
      return IsStopped();
   }
   ulong size(void)
   {
      return m_size;
   }
   ObjReturn objective(vector& x)
   {
      return m_objective.fun_and_grad(x);
   }
   virtual int callback(vector& x)
   {
      return 0;
   }
};

By acting as a wrapper, this class allows the TNC algorithm to request function evaluations and gradients while maintaining internal flags regarding optimization progress. This abstraction layer ensures the core solver remains decoupled from the specific mathematical details of the function it is minimizing. Following the class definition, the code establishes typedef function pointers to standardize the signatures of the objective and callback functions.

//+------------------------------------------------------------------+
//| function pointers                                                |
//+------------------------------------------------------------------+
typedef int(*tnc_function)(vector&, double&, vector&, CObjective&);
typedef void(*tnc_callback)(vector&, CObjective&);
//+------------------------------------------------------------------+
//| fpointer                                                         |
//+------------------------------------------------------------------+
int func(vector& x, double& f, vector& g, CObjective& state)
{
   ulong n = state.size();
   vector x_data, g_data;
   //--- stop on a user abort or a previously flagged failure
   if(state.abort())
      return 2;
   if(state.failed())
      return 1;
   x_data = x;
   ObjReturn fg = state.objective(x_data);
   f = fg.f;
   //--- the gradient must have one component per variable
   if(fg.g.Size() != n)
   {
      printf(" tnc: gradient must have length %llu", n);
      return 2;
   }
   g = fg.g;
   return 0;
}
//+------------------------------------------------------------------+
//| callback                                                         |
//+------------------------------------------------------------------+
void call_back(vector& x, CObjective& state)
{
   ulong n = state.size();
   vector x_data = x;
   //--- a nonzero result from the user callback is recorded as a failure
   if(state.callback(x_data))
      state.has_failed(true);
   else
      state.has_failed(false);
   return;
}

The func and call_back functions bridge the low-level solver and the CObjective state. func first checks for a user-initiated abort or a previously flagged failure, then requests the function value and gradient at the current point and verifies that the gradient has the expected length before passing the results back to the solver; any nonzero return value tells the solver to stop. call_back forwards the current point to the user callback and records a nonzero result as a failure.
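The bridging pattern can be sketched as follows. This is an illustration in standard C++ rather than MQL5, with std::vector standing in for MQL5's vector type; the State struct, the bridge function, and the sphere objective are hypothetical stand-ins for CObjective, func, and a user objective.

```cpp
#include <cstddef>
#include <functional>
#include <vector>

using Vec = std::vector<double>;

// Function value and gradient returned together, as in ObjReturn.
struct ObjReturn {
    double f = 0.0;
    Vec g;
};

// Hypothetical stand-in for CObjective: problem size, status flags, objective.
struct State {
    std::size_t n = 0;
    bool failed = false;
    bool abort_requested = false;
    std::function<ObjReturn(const Vec&)> objective;
};

// Bridge between solver and state.
// Returns 0 on success, 1 if a failure was flagged earlier,
// 2 on abort or when the gradient has the wrong length.
int bridge(const Vec& x, double& f, Vec& g, State& s) {
    if (s.abort_requested) return 2;
    if (s.failed) return 1;
    ObjReturn fg = s.objective(x);
    f = fg.f;
    if (fg.g.size() != s.n) return 2;
    g = fg.g;
    return 0;
}

// Example objective: f(x) = sum of x_i^2, gradient 2 * x.
ObjReturn sphere(const Vec& x) {
    ObjReturn r;
    r.g.resize(x.size());
    for (std::size_t i = 0; i < x.size(); ++i) {
        r.f += x[i] * x[i];
        r.g[i] = 2.0 * x[i];
    }
    return r;
}
```

The solver only ever sees the integer status and the filled-in f and g, so swapping the objective requires no change to the solver itself.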

//+------------------------------------------------------------------+
//|Optimization results                                              |
//+------------------------------------------------------------------+
struct OptimizeResult
{
   int    return_code;
   int    nfeval;
   int    niter;
   vector solution;
   vector objective_result;
   vector objective_gradient;

   OptimizeResult(void)
   {
      return_code = WRONG_VALUE;
      nfeval = niter = 0;
      solution = objective_result = objective_gradient = vector::Zeros(0);
   }
   OptimizeResult(int rc, int feval, int iter, vector &x, vector& f, vector& g)
   {
      return_code = rc;
      nfeval = feval;
      niter = iter;
      solution = x;
      objective_result = f;
      objective_gradient = g;
   }
   OptimizeResult(OptimizeResult& other)
   {
      return_code = other.return_code;
      nfeval = other.nfeval;
      niter = other.niter;
      solution = other.solution;
      objective_result = other.objective_result;
      objective_gradient = other.objective_gradient;
   }
   void operator=(OptimizeResult& other)
   {
      return_code = other.return_code;
      nfeval = other.nfeval;
      niter = other.niter;
      solution = other.solution;
      objective_result = other.objective_result;
      objective_gradient = other.objective_gradient;
   }
};

The OptimizeResult struct is a lightweight data container designed to package the final outcome of the optimization process. It stores essential metadata, including the return code, the number of function evaluations, and the total iterations performed. Additionally, it holds the final vectors for the solution, the objective result, and the gradient. To facilitate the seamless movement of data across different parts of the application once the solver concludes, the struct includes multiple constructors and an assignment operator.

//+------------------------------------------------------------------+
//|class encapsulating TNC minimizer                                 |
//+------------------------------------------------------------------+
class CTruncNewtonCG: public CObject
{
private:
   int tnc(int n, vector& x, double &f, vector& g, tnc_function& function, CObjective &state,
           vector& low, vector& up, vector& scale, vector& offset, int messages, int maxCGit,
           int maxnfeval, double eta, double stepmx, double accuracy, double _fmin, double ftol,
           double xtol, double pgtol, double rescale, int &nfeval, int &niter,
           tnc_callback &callback);
   void coercex(int n, vector& x, const vector& low, const vector& up);
   void unscalex(int n, vector& x, const vector& xscale, const vector& xoffset);
   void scalex(int n, vector& x, const vector& xscale, const vector& xoffset);
   void scaleg(int n, vector& g, const vector& xscale, double& fscale);
   void setConstraints(int n, vector& x, int& pivot[], vector& xscale, vector& xoffset,
                       vector& low, vector& up);
   ENUM_TNC_RC minize_tnc(int n, vector& x, double &f, vector& gfull, tnc_function function,
                          CObjective &state, vector& xscale, vector& xoffset, double &fscale,
                          vector& low, vector& up, ENUM_TNC_MESSAGE messages, int maxCGit,
                          int maxnfeval, int &nfeval, int &niter, double eta, double stepmx,
                          double accuracy, double _fmin, double ftol, double xtol, double pgtol,
                          double rescale, tnc_callback& callback);
   void printCurrentIteration(int n, double f, vector& g, int niter, int nfeval, int &pivot[]);
   void project(int n, vector& x, const int &pivot[]);
   void projectConstants(int n, vector& x, const vector& xscale);
   double stepMax(double step, int n, vector& x, vector& dir, int &pivot[], vector& low,
                  vector& up, vector& xscale, vector& xoffset);
   bool addConstraint(int n, vector& x, vector& p, int &pivot[], vector& low, vector& up,
                      vector& xscale, vector& xoffset);
   bool removeConstraint(double gtpnew, double gnorm, double pgtolfs, double f,
                         double fLastConstraint, vector& g, int &pivot[], int n);
   int tnc_direction(vector &zsol, vector &diagb, vector &x, vector& g, int n, int maxCGit,
                     int maxnfeval, int &nfeval, bool upd1, double yksk, double yrsr,
                     vector &sk, vector &yk, vector &sr, vector &yr, bool lreset,
                     tnc_function & function, CObjective &state, vector& xscale,
                     vector& xoffset, double fscale, int &pivot[], double accuracy,
                     double gnorm, double xnorm, vector& low, vector& up);
   void diagonalScaling(int n, vector& e, vector& v, vector& gv, vector& r);
   double initialStep(double fnew, double _fmin, double gtp, double smax);
   int hessianTimesVector(vector& v, vector& gv, int n, vector& x, vector& g,
                          tnc_function & function, CObjective &state, vector& xscale,
                          vector& xoffset, double fscale, double accuracy, double xnorm,
                          vector& low, vector& up);
   int msolve(vector& g, vector& y, int n, vector& sk, vector& yk, vector& diagb, vector& sr,
              vector& yr, bool upd1, double yksk, double yrsr, bool lreset);
   void ssbfgs(int n, double gamma, vector& sj, vector& hjv, vector& hjyj, double yjsj,
               double yjhyj, double vsj, double vhyj, vector& hjp1v);
   int initPreconditioner(vector& diagb, vector& emat, int n, bool lreset, double yksk,
                          double yrsr, vector& sk, vector& yk, vector& sr, vector& yr,
                          bool upd1);
   ENUM_LS_RC linearSearch(int n, tnc_function & function, CObjective &state, vector& low,
                           vector& up, vector& xscale, vector& xoffset, double fscale,
                           int &pivot[], double eta, double ftol, double xbnd, vector& p,
                           vector& x, double &f, double &alpha, vector& gfull, int maxnfeval,
                           int &nfeval);
   ENUM_GETPTC_RC getptcInit(double &reltol, double &abstol, double tnytol, double eta,
                             double rmu, double xbnd, double &u, double &fu, double &gu,
                             double &xmin, double &_fmin, double &gmin, double &xw, double &fw,
                             double &gw, double &a, double &b, double &oldf, double &b1,
                             double &scxbnd, double &e, double &step, double &factor,
                             bool & braktd, double &gtest1, double &gtest2, double &tol);
   ENUM_GETPTC_RC getptcIter(double big, double rtsmll, double &reltol, double &abstol,
                             double tnytol, double fpresn, double xbnd, double &u, double &fu,
                             double &gu, double &xmin, double &_fmin, double &gmin, double &xw,
                             double &fw, double &gw, double &a, double &b, double &oldf,
                             double &b1, double &scxbnd, double &e, double &step,
                             double &factor, bool & braktd, double &gtest1, double &gtest2,
                             double &tol);
   void dxpy1(int n, const vector& dx, vector& dy);
   void daxpy1(int n, double da, const vector& dx, vector& dy);
   void dcopy1(int n, const vector& dx, vector& dy);
   void dneg1(int n, vector& v);
   double ddot1(int n, const vector& dx, const vector& dy);
   double dnrm21(int n, const vector& dx);
   OptimizeResult tnc_minimize(CFunctor &fungrad, vector& scale, vector& offset, int messages,
                               int maxCGit, int maxfun, double eta, double stepmax,
                               double accuracy, double fmin_, double ftol, double xtol,
                               double pgtol, double rescale);

   vector m_scale, m_offset;
   int m_messages, m_maxCGit, m_maxfun;
   double m_eta, m_stepmax, m_accuracy, m_fmin, m_ftol, m_xtol, m_pgtol, m_rescale;
   OptimizeResult m_result;

public:
   CTruncNewtonCG(void);
   ~CTruncNewtonCG(void);
   void SetScale(vector& scale);
   void SetOffset(vector& offset);
   void SetLoglevel(ENUM_TNC_MESSAGE messages);
   void SetMaxCGit(int maxCGit);
   void SetMaxFunCalls(int maxfun);
   void SetEta(double eta);
   void SetStepMax(double stepmax);
   void SetAccuracy(double accuracy);
   void SetFmin(double f_min);
   void SetFtol(double ftol);
   void SetXtol(double xtol);
   void SetPGtol(double pgtol);
   void SetRescaleFactor(double rescale);
   int Minimize(CFunctor &fungrad);
   vector Solution(void);
   double ObjectiveResult(void);
   vector ObjectiveGradient(void);
   int NumFevals(void);
   int NumIters(void);
};

The CTruncNewtonCG class contains the core logic of the optimization algorithm. It includes the tnc entry method, which performs initial parameter validation, handles variable scaling, and sets default values for tolerances and step sizes.

int tnc(int n, vector& x, double &f, vector& g, tnc_function& function, CObjective &state,
        vector& low, vector& up, vector& scale, vector& offset, int messages, int maxCGit,
        int maxnfeval, double eta, double stepmx, double accuracy, double _fmin, double ftol,
        double xtol, double pgtol, double rescale, int &nfeval, int &niter,
        tnc_callback &callback)
{
   int rc, frc, i, nc, nfeval_local, free_low = TNC_FALSE, free_up = TNC_FALSE, free_g = TNC_FALSE;
   double fscale, rteps;
   vector xscale, xoffset;

   nfeval = nfeval_local = 0;

   /* Check for errors in the input parameters */
   if(n == 0)
   {
      rc = TNC_CONSTANT;
      nfeval = (nfeval==0)?nfeval_local:nfeval;
      if(bool(messages & TNC_MSG_EXIT))
         printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
      return rc;
   }
   if(n < 0)
   {
      rc = TNC_EINVAL;
      nfeval = (nfeval==0)?nfeval_local:nfeval;
      if(bool(messages & TNC_MSG_EXIT))
         printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
      return rc;
   }

   /* Check bounds arrays */
   if(!low.Size())
   {
      low = vector::Zeros(n);
      free_low = TNC_TRUE;
      for(i = 0; i < n; i++)
      {
         low[i] = -HUGE_VAL;
      }
   }
   if(!up.Size())
   {
      up = vector::Zeros(n);
      if(up.Size()==0)
      {
         rc = TNC_ENOMEM;
         nfeval = (nfeval==0)?nfeval_local:nfeval;
         if(bool(messages & TNC_MSG_EXIT))
            printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
         return rc;
      }
      free_up = TNC_TRUE;
      for(i = 0; i < n; i++)
      {
         up[i] = HUGE_VAL;
      }
   }

   /* Coherency check */
   for(i = 0; i < n; i++)
   {
      if(low[i] > up[i])
      {
         rc = TNC_INFEASIBLE;
         nfeval = (nfeval==0)?nfeval_local:nfeval;
         if(bool(messages & TNC_MSG_EXIT))
            printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
         return rc;
      }
   }

   /* Coerce x into bounds */
   coercex(n, x, low, up);

   if(maxnfeval < 1)
   {
      rc = TNC_MAXFUN;
      nfeval = (nfeval==0)?nfeval_local:nfeval;
      if(bool(messages & TNC_MSG_EXIT))
         printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
      return rc;
   }

   /* Allocate g if necessary */
   if(g.Size()==0)
   {
      g = vector::Zeros(n);
      if(g.Size()==0)
      {
         rc = TNC_ENOMEM;
         nfeval = (nfeval==0)?nfeval_local:nfeval;
         if(bool(messages & TNC_MSG_EXIT))
            printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
         return rc;
      }
      free_g = TNC_TRUE;
   }

   /* Initial function evaluation */
   //Print(__FUNCTION__," - ", x);
   frc = function(x, f, g, state);
   (nfeval)++;
   if(frc)
   {
      rc = TNC_USERABORT;
      nfeval = (nfeval==0)?nfeval_local:nfeval;
      if(bool(messages & TNC_MSG_EXIT))
         printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
      return rc;
   }

   /* Constant problem ? */
   for(nc = 0, i = 0; i < n; i++)
   {
      if((low[i] == up[i]) || (scale.Size() != 0 && scale[i] == 0.0))
      {
         nc++;
      }
   }
   if(nc == n)
   {
      rc = TNC_CONSTANT;
      nfeval = (nfeval==0)?nfeval_local:nfeval;
      if(bool(messages & TNC_MSG_EXIT))
         printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
      return rc;
   }

   /* Scaling parameters */
   xscale = vector::Zeros(n);
   if(xscale.Size()==0)
   {
      rc = TNC_ENOMEM;
      nfeval = (nfeval==0)?nfeval_local:nfeval;
      if(bool(messages & TNC_MSG_EXIT))
         printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
      return rc;
   }
   xoffset = vector::Zeros(n);
   if(xoffset.Size()==0)
   {
      rc = TNC_ENOMEM;
      nfeval = (nfeval==0)?nfeval_local:nfeval;
      if(bool(messages & TNC_MSG_EXIT))
         printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
      return rc;
   }
   fscale = 1.0;

   for(i = 0; i < n; i++)
   {
      if(scale.Size() != 0)
      {
         /* User-supplied scale; a zero scale freezes the variable */
         xscale[i] = fabs(scale[i]);
         if(xscale[i] == 0.0)
         {
            xoffset[i] = low[i] = up[i] = x[i];
         }
      }
      else if(low[i] != -HUGE_VAL && up[i] != HUGE_VAL)
      {
         /* Both bounds finite: scale by the width, center on the midpoint */
         xscale[i] = up[i] - low[i];
         xoffset[i] = (up[i] + low[i]) * 0.5;
      }
      else
      {
         xscale[i] = 1.0 + fabs(x[i]);
         xoffset[i] = x[i];
      }
      if(offset.Size() != 0)
      {
         xoffset[i] = offset[i];
      }
   }

   /* Default values for parameters */
   rteps = sqrt(DBL_EPSILON);
   if(stepmx < rteps * 10.0)
   {
      stepmx = 1.0e1;
   }
   if(eta < 0.0 || eta >= 1.0)
   {
      eta = 0.25;
   }
   if(rescale < 0)
   {
      rescale = 1.3;
   }
   if(maxCGit < 0) /* maxCGit == 0 is valid */
   {
      maxCGit = n / 2;
      if(maxCGit < 1)
      {
         maxCGit = 1;
      }
      else if(maxCGit > 50)
      {
         maxCGit = 50;
      }
   }
   if(maxCGit > n)
   {
      maxCGit = n;
   }
   if(accuracy <= DBL_EPSILON)
   {
      accuracy = rteps;
   }
   if(ftol < 0.0)
   {
      ftol = accuracy;
   }
   if(pgtol < 0.0)
   {
      pgtol = 1e-2 * sqrt(accuracy);
   }
   if(xtol < 0.0)
   {
      xtol = rteps;
   }

   /* Optimisation */
   rc = minize_tnc(n, x, f, g, function, state, xscale, xoffset, fscale, low, up,
                   (ENUM_TNC_MESSAGE)messages, maxCGit, maxnfeval, nfeval, niter, eta, stepmx,
                   accuracy, _fmin, ftol, xtol, pgtol, rescale, callback);

   if(bool(messages & TNC_MSG_EXIT))
      printf(" tnc: %s", tnc_rc_string[rc - TNC_MINRC]);
   return rc;
}

This section is responsible for coercing variables into their defined bounds and initializing the scaling parameters. These parameters ensure the algorithm remains numerically stable, even when dealing with variables of vastly different magnitudes.
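The coercion and default scaling rules can be illustrated with a small sketch in standard C++ rather than MQL5, with std::vector standing in for MQL5's vector. It covers only the case where the caller supplies no scale or offset. The body of coercex is not shown in the article, so the clamping in coerce below is an assumption about what it does; init_scaling follows the two branches visible in tnc.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

using Vec = std::vector<double>;

// Assumed behaviour of coercex: clamp every component into [low, up].
void coerce(Vec& x, const Vec& low, const Vec& up) {
    for (std::size_t i = 0; i < x.size(); ++i)
        x[i] = std::min(std::max(x[i], low[i]), up[i]);
}

// Default scaling when no user scale/offset is given:
//  - both bounds finite: scale = width of the box, offset = its midpoint
//  - otherwise:          scale = 1 + |x|,          offset = x
void init_scaling(const Vec& x, const Vec& low, const Vec& up,
                  Vec& xscale, Vec& xoffset) {
    const double inf = std::numeric_limits<double>::infinity();
    xscale.assign(x.size(), 0.0);
    xoffset.assign(x.size(), 0.0);
    for (std::size_t i = 0; i < x.size(); ++i) {
        if (low[i] != -inf && up[i] != inf) {
            xscale[i] = up[i] - low[i];
            xoffset[i] = (up[i] + low[i]) * 0.5;
        } else {
            xscale[i] = 1.0 + std::fabs(x[i]);
            xoffset[i] = x[i];
        }
    }
}
```

For a variable bounded on [0, 2] this yields a scale of 2 and an offset of 1, so the scaled variable lives on [-0.5, 0.5] regardless of the original units.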

ENUM_TNC_RC minize_tnc(int n, vector& x, double &f, vector& gfull, tnc_function function,
                       CObjective &state, vector& xscale, vector& xoffset, double &fscale,
                       vector& low, vector& up, ENUM_TNC_MESSAGE messages, int maxCGit,
                       int maxnfeval, int &nfeval, int &niter, double eta, double stepmx,
                       double accuracy, double _fmin, double ftol, double xtol, double pgtol,
                       double rescale, tnc_callback& callback)
{
   double fLastReset, difnew, epsred, oldgtp, difold, oldf, xnorm, newscale, gnorm, ustpmax,
          fLastConstraint, spe, yrsr, yksk;
   vector temp, sk, yk, diagb, sr, yr, oldg, pk, g;
   double alpha = 0.0; /* Default unused value */
   int i, icycle, oldnfeval, frc;
   bool lreset, newcon, upd1, remcon;
   ENUM_TNC_RC rc = TNC_ENOMEM; /* Default error */

   niter = 0;
   int pivot[];

   /* Allocate temporary vectors */
   oldg = vector::Zeros(n);
   if(oldg.Size()==0)
      return rc;
   g = vector::Zeros(n);
   if(g.Size()==0)
      return rc;
   temp = vector::Zeros(n);
   if(temp.Size()==0)
      return rc;
   diagb = vector::Zeros(n);
   if(diagb.Size()==0)
      return rc;
   pk = vector::Zeros(n);
   if(pk.Size()==0)
      return rc;
   sk = vector::Zeros(n);
   if(sk.Size()==0)
      return rc;
   yk = vector::Zeros(n);
   if(yk.Size()==0)
      return rc;
   sr = vector::Zeros(n);
   if(sr.Size()==0)
      return rc;
   yr = vector::Zeros(n);
   if(yr.Size()==0)
      return rc;
   ArrayResize(pivot,n);
   if(pivot.Size()==0)
      return rc;

   /* Initialize variables */
   difnew = 0.0;
   epsred = 0.05;
   upd1 = TNC_TRUE;
   icycle = n - 1;
   newcon = TNC_TRUE;

   /* Unneeded initialisations */
   lreset = TNC_FALSE;
   yrsr = 0.0;
   yksk = 0.0;

   /* Initial scaling */
   scalex(n, x, xscale, xoffset);
   f *= fscale;

   /* initial pivot calculation */
   setConstraints(n, x, pivot, xscale, xoffset, low, up);

   dcopy1(n, gfull, g);
   scaleg(n, g, xscale, fscale);

   /* Test the lagrange multipliers to see if they are non-negative. */
   for(i = 0; i < n; i++)
   {
      if(-pivot[i] * g[i] < 0.0)
      {
         pivot[i] = 0;
      }
   }

   project(n, g, pivot);

   /* Set initial values to other parameters */
   gnorm = dnrm21(n, g);

   fLastConstraint = f; /* Value at last constraint */
   fLastReset = f;      /* Value at last reset */

   if(bool(messages & TNC_MSG_ITER))
   {
      printf(" NIT NF F GTG");
   }
   if(bool(messages & TNC_MSG_ITER))
   {
      printCurrentIteration(n, f / fscale, gfull, niter, nfeval, pivot);
   }

   /* Set the diagonal of the approximate hessian to unity. */
   diagb = vector::Ones(diagb.Size());
   /*for(i = 0; i < n; i++) { diagb[i] = 1.0; } */

   /* Start of main iterative loop */
   while(TNC_TRUE && !IsStopped())
   {
      /* Local minimum test */
      if(dnrm21(n, g) <= pgtol * (fscale))
      {
         /* |PG| == 0.0 => local minimum */
         dcopy1(n, gfull, g);
         project(n, g, pivot);
         if(bool(messages & TNC_MSG_INFO))
         {
            printf("tnc: |pg| = %g -> local minimum", dnrm21(n,g)/(fscale));
         }
         rc = TNC_LOCALMINIMUM;
         break;
      }

      /* Terminate if more than maxnfeval evaluations have been made */
      if(nfeval >= maxnfeval)
      {
         rc = TNC_MAXFUN;
         break;
      }

      /* Rescale function if necessary */
      newscale = dnrm21(n, g);
      if((newscale > DBL_EPSILON) && (fabs(log10(newscale)) > rescale))
      {
         newscale = 1.0 / newscale;

         f *= newscale;
         fscale *= newscale;
         gnorm *= newscale;
         fLastConstraint *= newscale;
         fLastReset *= newscale;
         difnew *= newscale;

         g *= newscale;
         diagb = vector::Ones(diagb.Size());

         upd1 = TNC_TRUE;
         icycle = n - 1;
         newcon = TNC_TRUE;

         if(bool(messages & TNC_MSG_INFO))
         {
            printf("tnc: fscale = %g", fscale);
         }
      }

      dcopy1(n, x, temp);
      project(n, temp, pivot);
      xnorm = dnrm21(n, temp);
      oldnfeval = nfeval;

      /* Compute the new search direction */
      frc = tnc_direction(pk, diagb, x, g, n, maxCGit, maxnfeval, nfeval, upd1, yksk, yrsr,
                          sk, yk, sr, yr, lreset, function, state, xscale, xoffset, fscale,
                          pivot, accuracy, gnorm, xnorm, low, up);

      if(frc == -1)
      {
         rc = TNC_ENOMEM;
         break;
      }

      if(frc)
      {
         rc = TNC_USERABORT;
         break;
      }

      if(!newcon)
      {
         if(!lreset)
         {
            /* Compute the accumulated step and its corresponding gradient difference. */
            dxpy1(n, sk, sr);
            dxpy1(n, yk, yr);
            icycle++;
         }
         else
         {
            /* Initialize the sum of all the changes */
            dcopy1(n, sk, sr);
            dcopy1(n, yk, yr);
            fLastReset = f;
            icycle = 1;
         }
      }

      dcopy1(n, g, oldg);
      oldf = f;
      oldgtp = ddot1(n, pk, g);

      /* Maximum unconstrained step length */
      ustpmax = stepmx / (dnrm21(n, pk) + DBL_EPSILON);

      /* Maximum constrained step length */
      spe = stepMax(ustpmax, n, x, pk, pivot, low, up, xscale, xoffset);

      if(spe > 0.0)
      {
         ENUM_LS_RC lsrc;

         /* Set the initial step length */
         alpha = initialStep(f, _fmin / (fscale), oldgtp, spe);

         /* Perform the linear search */
         lsrc = linearSearch(n, function, state, low, up, xscale, xoffset, fscale, pivot,
                             eta, ftol, spe, pk, x, f, alpha, gfull, maxnfeval, nfeval);

         if(lsrc == LS_ENOMEM)
         {
            rc = TNC_ENOMEM;
            break;
         }

         if(lsrc == LS_USERABORT)
         {
            rc = TNC_USERABORT;
            break;
         }

         if(lsrc == LS_FAIL)
         {
            rc = TNC_LSFAIL;
            break;
         }

         /* If we went up to the maximum unconstrained step, increase it */
         if(alpha >= 0.9 * ustpmax)
         {
            stepmx *= 1e2;
            if(bool(messages & TNC_MSG_INFO))
            {
               printf("tnc: stepmx = %g", stepmx);
            }
         }

         /* If we went up to the maximum constrained step, a new constraint was encountered */
         if(alpha - spe >= -DBL_EPSILON * 10.0)
         {
            newcon = TNC_TRUE;
         }
         else
         {
            /* Break if the linear search has failed to find a lower point */
            if(lsrc != LS_OK)
            {
               if(lsrc == LS_MAXFUN)
               {
                  rc = TNC_MAXFUN;
               }
               else
               {
                  rc = TNC_LSFAIL;
               }
               break;
            }
            newcon = TNC_FALSE;
         }
      }
      else
      {
         /* Maximum constrained step == 0.0 => new constraint */
         newcon = TNC_TRUE;
      }

      if(newcon)
      {
         if(!addConstraint(n, x, pk, pivot, low, up, xscale, xoffset))
         {
            if(nfeval == oldnfeval)
            {
               rc = TNC_NOPROGRESS;
               break;
            }
         }
         fLastConstraint = f;
      }

      (niter)++;

      /* Invoke the callback function */
      if(callback)
      {
         dcopy1(n, x, temp);
         unscalex(n, temp, xscale, xoffset);
         callback(temp, state);
      }

      /* Set up parameters used in convergence and resetting tests */
      difold = difnew;
      difnew = oldf - f;

      /* If this is the first iteration of a new cycle, compute the
         percentage reduction factor for the resetting test */
      if(icycle == 1)
      {
         if(difnew > difold * 2.0)
         {
            epsred += epsred;
         }
         if(difnew < difold * 0.5)
         {
            epsred *= 0.5;
         }
      }

      dcopy1(n, gfull, g);
      scaleg(n, g, xscale, fscale);

      dcopy1(n, g, temp);
      project(n, temp, pivot);
      gnorm = dnrm21(n, temp);

      /* Reset pivot */
      remcon = removeConstraint(oldgtp, gnorm, pgtol * (fscale), f, fLastConstraint, g,
                                pivot, n);

      /* If a constraint is removed */
      if(remcon)
      {
         /* Recalculate gnorm and reset fLastConstraint */
         dcopy1(n, g, temp);
         project(n, temp, pivot);
         gnorm = dnrm21(n, temp);
         fLastConstraint = f;
      }

      if(!remcon && !newcon)
      {
         /* No constraint removed & no new constraint : tests for convergence */
         if(fabs(difnew) <= ftol * (fscale))
         {
            if(bool(messages & TNC_MSG_INFO))
            {
               printf("tnc: |fn-fn-1| = %g -> convergence", fabs(difnew) / (fscale));
            }
            rc = TNC_FCONVERGED;
            break;
         }
         if(alpha * dnrm21(n, pk) <= xtol)
         {
            if(bool(messages & TNC_MSG_INFO))
            {
               printf("tnc: |xn-xn-1| = %g -> convergence", alpha * dnrm21(n, pk));
            }
            rc = TNC_XCONVERGED;
            break;
         }
      }

      project(n, g, pivot);

      if(bool(messages & TNC_MSG_ITER))
      {
         printCurrentIteration(n, f / fscale, gfull, niter, nfeval, pivot);
      }

      /* Compute the change in the iterates and the corresponding change in the gradients */
      if(!newcon)
      {
         yk = g - oldg;
         sk = alpha*pk;

         /* Set up parameters used in updating the preconditioning strategy */
         yksk = ddot1(n, yk, sk);

         if(icycle == (n - 1) || difnew < epsred * (fLastReset - f))
         {
            lreset = TNC_TRUE;
         }
         else
         {
            yrsr = ddot1(n, yr, sr);
            if(yrsr <= 0.0)
            {
               lreset = TNC_TRUE;
            }
            else
            {
               lreset = TNC_FALSE;
            }
         }
         upd1 = TNC_FALSE;
      }
   }

   if(bool(messages & TNC_MSG_ITER))
   {
      printCurrentIteration(n, f / fscale, gfull, niter, nfeval, pivot);
   }

   /* Unscaling */
   unscalex(n, x, xscale, xoffset);
   coercex(n, x, low, up);
   (f) /= fscale;

   return rc;
}

The minize_tnc method implements the main iterative loop of the algorithm. In each iteration it computes a search direction, performs a line search to determine the step length, and tracks which variables sit on their upper or lower bounds. It also projects gradients to account for active constraints and updates the preconditioner to accelerate convergence. The loop runs until a convergence criterion is met (for example, the projected gradient norm falls below pgtol), the maximum number of function evaluations is reached, or the line search fails.
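Two of the tests performed at the top of each iteration are easy to isolate. The sketch below, in standard C++ rather than MQL5, reproduces the local-minimum test and the rescaling rule from the loop; the function names is_local_minimum and rescale_factor are hypothetical, since the solver performs both checks inline.

```cpp
#include <cfloat>
#include <cmath>

// Local minimum test: the projected gradient norm (computed in scaled
// space) is compared against pgtol multiplied by the function scale.
bool is_local_minimum(double pgnorm, double pgtol, double fscale) {
    return pgnorm <= pgtol * fscale;
}

// Rescaling rule: when the gradient norm is more than `rescale` decades
// away from 1, the loop multiplies f, g and fscale by 1 / gnorm.
// Returns the multiplier to apply; 1.0 means "leave everything as is".
double rescale_factor(double gnorm, double rescale) {
    if (gnorm > DBL_EPSILON && std::fabs(std::log10(gnorm)) > rescale)
        return 1.0 / gnorm;
    return 1.0;
}
```

With the default rescale of 1.3, a gradient norm of 100 (two decades above 1) triggers a rescale by 0.01, while a norm of 5 leaves the scaling untouched.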

The final section of the code consists of various utility methods used for vector manipulation and constraint management. Methods such as scalex, unscalex, and scaleg manage the transformation of data between the user's coordinate space and the internal scaled space.

/* Unscale x */
void unscalex(int n, vector& x, const vector& xscale, const vector& xoffset)
{
   x = x*xscale+xoffset;
}

/* Scale x */
void scalex(int n, vector& x, const vector& xscale, const vector& xoffset)
{
   x = (x-xoffset)/xscale;
}

/* Scale g */
void scaleg(int n, vector& g, const vector& xscale, double& fscale)
{
   g*=xscale*fscale;
}
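The three transformations can be checked with a quick round trip. The sketch below is written in standard C++ rather than MQL5, using explicit loops where the MQL5 code relies on element-wise vector operators; the function names match the article, but the signatures are simplified and the sample numbers are arbitrary.

```cpp
#include <cstddef>
#include <vector>

using Vec = std::vector<double>;

// User space -> scaled space: x_s = (x - offset) / scale
void scalex(Vec& x, const Vec& xscale, const Vec& xoffset) {
    for (std::size_t i = 0; i < x.size(); ++i)
        x[i] = (x[i] - xoffset[i]) / xscale[i];
}

// Scaled space -> user space: x = x_s * scale + offset
void unscalex(Vec& x, const Vec& xscale, const Vec& xoffset) {
    for (std::size_t i = 0; i < x.size(); ++i)
        x[i] = x[i] * xscale[i] + xoffset[i];
}

// Gradient in scaled space. By the chain rule, d(fscale * f)/dx_s equals
// fscale * (df/dx) * (dx/dx_s) = fscale * g * xscale.
void scaleg(Vec& g, const Vec& xscale, double fscale) {
    for (std::size_t i = 0; i < g.size(); ++i)
        g[i] *= xscale[i] * fscale;
}
```

Applying scalex followed by unscalex with the same scale and offset returns the original point, which is why the solver can work entirely in scaled coordinates and convert back only when reporting results or invoking the callback.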

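The project and addConstraint methods handle the bounds of the problem. project zeroes the components of a vector (typically the gradient) that belong to variables currently held at a bound, while addConstraint updates the pivot array when a step reaches a bound, so the solution remains feasible throughout the optimization. The listing shows project together with projectConstants, which zeroes the components of variables whose scale is zero.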

void project(int n, vector& x, const int &pivot[])
{
   int i;
   for(i = 0; i < n; i++)
   {
      if(pivot[i] != 0)
      {
         x[i] = 0.0;
      }
   }
}

/*
 * Set x[i] = 0.0 if direction i is constant
 */
void projectConstants(int n, vector& x, const vector& xscale)
{
   int i;
   for(i = 0; i < n; i++)
   {
      if(xscale[i] == 0.0)
      {
         x[i] = 0.0;
      }
   }
}
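To see how the pivot array, the multiplier test, and project interact, consider the following sketch in standard C++ rather than MQL5. The body of setConstraints is not shown in the article, so init_pivot is a hypothetical stand-in that assumes the convention -1 for a variable at its lower bound, +1 at its upper bound, and 0 for a free variable, using exact comparisons. The multiplier test and the projection follow the code in minize_tnc and project.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

using Vec = std::vector<double>;

// Assumed pivot convention: -1 at lower bound, +1 at upper bound, 0 free.
std::vector<int> init_pivot(const Vec& x, const Vec& low, const Vec& up) {
    std::vector<int> pivot(x.size(), 0);
    for (std::size_t i = 0; i < x.size(); ++i) {
        if (x[i] == low[i]) pivot[i] = -1;
        else if (x[i] == up[i]) pivot[i] = 1;
    }
    return pivot;
}

// Multiplier test from the solver: release a bound when the descent
// direction (-g) points back into the feasible region.
void release_bounds(std::vector<int>& pivot, const Vec& g) {
    for (std::size_t i = 0; i < pivot.size(); ++i)
        if (-pivot[i] * g[i] < 0.0) pivot[i] = 0;
}

// Zero every component that belongs to a variable held at a bound.
void project(Vec& v, const std::vector<int>& pivot) {
    for (std::size_t i = 0; i < v.size(); ++i)
        if (pivot[i] != 0) v[i] = 0.0;
}

// Euclidean norm, the role played by dnrm21 in the solver.
double norm2(const Vec& v) {
    double s = 0.0;
    for (double c : v) s += c * c;
    return std::sqrt(s);
}
```

For x = (0, 1, 0.5) in the unit box with gradient (3, 2, -4), the first variable stays pinned at its lower bound (descending would leave the box), the second is released from its upper bound, and the projected gradient becomes (0, 2, -4).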

The Objective Function Wrapper

The second component of the implementation handles the objective function and its first- and second-order derivatives, all of which are defined in the separate header file num_diff.mqh. This header begins with several enumerations that serve as configuration settings for the numerical differentiation process. These enumerations (ENUM_SCHEME_DIRECTION, ENUM_DIFF_POINTS, and ENUM_HESS_DIFF_POINTS) let the user choose between one-sided and two-sided difference schemes and specify how gradients and Hessians are estimated: with a two-point, three-point, or complex-step formula, or with a user-supplied callable. These settings ultimately determine the balance between computational speed and the accuracy of the derivative approximations.

//+------------------------------------------------------------------+
//| directional scheme options                                       |
//+------------------------------------------------------------------+
enum ENUM_SCHEME_DIRECTION
{
   SCHEME_1=0,//1 sided
   SCHEME_2//2 sided
};
//+------------------------------------------------------------------+
//| num points of evaluation                                         |
//+------------------------------------------------------------------+
enum ENUM_DIFF_POINTS
{
   GRAD_POINT_2=0,//2-point
   GRAD_POINT_3,//3-point
   GRAD_POINT_CS,//complex
   GRAD_POINT_CALLABLE//callable
};
//+------------------------------------------------------------------+
//| num points of evaluation                                         |
//+------------------------------------------------------------------+
enum ENUM_HESS_DIFF_POINTS
{
   HESS_POINT_2=0,//2-point
   HESS_POINT_3,//3-point
   HESS_POINT_CS,//complex
   HESS_POINT_HESS_STRATEGY,//hessian update strategy
   HESS_POINT_CALLABLE//callable
};

The code then introduces the ObjReturn struct and the IObjective interface, which establish a standardized framework for the optimization problem. The ObjReturn structure is a simple container designed to hold both the function value and the gradient vector simultaneously, eliminating the need for separate, redundant function calls. The IObjective interface ensures that any objective function provided to the solver follows a consistent structure, requiring implementations for both the raw objective calculation and the combined function-gradient return.

//+------------------------------------------------------------------+
//|struct objective function return                                  |
//+------------------------------------------------------------------+
struct ObjReturn
{
   double f;
   vector g;

   ObjReturn(void)
   {
      f = double(0);
      g = vector::Zeros(0);
   }
   ObjReturn(ObjReturn& other)
   {
      f = other.f;
      g = other.g;
   }
   void operator=(ObjReturn& other)
   {
      f = other.f;
      g = other.g;
   }
};
//+------------------------------------------------------------------+
//|IObjective provides the base interface for an objective function  |
//|that will be provided to a minimizer routine                      |
//+------------------------------------------------------------------+
interface IObjective
{
   //---the objective function
   vector objective_function(vector& x);
   ObjReturn fun_and_grad(vector& x);
};
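As an illustration of what an implementation of this interface looks like, here is a sketch of the two-dimensional Rosenbrock function with an analytical gradient. It is written in standard C++ rather than MQL5, with an abstract class standing in for the MQL5 interface. The class name Rosenbrock is hypothetical, and for simplicity objective_function returns a scalar here, whereas the article's interface returns a vector.

```cpp
#include <vector>

using Vec = std::vector<double>;

// Function value and gradient returned together.
struct ObjReturn {
    double f = 0.0;
    Vec g;
};

// Stand-in for the IObjective interface.
struct IObjective {
    virtual ~IObjective() = default;
    virtual double objective_function(const Vec& x) = 0;
    virtual ObjReturn fun_and_grad(const Vec& x) = 0;
};

// f(x, y) = (1 - x)^2 + 100 (y - x^2)^2, minimum f = 0 at (1, 1).
struct Rosenbrock : IObjective {
    double objective_function(const Vec& x) override {
        const double a = 1.0 - x[0];
        const double b = x[1] - x[0] * x[0];
        return a * a + 100.0 * b * b;
    }

    ObjReturn fun_and_grad(const Vec& x) override {
        ObjReturn r;
        const double b = x[1] - x[0] * x[0];
        r.f = objective_function(x);
        r.g = {
            -2.0 * (1.0 - x[0]) - 400.0 * x[0] * b,  // df/dx
            200.0 * b                                // df/dy
        };
        return r;
    }
};
```

Returning the value and gradient from a single call lets an implementation share intermediate terms (here b) between the two, which is the redundancy the ObjReturn container is meant to remove.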

The GradDiffOptions and HessDiffOptions structs serve as configuration packages that store the parameters required for finite difference calculations. These structures hold the chosen estimation method, the relative and absolute step sizes, and the boundary constraints. By grouping these variables, the code passes differentiation settings through the solver's layers without cluttering function signatures.

//+------------------------------------------------------------------+
//|differentiation options                                           |
//+------------------------------------------------------------------+
struct GradDiffOptions
{
   ENUM_DIFF_POINTS method;
   vector rel_step;
   vector abs_step;
   matrix bounds;

   GradDiffOptions(void)
   {
      method = WRONG_VALUE;
      rel_step = abs_step = vector::Zeros(0);
      bounds = matrix::Zeros(0,0);
   }
   GradDiffOptions(ENUM_DIFF_POINTS m, vector& relstep, vector& absstep, matrix& bnds)
   {
      method = m;
      rel_step = relstep;
      abs_step = absstep;
      bounds = bnds;
   }
   GradDiffOptions(GradDiffOptions& other)
   {
      method = other.method;
      rel_step = other.rel_step;
      abs_step = other.abs_step;
      bounds = other.bounds;
   }
   void operator=(GradDiffOptions& other)
   {
      method = other.method;
      rel_step = other.rel_step;
      abs_step = other.abs_step;
      bounds = other.bounds;
   }
};
//+------------------------------------------------------------------+
//|differentiation options                                           |
//+------------------------------------------------------------------+
struct HessDiffOptions
{
   bool as_linear_operator;
   ENUM_HESS_DIFF_POINTS method;
   vector rel_step;
   vector abs_step;

   HessDiffOptions(void)
   {
      method = WRONG_VALUE;
      as_linear_operator = false;
      rel_step = abs_step = vector::Zeros(0);
   }
   HessDiffOptions(ENUM_HESS_DIFF_POINTS m, vector& relstep, vector& absstep, bool aslinearoperator)
   {
      method = m;
      rel_step = relstep;
      abs_step = absstep;
      as_linear_operator = aslinearoperator;
   }
   HessDiffOptions(HessDiffOptions& other)
   {
      method = other.method;
      rel_step = other.rel_step;
      abs_step = other.abs_step;
      as_linear_operator = other.as_linear_operator;
   }
   void operator=(HessDiffOptions& other)
   {
      method = other.method;
      rel_step = other.rel_step;
      abs_step = other.abs_step;
      as_linear_operator = other.as_linear_operator;
   }
};

The CFunctor class is the central manager of the optimization state, implementing the logic for calculating and caching function values, gradients, and Hessians. It includes internal methods to check if a value has already been calculated for the current position, avoiding expensive, redundant computations. This class also tracks the best function value and position found so far, serving as a wrapper that can switch between user-provided derivatives and automated finite difference estimations.

    //+----------------------------------------------------------------------------+
    //|function objective representing the objective function and its derivatives. |
    //+----------------------------------------------------------------------------+
    class CFunctor : public IObjective
    {
    protected:
       vector            m_xp;                               // initial parameters
       vector            m_x;                                // current position
       ulong             m_n;                                // problem dimension
       matrix            m_H;                                // cached Hessian
       int               m_nfev, m_ngev, m_nhev;             // evaluation counters
       bool              m_fupdated, m_gupdated, m_hupdated; // cache validity flags
       double            m_lowest_f, m_f;                    // best and current function value
       vector            m_lowest_x, m_g;                    // best position and current gradient
       GradDiffOptions   m_grad_options;
       HessDiffOptions   m_hess_options;

       // Evaluate f at m_x unless cached; track the best point seen so far.
       void update_fun(void)
       {
          if(!m_fupdated)
          {
             double fx = wrapped_fun(m_x);
             if(fx < m_lowest_f)
             {
                m_lowest_f = fx;
                m_lowest_x = m_x;
             }
             m_f = fx;
             m_fupdated = true;
          }
       }
       // Evaluate the gradient at m_x unless cached.
       void update_grad(void)
       {
          if(!m_gupdated)
          {
             // finite differences need f(m_x) as the reference value
             if(m_grad_options.method != GRAD_POINT_CALLABLE)
                update_fun();
             vector ff(1);
             ff[0] = m_f;
             m_g = wrapped_grad(m_x, ff);
             m_gupdated = true;
          }
       }
       // Evaluate the Hessian at m_x unless cached.
       void update_hess(void)
       {
          if(!m_hupdated)
          {
             if(m_hess_options.method != HESS_POINT_CALLABLE)
             {
                update_grad();
                m_H = wrapped_hess(m_x, m_g);
             }
             else
             {
                vector a = vector::Zeros(0);
                m_H = wrapped_hess(m_x, a);
             }
             m_hupdated = true;
          }
       }
       // Move to a new position and invalidate all cached values.
       void update_x(vector& x)
       {
          m_x = x;
          m_fupdated = m_hupdated = m_gupdated = false;
       }

    public:
       CFunctor(void)
       {
          m_fupdated = m_hupdated = m_gupdated = false;
          m_lowest_f = DBL_MAX;
          m_lowest_x = m_g = vector::Zeros(0);
          m_H = matrix::Zeros(0,0);
          m_nfev = m_ngev = m_nhev = 0;
          m_grad_options.method = GRAD_POINT_2;
          m_hess_options.method = HESS_POINT_CALLABLE;
          m_hess_options.as_linear_operator = true;
       }
       ~CFunctor(void)
       {
       }
       void setGradOption(ENUM_DIFF_POINTS grad)
       {
          m_grad_options.method = grad;
       }
       void setAbsoluteStep(vector& epsilon)
       {
          m_grad_options.abs_step = epsilon;
          m_hess_options.abs_step = epsilon;
       }
       void setBounds(matrix& finite_bounds)
       {
          m_grad_options.bounds = finite_bounds;
       }
       void setRelativeStep(vector& finite_diff_rel_step)
       {
          m_grad_options.rel_step = finite_diff_rel_step;
          m_hess_options.rel_step = finite_diff_rel_step;
       }
       void setHessOption(ENUM_HESS_DIFF_POINTS hess)
       {
          m_hess_options.method = hess;
       }
       // Validate the configuration and prime the caches at the starting point.
       bool initialize(vector& x)
       {
          m_x = x;
          m_xp = m_x;
          m_n = x.Size();
          if(m_grad_options.method != GRAD_POINT_CALLABLE && m_hess_options.method != HESS_POINT_CALLABLE)
          {
             Print(__FUNCTION__, " Whenever the gradient is estimated via finite-differences, it is required that the Hessian be estimated using one of the quasi-Newton strategies.");
             return false;
          }
          double check = orig_fun(m_x);
          // Reject only NaN and infinity; the original FP_NORMAL test also rejected a legitimate value of exactly 0.
          if(!MathIsValidNumber(check))
          {
             Print(__FUNCTION__, " check the implementation of the objective function, currently evaluates to an invalid number ");
             return false;
          }
          if(m_grad_options.method == GRAD_POINT_CALLABLE)
          {
             vector a = grad_fun(m_x);
             if(!a.Size())
             {
                Print(__FUNCTION__, " check the implementation of the overridden gradient function, currently evaluates to an empty vector ");
                return false;
             }
          }
          update_fun();
          update_grad();
          if(m_hess_options.method == HESS_POINT_CALLABLE)
          {
             vector a = vector::Zeros(0);
             m_H = wrapped_hess(x, a);
             m_hupdated = true;
          }
          return true;
       }
       // Counted call to the user's objective.
       double wrapped_fun(vector& x)
       {
          m_nfev += 1;
          vector copy = x;
          return orig_fun(copy);
       }
       // Objective as a length-1 vector, the form expected by approx_derivative.
       vector objective_function(vector& x)
       {
          vector r(1);
          r[0] = wrapped_fun(x);
          return r;
       }
       // Counted gradient: user-supplied if callable, otherwise finite differences.
       vector wrapped_grad(vector& x, vector& f0)
       {
          m_ngev += 1;
          vector copy = x;
          if(m_grad_options.method == GRAD_POINT_CALLABLE)
             return grad_fun(copy);
          IObjective* objective = GetPointer(this);
          matrix ad = approx_derivative(objective, copy, f0, m_grad_options.method, m_grad_options.rel_step, m_grad_options.abs_step, m_grad_options.bounds);
          //Print(__FUNCTION__, " - ", x, " - ", f0, " -> ", ad.Row(0), " | ", m_ngev);
          return ad.Row(0);
       }
       // Counted Hessian: user-supplied if callable, otherwise finite differences.
       matrix wrapped_hess(vector& x, vector& f0)
       {
          m_nhev += 1;
          vector copy = x;
          if(m_hess_options.method == HESS_POINT_CALLABLE)
             return hess_fun(copy);
          IObjective* objective = GetPointer(this);
          return approx_derivative(objective, x, f0, m_grad_options.method, m_grad_options.rel_step, m_grad_options.abs_step, m_grad_options.bounds);
       }
       vector lower_bounds(void)
       {
          return m_grad_options.bounds.Col(0);
       }
       vector upper_bounds(void)
       {
          return m_grad_options.bounds.Col(1);
       }
       vector initial_params(void)
       {
          return m_xp;
       }
       // Override these in a derived class to supply the problem.
       virtual double orig_fun(vector& x)
       {
          return double("nan");
       }
       virtual vector grad_fun(vector& x)
       {
          return vector::Zeros(0);
       }
       virtual matrix hess_fun(vector& x)
       {
          return matrix::Zeros(0,0);
       }
       // Return f and its gradient at x, re-evaluating only if x has changed.
       ObjReturn fun_and_grad(vector& x)
       {
          vector dif = MathAbs(m_x - x);
          if(dif.Sum() >= DBL_EPSILON || dif.HasNan())
          {
             update_x(x);
          }
          update_fun();
          update_grad();
          ObjReturn out;
          out.f = m_f;
          out.g = m_g;
          return out;
       }
    };
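To make the role of the virtual methods concrete, here is a minimal sketch of how a problem might be plugged into CFunctor. The class name CShiftedSphere and the objective itself are hypothetical, chosen only for illustration, and the include path assumes that num_diff.mqh sits directly in the MQL5 include folder and exposes CFunctor, ObjReturn, and the GRAD_POINT_* enumeration.

```mql5
// Assumption: CFunctor, ObjReturn and GRAD_POINT_CALLABLE are visible through
// this include; adjust the path to match your installation.
#include <num_diff.mqh>

// Hypothetical objective: f(x) = sum_i (x_i - 1)^2, gradient 2*(x - 1).
class CShiftedSphere : public CFunctor
{
public:
   virtual double orig_fun(vector& x)
   {
      vector d = x - 1.0;
      return d.Dot(d);
   }
   virtual vector grad_fun(vector& x)
   {
      vector d = x - 1.0;
      return 2.0 * d;
   }
};

void OnStart()
{
   CShiftedSphere obj;
   // use the analytical gradient instead of finite differences
   obj.setGradOption(GRAD_POINT_CALLABLE);

   vector x0 = {3.0, -2.0};
   if(!obj.initialize(x0))
      return;

   // cached values are reused as long as the position does not change
   ObjReturn r = obj.fun_and_grad(x0);
   Print("f = ", r.f, "  g = ", r.g);
}
```

For this starting point the analytical values are f = 13 and g = (4, -6), which gives a quick sanity check on the overrides. Switching to GRAD_POINT_2 would additionally require calling setBounds with an n-by-2 matrix, because approx_derivative validates the bounds against the size of x0.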

The next major section in num_diff.mqh consists of the utility functions eps_for_method and compute_absolute_step, which choose a suitable step size for numerical differentiation. Because computers have finite precision, a step that is too small leads to round-off errors, while a step that is too large leads to truncation errors. These functions combine machine epsilon with the chosen differentiation method to pick a step that balances the two error sources when probing the function's slope.

    //+----------------------------------------------------------------------------------+
    //|Calculates relative EPS step to use for a given data type and numdiff step method.|
    //+----------------------------------------------------------------------------------+
    double eps_for_method(ENUM_DIFF_POINTS method)
    {
       switch(method)
       {
          case GRAD_POINT_2:
          case GRAD_POINT_CS:
             return pow(2.220446049250313e-16, 0.5);      // sqrt of machine epsilon
          case GRAD_POINT_3:
             return pow(2.220446049250313e-16, (1./3.));  // cube root of machine epsilon
       }
       return DBL_EPSILON;
    }
    //+---------------------------------------------------------------------------------+
    //|Computes an absolute step from a relative step for finite difference calculation.|
    //+---------------------------------------------------------------------------------+
    vector compute_absolute_step(vector& rel_step, vector& x0, vector& f0, ENUM_DIFF_POINTS method)
    {
       // sign of each coordinate, with zero treated as positive
       vector signx0 = x0;
       vector abs_step = signx0;
       for(ulong i = 0; i < signx0.Size(); ++i)
          signx0[i] = (x0[i] >= 0.) ? 1.0 : -1.0;

       double rstep = eps_for_method(method);
       if(rel_step.Size() == 0)
       {
          // no user step: scale the default step by max(1, |x0|)
          for(ulong i = 0; i < abs_step.Size(); ++i)
             abs_step[i] = rstep * signx0[i] * MathMax(1., fabs(x0[i]));
       }
       else
       {
          // user-supplied relative step (the original listing used rstep here,
          // which silently ignored rel_step)
          abs_step = rel_step * signx0 * MathAbs(x0);
          // fall back to the default wherever the step vanishes in floating point
          vector dx = ((x0 + abs_step) - x0);
          for(ulong i = 0; i < abs_step.Size(); ++i)
             if(dx[i] == 0.0)
                abs_step[i] = rstep * signx0[i] * MathMax(1., fabs(x0[i]));
       }
       return abs_step;
    }
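The exponents used in eps_for_method can be motivated by a standard textbook error estimate. The sketch below assumes a smooth objective and a rounding error of roughly ε|f(x)| per function evaluation; these are modelling assumptions rather than quantities computed by the code.

```latex
% Two-point (forward) difference: truncation error + round-off error
E_2(h) \approx \frac{h}{2}\,\lvert f''(x)\rvert + \frac{2\,\varepsilon\,\lvert f(x)\rvert}{h}
\quad\Longrightarrow\quad
h^{*} = 2\sqrt{\frac{\varepsilon\,\lvert f(x)\rvert}{\lvert f''(x)\rvert}} \sim \sqrt{\varepsilon} \approx 1.49\times 10^{-8}

% Three-point (central) difference
E_3(h) \approx \frac{h^{2}}{6}\,\lvert f'''(x)\rvert + \frac{\varepsilon\,\lvert f(x)\rvert}{h}
\quad\Longrightarrow\quad
h^{*} = \left(\frac{3\,\varepsilon\,\lvert f(x)\rvert}{\lvert f'''(x)\rvert}\right)^{1/3} \sim \varepsilon^{1/3} \approx 6.06\times 10^{-6}

% Default absolute step used when no relative step is supplied
h_i = \sqrt{\varepsilon}\;\operatorname{sign}(x_i)\,\max\bigl(1, \lvert x_i\rvert\bigr),
\qquad \text{e.g. } x_i = -250 \;\Rightarrow\; h_i \approx -3.73\times 10^{-6}
```

Scaling by max(1, |x_i|) keeps the perturbation proportional to the magnitude of the variable, so large coordinates are not probed with a step that disappears in floating-point addition.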

The adjust_scheme_to_bounds function prevents numerical probes from stepping outside the permitted variable boundaries. If the algorithm is near a limit, this function automatically flips the direction of the finite difference step or switches from a centered scheme to a one-sided scheme. This ensures the objective function is never evaluated at an invalid point, which is essential for stability in constrained optimization.

    //+------------------------------------------------------------------+
    //|Adjust finite difference scheme to the presence of bounds.        |
    //+------------------------------------------------------------------+
    vector adjust_scheme_to_bounds(vector& x0, vector& h, int num_steps, ENUM_SCHEME_DIRECTION scheme,
                                   vector& lb, vector& ub, vector &one_sided)
    {
       switch(scheme)
       {
          case SCHEME_1:
             one_sided = vector::Ones(h.Size());
             break;
          case SCHEME_2:
             // a two-sided scheme starts out central everywhere; the original
             // listing initialized this to Ones, which forced one-sided steps
             one_sided = vector::Zeros(h.Size());
             h = MathAbs(h);
             break;
       }

       // nothing to adjust when every variable is unbounded
       bool all_true = true;
       for(ulong i = 0; i < x0.Size(); ++i)
          if(lb[i] != -double("inf") || ub[i] != double("inf"))
          {
             all_true = false;
             break;
          }
       if(all_true)
          return h;

       vector h_total = h * double(num_steps);
       vector h_adjusted = h;
       vector lower_dist = x0 - lb;
       vector upper_dist = ub - x0;
       int forward, backward, fitting, violated, central, adjusted_central;
       forward = backward = violated = fitting = central = false;
       double x = 0.;
       double min_dist = 0.;

       switch(scheme)
       {
          case SCHEME_1:
          {
             for(ulong i = 0; i < h.Size(); ++i)
             {
                x = x0[i] + h_total[i];
                violated = int(x < lb[i] | x > ub[i]);
                fitting = int(fabs(h_total[i]) <= MathMax(lower_dist[i], upper_dist[i]));
                // the step leaves the box but fits on the other side: flip it
                if(bool(violated & fitting))
                   h_adjusted[i] *= -1.;
                // the step does not fit at all: shrink it towards the farther bound
                forward = int((upper_dist[i] >= lower_dist[i]) & ~fitting);
                if(forward)
                   h_adjusted[i] = upper_dist[i] / double(num_steps);
                backward = int((upper_dist[i] < lower_dist[i]) & ~fitting);
                if(backward)
                   h_adjusted[i] = -lower_dist[i] / double(num_steps);
             }
          }
          break;
          case SCHEME_2:
          {
             for(ulong i = 0; i < h.Size(); ++i)
             {
                // central step fits on both sides
                central = int(((lower_dist[i] >= h_total[i]) & (upper_dist[i] >= h_total[i])));
                // otherwise use a one-sided step towards the farther bound
                forward = int(((upper_dist[i] >= lower_dist[i]) & ~central));
                if(forward)
                {
                   h_adjusted[i] = MathMin(h[i], 0.5 * upper_dist[i] / double(num_steps));
                   one_sided[i] = 1.;
                }
                backward = int(((upper_dist[i] < lower_dist[i]) & ~central));
                if(backward)
                {
                   h_adjusted[i] = -1. * MathMin(h[i], 0.5 * lower_dist[i] / double(num_steps));
                   one_sided[i] = 1.0;
                }
                // if a shrunken central step is at least as large, prefer it
                min_dist = MathMin(upper_dist[i], lower_dist[i]) / double(num_steps);
                adjusted_central = int((~central & (fabs(h_adjusted[i]) <= min_dist)));
                if(adjusted_central)
                {
                   h_adjusted[i] = min_dist;
                   one_sided[i] = 0.;
                }
             }
          }
          break;
       }
       return h_adjusted;
    }
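The flipping behaviour is easiest to see on a point that sits exactly on its upper bound. The script below is a small sketch rather than part of the attached code; it assumes that num_diff.mqh is reachable through the include path shown and that it exposes adjust_scheme_to_bounds together with the SCHEME_1 enumerator.

```mql5
#include <num_diff.mqh>   // assumed include path

void OnStart()
{
   // first variable sits on its upper bound, second is in the interior
   vector x0 = {1.0, 0.5};
   vector h  = {1.0e-8, 1.0e-8};
   vector lb = {0.0, 0.0};
   vector ub = {1.0, 1.0};
   vector one_sided;

   // one forward step per variable (num_steps = 1, one-sided scheme)
   vector adj = adjust_scheme_to_bounds(x0, h, 1, SCHEME_1, lb, ub, one_sided);

   Print("original h: ", h);
   Print("adjusted h: ", adj);
}
```

Tracing the listing by hand, the forward probe for the first variable would land at 1 + 1e-8, outside the box, yet a step of that size fits comfortably on the lower side, so its sign is flipped. The second variable has room in both directions and keeps its original step.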

The final section contains the dense_difference and approx_derivative functions, which carry out the finite difference calculations required to build the Jacobian or gradient matrix. The dense_difference function iterates through every dimension of the problem, perturbing the input vector and measuring the resulting change in output. The approx_derivative function acts as the high-level coordinator: it validates the inputs, manages the step adjustments, and returns the final derivative matrix to the optimizer.

    //+------------------------------------------------------------------+
    //|dense difference                                                  |
    //+------------------------------------------------------------------+
    matrix dense_difference(IObjective* fun, vector& x0, vector& f0, vector& h, vector& use_one_sided, ENUM_DIFF_POINTS method)
    {
       ulong m = f0.Size();
       ulong n = x0.Size();
       matrix j_transposed = matrix::Zeros(n, m);
       vector x1 = x0;
       vector x2 = x0;
       vector df = vector::Zeros(x0.Size());

       for(ulong i = 0; i < h.Size(); ++i)
       {
          double dx = 1.e-12;
          if(method == GRAD_POINT_2)
          {
             // forward (or backward, if h[i] < 0) difference
             x1[i] += h[i];
             dx = x1[i] - x0[i];
             df = fun.objective_function(x1) - f0;
          }
          else if(method == GRAD_POINT_3 && use_one_sided[i] != 0.0)
          {
             // three-point one-sided difference
             x1[i] += h[i];
             x2[i] += 2. * h[i];
             dx = x2[i] - x0[i];
             df = -3.0 * f0 + 4 * fun.objective_function(x1) - fun.objective_function(x2);
          }
          else if(method == GRAD_POINT_3 && use_one_sided[i] == 0.0)
          {
             // central difference
             x1[i] -= h[i];
             x2[i] += h[i];
             dx = x2[i] - x1[i];
             df = fun.objective_function(x2) - fun.objective_function(x1);
          }
          j_transposed.Row(df / dx, i);
          // restore the perturbed coordinate before moving on
          x1[i] = x2[i] = x0[i];
       }
       return j_transposed.Transpose();
    }
    //+---------------------------------------------------------------------------------------+
    //|Compute finite difference approximation of the derivatives of a vector-valued function.|
    //+---------------------------------------------------------------------------------------+
    matrix approx_derivative(IObjective* fun, vector& x0, vector &f0, ENUM_DIFF_POINTS method,
                             vector &rel_step, vector &abs_step, matrix& bounds/*sparsity,as linear_operator*/)
    {
       if(CheckPointer(fun) == POINTER_INVALID)
       {
          Print(__FUNCTION__, " fun variable is an invalid pointer ");
          return matrix::Zeros(0,0);
       }

       vector lb, ub;
       lb = bounds.Col(0);
       ub = bounds.Col(1);
       if(lb.Size() != x0.Size() || ub.Size() != x0.Size())
       {
          Print(__FUNCTION__, " inconsistent shapes between bounds and x0 ");
          return matrix::Zeros(0,0);
       }

       // evaluate the reference value if the caller did not supply one
       if(!f0.Size())
          f0 = fun.objective_function(x0);

       for(ulong i = 0; i < x0.Size(); ++i)
          if(x0[i] < lb[i] || x0[i] > ub[i])
          {
             Print(__FUNCTION__, " x0 violates bound constraints ");
             return matrix::Zeros(0,0);
          }

       vector h;
       if(!abs_step.Size())
          h = compute_absolute_step(rel_step, x0, f0, method);
       else
       {
          h = abs_step;
          vector signx0 = vector::Zeros(x0.Size());
          for(ulong i = 0; i < x0.Size(); ++i)
          {
             signx0[i] = (x0[i] >= 0.0) ? 1.0 : -1.0;
             // replace steps that vanish in floating point with the default step
             if(((x0[i] + h[i]) - x0[i]) == 0.0)
                h[i] = eps_for_method(method) * signx0[i] * MathMax(1., fabs(x0[i]));
          }
       }

       vector use_one_sided;
       switch(method)
       {
          case GRAD_POINT_2:
             h = adjust_scheme_to_bounds(x0, h, 1, SCHEME_1, lb, ub, use_one_sided);
             break;
          case GRAD_POINT_3:
             h = adjust_scheme_to_bounds(x0, h, 1, SCHEME_2, lb, ub, use_one_sided);
             break;
          case GRAD_POINT_CS:
             use_one_sided = vector::Zeros(x0.Size());
             break;
       }
       return dense_difference(fun, x0, f0, h, use_one_sided, method);
    }
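Written out as formulas, the three branches of dense_difference correspond to the following difference quotients. Here e_i denotes the i-th unit vector and h_i the (possibly sign-flipped) step for coordinate i; this notation is introduced only for this summary.

```latex
% GRAD_POINT_2: two-point one-sided difference
\frac{\partial f}{\partial x_i} \approx \frac{f(x + h_i e_i) - f(x)}{h_i}

% GRAD_POINT_3 with use_one_sided[i] != 0: three-point one-sided difference
\frac{\partial f}{\partial x_i} \approx \frac{-3 f(x) + 4 f(x + h_i e_i) - f(x + 2 h_i e_i)}{2 h_i}

% GRAD_POINT_3 with use_one_sided[i] == 0: central difference
\frac{\partial f}{\partial x_i} \approx \frac{f(x + h_i e_i) - f(x - h_i e_i)}{2 h_i}
```

The first quotient is first-order accurate in h_i, while the other two are second-order accurate. Note that the listing contains no branch for GRAD_POINT_CS, so that method leaves the derivative matrix at zero.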

Conclusion

This article presented an implementation of the Truncated Newton Conjugate-Gradient optimization algorithm in MQL5. The implementation supports minimization both with and without box constraints, offering a versatile tool for MQL5 developers. The component that manages objective function evaluation is flexible, supporting both explicitly defined gradient functions and automated numerical differentiation. We validated the implementation on the challenging Rosenbrock function, demonstrating its ability to navigate a difficult curved valley. Finally, we showed a practical application by integrating the TNC optimizer into a logistic regression model as an alternative to LBFGS. All code referenced in the article is attached and listed below. Readers gain a ready-to-use optimizer by including the primary header files tnc.mqh and num_diff.mqh.

| File | Description |
|---|---|
| MQL5/experts/RosenBrock.mq5 | The EA used to evaluate the Rosenbrock function in the Strategy Tester. |
| MQL5/files/iris.csv | The iris dataset used in the LogisticRegression script. |
| MQL5/include/tnc | The folder containing the tnc.mqh header file. |
| MQL5/include/Regression | The folder containing the logistic.mqh header file. |
| MQL5/include/np.mqh | This header contains various vector and matrix manipulation utilities. |
| MQL5/include/num_diff.mqh | This header contains the utilities used to implement numerical differentiation. |
| MQL5/scripts/LogisticRegression.mq5 | This script demonstrates an implementation of logistic regression using the TNC solver. |
| MQL5/scripts/TestTNC.mq5 | This script evaluates the TNC solver on the Rosenbrock function. |
