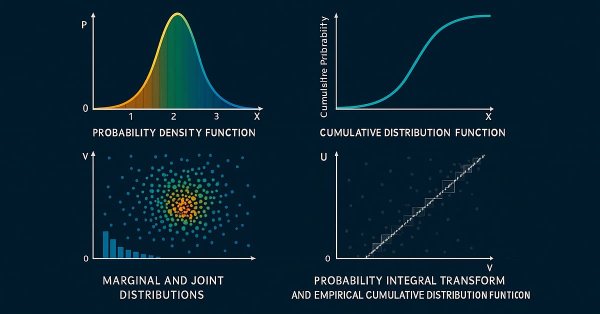

Bivariate Copulae in MQL5 (Part 1): Implementing Gaussian and Student's t-Copulae for Dependency Modeling

This is the first part of an article series on implementing bivariate copulae in MQL5. It presents code for the Gaussian and Student's t-copulae and covers the fundamentals of statistical copulae and related topics. The code is based on the ArbitrageLab Python package by Hudson and Thames.
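
As a hedged illustration of what a Gaussian copula does (this is not the article's MQL5 port of ArbitrageLab), the Python sketch below draws dependent uniform pairs. It correlates two standard normals through a Cholesky step and maps each one through the normal CDF. The function name `sample_gaussian_copula` is hypothetical.

```python
import math
import random
from statistics import NormalDist


def sample_gaussian_copula(rho, n, seed=None):
    """Draw n pairs (u, v) from a bivariate Gaussian copula with correlation rho."""
    if not -1.0 < rho < 1.0:
        raise ValueError("rho must lie strictly between -1 and 1")
    rng = random.Random(seed)
    std_normal = NormalDist()  # standard normal N(0, 1)
    scale = math.sqrt(1.0 - rho * rho)
    pairs = []
    for _ in range(n):
        z1 = rng.gauss(0.0, 1.0)
        # Cholesky step: z2 = rho * z1 + sqrt(1 - rho^2) * e has correlation rho with z1
        z2 = rho * z1 + scale * rng.gauss(0.0, 1.0)
        # Probability integral transform: each margin becomes Uniform(0, 1),
        # while the dependence structure of (z1, z2) is kept
        pairs.append((std_normal.cdf(z1), std_normal.cdf(z2)))
    return pairs


pairs = sample_gaussian_copula(0.9, 5, seed=42)
```

The Student's t-copula follows the same pattern with one change. Both correlated normals are divided by sqrt(W / ν), where W ~ χ²(ν), and are then mapped through the t CDF instead of the normal CDF, which gives the t-copula its tail dependence. That variant is not shown because the Python standard library has no t CDF.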

Neural Networks in Trading: Multi-Task Learning Based on the ResNeXt Model (Final Part)

We continue exploring a multi-task learning framework based on ResNeXt, which is characterized by modularity, high computational efficiency, and the ability to identify stable patterns in data. Using a single encoder and specialized "heads" reduces the risk of model overfitting and improves the quality of forecasts.

MQL5 Wizard Techniques you should know (Part 20): Symbolic Regression

Symbolic regression is a form of regression that starts with few or no assumptions about what the underlying model mapping the data under study looks like. Although it can be implemented with Bayesian methods or neural networks, we look at how an implementation based on genetic algorithms can help customize an expert signal class usable in the MQL5 Wizard.

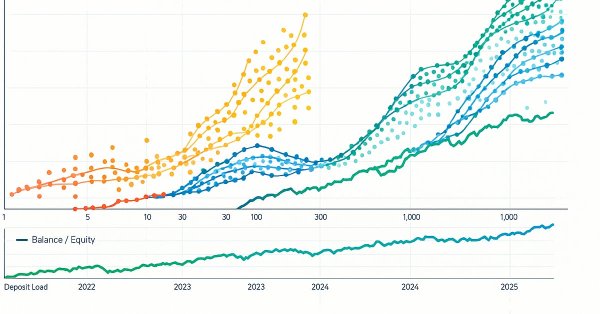

Overcoming The Limitation of Machine Learning (Part 4): Overcoming Irreducible Error Using Multiple Forecast Horizons

Machine learning is often viewed through statistical or linear algebraic lenses, but this article emphasizes a geometric perspective of model predictions. It demonstrates that models do not truly approximate the target but rather map it onto a new coordinate system, creating an inherent misalignment that results in irreducible error. The article proposes that multi-step predictions, comparing the model’s forecasts across different horizons, offer a more effective approach than direct comparisons with the target. By applying this method to a trading model, the article demonstrates significant improvements in profitability and accuracy without changing the underlying model.

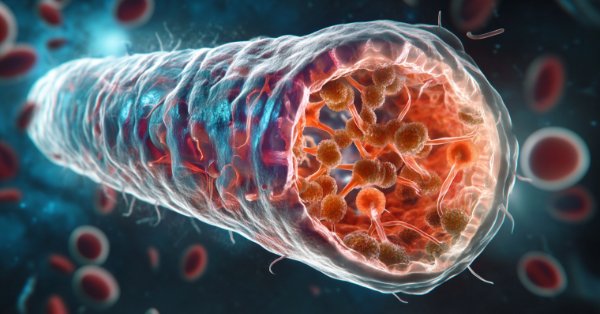

Bacterial Chemotaxis Optimization (BCO)

The article presents the original version of the Bacterial Chemotaxis Optimization (BCO) algorithm and its modified version. We take a closer look at the differences between them, with a special focus on the new BCOm version. BCOm simplifies the bacterial movement mechanism, reduces the dependence on positional history, and uses simpler math than the computationally heavy original. We also run tests and summarize the results.

Chaos optimization algorithm (COA): Continued

We continue studying the chaos optimization algorithm. This second part deals with the practical aspects of implementing the algorithm, its testing, and the conclusions.

Population optimization algorithms: Artificial Multi-Social Search Objects (MSO)

This is a continuation of the previous article, which considered the idea of social groups. The article explores the evolution of social groups using movement and memory algorithms. The results help explain how social systems evolve and show how these principles can be applied to optimization and the search for solutions.

Population optimization algorithms: Binary Genetic Algorithm (BGA). Part I

In this article, we explore various methods used in binary genetic and other population-based algorithms. We look at the main components of the algorithm, such as selection, crossover and mutation, and their impact on optimization. We also study data representation methods and how they affect the optimization results.
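
The main components named above can be sketched in Python as a minimal set of operators. Each one is a common textbook choice, which may differ from the operators used in the article: binary decoding of a chromosome into a real parameter, one-point crossover, bit-flip mutation, and tournament selection. All function names here are hypothetical.

```python
import random


def decode(bits, lo, hi):
    """Map a bit list (most significant bit first) to a real number in [lo, hi]."""
    value = 0
    for b in bits:
        value = (value << 1) | b
    return lo + (hi - lo) * value / (2 ** len(bits) - 1)


def one_point_crossover(parent_a, parent_b, rng):
    """Swap the tails of two equal-length parents after a random cut point."""
    cut = rng.randint(1, len(parent_a) - 1)
    return parent_a[:cut] + parent_b[cut:], parent_b[:cut] + parent_a[cut:]


def bit_flip_mutation(bits, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [1 - b if rng.random() < rate else b for b in bits]


def tournament_select(population, fitness, rng, k=2):
    """Return the fittest of k randomly chosen individuals (maximization)."""
    contenders = rng.sample(range(len(population)), k)
    best = max(contenders, key=lambda i: fitness[i])
    return population[best]


rng = random.Random(0)
child_a, child_b = one_point_crossover([0] * 8, [1] * 8, rng)
x = decode(bit_flip_mutation(child_a, 0.05, rng), -5.0, 5.0)
```

With plain binary encoding, adjacent real values can differ in many bits (for example, 0111 and 1000). This is one reason the choice of data representation affects optimization results.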

MQL5 Wizard Techniques you should know (Part 60): Inference Learning (Wasserstein-VAE) with Moving Average and Stochastic Oscillator Patterns

We wrap up our look at the complementary pairing of the MA and the Stochastic oscillator by examining the role inference learning can play after supervised learning and reinforcement learning. There are many ways to approach inference learning in this case; ours is to use variational autoencoders. We explore this in Python before exporting the trained model via ONNX for use in a wizard-assembled Expert Advisor in MetaTrader.

Neuroboids Optimization Algorithm (NOA)

A new bioinspired optimization metaheuristic, NOA (Neuroboids Optimization Algorithm), combines the principles of collective intelligence and neural networks. Unlike conventional methods, the algorithm uses a population of self-learning "neuroboids", each with its own neural network that adapts its search strategy in real time. The article reveals the architecture of the algorithm, the agents' self-learning mechanisms, and the prospects for applying this hybrid approach to complex optimization problems.

Hidden Markov Models in Machine Learning-Based Trading Systems

Hidden Markov Models (HMMs) are a powerful class of probabilistic models designed to analyze sequential data, where observed events depend on some sequence of unobserved (hidden) states that form a Markov process. The main assumptions of HMM include the Markov property for hidden states, meaning that the probability of transition to the next state depends only on the current state, and the independence of observations given knowledge of the current hidden state.
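
Both assumptions above show up directly in the forward algorithm, which computes the probability of an observation sequence under a discrete HMM. The Python sketch below is a generic textbook version, not code from the article. The two-regime numbers in the usage line are purely illustrative, and `forward_likelihood` is a hypothetical name.

```python
def forward_likelihood(obs, start, trans, emit):
    """Return P(observations) for a discrete HMM via the forward algorithm.

    start[i]    : P(first hidden state = i)
    trans[i][j] : P(next state = j | current state = i)   (Markov property)
    emit[i][o]  : P(observation o | hidden state = i)     (observations independent given the state)
    """
    n_states = len(start)
    # alpha[i] = P(obs[0..t], hidden state at t = i)
    alpha = [start[i] * emit[i][obs[0]] for i in range(n_states)]
    for o in obs[1:]:
        # The next alpha depends only on the current alpha (Markov property),
        # multiplied by the emission probability of the new observation
        alpha = [
            sum(alpha[i] * trans[i][j] for i in range(n_states)) * emit[j][o]
            for j in range(n_states)
        ]
    return sum(alpha)


# Illustrative only: two hidden regimes, observations 0 = down bar, 1 = up bar
start = [0.5, 0.5]
trans = [[0.9, 0.1], [0.2, 0.8]]
emit = [[0.7, 0.3], [0.2, 0.8]]
p = forward_likelihood([1, 1, 0], start, trans, emit)
```

For long price series, the products underflow. Practical implementations therefore rescale alpha at each step or work in log space.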

Neural networks made easy (Part 63): Unsupervised Pretraining for Decision Transformer (PDT)

We continue to discuss the family of Decision Transformer methods. In the previous article, we saw that training the transformer underlying these methods is a rather complex task that requires a large labeled training dataset. In this article, we look at an algorithm that uses unlabeled trajectories for preliminary model training.

MQL5 Wizard Techniques you should know (Part 35): Support Vector Regression

Support Vector Regression seeks a function, or "hyperplane", that best describes the relationship between two sets of data. We attempt to exploit this for time series forecasting within custom classes of the MQL5 Wizard.

Gating mechanisms in ensemble learning

In this article, we continue our exploration of ensemble models by discussing the concept of gates, specifically how they may be useful in combining model outputs to enhance either prediction accuracy or model generalization.
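
One common way a gate combines model outputs is a softmax gate, as in mixture-of-experts models. The gate turns a score per model into weights that sum to one, and the ensemble output is the weighted sum. The Python sketch below shows only that combination step. The article may use a different gate formulation. The function names are hypothetical, and in practice the gate scores would come from a gating model fed with the input features.

```python
import math


def softmax(scores):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def gated_combination(predictions, gate_scores):
    """Blend per-model predictions with weights produced by a softmax gate.

    predictions : one prediction per model for a single sample
    gate_scores : one unnormalized gate score per model
    Returns (combined prediction, gate weights).
    """
    if len(predictions) != len(gate_scores):
        raise ValueError("need one gate score per model")
    weights = softmax(gate_scores)
    return sum(w * p for w, p in zip(weights, predictions)), weights


combined, weights = gated_combination([0.2, 0.8, 0.5], [1.0, 0.0, -1.0])
```

Equal scores reduce to a plain average of the models. A strongly dominant score effectively routes the sample to a single model.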

Population optimization algorithms: Micro Artificial immune system (Micro-AIS)

The article considers an optimization method based on the principles of the body's immune system: the Micro Artificial Immune System (Micro-AIS), a modification of AIS. Micro-AIS uses a simpler model of the immune system and simple operations for processing immune information. The article also discusses the advantages and disadvantages of Micro-AIS compared to conventional AIS.

Integrating MQL5 with Data Processing Packages (Part 8): Using Graph Neural Networks for Liquidity Zone Recognition

This article shows how to represent market structure as a graph in MQL5, turning swing highs/lows into nodes with features and linking them by edges. It trains a Graph Neural Network to score potential liquidity zones, exports the model to ONNX, and runs real-time inference in an Expert Advisor. Readers learn how to build the data pipeline, integrate the model, visualize zones on the chart, and use the signals for rule-based execution.

Quantization in machine learning (Part 2): Data preprocessing, table selection, training CatBoost models

The article considers the practical application of quantization in building tree models. It covers methods for selecting quantum tables and for data preprocessing. No complex mathematical equations are used.

Neural Networks in Trading: Two-Dimensional Connection Space Models (Chimera)

In this article, we will explore the innovative Chimera framework: a two-dimensional state-space model that uses neural networks to analyze multivariate time series. This method offers high accuracy with low computational cost, outperforming traditional approaches and Transformer architectures.

Eigenvectors and eigenvalues: Exploratory data analysis in MetaTrader 5

In this article, we explore different ways in which eigenvectors and eigenvalues can be applied in exploratory data analysis to reveal unique relationships in data.

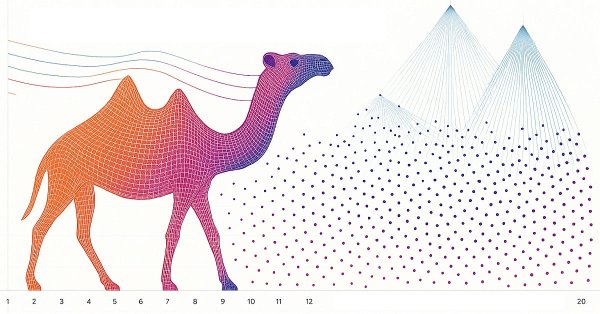

Camel Algorithm (CA)

The Camel Algorithm, developed in 2016, simulates the behavior of camels in the desert to solve optimization problems, taking into account temperature, supply, and endurance. This article also presents a modified version of the algorithm (CAm) with key improvements: the use of a Gaussian distribution in generating solutions and the optimization of the oasis effect parameters.

Analyzing binary code of prices on the exchange (Part I): A new look at technical analysis

This article presents an innovative approach to technical analysis based on converting price movements into binary code. The author demonstrates how various aspects of market behavior, from simple price movements to complex patterns, can be encoded as a sequence of zeros and ones.
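
The simplest form of such an encoding can be sketched in Python. Each bar-to-bar move becomes 1 if the close rose and 0 otherwise, and a sliding window then counts how often each short binary pattern occurs. The encoding rule, including treating an unchanged close as 0, is an assumption for illustration and not necessarily the rule the author uses. Both function names are hypothetical.

```python
def encode_moves(closes):
    """Encode each bar-to-bar move as 1 (close rose) or 0 (close fell or was unchanged)."""
    return [1 if cur > prev else 0 for prev, cur in zip(closes, closes[1:])]


def pattern_counts(bits, length):
    """Count occurrences of each binary pattern of the given length (sliding window)."""
    counts = {}
    for i in range(len(bits) - length + 1):
        key = "".join(str(b) for b in bits[i:i + length])
        counts[key] = counts.get(key, 0) + 1
    return counts


closes = [1.1000, 1.1012, 1.1008, 1.1008, 1.1020, 1.1031]
bits = encode_moves(closes)
patterns = pattern_counts(bits, 3)
```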

MQL5 Wizard Techniques you should know (Part 81): Using Patterns of Ichimoku and the ADX-Wilder with Beta VAE Inference Learning

This piece follows up on Part 80, where we examined the pairing of Ichimoku and the ADX under a reinforcement learning framework. We now shift focus to inference learning. Ichimoku and the ADX are complementary, as already covered; however, we revisit the conclusions of the last article related to pipeline use. For our inference learning, we use the Beta variant of the Variational Autoencoder (β-VAE). We also stick with the implementation of a custom signal class designed for integration with the MQL5 Wizard.

Artificial Showering Algorithm (ASHA)

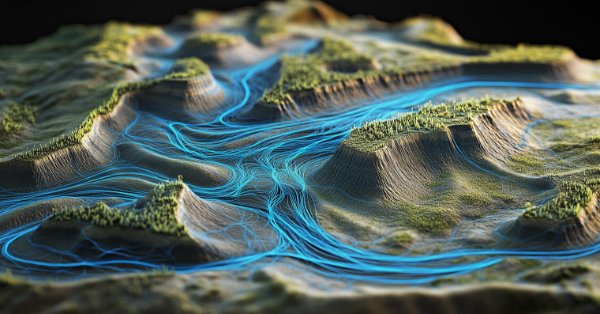

The article presents the Artificial Showering Algorithm (ASHA), a new metaheuristic method developed for solving general optimization problems. Based on simulation of water flow and accumulation processes, this algorithm constructs the concept of an ideal field, in which each unit of resource (water) is called upon to find an optimal solution. We will find out how ASHA adapts flow and accumulation principles to efficiently allocate resources in a search space, and see its implementation and test results.

MQL5 Wizard Techniques you should know (Part 18): Neural Architecture Search with Eigen Vectors

Neural Architecture Search, an automated approach to determining the ideal neural network settings, can be a plus when facing many options and large test datasets. We examine how this process can be made even more efficient when it is paired with eigenvectors.

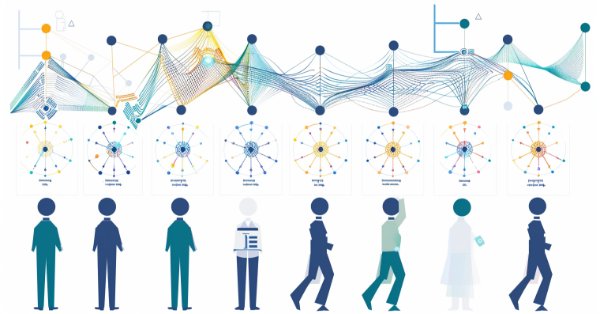

The Disagreement Problem: Diving Deeper into The Complexity Explainability in AI

In this article, we explore the challenge of understanding how AI works. AI models often make decisions in ways that are hard to explain, leading to what is known as the "disagreement problem". Addressing this issue is key to making AI more transparent and trustworthy.

The Group Method of Data Handling: Implementing the Multilayered Iterative Algorithm in MQL5

In this article we describe the implementation of the Multilayered Iterative Algorithm of the Group Method of Data Handling in MQL5.

Arithmetic Optimization Algorithm (AOA): From AOA to SOA (Simple Optimization Algorithm)

In this article, we present the Arithmetic Optimization Algorithm (AOA) based on simple arithmetic operations: addition, subtraction, multiplication and division. These basic mathematical operations serve as the foundation for finding optimal solutions to various problems.

MQL5 Wizard Techniques you should know (Part 10): The Unconventional RBM

Restricted Boltzmann Machines are, at the basic level, two-layer neural networks that are proficient at unsupervised classification through dimensionality reduction. We take their basic principles and examine whether redesigning and training an RBM in an unorthodox way could give us a useful signal filter.

MQL5 Wizard Techniques you should know (Part 23): CNNs

Convolutional Neural Networks are another machine learning algorithm, one that tends to specialize in decomposing multidimensional datasets into their key constituent parts. We look at how this is typically achieved and explore a possible application for traders in another MQL5 Wizard signal class.

Foundation Models in Trading: Time Series Forecasting with Google's TimesFM 2.5 in MetaTrader 5

Time series forecasting in trading has evolved from traditional statistical models (like ARIMA) to deep learning approaches, but both require heavy tuning and training. Inspired by advances in NLP, Google’s TimesFM introduces a pretrained “foundation model” for time series that can produce strong forecasts even without task-specific training. This is powerful for traders because the model can be efficiently fine-tuned on their own data using lightweight methods like LoRA, reducing overfitting while adapting to changing market conditions.

Neural networks made easy (Part 70): Closed-Form Policy Improvement Operators (CFPI)

In this article, we will get acquainted with an algorithm that uses closed-form policy improvement operators to optimize Agent actions in offline mode.

Neural networks made easy (Part 77): Cross-Covariance Transformer (XCiT)

Our models often use various attention algorithms, most frequently Transformers. The main disadvantage of Transformers is their resource requirements. In this article, we consider a new algorithm that can help reduce computing costs without losing quality.

Atmosphere Clouds Model Optimization (ACMO): Theory

The article is devoted to the metaheuristic Atmosphere Clouds Model Optimization (ACMO) algorithm, which simulates the behavior of clouds to solve optimization problems. The algorithm uses the principles of cloud generation, movement and propagation, adapting to the "weather conditions" in the solution space. The article reveals how the algorithm's meteorological simulation finds optimal solutions in a complex possibility space and describes in detail the stages of ACMO operation, including "sky" preparation, cloud birth, cloud movement, and rain concentration.

Overcoming The Limitation of Machine Learning (Part 8): Nonparametric Strategy Selection

This article shows how to configure a black-box model to automatically uncover strong trading strategies using a data-driven approach. By using Mutual Information to prioritize the most learnable signals, we can build smarter and more adaptive models that outperform conventional methods. Readers will also learn to avoid common pitfalls like overreliance on surface-level metrics, and instead develop strategies rooted in meaningful statistical insight.
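
Mutual information measures how much knowing a signal reduces uncertainty about the target. The Python sketch below shows the idea with a plug-in estimate on discretized data. It assumes the signals and target have already been discretized (for example, into up/down labels), and the function names are hypothetical, not the article's code. The plug-in estimator is biased upward on small samples, so rankings on short histories should be treated with caution.

```python
import math
from collections import Counter


def mutual_information(xs, ys):
    """Plug-in estimate of I(X; Y) in bits for two equal-length discrete sequences."""
    if len(xs) != len(ys) or not xs:
        raise ValueError("sequences must be non-empty and of equal length")
    n = len(xs)
    px, py, pxy = Counter(xs), Counter(ys), Counter(zip(xs, ys))
    mi = 0.0
    for (x, y), count in pxy.items():
        p_joint = count / n
        # Sum of p(x, y) * log2(p(x, y) / (p(x) * p(y))) over observed pairs
        mi += p_joint * math.log2(p_joint / ((px[x] / n) * (py[y] / n)))
    return mi


def rank_signals(signals, target):
    """Sort signal names by estimated mutual information with the target, highest first."""
    scores = {name: mutual_information(values, target) for name, values in signals.items()}
    return sorted(scores, key=scores.get, reverse=True)


target = [0, 1, 1, 0, 1, 0, 0, 1]
signals = {"ma_cross": [0, 1, 1, 0, 1, 0, 1, 1], "noise": [1, 1, 0, 0, 1, 1, 0, 0]}
order = rank_signals(signals, target)
```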

A feature selection algorithm using energy based learning in pure MQL5

In this article, we present an implementation of the feature selection algorithm described in the academic paper "FREL: A stable feature selection algorithm". FREL stands for Feature weighting as Regularized Energy-based Learning.

Neural Networks in Trading: Hyperbolic Latent Diffusion Model (HypDiff)

The article considers methods of encoding initial data in hyperbolic latent space through anisotropic diffusion processes. This helps to more accurately preserve the topological characteristics of the current market situation and improves the quality of its analysis.

Neuro-Structural Trading Engine — NSTE (Part II): Jardine's Gate Six-Gate Quantum Filter

This article introduces Jardine's Gate, a six-gate orthogonal signal filter for MetaTrader 5 that validates LSTM predictions across entropy, expert interference, confidence, regime-adjusted probability, trend direction, and consecutive-loss kill switch dimensions. Out of 43,200 raw signals per month, only 127 pass all six gates. Readers get the complete QuantumEdgeFilter MQL5 class, threshold calibration logic, and gate performance analytics.

Feature Engineering for ML (Part 1): Fractional Differentiation — Stationarity Without Memory Loss

Integer differentiation forces a binary choice between stationarity and memory: returns (d=1) are stationary but discard all price-level information; raw prices (d=0) preserve memory but violate ML stationarity assumptions. We implement the fixed-width fractional differentiation (FFD) method from AFML Chapter 5, covering get_weights_ffd (iterative recurrence with threshold cutoff), frac_diff_ffd (bounded dot product per bar), and fracdiff_optimal (binary search for minimum stationary d*).

Neural networks made easy (Part 69): Density-based support constraint for the behavioral policy (SPOT)

In offline learning, we use a fixed dataset, which limits the coverage of environmental diversity. During the learning process, our Agent can generate actions beyond this dataset. If there is no feedback from the environment, how can we be sure that the assessments of such actions are correct? Maintaining the Agent's policy within the training dataset becomes an important aspect to ensure the reliability of training. This is what we will talk about in this article.