Low-Frequency Quantitative Strategies in Metatrader 5: (Part 2) Backtesting a Lead/Lag Analysis in SQL and in Metatrader 5

Introduction

You have probably already heard of “the butterfly effect”. It is an easy-to-understand metaphor popularized in pop culture to illustrate chaos theory. In summary, it says that the flapping of a butterfly's wings in, for example, Australia could cause an earthquake in North America. It is a beautiful image to say that tiny changes in complex systems, like the weather, may be the cause of huge, unpredictable consequences.

This image would also be a perfect illustration of the central idea behind this article and the kind of analysis it describes. Financial markets are like complex systems. They are frequently modeled as stochastic processes, processes in which the future state cannot be predicted with precision because of the impact of random factors. Each tick, each price change encapsulates these random factors — the flapping of many butterflies' wings, so to speak. Some are close to the change and have a direct impact; others are distant and have an indirect impact. But they are all there, making the price.

Despite this uncertainty, finance professionals try to trace the root causes of events such as price changes. They hope that understanding the drivers will allow them to estimate future outcomes with reasonable probabilities. In this quest, at least one now-obvious truth emerged: irrespective of the nature of the random factors, the change propagates to different assets at different speeds. Since the time the change takes to propagate can be valuable information to better understand the behavior of the assets involved, we developed methods to measure it. In this article, we’ll see one of these methods.

Lead/lag analysis allows us to see whether price changes in asset A lead the price changes in asset B, that is, if asset A would be acting as a leader, while asset B would be acting as a follower. Tipically, both assets have a known correlation or cointegration relationship already established, so they are supposed to move together. But this doesn’t mean that they will always move simultaneously. There may be a time span between the movement in A and the movement in B. By characterizing the magnitude and frequency of this time span, we can gather valuable knowledge about the correlated or cointegrated portfolio and potentially identify mean-reversion trading opportunities with very low risk.

Even without known correlation or cointegration, lead/lag analysis may reveal a lead/follower pattern. In that case, you can look for momentum opportunities, but the risk is higher. We’ll describe the former scenario in this article, the lead/follower mean-reversion trading with cointegrated assets. Eventually, the scenario with momentum trading for non-correlated assets will be the subject of a future installment.

Because of this choice, this article leverages the techniques we discussed in the Statistical Arbitrage Through Cointegrated Stocks series, in particular the use of hedge ratios for market-neutral portfolio trading. In the last article of that series, we presented some reasons why we should have an OLAP-friendly system integrated into our statistical arbitrage pipeline, and in the previous article of this series, we suggested a simple setup for it. Now we’ll be using the system for performing this Lead/Lag analysis.

By the end of this article, you should understand what to expect from low-frequency lead/lag analysis. You will also learn how to build a Python analyzer, avoid common pitfalls, interpret results, and identify trading opportunities.

We start looking for Low-Frequency Lead/Lag trading opportunities because it is where retail traders with a consumer notebook, a regular network connection, and limited capital, can turn small size into an advantage.

What is Low-Frequency Lead/Lag analysis?

When a retail trader sees the expression “Lead/Lag trading”, they might almost automatically reject the mere idea of developing a trading strategy around it. It is a reasonable reaction. Lead/Lag trading is usually associated with High-Frequency trading (HFT), the fight for microseconds in order routing, data center collocation, high-performance code, and, eventually, the front-running slower orders. There are good reasons for this reaction, since the exploitation of temporal price divergences between correlated assets - or even between the same asset on different Exchanges - is the easiest to understand and the most popular tactic used by HFT prop desks.

However, there are real opportunities, often overlooked opportunities, in low-frequency lead/lag (LFLL). What counts as low-frequency varies widely by perspective. It may be a monthly timeframe for a swing trader, or it may be the five-minute chart for a DOM scalper. So, let’s be clear from the start: for our purposes here, high-frequency is anything below a few seconds. We’ll be looking for trading opportunities in the one-minute timeframe and above. It is where we can, reasonably, expect to have our orders filled, with manageable slippage. In these low frequencies, we can expect to find an edge from the depth and accuracy of our analysis, not from the speed of execution. In these low frequencies, any reasonable network connection can turn latency irrelevant, and our alpha should come from our ability to better understand the information diffusion path and timing.

We are interested, for example, in the time it takes for the price movement of an industry leader blue-chip stock to propagate down its supply chain, until the impact hits a specific ticker symbol; or we may be analysing the effects of the post-earnings drift, in which the announcement of above expectations earnings may take days to propagate to some tickers while the big players adjust their positions. In any case, we would not be fighting for milliseconds. We’ll be in the hours-to-few-days space, where the fact of being small can be a vantage point.

Each of these examples has its own specifics, not only for the data analysis step, but for the trading rules as well. In the following weeks, we may take some of them, among others, for a detailed discussion. For now, we’ll take the supply chain leader to follower propagation time as a case study. This kind of Lead/Lag analysis often gets the fancy name of Cross-Asset Information Diffusion analysis.

Building the analyser

The Cross-Asset Information Diffusion analysis involves calculating the cross-correlation between the log-returns of a leader asset at time t-n and the log-returns of a follower asset at time t. This cross-correlation calculation may identify Lead/Lag relationships where the leader asset’s price movement precedes the follower price movement with a statistically significant time span regularity.

Cross-correlation is indeed a large topic of study for experts, but for our purposes here, it can be understood in a very intuitive manner. According to Wikipedia,

“the mathematical concepts of covariance and correlation are very similar. Both describe the degree to which two random variables or sets of random variables tend to deviate from their expected values in similar ways”.

While the correlation coefficient tells us the degree to which two or more time series (our price history) tend to vary together, the cross-correlation does the same with a time displacement applied to one of these time series. While the correlation calculates “how much” they move together by taking their values at the same point in time (same minute, or second, for example), the cross-correlation calculates “how much” they move together by applying a “lag” to one of them (five minutes, or ten seconds in the past, for example).

To ease our understanding of the cross-correlation concept, it may be useful to see it applied to a simplified, well-known “dataset”. For this, we created a script that generates synthetic data (controlled values) for a leader and a follower in a 5-minute timeframe (sampling frequency). The leader price is time-shifted by 3 periods (15 minutes), and the cross-correlation is calculated for different time shifts (lags), from 0 to 4. The variable lag_0 stores the cross-correlation coefficient at zero minutes of lag, that is, when there is no lag. The variable lag_1 is for a one-period lag, that is, five minutes. And so on. Since we know that the follower lags by 3 periods, we expect the correlation to peak in lag_3.

--- Step 1: Synthetic Cross-Correlation Results --- Correlation lag_0_corr -0.031678 lag_1_corr -0.038247 lag_2_corr -0.016094 lag_3_corr 0.996679 lag_0_corr -0.031678 lag_1_corr -0.038247 lag_2_corr -0.016094 lag_3_corr 0.996679 lag_1_corr -0.038247 lag_2_corr -0.016094 lag_3_corr 0.996679 lag_2_corr -0.016094 lag_3_corr 0.996679 lag_4_corr -0.015409

These fabricated results are telling us that the information diffusion takes 15 minutes. If we see the lead move now, and since we are sampling every 5 minutes, we can expect the follower to move in 3 periods, or 15 minutes from now.

The purpose of this simple script is only to make it clear how we expect the cross-correlation results to appear, that is, how we expect its coefficient to peak at the best lag, indicating the time span required for the follower’s price to effectively follow the leader’s price. The script is attached to the article’s footer.

How to handle the Non-Determinism in SQL

In the made-up example above, we are querying a Pandas dataframe with only 100 data points time-shifted over themselves. In production, we query a Hive-partitioned store with thousands or millions of points. We also join at least two time series that must be perfectly time-aligned to produce deterministic results. If we run the same test over the same data, we must obtain the same results.

However, in SQL-based engines like DuckDB, the rows are not processed in a natural order. DuckDB docs make it clear:

“without [ordering] the results of general-purpose window functions and order-sensitive aggregate functions, and the order of framing are not well-defined.”

Among the general-purpose window functions are the LEAD() and LAG() functions. What the docs are saying is that a simple SELECT without an explicit ORDER BY can return rows in a different sequence every time. If window functions like LAG() or CORR() are applied to an unordered set, the correlations and other results will vary between executions. We do not want this kind of uncertainty.

Besides the general SQL unordered set, the column-oriented storage model, which is the DuckDB storage model, stores data in chunks to enable massive parallelism.

“A typical data warehouse query contains several join, filtering, grouping, and aggregation operations. The MPP [massively parallel processing] query optimizer breaks this complex query into a number of execution stages and partitions, many of which can be executed in parallel on different nodes of the database cluster. Queries that involve scanning over large parts of the dataset particularly benefit from such parallel execution.” (Mark Kleppmann, Designing Data-Intensive Applications, O’Reilly, 2017)

That is, the database reads different columns or chunks in parallel across different CPU cores. The rebuilt or “composed” rows arrive at the window function stage in whatever order they were finished being read. Without an ORDER BY, the database simply processes them in the order they arrived.

My partner and I made this mistake when we were starting out on our own, without prior experience with this kind of analysis in columnar databases. Until we realized that the database was like “shuffling” the records, we kept consistently obtaining inconsistent results. Sometimes this is not so obvious. If you do not address this, the results can become unreliable. To make the problem visible, we wrote a small script that simulates record 'shuffling' at the database level.

We created a simple timeline with five data points.

data = {

'time': pd.to_datetime(['10:00', '10:05', '10:10', '10:15', '10:20']),

'price': [100.0, 102.0, 101.0, 105.0, 104.0]

}

df = pd.DataFrame(data)

Then, to force the “failure”, we shuffled the rows physically.

df_shuffled = df.sample(frac=1, random_state=42).reset_index(drop=True)

Note that, although the row numbers are in the ascending ordered sequence, the time column is not.

--- Step 2: Visualizing the Non-Determinism Pitfall --- time price 0 2026-03-11 10:05:00 102.0 1 2026-03-11 10:20:00 104.0 2 2026-03-11 10:10:00 101.0 3 2026-03-11 10:00:00 100.0 4 2026-03-11 10:15:00 105.0

When we call the LAG(price) function without ORDER BY, it retrieves the lagged price in physical memory order:

# 3. THE DANGEROUS QUERY (Physical Order) query_broken = """ SELECT time, price, lag(price) OVER () as prev_price_broken FROM df_shuffled """

time price prev_price_broken 0 2026-03-11 10:05:00 102.0 NaN 1 2026-03-11 10:20:00 104.0 102.0 2 2026-03-11 10:10:00 101.0 104.0 3 2026-03-11 10:00:00 100.0 101.0 4 2026-03-11 10:15:00 105.0 100.0

Note the highlighted second row, price 104.0 at 10:20. What would be the previous price, correct answer from a logical perspective? Obviously, it would be the price at row 4, at time 10:15, that is 105.00. But the database is returning the price at row 0, at time 10:05, that is 102.0. This is not the logical previous price from 5 minutes before; it is just the previous physical row, the previous row in its memory.

What can we do to ensure our results are meaningful and deterministic? We start by calling the LAG() function with the ORDER BY clause.

# 4. THE ROBUST QUERY (Logical Order) query_correct = """ SELECT time, price, lag(price) OVER (ORDER BY time ASC) as prev_price_correct FROM df_shuffled """

Then it follows a logical time order and returns the correct previous price at row 3, time 10:15, that is 105.0.

time price prev_price_correct 0 2026-03-11 10:00:00 100.0 NaN 1 2026-03-11 10:05:00 102.0 100.0 2 2026-03-11 10:10:00 101.0 102.0 3 2026-03-11 10:15:00 105.0 101.0 4 2026-03-11 10:20:00 104.0 105.0

In a database, physical order is NOT logical order. If we don't explicitly call our window function with ORDER BY, we cannot be sure we are not addressing a logical query on physically ordered data. So, this is the first measure we take while building our Lead/Lag analyser: every calculation involving a time-shift (LAG) is wrapped in an explicit OVER (ORDER BY time ASC) clause.

The second measure is that we use the row_number() function to generate a row_id for every ticker. By doing this, even if two bars share a timestamp, their sequence is already defined by us. That is because if two rows have the same time, the database will shuffle them randomly. So, we establish a canonical order by creating a unique, defined sequence for every row.

SELECT time, ticker, close, -- Ensure every row has a unique, deterministic ID row_number() OVER (PARTITION BY ticker ORDER BY time ASC) as row_id

When we are calculating the log-returns, we partition by ticker and make sure to order by time. We are ensuring that the LAG() return for 10:05 is always calculated against 10:00, regardless of how the data is stored on disk. Every LAG and OVER clause now explicitly uses ORDER BY time ASC, row_id ASC. This forces the execution engine to follow the canonical order instead of the physical memory order. We never let the database decide the order. Every time we calculate a return or a shift, we reassert our chronological requirement.

returns AS ( SELECT time, ticker, row_id, -- Returns must follow the canonical order log(close / lag(close) OVER (PARTITION BY ticker ORDER BY time ASC, row_id ASC)) as ret FROM filtered_data

Finally, as the third measure, we take care of our ‘JOIN’ clauses. We ensure a strict temporal alignment on the lead and follower timestamps before shifting the lead's returns. This is to make sure we are always comparing the same points in time. Take this JOIN clause, for example:

JOIN ... ON l.time = c.time

It remains the anchor, ensuring the lead and follower are perfectly synchronized in time before any cross-correlation is computed. We must be attentive here because the lead and the follower might have different trading hours or missing bars. If we are in a hurry and just line up the rows side-by-side (row 1 of lead_asset vs row 1 of follower_asset), we might be comparing lead_asset’s Monday morning to follower_asset’s Tuesday afternoon. This temporal alignment ensures we only compare the lead and follower when they both exist at the same moment.

joined AS ( SELECT l.time, l.ret as lead_ret, c.ret as lag_ret, l.row_id as lead_row_id FROM (SELECT * FROM returns WHERE ticker = '{lead_symbol}') l -- TEMPORAL ALIGNMENT: Sync the clocks of both assets JOIN (SELECT * FROM returns WHERE ticker = '{ticker}') c ON l.time = c.time ),

This ensures the join acts as a synchronized clock. It throws away any mismatched data and ensures that we calculate a correlation by comparing them always in the same timezone and trading session.

This three-step approach should be enough to provide the deterministic, reproducible results we need. I strongly recommend that you not overlook them when writing or requesting your queries to an AI assistant. Be strict: ensure ordering by time, pre-defined row sequence, and temporal alignment on JOIN clauses. You may avoid hours of hard work being lost, as it happened to us.

The Diffusion Query

The provided lead_lag_analyser.py follows a three-stage pipeline. We start by synchronizing the local data store as we usually do. The script calls the DataDownloader class to verify that the local Hive-partitioned Parquet store has the required price history. It automatically fills the gaps between the local cache and the MetaTrader 5 server.

Once the required data is available locally, the diffusion query filters by timeframe, lookback period and optional session hours. Note that this last filter is especially relevant when analysing non-OTC symbols, those that do not trade 24 hours. If any symbol in our analysis is traded in centralized Exchanges only, you should consider filtering the data by its trading session times to avoid meaningless results in the cross-correlation calculation.

for ticker in candidates: query = f""" WITH filtered_data AS ( SELECT time, ticker, close, -- Ensure every row has a unique, deterministic ID row_number() OVER (PARTITION BY ticker ORDER BY time ASC) as row_id FROM market_view WHERE ticker IN ('{lead_symbol}', '{ticker}') AND tf = '{tf_str}' AND time >= '{start_date}' {session_filter} ), returns AS ( SELECT time, ticker, row_id, -- Returns must follow the canonical order log(close / lag(close) OVER (PARTITION BY ticker ORDER BY time ASC, row_id ASC)) as ret FROM filtered_data ), """

Note the use of log(close/lag(close)) to calculate each symbol's returns; that is, we evaluate the cross-correlation against the log-returns, instead of the simple returns.

As said above about the measures we adopt to avoid being trapped by the non-deterministic SQL ordering, we JOIN on time to ensure the temporal alignment, before calculating the cross-correlation.

joined AS ( SELECT l.time, l.ret as lead_ret, c.ret as lag_ret, l.row_id as lead_row_id FROM (SELECT * FROM returns WHERE ticker = '{lead_symbol}') l -- TEMPORAL ALIGNMENT: Sync the clocks of both assets JOIN (SELECT * FROM returns WHERE ticker = '{ticker}') c ON l.time = c.time ),

Each lead return is shifted by N periods. The maximum number of lag periods is a parameter to the analyze_diffusion method.

def analyze_diffusion(self, lead_symbol, candidates, timeframe, lookback_days, max_lag_periods=10, session_start=None, session_end=None): """ Analyzes information diffusion by checking cross-correlation at various lags. """ (...) lagged AS ( SELECT time, lead_ret, lag_ret, -- Shifting the lead returns deterministically {' , '.join([f"lag(lead_ret, {i}) OVER (ORDER BY time ASC, lead_row_id ASC) as lead_lag_{i}" for i in range(1, max_lag_periods + 1)])} FROM joined )

Finally, the query computes the Pearson correlation coefficient for every lag simultaneously and ensures that we only get cross-correlation results from non-null lead and lag returns.

"""

SELECT

corr(lead_ret, lag_ret) as corr_0,

{' , '.join([f"corr(lead_lag_{i}, lag_ret) as corr_{i}" for i in range(1, max_lag_periods + 1)])},

count(*) as sample_size

FROM lagged

WHERE lead_ret IS NOT NULL AND lag_ret IS NOT NULL

""" If you are following this series of articles about statistical arbitrage, you probably will remember that when analysing the Pearson Correlation Coefficient between two assets for pairs trading, we were looking for positive (or negative) correlation coefficients above 0.80, ideally above 0.90. In a Lead/Lag study, we are looking for very small signals. A correlation of 0.05 or 0.10 would be significant, or not, depending mainly on the sample size, that is, the number of periods (bars) under analysis.

To avoid leaving this coefficient significance entirely to our subjective criteria, the analyzer includes a significance test based on the square root of the sample size.

# Significance threshold (rough estimate: 2/sqrt(N)) significance = 2.0 / np.sqrt(sample_size)

It is simple, but it works. For a small sample, let’s say, 100 periods, the significance threshold is 2 / 10 = 0.20. In this case, a correlation of 0.08 is insignificant, or just noise. On the other hand, for a large sample with 10,000 periods, the threshold becomes 2 / 100 = 0.02, and the same 0.08 correlation is highly significant.

If the correlation at a specific lag is lower than the significance threshold, the relationship is likely noise, and your first step should be to increase the sample size before discarding the pair or basket from the analysis.

While this simple and well-known formula helps with noise filtering, you should note that our Lead/Lag analysis is entirely based on cross-correlation, which is a special case of the Pearson Correlation test. As a statistical test, the Pearson Correlation has a confidence interval, meaning that if you test 100 different follower candidates against a lead, the laws of probability say that at least 5 of them may show significant correlations purely by luck. (Statisticians call this a Type I Error.)

Also, price returns aren't perfectly random, independently and identically distributed (I.I.D.) variables. If an asset has momentum, it can artificially inflate the correlation. In this case, our significance testing may report it as significant when it isn't.

Use a reasonable sample size (rule of thumb: >500 bars). Do not test dozens of candidates in one run without correction for multiple testing. Do not trade live before extensive out-of-sample backtests. If possible, experiment with some paper-trading on a demo account before going live.

The Epps Effect

Before delving into the main example of this article, I would like to bring your attention to a phenomenon known as The Epps Effect that may be confusing when you start tweaking the timeframe parameter. It is described as a phenomenon in which

“the empirical correlation between the returns of two different stocks decreases with the length of the interval for which the price changes are measured.”

Let’s say we are looking for Lead/Lag opportunities in the commodity market, more specifically in the mining sector. We want to know if there is any lead-follower price movement gap between gold and some gold-related securities. We take XAUUSD as a leader. For follower candidates, we chose a mining corporation stock (NEM.US) and two gold-related ETFs (SGOL.US and GDX.US).

Then we run our analyser without session-time filtering on the H1 timeframe with a 30-day lookback (the sample size is very small, but you can ignore it for now, as it is irrelevant to understanding the Epps Effect).

lead_lag_analyser.py Analyzing Information Diffusion for XAUUSD (H1, 30 days)... === Diffusion Ranking (Best Non-Zero Lag) === Ticker Lag_0_Corr Best_Lag Best_Lag_Corr Is_Significant Sample NEM.US 0.6169 10 0.2155 True 119 GDX.US 0.6968 10 0.1975 True 119 SGOL.US 0.7168 10 0.1899 True 119 Top Candidate: NEM.US Correlation at Lag 10 (600 mins): 0.2155 >>> CONTEMPORANEOUS: Moves mostly in sync with the lead asset.

By reading the results, we may conclude that they are all moving in sync because the ten-hour lag is probably due to the absence of session time filtering. The lag is the same for the three symbols, with a relatively high correlation on the tenth hour. Since XAUUSD is traded 24 hours OTC and the other three symbols are traded only on the Exchange open market hours, they are probably “following the leader” when their market is open. This reading makes sense.

Also, the results are telling us that for these symbols, the market is showing no inefficiency in the H1 timeframe. GDX and NEM represent miners, and the high correlation in Lag_0 would tell us that they are reacting to changes in gold spot prices within the same hour. Probably, it was already arbitrated by HFTs and other players. What if we change the timeframe to 5 minutes?

Analyzing Information Diffusion for XAUUSD (M5, 30 days)... === Diffusion Ranking (Best Non-Zero Lag) === Ticker Lag_0_Corr Best_Lag Best_Lag_Corr Is_Significant Sample NEM.US 0.4576 6 0.0520 False 1099 GDX.US 0.5217 6 0.0486 False 1099 SGOL.US 0.6450 6 0.0446 False 1099 Top Candidate: NEM.US Correlation at Lag 6 (30 mins): 0.052 >>> CONTEMPORANEOUS: Moves mostly in sync with Lead.

By changing from the hourly to a five-minute timeframe, we see a dramatic drop in lag correlations. This is the Epps Effect in practice. However, besides the well-known statistical phenomenon, since we are not using the session time filter, the different market hours contribute to the vanishing correlation we see in the M5 timeframe. The XAUUSD forex pair is highly active during the London session, while SGOL.US and GDX.US are flat because the US markets are closed. When we calculate correlation over a 24-hour window at M5, we are including thousands of bars where the XAUUSD is moving fast, but the mining securities and the ETFs are waiting for the market to open. This dilutes the overall correlation coefficient significantly.

Make sure to use the session time filter to minimize this distortion. Use the session_start and session_end parameters to focus on the core trading hours of the assets. If Lag_0_Corr is significantly higher than all lagged correlations, as we see in both examples above, the assets are moving in sync (Contemporaneous). A true follower with lag will show a distinct peak in correlation at a non-zero lag. We’ll see many examples of this in the following analysis.

Also, when running tests for relatively very high and very low timeframes, ensure the sample count is high enough in the highest timeframe. As said above, > 500 bars should be enough to provide statistical confidence.

A take on cointegrated stocks

We already did several experiments with cointegrated stocks. We know that they tend to move together in the long-run. In our experiments, we took NVDA (Nvidia Co.) as a semiconductor industry leader, and we ranked several semiconductor-related stocks by their cointegration strength with NVDA. So we know that they tend to move together, we know that there is a leader, and also that there are some “followers” as well. What if some of these followers have a consistent, measurable price movement lag relative to the NVDA price movement? It may be a reasonable hypothesis to be tested, may it not?

This example was cherry-picked to illustrate the main goal of the lead/lag analysis.

We took the top-ranked NVDA cointegrated stocks and tested them in the M5 timeframe, with a lookback period of 60 days.

Analyzing Information Diffusion for NVDA (M5, 60 days)... === Diffusion Ranking (Best Non-Zero Lag) === Ticker Lag_0_Corr Best_Lag Best_Lag_Corr Is_Significant Sample ASX 0.4637 1 0.0933 True 3162 AVGO 0.5514 1 0.0640 True 3199 MU 0.4594 2 0.0522 True 3199 AMD 0.4345 2 0.0423 True 3199 LAES 0.3179 9 0.0336 False 3199 INTC 0.2617 2 0.0330 False 3199 NVTS 0.4348 2 0.0329 False 3199 Top Candidate: ASX Correlation at Lag 1 (5 mins): 0.0933 >>> CONTEMPORANEOUS: Moves mostly in sync with Lead.

We were expecting the results to identify them as ‘contemporaneous’ (not informative, as would be ideal). After all, they are cointegrated. This is clear in the high correlations at Lag_0, which is much higher than the lagged correlations. It tells us that the market is highly efficient here. Most of the NVDA price movement is priced into ASX or AVGO almost instantly within the same 5M bar.

In the Best Lag column, we have two groups of followers:

- 5 minutes lag (Best_Lag 1). These stocks are the most sensitive to NVDA’s price movement. A significant correlation at Lag 1 means that if NVDA has a massive move, there is a statistically measurable echo in ASX and AVGO 5 minutes later. If NVDA breaks out, we have 5 minutes to check if ASX or AVGO reacted.

- 10 minutes lag (Best_Lag 2) - These have a slightly longer period. Their peak correlation happens 10 minutes after NVDA's move.

The reasons for the time span difference between these two groups are irrelevant for our purposes here. All that we want to know is if there is a time lag and if it is tradeable. Note that, although ASX figures as a top candidate, the best-lag signal is weak. The relatively small 0.0933 correlation at Lag 1 means that NVDA's past move explains only about 9% of ASX's current move. We may say it is only a probabilistic edge. We wouldn't trade this signal per se, but it could be used as a confirmation tool if we were already looking to take a position on ASX. A sudden spike in NVDA around 5 minutes ago could be the green light.

The ASX top candidate is a textbook example of why we call it Supply Chain Information Diffusion analysis. ASX often follows NVDA with a small lag because it is a semiconductor assembly and test provider. NVDA designs the chips. ASX provides the backend services. NVDA's demand lead takes a few minutes to ripple down to the service providers.

While the market is 90% efficient (contemporaneous), we have found a pattern that may be worth further investigation or even a backtest. Maybe we have identified a potential trading opportunity. How would we explore it? Maybe we could start looking for a divergence that expects a convergence. Does it make sense?

No, because it lacks that peak in correlation at a non-zero lag we mentioned above: “A true follower with lag will show a distinct peak in correlation at a non-zero lag.”

The pair XAUUSD (gold spot) and the ETF GDX.US shows exactly this property in the last 30 days, at the minute timeframe.

Syncing Lead: XAUUSD [XAUUSD] No local data. Starting from 2026-03-01 02:36:52.562940+00:00 Analyzing Information Diffusion for XAUUSD (M1, 30 days)... === Diffusion Ranking (Best Non-Zero Lag) === Ticker Lag_0_Corr Best_Lag Best_Lag_Corr Is_Significant Sample GDX.US 0.0764 1 0.0843 True 6198 Top Candidate: GDX.US Correlation at Lag 1 (1 mins): 0.0843 >>> INFORMATIVE LEAD: This asset follows with a distinct delay.

This is what we are looking for to justify a real backtest in the MetaTrader 5.

Backtesting

Before developing a full-featured Expert Advisor for backtest, we can check if the lead/follower relationship between XAUUSD and GDX.US would be profitable by running a pure SQL backtest. The MetaTrader 5 EA is the last phase in our pipeline, when we already have a strategy that is worth the effort involved in EA development. The pure SQL backtest method is less common in our community. It can be helpful to showcase the practical benefits of the OLAP system we are presenting in this series.

A pure SQL backtest is very limited in terms of the complexity of trading rules that can be tested, but it is the easiest to implement and the fastest to run. Because of this, it fits well at the beginning of our pipeline, when we need to screen dozens, hundreds, or even thousands of symbols. This technique offers a massive pre-screening tool that makes it easy for us to scale a strategy backtest from a single pair to an entire sector with zero code complexity.

It is worth noting that, irrespective of the lead/follower relationship, we must take into account the differences between trading pairs or baskets with a cointegrated relationship and the non-cointegrated ones, as is the case with the XAUUSD/GDX.US pair we are using as an example here.

Cointegrated pairs or baskets: mean-reversion

The lead/follower relationship is not tied to the cointegration relationship. It only indicates a lagged cross-correlation and can appear where there is no cointegration. But if there is cointegration, it affects the way we may trade the pair or basket. When a lead/lag relationship exists alongside cointegration, we have a mean-reverting system. Cointegration implies that while the two assets can wander apart, they share a long-term equilibrium, so any divergence in the lead/lag relationship is viewed as a temporary one. If the leader moves and the follower doesn't, we know that there is a high probability that the follower will eventually follow the leader and maintain the statistical relationship.

There is a low risk of a permanent divergence. It is the market-neutral way for cointegrated portfolios. We remove the market risk by buying/selling the follower and the leader, always in opposite directions. The order volume is dictated by the hedge ratio obtained with the Johansen eigenvector or with a simple ordinary least squares (OLS).

Non-cointegrated pairs or baskets: momentum

But the presence of a lead/follower relationship allows us to trade a simple directional trend, without hedging. If the lead moved but the follower stayed flat, we can bet that the follower will follow suit within the expected time span. This is a riskier scenario. Here, the leader predicts the follower's direction or momentum, but they are not necessarily “walking together”. The leader provides a directional signal, but the two assets can drift infinitely far apart over time. This is pure momentum trading. We are not trading a return to mean. We cannot sit comfortably, waiting for a hedged pair to converge. If the follower fails to react, the loss can be infinite because the spread is not stationary. Thus, a tight stop-loss, stop by time, or close by opposite signal is mandatory. Also, the order volume is not dictated by a statistical measurement. It is arbitrary, which increases the risk.

Backtesting with pure SQL

An OLAP system at the start of the pipeline lets us analyze large historical datasets in seconds, even offline. We must be able to search for patterns, recurrences, and anomalies in a large number of symbols simultaneously, and have a response in a few seconds on a consumer notebook. We want to test dozens of symbol combinations for pairs trading, and cointegrated basket permutations, and we want to re-run the relevant ones in a loop every five minutes, or each minute, for trade monitoring, eventually for updating the parameters of an Expert Advisor running live trading. We want all of this with ease, repeatability, precision, and speed.

A pure SQL backtest illustrates perfectly how this goal can be achieved. However, it has some limitations: in pure SQL, it may be hard to test complex trading rules. Detailed reporting and plotting, which we have for free in the MetaTester, may require the use of external tools. Some statistical functions may not be available by default in the system. They can be implemented as user-defined functions (UDF), according to the requirements of each system, but we must take into account this limitation. While developing quantitative strategies, we depend on statistical functions all the time. We’ll see an example of this limitation right now, in this example. Despite these limitations, it is the fastest way to test new ideas when the limitations don't apply, as is the case with a lead/lag analysis.

Once the historic data is available and the system is set up, we can test hypotheses with only our data and some lines of SQL.

Attached to this article, you’ll find the lead_lag_backtest.sql. Since it is not our goal to teach SQL or provide a tutorial, we’ll not describe it step-by-step. Instead, we want to share with you a high-level view of the overall structure and, most importantly, how it relates to the trading rules we will use when evolving to an Expert Advisor-based backtest. That is, we want to use this script as a tool to understand the principles of a lead/lag backtest that apply to any method.

lead_lag_backtest.sql

The first thing to note is that this is a vectorized backtest, meaning it calculates all rows in parallel, not in loops through each bar, which is the case when using an Event-Driven backtest framework. This translates to speed and low-memory requirements, but the script expects minute-level prices to be already available in our Hive-partitioned local datastore.

In this example, we are using XAUUSD (gold spot) as the leader asset and a gold ETF, GDX.US, as the follower (or “laggard”) asset. The strategy we are testing assumes that the ETF price follows the spot price with a 5-minute lag.

Once the two price series are aligned by timestamps, the gold spot price (the leader) is shifted backward by five periods, five minutes in this case. At this point, we have three time series aligned: the ETF close price, the gold spot close price, and the shifted gold spot close price. This table illustrates the shifted alignment.

| time | GDX.US close | XAUUSD close | XAUUSD shifted close |

|---|---|---|---|

| 5 | 2 | 15 | 17 |

| 10 | 4 | 17 | 19 |

| 15 | 6 | 19 | 21 |

| 20 | 8 | 21 | ?? |

Table 1 - simplified representation of the three aligned time series

Then the script calculates a rolling hedge ratio. In our previous examples, we’ve been using the eigenvectors of the Johansen cointegration test to obtain our hedge ratios. Here, it is replaced by an ordinary least squares regression (OLS) provided by the function REGR_SLOPE()

REGR_SLOPE(lagg_close, lead_shifted)

Here, lead_shifted is the regression independent variable, that is, the variable used to “predict” the lagg_close, which is the dependent variable. The function returns the rolling hedge ratio, usually represented as Beta. By multiplying the shifted leader’s price by Beta, we have the expected follower’s price.

Expected follower price = beta * lead_shifted

Since there is a difference between the expected follower price and the actual follower price, we have a residual.

Residual = lagg_close - (beta * lead_shifted)

The residual is the value we use for generating signals, the deviation from the expected value. Then we calculate the mean and the variance of the residual for each timestamp (a rolling mean and a rolling variance) over the lookback period. They will be used to calculate the z-score.

z-score: (residual - r_mean) / SQRT(r_var)

The z-score tells us how many standard deviations the current residual (the residual at a specific timestamp) is from its rolling mean. This acts as a typical indicator, informing us about possible overbought/oversold conditions. The script assumes a z-score of 2.0 as a threshold. Based on the violations of this threshold, the script generates trading signals.

If the z-score is 0, there is no deviation from the residual rolling mean. If it is < 0, the follower was left behind and is “oversold”. Conversely, if the z-score is > 0, the leader has fallen, and the follower was again left behind, but now it is “overbought”. In any case, when the z-score is above +2.0 or below the -2.0 threshold, we go short on the “overbought”, or we go long on the “oversold” follower, respectively.

As you can see, the trading rules are very similar to the usual mean-reversion strategy for correlated pairs. We are always expecting that deviations will revert to the mean. The difference is that we have less statistical evidence that it will happen.

Finally, the script calculates the log returns for each bar.

target_pos * (LN(lagg_close) - LN(LAG(lagg_close)))

This computes the profit/loss from holding the position over each minute, assuming the position is adjusted instantly based on the signal. That is, it tells us how much we would profit or lose if we had an open position at all signals.

At the end, the script runs an aggregation of the results across all bars where returns were not null.

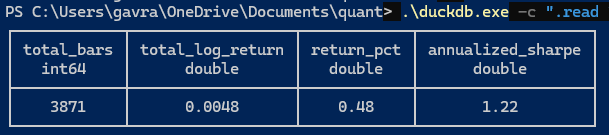

Fig. 1. Capture of lead_lag_backtest.sql results for gold spot x gold-related ETF

It may be worth noting that this vectorized processing approach makes negligible the difference between running the backtest for a simple pair like the above, and running it for ten, twenty, or two hundred symbols. We can easily screen dozens of symbols simultaneously in a single query.

Backtesting with an MQL5 Expert Advisor

Now that we’ve filtered down our lead/follower candidates from dozens of symbols, we can develop a more robust and performant backtest in Metatrader 5 and explore its optimization features. Remember that lead/lag opportunities can be extremely ephemeral. It is not uncommon that you find a pair performing very well in a week, and a broken relationship next week. It requires continuous monitoring and portfolio updates. The most effective path to portfolio updates is the pure SQL backtest we saw above.

The EA is attached to the footer for your testing and experiments. I would only like to draw your attention to some parts of the code.

//+------------------------------------------------------------------+ //| Lead-Lag.mq5 | //| Copyright 2025, MetaQuotes Ltd. | //| https://www.mql5.com | //+------------------------------------------------------------------+ (..) //+------------------------------------------------------------------+ //| inputs | //+------------------------------------------------------------------+ input string InpLeaderSymbol = "XAUUSD"; // Leader input string InpLaggardSymbol = "GDX.US"; // Laggard (trade one side) input ENUM_TIMEFRAMES InpTimeframe = PERIOD_M1; input int InpLagBars = 5; // Leader shift input int InpWindow = 60; // Rolling window input double InpZOpen = 2.0; input double InpZClose = 0.5; input double InpLot = 1.0; input int InpSlippage = 5; input int InpMagic = 20260325;

Among the EA parameters, the main candidates for optimization are InpWindow, InpZOpen, and InpZClose. In our optimization (see below), we used the rolling window and the z-score close threshold. You should avoid changing the InpLagBars because this lag is what the lead/lag analysis indicates as the statistically significant one.

//+------------------------------------------------------------------+ //| OnTick Function | //+------------------------------------------------------------------+ void OnTick() { (...) // 1) fetch aligned close arrays for laggard and leader int bars = MathMax(InpWindow + InpLagBars + 5, 200); double laggClose[]; double leadClose[]; ArraySetAsSeries(laggClose, true); ArraySetAsSeries(leadClose, true); if(CopyClose(InpLaggardSymbol, InpTimeframe, 0, bars, laggClose) <= 0 || CopyClose(InpLeaderSymbol, InpTimeframe, 0, bars, leadClose) <= 0) { return; }

The required alignment between the two-symbol prices is implemented with the MQL5 built-in CopyClose function.

// 2) Build shifted leader series (lag by InpLagBars) double leadShifted[]; ArrayResize(leadShifted, ArraySize(leadClose)); for(int i = 0; i < ArraySize(leadClose); i++) leadShifted[i] = (i + InpLagBars < ArraySize(leadClose)) ? leadClose[i + InpLagBars] : 0.0;

The 5-bar lag is implemented by adding the value of InpLagBars to the leadClose array index.

// 3) compute rolling regression slope (beta), residual, mean, var, zscore int idx = 0; // current bar index 0 if(ArraySize(laggClose) < InpWindow + InpLagBars + 1) return; int valid = InpWindow; // compute beta at current bar (using most recent up to InpWindow) double sumX = 0, sumY = 0, sumXY = 0, sumX2 = 0; int n = 0; for(int j = idx; j < idx + InpWindow; j++) // AsSeries so 0==latest { double x = leadShifted[j]; double y = laggClose[j]; if(x == 0.0) continue; // skip misaligned values sumX += x; sumY += y; sumXY += x * y; sumX2 += x * x; n++; } if(n < 2) return; double beta = (n * sumXY - sumX * sumY) / (n * sumX2 - sumX * sumX);

In the Expert Advisor, the REGR_SLOPE() built-in function, which we used to obtain Beta in SQL, is replaced by a simple linear regression model.

// rolling residual stats over InpWindow double mean = 0, var = 0; int count = 0; for(int k = idx; k < idx + InpWindow; k++) { double x = laggClose[k] - beta * leadShifted[k]; if(!IsFiniteDouble(x)) continue; mean += x; var += x * x; count++; } if(count < 2) return; mean /= count; var = var / count - mean * mean; if(var < 1e-12) var = 1e-12; double zscore = (resid - mean) / MathSqrt(var); // 4) position logic double wantPos = 0.0; if(zscore < -InpZOpen) wantPos = +1.0; else if(zscore > +InpZOpen) wantPos = -1.0; else if(MathAbs(zscore) < InpZClose) wantPos = 0.0; else { if(positionLong) wantPos = 1.0; if(positionShort) wantPos = -1.0; } ManagePosition(wantPos); }

The enter/exit logic is based on the z-score threshold set in the input parameters InpZOpen and InpZClose. The var wantPos +1 means buy the laggard/follower; wantPos -1 means sell it; and wantPos 0 means do nothing. InpZOpen and InpZClose are the main defaults subject to optimization, along with the InpWindow.

//+------------------------------------------------------------------+ //| Close positions by opposite signal | //+------------------------------------------------------------------+ void ManagePosition(double wantPos) { int total = PositionsTotal(); bool hasLong = false; bool hasShort = false; for(int i = 0; i < total; i++) { ulong posTicket = PositionGetTicket(i); if(PositionGetString(POSITION_SYMBOL) != InpLaggardSymbol) continue; ENUM_POSITION_TYPE ptype = (ENUM_POSITION_TYPE)PositionGetInteger(POSITION_TYPE); if(ptype == POSITION_TYPE_BUY) hasLong = true; if(ptype == POSITION_TYPE_SELL) hasShort = true; } // close opposite positions if(wantPos == 0.0) { if(hasLong) ClosePosition(POSITION_TYPE_BUY); if(hasShort) ClosePosition(POSITION_TYPE_SELL); positionLong = positionShort = false; return; } if(wantPos > 0 && !hasLong) { if(hasShort) ClosePosition(POSITION_TYPE_SELL); OpenPosition(POSITION_TYPE_BUY); positionLong = true; positionShort = false; } else if(wantPos < 0 && !hasShort) { if(hasLong) ClosePosition(POSITION_TYPE_BUY); OpenPosition(POSITION_TYPE_SELL); positionLong = false; positionShort = true; } }

Positions are closed by the opposite signal.

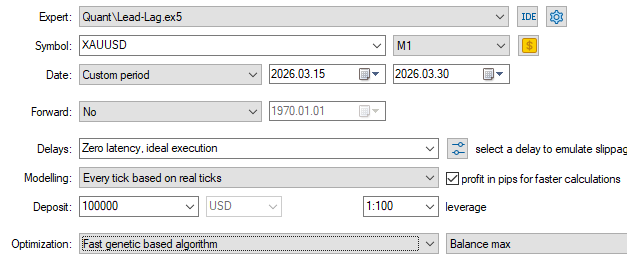

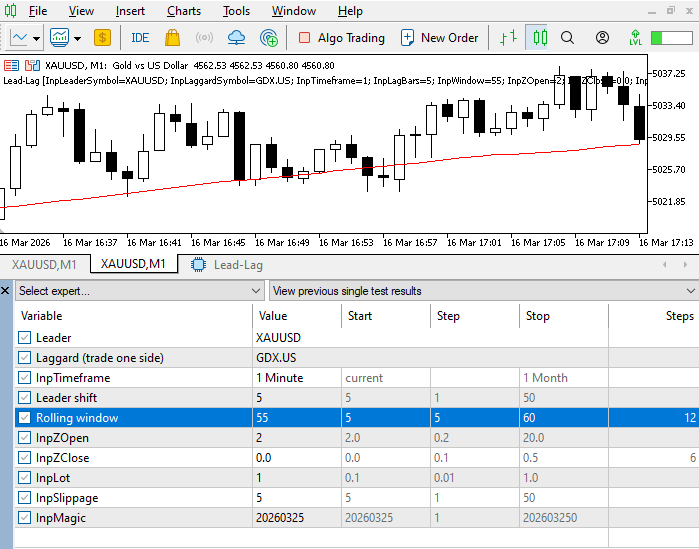

These are the settings used in the backtest. The respective *.set file is attached.

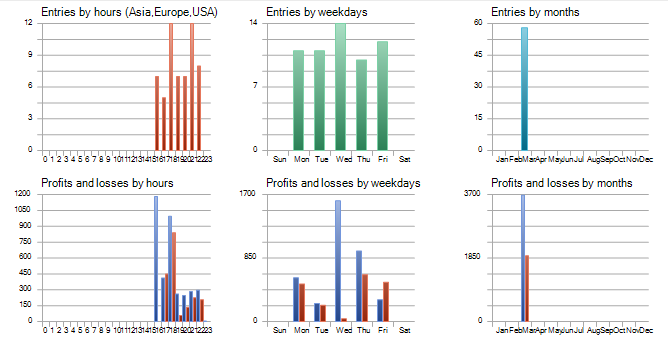

Fig. 2. MetaTester settings for lead/lag backtest of XAUUSD (gold spot) and GDX.US ETF

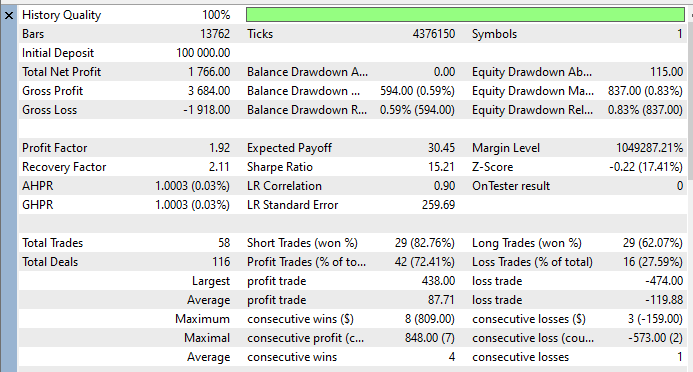

Fig. 3. MetaTester statistics for lead/lag backtest of XAUUSD (gold spot) and GDX.US ETF.

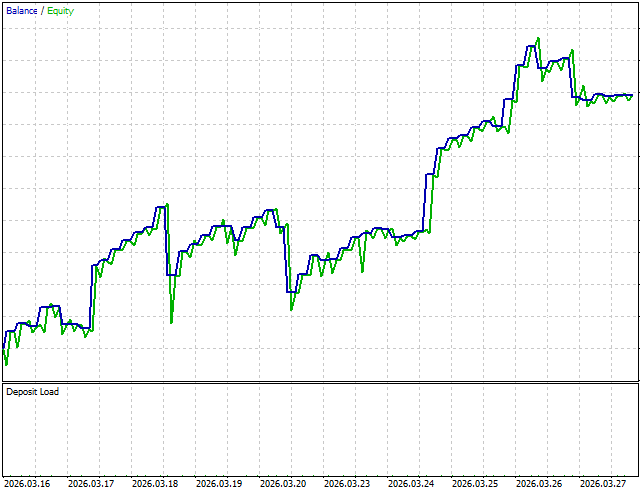

Fig. 4. MetaTester graph for lead/lag backtest of XAUUSD (gold spot) and GDX.US ETF

Fig. 5. MetaTester trading times for lead/lag backtest of XAUUSD (gold spot) and GDX.US ETF

Note that we’ve been trading on USA session times only, because of GDX.US, which is traded primarily on the NYSE. Also, maybe we should backtest a weekday filter for trading only on Wednesdays.

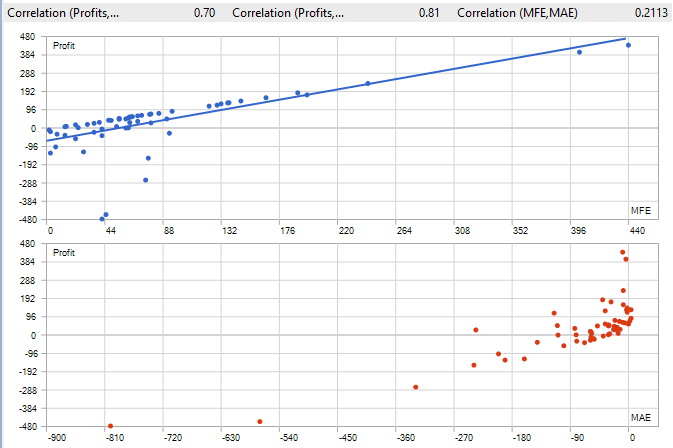

Fig. 6. MetaTester correlation (MFE, MAE) for lead/lag backtest of XAUUSD (gold spot) and GDX.US ETF

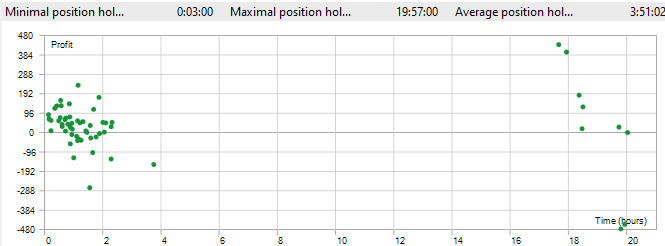

Fig. 7. MetaTester position holding times for lead/lag backtest of XAUUSD (gold spot) and GDX.US ETF

Optimization

We optimized for the rolling window lookback period and for the z-score threshold for closing positions. These were the inputs. The respective *.ini file is attached.

Fig. 8. MetaTester optimization inputs for lead/lag backtest of XAUUSD (gold spot) and GDX.US ETF

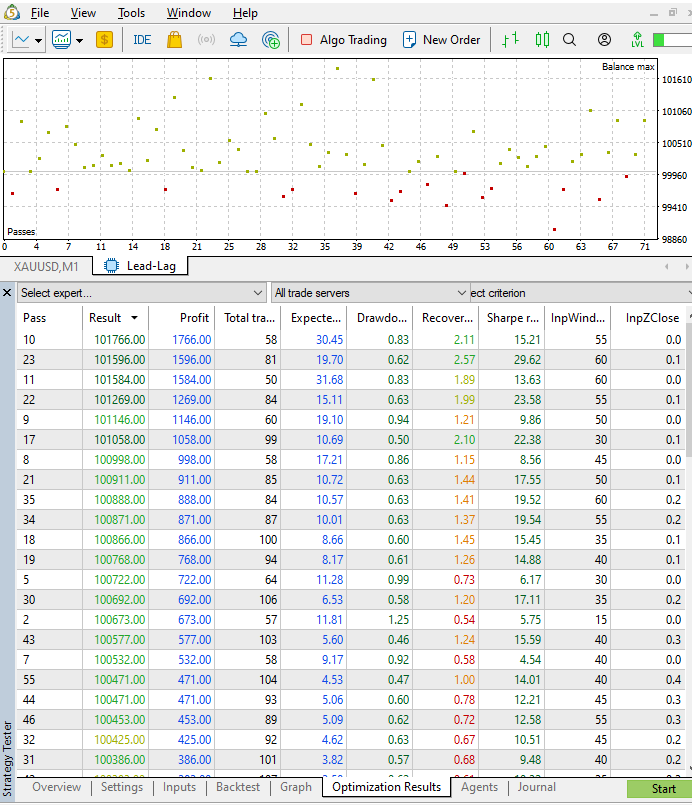

These were the optimization results. We chose the first one (pass 10) to run the single test with the results above.

Fig. 9. MetaTester optimization results for lead/lag backtest of XAUUSD (gold spot) and GDX.US ETF

Please take into account that the results don't include the transaction costs (commissions, XAUUSD swaps, etc.).

Conclusion

In this article, we presented a complete lead/lag analysis pipeline, from the leader/follower relationship discovery, through the pure SQL backtest filtering, to the final Metatrader 5 backtest.

We used a columnar database’s vectorized execution and strict window ordering to perform lead/lag screenings across dozens of assets in seconds. This deterministic approach ensures that our research results are reproducible, as is required by any quantitative trading strategy.

We focus on three common mistakes in lead/lag diffusion queries: missing ORDER BY in window functions, missing deterministic row numbering, and timestamp misalignment between series.

Finally, we used a sample of well-known and already ranked cointegrated stocks to show a very brief, but practical analysis example, which might have exposed a potential trading opportunity. However, the lack of a correlation peak in non-zero lag was indicative that the lag was not tradeable. So, we chose a pair that has had this correlation peak in non-zero lag in the last 30 days and ran a backtest for the last two weeks, optimizing the rolling windows period and the position exit z-score threshold for better results.

| Filename | Description |

|---|---|

| cross_correlation_synth.py | Python script for simulating cross-correlation with synthetic data |

| non_determinism_synth.py | Python script for simulating SQL non-deterministic ordering with synthetic data |

| lead_lag_analyser.py | Python script for running the Lead/Lag analysis |

| lead_lag_backtest.sql | SQL file for running a pure SQL backtest |

| Lead_Lag.mq5 | Expert Advisor MQL5 source file |

| tester-set-lead-lag-xauusd-gdxus.ini | Tester settings INI file |

| expert-set-lead-lag-xauusd-gdxus-opt-params.set | Optimization parameters SET file |

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.

MetaTrader 5 Machine Learning Blueprint (Part 11): Kelly Criterion, Prop Firm Integration, and CPCV Dynamic Backtesting

MetaTrader 5 Machine Learning Blueprint (Part 11): Kelly Criterion, Prop Firm Integration, and CPCV Dynamic Backtesting

Market Simulation (Part 20): First steps with SQL (III)

Market Simulation (Part 20): First steps with SQL (III)

One-Dimensional Singular Spectrum Analysis

One-Dimensional Singular Spectrum Analysis

Swing Extremes and Pullbacks in MQL5 (Part 3): Defining Structural Validity Beyond Simple Highs/Lows

Swing Extremes and Pullbacks in MQL5 (Part 3): Defining Structural Validity Beyond Simple Highs/Lows

- Free trading apps

- Over 8,000 signals for copying

- Economic news for exploring financial markets

You agree to website policy and terms of use