From Matrices to Models: How to Build an ML Pipeline in MQL5 and Export It to ONNX

Introduction

What makes machine learning (ML) in the terminal intimidating is not so much the model itself as everything around it. It might seem that we cannot do without a separate stack, Python scripts and complex integration. As a result, ML starts to feel like something external to MetaTrader 5.

But once we remove the extra layers, the picture becomes clearer. Any ML pipeline is a sequence of basic operations: generate features, scale them, remove redundancy, and pass the result to the model. This is not the magic of neural networks, but ordinary linear algebra.

And here comes the key point. Data preparation is an MQL5 task. It is in the terminal that features are formed, normalization is performed and, if necessary, PCA (Principal Component Analysis) is applied to reduce the dimensionality of data and remove correlated noise. That is, the entire data path to the model must be reproduced where the model is used. Without this, the matching of results is impossible.

This is critical because any attempt to partially transfer the pipeline breaks consistency. In Python, data goes one way, while in the terminal it goes another. The difference may be minimal, but for the model this is already a different space. As a result, the signal does not disappear - it is simply distorted.

At this point, the role of Python becomes much simpler and more precisely defined. It is used to train the model and calculate the parameters: normalization, PCA and the weights themselves. After this the model is exported to ONNX (Open Neural Network Exchange), a format that preserves the computational graph and allows the model to be transferred without rewriting the code.

Then everything is decided by MQL5. The ONNX model performs calculations but does not control the input. This means that it is in the terminal that it is necessary to reproduce the same data path used during training.

This is where matrices come to the fore. The built-in matrix and vector types allow us to describe all transformations directly, just as they are defined in math. Normalization, projection, input preparation - all this is performed as a sequence of linear operations without intermediate distortions. The code does not interpret equations, but repeats them.

It is at this moment that the sense of complexity disappears. ML ceases to look like a set of disparate technologies and turns into a sequence of clear steps, completely controlled within the terminal.

In this article we will walk through this path step by step, without unnecessary theory, using matrices and vectors as the main tool. The focus is on the main goal: how to achieve results that are reproducible both in training and in a real trading environment.

Matrices as a pipeline basis: From features to data space

Market data is a continuous stream of prices. But the model itself does not work directly with it. It needs a pre-prepared set of features: returns, ranges, deviations, lags. And here a practical question immediately arises: keep each feature separately or collect them into a single structure. In practice, the second path turns out to be much more stable.

Therefore, it is convenient to collect the features into a matrix X, whose rows correspond to observations and whose columns correspond to features. From this point on, the data ceases to be a scattering of individual values and becomes a single object for sequential processing. Built-in MQL5 matrix operations allow transformations to be performed on the entire data space at once, rather than on individual values. This changes not only the code, but also the handling logic.

This is especially important for normalization. Market features almost always exist on different scales: price, range, returns, deviation, volume. If they are not brought into a comparable form, the model will begin to respond to the magnitude of the numbers, and not to the signal structure. Therefore, normalization is a mandatory step in data preparation, and it is more convenient to handle it on the MQL5 side. Here the built-in matrix and vector types allow us to obtain market series directly and then apply statistical operations like Mean and Std to the prepared matrix.

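    matrix<double> vRates;
    //--- load OHLC bars, one bar per row
    if(!vRates.CopyRates(cSymbol.Name(), PERIOD_CURRENT, COPY_RATES_OHLC | COPY_RATES_VERTICAL, 1, HistoryBars))
      {
       PrintFormat("Error of load rates %d", GetLastError());
       return;
      }
    //--- write the column statistics into the first row of zero matrices
    matrix<double> means = matrix<double>::Zeros(vRates.Rows(), vRates.Cols());
    matrix<double> STDs = matrix<double>::Zeros(vRates.Rows(), vRates.Cols());
    means.Row(vRates.Mean(0), 0);
    STDs.Row(vRates.Std(0) + DBL_EPSILON, 0);
    //--- the cumulative sum along axis 0 copies the first row into every row
    means = means.CumSum(0);
    STDs = STDs.CumSum(0);
    //--- element-wise standardization of every column
    vRates = (vRates - means) / STDs;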
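For cross-checking on the Python side, the same column-wise standardization can be sketched in NumPy. This is a minimal illustration and not part of the article's script: the toy array `rates` and the helper name `zscore_columns` are assumptions introduced here, and NumPy broadcasting takes the place of the `Zeros` plus `CumSum` row replication used in the MQL5 fragment.

```python
import numpy as np

def zscore_columns(x: np.ndarray, eps: float = np.finfo(float).eps) -> np.ndarray:
    """Standardize each column: subtract its mean, divide by its std (+eps)."""
    mean = x.mean(axis=0)        # one mean per column (feature)
    std = x.std(axis=0) + eps    # eps guards against a zero-variance column
    return (x - mean) / std      # broadcasting applies the statistics to every row

# Toy OHLC-like matrix: rows are bars, columns are price fields (made-up numbers).
rates = np.array([
    [1.1000, 1.1010, 1.0990, 1.1005],
    [1.1005, 1.1030, 1.1000, 1.1020],
    [1.1020, 1.1025, 1.0995, 1.1000],
    [1.1000, 1.1015, 1.0980, 1.0990],
])

normalized = zscore_columns(rates)
print(normalized.mean(axis=0))  # close to 0 for every column
print(normalized.std(axis=0))   # close to 1 for every column
```

NumPy's default `std` is the population estimate, which is also what scikit-learn's `StandardScaler` uses. Whether a given MQL5 build computes `Std` the same way is worth verifying on a small matrix before relying on an exact match.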

At first glance, it may seem that normalization is easier to hide inside the ONNX model. But this solution has its limits. In MQL5, ONNX is conceived primarily as an execution layer. The model is loaded via OnnxCreate. Then its input and output shapes are specified. After that, it is launched via OnnxRun. This is great for inference, but it does not provide the same convenience and transparency for managing the input space. When normalization is set in MQL5, it is easier to test, repeat, change and compare it with real market data directly in the tester or on the chart.

There is one more important point. When we change the symbol or timeframe, the data distribution changes. If normalization is embedded in the ONNX graph, the model turns out to be rigidly tied to the old input distribution. Then any significant change in the environment requires not just targeted adjustments, but a full retraining and re-export. If normalization is set in MQL5, it is enough to update its parameters and feed the model with data of the required scale. This preserves the input structure and allows us to do without completely rebuilding the entire model.

This is why the external matrix layer in the terminal gives more flexibility than trying to close everything inside ONNX. In Python, the model is trained once. In MQL5, the data is transformed consistently every time. This is the strength of the architecture: the model remains a model, and the matrices and vectors in the terminal take on the work of preparing and controlling the input.

Compression and stabilization: PCA as an extension of matrix logic

Market indicators are almost never independent. Price, returns, range, deviations - all these are different projections of the same movement. As a result, redundancy arises within the data: several features describe the same market behavior, only from different angles. For the model, this is not a signal enhancement, but a source of noise and instability.

This is where PCA (Principal Component Analysis) becomes the natural continuation of the matrix approach. The idea behind its use is simple: do not try to feed the model everything at once, but rather collect information into more compact components that capture most of the variance in the data.

From a mathematical point of view, nothing new appears. It is the same linear algebra. The feature matrix X is centered and multiplied by the matrix of eigenvectors of its covariance matrix. The result is a new space in which the features are uncorrelated components of market change.
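The centering and projection described here can be sketched in a few lines of NumPy. This is an illustration on synthetic data, not the article's training script: the random features, the number of retained components `k` and all variable names are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic, deliberately correlated features (illustrative data only).
base = rng.normal(size=(500, 1))
X = np.hstack([
    base + 0.05 * rng.normal(size=(500, 1)),
    2.0 * base + 0.05 * rng.normal(size=(500, 1)),
    rng.normal(size=(500, 1)),
])

Xc = X - X.mean(axis=0)                  # center each feature
cov = (Xc.T @ Xc) / (len(Xc) - 1)        # sample covariance matrix
eigvals, eigvecs = np.linalg.eigh(cov)   # eigh: symmetric input, ascending eigenvalues
order = np.argsort(eigvals)[::-1]        # reorder by explained variance, descending
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

k = 2
Z = Xc @ eigvecs[:, :k]                  # project onto the top-k directions

explained = eigvals[:k].sum() / eigvals.sum()
print(f"explained variance with {k} components: {explained:.4f}")
print(np.round(np.cov(Z, rowvar=False), 6))  # off-diagonal terms are close to 0
```

The near-zero off-diagonal terms in the covariance of `Z` are what "removing correlation" means in practice: the two strongly correlated input columns collapse into a single dominant component.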

And the important point here is not the equation, but the effect. PCA solves two problems at once. On the one hand, it compresses feature space removing unnecessary dimensions. On the other hand, it stabilizes data, because noise and correlations no longer scatter the signal between a set of variables. The model begins to work with a cleaner data structure.

The practical result is felt immediately. Training becomes more robust, model behavior becomes more predictable, and sensitivity to small and random fluctuations decreases. Moreover, the code does not become more complicated at all. All that changes is another matrix operation on the already prepared X.

And here it is important to maintain the same architectural logic as before. PCA is not implemented as separate calculations and loops. It remains part of a single matrix pipeline in MQL5.

As a result, the data is not only brought to a single scale, but also becomes structurally simpler. It loses redundancy but retains the information core. This is the key effect of PCA in the context of trading: the model gets less noise but more meaning.

This is how the next level of the pipeline is formed. After normalizing the data, we organize its structure. And the cleaner this space becomes, the more stable the model behaves.

Model and ONNX: From training to execution

Once the data space has been cleaned and brought to a stable form, the pipeline logic naturally moves to the next step - the model itself. But it is important to immediately establish the principle: the model here is not the center of the system, but its final expression.

Training takes place outside the terminal, in Python. This is not a random choice, but a question of convenience and ecosystem. Model training consists of two clearly separated stages, and this is what determines the stability of the entire system.

At the first stage, the entire volume of the training set is used. Here, the model itself is not yet being built; rather, its coordinate system is formed. Normalization parameters are calculated: mean values and standard deviations for each feature. PCA is then fitted on the standardized data. It specifies a transformation of the original feature space into a more compact and ordered representation.

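    from sklearn.preprocessing import StandardScaler
    from sklearn.decomposition import PCA

    # Standardize features and apply PCA to retain 99% variance
    # (NComponents is defined elsewhere in the script)
    scaler = StandardScaler()
    X_scaled = scaler.fit_transform(X)
    pca = PCA(n_components=NComponents, random_state=42)
    X_pca = pca.fit_transform(X_scaled)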

The data preparation stage is critical. Essentially, the space the model will work in is fixed here. Any deviation at this level is automatically carried forward. Therefore, it determines the entire subsequent stability of the system.
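Since the terminal later has to load exactly these parameters, they need to be written to a file in a layout both sides agree on. The sketch below shows one possible layout. The file format, the function names `save_params` and `load_params`, and the file name are hypothetical and are not taken from the article's script. The only firm requirement is that the MQL5 reader consumes the values in the same order and with the same types.

```python
import os
import struct
import tempfile
import numpy as np

def save_params(path, mean, scale, pca_mean, components, evr):
    """Write preprocessing parameters in a simple little-endian layout (hypothetical):
    int32 n_features, int32 n_components, then float32 arrays in a fixed order."""
    n_components, n_features = components.shape
    with open(path, "wb") as f:
        f.write(struct.pack("<ii", n_features, n_components))
        for arr in (mean, scale, pca_mean, components, evr):
            f.write(np.asarray(arr, dtype="<f4").tobytes(order="C"))

def load_params(path):
    """Read the file back in the same order it was written."""
    with open(path, "rb") as f:
        n_features, n_components = struct.unpack("<ii", f.read(8))

        def read(count):
            return np.frombuffer(f.read(4 * count), dtype="<f4")

        mean = read(n_features)
        scale = read(n_features)
        pca_mean = read(n_features)
        components = read(n_components * n_features).reshape(n_components, n_features)
        evr = read(n_components)
    return mean, scale, pca_mean, components, evr

# Stand-in values. With scikit-learn they would come from scaler.mean_, scaler.scale_,
# pca.mean_, pca.components_ and pca.explained_variance_ratio_.
rng = np.random.default_rng(1)
mean, scale = rng.normal(size=5), rng.uniform(0.5, 2.0, size=5)
pca_mean, components = np.zeros(5), rng.normal(size=(3, 5))
evr = np.array([0.6, 0.3, 0.1])

path = os.path.join(tempfile.mkdtemp(), "pca_params.bin")
save_params(path, mean, scale, pca_mean, components, evr)
loaded = load_params(path)
print(loaded[3].shape)  # (3, 5)
```

Storing the two sizes first lets the reader allocate its vectors and matrix before reading the data, and a round trip in Python is a cheap way to catch ordering mistakes before the file reaches the terminal.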

Once the data space is fixed, the transition to the second stage occurs. The model is assembled as a very specific mechanism. By this point, the features have been normalized and have passed PCA, so that a coherent, dense representation of the market is fed into the network. This is an important point: LSTM does not have to sort out the chaos of initial values. It immediately works with the space that has been prepared for it.

First, the data is converted into a format convenient for PyTorch.

    import numpy as np
    import torch

    # --- LSTM training block with PyTorch ---
    # Prepare data for LSTM: each sample is treated as a sequence of length 1
    X_np = X_pca.astype(np.float32)
    y_np = y.to_numpy(dtype=float)
    n_sequences = X_np.shape[0]
    if n_sequences <= 0:
        print("Not enough samples for sequence windows; reduce window_size or collect more data.")
        quit()
    X_tensor = torch.tensor(X_np, dtype=torch.float32).unsqueeze(1)  # (n_samples, 1, n_features)
    y_tensor = torch.tensor(y_np.reshape(-1, 1), dtype=torch.float32)

Nothing sophisticated happens at this stage, but this is where the technical groundwork for everything that follows is laid. The array is turned into a tensor and an extra dimension is added to it. It is a small detail, but for LSTM it is fundamental: the network expects not just a set of features, but a sequence. Even if the sequence length is one, the input shape itself corresponds to the architecture of a recurrent network.
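The shape change is easy to inspect in NumPy. The first part of the sketch mirrors the length-one sequence used in the article. The second part is a hypothetical extension showing how longer rolling windows could be formed, and the `window` value is an assumption made only for illustration.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

n_samples, n_features = 6, 3
X = np.arange(n_samples * n_features, dtype=np.float32).reshape(n_samples, n_features)

# Sequence length 1: the NumPy counterpart of unsqueeze(1).
X_seq1 = X[:, np.newaxis, :]
print(X_seq1.shape)  # (6, 1, 3)

# Hypothetical extension: rolling windows of `window` consecutive rows.
window = 4
windows = sliding_window_view(X, window_shape=window, axis=0)  # (3, n_features, window)
X_seqw = np.ascontiguousarray(windows.transpose(0, 2, 1))      # (3, window, n_features)
print(X_seqw.shape)  # (3, 4, 3)
```

With longer windows the ONNX input shape on the MQL5 side would have to change from `{1, 1, n}` to `{1, window, n}`, and the terminal would have to keep the last `window` projected vectors.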

After this, the data is divided into training and validation parts. A seemingly ordinary step here plays an important role: the model does not just remember history, but gets the opportunity to test itself on a held-out portion of the dataset. This is how you can tell whether it is learning to find a pattern or just repeating the noise.

    from torch.utils.data import TensorDataset, DataLoader

    # Train/validation split (chronological, no shuffling)
    split = int(Train_test_split * len(X_tensor))
    train_ds = TensorDataset(X_tensor[:split], y_tensor[:split])
    val_ds = TensorDataset(X_tensor[split:], y_tensor[split:])
    train_loader = DataLoader(train_ds, batch_size=Batch_size, shuffle=False)
    val_loader = DataLoader(val_ds, batch_size=Batch_size, shuffle=False)
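If the scaler and PCA are fitted on all rows before this split, the validation rows influence the statistics. A stricter variant fits the statistics on the in-sample part only and reuses them for the held-out part. The sketch below illustrates that ordering with plain NumPy. It is a variant shown under assumptions, not the article's script, and `train_fraction` and the synthetic data are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)
X = rng.normal(loc=[0.0, 5.0], scale=[1.0, 3.0], size=(200, 2))  # illustrative features

train_fraction = 0.8                     # hypothetical split ratio
split = int(train_fraction * len(X))
X_train, X_val = X[:split], X[split:]    # chronological split, no shuffling

# Statistics come from the in-sample part only.
mean = X_train.mean(axis=0)
scale = X_train.std(axis=0)

X_train_n = (X_train - mean) / scale
X_val_n = (X_val - mean) / scale         # validation reuses the training statistics

print(X_train_n.mean(axis=0))  # close to 0 by construction
print(X_val_n.mean(axis=0))    # generally not exactly 0
```

The same ordering applies to PCA: the components would be computed from `X_train_n` and then applied unchanged to `X_val_n`, which is also how the terminal treats every new bar.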

The data is then fed into DataLoader. And here the process takes on a working rhythm. Instead of feeding the network one example at a time, we serve it in batches. This makes training more stable and significantly easier to optimize.

Next, the model itself is built. The important thing here is not to overload it with unnecessary complexity. In the code, this is a neat, compact architecture: a few recurrent layers, a fixed-size hidden state, Dropout to reduce overfitting and a final linear layer that converts the internal state of the network into a single prediction number.

    import torch.nn as nn

    class LSTMRegressor(nn.Module):
        def __init__(self, input_size, hidden_size=64, num_layers=3, dropout=0.1):
            super().__init__()
            self.lstm = nn.LSTM(input_size=input_size, hidden_size=hidden_size,
                                num_layers=num_layers, batch_first=True, dropout=dropout)
            self.fc = nn.Linear(hidden_size, 1)

        def forward(self, x):
            # x: (batch, seq_len, input_size)
            out, (hn, cn) = self.lstm(x)
            last_h = hn[-1]  # hidden state of the top layer at the last time step
            return self.fc(last_h)

    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    print(f"Using device: {device}")
    model = LSTMRegressor(input_size=X_np.shape[1], hidden_size=64, num_layers=Layers, dropout=0.2).to(device)

The logic is simple, but very expressive: the network first collects the meaning and then formulates the answer.

The model is moved to an available device. If there is GPU, the calculations go there. If not, everything works on CPU. For financial data, this is not a luxury, but a normal engineering practice: sequential calculations and large data sets quickly become heavy, and acceleration really matters here.

After that, the Adam optimizer and the MSELoss loss function are configured.

    optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)
    criterion = nn.MSELoss()

Only at this stage does real training begin. Up to this point, we have only carefully built the scene: prepared the data, defined the input shape, assembled the architecture, and configured the weight update mechanism.

    for epoch in range(1, Epochs + 1):
        # training pass
        model.train()
        train_loss = 0.0
        for xb, yb in train_loader:
            xb, yb = xb.to(device), yb.to(device)
            optimizer.zero_grad()
            preds = model(xb)
            loss = criterion(preds, yb)
            loss.backward()
            optimizer.step()
            train_loss += loss.item() * xb.size(0)
        train_loss /= len(train_loader.dataset)

        # validation pass without gradient tracking
        model.eval()
        val_loss = 0.0
        with torch.no_grad():
            for xb, yb in val_loader:
                xb, yb = xb.to(device), yb.to(device)
                preds = model(xb)
                loss = criterion(preds, yb)
                val_loss += loss.item() * xb.size(0)
        val_loss /= len(val_loader.dataset) if len(val_loader.dataset) > 0 else float('nan')
        print(f"Epoch {epoch}/{Epochs} - train_loss: {train_loss:.6f} val_loss: {val_loss:.6f}")
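One detail of the loop is worth a short numeric check: each batch loss is multiplied by the batch size before it is accumulated, and the total is divided by the dataset size. With a mean-reduced loss such as the default `MSELoss`, this yields the exact per-sample average even when the last batch is shorter. The sketch below reproduces the arithmetic with made-up per-sample losses; none of the numbers come from the article.

```python
import numpy as np

rng = np.random.default_rng(3)
per_sample_loss = rng.uniform(0.0, 1.0, size=10)  # stand-in for squared errors
batch_size = 4                                    # gives batches of 4, 4 and 2 samples

weighted_sum = 0.0
batch_means = []
for start in range(0, len(per_sample_loss), batch_size):
    batch = per_sample_loss[start:start + batch_size]
    batch_mean = batch.mean()                # what a mean-reduced loss returns
    weighted_sum += batch_mean * len(batch)  # same role as loss.item() * xb.size(0)
    batch_means.append(batch_mean)

epoch_loss = weighted_sum / len(per_sample_loss)
naive_loss = float(np.mean(batch_means))     # over-weights the short last batch

print(epoch_loss, per_sample_loss.mean())    # these two agree
print(naive_loss)                            # this one usually differs slightly
```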

The strength of the pipeline lies in the sequence of steps: data preparation → model assembly → training. This order simplifies the transfer to MQL5 via ONNX without losing input consistency.

During training, the behavioral part of the system is formed: the model weights, its response to the analyzed data, and the forecasting structure. But it is important to understand that the model is trained not on raw data, but on the already prepared and compressed representation.

The result is two blocks that cannot be mixed. The first is the statistical basis: normalization and PCA. The second is the model itself, trained in this space. Together they form a single data flow pipeline.

After training, the model is exported to ONNX. The format captures the computational graph and makes the model portable.

    import os

    # Try exporting to ONNX
    try:
        onnx_path = os.path.join(data_path, 'MQL5', 'Files', 'lstm3.onnx')
        # dummy input fixes the exported shape: (batch=1, seq_len=1, n_components)
        dummy_input = torch.randn(1, 1, X_np.shape[1], device=device)
        model.eval()
        torch.onnx.export(
            model,
            dummy_input,
            onnx_path,
            input_names=['PCA_features'],
            output_names=['Forecast'],
            opset_version=18,
            dynamo=True,
            external_data=False,
            verify=True
        )
        print(f"Exported model to ONNX: {onnx_path}")
    except Exception as e:
        print("ONNX export failed:", e)

ONNX remains a container of computations in the architecture. It stores the model as a fixed function, ready for execution in MQL5 via OnnxCreate and OnnxRun. But the entire data preparation logic remains outside ONNX and is strictly repeated in the terminal through matrices and vectors.

That is why Python plays a strictly limited role in this structure. It is needed once - to train the model and fix its parameters. After the export, it is no longer involved in the decision-making. All further work is transferred to the terminal.

This is how a clear division of roles is formed. Python is responsible for building the model. ONNX captures it as a computational object. MQL5 provides reproduction of the input pipeline and inference execution. And the more accurately this division is observed, the more stable the system behaves in a real trading environment.

Reproducing and controlling data in MQL5

After exporting the model to ONNX, the work is transferred to the terminal. And here it is especially clear why matrices and vectors were key elements of the entire architecture from the very beginning. In this structure, MQL5 reproduces the entire data preparation path the model was trained on.

And here we must immediately distinguish between two different modes. The first one is initialization, which is performed once when the EA is launched. The second one is a runtime loop repeated on each new bar. This division is very important because it keeps the computation stable. Everything that can be prepared in advance should be. Only the live market logic should remain in the per-bar cycle.

At the initialization stage, MQL5 sets up the entire infrastructure the model relies on. The normalization parameters, the PCA space center, and the component matrix are loaded first.

    if(!LoadBinaryParams(sFileName, vMeans, vScales, vPCAmeans, mPCAcomponents, vEvr, iNFeatures, iNComponents))
      {
       PrintFormat("Error of load PCA params: %d", GetLastError());
       return INIT_FAILED;
      }
    PrintFormat("Loaded PCA params: features=%d components=%d", iNFeatures, iNComponents);

Here it is worth paying attention to one important detail. The normalization parameters are loaded into vectors, while the PCA components are loaded into a matrix. This looks natural, but it also shows the structure of the data preparation well.

Means and standard deviations are univariate statistics. Each feature has its own mean and deviation. Therefore, they are represented as vectors. Each element of such a vector corresponds to a separate feature of the input space. The logic is linear and transparent.

With PCA, the situation is different. Here we are not talking about a set of individual ratios, but about the transformation of the entire feature space. This is why the components are loaded into the matrix. And this is no longer just a data container. The matrix defines the rule of transition from the original feature space to the new, compressed component space. Each row here describes the direction of the new axis after a PCA transformation. In fact, the terminal receives a ready-made geometry of space, built in Python on the training set.

This is a very important point for understanding the entire architecture. Vectors are responsible for local operations on individual features - centering and scaling. The matrix is responsible for the collective transformation of the entire data space. The built-in MQL5 matrix and vector types reflect this mathematical structure directly in the code.

Next, the ONNX model is loaded and its input and output dimensions are specified.

    //--- load models
    hONNX = OnnxCreateFromBuffer(model, ONNX_DEFAULT);
    if(hONNX == INVALID_HANDLE)
      {
       Print("OnnxCreateFromBuffer error ", GetLastError());
       return INIT_FAILED;
      }
    const ulong input_state[] = {1, 1, iNComponents};
    if(!OnnxSetInputShape(hONNX, 0, input_state))
      {
       PrintFormat("OnnxSetInputShape error: %d ", GetLastError());
       OnnxRelease(hONNX);
       return INIT_FAILED;
      }
    const ulong output_forecast[] = {1, vForecast.Size()};
    if(!OnnxSetOutputShape(hONNX, 0, output_forecast))
      {
       Print("OnnxSetOutputShape error ", GetLastError());
       OnnxRelease(hONNX);
       return INIT_FAILED;
      }

This is where a very important architectural point comes in. The input size of the model is no longer equal to the number of original features. It is equal to the number of PCA components, the dimensions of the already compressed data space.

This means that the model does not work with a full set of initial indicators and derived values, but with their compact representation after PCA. Noise and excess correlations remain in the original space, while ONNX receives a more stable and concentrated signal.

In practice, this produces several effects at once: the input tensor is smaller, the computing load is lower, and the model's task is simpler. But the main thing is that the input space becomes more stable. The network stops wasting resources on processing mutually duplicate features and begins to work with a denser representation of the market structure.

That is why PCA here is not just a way to reduce dimensionality. It becomes an intermediate layer between raw market data and the model. MQL5 matrices allow us to reproduce this layer directly inside the terminal in almost the same form it existed in Python.

After this, the indicators are connected and the working buffers are prepared.

    //--- Indicators
    if(!ciSMA.Create(Symb.Name(), TimeFrame, 12, 0, MODE_SMA, PRICE_CLOSE))
      {
       Print("SMA create error ", GetLastError());
       OnnxRelease(hONNX);
       return INIT_FAILED;
      }
    ciSMA.BufferResize(2);
    for(uint i = 0; i < ciMACD.Size(); i++)
      {
       if(!ciMACD[i].Create(Symb.Name(), TimeFrame, int(mMACDset[i, 0]), int(mMACDset[i, 1]), int(mMACDset[i, 2]), PRICE_CLOSE))
         {
          PrintFormat("MACD %d create error %d", i, GetLastError());
          OnnxRelease(hONNX);
          return INIT_FAILED;
         }
       ciMACD[i].BufferResize(4);
      }

Taken together, these initialization steps are not just a technical formality. This is the moment when the terminal receives the same statistical basis on which the model was trained in Python. In other words, MQL5 reconstructs the environment in which the prediction generated by the model makes sense.

This is especially evident when loading data preparation parameters. They are not recalculated or selected on site: they arrive at the terminal already prepared, are loaded once and are fixed in the computational structure. This is the strength of the matrix approach. As a result, the terminal works with a model tied to a specific data space.

After this, the work cycle begins. It is of a completely different type now. At each new bar, the EA collects fresh market data.

    ciSMA.Refresh();
    for(uint i = 0; i < ciMACD.Size(); i++)
       ciMACD[i].Refresh();
    if(!vRates.CopyRates(Symb.Name(), TimeFrame, COPY_RATES_CLOSE, 1, 12))
      {
       Print("CopyRates error ", GetLastError());
       return;
      }

The feature vector is generated...

    vInputs[0] = vRates[11] - vRates[10];
    vInputs[1] = (vRates[11] - vRates[0]) / 11;
    vInputs[2] = vInputs[1] - vInputs[0];
    vInputs[3] = float(ciSMA.Main(1));
    for(uint i = 0; i < ciMACD.Size(); i++)
      {
       vInputs[4 + i * 6] = float(ciMACD[i].Main(1));
       vInputs[5 + i * 6] = float(ciMACD[i].Main(1) - ciMACD[i].Main(2));
       vInputs[6 + i * 6] = float(ciMACD[i].Signal(1));
       vInputs[7 + i * 6] = float(ciMACD[i].Signal(1) - ciMACD[i].Signal(2));
       vInputs[8 + i * 6] = vInputs[6 + i * 6] - vInputs[4 + i * 6];
       vInputs[9 + i * 6] = vInputs[7 + i * 6] - vInputs[5 + i * 6];
      }
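For the training side to match, the Python script has to build these features with the same indices and the same arithmetic. The sketch below mirrors the three price-based features and the index layout of the MACD blocks. It assumes the 12 closing prices arrive oldest first, so index 11 is the most recent closed bar, and the helper names `price_features` and `macd_slot` are hypothetical.

```python
import numpy as np

def price_features(close: np.ndarray) -> np.ndarray:
    """Mirror of vInputs[0..2]: expects 12 closes, oldest first (assumption)."""
    if close.shape != (12,):
        raise ValueError("expected exactly 12 closing prices")
    last_step = close[11] - close[10]       # change over the latest bar
    avg_step = (close[11] - close[0]) / 11  # average change per bar over the window
    return np.array([last_step, avg_step, avg_step - last_step], dtype=np.float32)

def macd_slot(i: int) -> range:
    """Indices occupied by the i-th MACD block in the feature vector."""
    return range(4 + 6 * i, 10 + 6 * i)

close = np.linspace(1.1000, 1.1110, 12)  # made-up prices rising 0.0010 per bar
print(price_features(close))
print(list(macd_slot(0)), list(macd_slot(1)))  # [4, 5, 6, 7, 8, 9] [10, 11, 12, 13, 14, 15]
```

With this layout the full vector has 4 + 6 × (number of MACD instances) elements, which must equal the feature count stored with the normalization parameters.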

...and passed through the same transformations that were used during training. First comes normalization, then PCA projection.

    bool TransformPCA(const vector<float> &data,
                      const vector<float> &scaler_mean,
                      const vector<float> &scaler_scale,
                      const vector<float> &pca_mean,
                      const matrix<float> &pca_components,
                      vector<float> &out)
      {
       ulong n = data.Size();
       if(n == 0)
          return false;
    //--- standardize, then center for PCA
       vector<float> centered = (data - scaler_mean) / scaler_scale - pca_mean;
    //--- projection: out = pca_components * centered (matrix * vertical vector)
       out = pca_components.MatMul(centered);
       return true;
      }
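A useful sanity check is to run the same single-vector transformation in Python and compare it with the batch projection used during training. The sketch below does this with NumPy on synthetic data. The parameters are built with an SVD purely for illustration, and the function name `transform_pca` simply mirrors the MQL5 one.

```python
import numpy as np

def transform_pca(data, scaler_mean, scaler_scale, pca_mean, pca_components):
    """Standardize one feature vector, center it for PCA, then project it."""
    centered = (data - scaler_mean) / scaler_scale - pca_mean
    return pca_components @ centered  # (n_components, n_features) @ (n_features,)

# Build reference parameters from synthetic data (illustrative only).
rng = np.random.default_rng(4)
X = rng.normal(size=(300, 5)) @ rng.normal(size=(5, 5))
scaler_mean, scaler_scale = X.mean(axis=0), X.std(axis=0)
Xs = (X - scaler_mean) / scaler_scale
pca_mean = Xs.mean(axis=0)
_, _, vt = np.linalg.svd(Xs - pca_mean, full_matrices=False)
pca_components = vt[:3]  # rows are the principal axes

batch = (Xs - pca_mean) @ pca_components.T  # whole data set at once
single = transform_pca(X[-1], scaler_mean, scaler_scale, pca_mean, pca_components)

print(np.allclose(single, batch[-1]))  # True
```

The terminal works in `float` while this sketch uses double precision, so a comparison between MQL5 output and Python output should use a tolerance rather than exact equality.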

The advantage of MQL5 built-in math is particularly evident here. The code practically repeats the mathematical notation itself. The terminal simply reproduces the same operations used to train the model.

Only after that is the prepared vector passed to ONNX.

    //--- ONNX
    if(!OnnxRun(hONNX, ONNX_LOGLEVEL_INFO, vCompressed, vForecast))
      {
       PrintFormat("OnnxRun error: %d ", GetLastError());
       return;
      }

There is no longer any room for heavy preparation or re-initialization here. Everything works like a conveyor belt. New bar - new input. But the route this input takes remains unchanged.

This is where the practical value of MQL5 built-in matrices and vectors comes into play. They allow us to assemble the entire data structure once, and then reproduce it as many times as needed, without unnecessary fuss and without loss of consistency. Initialization sets the framework. The work cycle simply uses it. This makes the system not only compact, but also disciplined. For ML in the terminal, discipline is more important than flashy words. Because it is discipline which keeps the model in the same space, in which it can really work.

Testing the result: From model to trading behavior

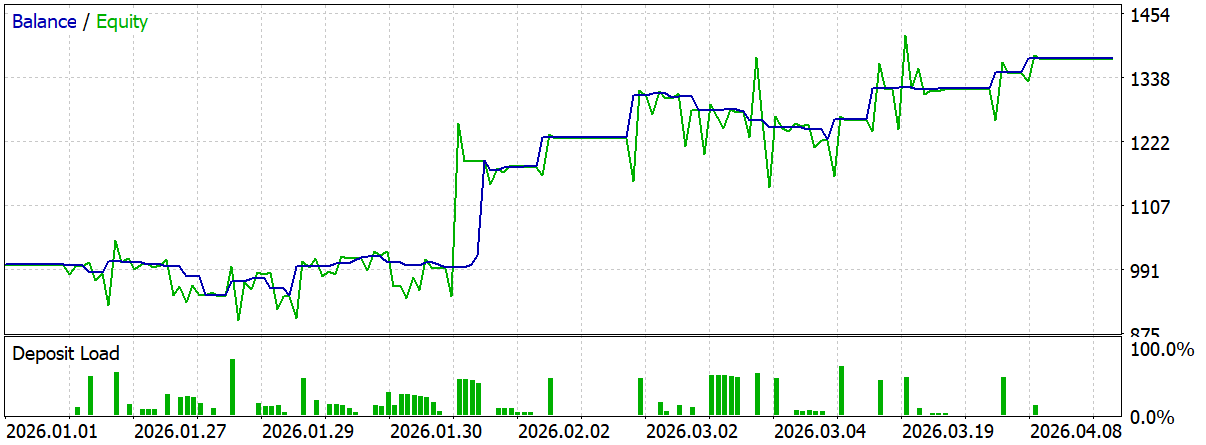

The model was trained in Python, while the computational pipeline is carefully reproduced in MQL5. Normalization and PCA work through matrices and vectors. The ONNX model is loaded into the terminal. All elements are connected into a single system. Now the main question is simple: how will this system behave in the market?

This is where the MetaTrader 5 strategy tester comes into play. It shows not only the fact that the model works, but also how stable the entire ML pipeline is, from data preparation to trading decision. This is a full-fledged test of the entire architecture on real market history.

This is one of the platform’s greatest strengths. In Python, it is easy to obtain good loss function values or high accuracy on the training set. But the market quickly tests the strength of such results. The MetaTrader 5 tester allows us to see this moment immediately. The combination of metrics provided by MetaTrader 5 is important. This is where the tester shows its real value. It allows us to look at strategy as a living system with its own behavior, risk, stability, and internal structure.

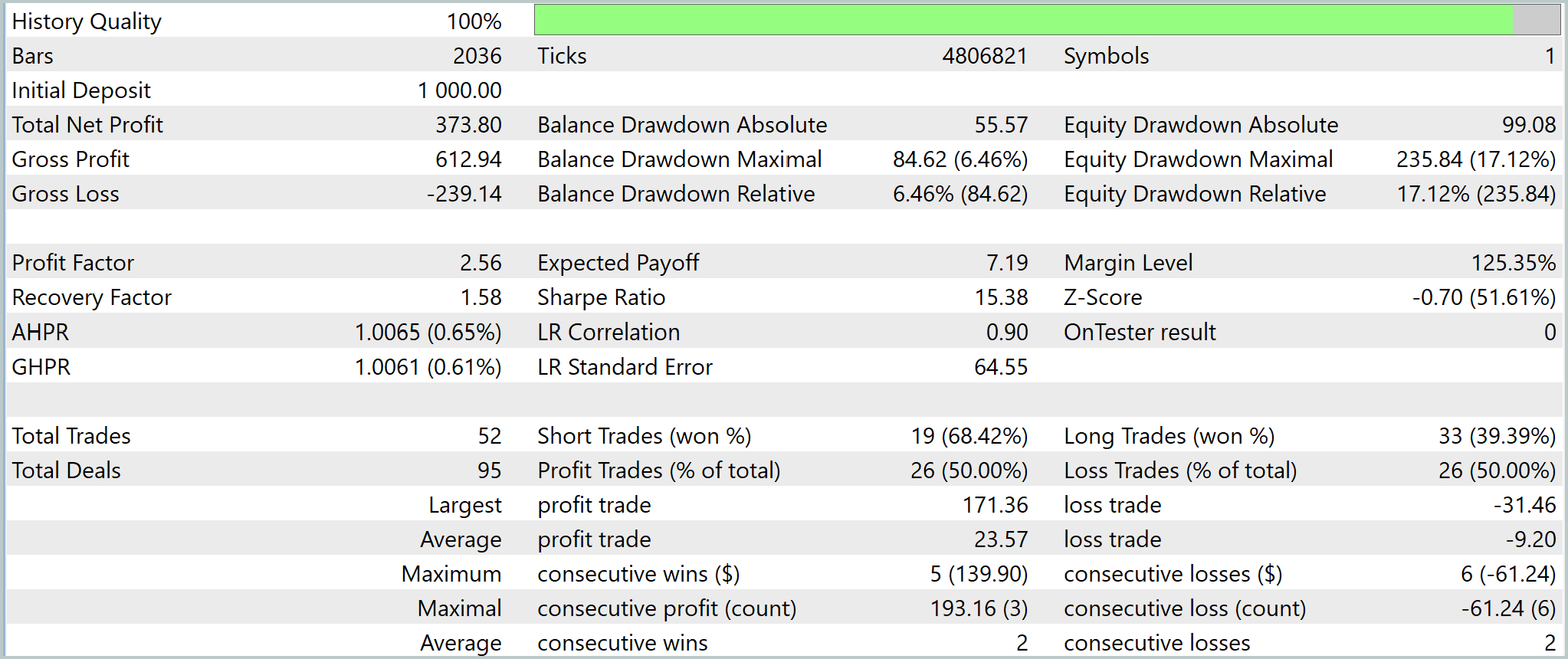

- History Quality is an important starting point.

  The testing conditions remove any doubts about the correctness of the run. The results can be considered technically reliable, which means that metrics analysis is meaningful.

- Financial result (Total Net Profit) amounted to USD 373.80 with an initial deposit of USD 1000.00.

  This means the strategy completed the test with a positive result. However, this metric alone says nothing about the quality of the logic, so it should be read in conjunction with other metrics.

- Gross Profit was USD 612.94, while Gross Loss was USD -239.14.

  This means that profitable trades significantly outweighed losing ones, leading to the positive result. The strategy profits from a consistent predominance of positive outcomes.

- Profit Factor is 2.56.

  This is a strong metric. The profit is more than two and a half times greater than the loss. For a trading system, this is already a sign that positive trades actually outweigh negative ones.

- Expected Payoff was 7.19.

  This means that on average, each trade produced a positive mathematical contribution. The system does not live off one or two successful streaks, but maintains a positive expectation at the trade level.

- Recovery Factor of 1.58 shows to what extent the result justifies the drawdown experienced.

  In this case, the system is able to recover from losses, although the safety margin cannot be called huge. The strategy works, but drawdowns should not be underestimated.

- Sharpe Ratio reached 15.38.

  This is a very strong value, which indicates a high ratio of return to the volatility of outcomes. But here it is important to remember that in the tester this indicator should be read together with drawdowns and trade streaks.

- AHPR was 1.0065, i.e. 0.65%. GHPR was 1.0061, i.e. 0.61%.

  These metrics show the average return per trade in arithmetic and geometric terms. The conclusion here is clear: there is growth, and it is built into the structure of the trade flow.

- Balance Drawdown Absolute was USD 55.57 and Balance Drawdown Maximal was USD 84.62, which corresponds to 6.46% (Balance Drawdown Relative).

  This means that the strategy remained relatively balanced. Closed deals formed an acceptable capital curve.

- Equity Drawdown shows a completely different picture. The absolute drawdown was USD 99.08 and the maximum one was USD 235.84, which corresponds to 17.12%.

  This is an important point: open positions created market exposure, even if the final balance looked moderate. The conclusion here is honest: the strategy is able to make money, but it does so not without internal tension.

- Margin Level was 125.35%.

  This is a safe level, but without a significant margin. It shows that the margin reserve was sufficient. However, the system did not operate under sterile conditions. The trading logic remained within the working range, without critical pressure on the account.

- Z-Score was -0.70 at a significance level of 51.61%.

  This indicates no statistically significant dependence between consecutive wins and losses. In other words, the result does not rest on an anomalous streak: wins and losses alternate roughly as they would if trades were independent of one another.

- LR Correlation is 0.90, while LR Standard Error is 64.55.

  The correlation indicates a fairly smooth linear trend of the equity curve. The standard error shows that the motion was not perfectly smooth. There is growth, but it was formed through normal market fluctuations.

- In total, 52 positions (Total Trades) were opened and 95 deals (Total Deals) were registered.

  This is already a sufficient volume to see the nature of the strategy. The test is not limited to a few random entries, but provides a meaningful picture.

- Short Trades amounted to 19 trades, 68.42% of them profitable, while Long Trades amounted to 33 trades, 39.39% of them profitable.

  There is an interesting discrepancy in the direction of the trades, and it is a very important signal. The strategy handles short trades noticeably better than long ones. This means that it may have a pronounced bias towards downward movements.

- Profit Trades and Loss Trades turned out to be the same: 26 trades each, i.e. 50.00% each.

  At first glance this is parity, but it does not prevent the system from remaining profitable. The strategy makes money through the quality of trades.

- The largest winning trade (Largest profit trade) was USD 171.36, while the largest losing one (Largest loss trade) was -USD 31.46.

- Average profit trade is USD 23.57, while Average loss trade is USD -9.20.

  These are perhaps some of the most important metrics in the report. The average winning trade is more than twice as big as the average losing one. The system is able to maintain an edge even with a less than ideal share of successful entries.

- Maximum consecutive wins amounted to 5 trades totaling USD 139.90, while Maximum consecutive losses amounted to 6 trades totaling -USD 61.24.

  This shows that the strategy is capable of catching good streaks, but is not immune to a losing streak.

Each metric represents a different aspect of the strategy mechanics. This is the main advantage of the platform: it allows us to see not only the result, but also its structure.
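Several of these figures are tied together by simple arithmetic, so they can be cross-checked from the raw totals in the report. The sketch below does this in plain Python. It assumes that Recovery Factor relates net profit to the maximal equity drawdown, which is consistent with the reported 1.58. The variable names are introduced here for illustration.

```python
# Raw totals taken from the tester report quoted above.
gross_profit = 612.94
gross_loss = -239.14
total_trades = 52
winning_trades = 26
losing_trades = 26
max_equity_drawdown = 235.84

net_profit = gross_profit + gross_loss
profit_factor = gross_profit / abs(gross_loss)
expected_payoff = net_profit / total_trades
recovery_factor = net_profit / max_equity_drawdown  # assumption: equity drawdown is used
avg_win = gross_profit / winning_trades
avg_loss = gross_loss / losing_trades

print(f"net profit       {net_profit:.2f}")       # 373.80
print(f"profit factor    {profit_factor:.2f}")    # 2.56
print(f"expected payoff  {expected_payoff:.2f}")  # 7.19
print(f"recovery factor  {recovery_factor:.2f}")  # 1.58
print(f"average win      {avg_win:.2f}")          # 23.57
print(f"average loss     {avg_loss:.2f}")         # -9.20
```

The check also explains why a 50% win rate is enough here: with equal numbers of winning and losing trades, the ratio of average win to average loss is the same number as the Profit Factor, about 2.56.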

Conclusion

Machine learning in trading often seems like something heavy and overloaded. But practice shows a different picture. MetaTrader 5 contains most of the tools needed to build a full-fledged ML pipeline. This was precisely the main purpose of the article. Instead of demonstrating a magic model and making yet another attempt to predict the market, it analyzes the path itself: from ordinary market data to a working ONNX model inside the terminal. It is especially important that the built-in MQL5 matrices and vectors play a key role here. They transform data preparation from a set of disparate operations into a clear and manageable computational structure.

Normalization, statistical computing, working with feature space, PCA transformation: all this is reproduced directly inside the terminal in almost the same form in which it is described mathematically. The code is no longer overloaded with technical details and begins to reflect the logic of the calculations themselves. This noticeably lowers the barrier to entry into ML development for a trader.

Support for ONNX is also important. MetaTrader 5 allows the model to be used as a ready-made computational block. Python remains a tool for preparing the model, and the terminal becomes an environment for its stable execution and control. This division makes the architecture much cleaner and more practical. The model is trained once, and further work is transferred to MQL5. This provides transparency, reproducibility and complete control over the input data.

The MetaTrader 5 strategy tester is of particular value. It allows us to evaluate not only the final profit, but also the internal behavior of the entire system. Drawdown, equity stability, trade structure, quality of recovery from losses, stability of trading rhythm: all this becomes measurable through a rich set of built-in metrics. Behind each of these metrics lies a separate facet of the strategy's behavior. It is this approach that transforms testing from a formal check into a full-fledged engineering analysis.

As a result, MetaTrader 5 can be used for construction and reproduction of ML pipelines without transferring logic to external infrastructure. The main conclusion: ML in MetaTrader 5 is implemented through linear mathematics, matrices and transparent data preparation without the feeling that ML exists somewhere separate from the terminal.

Programs used in the article

| # | Name | Type | Description |
|---|---|---|---|
| 1 | create_pca_lstm.py | Script | Script for building and training the model |
| 2 | MLpipeline.mq5 | Expert Advisor | EA for testing the ONNX model |

Translated from Russian by MetaQuotes Ltd.

Original article: https://www.mql5.com/ru/articles/22474

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.


IMHO, normalising and calculating PCA on the whole dataset and only then splitting it into training and validation samples means looking into the future. When working online you don't have the ability to change the normalisation or adapt the PCA to the latest data. An honest experiment would be to initially split the data into two sets and then normalise+PCA only on the in-sample part, and then apply the metaparameters you found to transform the input data on the out-of-sample.

As a result, even a regular arrow indicator shows arrows "on the very turns".

Dangerous thing, you can't understand the catch at once.