GoertzelBrain: Adaptive Spectral Cycle Detection with Neural Network Ensemble in MQL5

Introduction

Cycle analysis has a long history in financial markets. Traders have always sought to identify repeating patterns — periodic structures in price that, if reliably detected, can provide an edge in timing entries and exits. The challenge is that financial cycles are non-stationary: they appear, shift, strengthen, weaken, and vanish in ways that defeat static measurement tools.

The Goertzel algorithm, introduced by Gerald Goertzel in 1958, is an efficient method for computing individual frequency components of the Discrete Fourier Transform. Its application to financial markets was explored in an earlier MQL5 article by F. Dube, "Cycle analysis using the Goertzel algorithm", which presented the CGoertzel and CGoertzelCycle classes. That work showed that, when only a limited band of cycle periods is of interest, the algorithm can identify dominant cycles in price data at lower computational cost than a full FFT, and it reported better noise handling than Ehlers' MESA technique.
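For reference, the recurrence behind the algorithm can be written as follows. This is the textbook form for a window of N prices and a candidate cycle period of P bars. The symbols are illustrative and are not taken from the CGoertzel code, which may add preprocessing such as detrending.

```latex
% Textbook Goertzel recurrence for one candidate period P (in bars)
\omega = \frac{2\pi}{P}, \qquad
s_n = x_n + 2\cos(\omega)\, s_{n-1} - s_{n-2}, \qquad s_{-1} = s_{-2} = 0

% Squared magnitude of the DFT component after the last sample x_{N-1}
|X(\omega)|^2 = s_{N-1}^2 + s_{N-2}^2 - 2\cos(\omega)\, s_{N-1}\, s_{N-2}
```

Each candidate period needs one multiplication and two additions per sample, which is why scanning a limited band of periods can be cheaper than computing every bin of a full transform.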

However, knowing which cycle is dominant at any given moment is only half the problem. The real question is: what does the cycle tell us about what happens next? A 40-bar cycle at peak amplitude might mean a reversal is imminent — or it might mean the cycle is about to break down entirely. Context matters, and context is exactly what simple spectral analysis cannot provide alone.

This article presents GoertzelBrain — an indicator that combines Goertzel spectral analysis with an ensemble of self-training neural networks to produce an adaptive, context-aware cycle signal. Rather than simply reporting which cycle is present, GoertzelBrain learns to interpret the spectral features in the context of recent price behavior and produces a directional confirmation signal that adapts as market conditions change.

We will cover the mathematical foundation, the complete MQL5 implementation, the architecture of the neural network ensemble, and practical applications for using the indicator as a directional filter.

The Problem with Static Cycle Detection

Traditional cycle detection methods share a fundamental limitation: they tell you what is happening in the frequency domain but not what it means for the next bar. Consider the output of a standard Goertzel spectrum analyzer. At any given bar, it might report that a 34-bar cycle is dominant with strong amplitude. But this information alone cannot answer the trader's question: should I be long or short?

The difficulty arises from several factors:

- Phase ambiguity. The Goertzel algorithm computes amplitude and phase for each frequency bin, but the phase value is notoriously unstable at the boundary of the analysis window. Small changes in price can produce large phase shifts, making it unreliable as a direct trading signal.

- Cycle regime transitions. Financial cycles do not switch cleanly from one period to another. There are transition zones where multiple cycles overlap, compete, and interfere. A static spectral snapshot cannot distinguish between a stable dominant cycle and one that is in the process of breaking down.

- Non-stationarity. The statistical properties of price data change continuously. A cycle that was highly significant during a trending regime may become meaningless during a range-bound period. Any useful cycle indicator must somehow account for this.

GoertzelBrain addresses these problems by extracting a multi-dimensional feature vector from the Goertzel output and feeding it into an ensemble of neural networks that learn to interpret the spectral context over time.

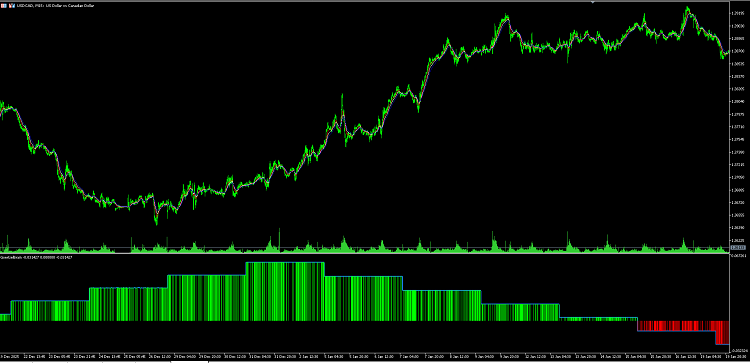

Figure 1. GoertzelBrain on USDCAD M15: green histogram confirms long bias during the December–January uptrend; red bars appear as the cycle regime shifts bearish

Architecture Overview

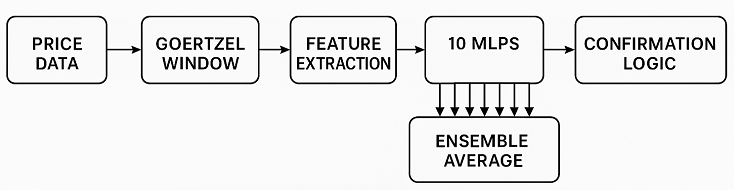

The indicator consists of three layers:

- Layer 1 — Spectral Feature Extraction. For each bar, a rolling window of 3 × MaxPeriod bars is extracted and passed through the Goertzel DFT. The dominant cycle period, its amplitude, spectral confidence, and their rates of change are computed. Combined with a simple price slope and volatility measure, this produces a 7-dimensional feature vector.

- Layer 2 — Neural Network Ensemble. Ten small multi-layer perceptrons (7 inputs, 12 hidden neurons, 1 output) process the feature vector independently. Each MLP has randomly initialized weights and is retrained online every RetrainEvery bars using single-sample stochastic gradient descent. The diversity of random initialization means each MLP develops a slightly different interpretation of the spectral features.

- Layer 3 — Ensemble Output and Confirmation Logic. The outputs of all ten MLPs are averaged to produce a single ensemble value. A confirmation signal is then derived: when the ensemble is above zero and rising, long direction is confirmed. When below zero and falling, short direction is confirmed.

Figure 2. Architecture of GoertzelBrain: from price data to confirmation signal

Implementation

File Structure

The indicator depends on two include files from the original Goertzel article:

- Goertzel.mqh — The CGoertzel class implementing the core Goertzel DFT algorithm

- GoertzelCycle.mqh — The CGoertzelCycle class providing cycle peak detection and spectrum analysis

The indicator itself is a single file: GoertzelBrain.mq5.

Indicator Properties and Inputs

The indicator draws in a separate window with three visible plots and one hidden calculation buffer:

    #property strict
    #property indicator_separate_window
    #property indicator_buffers 4
    #property indicator_plots   3

    #property indicator_label1  "Ensemble"
    #property indicator_type1   DRAW_LINE
    #property indicator_color1  clrDodgerBlue
    #property indicator_style1  STYLE_SOLID
    #property indicator_width1  2

    #property indicator_label2  "LongConfirm"
    #property indicator_type2   DRAW_HISTOGRAM
    #property indicator_color2  clrLime
    #property indicator_style2  STYLE_SOLID
    #property indicator_width2  3

    #property indicator_label3  "ShortConfirm"
    #property indicator_type3   DRAW_HISTOGRAM
    #property indicator_color3  clrRed
    #property indicator_style3  STYLE_SOLID
    #property indicator_width3  3

The first plot is the blue ensemble line — the raw averaged output of all MLPs. The second and third plots are green and red histogram bars that appear only when the confirmation conditions are met. This visual design allows the trader to immediately see both the underlying spectral signal and the specific bars where directional confirmation is active.

The input parameters control the spectral analysis range and the learning behavior of the neural network ensemble:

    input uint   MinPeriod    = 10;      // Minimum cycle period to analyze
    input uint   MaxPeriod    = 80;      // Maximum cycle period to analyze
    input uint   RetrainEvery = 200;     // Bars between MLP retraining steps
    input double LearnRate    = 0.001;   // SGD learning rate
    input int    VolWindow    = 20;      // Lookback for volatility feature

MinPeriod and MaxPeriod define the band of cycle periods examined by the Goertzel algorithm. These should be set based on the timeframe and instrument. For example, on M5 charts, a range of 10–80 bars covers cycles from 50 minutes to roughly 6.7 hours (80 bars × 5 minutes = 400 minutes). RetrainEvery controls how frequently the MLPs update their weights. Lower values produce faster adaptation but risk overfitting to noise. LearnRate controls the step size of gradient descent: too high causes instability, too low makes adaptation sluggish.
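The bar-to-time conversion above can be checked on any chart with a small script. This is only a sketch: the script is not part of the attached files, and its inputs merely mirror the indicator's MinPeriod and MaxPeriod.

```mql5
// Hypothetical helper script, not part of GoertzelBrain.mq5
#property script_show_inputs

input uint MinPeriod = 10;   // mirrors the indicator input
input uint MaxPeriod = 80;   // mirrors the indicator input

void OnStart()
{
   // Seconds per bar on the chart the script is attached to
   int secPerBar = PeriodSeconds(_Period);

   // Duration of the shortest and longest cycle in the band, in minutes
   double shortestMin = (double)MinPeriod * secPerBar / 60.0;
   double longestMin  = (double)MaxPeriod * secPerBar / 60.0;

   // The indicator analyzes a rolling window of 3 x MaxPeriod bars
   int windowBars = (int)(MaxPeriod * 3);

   PrintFormat("Cycle band: %.1f to %.1f minutes, analysis window: %d bars",
               shortestMin, longestMin, windowBars);
}
```

Running it on the intended timeframe before choosing the band makes it easier to see whether the longest cycle, and the three-cycle window behind it, spans a sensible amount of market time.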

Four buffers are declared:

    double EnsembleBuffer[];       // Plot 0: raw ensemble output
    double LongConfirmBuffer[];    // Plot 1: green histogram when long confirmed
    double ShortConfirmBuffer[];   // Plot 2: red histogram when short confirmed
    double ConfirmBuffer[];        // Buffer 3: hidden, +1/-1/0 for EA access via iCustom

The fourth buffer (ConfirmBuffer) is not plotted, but an Expert Advisor can read it by calling CopyBuffer() with buffer index 3 on the handle returned by iCustom(). This makes it straightforward to incorporate the confirmation signal into an Expert Advisor.
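A minimal sketch of how the four arrays might be bound to the indicator is given here. The helper name BindBuffers() is hypothetical, and using 0.0 as the empty value for the two histogram plots is an assumption; the attached source may organize this differently.

```mql5
// The four arrays as declared in the article
double EnsembleBuffer[];
double LongConfirmBuffer[];
double ShortConfirmBuffer[];
double ConfirmBuffer[];

// Hypothetical helper, intended to be called once from OnInit()
bool BindBuffers()
{
   // Buffers 0-2 back the three visible plots
   if(!SetIndexBuffer(0, EnsembleBuffer,     INDICATOR_DATA)) return false;
   if(!SetIndexBuffer(1, LongConfirmBuffer,  INDICATOR_DATA)) return false;
   if(!SetIndexBuffer(2, ShortConfirmBuffer, INDICATOR_DATA)) return false;

   // Buffer 3 is not drawn; it only carries the +1/-1/0 state
   if(!SetIndexBuffer(3, ConfirmBuffer, INDICATOR_CALCULATIONS)) return false;

   // Assumption: bars holding 0.0 are treated as "no confirmation" and not drawn
   PlotIndexSetDouble(1, PLOT_EMPTY_VALUE, 0.0);
   PlotIndexSetDouble(2, PLOT_EMPTY_VALUE, 0.0);
   return true;
}
```

Marking the fourth buffer as INDICATOR_CALCULATIONS keeps it out of the chart and the Data Window while leaving its index stable for readers of the indicator handle.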

The TinyMLP Class

The neural network component is implemented as a self-contained class with no external dependencies. Each MLP has a simple two-layer architecture: 7 inputs → 12 hidden neurons (tanh activation) → 1 linear output.

    class TinyMLP
    {
    public:
       int    input_dim;
       int    hidden_dim;
       double W1[H_DIM][IN_DIM];   // input-to-hidden weights
       double b1[H_DIM];           // hidden biases
       double W2[H_DIM];           // hidden-to-output weights
       double b2;                  // output bias

       // ... (methods follow)

Weights are initialized to small random values after MathSrand() is called in OnInit(). The Init() method is deliberately separate from the constructor: MQL5 constructs global objects before OnInit() runs, so MathSrand() has not yet been called when the constructors execute. If weights were randomized in the constructor, they would be drawn from the generator's unseeded default state, and the seed chosen in OnInit() would have no effect on the initial weights.

    void Init()
    {
       for(int j = 0; j < hidden_dim; j++)
       {
          b1[j] = (MathRand() / 32767.0 - 0.5) * 0.2;
          for(int i = 0; i < input_dim; i++)
             W1[j][i] = (MathRand() / 32767.0 - 0.5) * 0.2;
       }
       b2 = (MathRand() / 32767.0 - 0.5) * 0.2;
       for(int j = 0; j < hidden_dim; j++)
          W2[j] = (MathRand() / 32767.0 - 0.5) * 0.2;
    }

The weight initialization scale of ±0.1 is chosen to keep initial outputs near zero while providing enough variance for the MLPs to diverge during training. This divergence is important: an ensemble of identical networks provides no benefit.
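The ±0.1 range follows directly from the expression used in Init(): MathRand() returns an integer from 0 to 32767, so the scaled value lies between -0.1 and +0.1. The script here is a sketch for checking that range empirically. RandWeight() is a hypothetical helper, and seeding with GetTickCount() is an assumption about how OnInit() might seed the generator.

```mql5
// Hypothetical helper mirroring the expression used in Init()
double RandWeight()
{
   return (MathRand() / 32767.0 - 0.5) * 0.2;
}

void OnStart()
{
   // Seed once, before any weights are drawn (assumed seeding strategy)
   MathSrand((int)GetTickCount());

   double lowest  =  DBL_MAX;
   double highest = -DBL_MAX;
   for(int n = 0; n < 100000; n++)
   {
      double w = RandWeight();
      lowest  = MathMin(lowest, w);
      highest = MathMax(highest, w);
   }

   // Both extremes stay within [-0.1, +0.1]
   PrintFormat("min=%.4f max=%.4f", lowest, highest);
}
```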

The tanh activation function includes overflow clamping to prevent numerical issues when large feature values propagate through the network:

    static double Tanh(double x)
    {
       if(x > 20.0)  return 1.0;
       if(x < -20.0) return -1.0;
       return 2.0 / (1.0 + MathExp(-2.0 * x)) - 1.0;
    }

The forward pass computes the hidden layer activations and produces a single scalar output:

    double Forward(double &x[])
    {
       double hidden[];
       ArrayResize(hidden, hidden_dim);

       // Hidden layer: tanh of the weighted input sum plus bias
       for(int j = 0; j < hidden_dim; j++)
       {
          double s = b1[j];
          for(int i = 0; i < input_dim; i++)
             s += W1[j][i] * x[i];
          hidden[j] = Tanh(s);
       }

       // Linear output layer
       double out = b2;
       for(int j = 0; j < hidden_dim; j++)
          out += W2[j] * hidden[j];
       return out;
    }

Training uses standard backpropagation with mean squared error loss. Each training step processes a single sample — there is no batch accumulation. This is intentional: the indicator processes bars sequentially, and single-sample SGD provides the fastest adaptation to changing conditions:

    void TrainStep(double &x[], double target, double lr)
    {
       // ... forward pass identical to Forward() ...

       double d_out = out - target;   // MSE gradient at output

       for(int j = 0; j < hidden_dim; j++)
       {
          // Hidden-layer gradient, computed with W2[j] before it is updated
          double d_h = d_out * W2[j] * (1.0 - hidden[j] * hidden[j]);   // tanh derivative

          // Output layer weight update
          W2[j] -= lr * d_out * hidden[j];

          // Hidden layer update
          b1[j] -= lr * d_h;
          for(int i = 0; i < input_dim; i++)
             W1[j][i] -= lr * d_h * x[i];
       }
       b2 -= lr * d_out;
    }

The tanh derivative (1 - tanh²(x)) is computed directly from the cached hidden activation values, avoiding redundant computation.
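The update rules can be derived in a few lines. The notation is introduced here for illustration: x is the feature vector, t the target, η the learning rate, and the loss is taken as half the squared error so that the output gradient is simply the error.

```latex
% Forward pass
a_j = b^{(1)}_j + \sum_i W^{(1)}_{ji}\, x_i, \qquad
h_j = \tanh(a_j), \qquad
y = b^{(2)} + \sum_j W^{(2)}_j\, h_j

% Loss and output gradient
L = \tfrac{1}{2}(y - t)^2, \qquad
\delta = \frac{\partial L}{\partial y} = y - t

% Output layer
\frac{\partial L}{\partial W^{(2)}_j} = \delta\, h_j, \qquad
\frac{\partial L}{\partial b^{(2)}} = \delta

% Hidden layer, using \tanh'(a_j) = 1 - h_j^2
\delta_j = \delta\, W^{(2)}_j\, (1 - h_j^2), \qquad
\frac{\partial L}{\partial W^{(1)}_{ji}} = \delta_j\, x_i, \qquad
\frac{\partial L}{\partial b^{(1)}_j} = \delta_j

% Single-sample SGD step for every parameter \theta
\theta \leftarrow \theta - \eta\, \frac{\partial L}{\partial \theta}
```

All gradients are evaluated at the parameter values used in the forward pass, so the hidden-layer term δ_j should use the output weight as it was before the step is applied.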

Heap Allocation of CGoertzelCycle

A critical implementation detail concerns the CGoertzelCycle object. The class internally allocates a CGoertzel pointer using new in its constructor. If the object is declared globally and then re-assigned in OnInit(), the temporary object's destructor deletes the internal pointer, leaving the global object with a dangling pointer. This causes silent failures when GetSpectrum() or GetDominantCycles() is called.

The solution is to allocate the object on the heap:

    CGoertzelCycle *goertzel = NULL;

    int OnInit()
    {
       if(goertzel != NULL)
          delete goertzel;
       goertzel = new CGoertzelCycle(true, false, false, MinPeriod, MaxPeriod);
       // ...
    }

    void OnDeinit(const int reason)
    {
       if(goertzel != NULL)
       {
          delete goertzel;
          goertzel = NULL;
       }
    }

This pattern ensures proper lifetime management and prevents the dangling pointer issue.
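The same lifetime pattern can be made slightly more defensive with CheckPointer(). The fragment here is a sketch that uses stand-in classes (CInner and COwner are hypothetical and only play the role of CGoertzel and CGoertzelCycle), so it does not depend on the original include files. It is meant to be merged into a complete program rather than compiled on its own.

```mql5
// Stand-in for the internally allocated object (hypothetical)
class CInner
{
public:
   int value;
   CInner(void) { value = 42; }
};

// Stand-in for a class that owns a heap object (hypothetical)
class COwner
{
private:
   CInner *m_inner;
public:
   COwner(void)  { m_inner = new CInner; }
   ~COwner(void) { if(CheckPointer(m_inner) == POINTER_DYNAMIC) delete m_inner; }
   bool IsReady(void) { return CheckPointer(m_inner) != POINTER_INVALID; }
};

COwner *g_owner = NULL;

int OnInit()
{
   // Release any instance left over from a previous initialization
   if(CheckPointer(g_owner) == POINTER_DYNAMIC)
      delete g_owner;

   g_owner = new COwner();

   // Fail initialization instead of running with an unusable object
   if(CheckPointer(g_owner) == POINTER_INVALID || !g_owner.IsReady())
      return INIT_FAILED;
   return INIT_SUCCEEDED;
}

void OnDeinit(const int reason)
{
   if(CheckPointer(g_owner) == POINTER_DYNAMIC)
   {
      delete g_owner;
      g_owner = NULL;
   }
}
```

Returning INIT_FAILED turns what would otherwise be a silent failure into a visible one in the terminal's log.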

Feature Extraction

The BuildFeatures() function constructs the 7-dimensional input vector for the neural networks. For each bar, it extracts a sub-window of 3 × MaxPeriod bars and passes it through the Goertzel stack:

    bool BuildFeatures(const double &price[],
                       int barIdx,
                       double &X[],
                       double in_prev_amp,
                       double in_prev_period)
    {
       int winLen   = (int)(MaxPeriod * 3);
       int winStart = barIdx - winLen + 1;
       if(winStart < 0)
       {
          ArrayInitialize(X, 0.0);
          return false;
       }

       // Extract chronological sub-window for this specific bar
       double window[];
       ArrayResize(window, winLen);
       ArrayCopy(window, price, 0, winStart, winLen);

       // Goertzel spectrum
       double amplitude[];
       goertzel.GetSpectrum(window, amplitude);

       // Dominant cycle detection
       double cycles[];
       uint ncycles = goertzel.GetDominantCycles(true, window, cycles);
       double dominant_period = (ncycles > 0 ? cycles[0] : 0.0);

       // ...

The per-bar sub-window extraction is essential. Without it, the Goertzel algorithm always analyzes the same global window regardless of which bar is being processed, producing identical features for every bar.

The seven features are:

| Index | Feature | Description |

|---|---|---|

| 0 | Dominant Period | The cycle length with the highest spectral peak |

| 1 | Dominant Amplitude | The strength of the dominant cycle |

| 2 | Phase Proxy | Spectral confidence multiplied by the sign of the price slope |

| 3 | Normalized Confidence | Dominant amplitude as a fraction of total band energy |

| 4 | Amplitude Slope | Bar-to-bar change in dominant cycle strength |

| 5 | Period Slope | Bar-to-bar change in dominant cycle length |

| 6 | Volatility | Standard deviation of price over VolWindow bars |

Features 0–3 describe the current spectral state. Features 4–5 capture the dynamics of the spectral state — whether the dominant cycle is strengthening or weakening, and whether it is shifting to a longer or shorter period. Feature 6 provides price context that the spectral features alone cannot capture.

The phase proxy (feature 2) deserves special mention. The CGoertzelCycle class computes phase values internally but stores them in private members that are not directly accessible. Rather than modifying the library, we construct a proxy by multiplying the normalized confidence by the sign of the recent price direction. This captures the essential information: whether the current cycle regime is aligned with bullish or bearish price movement.
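To make three of the features concrete, the helpers here sketch one plausible way to compute them. Their names, the lookback argument, and the exact formulas are assumptions for illustration: "total band energy" is taken as the plain sum of amplitudes, the slope as a simple price difference, and the volatility as a population standard deviation. The attached source may differ on each point. Arrays are assumed to be indexed oldest-first.

```mql5
// Feature 3 (assumed form): largest amplitude divided by the sum of all amplitudes
double NormalizedConfidence(const double &amplitude[])
{
   int n = ArraySize(amplitude);
   if(n == 0)
      return 0.0;

   double total = 0.0;
   for(int k = 0; k < n; k++)
      total += amplitude[k];
   if(total <= 0.0)
      return 0.0;

   return amplitude[ArrayMaximum(amplitude)] / total;
}

// Feature 2 (assumed form): confidence signed by the price change over `lookback` bars
double PhaseProxy(const double confidence, const double &price[],
                  const int barIdx, const int lookback)
{
   if(lookback <= 0 || barIdx - lookback < 0)
      return 0.0;

   double slope = price[barIdx] - price[barIdx - lookback];
   double sign  = (slope > 0.0) ? 1.0 : (slope < 0.0 ? -1.0 : 0.0);
   return confidence * sign;
}

// Feature 6 (assumed form): population standard deviation over the last `window` bars
double Volatility(const double &price[], const int barIdx, const int window)
{
   if(window <= 1 || barIdx - window + 1 < 0)
      return 0.0;

   double mean = 0.0;
   for(int k = barIdx - window + 1; k <= barIdx; k++)
      mean += price[k];
   mean /= window;

   double var = 0.0;
   for(int k = barIdx - window + 1; k <= barIdx; k++)
   {
      double d = price[k] - mean;
      var += d * d;
   }
   return MathSqrt(var / window);
}
```

Because the confidence is a ratio between 0 and 1, the phase proxy stays within ±1 regardless of the instrument's price scale, whereas the volatility feature is expressed in price units.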

The OnCalculate Loop

The main calculation loop processes bars in chronological order (index 0 = oldest, consistent with the default non-series array direction in the single-price OnCalculate signature):

    int OnCalculate(const int rates_total,
                    const int prev_calculated,
                    const int begin,
                    const double &price[])
    {
       int minBars = (int)(MaxPeriod * 3);
       if(rates_total < minBars)
          return 0;

       int start = (prev_calculated == 0) ? minBars : prev_calculated - 1;
       if(start < minBars)
          start = minBars;

       for(int i = start; i < rates_total; i++)
       {
          double X[IN_DIM];
          // prev_amp / prev_period: the previous bar's dominant amplitude and period,
          // required by the BuildFeatures() signature (variable names assumed here)
          if(!BuildFeatures(price, i, X, prev_amp, prev_period))
          { /* set all buffers to 0.0, continue */ }

          // Target: next-bar direction
          double target = 0.0;
          if(i + 1 < rates_total)
          {
             double diff = price[i + 1] - price[i];
             target = (diff >= 0.0 ? 1.0 : -1.0);
          }

          // Retrain periodically
          if(bars_since_train >= (int)RetrainEvery)
          {
             for(int m = 0; m < NUM_MLPS; m++)
                mlp[m].TrainStep(X, target, LearnRate);
             bars_since_train = 0;
          }

          // Ensemble inference
          double sum = 0.0;
          for(int m = 0; m < NUM_MLPS; m++)
             sum += mlp[m].Forward(X);
          double ensemble = sum / (double)NUM_MLPS;
          EnsembleBuffer[i] = ensemble;

Note the deliberate separation between training frequency and inference frequency. Training occurs only every RetrainEvery bars, but inference runs on every bar. This prevents the weights from chasing every tick while still producing continuous output.

Confirmation Logic

The confirmation signal implements the two-condition filter:

    double prevEnsemble = (i > minBars) ? EnsembleBuffer[i - 1] : 0.0;
    double delta = ensemble - prevEnsemble;

    if(ensemble > 0.0 && delta > 0.0)
    {
       LongConfirmBuffer[i] = ensemble;   // green histogram
       ConfirmBuffer[i]     = 1.0;        // +1 for EA
    }
    else if(ensemble < 0.0 && delta < 0.0)
    {
       ShortConfirmBuffer[i] = ensemble;  // red histogram
       ConfirmBuffer[i]      = -1.0;      // -1 for EA
    }

Long confirmation requires the ensemble to be above zero and increasing. This means the spectral regime favors the upside and the conviction is building. Short confirmation is the mirror: below zero and decreasing. All other states produce zero — no confirmation in either direction.

This design ensures that the signal is not just about position (above/below zero) but also about momentum (direction of change). A declining positive ensemble means the bullish cycle is fading — not a good time to enter longs even though the raw signal is still positive.
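The two-condition rule can be isolated as a pure function, which makes the four possible cases easy to check. ConfirmState() is a hypothetical helper written for this illustration; it restates the logic above and is not taken from the attached source.

```mql5
// Hypothetical helper: +1 = long confirmed, -1 = short confirmed, 0 = no confirmation
int ConfirmState(const double ensemble, const double prevEnsemble)
{
   double delta = ensemble - prevEnsemble;
   if(ensemble > 0.0 && delta > 0.0)
      return 1;
   if(ensemble < 0.0 && delta < 0.0)
      return -1;
   return 0;
}

void OnStart()
{
   Print(ConfirmState( 0.10,  0.05));   // positive and rising  ->  1
   Print(ConfirmState( 0.05,  0.10));   // positive but fading  ->  0
   Print(ConfirmState(-0.10, -0.05));   // negative and falling -> -1
   Print(ConfirmState(-0.05, -0.10));   // negative but rising  ->  0
}
```

The second and fourth cases are the ones a sign-only filter would get wrong: the ensemble is on the right side of zero, but its momentum has already turned.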

Practical Usage

As a Directional Filter

GoertzelBrain is designed to be used as a confirmation filter, not a standalone entry signal. The intended workflow is:

- Your primary system generates a trade signal (e.g., based on price action, orderflow, or another indicator)

- Before executing, check GoertzelBrain's confirmation buffer

- Only take the trade if the confirmation aligns with your signal direction

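To read the confirmation signal from an EA, create the indicator handle once and read buffer 3 for the last fully formed bar (shift 1), since the value on the forming bar can still change:

    // In OnInit(): create the handle once
    int hGBrain = iCustom(_Symbol, _Period, "GoertzelBrain",
                          MinPeriod, MaxPeriod, RetrainEvery, LearnRate, VolWindow);
    if(hGBrain == INVALID_HANDLE)
       return INIT_FAILED;

    // In OnTick(): buffer 3 = confirmation, shift 1 = last closed bar
    double confirm[];
    if(CopyBuffer(hGBrain, 3, 1, 1, confirm) != 1)
       return;   // indicator data not ready yet

    if(confirm[0] > 0.5)
    {
       // long confirmed: proceed with buy logic
    }
    else if(confirm[0] < -0.5)
    {
       // short confirmed: proceed with sell logic
    }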

Reading the Ensemble Line

The blue ensemble line provides additional context beyond the binary confirmation signal:

- Magnitude indicates conviction. A value of ±0.03 is weak; ±0.15 is strong.

- Zero crossings mark regime transitions. When the ensemble crosses zero, the dominant cycle's directional influence has reversed.

- Flatline near zero suggests no dominant cycle is present or the MLPs cannot extract a reliable signal from the current spectral features. This is valuable information — it tells you the market lacks cyclical structure at the moment.

Parameter Optimization

The five input parameters can be optimized in the Strategy Tester:

- MinPeriod / MaxPeriod — Defines which cycle frequencies are visible. Narrower bands focus on specific cycle regimes; wider bands capture more but dilute the signal.

- RetrainEvery — Controls adaptation speed. Values of 50–200 work well for most instruments on M5–H1.

- LearnRate — Values between 0.0001 and 0.005 are the practical range. Higher values risk weight explosion; lower values make adaptation too slow.

- VolWindow — Should roughly match the typical swing duration on your timeframe.

Why This Approach Is Different

Several aspects distinguish GoertzelBrain from existing cycle indicators:

- Adaptive interpretation. Traditional cycle indicators output raw spectral data — period, amplitude, phase — and leave interpretation entirely to the trader or a fixed set of rules. GoertzelBrain uses neural networks that learn to interpret spectral features in context, adapting their interpretation as market behavior changes.

- Self-training. The MLPs retrain online during indicator calculation. There is no offline training phase, no external data pipeline, and no model file to manage. The indicator is self-contained and adapts automatically when applied to any symbol or timeframe.

- Ensemble robustness. A single neural network can converge to a local optimum that produces poor predictions. By using ten independently initialized networks and averaging their outputs, the ensemble smooths out individual errors and produces a more stable signal.

- Confirmation rather than prediction. The indicator does not attempt to predict specific price targets or turning points. Instead, it answers a simpler and more reliable question: does the current spectral regime confirm a directional bias? This makes it a natural complement to any existing trading system rather than a replacement.

- Spectral dynamics as features. Most cycle indicators report a snapshot of the spectrum at each bar. GoertzelBrain includes the rate of change of spectral features (amplitude slope and period slope), capturing whether the cycle regime is strengthening, weakening, or transitioning. This temporal context is invisible to static spectral analysis.

Limitations and Considerations

- Repainting. Like all Goertzel-based indicators, the spectral analysis recalculates when new data arrives. The ensemble output on the current bar may change as new bars form. For backtesting purposes, only use values from fully formed bars.

- Random initialization. Because MLP weights are randomly initialized, the indicator will produce slightly different outputs each time it is applied to a chart. The ensemble mitigates this, but users should be aware that two instances of the indicator on the same chart will not produce identical results.

- Computational cost. The Goertzel DFT runs for every bar in the calculation range, and with ten MLPs performing forward passes, the indicator is more computationally intensive than simple oscillators. On modern hardware this is negligible for live trading, but large backtests on M1 data may be noticeably slower.

- No guaranteed edge. The indicator detects spectral structure and learns to interpret it, but the existence of a dominant cycle does not guarantee that it will continue. All cycle detection methods face this fundamental uncertainty. GoertzelBrain should be treated as one input among many in a trading decision, not as a crystal ball.

Conclusion

GoertzelBrain combines the precision of the Goertzel algorithm with the adaptive capacity of neural network ensembles to produce a cycle-aware directional filter for MetaTrader 5. By extracting multi-dimensional spectral features and learning to interpret them through online training, it bridges the gap between raw frequency analysis and actionable trading signals.

The indicator is designed as a confirmation tool — a go/no-go gate for trades generated by other systems. Its self-training architecture means it requires no external configuration beyond the five input parameters, and it adapts automatically to any instrument and timeframe.

All source code referenced in this article is available in the attached files. The indicator builds upon the CGoertzel and CGoertzelCycle classes from the article "Cycle analysis using the Goertzel algorithm", which must be present in the Include directory for compilation.

Files Attached

| File | Description |

|---|---|

| GoertzelBrain.mq5 | The complete indicator source code |

| Goertzel.mqh | Core Goertzel DFT algorithm class |

| GoertzelCycle.mqh | Cycle analysis and peak detection class |

Warning: All rights to these materials are reserved by MetaQuotes Ltd. Copying or reprinting of these materials in whole or in part is prohibited.

This article was written by a user of the site and reflects their personal views. MetaQuotes Ltd is not responsible for the accuracy of the information presented, nor for any consequences resulting from the use of the solutions, strategies or recommendations described.
