Market Microstructure in MQL5: Robust Foundation (Part 1)

Introduction

You trade NQ around the New York open and therefore rely on minute‑level microstructure to stay ahead of fast price moves. At this frequency, standard calculations break quietly: NaN/Inf and overflows from divisions by near‑zero, log of non‑positive prices, zero or missing closes at the edge of history, and degenerate variance estimates from tiny samples. These are not compiler errors—they are plausible numbers that mislead decision logic and cost trades.

This article addresses that engineering gap. Its goal is practical and specific: provide a defensive MQL5 foundation that guarantees intraday measurements do not emit silent numerical failures. The target audience is MQL5 developers working with CopyClose/SymbolInfoDouble on intraday data (e.g., M1 NQ). Deliverable: a compilable include file that enforces minimum sample sizes, rejects invalid price data, bounds and validates mathematical operations, and supplies stable statistical and spectral primitives so downstream microstructure metrics are meaningful when it matters most.

Why Intraday Data Is Different

Academic research has established that intraday price data has statistical properties that lower-frequency data does not. As Engle (2000) notes, observations at this frequency occur at irregular time intervals and exhibit fat tails, volatility clustering, and long-range dependence. These are not inconvenient edge cases—they are the defining characteristics of the data.

Fat tails mean extreme values appear far more often than a normal distribution predicts. At the NY open on NQ, a single one-minute bar can move further than the previous hour of price action. Any calculation that assumes returns are normally distributed will be wrong in the moments that matter most.

Volatility clustering means large moves follow large moves and small moves follow small moves. This is not random noise—it is structure. But it also means that a volatility estimate built on a quiet period will be dangerously wrong when applied to an active period.

Long-range dependence means the market has memory. A price move an hour ago is still statistically relevant to what happens now. Standard short-window calculations discard this information entirely.

These properties create specific failure modes in naive MQL5 code: for example, the log of a non-positive price, division by a near-zero variance, and array access on sparse history. None of these raise a compiler error. They produce invalid numbers, often massive ones, that propagate unnoticed through all subsequent calculations.
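The silent nature of these failures can be seen with built-in functions alone. The script below is a minimal sketch, not part of the include file; the variable names are illustrative. It calls MathLog and MathSqrt with invalid arguments and then asks MathIsValidNumber whether the results are usable.

```mql5
// Script sketch: built-in math calls that fail without stopping the program.
void OnStart()
  {
   double zero_close = 0.0;    // e.g. an empty bar at the edge of history
   double negative   = -1.0;   // e.g. a variance that went negative through rounding

   double bad_log  = MathLog(zero_close);   // not a finite number
   double bad_sqrt = MathSqrt(negative);    // NaN

   // Neither call raises an error; the only way to notice is to test the result.
   Print("log(0) valid?   ", MathIsValidNumber(bad_log));
   Print("sqrt(-1) valid? ", MathIsValidNumber(bad_sqrt));

   // An invalid value contaminates everything computed from it.
   double derived = 0.5 * bad_sqrt + 1.0;
   Print("derived valid?  ", MathIsValidNumber(derived));
  }
```

All three checks are expected to report false, which is the condition the safe math functions later in this article test for.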

The foundation layer exists to catch every one of these cases before they reach the calculations that matter.

The Architecture of the Toolkit

Before writing any code, it is worth understanding what we are building across the full series.

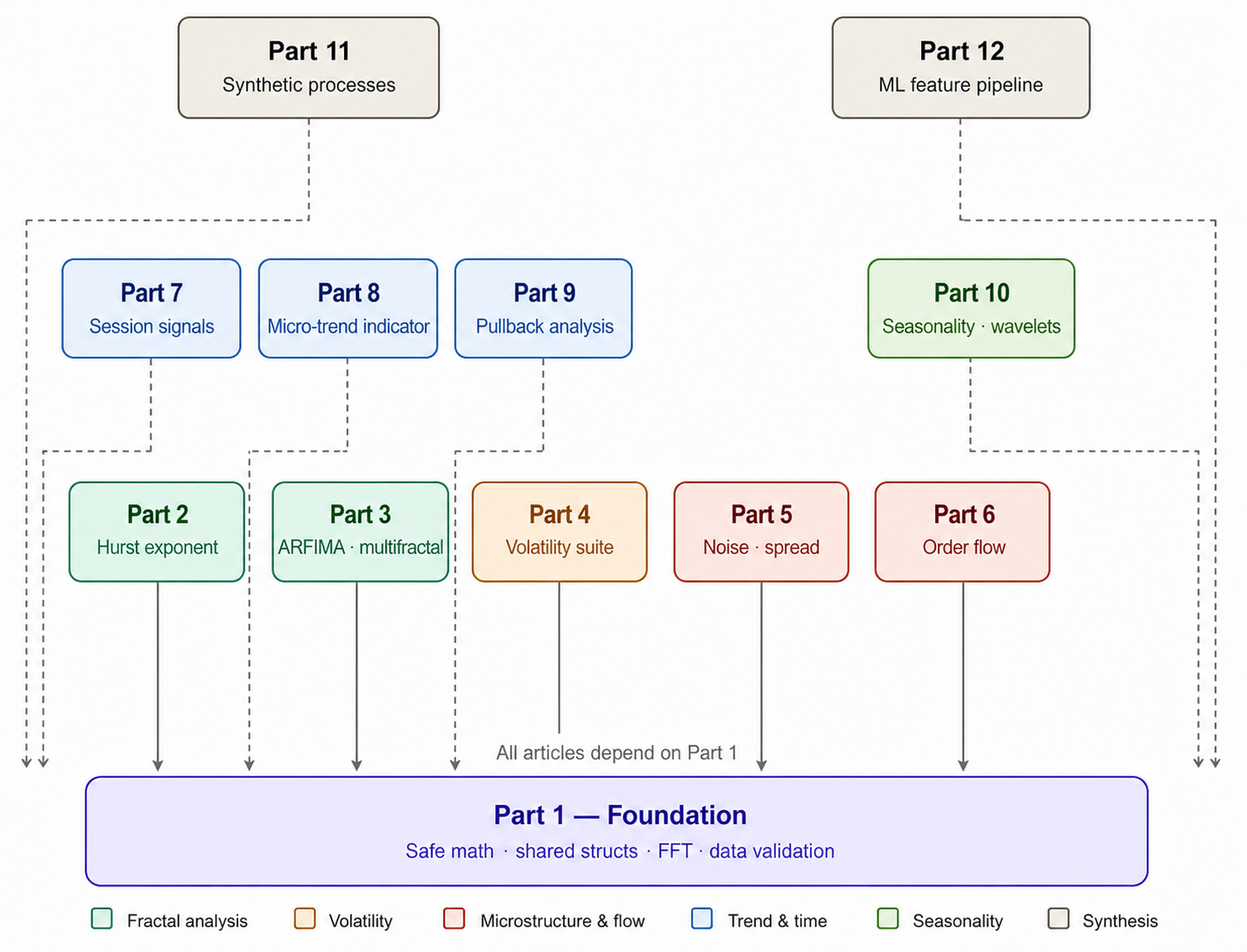

Figure 1: The twelve-part series structure. Each article depends on the shared include file built in Part 1. Articles are grouped by theme: fractal analysis (Parts 2 and 3), volatility (Part 4), microstructure noise and order flow (Parts 5 and 6), time-aware signals and trend indicators (Parts 7, 8, and 9), seasonality (Part 10), and the synthesis articles (Parts 11 and 12).

Every article in this series produces functions that call the foundation layer. SafeLog, SafeDivide, and SafeSqrt appear in almost every subsequent calculation. The shared structs—RobustFractalAnalysis, OrderFlowSignal, and TimeAwareSignal—are the containers that pass results between articles. The FFT lives in the foundation layer because multiple parts reuse it. Part 3 uses it for the multifractal spectrum, Part 10 for Fourier seasonality, and Part 11 for synthetic fractional Gaussian noise.

Building this layer first means every subsequent article can focus entirely on the measurement logic without repeating defensive boilerplate. It also means that when you eventually assemble the full toolkit, every component speaks the same language.

The include file is structured as follows:

```mql5
//+------------------------------------------------------------------+
//|                                   MicroStructure_Foundation.mqh  |
//| Market Microstructure in MQL5: Robust Foundation — Part 1 of 12  |
//|                                                                  |
//| DEPENDS ON  : nothing                                            |
//| REQUIRED BY : Parts 2 through 12                                 |
//+------------------------------------------------------------------+
```

Constants and Configuration

We begin with a set of compile-time constants that define the numerical boundaries the entire toolkit operates within.

```mql5
//+------------------------------------------------------------------+
//| CONSTANTS                                                        |
//+------------------------------------------------------------------+
#define DBL_MIN_POSITIVE          1e-20    // Smallest value treated as non-zero in division and log guards
#define MS_DBL_EPSILON            1e-10    // General floating-point comparison tolerance
#define MATH_PI                   3.14159265358979323846 // Pi to full double precision for FFT and trigonometric use
#define MIN_SAMPLE_SIZE           8        // Minimum bars required before any statistical calculation proceeds
#define MAX_SAMPLE_SIZE           5000     // Maximum bars fetched in a single CopyClose call
#define MAX_AGGREGATION_LEVEL     10       // Maximum scale levels used in aggregated variance estimators
#define NUMERICAL_STABILITY_BOUND 1e100    // Any result exceeding this is treated as a numerical failure
#define GMT_OFFSET_HOURS          2        // Broker GMT offset; adjust if your broker runs on a different zone
```

DBL_MIN_POSITIVE is the smallest value we treat as non-zero in division and logarithm guards. MQL5's built-in DBL_MIN (about 2.2e-308) would be too permissive as a threshold: a denominator that small passes the check and then overflows the quotient. The value 1e-20 is large enough to catch effective zeros while leaving room for the small but valid numbers that appear in normalized microstructure calculations.

NUMERICAL_STABILITY_BOUND defines the largest result we consider valid. Any calculation returning a magnitude larger than 1e100 has gone wrong. This is the upper gate against the overflow-scale results described in the introduction; it does not catch values that are merely implausible, which remain the caller's responsibility.

A MIN_SAMPLE_SIZE of 8 is the absolute minimum number of data points any statistical calculation will accept. Below this, any result is statistically meaningless regardless of how mathematically valid the formula appears.

GMT_OFFSET_HOURS of 2 reflects a broker on GMT+2. All session detection in Part 7 is built on this offset. If your broker runs on a different offset, change this constant once, and the entire time-aware system adjusts automatically.
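As an illustration of how a single offset constant can drive time conversion, here is a minimal sketch. The helper name GmtHourFromServer is hypothetical and is not taken from Part 7; the constant is redefined locally so the script compiles on its own.

```mql5
#define GMT_OFFSET_HOURS 2   // same value as in the include file; redefined so the sketch stands alone

// Hypothetical helper: hour of day in GMT for a given server (broker) timestamp.
int GmtHourFromServer(const datetime server_time)
  {
   datetime gmt = (datetime)(server_time - GMT_OFFSET_HOURS * 3600);
   MqlDateTime parts;
   TimeToStruct(gmt, parts);
   return parts.hour;
  }

void OnStart()
  {
   datetime now = TimeCurrent();   // last known server time
   Print("Server time: ", now, "  GMT hour: ", GmtHourFromServer(now));
  }
```

A fixed offset is an assumption of this sketch: if the broker shifts its server clock for daylight saving time, the constant has to be changed accordingly.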

Data Structures

The toolkit uses shared structs that carry analysis results between functions and between articles. Defining them here means every subsequent article can use them without redefinition.

```mql5
//+------------------------------------------------------------------+
//| Primary result container for fractal and long-memory analysis.   |
//| Confidence fields support weighted blending of estimators.       |
//| computation_status: 0 = success, non-zero = specific failure.    |
//+------------------------------------------------------------------+
struct RobustFractalAnalysis
  {
   double            hurst_exponent;         // Weighted-average Hurst exponent across estimators
   double            hurst_confidence;       // Confidence weight for the Hurst estimate (0–1)
   double            fractal_dimension;      // Higuchi fractal dimension blended with Hurst fallback
   double            dimension_confidence;   // Confidence weight for the fractal dimension estimate
   double            arfima_d;               // GPH fractional differencing parameter d
   double            multifractal_width;     // Spectrum width from MFDFA-style tau(q) regression
   double            volatility_persistence; // Normalised persistence: 2 * |H - 0.5|
   double            microstructure_noise;   // Enhanced noise estimate from range, body, and close-open
   double            seasonality_strength;   // Intraday seasonality ratio (edge vs midday variance)
   int               computation_status;     // 0 = success; non-zero codes indicate failure mode
   string            validation_message;     // Human-readable description of any failure condition
  };
```

The hurst_confidence and dimension_confidence fields are what distinguish this from a naive implementation. Part 2 implements three independent Hurst estimators and blends them by confidence weight. Without these fields, the blending has nowhere to store its intermediate results. The computation_status carries an integer code—zero for success, non-zero for specific failure modes. This allows calling code to detect and handle failure conditions rather than receiving a plausible-looking but incorrect value.
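The status-code pattern can be sketched as follows. This assumes the include file is saved at the relative path given at the end of this article; DemoAnalysis and STATUS_TOO_FEW_BARS are hypothetical names invented for the example, and the specific non-zero codes used by later parts are not defined here.

```mql5
#include "Includes\MicroStructure_Foundation.mqh"

// Hypothetical status code, used only in this sketch.
#define STATUS_TOO_FEW_BARS 1

// Hypothetical producer: either fills the result or records why it could not.
void DemoAnalysis(const int bars_available, RobustFractalAnalysis &res)
  {
   res.computation_status = 0;
   res.validation_message = "";
   if(bars_available < MIN_SAMPLE_SIZE)
     {
      res.computation_status = STATUS_TOO_FEW_BARS;
      res.validation_message = "fewer than MIN_SAMPLE_SIZE bars";
      return;
     }
   res.hurst_exponent   = 0.5;   // placeholder value, not a real estimate
   res.hurst_confidence = 0.0;
  }

void OnStart()
  {
   RobustFractalAnalysis res;
   DemoAnalysis(5, res);
   // The caller checks the status before touching any numeric field.
   if(res.computation_status != 0)
     {
      Print("Analysis skipped: ", res.validation_message);
      return;
     }
   Print("Hurst: ", res.hurst_exponent);
  }
```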

```mql5
//+------------------------------------------------------------------+
//| Composite order flow signal combining directional pressure,      |
//| momentum, exhaustion, and smart-money contribution.              |
//| direction_numeric enables downstream arithmetic without          |
//| string comparisons.                                              |
//+------------------------------------------------------------------+
struct OrderFlowSignal
  {
   double            strength;          // Volume-weighted directional imbalance (-1 to +1)
   double            momentum;          // Short-window minus long-window flow differential
   double            exhaustion;        // Current absolute flow relative to recent peak
   double            smart_money;       // Large-bar directional bias (signed volume ratio)
   double            convergence;       // Mean signal score across sub-indicators
   double            confidence;        // Signal reliability weight (0–1)
   string            direction;         // "BULLISH", "BEARISH", or "NEUTRAL"
   double            direction_numeric; // +1.0, -1.0, or 0.0 for use in calculations
  };
```

Every field in OrderFlowSignal has a specific meaning. Strength is the directional imbalance; momentum is its rate of change; exhaustion captures fading flow; and smart_money reflects the impact of high-volume bars. The direction_numeric field exists specifically for use in mathematical expressions downstream—string comparisons have no place in a calculation pipeline.

```mql5
//+------------------------------------------------------------------+
//| Time-aware composite signal layering session context over flow.  |
//| Embeds OrderFlowSignal and adds regime, liquidity, and event     |
//| adjustments for session-adaptive position sizing.                |
//+------------------------------------------------------------------+
struct TimeAwareSignal
  {
   OrderFlowSignal   flow_signal;              // Underlying order flow analysis
   double            fractal_synergy;          // Hurst-regime aligned with flow direction
   double            time_regime_multiplier;   // Session weight: 1.5 at NY open, 0.5 off-peak
   double            session_liquidity_factor; // Spread-based liquidity scaling (0.3–1.2)
   double            pre_event_boost;          // Additive boost near scheduled events
   double            final_signal;             // Combined directional signal (-2 to +2)
   double            time_adjusted_confidence; // Confidence scaled by regime and liquidity
   string            time_context;             // Human-readable session label
   datetime          signal_time;              // TimeCurrent() at signal generation
   double            time_until_event;         // Minutes until next scheduled event
   double            max_position_size;        // Suggested size fraction (0–1)
   double            stop_loss_multiplier;     // ATR stop multiplier based on confidence
  };
```

TimeAwareSignal is the composite output of Part 7. It embeds an OrderFlowSignal and layers session context on top. The stop_loss_multiplier and max_position_size fields exist because position sizing should respond to signal quality—a high-confidence signal at the NY open warrants a different position size than the same signal during the Asian session.

The PULLBACK_QUALITY enum used in Part 9 is also defined here:

```mql5
//+------------------------------------------------------------------+
//| Pullback quality classification based on Fibonacci retracement   |
//| depth relative to the most recent swing range.                   |
//+------------------------------------------------------------------+
enum PULLBACK_QUALITY
  {
   PBQ_NO_TREND = 0, // No identifiable trend; classification not meaningful
   PBQ_STRONG   = 1, // Retrace below 23.6%: trend intact, shallow pullback
   PBQ_HEALTHY  = 2, // Retrace 23.6%–38.2%: normal corrective structure
   PBQ_WARNING  = 3, // Retrace 38.2%–61.8%: deeper correction, caution advised
   PBQ_DEEP     = 4, // Retrace above 61.8%: trend at risk of reversal
   PBQ_BROKEN   = 5  // Retrace beyond 100%: trend structure broken
  };
```

Safe Math Functions

This is the core of the foundation layer. Every function here wraps a standard mathematical operation with validation that catches the failure modes described earlier.

SafeDivide

```mql5
//+------------------------------------------------------------------+
//| SafeDivide: two-gate guard.                                      |
//| Gate 1 checks denominator before division.                       |
//| Gate 2 checks result for overflow or NaN after division.         |
//+------------------------------------------------------------------+
double SafeDivide(double numerator, double denominator, double fallback = 0.0)
  {
   if(!MathIsValidNumber(denominator) || MathAbs(denominator) < DBL_MIN_POSITIVE)
      return fallback;
   double result = numerator / denominator;
   if(!MathIsValidNumber(result) || MathAbs(result) > NUMERICAL_STABILITY_BOUND)
      return fallback;
   return result;
  }
```

Two gates, not one. The first checks the denominator before the division happens. The second checks the result afterward. This catches the case where both numerator and denominator are valid numbers, but their ratio produces an overflow or NaN due to floating-point arithmetic. The fallback parameter defaults to zero but can be set to any contextually appropriate value by the caller.
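A short usage sketch shows each gate in action. It assumes the include file is saved at the relative path given at the end of this article; the input values are made up for illustration.

```mql5
#include "Includes\MicroStructure_Foundation.mqh"

void OnStart()
  {
   double flat_variance = 0.0;   // e.g. a run of identical closes

   double z1 = SafeDivide(1.5, flat_variance);        // gate 1: returns 0.0, the default fallback
   double z2 = SafeDivide(1.5, flat_variance, 1.0);   // gate 1: returns 1.0, the caller's fallback
   double z3 = SafeDivide(6.0, 3.0);                  // normal case: returns 2.0
   double z4 = SafeDivide(1e200, 1e-15);              // gate 2: 1e215 exceeds the bound, returns 0.0

   PrintFormat("z1=%.1f z2=%.1f z3=%.1f z4=%.1f", z1, z2, z3, z4);
  }
```

The fourth call is the case the second gate exists for: the denominator is above DBL_MIN_POSITIVE, so only the check on the result catches it.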

SafeLog

```mql5
//+------------------------------------------------------------------+
//| SafeLog: guards against non-positive input.                      |
//| Fallback of -20.0 is a sentinel that marks an invalid point      |
//| for downstream regression slope calculations.                    |
//+------------------------------------------------------------------+
double SafeLog(double x, double fallback = -20.0)
  {
   if(!MathIsValidNumber(x) || x <= 0)
      return fallback;
   double result = MathLog(x);
   if(!MathIsValidNumber(result))
      return fallback;
   return result;
  }
```

The fallback of -20.0 is deliberate. Most uses of SafeLog in this toolkit feed into regression calculations on log-transformed data. A fallback of zero is indistinguishable from the legitimate value log(1) and would silently bias the fit. A very negative number stands out as an obvious outlier, so it communicates "this point is invalid" in the mathematical language of the downstream function. Keep in mind that ordinary least squares does not discount outliers on its own: a sentinel that reaches the fit still pulls the slope, so callers that need a clean estimate should exclude flagged points first.
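One way to keep invalid points out of a fit entirely is to test the raw inputs before taking logs. The sketch below assumes the include file is saved at the relative path given at the end of this article; BuildLogPoints is a hypothetical helper, not part of the toolkit.

```mql5
#include "Includes\MicroStructure_Foundation.mqh"

// Hypothetical helper: builds log-log regression inputs, skipping invalid points.
// Returns the number of points kept.
int BuildLogPoints(const double &scale[], const double &stat[], const int n,
                   double &lx[], double &ly[])
  {
   ArrayResize(lx, n);
   ArrayResize(ly, n);
   int kept = 0;
   for(int i = 0; i < n; i++)
     {
      // Test the raw inputs, so a genuine log value is never mistaken for the fallback.
      if(!MathIsValidNumber(scale[i]) || scale[i] <= 0)
         continue;
      if(!MathIsValidNumber(stat[i]) || stat[i] <= 0)
         continue;
      lx[kept] = SafeLog(scale[i]);
      ly[kept] = SafeLog(stat[i]);
      kept++;
     }
   ArrayResize(lx, kept);
   ArrayResize(ly, kept);
   return kept;
  }

void OnStart()
  {
   double scale[] = {2, 4, 8, 16};
   double stat[]  = {1.0, 0.0, 4.0, 8.0};   // second value is invalid for a log
   double lx[], ly[];
   int kept = BuildLogPoints(scale, stat, ArraySize(scale), lx, ly);
   if(kept >= 2)
      Print("slope on ", kept, " valid points: ", LinearRegressionSlope(lx, ly, kept));
  }
```

Filtering on the inputs rather than on the returned value avoids treating a legitimate log below -20 (an input smaller than roughly 2e-9) as a failure.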

SafeSqrt and SafeExp

```mql5
//+------------------------------------------------------------------+
//| SafeSqrt: guards against negative radicand.                      |
//+------------------------------------------------------------------+
double SafeSqrt(double x, double fallback = 0.0)
  {
   if(!MathIsValidNumber(x) || x < 0)
      return fallback;
   double result = MathSqrt(x);
   if(!MathIsValidNumber(result))
      return fallback;
   return result;
  }

//+------------------------------------------------------------------+
//| SafeExp: soft-clips extreme inputs rather than hard-failing.     |
//| Large inputs are not always bad data; they can be valid          |
//| log-return values. Clamping preserves scale while avoiding NaN.  |
//+------------------------------------------------------------------+
double SafeExp(double x, double fallback = 0.0)
  {
   if(!MathIsValidNumber(x))
      return fallback;
   if(x > 100)
      return 1e100;
   if(x < -100)
      return 1e-100;
   double result = MathExp(x);
   if(!MathIsValidNumber(result))
      return fallback;
   return result;
  }
```

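SafeExp clamps extreme inputs instead of failing, which prevents overflow to Inf. Large inputs are not always bad data; they can come from valid log-domain values. Note that the clamp values are sentinels rather than the function's own limits: exp(100) is about 2.7e43 and exp(-100) is about 3.7e-44, so the output jumps to 1e100 and 1e-100 once the input crosses the thresholds.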

SafeTanh

```mql5
//+------------------------------------------------------------------+
//| SafeTanh: soft-bounding operator used throughout order flow.     |
//| Compresses signals smoothly to [-1, +1] without hard clipping.   |
//| DBL_MIN_POSITIVE in the denominator prevents degenerate zero.    |
//+------------------------------------------------------------------+
double SafeTanh(double x)
  {
   if(!MathIsValidNumber(x))
      return 0.0;
   if(x > 10.0)
      return 1.0;
   if(x < -10.0)
      return -1.0;
   double ex  = SafeExp(x);
   double emx = SafeExp(-x);
   return (ex - emx) / (ex + emx + DBL_MIN_POSITIVE);
  }
```

SafeTanh appears throughout the order flow functions in Part 6 as a soft-bounding operator. Rather than clipping a signal to a range, it compresses it smoothly. The DBL_MIN_POSITIVE term in the denominator is purely defensive: for inputs between -10 and 10 the two exponentials sum to at least 2, so the term never changes the result. Outside that range the function returns exactly ±1, which differs from the true hyperbolic tangent by only about 4e-9.
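The compression behaviour is easiest to see on a handful of values. This sketch assumes the include file is saved at the relative path given at the end of this article; the raw scores are hypothetical.

```mql5
#include "Includes\MicroStructure_Foundation.mqh"

void OnStart()
  {
   // Hypothetical unbounded imbalance scores, including two extreme ones.
   double raw[] = {-25.0, -1.0, 0.0, 0.5, 3.0, 40.0};

   for(int i = 0; i < ArraySize(raw); i++)
      PrintFormat("raw=%7.2f  bounded=%.6f", raw[i], SafeTanh(raw[i]));
  }
```

Small inputs pass through almost linearly, while the extremes at -25 and 40 are mapped to exactly -1 and +1 by the early returns.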

Data Validation and Access

ValidateSymbolV2

```mql5
//+------------------------------------------------------------------+
//| ValidateSymbolV2: three-attempt validation with spread check.    |
//| Returns true immediately for the current chart symbol.           |
//| Spread above 10000 points indicates a data feed problem.         |
//+------------------------------------------------------------------+
bool ValidateSymbolV2(const string symbol)
  {
   if(symbol == NULL || symbol == "")
      return false;
   if(symbol == Symbol())
      return true;
   for(int i = 0; i < 3; i++)
     {
      double bid   = SymbolInfoDouble(symbol, SYMBOL_BID);
      double ask   = SymbolInfoDouble(symbol, SYMBOL_ASK);
      double point = SymbolInfoDouble(symbol, SYMBOL_POINT);
      if(bid > 0 && ask > 0 && ask > bid && point > 0)
        {
         double spread = (ask - bid) / point;
         if(spread < 10000)
            return true;
        }
      Sleep(10);
     }
   return false;
  }
```

The function makes up to three attempts with a 10-millisecond pause between them. The spread check of less than 10,000 points is a sanity filter: a wider spread indicates either a data feed problem or a symbol that is not tradable. The function returns true immediately for the current chart symbol, avoiding a redundant market data request in the most common case. Note that Sleep() has no effect inside custom indicators, so there the retries run back to back.

SafeCopyClose

```mql5
//+------------------------------------------------------------------+
//| SafeCopyClose: validated data fetch with per-value zero check.   |
//| Caps request at MAX_SAMPLE_SIZE to prevent memory overrun.       |
//| Returns 0 if any copied bar has a non-positive close price.      |
//+------------------------------------------------------------------+
int SafeCopyClose(const string symbol, const int tf, const int start, const int count, double &out[])
  {
   if(!ValidateSymbolV2(symbol) || count <= 0)
      return 0;
   int safe_count = MathMin(count, MAX_SAMPLE_SIZE);
   ArraySetAsSeries(out, true);
   int copied = CopyClose(symbol, (ENUM_TIMEFRAMES)tf, start, safe_count, out);
   if(copied <= 0)
      return 0;
   for(int i = 0; i < copied; i++)
      if(out[i] <= 0)
         return 0;
   return copied;
  }
```

MAX_SAMPLE_SIZE of 5,000 prevents a memory allocation request that the terminal cannot honor. The full validation of every copied value catches the case where CopyClose succeeds but returns bars with zero price—which can occur at the boundary of available history. Returning zero from a partial failure is preferable to returning a partially valid array that downstream functions will process without knowing some values are invalid.
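A typical call site might look like the sketch below. It assumes the include file is saved at the relative path given at the end of this article; the request size of 500 bars is arbitrary.

```mql5
#include "Includes\MicroStructure_Foundation.mqh"

void OnStart()
  {
   double close[];
   int got = SafeCopyClose(_Symbol, PERIOD_M1, 0, 500, close);

   // The return value is the only reliable size: the request may have been
   // capped, shortened by available history, or rejected outright (0).
   if(got < MIN_SAMPLE_SIZE)
     {
      Print("Not enough valid M1 closes: ", got);
      return;
     }

   // SafeCopyClose sets series indexing, so close[0] is the most recent bar.
   double last_return = SafeLog(close[0]) - SafeLog(close[1]);
   PrintFormat("bars=%d  last M1 log return=%.6f", got, last_return);
  }
```

Comparing the return value against MIN_SAMPLE_SIZE rather than against the requested count keeps the caller correct even when fewer bars come back than were asked for.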

Statistical Primitives

mean_var

```mql5
//+------------------------------------------------------------------+
//| mean_var: two-pass variance estimation.                          |
//| Two passes avoid numerical instability when the mean is large    |
//| relative to the variance, as is typical with price data.         |
//+------------------------------------------------------------------+
void mean_var(const double &arr[], const int n, double &mean, double &var)
  {
   mean = 0;
   var  = 0;
   if(n <= 0)
      return;
   for(int i = 0; i < n; i++)
      mean += arr[i];
   mean /= n;
   for(int i = 0; i < n; i++)
      var += (arr[i] - mean) * (arr[i] - mean);
   var /= n;
  }
```

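Two passes are used rather than one because the single-pass formula for variance, E[x²] − (E[x])², subtracts two nearly equal large numbers whenever the mean is large relative to the variance. That is exactly the situation with price data, where the price level is large and the bar-to-bar dispersion is small. Note that the function returns the population variance (divisor n), not the sample variance (divisor n − 1).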
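The difference between the two formulas can be observed directly. The sketch below assumes the include file is saved at the relative path given at the end of this article; the four closes are hypothetical values chosen so that the exact population variance is 1.25e-4.

```mql5
#include "Includes\MicroStructure_Foundation.mqh"

void OnStart()
  {
   // Hypothetical closes: a large price level with very small dispersion.
   double px[] = {20000.01, 20000.02, 20000.03, 20000.04};
   int n = ArraySize(px);

   // Single-pass formula: mean of squares minus square of the mean.
   double sum = 0.0, sum_sq = 0.0;
   for(int i = 0; i < n; i++)
     {
      sum    += px[i];
      sum_sq += px[i] * px[i];
     }
   double one_pass = sum_sq / n - (sum / n) * (sum / n);

   // Two-pass formula from the foundation layer.
   double m, v;
   mean_var(px, n, m, v);

   PrintFormat("one-pass=%.12g  two-pass=%.12g  exact=%.12g", one_pass, v, 0.000125);
  }
```

The squared prices are around 4e8, so rounding in the single-pass sums is large relative to a variance of 1.25e-4, while the two-pass result works on deviations of a few hundredths and stays close to the exact value.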

robust_mean_var

```mql5
//+------------------------------------------------------------------+
//| robust_mean_var: trimmed mean and variance with higher moments.  |
//| Removes the most extreme 10% of values from each tail before     |
//| computing mean, variance, skewness, and excess kurtosis.         |
//| Falls back to mean_var if the trimmed sample is too small.       |
//+------------------------------------------------------------------+
void robust_mean_var(const double &arr[], const int n, double &mean, double &var, double &skew, double &kurt)
  {
   mean = 0;
   var  = 0;
   skew = 0;
   kurt = 0;
   if(n < 4)
      return;
   double sorted[];
   ArrayResize(sorted, n);
   ArrayCopy(sorted, arr, 0, 0, n);
   ArraySort(sorted);
   int trim = MathMax(1, (int)(n * 0.10));   // values dropped from each end
   int used = n - 2 * trim;
   if(used < 4)
     {
      mean_var(arr, n, mean, var);
      return;
     }
   for(int i = trim; i < n - trim; i++)
      mean += sorted[i];
   mean /= used;
   for(int i = trim; i < n - trim; i++)
      var += MathPow(sorted[i] - mean, 2);
   var /= used;
   double sd = SafeSqrt(var);
   if(sd > DBL_MIN_POSITIVE)
     {
      for(int i = trim; i < n - trim; i++)
        {
         double z = (sorted[i] - mean) / sd;
         skew += MathPow(z, 3);
         kurt += MathPow(z, 4);
        }
      skew /= used;
      kurt = kurt / used - 3.0;
     }
  }
```

This is the function that separates this toolkit from a naive implementation. The trim removes the lowest 10% and the highest 10% of values (at least one value from each end) before calculating mean and variance. On NQ M1 data at the NY open, those extreme values are real and not measurement errors, but they should not dominate a statistical estimate intended to characterize typical behavior. The function falls back to standard mean_var if fewer than four values would remain after trimming.

The skewness and kurtosis outputs are used in Part 4's volatility analysis. A dataset with high kurtosis confirms that fat tails are present—which on NQ at the NY open is almost always true.
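The effect of trimming is visible on a small sample with one extreme value. The sketch assumes the include file is saved at the relative path given at the end of this article; the twelve returns are hypothetical.

```mql5
#include "Includes\MicroStructure_Foundation.mqh"

void OnStart()
  {
   // Hypothetical M1 returns (in points) with a single extreme bar of 5.0.
   double r[] = {0.10, -0.20, 0.15, -0.10, 0.05, 0.00,
                 -0.05, 0.20, -0.15, 0.10, 5.00, -0.10};
   int n = ArraySize(r);

   double m, v;
   mean_var(r, n, m, v);                        // plain estimate, dominated by the 5.0 bar

   double rm, rv, sk, ku;
   robust_mean_var(r, n, rm, rv, sk, ku);       // drops one value from each end (n = 12)

   PrintFormat("plain:   mean=%.4f var=%.4f", m, v);
   PrintFormat("trimmed: mean=%.4f var=%.4f skew=%.4f kurt=%.4f", rm, rv, sk, ku);
  }
```

With twelve values the trim count is one per tail, so the -0.20 and 5.00 observations are excluded; the plain mean is about 0.42 while the trimmed mean is about 0.02. Because skewness and kurtosis are also computed on the trimmed sample, they describe the remaining values rather than the raw tails.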

LinearRegressionSlope

```mql5
//+------------------------------------------------------------------+
//| LinearRegressionSlope: ordinary least squares slope estimate.    |
//| Used by Hurst estimators (Part 2), fractal dimension (Part 3),   |
//| and multifractal spectrum (Part 3). Centralising here ensures    |
//| identical arithmetic across all three. SafeDivide guards against |
//| the degenerate case where all x values are identical.            |
//+------------------------------------------------------------------+
double LinearRegressionSlope(const double &x[], const double &y[], int n)
  {
   if(n < 2)
      return 0.0;
   double sx = 0, sy = 0, sxy = 0, sxx = 0;
   for(int i = 0; i < n; i++)
     {
      sx  += x[i];
      sy  += y[i];
      sxy += x[i] * y[i];
      sxx += x[i] * x[i];
     }
   double denom = n * sxx - sx * sx;
   return SafeDivide(n * sxy - sx * sy, denom);
  }
```

This function is used by every Hurst estimator in Part 2, by the fractal dimension in Part 3, and by the multifractal spectrum also in Part 3. Centralizing it here means all three share identical arithmetic. SafeDivide guards against the degenerate case where all x values are identical—which would produce a zero denominator.
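A worked example makes the arithmetic concrete. The sketch assumes the include file is saved at the relative path given at the end of this article. For x = 1, 2, 3, 4 and y = 2x + 1, the sums are sx = 10, sy = 24, sxy = 70, and sxx = 30, giving (4·70 − 10·24) / (4·30 − 10²) = 40 / 20 = 2.

```mql5
#include "Includes\MicroStructure_Foundation.mqh"

void OnStart()
  {
   double x[] = {1, 2, 3, 4};
   double y[] = {3, 5, 7, 9};          // y = 2x + 1
   double flat_x[] = {5, 5, 5, 5};     // degenerate case: no variation in x

   Print("slope:            ", LinearRegressionSlope(x, y, 4));        // 2.0
   Print("slope, constant x: ", LinearRegressionSlope(flat_x, y, 4));  // 0.0 via SafeDivide fallback
  }
```

In the degenerate case the denominator is 4·100 − 20² = 0, so SafeDivide returns its default fallback of zero instead of an invalid slope.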

The FFT Implementation

The FFT lives in the foundation layer because multiple parts reuse it. Part 3 uses it for the multifractal spectrum, Part 10 for Fourier seasonality, and Part 11 for synthetic fractional Gaussian noise. Placing it here avoids three separate implementations that could diverge.

```mql5
//+------------------------------------------------------------------+
//| FFT IMPLEMENTATION                                               |
//| Cooley-Tukey radix-2 in-place. Input length must be power of 2.  |
//| Reused by: Part 3 (multifractal), Part 10 (Fourier seasonality), |
//| Part 11 (fractional Gaussian noise generation).                  |
//+------------------------------------------------------------------+
void fft(const double &real_in[], const double &imag_in[], double &real_out[], double &imag_out[], int n, bool inverse)
  {
   ArrayResize(real_out, n);
   ArrayResize(imag_out, n);
   ArrayCopy(real_out, real_in, 0, 0, n);
   ArrayCopy(imag_out, imag_in, 0, 0, n);
//--- Bit-reversal permutation
   int j = 0;
   for(int i = 1; i < n; i++)
     {
      int bit = n >> 1;
      for(; (j & bit) != 0; bit >>= 1)
         j ^= bit;
      j ^= bit;
      if(i < j)
        {
         double tr = real_out[i];
         real_out[i] = real_out[j];
         real_out[j] = tr;
         double ti = imag_out[i];
         imag_out[i] = imag_out[j];
         imag_out[j] = ti;
        }
     }
//--- Cooley-Tukey butterfly
   for(int len = 2; len <= n; len <<= 1)
     {
      double ang = 2.0 * MATH_PI / len * (inverse ? -1 : 1);
      double wr = MathCos(ang);
      double wi = MathSin(ang);
      for(int i = 0; i < n; i += len)
        {
         double cr = 1.0, ci = 0.0;
         for(int k = 0; k < len / 2; k++)
           {
            double ur = real_out[i + k];
            double ui = imag_out[i + k];
            double vr = real_out[i + k + len / 2];
            double vi = imag_out[i + k + len / 2];
            double pvr = vr * cr - vi * ci;
            double pvi = vr * ci + vi * cr;
            real_out[i + k] = ur + pvr;
            imag_out[i + k] = ui + pvi;
            real_out[i + k + len / 2] = ur - pvr;
            imag_out[i + k + len / 2] = ui - pvi;
            double ncr = cr * wr - ci * wi;
            ci = cr * wi + ci * wr;
            cr = ncr;
           }
        }
     }
//--- Normalise output for inverse transform
   if(inverse)
      for(int i = 0; i < n; i++)
        {
         real_out[i] /= n;
         imag_out[i] /= n;
        }
  }
```

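This is a standard Cooley-Tukey radix-2 FFT that copies the input and then transforms the output arrays in place. The input length must be a power of two; the function does not check this, and all callers in this series handle the requirement before calling it. The inverse flag performs the inverse FFT by negating the twiddle factor angle and normalizing the output by n. Both forward and inverse transforms use the same butterfly code, which keeps the implementation compact and reduces the chance of asymmetry errors between the two directions. Note that the forward transform uses a positive exponent sign, the opposite of the more common convention: magnitudes and round trips are unaffected, but phases are conjugated relative to libraries that use the negative sign.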
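A forward transform followed by an inverse transform should reproduce the input, which makes a convenient self-check. The sketch below assumes the include file is saved at the relative path given at the end of this article; FloorPow2 is a hypothetical helper showing one way a caller might satisfy the power-of-two requirement, not the method used in later parts.

```mql5
#include "Includes\MicroStructure_Foundation.mqh"

// Hypothetical helper: largest power of two not exceeding n (0 if n < 1).
int FloorPow2(const int n)
  {
   if(n < 1)
      return 0;
   int p = 1;
   while(p <= n / 2)
      p <<= 1;
   return p;
  }

void OnStart()
  {
   int n = FloorPow2(10);   // 10 samples available, 8 are used
   double re[], im[], fr[], fi[], br[], bi[];
   ArrayResize(re, n);
   ArrayResize(im, n);

   // One full sine cycle as a real-valued test signal.
   for(int i = 0; i < n; i++)
     {
      re[i] = MathSin(2.0 * MATH_PI * i / n);
      im[i] = 0.0;
     }

   fft(re, im, fr, fi, n, false);   // forward
   fft(fr, fi, br, bi, n, true);    // inverse, normalised by n

   double max_err = 0.0;
   for(int i = 0; i < n; i++)
      max_err = MathMax(max_err, MathAbs(br[i] - re[i]));
   Print("n=", n, "  max round-trip error: ", max_err);
  }
```

The round-trip error should be at the level of floating-point rounding. Truncating to the nearest lower power of two discards samples; zero-padding up to the next power of two is the usual alternative when every sample matters.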

Completing the Include File

The foundation layer is complete. Use this relative include path in all subsequent articles to avoid modifying the global MQL5\Include folder:

#include "Includes\MicroStructure_Foundation.mqh"

With this single line, every function and struct built in this article becomes available to the calling indicator or expert advisor. The safe math layer, the validation functions, the statistical estimators, and the FFT are all in scope. Part 2 can begin measuring the Hurst exponent immediately, with no defensive boilerplate to repeat.

Conclusion

The foundation implemented here removes the silent failure modes that make intraday microstructure unusable in practice. MicroStructure_Foundation.mqh is a single include you add to any indicator or EA; it provides well‑defined guarantees so subsequent measurements can be trusted.

Practical outcome:

- safe math primitives (SafeDivide, SafeLog, SafeSqrt, SafeExp, SafeTanh) that prevent NaN/Inf and soft-clip overflows;

- validated market access and history reads (ValidateSymbolV2, SafeCopyClose) that reject non‑tradable symbols, anomalous spread, and non‑positive close prices;

- numerically stable statistical primitives (two‑pass variance, trimmed estimators, OLS slope) that tolerate fat tails and regime shifts;

- a single FFT implementation for consistent spectral calculations.

With these guarantees in place, microstructure metrics no longer “lie” at critical moments. Part 2 builds on this layer to implement three independent Hurst estimators combined by confidence weighting, relying on the defensive primitives supplied here.

References

- Engle, R. (2000). The econometrics of ultra-high-frequency data. Econometrica, 68(1), 1–22.

- Madhavan, A. (2000). Market microstructure: a survey. Journal of Financial Markets, 3, 205–258.

- Dacorogna, M., Gencay, R., Muller, U., Olsen, R., and Pictet, O. (2001). An Introduction to High-Frequency Finance. Academic Press.

