Building a Forex Expert Advisor with Ensemble Intelligence: From Chaos to Consistency

About four months ago, I dove deep into the world of ensembles for building Forex expert advisors. Day and night, I ran experiments, chasing a simple but powerful idea: combine multiple timeframes, multiple folds, and different perspectives into one unified system that produces a consensus decision. At first, it felt almost too elegant to fail.

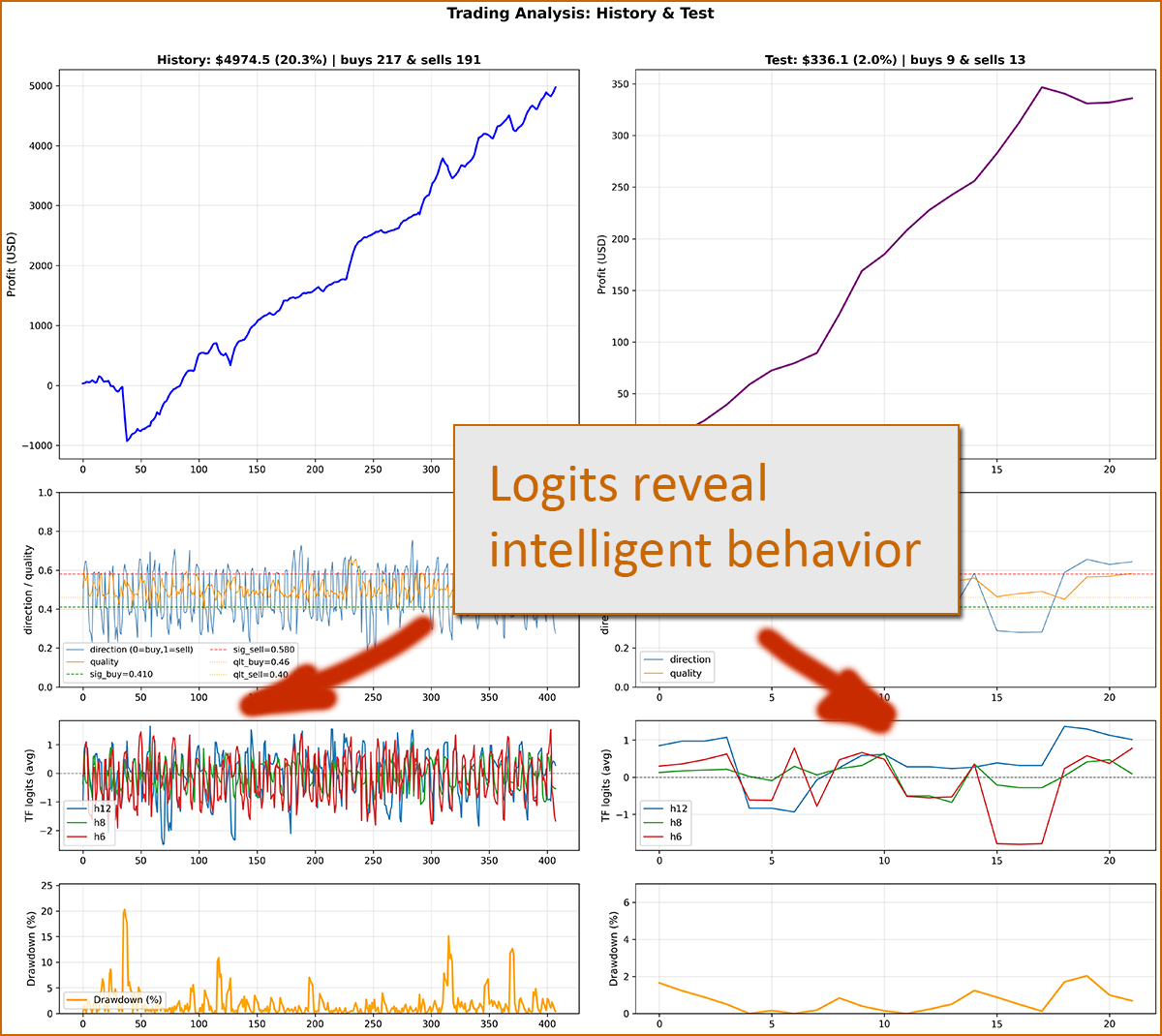

The core intuition was straightforward: logits that correctly detect their regime should overpower the noise from weaker signals. When aggregated, these logits form a clearer directional bias. In the “TF logits (avg)” subplot, you can see how different timeframes frequently diverge, some pointing up and others down, creating moments where the consensus becomes strong enough to justify a trade or to avoid one entirely.
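To make the aggregation idea concrete, here is a minimal sketch of how averaged timeframe logits could turn into a decision. It is an illustration under assumptions, not the advisor's actual code: the function name `consensus_signal`, the signed-logit convention (positive means long bias, negative means short bias), the timeframe labels, and the `threshold` value are all hypothetical.

```python
from statistics import fmean

def consensus_signal(tf_logits, threshold=0.5):
    """Average per-timeframe logits and map the result to a decision.

    tf_logits: dict of timeframe name -> list of logits from that
               timeframe's models (assumed: >0 long bias, <0 short bias).
    threshold: hypothetical minimum |consensus| needed to open a trade.
    """
    # Average inside each timeframe first, then across timeframes,
    # so a timeframe with many models does not dominate the vote.
    per_tf = {tf: fmean(values) for tf, values in tf_logits.items()}
    consensus = fmean(per_tf.values())

    if consensus > threshold:
        return "buy", consensus, per_tf
    if consensus < -threshold:
        return "sell", consensus, per_tf
    return "hold", consensus, per_tf


# Two timeframes point up, one points weakly down.
logits = {"H6": [1.8, 2.1, 1.5], "H8": [0.9, 1.2], "H12": [-0.4, -0.2]}
decision, score, per_tf = consensus_signal(logits)
print(decision, round(score, 2))
```

In this toy input the two bullish timeframes outweigh the mildly bearish one, so the consensus clears the threshold. When the timeframes cancel each other out, the same function returns "hold".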

But very quickly, I ran into a classic and frustrating problem: unknown future periods. Imagine two test windows—August to December and November to March. Both span five months. You train one model up to August, and another up to November. And here’s the catch: when one performs well, the other fails. When the second succeeds, the first collapses. This instability persisted for months.

I tried everything. Fixed folds, where the dataset is split into equal chunks—no success. Expanding folds, where each new fold includes all previous data—still no luck. Then regime-based folds, isolating volatile, trending, or flat markets—again, no consistency. Each approach seemed promising, yet ultimately failed to generalize.
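For reference, the first two schemes can be sketched in a few lines. This is a simplified illustration over sample indices rather than timestamps, and the function names are hypothetical. Regime-based folds are left out because they depend on how a regime is labeled.

```python
def fixed_folds(n_samples, n_folds):
    """Split [0, n_samples) into n_folds equal, non-overlapping chunks.

    Any remainder samples at the end are dropped for simplicity.
    """
    size = n_samples // n_folds
    return [(i * size, (i + 1) * size) for i in range(n_folds)]


def expanding_folds(n_samples, n_folds):
    """Each fold starts at index 0 and ends where the matching fixed fold ends."""
    return [(0, end) for _, end in fixed_folds(n_samples, n_folds)]


fixed = fixed_folds(1000, 4)
expanding = expanding_folds(1000, 4)
print(fixed)      # [(0, 250), (250, 500), (500, 750), (750, 1000)]
print(expanding)  # [(0, 250), (0, 500), (0, 750), (0, 1000)]
```

Both schemes pin every fold boundary to a fixed position in the dataset.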

Then came a critical realization. Why should folds be tied to specific dates at all? Why anchor everything to August or November as if those points define the future? What if a model simply isn’t ready by that date? That assumption alone could break the entire system.

The breakthrough was simple, yet powerful: instead of anchoring folds to fixed endpoints, I began selecting them within a rolling window—say, six months. Each subsequent fold would slightly overlap with the previous one, touching its boundary and extending further into the past. This created a smooth transition between folds, rather than abrupt, artificial splits.

Each fold has a fixed duration and moves forward in time with a constant step—one week. This results in hundreds of folds: over 500 on the 6-hour timeframe, more than 400 on the 8-hour timeframe, and so on. The diversity and continuity of these folds became the key.

Below is a minimal piece of code that demonstrates this “simple magic” of generating weekly rolling folds:

```python
import datetime as dt

# Method of the dataset class; SEC_IN_MONTH is a constant defined elsewhere.
def make_weekly_folds(
    self,
    span_train_months,
    span_val_months,
    log_path='empty',
    pass_num=0,
    fold_filter=None
):
    self._ensure()
    train_sec = span_train_months * SEC_IN_MONTH
    val_sec = span_val_months * SEC_IN_MONTH
    T0 = float(self._T.min())
    T1 = float(self._T.max())

    folds = []
    fold_id = 1

    # 'empty' disables logging; otherwise the log file is closed before returning
    f = open(log_path, "w", encoding="utf-8") if log_path != 'empty' else None

    # Align timestamps to Monday 00:00 (local time) for consistent weekly stepping
    def to_monday(ts):
        d = dt.datetime.fromtimestamp(ts)
        d = d.replace(hour=0, minute=0, second=0, microsecond=0)
        return (d - dt.timedelta(days=d.weekday())).timestamp()

    def ts_to_str(ts):
        return dt.datetime.fromtimestamp(ts).strftime("%Y-%m-%d %H:%M")

    # Start on the first Monday that is not before the first sample
    current_start = to_monday(T0)
    if current_start < T0:
        current_start += 7 * 86400

    prev_train_start = None
    while True:
        train_start = current_start
        train_end = train_start + train_sec
        val_start = train_end
        val_end = val_start + val_sec

        # Stop if validation exceeds dataset bounds
        if val_end > T1:
            break

        fold = self._make_fold(
            fold_id, train_start, train_end, val_start, val_end
        )
        if fold:
            if fold_filter is None or fold_id in fold_filter:
                folds.append(fold)

            # Distance from the previous fold's start, for the log only
            if prev_train_start is None:
                shift_days_info = "N/A"
            else:
                shift_days_val = (train_start - prev_train_start) / 86400
                shift_days_info = f"{shift_days_val:.1f}d"

            if f is not None:
                line = (
                    f"PASS-{pass_num} FOLD-{fold_id} | "
                    f"SHIFT: {shift_days_info} | "
                    f"TRAIN: {ts_to_str(train_start)} -> {ts_to_str(train_end)} | "
                    f"VAL: {ts_to_str(val_start)} -> {ts_to_str(val_end)}\n"
                )
                f.write(line)

            fold_id += 1
            prev_train_start = train_start

        # Fixed step: move forward by one week
        current_start = to_monday(current_start + 7 * 86400)

    if f is not None:
        f.close()
    return folds
```
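As a rough sanity check on fold counts, the same stepping rule can be reproduced in a standalone helper. This is a sketch under assumptions: `count_weekly_folds` is a hypothetical name, a month is taken as 30 days (the real `SEC_IN_MONTH` may differ), and Monday alignment and folds rejected by `_make_fold` are ignored.

```python
SEC_IN_DAY = 86400
SEC_IN_WEEK = 7 * SEC_IN_DAY
SEC_IN_MONTH = 30 * SEC_IN_DAY  # assumption: 30-day month

def count_weekly_folds(t0, t1, span_train_months, span_val_months):
    """Count folds that fit in [t0, t1] when stepping one week at a time."""
    span = (span_train_months + span_val_months) * SEC_IN_MONTH
    count = 0
    start = t0
    # A fold is kept while its validation window still ends inside the data
    while start + span <= t1:
        count += 1
        start += SEC_IN_WEEK
    return count


# Hypothetical dataset: 10 years of history, 6 months train + 1 month validation
ten_years = 3650 * SEC_IN_DAY
n_folds = count_weekly_folds(0, ten_years, 6, 1)
print(n_folds)  # 492
```

The count depends only on the amount of history and the fold span, so the difference between timeframes would come from how much data each one has.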

This approach finally allowed me to build ensembles that survive unknown periods with solid profitability. Importantly, the same methodology is applied consistently across both training and ensemble construction.

In the August–December test, the advisor stays flat during the first month, then starts capturing strong profits. In the November–March test, performance is smoother: it begins collecting gains almost immediately and scales up over time.

A notable pattern is the preference for USDJPY, which, as we know, was highly volatile during this period. That volatility created exactly the kind of opportunity the ensemble was designed to detect and exploit.

The above results come from the LSTM Ensemble with default settings.

For a balanced setup targeting around 10% drawdown and 400+ USD profit over five months, I recommend:

- Deposit: 1500 USD
- Max trades: 2
- Range: 100
- Risk: 0.5
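To put those targets in proportion to the deposit, the arithmetic can be written out as below. The variable names are illustrative, and the figures are the targets stated above, not measured results.

```python
deposit_usd = 1500.0
target_profit_usd = 400.0
target_drawdown_pct = 10.0
test_months = 5

# Drawdown budget in account currency
drawdown_usd = deposit_usd * target_drawdown_pct / 100

# Target profit as a share of the deposit, and averaged per month
return_pct = target_profit_usd / deposit_usd * 100
profit_per_month_usd = target_profit_usd / test_months

print(f"drawdown budget: {drawdown_usd:.0f} USD")
print(f"target return:   {return_pct:.1f}% over {test_months} months")
print(f"average profit:  {profit_per_month_usd:.0f} USD per month")
```

That works out to a drawdown budget of 150 USD against a target return of roughly 26.7% over the five-month window.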

At this point, I’m seriously considering starting a GitHub repository or even a YouTube channel. The topic has grown far beyond a single post. There’s also a meta-model layer, additional neural architectures, and cross-symbol training (gold + major FX pairs). There’s a lot of room to expand—and I’m just getting started.