- Views: 4015
- Published: 2020.05.02 18:45
- Updated: 2020.11.08 04:13

In probability theory, the multi-armed bandit problem (sometimes called the K- or N-armed bandit problem) is a problem in which a fixed limited set of resources must be allocated between competing (alternative) choices in a way that maximizes their expected gain, when each choice's properties are only partially known at the time of allocation, and may become better understood as time passes or by allocating resources to the choice. This is a classic reinforcement learning problem that exemplifies the exploration–exploitation tradeoff dilemma. The name comes from imagining a gambler at a row of slot machines (sometimes known as "one-armed bandits"), who has to decide which machines to play, how many times to play each machine and in which order to play them, and whether to continue with the current machine or try a different machine. The multi-armed bandit problem also falls into the broad category of stochastic scheduling.

https://en.wikipedia.org/wiki/Multi-armed_bandit
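To make the setting concrete, here is a minimal Python sketch of a bandit whose arms pay out 1 or 0 with hidden probabilities. The class name `BernoulliBandit`, the helper `sample_means`, and the payout probabilities are illustrative assumptions, not part of the published script. It shows why each choice's properties are "only partially known": the player never sees the probabilities, only the rewards, and the estimates improve as more pulls are spent on an arm.

```python
import random


class BernoulliBandit:
    """K slot machines; arm i pays 1 with hidden probability probs[i], else 0.

    Hypothetical helper for illustration only.
    """

    def __init__(self, probs, seed=None):
        self.probs = list(probs)
        self.rng = random.Random(seed)  # private generator, reproducible with a seed

    def pull(self, arm):
        # The player only ever observes this reward, never self.probs.
        return 1 if self.rng.random() < self.probs[arm] else 0


def sample_means(bandit, pulls_per_arm):
    """Estimate each arm's payout rate by pulling it pulls_per_arm times."""
    means = []
    for arm in range(len(bandit.probs)):
        total = sum(bandit.pull(arm) for _ in range(pulls_per_arm))
        means.append(total / pulls_per_arm)
    return means


if __name__ == "__main__":
    bandit = BernoulliBandit([0.2, 0.5, 0.8], seed=1)
    print(sample_means(bandit, 10))    # rough estimates from few pulls
    print(sample_means(bandit, 2000))  # closer to the hidden probabilities
```

Pulling every arm equally often, as this sketch does, is pure exploration: it learns the payout rates but wastes many pulls on poor arms.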

As a starting point for thinking algorithmically about the explore-exploit dilemma: in computer science, a greedy algorithm always takes whatever action seems best at the present moment, even when that decision might lead to bad long-term consequences. The epsilon-greedy algorithm is almost greedy: it generally exploits the best available option, but every once in a while (with a small probability epsilon) it explores the other available options.
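The rule described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the code published with this entry: the function name `epsilon_greedy`, the payout probabilities, the step count, and the value of epsilon are all hypothetical choices. Each arm's value is tracked as a running average of the rewards it has returned.

```python
import random


def epsilon_greedy(probs, steps=5000, epsilon=0.1, seed=0):
    """Play a Bernoulli bandit with the epsilon-greedy rule.

    probs   -- hidden payout probability of each arm (assumed for the demo)
    epsilon -- probability of exploring a random arm on any given step
    Returns (pull counts, estimated values, total reward).
    """
    rng = random.Random(seed)
    k = len(probs)
    counts = [0] * k    # how many times each arm was pulled
    values = [0.0] * k  # running average reward of each arm
    total_reward = 0

    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(k)  # explore: pick any arm uniformly
        else:
            # exploit: pick the arm with the best current estimate
            arm = max(range(k), key=lambda a: values[a])

        reward = 1 if rng.random() < probs[arm] else 0
        counts[arm] += 1
        # incremental mean: new = old + (reward - old) / n
        values[arm] += (reward - values[arm]) / counts[arm]
        total_reward += reward

    return counts, values, total_reward


if __name__ == "__main__":
    counts, values, total = epsilon_greedy([0.2, 0.5, 0.8])
    print("pulls per arm:", counts)
    print("estimated values:", [round(v, 3) for v in values])
    print("total reward:", total)
```

Setting `epsilon=0` turns this into the purely greedy rule, which can lock onto the first arm that happens to pay out; a larger epsilon spends more pulls on exploration at the cost of short-term reward.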

More information: https://web.stanford.edu/class/psych209/Readings/SuttonBartoIPRLBook2ndEd.pdf

Edit: 07/11/2020

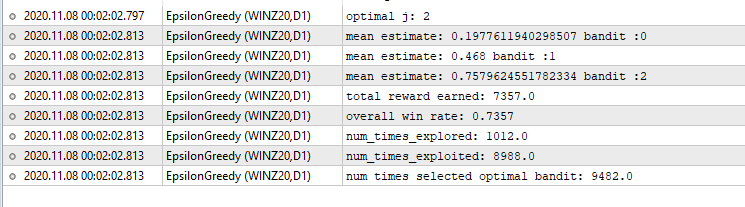

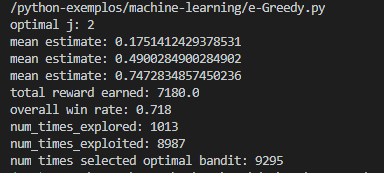

Comparison with the Python model

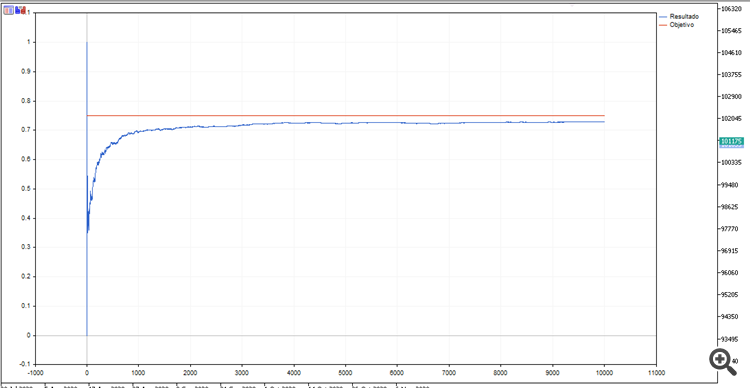

MQL

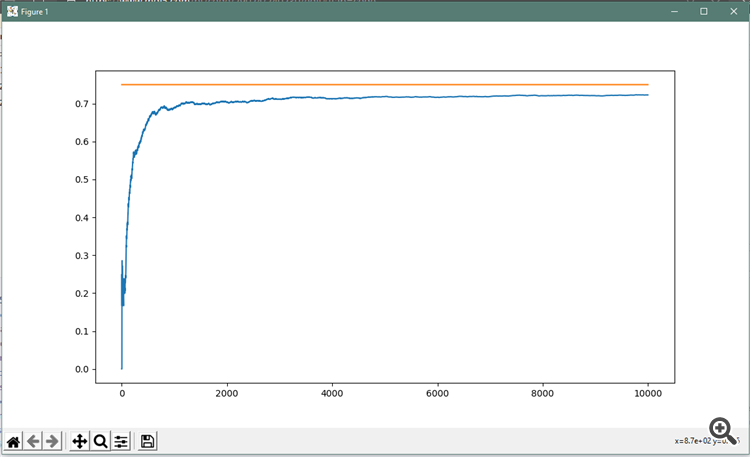

Python:
