Classification models in the Scikit-Learn library and their export to ONNX

MetaQuotes | 13 October, 2023

The development of technology has led to a fundamentally new approach to building data processing algorithms. Previously, each specific task required a clear formalization and the development of a dedicated algorithm.

In machine learning, the computer learns to find the best ways to process data on its own. Machine learning models can successfully solve classification tasks (where there is a fixed set of classes and the goal is to find the probabilities of a given set of features belonging to each class) and regression tasks (where the goal is to estimate a numerical value of the target variable based on a given set of features). More complex data processing models can be built based on these fundamental components.

The Scikit-learn library provides a multitude of tools for both classification and regression. The choice of specific methods and models depends on the characteristics of the data since different methods can have varying effectiveness and provide different results depending on the task.

In the press release "ONNX Runtime is now open source", it is claimed that ONNX Runtime also supports the ONNX-ML profile:

The ONNX-ML profile is a part of ONNX designed specifically for machine learning (ML) models. It is intended for describing and representing various types of ML models, such as classification, regression, clustering, and others, in a convenient format that can be used on various platforms and environments that support ONNX. The ONNX-ML profile simplifies the transmission, deployment, and execution of machine learning models, making them more accessible and portable.

In this article, we will explore the application of all classification models in the Scikit-learn package to Fisher's Iris classification task. We will also attempt to convert these models into the ONNX format and use the resulting models in MQL5 programs.

Furthermore, we will compare the accuracy of the original models with their ONNX versions on the complete Iris dataset.

Table of Contents

- 1. Fisher's Irises

- 2. Models for Classification

List of Scikit-learn Classifiers

Different Output Representations of Models

iris.mqh

- 2.1. SVC Classifier

2.1.1. Code for Creating the SVC Classifier Model

2.1.2. MQL5 Code for Working with the SVC Classifier Model

2.1.3. ONNX Representation of the SVC Classifier Model

- 2.2. LinearSVC Classifier

2.2.1. Code for Creating the LinearSVC Classifier Model

2.2.2. MQL5 Code for Working with the LinearSVC Classifier Model

2.2.3. ONNX Representation of the LinearSVC Classifier Model

- 2.3. NuSVC Classifier

2.3.1. Code for Creating the NuSVC Classifier Model

2.3.2. MQL5 Code for Working with the NuSVC Classifier Model

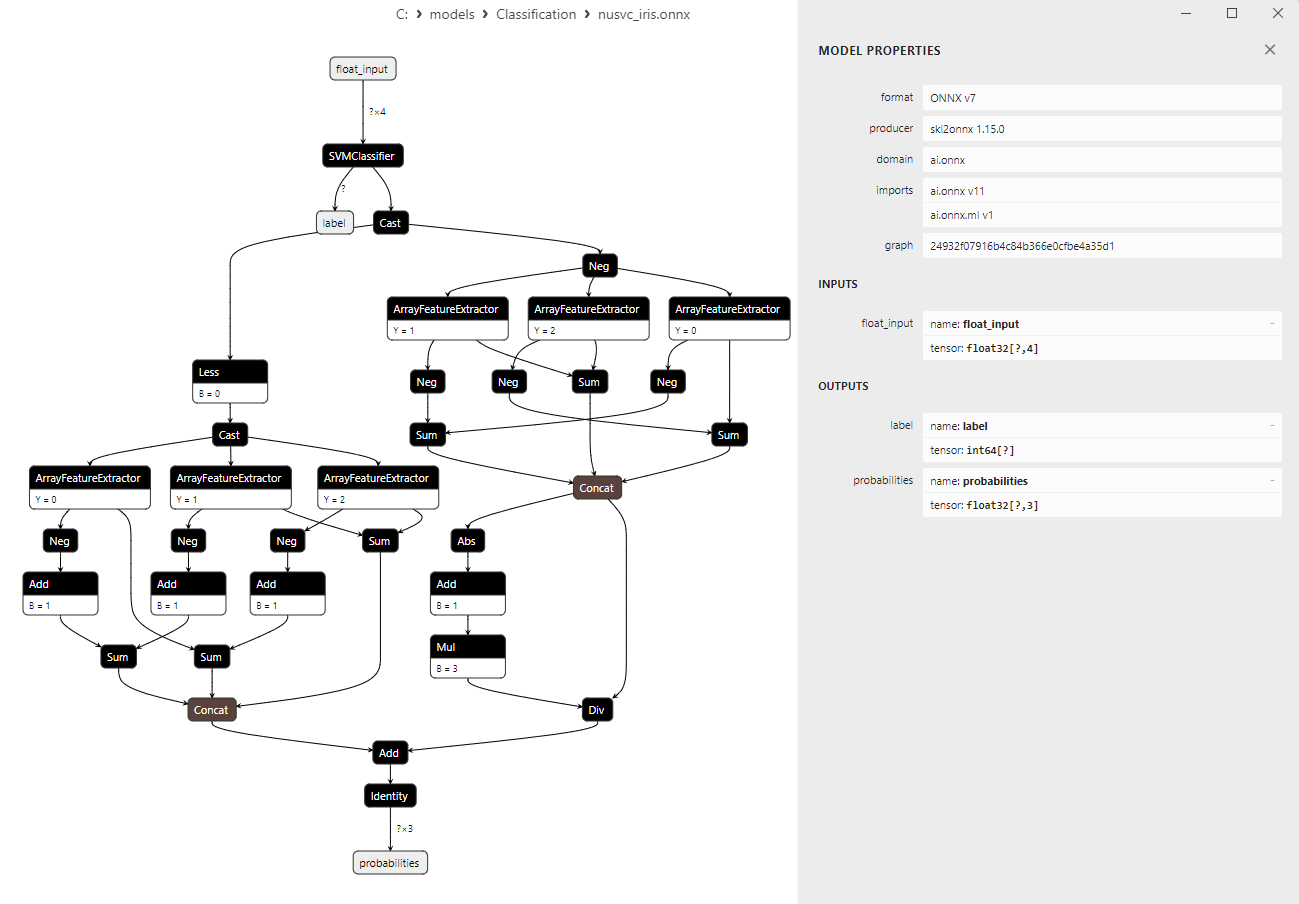

2.3.3. ONNX Representation of the NuSVC Classifier Model

- 2.4. Radius Neighbors Classifier

2.4.1. Code for Creating the Radius Neighbors Classifier Model

2.4.2. MQL5 Code for Working with the Radius Neighbors Classifier Model

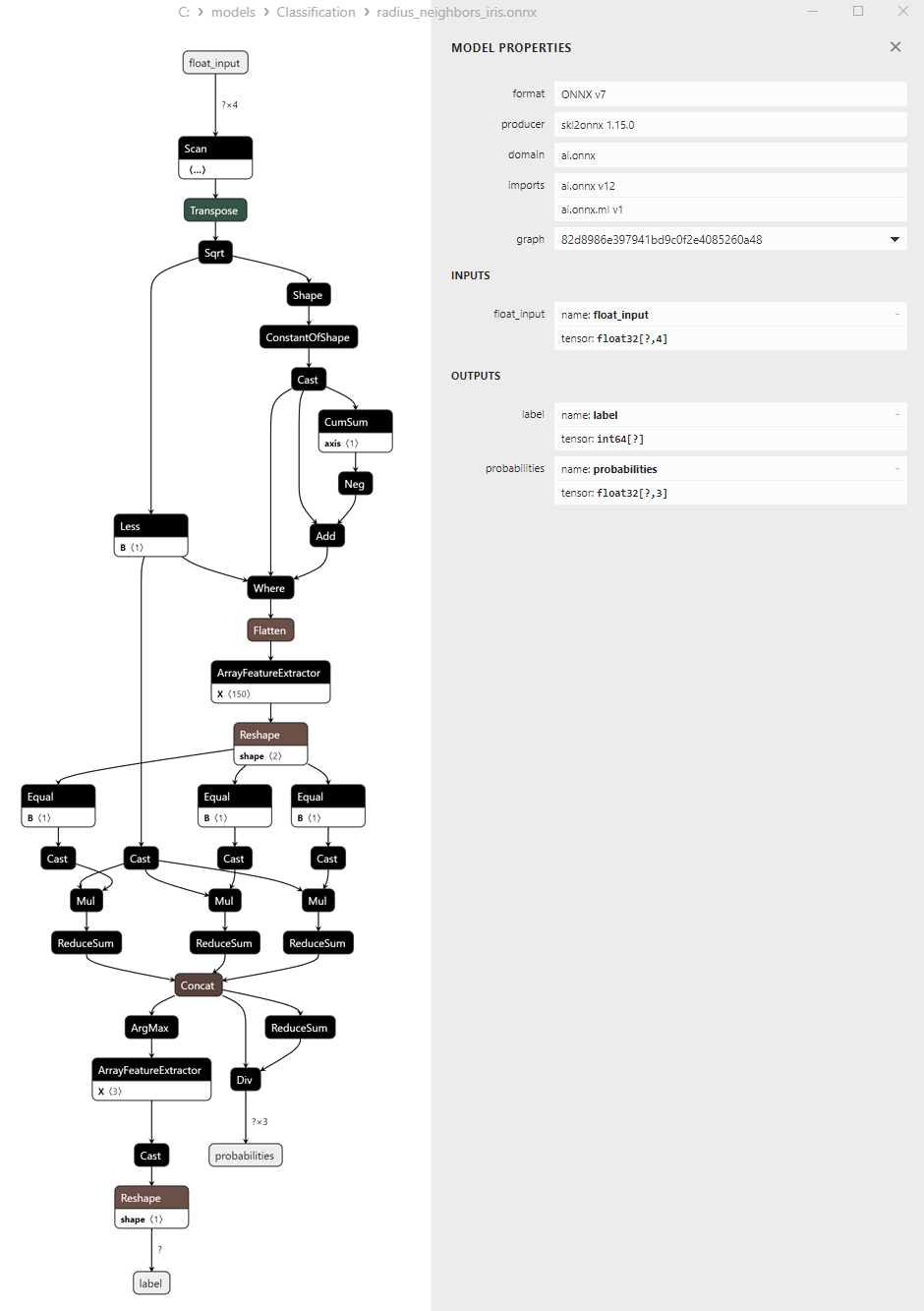

2.4.3. ONNX Representation of the Radius Neighbors Classifier Model

- 2.5. Ridge Classifier

2.5.1. Code for Creating the Ridge Classifier Model

2.5.2. MQL5 Code for Working with the Ridge Classifier Model

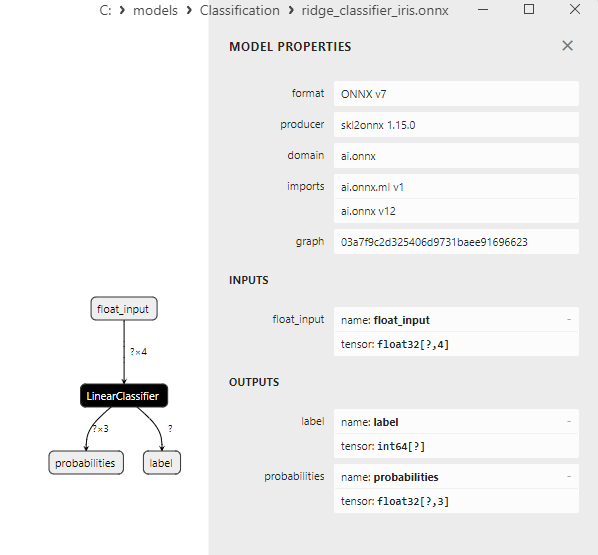

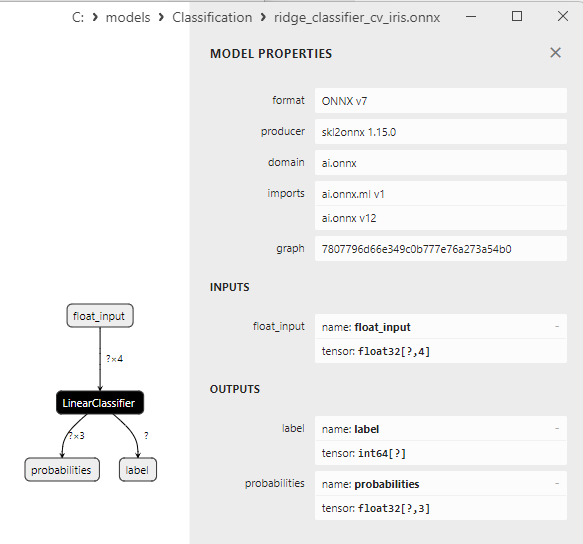

2.5.3. ONNX Representation of the Ridge Classifier Model

- 2.6. RidgeClassifierCV

2.6.1. Code for Creating the RidgeClassifierCV Model

2.6.2. MQL5 Code for Working with the RidgeClassifierCV Model

2.6.3. ONNX Representation of the RidgeClassifierCV Model

- 2.7. Random Forest Classifier

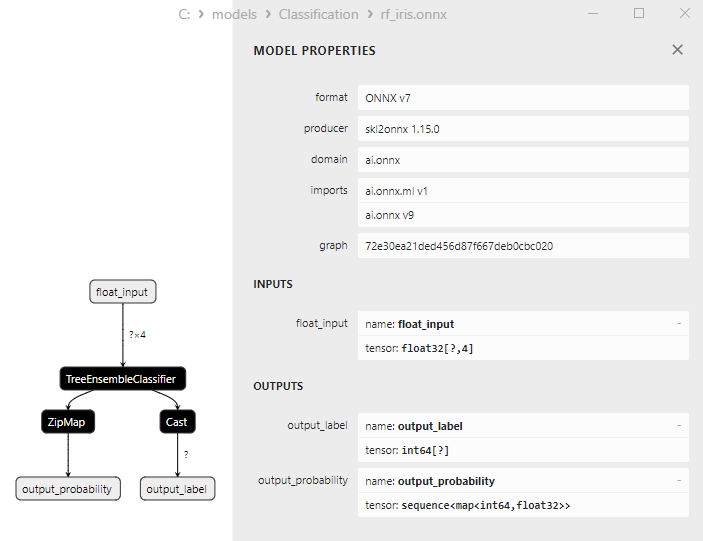

2.7.1. Code for Creating the Random Forest Classifier Model

2.7.2. MQL5 Code for Working with the Random Forest Classifier Model

2.7.3. ONNX Representation of the Random Forest Classifier Model

- 2.8. Gradient Boosting Classifier

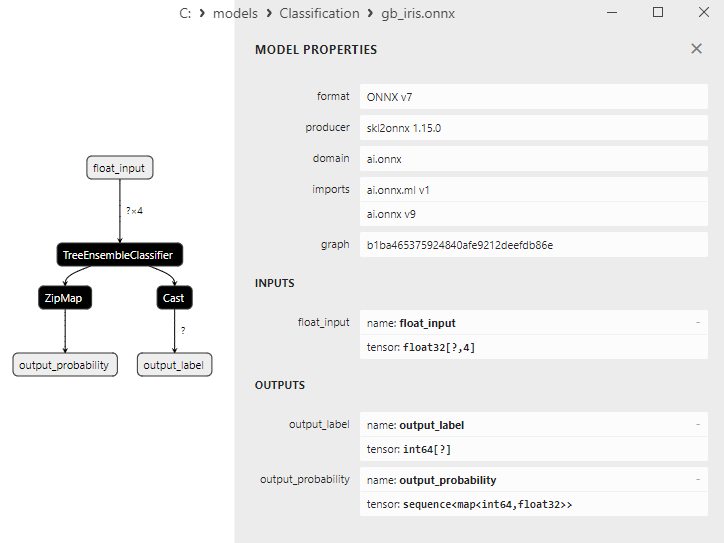

2.8.1. Code for Creating the Gradient Boosting Classifier Model

2.8.2. MQL5 Code for Working with the Gradient Boosting Classifier Model

2.8.3. ONNX Representation of the Gradient Boosting Classifier Model

- 2.9. Adaptive Boosting Classifier

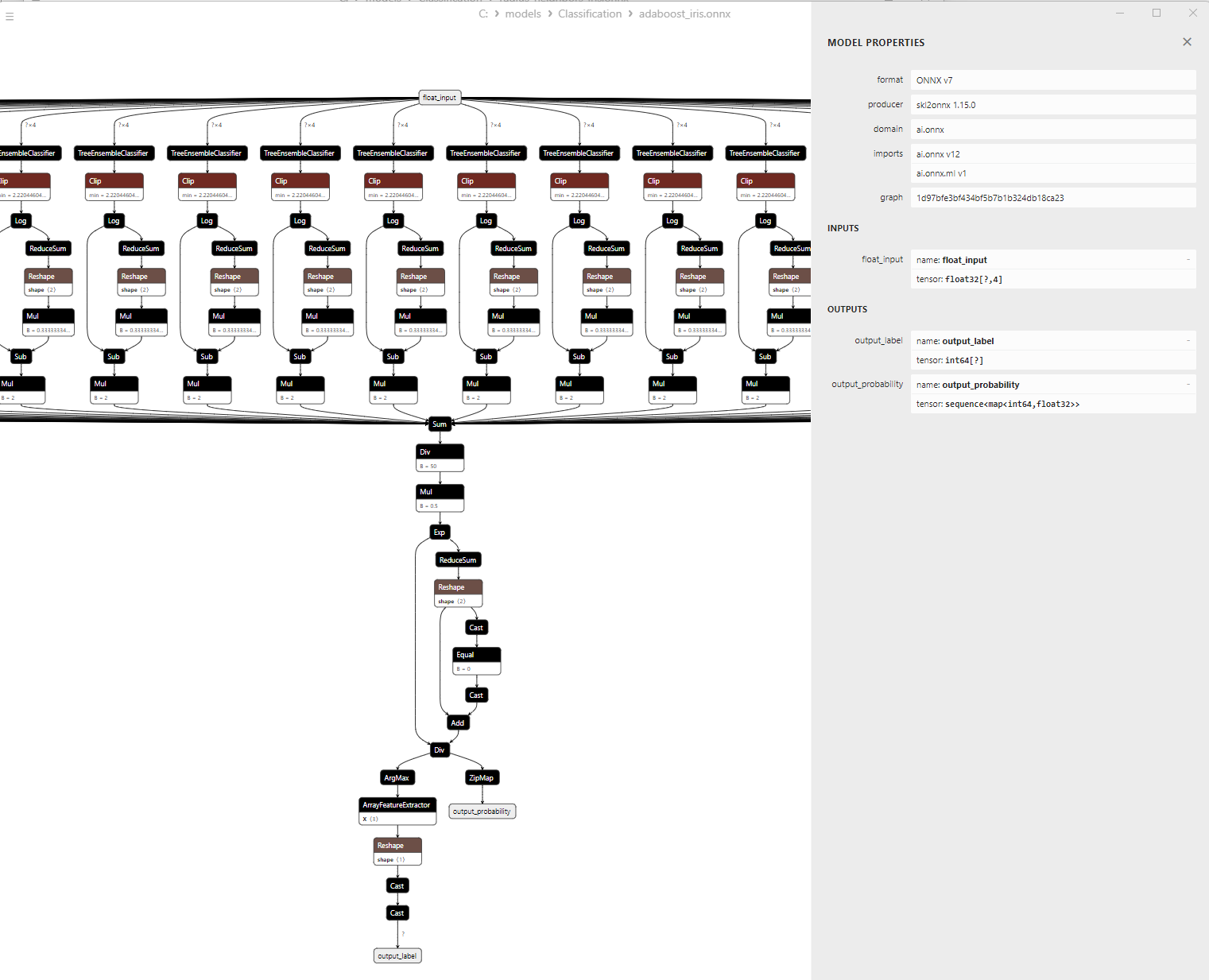

2.9.1. Code for Creating the Adaptive Boosting Classifier Model

2.9.2. MQL5 Code for Working with the Adaptive Boosting Classifier Model

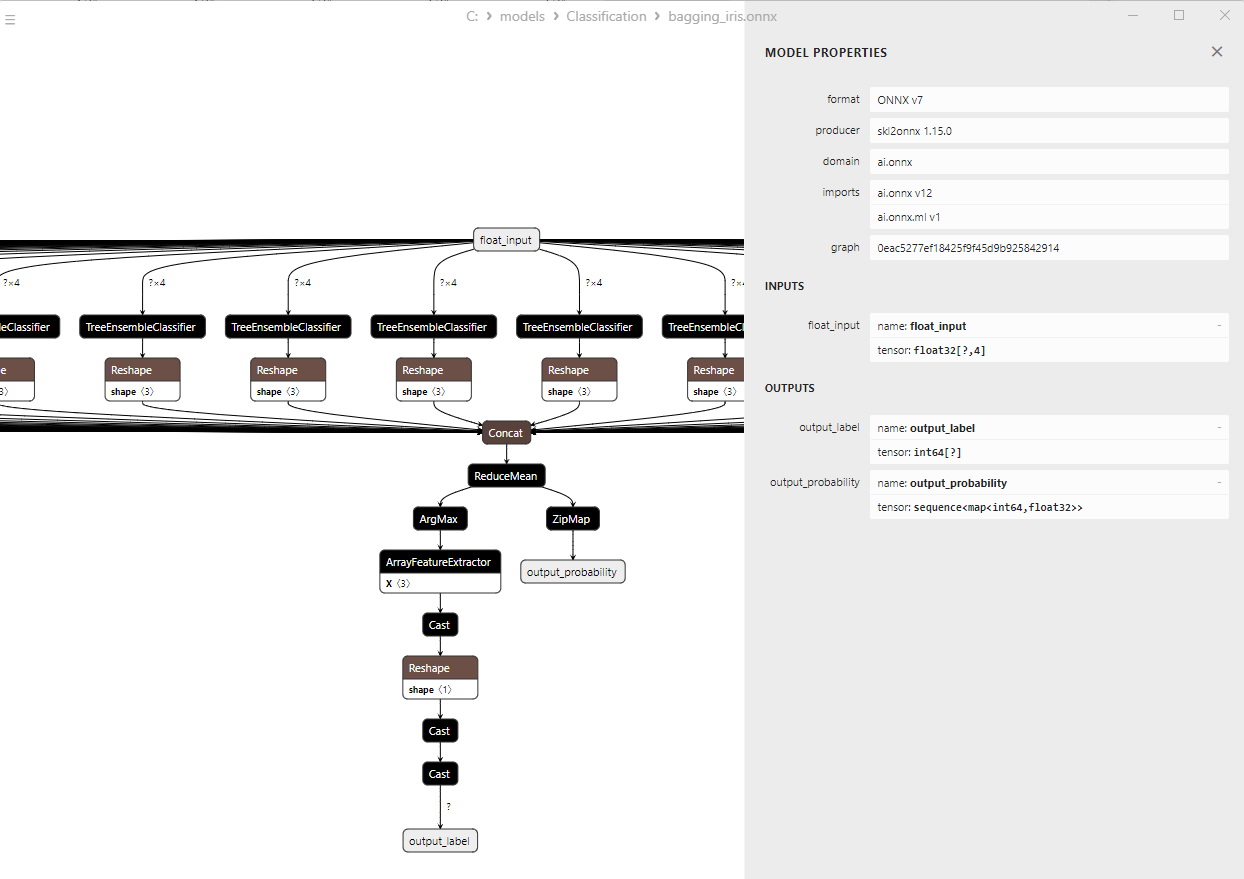

2.9.3. ONNX Representation of the Adaptive Boosting Classifier Model

- 2.10. Bootstrap Aggregating Classifier

2.10.1. Code for Creating the Bootstrap Aggregating Classifier Model

2.10.2. MQL5 Code for Working with the Bootstrap Aggregating Classifier Model

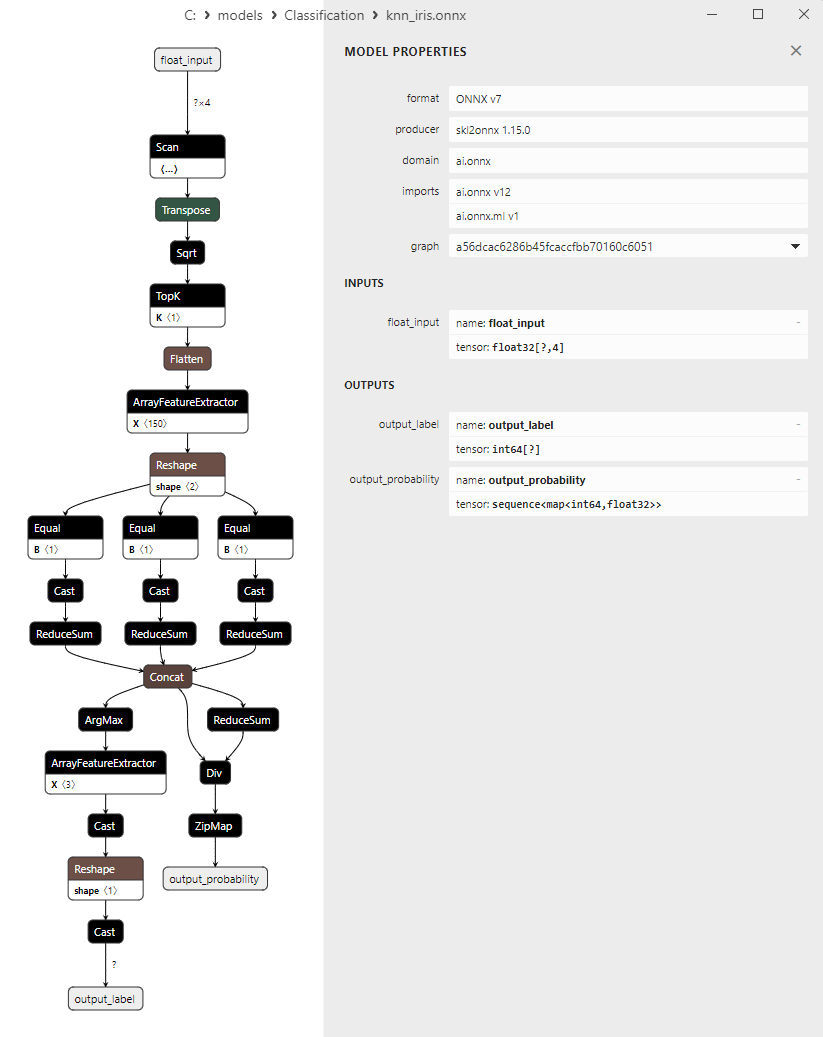

2.10.3. ONNX Representation of the Bootstrap Aggregating Classifier Model

- 2.11. K-Nearest Neighbors (K-NN) Classifier

2.11.1. Code for Creating the K-Nearest Neighbors (K-NN) Classifier Model

2.11.2. MQL5 Code for Working with the K-Nearest Neighbors (K-NN) Classifier Model

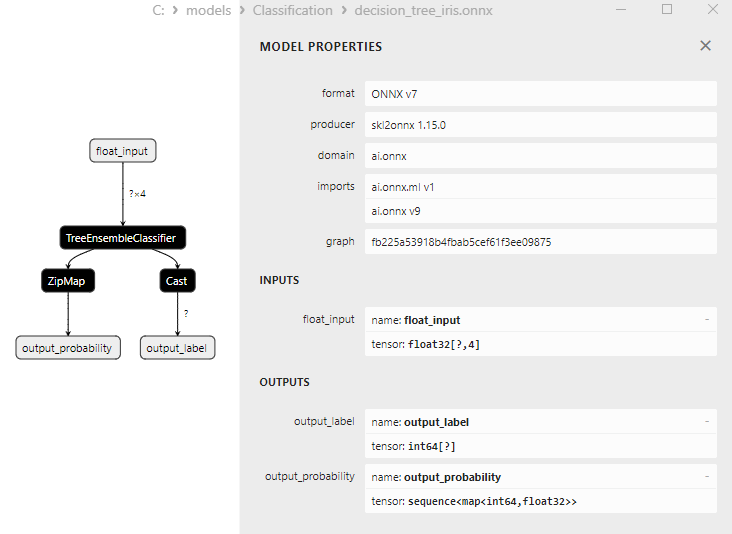

2.11.3. ONNX Representation of the K-Nearest Neighbors (K-NN) Classifier Model

- 2.12. Decision Tree Classifier

2.12.1. Code for Creating the Decision Tree Classifier Model

2.12.2. MQL5 Code for Working with the Decision Tree Classifier Model

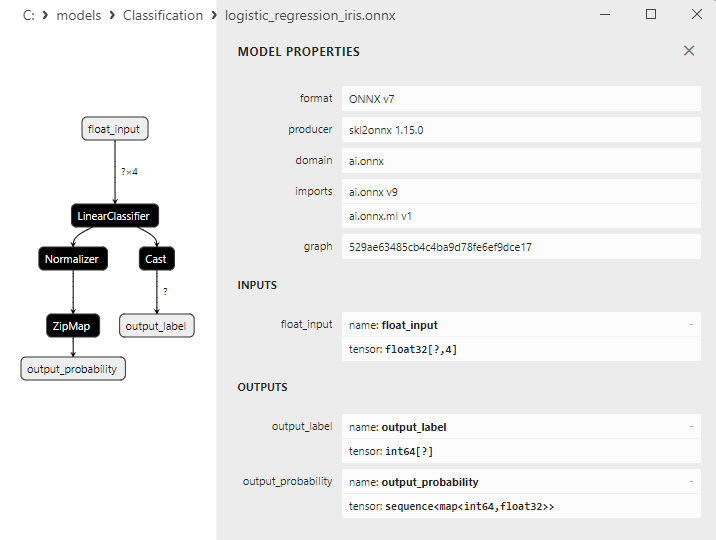

2.12.3. ONNX Representation of the Decision Tree Classifier Model

- 2.13. Logistic Regression Classifier

2.13.1. Code for Creating the Logistic Regression Classifier Model

2.13.2. MQL5 Code for Working with the Logistic Regression Classifier Model

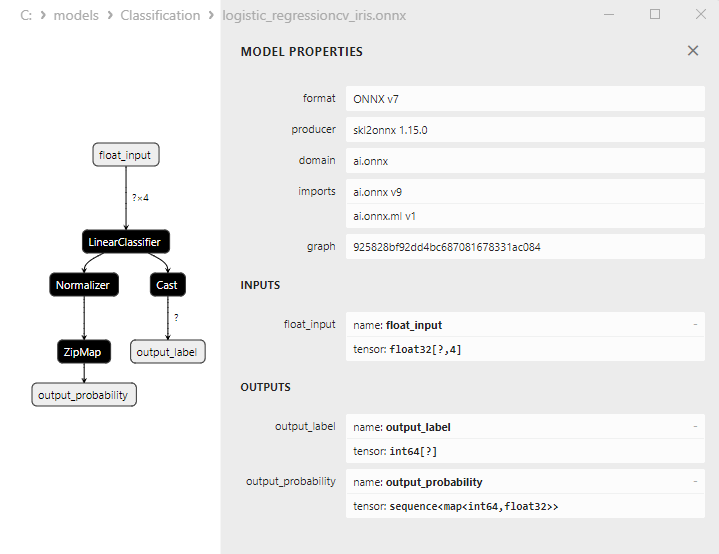

2.13.3. ONNX Representation of the Logistic Regression Classifier Model

- 2.14. LogisticRegressionCV Classifier

2.14.1. Code for Creating the LogisticRegressionCV Classifier Model

2.14.2. MQL5 Code for Working with the LogisticRegressionCV Classifier Model

2.14.3. ONNX Representation of the LogisticRegressionCV Classifier Model

- 2.15. Passive-Aggressive (PA) Classifier

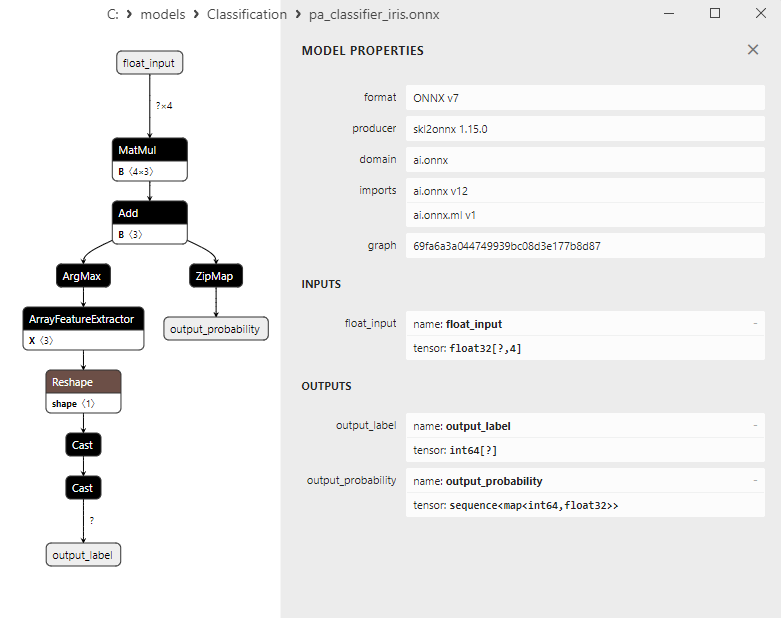

2.15.1. Code for Creating the Passive-Aggressive (PA) Classifier Model

2.15.2. MQL5 Code for Working with the Passive-Aggressive (PA) Classifier Model

2.15.3. ONNX Representation of the Passive-Aggressive (PA) Classifier Model

- 2.16. Perceptron Classifier

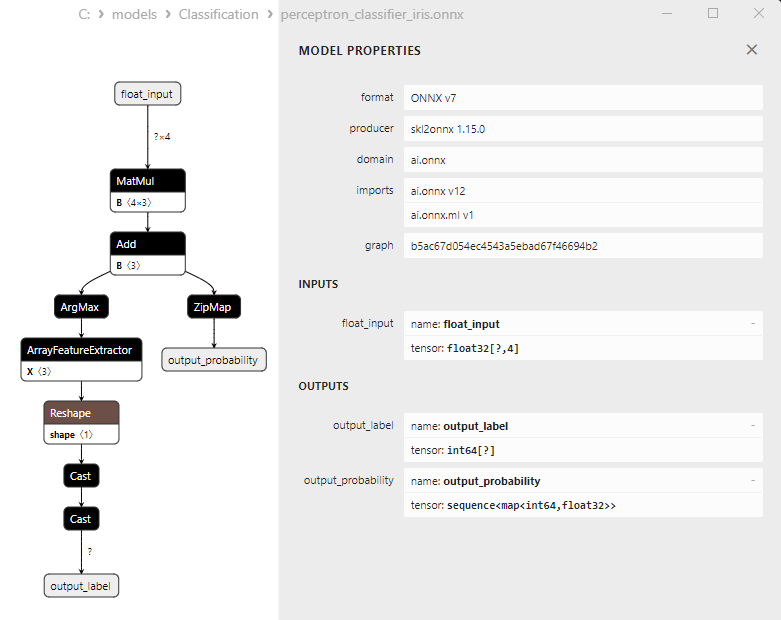

2.16.1. Code for Creating the Perceptron Classifier Model

2.16.2. MQL5 Code for Working with the Perceptron Classifier Model

2.16.3. ONNX Representation of the Perceptron Classifier Model

- 2.17. Stochastic Gradient Descent Classifier

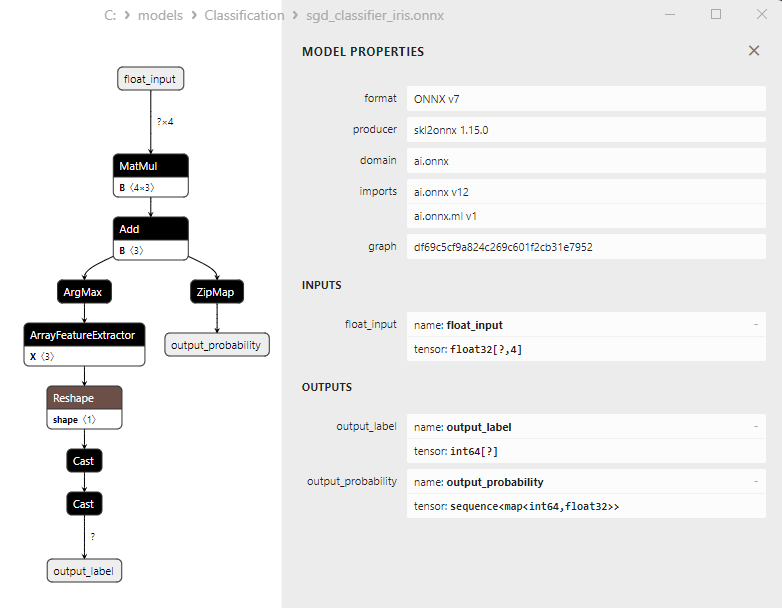

2.17.1. Code for Creating the Stochastic Gradient Descent Classifier Model

2.17.2. MQL5 Code for Working with the Stochastic Gradient Descent Classifier Model

2.17.3. ONNX Representation of the Stochastic Gradient Descent Classifier Model

- 2.18. Gaussian Naive Bayes (GNB) Classifier

2.18.1. Code for Creating the Gaussian Naive Bayes (GNB) Classifier Model

2.18.2. MQL5 Code for Working with the Gaussian Naive Bayes (GNB) Classifier Model

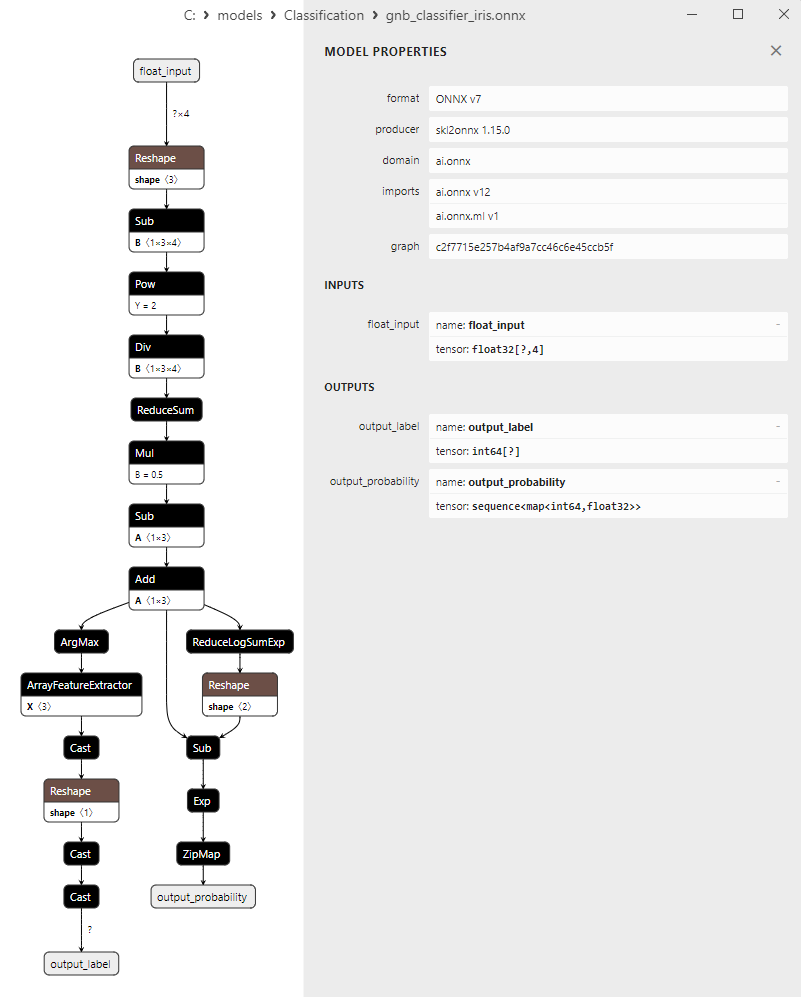

2.18.3. ONNX Representation of the Gaussian Naive Bayes (GNB) Classifier Model

- 2.19. Multinomial Naive Bayes (MNB) Classifier

2.19.1. Code for Creating the Multinomial Naive Bayes (MNB) Classifier Model

2.19.2. MQL5 Code for Working with the Multinomial Naive Bayes (MNB) Classifier Model

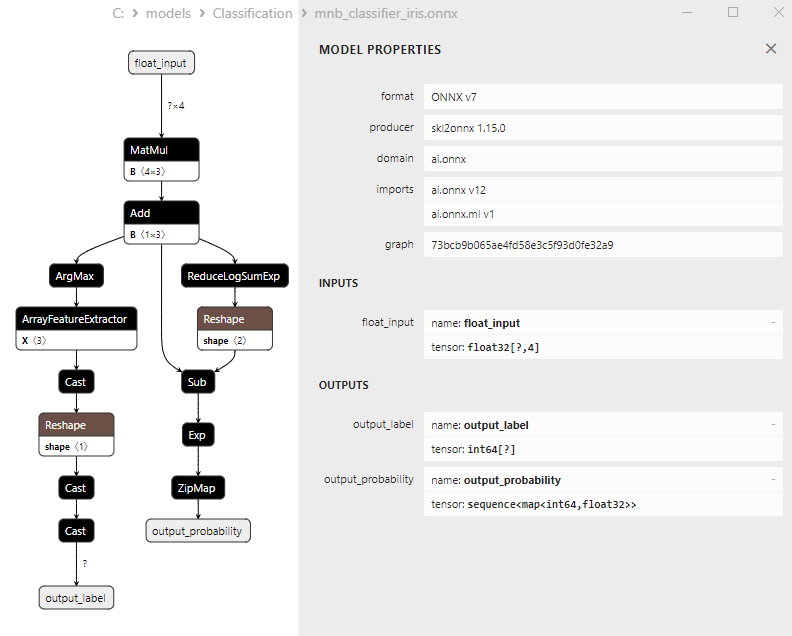

2.19.3. ONNX Representation of the Multinomial Naive Bayes (MNB) Classifier Model

- 2.20. Complement Naive Bayes (CNB) Classifier

2.20.1. Code for Creating the Complement Naive Bayes (CNB) Classifier Model

2.20.2. MQL5 Code for Working with the Complement Naive Bayes (CNB) Classifier Model

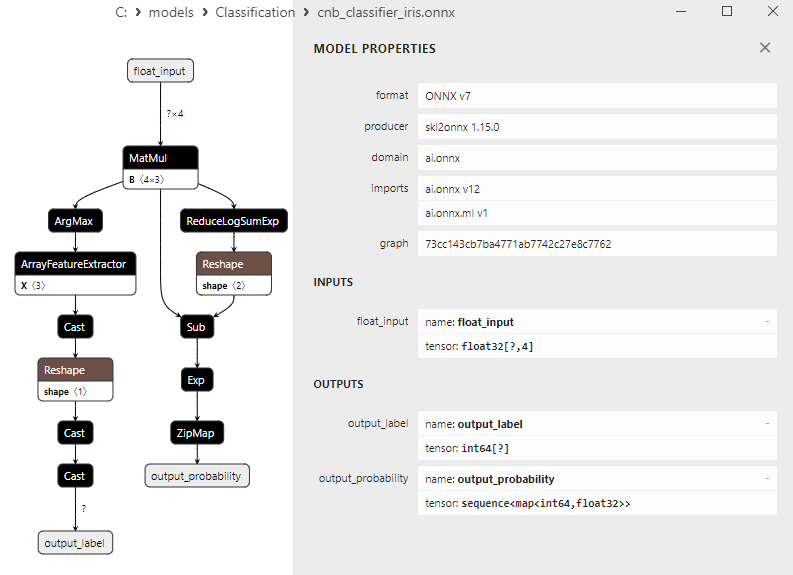

2.20.3. ONNX Representation of the Complement Naive Bayes (CNB) Classifier Model

- 2.21. Bernoulli Naive Bayes (BNB) Classifier

2.21.1. Code for Creating the Bernoulli Naive Bayes (BNB) Classifier Model

2.21.2. MQL5 Code for Working with the Bernoulli Naive Bayes (BNB) Classifier Model

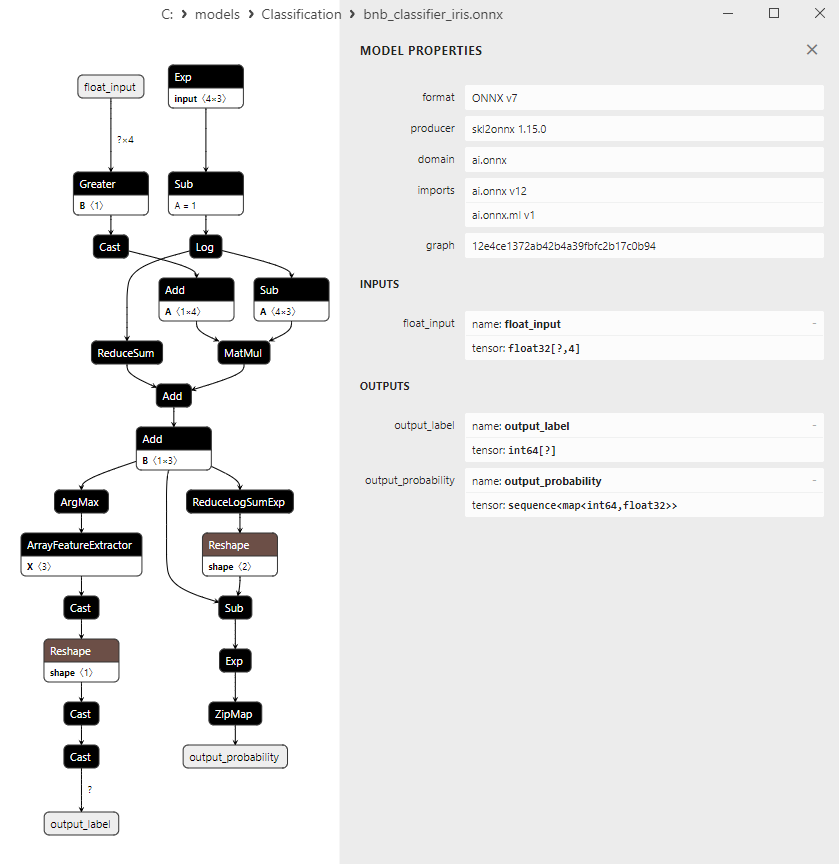

2.21.3. ONNX Representation of the Bernoulli Naive Bayes (BNB) Classifier Model

- 2.22. Multilayer Perceptron Classifier

2.22.1. Code for Creating the Multilayer Perceptron Classifier Model

2.22.2. MQL5 Code for Working with the Multilayer Perceptron Classifier Model

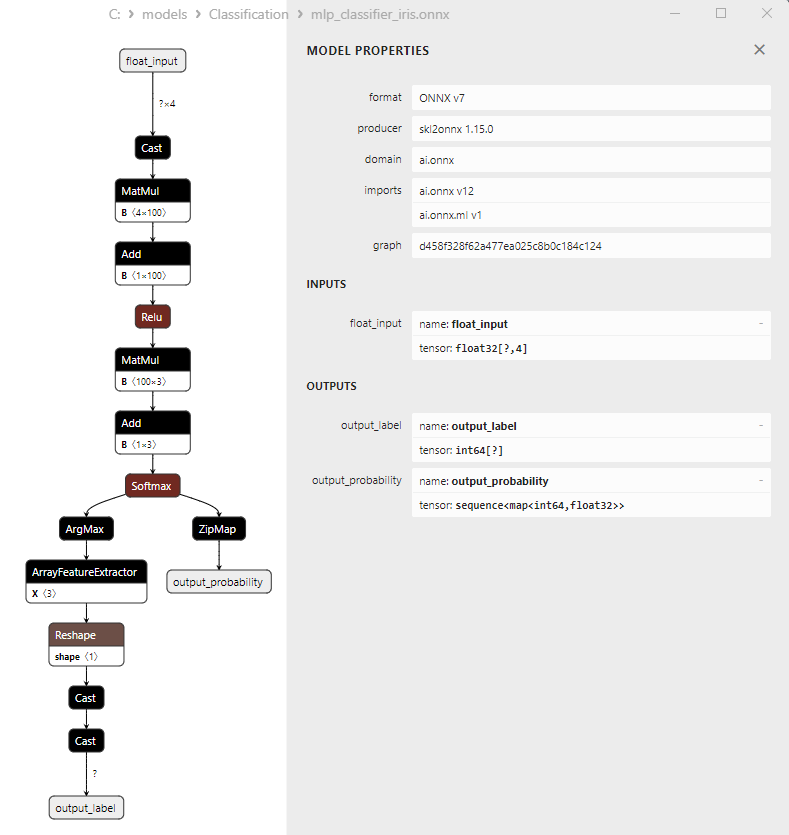

2.22.3. ONNX Representation of the Multilayer Perceptron Classifier Model

- 2.23. Linear Discriminant Analysis (LDA) Classifier

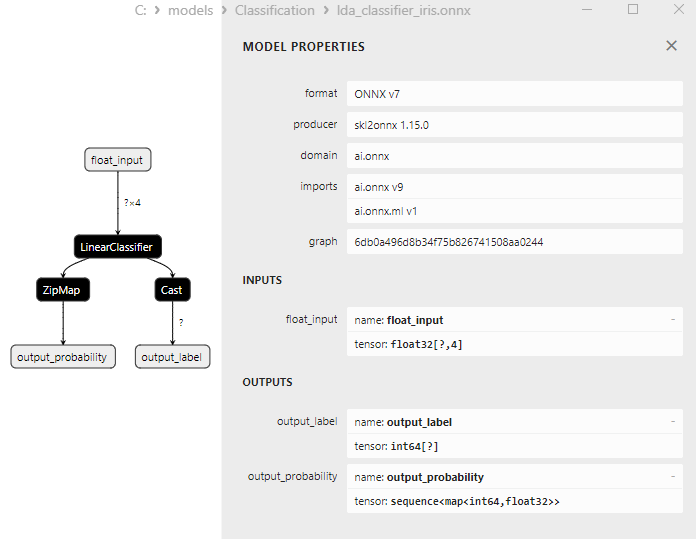

2.23.1. Code for Creating the Linear Discriminant Analysis (LDA) Classifier Model

2.23.2. MQL5 Code for Working with the Linear Discriminant Analysis (LDA) Classifier Model

2.23.3. ONNX Representation of the Linear Discriminant Analysis (LDA) Classifier Model

- 2.24. Hist Gradient Boosting

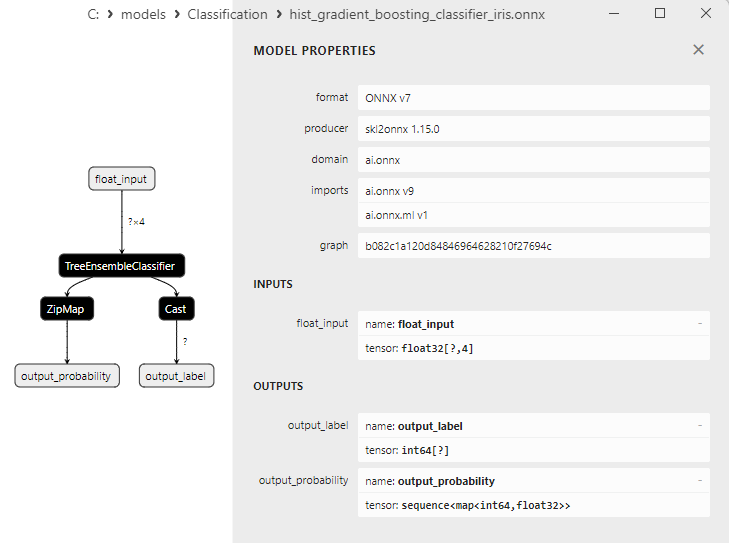

2.24.1. Code for Creating the Histogram-Based Gradient Boosting Classifier Model

2.24.2. MQL5 Code for Working with the Histogram-Based Gradient Boosting Classifier Model

2.24.3. ONNX Representation of the Histogram-Based Gradient Boosting Classifier Model

- 2.25. CategoricalNB Classifier

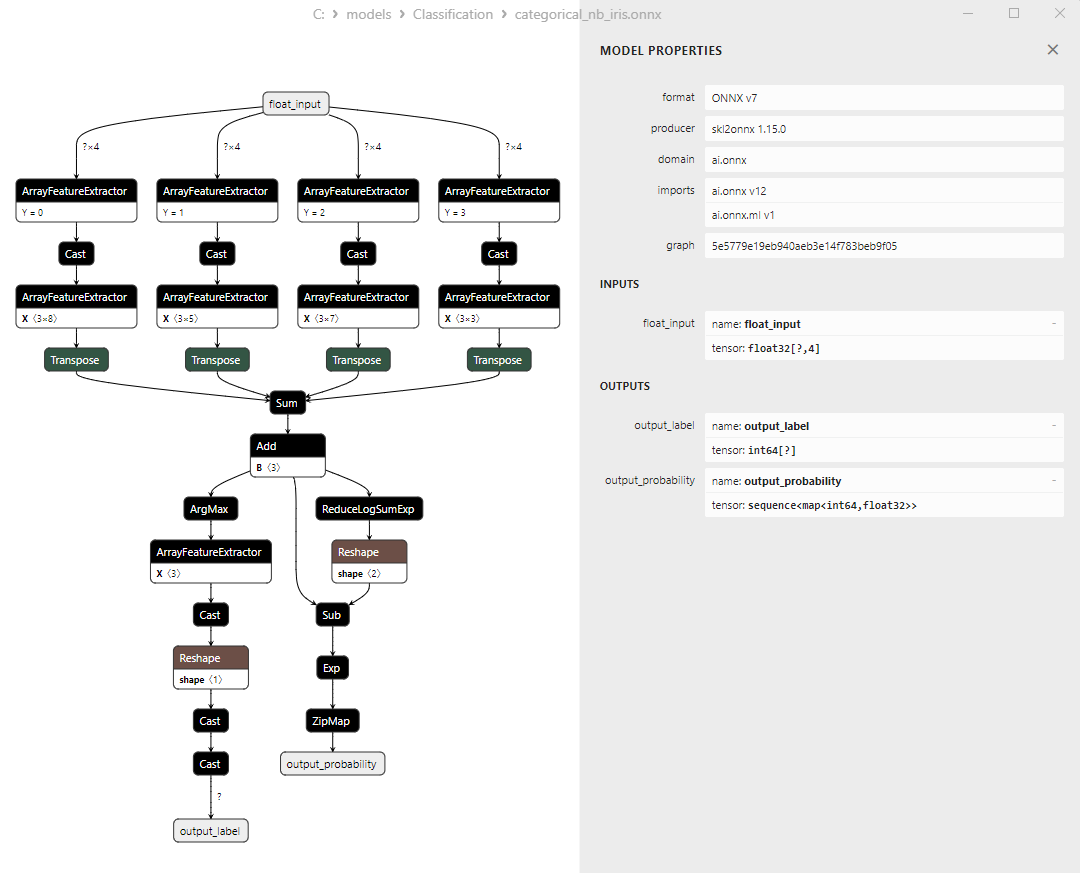

2.25.1. Code for Creating the CategoricalNB Classifier Model

2.25.2. MQL5 Code for Working with the CategoricalNB Classifier Model

2.25.3. ONNX Representation of the CategoricalNB Classifier Model

- 2.26. ExtraTreeClassifier

2.26.1. Code for Creating the ExtraTreeClassifier Model

2.26.2. MQL5 Code for Working with the ExtraTreeClassifier Model

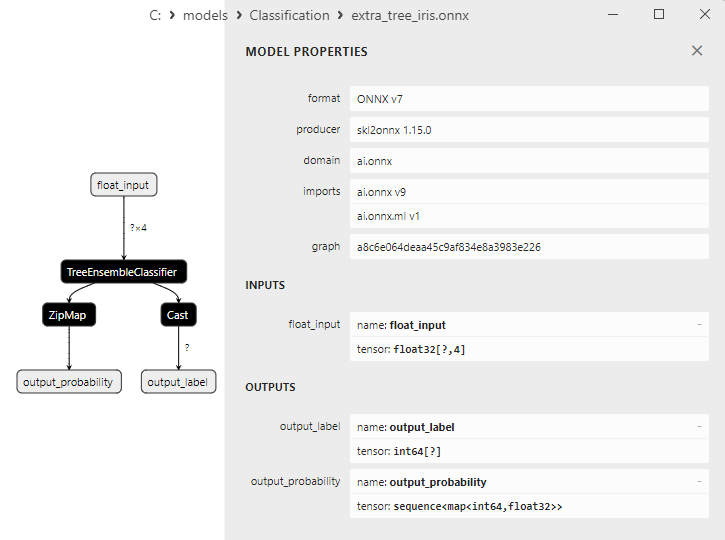

2.26.3. ONNX Representation of the ExtraTreeClassifier Model

- 2.27. ExtraTreesClassifier

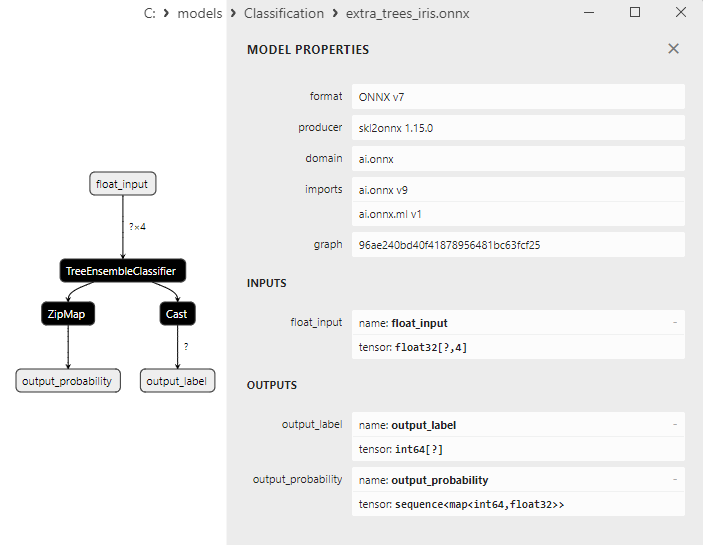

2.27.1. Code for Creating the ExtraTreesClassifier Model

2.27.2. MQL5 Code for Working with the ExtraTreesClassifier Model

2.27.3. ONNX Representation of the ExtraTreesClassifier Model

- 2.28. Comparing the Accuracy of All Models

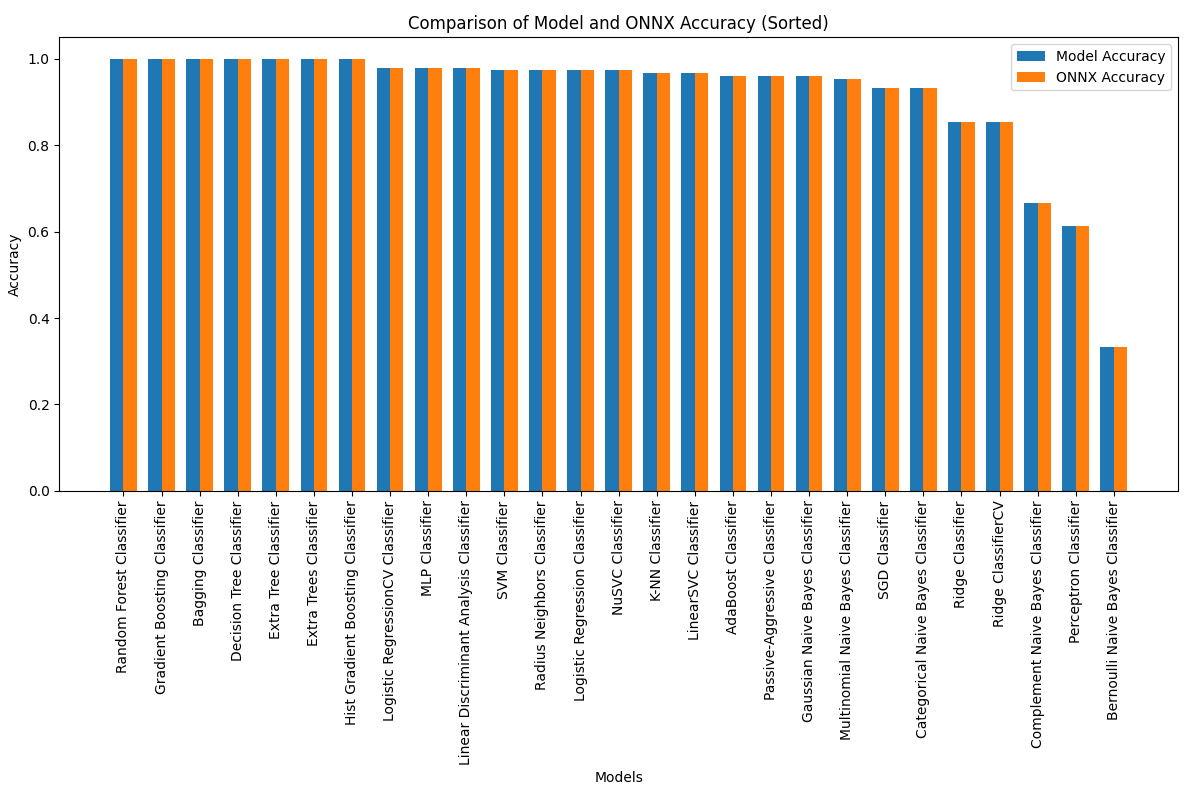

2.28.1. Code for Calculating All Models and Building an Accuracy Comparison Chart

2.28.2. MQL5 Code for Executing All ONNX Models

- 2.29. Scikit-Learn Classification Models That Could Not Be Converted to ONNX

- 2.29.1. DummyClassifier

2.29.1.1. Code for Creating the DummyClassifier Model

- 2.29.2. GaussianProcessClassifier

2.29.2.1. Code for Creating the GaussianProcessClassifier Model

- 2.29.3. LabelPropagation Classifier

2.29.3.1. Code for Creating the LabelPropagationClassifier Model

- 2.29.4. LabelSpreading Classifier

2.29.4.1. Code for Creating the LabelSpreadingClassifier Model

- 2.29.5. NearestCentroid Classifier

2.29.5.1. Code for Creating the NearestCentroid Model

- 2.29.6. Quadratic Discriminant Analysis Classifier

2.29.6.1. Code for Creating the Quadratic Discriminant Analysis Model

- Conclusions

1. Fisher's Irises

The Iris dataset is one of the most well-known and widely used datasets in the field of machine learning. It was first introduced in 1936 by the statistician and biologist R.A. Fisher and has since become a classic dataset for classification tasks.

The Iris dataset consists of measurements of sepals and petals of three species of irises - Iris setosa, Iris virginica, and Iris versicolor.

Figure 1. Iris setosa

Figure 2. Iris virginica

Figure 3. Iris versicolor

The Iris dataset comprises 150 instances of irises, with 50 instances of each of the three species. Each instance has four numerical features (measured in centimeters):

- Sepal length

- Sepal width

- Petal length

- Petal width

Each instance also has a corresponding class indicating the iris species (Iris setosa, Iris virginica, or Iris versicolor). This classification attribute makes the Iris dataset an ideal dataset for machine learning tasks such as classification and clustering.

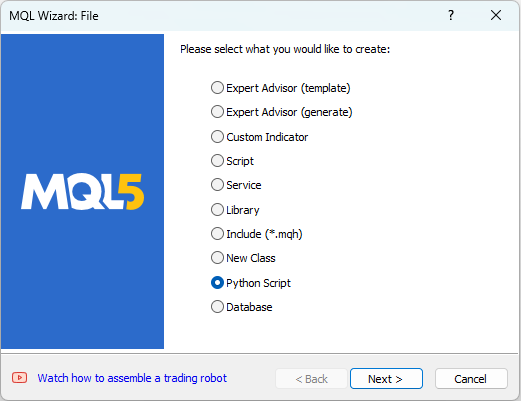

MetaEditor allows working with Python scripts. To create a Python script, select "New" from the "File" menu in MetaEditor, and a dialog for choosing the object to be created will appear (see Figure 4).

Figure 4. Creating a Python script in MQL5 Wizard - Step 1

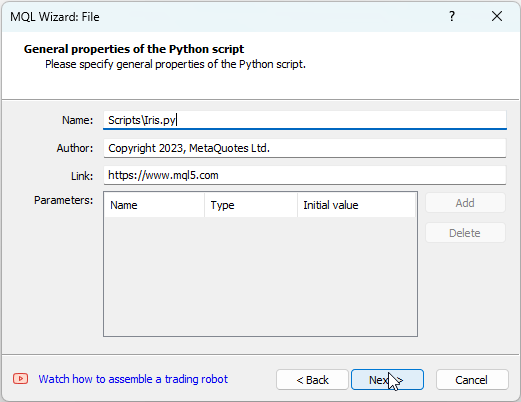

Next, provide a name for the script, for example, "IRIS.py" (see Figure 5).

Figure 5. Creating a Python script in MQL5 Wizard - Step 2 - Script Name

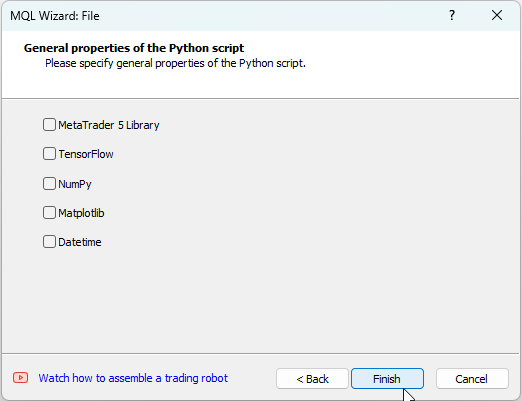

After that, you can specify which libraries will be used. In our case, we will leave these fields empty (see Figure 6).

Figure 6: Creating a Python script in MQL5 Wizard - Step 3

One way to start analyzing the Iris dataset is by visualizing the data. A graphical representation allows us to better understand the data's structure and relationships between features.

For example, you can create a scatter plot to see how different species of irises are distributed in the feature space.

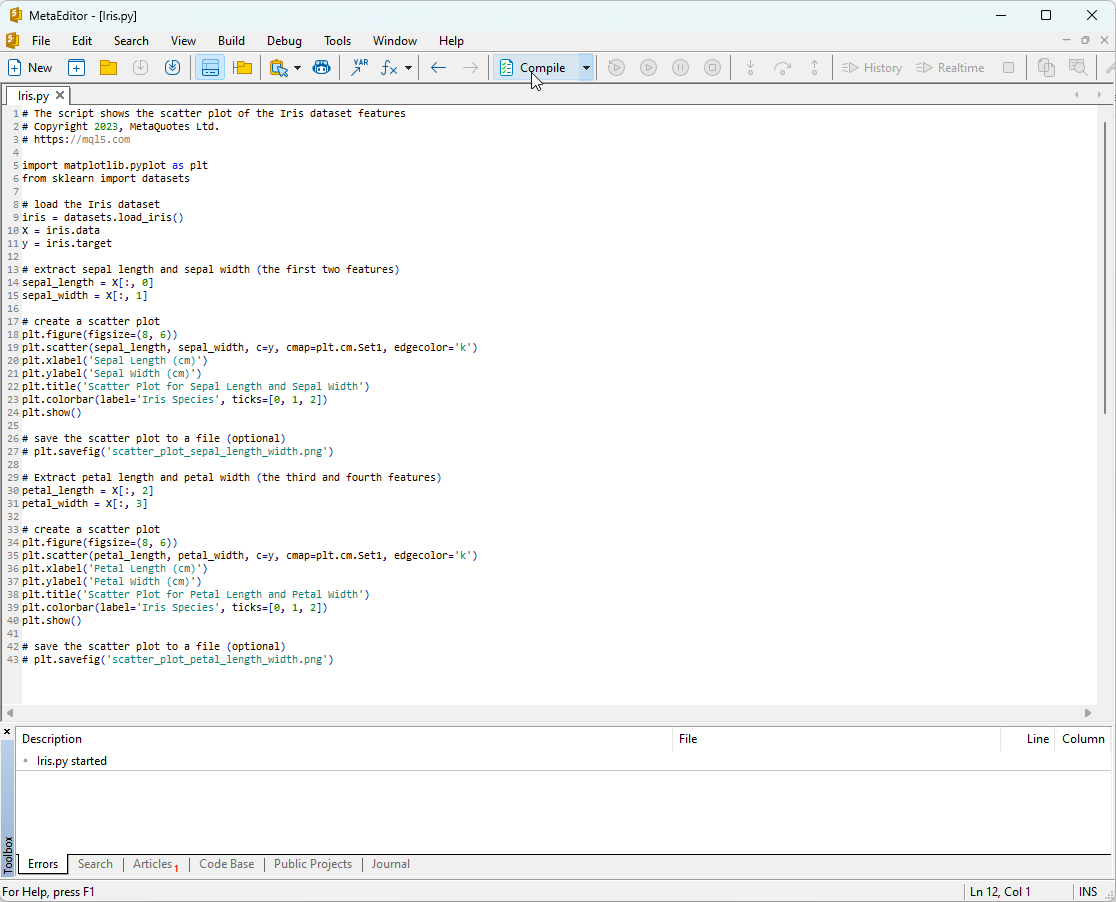

Python script code:

```python
# The script shows the scatter plot of the Iris dataset features
# Copyright 2023, MetaQuotes Ltd.
# https://mql5.com

import matplotlib.pyplot as plt
from sklearn import datasets

# load the Iris dataset
iris = datasets.load_iris()
X = iris.data
y = iris.target

# extract sepal length and sepal width (the first two features)
sepal_length = X[:, 0]
sepal_width = X[:, 1]

# create a scatter plot
plt.figure(figsize=(8, 6))
plt.scatter(sepal_length, sepal_width, c=y, cmap=plt.cm.Set1, edgecolor='k')
plt.xlabel('Sepal Length (cm)')
plt.ylabel('Sepal Width (cm)')
plt.title('Scatter Plot for Sepal Length and Sepal Width')
plt.colorbar(label='Iris Species', ticks=[0, 1, 2])
plt.show()

# save the scatter plot to a file (optional)
# plt.savefig('scatter_plot_sepal_length_width.png')

# Extract petal length and petal width (the third and fourth features)
petal_length = X[:, 2]
petal_width = X[:, 3]

# create a scatter plot
plt.figure(figsize=(8, 6))
plt.scatter(petal_length, petal_width, c=y, cmap=plt.cm.Set1, edgecolor='k')
plt.xlabel('Petal Length (cm)')
plt.ylabel('Petal Width (cm)')
plt.title('Scatter Plot for Petal Length and Petal Width')
plt.colorbar(label='Iris Species', ticks=[0, 1, 2])
plt.show()

# save the scatter plot to a file (optional)
# plt.savefig('scatter_plot_petal_length_width.png')
```

To run this script, you need to copy it into MetaEditor (see Figure 7) and click "Compile."

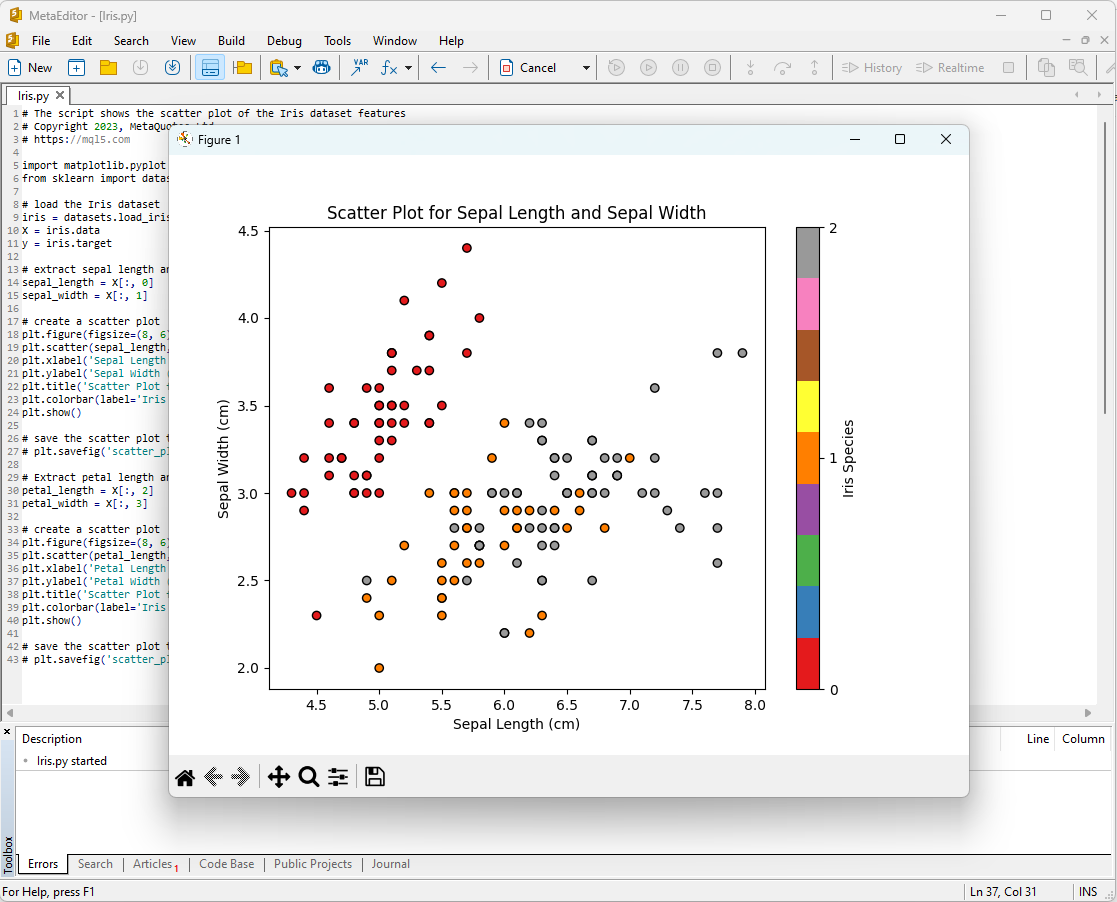

Figure 7: IRIS.py script in MetaEditor

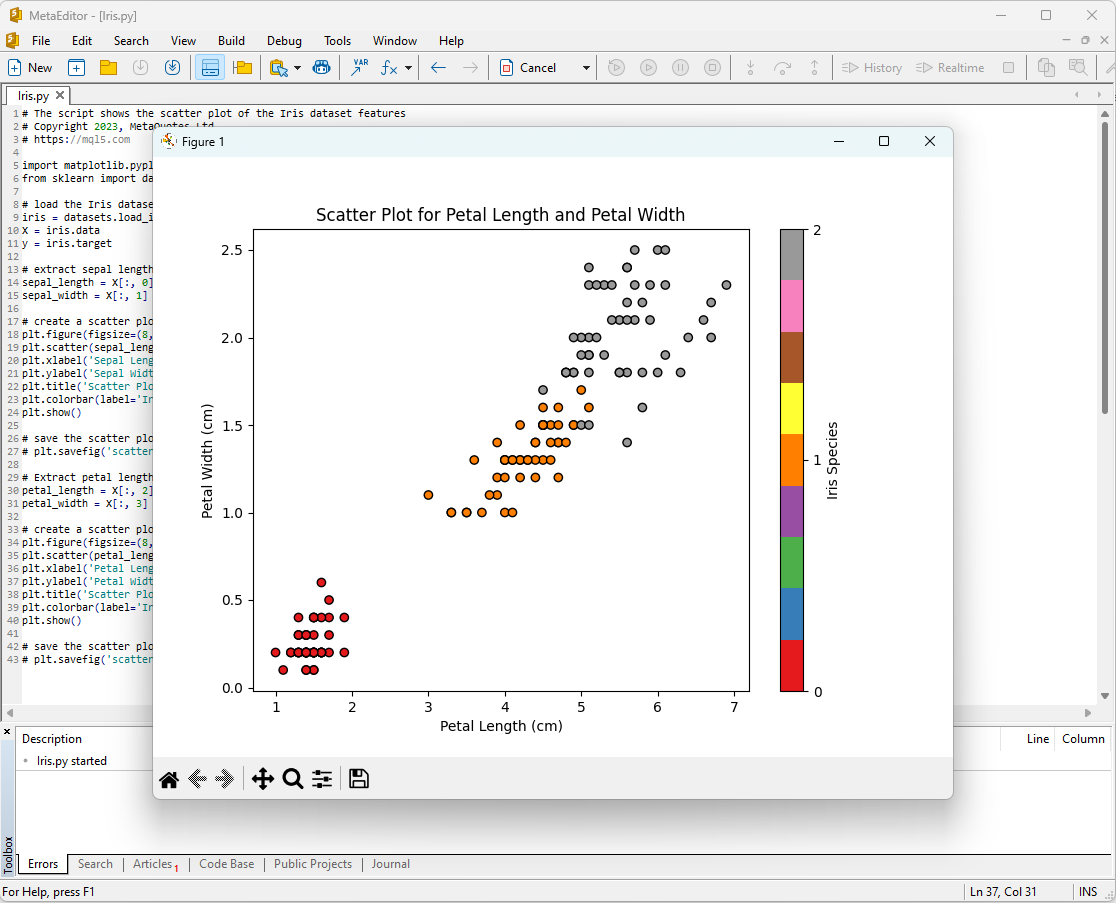

After that, the plots will appear on the screen:

Figure 8: IRIS.py script in MetaEditor with Sepal Length/Sepal Width plot

Figure 9: IRIS.py script in MetaEditor with Petal Length/Petal Width plot

Let's take a closer look at them.

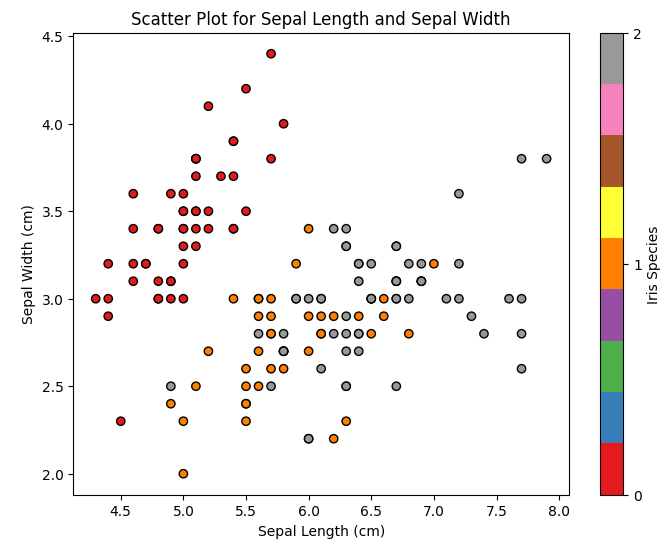

Figure 10: Scatter Plot Sepal Length vs Sepal Width

In this plot, we can see how different iris species are distributed based on sepal length and sepal width. We can observe that Iris setosa typically has shorter and wider sepals compared to the other two species.

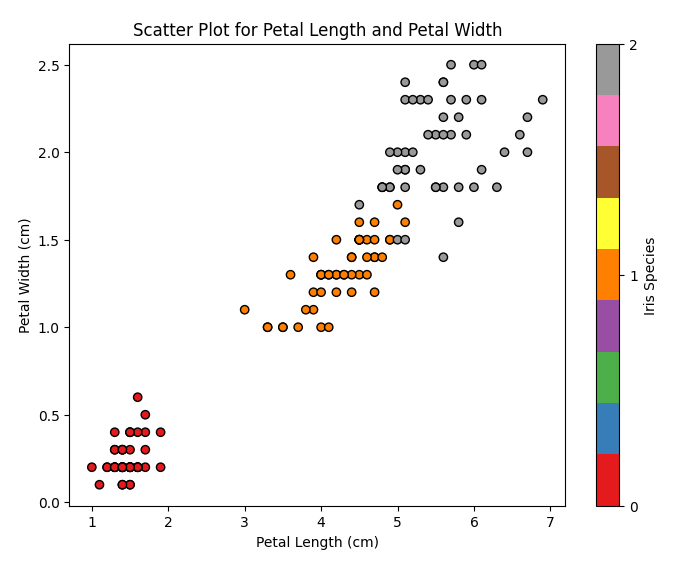

Figure 11: Scatter Plot Petal Length vs Petal Width

In this plot, we can see how different iris species are distributed based on petal length and petal width. We can notice that Iris setosa has the shortest and narrowest petals, Iris virginica has the longest and widest petals, and Iris versicolor falls in between.
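The petal observation can also be checked numerically. The following is a minimal sketch that uses only a few rows per species, copied from the dataset listing in iris.mqh further down in this article, rather than all 150 samples. The helper name `mean_petal` is illustrative and not part of the article's scripts.

```python
# A few (petal_length, petal_width) rows per species, copied from the
# dataset listing in iris.mqh (samples 1-5, 51-55 and 101-105).
samples = {
    "Iris-setosa":     [(1.4, 0.2), (1.4, 0.2), (1.3, 0.2), (1.5, 0.2), (1.4, 0.2)],
    "Iris-versicolor": [(4.7, 1.4), (4.5, 1.5), (4.9, 1.5), (4.0, 1.3), (4.6, 1.5)],
    "Iris-virginica":  [(6.0, 2.5), (5.1, 1.9), (5.9, 2.1), (5.6, 1.8), (5.8, 2.2)],
}

def mean_petal(rows):
    """Return (mean petal length, mean petal width) for a list of rows."""
    n = len(rows)
    return (sum(r[0] for r in rows) / n, sum(r[1] for r in rows) / n)

means = {species: mean_petal(rows) for species, rows in samples.items()}
for species, (length, width) in means.items():
    print(f"{species:16s} petal length {length:.2f} cm, width {width:.2f} cm")
```

On this small subset the mean petal length and width increase from Iris setosa to Iris versicolor to Iris virginica, which matches the ordering visible in the plot.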

The Iris dataset is an ideal dataset for training and testing machine learning models. We will use it to analyze the effectiveness of machine learning models for a classification task.

2. Models for Classification

Classification is one of the fundamental tasks in machine learning, and its goal is to categorize data into different categories or classes based on certain features.

Let's explore the main machine learning models in the scikit-learn package.

List of Scikit-learn classifiers

To display a list of available classifiers in scikit-learn, you can use the following script:

```python
# ScikitLearnClassifiers.py
# The script lists all the classification algorithms available in scikit-learn
# Copyright 2023, MetaQuotes Ltd.
# https://mql5.com

# print Python version
from platform import python_version
print("The Python version is ", python_version())

# print scikit-learn version
import sklearn
print('The scikit-learn version is {}.'.format(sklearn.__version__))

# print scikit-learn classifiers
from sklearn.utils import all_estimators

classifiers = all_estimators(type_filter='classifier')
for index, (name, ClassifierClass) in enumerate(classifiers, start=1):
    print(f"Classifier {index}: {name}")
```

Output:

Python The scikit-learn version is 1.2.2.

Python Classifier 1: AdaBoostClassifier

Python Classifier 2: BaggingClassifier

Python Classifier 3: BernoulliNB

Python Classifier 4: CalibratedClassifierCV

Python Classifier 5: CategoricalNB

Python Classifier 6: ClassifierChain

Python Classifier 7: ComplementNB

Python Classifier 8: DecisionTreeClassifier

Python Classifier 9: DummyClassifier

Python Classifier 10: ExtraTreeClassifier

Python Classifier 11: ExtraTreesClassifier

Python Classifier 12: GaussianNB

Python Classifier 13: GaussianProcessClassifier

Python Classifier 14: GradientBoostingClassifier

Python Classifier 15: HistGradientBoostingClassifier

Python Classifier 16: KNeighborsClassifier

Python Classifier 17: LabelPropagation

Python Classifier 18: LabelSpreading

Python Classifier 19: LinearDiscriminantAnalysis

Python Classifier 20: LinearSVC

Python Classifier 21: LogisticRegression

Python Classifier 22: LogisticRegressionCV

Python Classifier 23: MLPClassifier

Python Classifier 24: MultiOutputClassifier

Python Classifier 25: MultinomialNB

Python Classifier 26: NearestCentroid

Python Classifier 27: NuSVC

Python Classifier 28: OneVsOneClassifier

Python Classifier 29: OneVsRestClassifier

Python Classifier 30: OutputCodeClassifier

Python Classifier 31: PassiveAggressiveClassifier

Python Classifier 32: Perceptron

Python Classifier 33: QuadraticDiscriminantAnalysis

Python Classifier 34: RadiusNeighborsClassifier

Python Classifier 35: RandomForestClassifier

Python Classifier 36: RidgeClassifier

Python Classifier 37: RidgeClassifierCV

Python Classifier 38: SGDClassifier

Python Classifier 39: SVC

Python Classifier 40: StackingClassifier

Python Classifier 41: VotingClassifier

For convenience in this list of classifiers, they are highlighted with different colors. Models that require base classifiers are highlighted in yellow, while other models can be used independently.

Looking ahead, it's worth noting that green-colored models have been successfully exported to the ONNX format, while red-colored models encounter errors during conversion in the current version of scikit-learn 1.2.2.

Different representation of output data in models

It should be noted that different models represent output data differently, so when working with models converted to ONNX, one should be attentive.

For Fisher's Iris classification task, the input tensors have the same format for all these models:

1. Name: float_input, Data Type: tensor(float), Shape: [None, 4]

The output tensors of ONNX models differ.

1. Models that do not require post-processing:

- SVC Classifier;

- LinearSVC Classifier;

- NuSVC Classifier;

- Radius Neighbors Classifier;

- Ridge Classifier;

- Ridge Classifier CV.

1. Name: label, Data Type: tensor(int64), Shape: [None]

2. Name: probabilities, Data Type: tensor(float), Shape: [None, 3]

These models return the result (class number) explicitly in the first output integer tensor "label," without requiring post-processing.

2. Models whose results require post-processing:

- Random Forest Classifier;

- Gradient Boosting Classifier;

- AdaBoost Classifier;

- Bagging Classifier;

- K-NN Classifier;

- Decision Tree Classifier;

- Logistic Regression Classifier;

- Logistic Regression CV Classifier;

- Passive-Aggressive Classifier;

- Perceptron Classifier;

- SGD Classifier;

- Gaussian Naive Bayes Classifier;

- Multinomial Naive Bayes Classifier;

- Complement Naive Bayes Classifier;

- Bernoulli Naive Bayes Classifier;

- Multilayer Perceptron Classifier;

- Linear Discriminant Analysis Classifier;

- Hist Gradient Boosting Classifier;

- Categorical Naive Bayes Classifier;

- ExtraTree Classifier;

- ExtraTrees Classifier.

1. Name: output_label, Data Type: tensor(int64), Shape: [None]

2. Name: output_probability, Data Type: seq(map(int64,tensor(float))), Shape: []

These models return a list of classes and probabilities of belonging to each class.

To obtain the result in these cases, the output_probability value of type seq(map(int64, tensor(float))) must be post-processed: the predicted class is the map element with the highest probability.

Therefore, it is essential to be attentive and consider these aspects when working with ONNX models. An example of the different ways of processing results is presented in the script in Section 2.28.2.
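The difference between the two output types can be sketched in a few lines of Python. This is an illustration only: the values below are plain Python stand-ins (an `int` for a ready-made label and a `dict` for one map of class probabilities), not values returned by an actual ONNX runtime, and the function name `predicted_class` is hypothetical.

```python
def predicted_class(output):
    """Return the class id from either kind of model output.

    - an int is treated as a ready-made label (first group of models);
    - a dict {class_id: probability} is reduced to the class with the
      highest probability (second group of models).
    """
    if isinstance(output, int):
        return output
    return max(output, key=output.get)

# Hypothetical values, shaped like one sample of each output type.
label_output = 2
probability_output = {0: 0.02, 1: 0.91, 2: 0.07}

print(predicted_class(label_output))        # 2
print(predicted_class(probability_output))  # 1
```

The same idea applies in MQL5: for the second group, the program has to walk over the class/probability pairs and keep the class with the largest probability.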

iris.mqh

To test models on the full Iris dataset in MQL5, data preparation is required. For this purpose, the function PrepareIrisDataset() will be used.

It's convenient to move these functions to the iris.mqh file.

```cpp
//+------------------------------------------------------------------+
//| Iris.mqh                                                         |
//| Copyright 2023, MetaQuotes Ltd.                                  |
//| https://www.mql5.com                                             |
//+------------------------------------------------------------------+
#property copyright "Copyright 2023, MetaQuotes Ltd."
#property link      "https://www.mql5.com"
//+------------------------------------------------------------------+
//| Structure for the IRIS Dataset sample                            |
//+------------------------------------------------------------------+
struct sIRISsample
  {
   int               sample_id;   // sample id (1-150)
   double            features[4]; // SepalLengthCm,SepalWidthCm,PetalLengthCm,PetalWidthCm
   string            class_name;  // class ("Iris-setosa","Iris-versicolor","Iris-virginica")
   int               class_id;    // class id (0,1,2), calculated by function IRISClassID
  };

//--- Iris dataset
sIRISsample ExtIRISDataset[];
int Exttotal=0;

//+------------------------------------------------------------------+
//| Returns class id by class name                                   |
//+------------------------------------------------------------------+
int IRISClassID(string class_name)
  {
//---
   if(class_name=="Iris-setosa")
      return(0);
   else
      if(class_name=="Iris-versicolor")
         return(1);
      else
         if(class_name=="Iris-virginica")
            return(2);
//---
   return(-1);
  }

//+------------------------------------------------------------------+
//| AddSample                                                        |
//+------------------------------------------------------------------+
bool AddSample(const int Id,const double SepalLengthCm,const double SepalWidthCm,const double PetalLengthCm,const double PetalWidthCm, const string Species)
  {
//---
   ExtIRISDataset[Exttotal].sample_id=Id;
//---
   ExtIRISDataset[Exttotal].features[0]=SepalLengthCm;
   ExtIRISDataset[Exttotal].features[1]=SepalWidthCm;
   ExtIRISDataset[Exttotal].features[2]=PetalLengthCm;
   ExtIRISDataset[Exttotal].features[3]=PetalWidthCm;
//---
   ExtIRISDataset[Exttotal].class_name=Species;
   ExtIRISDataset[Exttotal].class_id=IRISClassID(Species);
//---
   Exttotal++;
//---
   return(true);
  }

//+------------------------------------------------------------------+
//| Prepare Iris Dataset                                             |
//+------------------------------------------------------------------+
bool PrepareIrisDataset(sIRISsample &iris_samples[])
  {
   ArrayResize(ExtIRISDataset,150);
   Exttotal=0;
//---
   AddSample(1,5.1,3.5,1.4,0.2,"Iris-setosa");
   AddSample(2,4.9,3.0,1.4,0.2,"Iris-setosa");
   AddSample(3,4.7,3.2,1.3,0.2,"Iris-setosa");
   AddSample(4,4.6,3.1,1.5,0.2,"Iris-setosa");
   AddSample(5,5.0,3.6,1.4,0.2,"Iris-setosa");
   AddSample(6,5.4,3.9,1.7,0.4,"Iris-setosa");
   AddSample(7,4.6,3.4,1.4,0.3,"Iris-setosa");
   AddSample(8,5.0,3.4,1.5,0.2,"Iris-setosa");
   AddSample(9,4.4,2.9,1.4,0.2,"Iris-setosa");
   AddSample(10,4.9,3.1,1.5,0.1,"Iris-setosa");
   AddSample(11,5.4,3.7,1.5,0.2,"Iris-setosa");
   AddSample(12,4.8,3.4,1.6,0.2,"Iris-setosa");
   AddSample(13,4.8,3.0,1.4,0.1,"Iris-setosa");
   AddSample(14,4.3,3.0,1.1,0.1,"Iris-setosa");
   AddSample(15,5.8,4.0,1.2,0.2,"Iris-setosa");
   AddSample(16,5.7,4.4,1.5,0.4,"Iris-setosa");
   AddSample(17,5.4,3.9,1.3,0.4,"Iris-setosa");
   AddSample(18,5.1,3.5,1.4,0.3,"Iris-setosa");
   AddSample(19,5.7,3.8,1.7,0.3,"Iris-setosa");
   AddSample(20,5.1,3.8,1.5,0.3,"Iris-setosa");
   AddSample(21,5.4,3.4,1.7,0.2,"Iris-setosa");
   AddSample(22,5.1,3.7,1.5,0.4,"Iris-setosa");
   AddSample(23,4.6,3.6,1.0,0.2,"Iris-setosa");
   AddSample(24,5.1,3.3,1.7,0.5,"Iris-setosa");
   AddSample(25,4.8,3.4,1.9,0.2,"Iris-setosa");
   AddSample(26,5.0,3.0,1.6,0.2,"Iris-setosa");
   AddSample(27,5.0,3.4,1.6,0.4,"Iris-setosa");
   AddSample(28,5.2,3.5,1.5,0.2,"Iris-setosa");
   AddSample(29,5.2,3.4,1.4,0.2,"Iris-setosa");
   AddSample(30,4.7,3.2,1.6,0.2,"Iris-setosa");
   AddSample(31,4.8,3.1,1.6,0.2,"Iris-setosa");
   AddSample(32,5.4,3.4,1.5,0.4,"Iris-setosa");
   AddSample(33,5.2,4.1,1.5,0.1,"Iris-setosa");
   AddSample(34,5.5,4.2,1.4,0.2,"Iris-setosa");
   AddSample(35,4.9,3.1,1.5,0.2,"Iris-setosa");
   AddSample(36,5.0,3.2,1.2,0.2,"Iris-setosa");
   AddSample(37,5.5,3.5,1.3,0.2,"Iris-setosa");
   AddSample(38,4.9,3.6,1.4,0.1,"Iris-setosa");
   AddSample(39,4.4,3.0,1.3,0.2,"Iris-setosa");
   AddSample(40,5.1,3.4,1.5,0.2,"Iris-setosa");
   AddSample(41,5.0,3.5,1.3,0.3,"Iris-setosa");
   AddSample(42,4.5,2.3,1.3,0.3,"Iris-setosa");
   AddSample(43,4.4,3.2,1.3,0.2,"Iris-setosa");
   AddSample(44,5.0,3.5,1.6,0.6,"Iris-setosa");
   AddSample(45,5.1,3.8,1.9,0.4,"Iris-setosa");
   AddSample(46,4.8,3.0,1.4,0.3,"Iris-setosa");
   AddSample(47,5.1,3.8,1.6,0.2,"Iris-setosa");
   AddSample(48,4.6,3.2,1.4,0.2,"Iris-setosa");
   AddSample(49,5.3,3.7,1.5,0.2,"Iris-setosa");
   AddSample(50,5.0,3.3,1.4,0.2,"Iris-setosa");
   AddSample(51,7.0,3.2,4.7,1.4,"Iris-versicolor");
   AddSample(52,6.4,3.2,4.5,1.5,"Iris-versicolor");
   AddSample(53,6.9,3.1,4.9,1.5,"Iris-versicolor");
   AddSample(54,5.5,2.3,4.0,1.3,"Iris-versicolor");
   AddSample(55,6.5,2.8,4.6,1.5,"Iris-versicolor");
   AddSample(56,5.7,2.8,4.5,1.3,"Iris-versicolor");
   AddSample(57,6.3,3.3,4.7,1.6,"Iris-versicolor");
   AddSample(58,4.9,2.4,3.3,1.0,"Iris-versicolor");
   AddSample(59,6.6,2.9,4.6,1.3,"Iris-versicolor");
   AddSample(60,5.2,2.7,3.9,1.4,"Iris-versicolor");
   AddSample(61,5.0,2.0,3.5,1.0,"Iris-versicolor");
   AddSample(62,5.9,3.0,4.2,1.5,"Iris-versicolor");
   AddSample(63,6.0,2.2,4.0,1.0,"Iris-versicolor");
   AddSample(64,6.1,2.9,4.7,1.4,"Iris-versicolor");
   AddSample(65,5.6,2.9,3.6,1.3,"Iris-versicolor");
   AddSample(66,6.7,3.1,4.4,1.4,"Iris-versicolor");
   AddSample(67,5.6,3.0,4.5,1.5,"Iris-versicolor");
   AddSample(68,5.8,2.7,4.1,1.0,"Iris-versicolor");
   AddSample(69,6.2,2.2,4.5,1.5,"Iris-versicolor");
   AddSample(70,5.6,2.5,3.9,1.1,"Iris-versicolor");
   AddSample(71,5.9,3.2,4.8,1.8,"Iris-versicolor");
   AddSample(72,6.1,2.8,4.0,1.3,"Iris-versicolor");
   AddSample(73,6.3,2.5,4.9,1.5,"Iris-versicolor");
   AddSample(74,6.1,2.8,4.7,1.2,"Iris-versicolor");
   AddSample(75,6.4,2.9,4.3,1.3,"Iris-versicolor");
   AddSample(76,6.6,3.0,4.4,1.4,"Iris-versicolor");
   AddSample(77,6.8,2.8,4.8,1.4,"Iris-versicolor");
   AddSample(78,6.7,3.0,5.0,1.7,"Iris-versicolor");
   AddSample(79,6.0,2.9,4.5,1.5,"Iris-versicolor");
   AddSample(80,5.7,2.6,3.5,1.0,"Iris-versicolor");
   AddSample(81,5.5,2.4,3.8,1.1,"Iris-versicolor");
   AddSample(82,5.5,2.4,3.7,1.0,"Iris-versicolor");
   AddSample(83,5.8,2.7,3.9,1.2,"Iris-versicolor");
   AddSample(84,6.0,2.7,5.1,1.6,"Iris-versicolor");
   AddSample(85,5.4,3.0,4.5,1.5,"Iris-versicolor");
   AddSample(86,6.0,3.4,4.5,1.6,"Iris-versicolor");
   AddSample(87,6.7,3.1,4.7,1.5,"Iris-versicolor");
   AddSample(88,6.3,2.3,4.4,1.3,"Iris-versicolor");
   AddSample(89,5.6,3.0,4.1,1.3,"Iris-versicolor");
   AddSample(90,5.5,2.5,4.0,1.3,"Iris-versicolor");
   AddSample(91,5.5,2.6,4.4,1.2,"Iris-versicolor");
   AddSample(92,6.1,3.0,4.6,1.4,"Iris-versicolor");
   AddSample(93,5.8,2.6,4.0,1.2,"Iris-versicolor");
   AddSample(94,5.0,2.3,3.3,1.0,"Iris-versicolor");
   AddSample(95,5.6,2.7,4.2,1.3,"Iris-versicolor");
   AddSample(96,5.7,3.0,4.2,1.2,"Iris-versicolor");
   AddSample(97,5.7,2.9,4.2,1.3,"Iris-versicolor");
   AddSample(98,6.2,2.9,4.3,1.3,"Iris-versicolor");
   AddSample(99,5.1,2.5,3.0,1.1,"Iris-versicolor");
   AddSample(100,5.7,2.8,4.1,1.3,"Iris-versicolor");
   AddSample(101,6.3,3.3,6.0,2.5,"Iris-virginica");
   AddSample(102,5.8,2.7,5.1,1.9,"Iris-virginica");
   AddSample(103,7.1,3.0,5.9,2.1,"Iris-virginica");
   AddSample(104,6.3,2.9,5.6,1.8,"Iris-virginica");
   AddSample(105,6.5,3.0,5.8,2.2,"Iris-virginica");
   AddSample(106,7.6,3.0,6.6,2.1,"Iris-virginica");
   AddSample(107,4.9,2.5,4.5,1.7,"Iris-virginica");
   AddSample(108,7.3,2.9,6.3,1.8,"Iris-virginica");
   AddSample(109,6.7,2.5,5.8,1.8,"Iris-virginica");
   AddSample(110,7.2,3.6,6.1,2.5,"Iris-virginica");
   AddSample(111,6.5,3.2,5.1,2.0,"Iris-virginica");
   AddSample(112,6.4,2.7,5.3,1.9,"Iris-virginica");
   AddSample(113,6.8,3.0,5.5,2.1,"Iris-virginica");
   AddSample(114,5.7,2.5,5.0,2.0,"Iris-virginica");
   AddSample(115,5.8,2.8,5.1,2.4,"Iris-virginica");
   AddSample(116,6.4,3.2,5.3,2.3,"Iris-virginica");
   AddSample(117,6.5,3.0,5.5,1.8,"Iris-virginica");
   AddSample(118,7.7,3.8,6.7,2.2,"Iris-virginica");
   AddSample(119,7.7,2.6,6.9,2.3,"Iris-virginica");
   AddSample(120,6.0,2.2,5.0,1.5,"Iris-virginica");
   AddSample(121,6.9,3.2,5.7,2.3,"Iris-virginica");
   AddSample(122,5.6,2.8,4.9,2.0,"Iris-virginica");
   AddSample(123,7.7,2.8,6.7,2.0,"Iris-virginica");
   AddSample(124,6.3,2.7,4.9,1.8,"Iris-virginica");
   AddSample(125,6.7,3.3,5.7,2.1,"Iris-virginica");
   AddSample(126,7.2,3.2,6.0,1.8,"Iris-virginica");
   AddSample(127,6.2,2.8,4.8,1.8,"Iris-virginica");
   AddSample(128,6.1,3.0,4.9,1.8,"Iris-virginica");
   AddSample(129,6.4,2.8,5.6,2.1,"Iris-virginica");
   AddSample(130,7.2,3.0,5.8,1.6,"Iris-virginica");
   AddSample(131,7.4,2.8,6.1,1.9,"Iris-virginica");
   AddSample(132,7.9,3.8,6.4,2.0,"Iris-virginica");
   AddSample(133,6.4,2.8,5.6,2.2,"Iris-virginica");
   AddSample(134,6.3,2.8,5.1,1.5,"Iris-virginica");
   AddSample(135,6.1,2.6,5.6,1.4,"Iris-virginica");
   AddSample(136,7.7,3.0,6.1,2.3,"Iris-virginica");
   AddSample(137,6.3,3.4,5.6,2.4,"Iris-virginica");
   AddSample(138,6.4,3.1,5.5,1.8,"Iris-virginica");
   AddSample(139,6.0,3.0,4.8,1.8,"Iris-virginica");
   AddSample(140,6.9,3.1,5.4,2.1,"Iris-virginica");
   AddSample(141,6.7,3.1,5.6,2.4,"Iris-virginica");
   AddSample(142,6.9,3.1,5.1,2.3,"Iris-virginica");
   AddSample(143,5.8,2.7,5.1,1.9,"Iris-virginica");
   AddSample(144,6.8,3.2,5.9,2.3,"Iris-virginica");
   AddSample(145,6.7,3.3,5.7,2.5,"Iris-virginica");
   AddSample(146,6.7,3.0,5.2,2.3,"Iris-virginica");
   AddSample(147,6.3,2.5,5.0,1.9,"Iris-virginica");
   AddSample(148,6.5,3.0,5.2,2.0,"Iris-virginica");
   AddSample(149,6.2,3.4,5.4,2.3,"Iris-virginica");
   AddSample(150,5.9,3.0,5.1,1.8,"Iris-virginica");
//---
   ArrayResize(iris_samples,150);
   for(int i=0; i<Exttotal; i++)
     {
      iris_samples[i]=ExtIRISDataset[i];
     }
//---
   return(true);
  }
//+------------------------------------------------------------------+
```
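To show how a dataset prepared in this way is typically used for an accuracy check, here is a minimal Python sketch. It mirrors `IRISClassID()` and evaluates a stand-in classifier on six rows copied from the listing above. The function `stand_in_model` and its thresholds are hypothetical and serve only to make the loop runnable; they are not one of the article's models.

```python
def iris_class_id(class_name):
    """Python mirror of IRISClassID() from iris.mqh."""
    mapping = {"Iris-setosa": 0, "Iris-versicolor": 1, "Iris-virginica": 2}
    return mapping.get(class_name, -1)

def stand_in_model(features):
    """Hypothetical rule-based classifier, used only so the loop can run.
    The thresholds are illustrative and are not taken from the article."""
    petal_length = features[2]
    if petal_length < 2.5:
        return 0
    return 1 if petal_length < 5.0 else 2

# Six rows copied from PrepareIrisDataset(): samples 1, 2, 51, 52, 101, 107.
dataset = [
    ([5.1, 3.5, 1.4, 0.2], "Iris-setosa"),
    ([4.9, 3.0, 1.4, 0.2], "Iris-setosa"),
    ([7.0, 3.2, 4.7, 1.4], "Iris-versicolor"),
    ([6.4, 3.2, 4.5, 1.5], "Iris-versicolor"),
    ([6.3, 3.3, 6.0, 2.5], "Iris-virginica"),
    ([4.9, 2.5, 4.5, 1.7], "Iris-virginica"),
]

# Accuracy = share of samples whose predicted class id equals the true one.
correct = sum(stand_in_model(f) == iris_class_id(name) for f, name in dataset)
accuracy = correct / len(dataset)
print(f"accuracy: {accuracy:.4f}")
```

Sample 107 has an unusually short petal for Iris virginica, so the stand-in rule misclassifies it and the accuracy on these six rows is 5/6.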

Let's compare three popular classification methods: SVC (Support Vector Classification), LinearSVC (Linear Support Vector Classification), and NuSVC (Nu Support Vector Classification).

Principles of Operation:

SVC (Support Vector Classification)

Working Principle: SVC is a classification method based on maximizing the margin between classes. It seeks the separating hyperplane that lies as far as possible from the classes; the position of this hyperplane is determined by the support vectors, the points closest to it.
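The notions of margin and support vectors can be made concrete with a small calculation. The sketch below does not train an SVC; it assumes a hypothetical, already given 2-D hyperplane and four made-up points, and only measures the distance from each point to that hyperplane.

```python
import math

def signed_distance(w, b, x):
    """Signed distance from point x to the hyperplane w.x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return (sum(wi * xi for wi, xi in zip(w, x)) + b) / norm

# Hypothetical 2-D hyperplane x1 + x2 - 3 = 0 and four labeled points.
w, b = [1.0, 1.0], -3.0
points = [([1.0, 1.0], -1), ([0.0, 0.0], -1), ([2.0, 2.0], +1), ([3.0, 3.0], +1)]

# The margin of this hyperplane is the distance to the closest point;
# the points at exactly that distance play the role of support vectors.
distances = [abs(signed_distance(w, b, x)) for x, _ in points]
margin = min(distances)
support = [x for (x, _), d in zip(points, distances) if math.isclose(d, margin)]

print("margin to the closest points:", round(margin, 4))
print("closest points (support-vector candidates):", support)
```

An SVC solver searches over all hyperplanes for the one that makes this smallest distance as large as possible.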

Kernel Functions: SVC can use various kernel functions, such as linear, radial basis function (RBF), polynomial, and others. The kernel function determines how data is transformed to find the optimal hyperplane.

LinearSVC (Linear Support Vector Classification)

Working Principle: LinearSVC is a variant of SVC specializing in linear classification. It seeks an optimal linear separating hyperplane without using kernel functions. This makes it faster and more efficient when working with large volumes of data.

NuSVC (Nu Support Vector Classification)

Working Principle: NuSVC is also based on the support vector method but introduces a parameter nu, which controls the model's complexity. The value of nu lies in the interval (0, 1] and acts as an upper bound on the fraction of margin errors and a lower bound on the fraction of support vectors.

Advantages:

SVC

Powerful Algorithm: SVC can handle complex classification tasks and work with non-linear data thanks to the use of kernel functions.

Robustness to Outliers: SVC is robust to data outliers as it uses support vectors to build the separating hyperplane.

LinearSVC

High Efficiency: LinearSVC is faster and more efficient on large datasets, provided that linear separation is suitable for the task.

Linear Classification: If the classes are linearly separable, LinearSVC can yield good results without the need for complex kernel functions.

NuSVC

Model Complexity Control: The Nu parameter in NuSVC allows you to control the model's complexity and the trade-off between fitting the data and generalization.

Robustness to Outliers: Similar to SVC, NuSVC is robust to outliers, making it useful for tasks with noisy data.

Limitations:

SVC

Computational Complexity: SVC can be slow on large datasets and/or when using complex kernel functions.

Kernel Sensitivity: Choosing the right kernel function can be a challenging task and significantly impact model performance.

LinearSVC

Linearity Constraint: LinearSVC can learn only linear decision boundaries, so it can perform poorly when the relationship between the features and the target variable is non-linear.

NuSVC

Nu Parameter Tuning: Tuning the Nu parameter may require time and experimentation to achieve optimal results.

Depending on the task characteristics and data volume, each of these methods can be the best choice. It's important to conduct experiments and select the method that best suits the specific classification task requirements.

2.1. SVC Classifier

Support Vector Classification (SVC) is a powerful machine learning algorithm widely used for solving classification tasks.

Principles of Operation:

- Optimal Separating Hyperplane

Working Principle: The main idea behind SVC is to find the optimal separating hyperplane in the feature space. This hyperplane should maximize the separation between objects of different classes; it is defined by the support vectors, which are the data points closest to the hyperplane.

Maximizing Margin: SVC aims to maximize the margin between classes, that is, the distance from the support vectors to the hyperplane. This allows the method to be robust to outliers and generalize well to new data.

- Utilization of Kernel Functions

Kernel Functions: SVC can use various kernel functions, such as linear, radial basis function (RBF), polynomial, and others. The kernel function allows data to be projected into a higher-dimensional space where the task becomes linear, even if there is no linear separability in the original data space.

Kernel Selection: Choosing the right kernel function can significantly impact the performance of the SVC model. A linear hyperplane is not always the optimal solution.
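To make the kernel idea more concrete, here is a minimal sketch of three common kernels written in plain Python. The formulas follow the usual textbook definitions; the values of `gamma`, `degree`, and `coef0` are arbitrary assumptions for illustration and are not the settings used elsewhere in this article. The two input vectors are Iris samples 1 and 124.

```python
import math

def linear_kernel(x, z):
    """Plain dot product: no transformation of the feature space."""
    return sum(a * b for a, b in zip(x, z))

def polynomial_kernel(x, z, degree=3, gamma=1.0, coef0=1.0):
    """(gamma * <x, z> + coef0) ** degree"""
    return (gamma * linear_kernel(x, z) + coef0) ** degree

def rbf_kernel(x, z, gamma=0.5):
    """exp(-gamma * ||x - z||^2): close to 1 for nearby points, close to 0 for distant ones."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * sq_dist)

setosa = (5.1, 3.5, 1.4, 0.2)     # Iris sample id=1
virginica = (6.3, 2.7, 4.9, 1.8)  # Iris sample id=124

print("linear:    ", linear_kernel(setosa, virginica))
print("polynomial:", polynomial_kernel(setosa, virginica))
print("rbf:       ", rbf_kernel(setosa, virginica))
```

Each function returns a similarity score for a pair of samples. The SVM only ever needs these pairwise scores, which is why the higher-dimensional projection never has to be computed explicitly.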

Advantages:

- Powerful Algorithm. Handling Complex Tasks: SVC can solve complex classification tasks, including those with non-linear dependencies between features and the target variable.

- Robustness to Outliers: The use of support vectors makes the method robust to data outliers. It depends on support vectors rather than the entire dataset.

- Kernel Flexibility. Adaptability to Data: The ability to use different kernel functions allows SVC to adapt to specific data and discover non-linear relationships.

- Good Generalization. Generalization to New Data: The SVC model can generalize well to new data, making it useful for prediction tasks.

Limitations:

- Computational Complexity. Training Time: SVC can be slow to train, especially when dealing with large volumes of data or complex kernel functions.

- Kernel Selection. Choosing the Right Kernel Function: Selecting the correct kernel function may require experimentation and depends on data characteristics.

- Sensitivity to Feature Scaling. Data Normalization: SVC is sensitive to feature scaling, so it is recommended to normalize or standardize data before training.

- Model Interpretability. Interpretation Complexity: SVC models can be complex to interpret due to the use of non-linear kernels and a multitude of support vectors.

Depending on the specific task and data volume, the SVC method can be a powerful tool for solving classification tasks. However, it's essential to consider its limitations and tune parameters to achieve optimal results.
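The sensitivity to feature scaling mentioned above can be illustrated with a small sketch in plain Python. It standardizes two feature columns (zero mean, unit variance) and compares the squared distance between two samples before and after scaling. The measurements and their units are made-up assumptions; in a real scikit-learn workflow this step is typically handled by `StandardScaler`.

```python
import statistics

def standardize(column):
    """Return a z-scored copy of one feature column."""
    mean = statistics.mean(column)
    std = statistics.pstdev(column)  # population standard deviation
    return [(value - mean) / std for value in column]

# Hypothetical measurements recorded in different units (assumed values)
petal_length_mm = [14.0, 47.0, 60.0, 13.0, 45.0]
petal_width_cm = [0.2, 1.4, 2.5, 0.2, 1.5]

scaled_length = standardize(petal_length_mm)
scaled_width = standardize(petal_width_cm)

# Squared distance between the first two samples, before and after scaling
raw = ((petal_length_mm[0] - petal_length_mm[1]) ** 2
       + (petal_width_cm[0] - petal_width_cm[1]) ** 2)
scaled = ((scaled_length[0] - scaled_length[1]) ** 2
          + (scaled_width[0] - scaled_width[1]) ** 2)

print("raw squared distance:   ", round(raw, 3))
print("scaled squared distance:", round(scaled, 3))
```

Before scaling, the distance is dominated by the feature with the larger numeric range. Note that if a scaler is used during training, the same transformation has to be applied to the inputs wherever the exported model is run.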

2.1.1. Code for Creating the SVC Classifier Model

This code demonstrates the process of training an SVC Classifier model on the Iris dataset, exporting it to the ONNX format, and performing classification using the ONNX model. It also evaluates the accuracy of both the original model and the ONNX model.

    # Iris_SVCClassifier.py
    # The code demonstrates the process of training SVC model on the Iris dataset, exporting it to ONNX format, and making predictions using the ONNX model.
    # It also evaluates the accuracy of both the original model and the ONNX model.
    # Copyright 2023, MetaQuotes Ltd.
    # https://www.mql5.com

    # import necessary libraries
    from sklearn import datasets
    from sklearn.svm import SVC
    from sklearn.metrics import accuracy_score, classification_report
    from skl2onnx import convert_sklearn
    from skl2onnx.common.data_types import FloatTensorType
    import onnxruntime as ort
    import numpy as np
    from sys import argv

    # define the path for saving the model
    data_path = argv[0]
    last_index = data_path.rfind("\\") + 1
    data_path = data_path[0:last_index]

    # load the Iris dataset
    iris = datasets.load_iris()
    X = iris.data
    y = iris.target

    # create an SVC Classifier model with a linear kernel
    svc_model = SVC(kernel='linear', C=1.0)

    # train the model on the entire dataset
    svc_model.fit(X, y)

    # predict classes for the entire dataset
    y_pred = svc_model.predict(X)

    # evaluate the model's accuracy
    accuracy = accuracy_score(y, y_pred)
    print("Accuracy of SVC Classifier model:", accuracy)

    # display the classification report
    print("\nClassification Report:\n", classification_report(y, y_pred))

    # define the input data type
    initial_type = [('float_input', FloatTensorType([None, X.shape[1]]))]

    # export the model to ONNX format with float data type
    onnx_model = convert_sklearn(svc_model, initial_types=initial_type, target_opset=12)

    # save the model to a file
    onnx_filename = data_path + "svc_iris.onnx"
    with open(onnx_filename, "wb") as f:
        f.write(onnx_model.SerializeToString())

    # print model path
    print(f"Model saved to {onnx_filename}")

    # load the ONNX model and make predictions
    onnx_session = ort.InferenceSession(onnx_filename)
    input_name = onnx_session.get_inputs()[0].name
    output_name = onnx_session.get_outputs()[0].name

    # display information about input tensors in ONNX
    print("\nInformation about input tensors in ONNX:")
    for i, input_tensor in enumerate(onnx_session.get_inputs()):
        print(f"{i + 1}. Name: {input_tensor.name}, Data Type: {input_tensor.type}, Shape: {input_tensor.shape}")

    # display information about output tensors in ONNX
    print("\nInformation about output tensors in ONNX:")
    for i, output_tensor in enumerate(onnx_session.get_outputs()):
        print(f"{i + 1}. Name: {output_tensor.name}, Data Type: {output_tensor.type}, Shape: {output_tensor.shape}")

    # convert data to floating-point format (float32)
    X_float32 = X.astype(np.float32)

    # predict classes for the entire dataset using ONNX
    y_pred_onnx = onnx_session.run([output_name], {input_name: X_float32})[0]

    # evaluate the accuracy of the ONNX model
    accuracy_onnx = accuracy_score(y, y_pred_onnx)
    print("\nAccuracy of SVC Classifier model in ONNX format:", accuracy_onnx)
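The script derives the output folder by searching `argv[0]` for the last backslash, which assumes Windows-style paths. As a hedged alternative, the same result can be obtained with the standard `pathlib` module. The helper name `model_path` and the example script location below are hypothetical and serve only to show that both approaches agree.

```python
from pathlib import PureWindowsPath

def model_path(script_path, filename):
    """Place filename next to the script (hypothetical helper)."""
    return str(PureWindowsPath(script_path).parent / filename)

# Assumed script location, for illustration only
script = r"C:\Terminal\MQL5\Scripts\Iris_SVCClassifier.py"

# The approach used in the script: cut the string after the last backslash
last_index = script.rfind("\\") + 1
original = script[0:last_index] + "svc_iris.onnx"

print(original)
print(model_path(script, "svc_iris.onnx"))
```

`PureWindowsPath` is used here so that the sketch behaves the same on any operating system; inside a script running on Windows, `pathlib.Path(argv[0]).parent` would be the natural equivalent.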

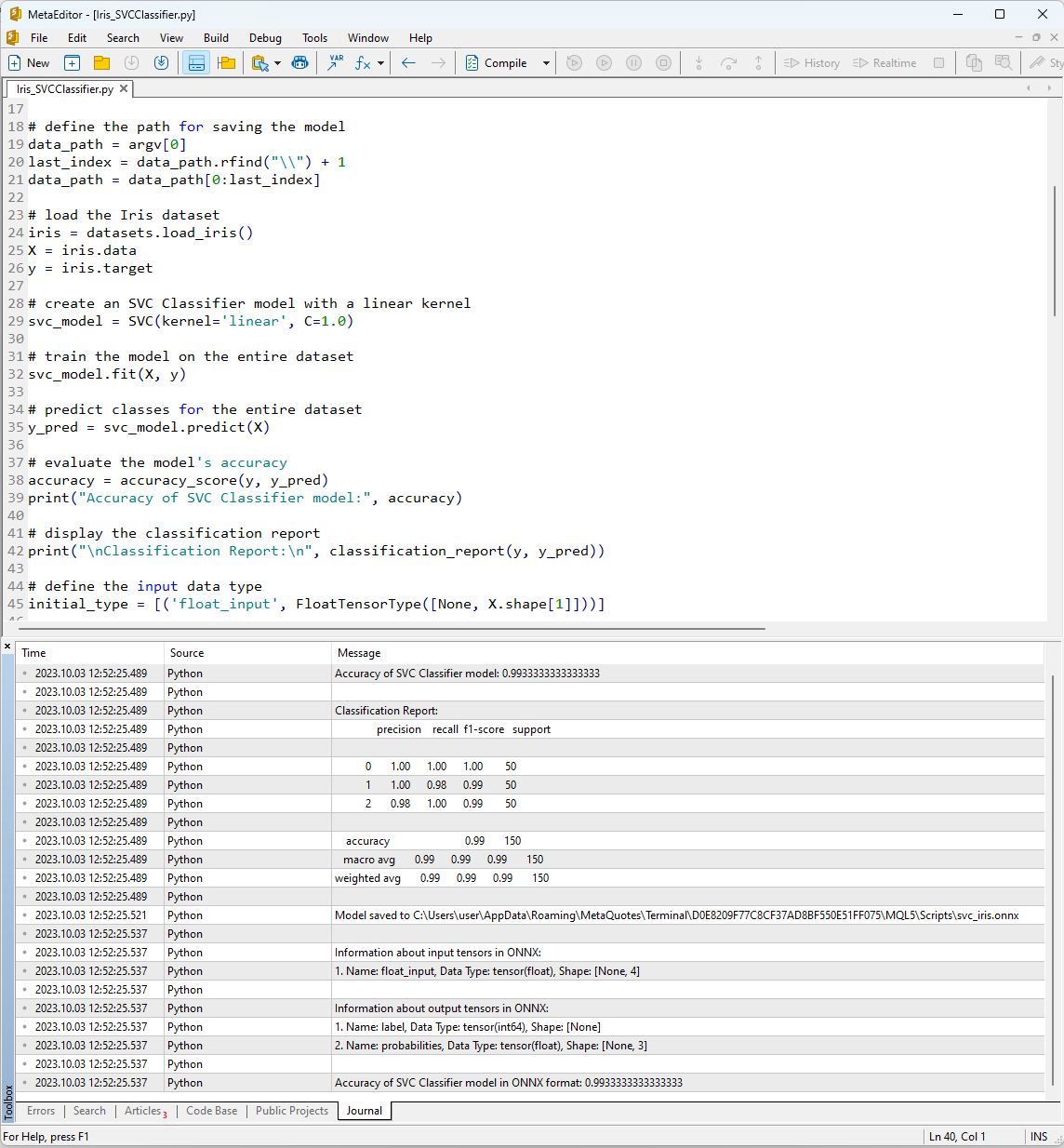

After running the script in MetaEditor by using the "Compile" button, you can view the results of its execution in the Journal tab.

Figure 12. Results of the Iris_SVCClassifier.py script in MetaEditor

Output of the Iris_SVCClassifier.py script:

    Python  Accuracy of SVC Classifier model: 0.9933333333333333
    Python
    Python  Classification Report:
    Python                 precision    recall  f1-score   support
    Python
    Python             0       1.00      1.00      1.00        50
    Python             1       1.00      0.98      0.99        50
    Python             2       0.98      1.00      0.99        50
    Python
    Python      accuracy                           0.99       150
    Python     macro avg       0.99      0.99      0.99       150
    Python  weighted avg       0.99      0.99      0.99       150
    Python
    Python  Model saved to C:\Users\user\AppData\Roaming\MetaQuotes\Terminal\D0E8209F77C8CF37AD8BF550E51FF075\MQL5\Scripts\svc_iris.onnx
    Python
    Python  Information about input tensors in ONNX:
    Python  1. Name: float_input, Data Type: tensor(float), Shape: [None, 4]
    Python
    Python  Information about output tensors in ONNX:
    Python  1. Name: label, Data Type: tensor(int64), Shape: [None]
    Python  2. Name: probabilities, Data Type: tensor(float), Shape: [None, 3]
    Python
    Python  Accuracy of SVC Classifier model in ONNX format: 0.9933333333333333

The output shows the path where the ONNX model was saved, the types of the model's input and output tensors, and the accuracy achieved on the Iris dataset.

The SVC classifier reaches an accuracy of 99.33% on the Iris dataset (the same data it was trained on), and the model exported to the ONNX format shows the same level of accuracy.

Now, we will verify these results in MQL5 by running the constructed model for each of the 150 data samples. Additionally, the script includes an example of batch data processing.

2.1.2. MQL5 Code for Working with the SVC Classifier model

    //+------------------------------------------------------------------+
    //|                                           Iris_SVCClassifier.mq5 |
    //|                                  Copyright 2023, MetaQuotes Ltd. |
    //|                                             https://www.mql5.com |
    //+------------------------------------------------------------------+
    #property copyright "Copyright 2023, MetaQuotes Ltd."
    #property link      "https://www.mql5.com"
    #property version   "1.00"

    #include "iris.mqh"
    #resource "svc_iris.onnx" as const uchar ExtModel[];

    //+------------------------------------------------------------------+
    //| Test IRIS dataset samples                                        |
    //+------------------------------------------------------------------+
    bool TestSamples(long model,float &input_data[][4], int &model_classes_id[])
      {
    //--- check number of input samples
       ulong batch_size=input_data.Range(0);
       if(batch_size==0)
          return(false);
    //--- prepare output array
       ArrayResize(model_classes_id,(int)batch_size);
    //---
       ulong input_shape[]= { batch_size, input_data.Range(1)};
       OnnxSetInputShape(model,0,input_shape);
    //---
       int output1[];
       float output2[][3];
    //---
       ArrayResize(output1,(int)batch_size);
       ArrayResize(output2,(int)batch_size);
    //---
       ulong output_shape[]= {batch_size};
       OnnxSetOutputShape(model,0,output_shape);
    //---
       ulong output_shape2[]= {batch_size,3};
       OnnxSetOutputShape(model,1,output_shape2);
    //---
       bool res=OnnxRun(model,ONNX_DEBUG_LOGS,input_data,output1,output2);
    //--- classes are ready in output1[k];
       if(res)
         {
          for(int k=0; k<(int)batch_size; k++)
             model_classes_id[k]=output1[k];
         }
    //---
       return(res);
      }

    //+------------------------------------------------------------------+
    //| Test all samples from IRIS dataset (150)                         |
    //| Here we test all samples with batch=1, sample by sample          |
    //+------------------------------------------------------------------+
    bool TestAllIrisDataset(const long model,const string model_name,double &model_accuracy)
      {
       sIRISsample iris_samples[];
    //--- load dataset from file
       PrepareIrisDataset(iris_samples);
    //--- test
       int total_samples=ArraySize(iris_samples);
       if(total_samples==0)
         {
          Print("iris dataset not prepared");
          return(false);
         }
    //--- show dataset
       for(int k=0; k<total_samples; k++)
         {
          //PrintFormat("%d (%.2f,%.2f,%.2f,%.2f) class %d (%s)",iris_samples[k].sample_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3],iris_samples[k].class_id,iris_samples[k].class_name);
         }
    //--- array for output classes
       int model_output_classes_id[];
    //--- check all Iris dataset samples
       int correct_results=0;
       for(int k=0; k<total_samples; k++)
         {
          //--- input array
          float iris_sample_input_data[1][4];
          //--- prepare input data from kth iris sample dataset
          iris_sample_input_data[0][0]=(float)iris_samples[k].features[0];
          iris_sample_input_data[0][1]=(float)iris_samples[k].features[1];
          iris_sample_input_data[0][2]=(float)iris_samples[k].features[2];
          iris_sample_input_data[0][3]=(float)iris_samples[k].features[3];
          //--- run model
          bool res=TestSamples(model,iris_sample_input_data,model_output_classes_id);
          //--- check result
          if(res)
            {
             if(model_output_classes_id[0]==iris_samples[k].class_id)
               {
                correct_results++;
               }
             else
               {
                PrintFormat("model:%s sample=%d FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f]",model_name,iris_samples[k].sample_id,model_output_classes_id[0],iris_samples[k].class_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3]);
               }
            }
         }
       model_accuracy=1.0*correct_results/total_samples;
    //---
       PrintFormat("model:%s correct results: %.2f%%",model_name,100*model_accuracy);
    //---
       return(true);
      }

    //+------------------------------------------------------------------+
    //| Here we test batch execution of the model                        |
    //+------------------------------------------------------------------+
    bool TestBatchExecution(const long model,const string model_name,double &model_accuracy)
      {
       model_accuracy=0;
    //--- array for output classes
       int model_output_classes_id[];
       int correct_results=0;
       int total_results=0;
       bool res=false;
    //--- run batch with 3 samples
       float input_data_batch3[3][4]=
         {
          {5.1f,3.5f,1.4f,0.2f}, // iris dataset sample id=1, Iris-setosa
          {6.3f,2.5f,4.9f,1.5f}, // iris dataset sample id=73, Iris-versicolor
          {6.3f,2.7f,4.9f,1.8f}  // iris dataset sample id=124, Iris-virginica
         };
       int correct_classes_batch3[3]= {0,1,2};
    //--- run model
       res=TestSamples(model,input_data_batch3,model_output_classes_id);
       if(res)
         {
          //--- check result
          for(int j=0; j<ArraySize(model_output_classes_id); j++)
            {
             //--- check result
             if(model_output_classes_id[j]==correct_classes_batch3[j])
                correct_results++;
             else
               {
                PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch3[j],input_data_batch3[j][0],input_data_batch3[j][1],input_data_batch3[j][2],input_data_batch3[j][3]);
               }
             total_results++;
            }
         }
       else
          return(false);
    //--- run batch with 10 samples
       float input_data_batch10[10][4]=
         {
          {5.5f,3.5f,1.3f,0.2f}, // iris dataset sample id=37 (Iris-setosa)
          {4.9f,3.1f,1.5f,0.1f}, // iris dataset sample id=38 (Iris-setosa)
          {4.4f,3.0f,1.3f,0.2f}, // iris dataset sample id=39 (Iris-setosa)
          {5.0f,3.3f,1.4f,0.2f}, // iris dataset sample id=50 (Iris-setosa)
          {7.0f,3.2f,4.7f,1.4f}, // iris dataset sample id=51 (Iris-versicolor)
          {6.4f,3.2f,4.5f,1.5f}, // iris dataset sample id=52 (Iris-versicolor)
          {6.3f,3.3f,6.0f,2.5f}, // iris dataset sample id=101 (Iris-virginica)
          {5.8f,2.7f,5.1f,1.9f}, // iris dataset sample id=102 (Iris-virginica)
          {7.1f,3.0f,5.9f,2.1f}, // iris dataset sample id=103 (Iris-virginica)
          {6.3f,2.9f,5.6f,1.8f}  // iris dataset sample id=104 (Iris-virginica)
         };
    //--- correct classes for all 10 samples in the batch
       int correct_classes_batch10[10]= {0,0,0,0,1,1,2,2,2,2};
    //--- run model
       res=TestSamples(model,input_data_batch10,model_output_classes_id);
    //--- check result
       if(res)
         {
          for(int j=0; j<ArraySize(model_output_classes_id); j++)
            {
             if(model_output_classes_id[j]==correct_classes_batch10[j])
                correct_results++;
             else
               {
                double f1=input_data_batch10[j][0];
                double f2=input_data_batch10[j][1];
                double f3=input_data_batch10[j][2];
                double f4=input_data_batch10[j][3];
                PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch10[j],input_data_batch10[j][0],input_data_batch10[j][1],input_data_batch10[j][2],input_data_batch10[j][3]);
               }
             total_results++;
            }
         }
       else
          return(false);
    //--- calculate accuracy (1.0* forces floating-point division, both counters are int)
       model_accuracy=1.0*correct_results/total_results;
    //---
       return(res);
      }

    //+------------------------------------------------------------------+
    //| Script program start function                                    |
    //+------------------------------------------------------------------+
    int OnStart(void)
      {
       string model_name="SVCClassifier";
    //---
       long model=OnnxCreateFromBuffer(ExtModel,ONNX_DEFAULT);
       if(model==INVALID_HANDLE)
         {
          PrintFormat("model_name=%s OnnxCreate error %d for",model_name,GetLastError());
         }
       else
         {
          //--- test all dataset
          double model_accuracy=0;
          //-- test sample by sample execution for all Iris dataset
          if(TestAllIrisDataset(model,model_name,model_accuracy))
             PrintFormat("model=%s all samples accuracy=%f",model_name,model_accuracy);
          else
             PrintFormat("error in testing model=%s ",model_name);
          //--- test batch execution for several samples
          if(TestBatchExecution(model,model_name,model_accuracy))
             PrintFormat("model=%s batch test accuracy=%f",model_name,model_accuracy);
          else
             PrintFormat("error in testing model=%s ",model_name);
          //--- release model
          OnnxRelease(model);
         }
       return(0);
      }
    //+------------------------------------------------------------------+

The results of the script's execution are displayed in the "Experts" tab of the MetaTrader 5 terminal.

    Iris_SVCClassifier (EURUSD,H1)  model:SVCClassifier  sample=84 FAILED [class=2, true class=1] features=(6.00,2.70,5.10,1.60]
    Iris_SVCClassifier (EURUSD,H1)  model:SVCClassifier   correct results: 99.33%
    Iris_SVCClassifier (EURUSD,H1)  model=SVCClassifier all samples accuracy=0.993333
    Iris_SVCClassifier (EURUSD,H1)  model=SVCClassifier batch test accuracy=1.000000

The SVC model correctly classified 149 out of 150 samples, which is an excellent result. The model made only one classification error in the Iris dataset, predicting class 2 (virginica) instead of class 1 (versicolor) for sample #84.

It's worth noting that the accuracy of the exported ONNX model on the full Iris dataset is 99.33%, which matches the accuracy of the original model.
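The single error reported above (sample 84, true class 1, predicted class 2) is enough to reproduce the figures in the Python classification report. The sketch below builds the confusion matrix implied by that one error and derives precision, recall, and F1 from it. The matrix is inferred from the log rather than taken from the script output, and the helper name `per_class_metrics` is hypothetical.

```python
# Confusion matrix implied by the single error (rows: true class, columns: predicted class)
confusion = [
    [50, 0, 0],
    [0, 49, 1],   # sample 84: true class 1, predicted class 2
    [0, 0, 50],
]

def per_class_metrics(cm, k):
    """Precision, recall and F1 for class k of a square confusion matrix."""
    tp = cm[k][k]
    fn = sum(cm[k]) - tp                   # class-k samples predicted as something else
    fp = sum(row[k] for row in cm) - tp    # other samples predicted as class k
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

accuracy = sum(confusion[k][k] for k in range(3)) / sum(map(sum, confusion))

for k in range(3):
    p, r, f = per_class_metrics(confusion, k)
    print(f"class {k}: precision={p:.2f} recall={r:.2f} f1={f:.2f}")
print(f"accuracy={accuracy:.4f}")
```

Class 1 loses recall (49 of its 50 samples are found), while class 2 loses precision (50 of the 51 samples assigned to it are correct), which matches the 0.98 entries in the report.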

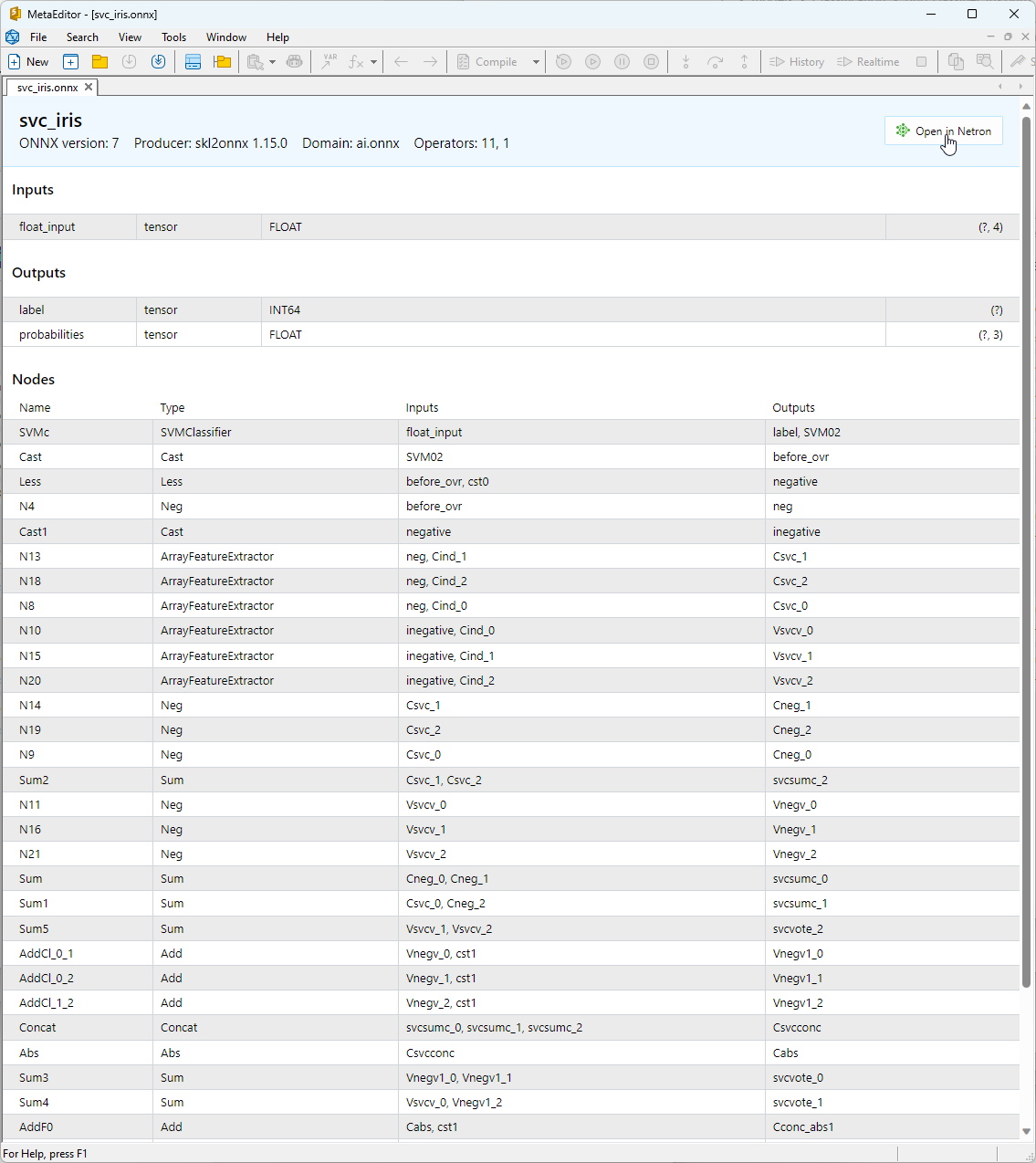

2.1.3. ONNX Representation of the SVC Classifier Model

You can view the built ONNX model in MetaEditor.

Figure 13. ONNX Model svc_iris.onnx in MetaEditor

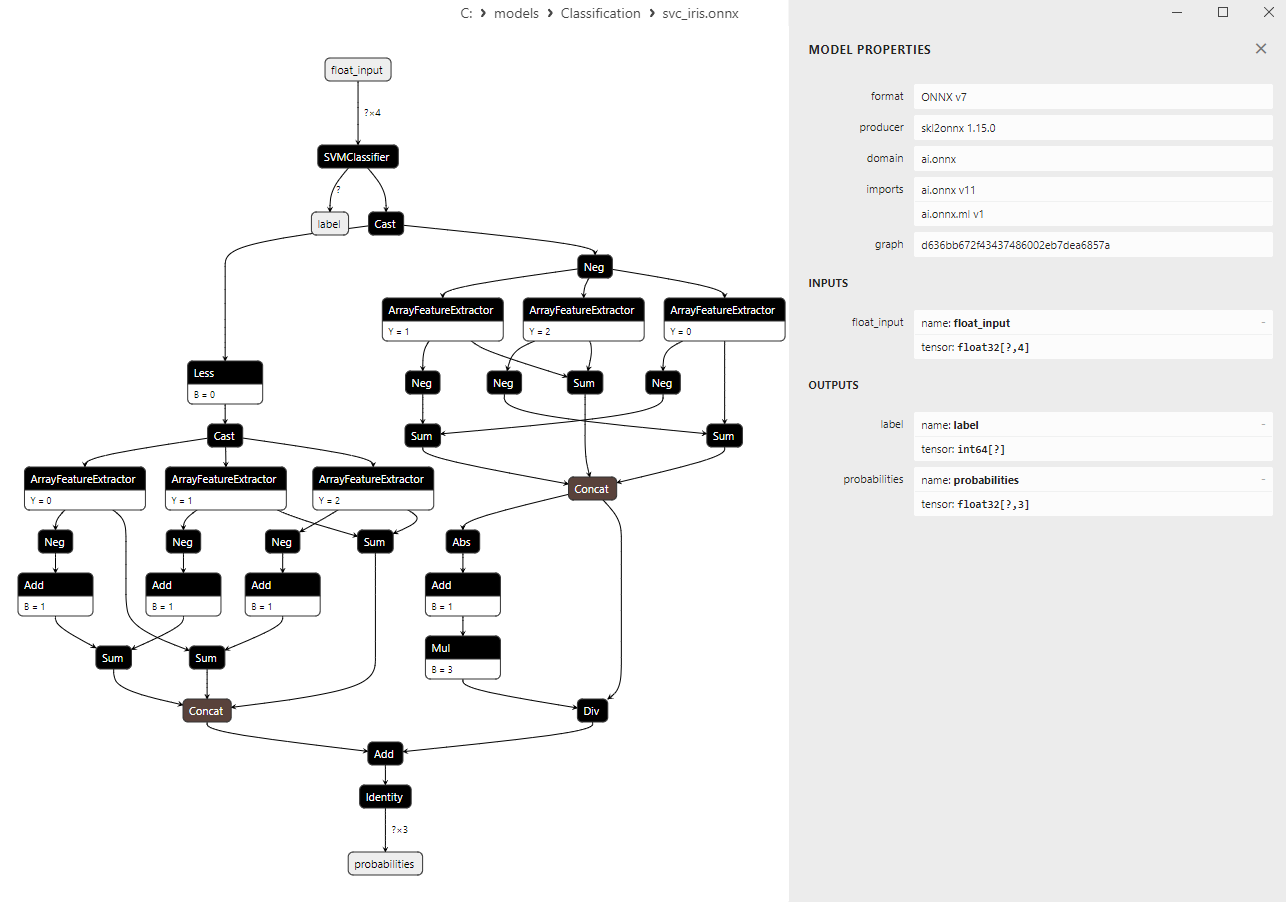

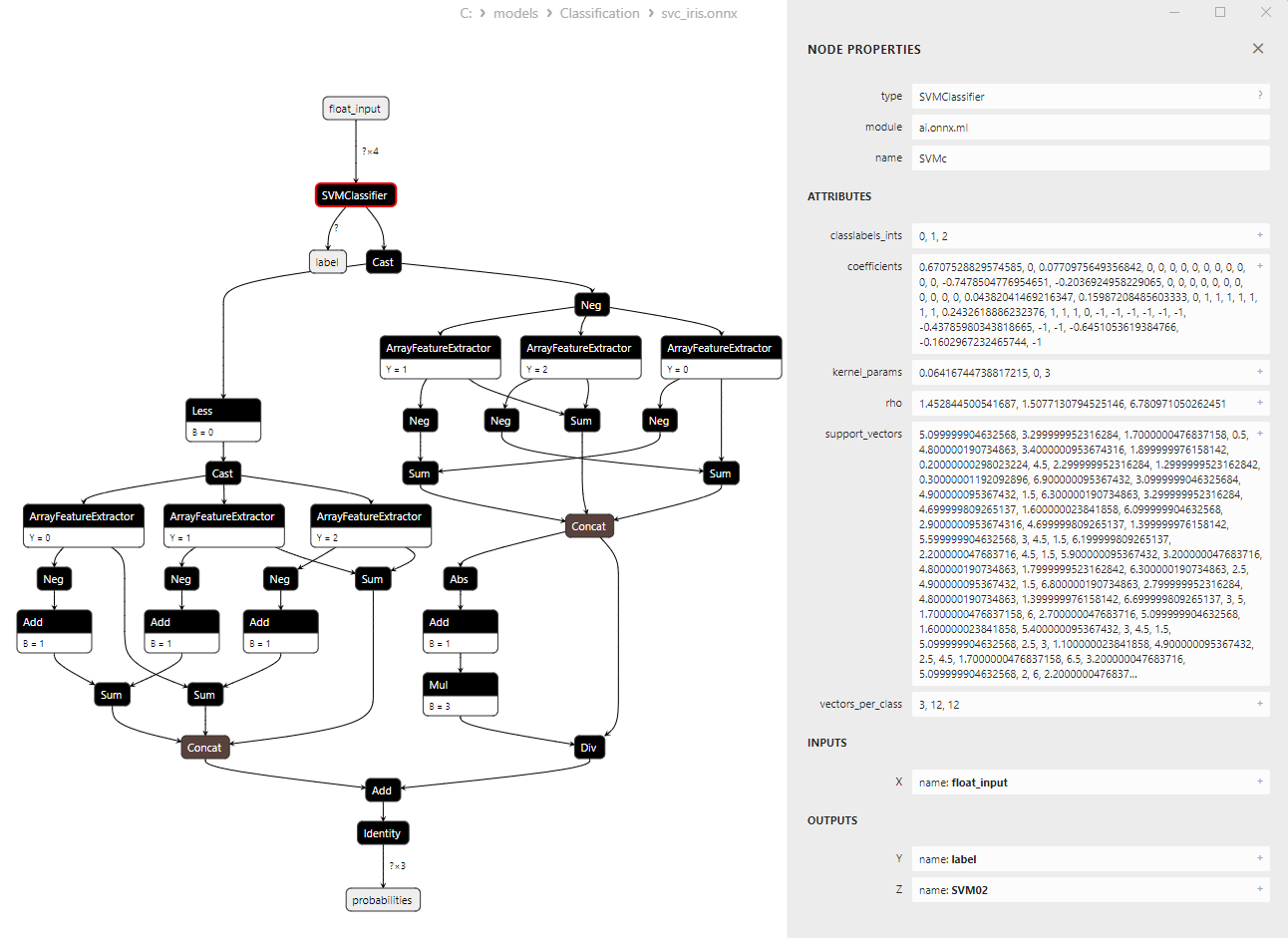

For more detailed information about the model's architecture, you can use Netron. To do this, click the "Open in Netron" button in the model's description in MetaEditor.

Figure 14. ONNX Model svc_iris.onnx in Netron

Figure 15. ONNX Model svc_iris.onnx in Netron (SVMClassifier ONNX Operator Parameters)

2.2. LinearSVC Classifier

LinearSVC (Linear Support Vector Classification) is a powerful machine learning algorithm used for binary and multiclass classification tasks. It is based on the idea of finding a hyperplane that best separates the data.

Principles of LinearSVC:

- Finding the optimal hyperplane: The main idea of LinearSVC is to find the optimal hyperplane that maximally separates the two classes of data. A hyperplane is a multi-dimensional plane defined by a linear equation.

- Margin maximization: LinearSVC aims to maximize the margin (the distance between the hyperplane and the nearest data points). The larger the margin, the more effectively the hyperplane separates the classes.

- Handling linearly non-separable data: LinearSVC does not use kernel functions. When the classes cannot be separated perfectly by a hyperplane, it relies on a soft margin: the regularization parameter C sets how strongly misclassified points and points inside the margin are penalized.
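For a task with more than two classes, such as Iris, scikit-learn's LinearSVC by default trains one linear scoring function per class (one-vs-rest) and predicts the class with the highest score. The sketch below shows only that decision rule in plain Python. The weight vectors and biases are invented for illustration; they are not the coefficients of the model trained in this article.

```python
# Hypothetical per-class weights and biases (assumptions, not fitted values)
WEIGHTS = [
    (0.2, 0.5, -0.8, -0.4),    # class 0 (setosa) vs rest
    (0.1, -0.4, 0.2, -0.2),    # class 1 (versicolor) vs rest
    (-0.3, -0.3, 0.6, 0.9),    # class 2 (virginica) vs rest
]
BIASES = (0.3, 0.5, -1.0)

def decision_scores(x):
    """One linear score w_k.x + b_k per class."""
    return [sum(w * v for w, v in zip(wk, x)) + bk for wk, bk in zip(WEIGHTS, BIASES)]

def predict(x):
    """One-vs-rest rule: pick the class whose score is largest."""
    scores = decision_scores(x)
    return max(range(len(scores)), key=scores.__getitem__)

samples = [
    (5.1, 3.5, 1.4, 0.2),  # Iris sample id=1, Iris-setosa
    (6.3, 2.5, 4.9, 1.5),  # Iris sample id=73, Iris-versicolor
    (6.3, 2.7, 4.9, 1.8),  # Iris sample id=124, Iris-virginica
]
predictions = [predict(x) for x in samples]
print(predictions)
```

Each score is a signed, scaled distance from one hyperplane, so the whole classifier remains linear: the boundaries between classes are flat, regardless of how many classes there are.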

Advantages of LinearSVC:

- Good generalization: LinearSVC has good generalization ability and can perform well on new, unseen data.

- Efficiency: LinearSVC works quickly on large datasets and requires relatively few computational resources.

- Handling linearly non-separable data: Thanks to the soft margin, LinearSVC still produces a usable linear classifier when the classes overlap. For strongly non-linear class boundaries, a kernel-based SVC is usually a better fit.

- Scalability: LinearSVC can be efficiently used in tasks with a large number of features and substantial data volumes.

Limitations of LinearSVC:

- Only linear separating hyperplanes: LinearSVC constructs only linear separating hyperplanes, which may be insufficient for complex classification tasks with non-linear dependencies.

- Parameter selection: Choosing the right parameters (e.g., regularization parameter) may require expert knowledge or cross-validation.

- Sensitivity to outliers: LinearSVC can be sensitive to outliers in the data, which can affect classification quality.

- Model interpretability: Models created using LinearSVC may be less interpretable compared to some other methods.

LinearSVC is a powerful classification algorithm that offers good generalization and efficiency. It finds applications in various classification tasks, especially when data can be separated by a linear hyperplane. However, for complex tasks that require modeling non-linear dependencies, LinearSVC may be less suitable, and in such cases, alternative methods with more complex decision boundaries should be considered.

2.2.1. Code for Creating LinearSVC Classifier Model

This code demonstrates the process of training a LinearSVC Classifier model on the Iris dataset, exporting it to ONNX format, and performing classification using the ONNX model. It also evaluates the accuracy of both the original model and the ONNX model.

    # Iris_LinearSVC.py
    # The code demonstrates the process of training LinearSVC model on the Iris dataset, exporting it to ONNX format, and making predictions using the ONNX model.
    # It also evaluates the accuracy of both the original model and the ONNX model.
    # Copyright 2023, MetaQuotes Ltd.
    # https://www.mql5.com

    # import necessary libraries
    from sklearn import datasets
    from sklearn.svm import LinearSVC
    from sklearn.metrics import accuracy_score, classification_report
    from skl2onnx import convert_sklearn
    from skl2onnx.common.data_types import FloatTensorType
    import onnxruntime as ort
    import numpy as np
    from sys import argv

    # define the path for saving the model
    data_path = argv[0]
    last_index = data_path.rfind("\\") + 1
    data_path = data_path[0:last_index]

    # load the Iris dataset
    iris = datasets.load_iris()
    X = iris.data
    y = iris.target

    # create a LinearSVC model
    linear_svc_model = LinearSVC(C=1.0, max_iter=10000)

    # train the model on the entire dataset
    linear_svc_model.fit(X, y)

    # predict classes for the entire dataset
    y_pred = linear_svc_model.predict(X)

    # evaluate the model's accuracy
    accuracy = accuracy_score(y, y_pred)
    print("Accuracy of LinearSVC model:", accuracy)

    # display the classification report
    print("\nClassification Report:\n", classification_report(y, y_pred))

    # define the input data type
    initial_type = [('float_input', FloatTensorType([None, X.shape[1]]))]

    # export the model to ONNX format with float data type
    onnx_model = convert_sklearn(linear_svc_model, initial_types=initial_type, target_opset=12)

    # save the model to a file
    onnx_filename = data_path + "linear_svc_iris.onnx"
    with open(onnx_filename, "wb") as f:
        f.write(onnx_model.SerializeToString())

    # print model path
    print(f"Model saved to {onnx_filename}")

    # load the ONNX model and make predictions
    onnx_session = ort.InferenceSession(onnx_filename)
    input_name = onnx_session.get_inputs()[0].name
    output_name = onnx_session.get_outputs()[0].name

    # display information about input tensors in ONNX
    print("\nInformation about input tensors in ONNX:")
    for i, input_tensor in enumerate(onnx_session.get_inputs()):
        print(f"{i + 1}. Name: {input_tensor.name}, Data Type: {input_tensor.type}, Shape: {input_tensor.shape}")

    # display information about output tensors in ONNX
    print("\nInformation about output tensors in ONNX:")
    for i, output_tensor in enumerate(onnx_session.get_outputs()):
        print(f"{i + 1}. Name: {output_tensor.name}, Data Type: {output_tensor.type}, Shape: {output_tensor.shape}")

    # convert data to floating-point format (float32)
    X_float32 = X.astype(np.float32)

    # predict classes for the entire dataset using ONNX
    y_pred_onnx = onnx_session.run([output_name], {input_name: X_float32})[0]

    # evaluate the accuracy of the ONNX model
    accuracy_onnx = accuracy_score(y, y_pred_onnx)
    print("\nAccuracy of LinearSVC model in ONNX format:", accuracy_onnx)

Output:

    Python
    Python  Classification Report:
    Python                 precision    recall  f1-score   support
    Python
    Python             0       1.00      1.00      1.00        50
    Python             1       0.96      0.94      0.95        50
    Python             2       0.94      0.96      0.95        50
    Python
    Python      accuracy                           0.97       150
    Python     macro avg       0.97      0.97      0.97       150
    Python  weighted avg       0.97      0.97      0.97       150
    Python
    Python  Model saved to C:\Users\user\AppData\Roaming\MetaQuotes\Terminal\D0E8209F77C8CF37AD8BF550E51FF075\MQL5\Scripts\linear_svc_iris.onnx
    Python
    Python  Information about input tensors in ONNX:
    Python  1. Name: float_input, Data Type: tensor(float), Shape: [None, 4]
    Python
    Python  Information about output tensors in ONNX:
    Python  1. Name: label, Data Type: tensor(int64), Shape: [None]
    Python  2. Name: probabilities, Data Type: tensor(float), Shape: [None, 3]
    Python
    Python  Accuracy of LinearSVC model in ONNX format: 0.9666666666666667

2.2.2. MQL5 Code for Working with the LinearSVC Classifier Model

    //+------------------------------------------------------------------+
    //|                                               Iris_LinearSVC.mq5 |
    //|                                  Copyright 2023, MetaQuotes Ltd. |
    //|                                             https://www.mql5.com |
    //+------------------------------------------------------------------+
    #property copyright "Copyright 2023, MetaQuotes Ltd."
    #property link      "https://www.mql5.com"
    #property version   "1.00"

    #include "iris.mqh"
    #resource "linear_svc_iris.onnx" as const uchar ExtModel[];

    //+------------------------------------------------------------------+
    //| Test IRIS dataset samples                                        |
    //+------------------------------------------------------------------+
    bool TestSamples(long model,float &input_data[][4], int &model_classes_id[])
      {
    //--- check number of input samples
       ulong batch_size=input_data.Range(0);
       if(batch_size==0)
          return(false);
    //--- prepare output array
       ArrayResize(model_classes_id,(int)batch_size);
    //---
       ulong input_shape[]= { batch_size, input_data.Range(1)};
       OnnxSetInputShape(model,0,input_shape);
    //---
       int output1[];
       float output2[][3];
    //---
       ArrayResize(output1,(int)batch_size);
       ArrayResize(output2,(int)batch_size);
    //---
       ulong output_shape[]= {batch_size};
       OnnxSetOutputShape(model,0,output_shape);
    //---
       ulong output_shape2[]= {batch_size,3};
       OnnxSetOutputShape(model,1,output_shape2);
    //---
       bool res=OnnxRun(model,ONNX_DEBUG_LOGS,input_data,output1,output2);
    //--- classes are ready in output1[k];
       if(res)
         {
          for(int k=0; k<(int)batch_size; k++)
             model_classes_id[k]=output1[k];
         }
    //---
       return(res);
      }

    //+------------------------------------------------------------------+
    //| Test all samples from IRIS dataset (150)                         |
    //| Here we test all samples with batch=1, sample by sample          |
    //+------------------------------------------------------------------+
    bool TestAllIrisDataset(const long model,const string model_name,double &model_accuracy)
      {
       sIRISsample iris_samples[];
    //--- load dataset from file
       PrepareIrisDataset(iris_samples);
    //--- test
       int total_samples=ArraySize(iris_samples);
       if(total_samples==0)
         {
          Print("iris dataset not prepared");
          return(false);
         }
    //--- show dataset
       for(int k=0; k<total_samples; k++)
         {
          //PrintFormat("%d (%.2f,%.2f,%.2f,%.2f) class %d (%s)",iris_samples[k].sample_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3],iris_samples[k].class_id,iris_samples[k].class_name);
         }
    //--- array for output classes
       int model_output_classes_id[];
    //--- check all Iris dataset samples
       int correct_results=0;
       for(int k=0; k<total_samples; k++)
         {
          //--- input array
          float iris_sample_input_data[1][4];
          //--- prepare input data from kth iris sample dataset
          iris_sample_input_data[0][0]=(float)iris_samples[k].features[0];
          iris_sample_input_data[0][1]=(float)iris_samples[k].features[1];
          iris_sample_input_data[0][2]=(float)iris_samples[k].features[2];
          iris_sample_input_data[0][3]=(float)iris_samples[k].features[3];
          //--- run model
          bool res=TestSamples(model,iris_sample_input_data,model_output_classes_id);
          //--- check result
          if(res)
            {
             if(model_output_classes_id[0]==iris_samples[k].class_id)
               {
                correct_results++;
               }
             else
               {
                PrintFormat("model:%s sample=%d FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f]",model_name,iris_samples[k].sample_id,model_output_classes_id[0],iris_samples[k].class_id,iris_samples[k].features[0],iris_samples[k].features[1],iris_samples[k].features[2],iris_samples[k].features[3]);
               }
            }
         }
       model_accuracy=1.0*correct_results/total_samples;
    //---
       PrintFormat("model:%s correct results: %.2f%%",model_name,100*model_accuracy);
    //---
       return(true);
      }

    //+------------------------------------------------------------------+
    //| Here we test batch execution of the model                        |
    //+------------------------------------------------------------------+
    bool TestBatchExecution(const long model,const string model_name,double &model_accuracy)
      {
       model_accuracy=0;
    //--- array for output classes
       int model_output_classes_id[];
       int correct_results=0;
       int total_results=0;
       bool res=false;
    //--- run batch with 3 samples
       float input_data_batch3[3][4]=
         {
          {5.1f,3.5f,1.4f,0.2f}, // iris dataset sample id=1, Iris-setosa
          {6.3f,2.5f,4.9f,1.5f}, // iris dataset sample id=73, Iris-versicolor
          {6.3f,2.7f,4.9f,1.8f}  // iris dataset sample id=124, Iris-virginica
         };
       int correct_classes_batch3[3]= {0,1,2};
    //--- run model
       res=TestSamples(model,input_data_batch3,model_output_classes_id);
       if(res)
         {
          //--- check result
          for(int j=0; j<ArraySize(model_output_classes_id); j++)
            {
             //--- check result
             if(model_output_classes_id[j]==correct_classes_batch3[j])
                correct_results++;
             else
               {
                PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch3[j],input_data_batch3[j][0],input_data_batch3[j][1],input_data_batch3[j][2],input_data_batch3[j][3]);
               }
             total_results++;
            }
         }
       else
          return(false);
    //--- run batch with 10 samples
       float input_data_batch10[10][4]=
         {
          {5.5f,3.5f,1.3f,0.2f}, // iris dataset sample id=37 (Iris-setosa)
          {4.9f,3.1f,1.5f,0.1f}, // iris dataset sample id=38 (Iris-setosa)
          {4.4f,3.0f,1.3f,0.2f}, // iris dataset sample id=39 (Iris-setosa)
          {5.0f,3.3f,1.4f,0.2f}, // iris dataset sample id=50 (Iris-setosa)
          {7.0f,3.2f,4.7f,1.4f}, // iris dataset sample id=51 (Iris-versicolor)
          {6.4f,3.2f,4.5f,1.5f}, // iris dataset sample id=52 (Iris-versicolor)
          {6.3f,3.3f,6.0f,2.5f}, // iris dataset sample id=101 (Iris-virginica)
          {5.8f,2.7f,5.1f,1.9f}, // iris dataset sample id=102 (Iris-virginica)
          {7.1f,3.0f,5.9f,2.1f}, // iris dataset sample id=103 (Iris-virginica)
          {6.3f,2.9f,5.6f,1.8f}  // iris dataset sample id=104 (Iris-virginica)
         };
    //--- correct classes for all 10 samples in the batch
       int correct_classes_batch10[10]= {0,0,0,0,1,1,2,2,2,2};
    //--- run model
       res=TestSamples(model,input_data_batch10,model_output_classes_id);
    //--- check result
       if(res)
         {
          for(int j=0; j<ArraySize(model_output_classes_id); j++)
            {
             if(model_output_classes_id[j]==correct_classes_batch10[j])
                correct_results++;
             else
               {
                double f1=input_data_batch10[j][0];
                double f2=input_data_batch10[j][1];
                double f3=input_data_batch10[j][2];
                double f4=input_data_batch10[j][3];
                PrintFormat("model:%s FAILED [class=%d, true class=%d] features=(%.2f,%.2f,%.2f,%.2f)",model_name,model_output_classes_id[j],correct_classes_batch10[j],input_data_batch10[j][0],input_data_batch10[j][1],input_data_batch10[j][2],input_data_batch10[j][3]);
               }
             total_results++;
            }
         }
       else
          return(false);
    //--- calculate accuracy (1.0* forces floating-point division, both counters are int)
       model_accuracy=1.0*correct_results/total_results;
    //---
       return(res);
      }

    //+------------------------------------------------------------------+
    //| Script program start function                                    |
    //+------------------------------------------------------------------+
    int OnStart(void)
      {
       string model_name="LinearSVC";
    //---
       long model=OnnxCreateFromBuffer(ExtModel,ONNX_DEFAULT);
       if(model==INVALID_HANDLE)
         {
          PrintFormat("model_name=%s OnnxCreate error %d for",model_name,GetLastError());
         }
       else
         {
          //--- test all dataset
          double model_accuracy=0;
          //-- test sample by sample execution for all Iris dataset
          if(TestAllIrisDataset(model,model_name,model_accuracy))
             PrintFormat("model=%s all samples accuracy=%f",model_name,model_accuracy);
          else
             PrintFormat("error in testing model=%s ",model_name);
          //--- test batch execution for several samples
          if(TestBatchExecution(model,model_name,model_accuracy))
             PrintFormat("model=%s batch test accuracy=%f",model_name,model_accuracy);
          else
             PrintFormat("error in testing model=%s ",model_name);
          //--- release model
          OnnxRelease(model);
         }
       return(0);
      }
    //+------------------------------------------------------------------+

Output:

Iris_LinearSVC (EURUSD,H1) model:LinearSVC sample=71 FAILED [class=2, true class=1] features=(5.90,3.20,4.80,1.80]
Iris_LinearSVC (EURUSD,H1) model:LinearSVC sample=84 FAILED [class=2, true class=1] features=(6.00,2.70,5.10,1.60]
Iris_LinearSVC (EURUSD,H1) model:LinearSVC sample=85 FAILED [class=2, true class=1] features=(5.40,3.00,4.50,1.50]
Iris_LinearSVC (EURUSD,H1) model:LinearSVC sample=130 FAILED [class=1, true class=2] features=(7.20,3.00,5.80,1.60]
Iris_LinearSVC (EURUSD,H1) model:LinearSVC sample=134 FAILED [class=1, true class=2] features=(6.30,2.80,5.10,1.50]
Iris_LinearSVC (EURUSD,H1) model:LinearSVC correct results: 96.67%
Iris_LinearSVC (EURUSD,H1) model=LinearSVC all samples accuracy=0.966667
Iris_LinearSVC (EURUSD,H1) model=LinearSVC batch test accuracy=1.000000

The accuracy of the exported ONNX model on the full Iris dataset is 96.67%, which corresponds to the accuracy of the original model.
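As a quick sanity check, the reported figure can be reproduced from the five FAILED entries in the log above. The short Python sketch below assumes the standard Iris size of 150 samples; the variable names are illustrative and are not part of the article's scripts.

```python
# Sample ids reported as FAILED in the log above
failed_samples = [71, 84, 85, 130, 134]

# The Iris dataset contains 150 samples in total
total_samples = 150

correct = total_samples - len(failed_samples)
accuracy = correct / total_samples  # true division, the result is a float

print(f"correct results: {accuracy * 100:.2f}%")
print(f"all samples accuracy={accuracy:.6f}")
```

With 145 of 150 samples classified correctly, both formatted values agree with the log lines.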

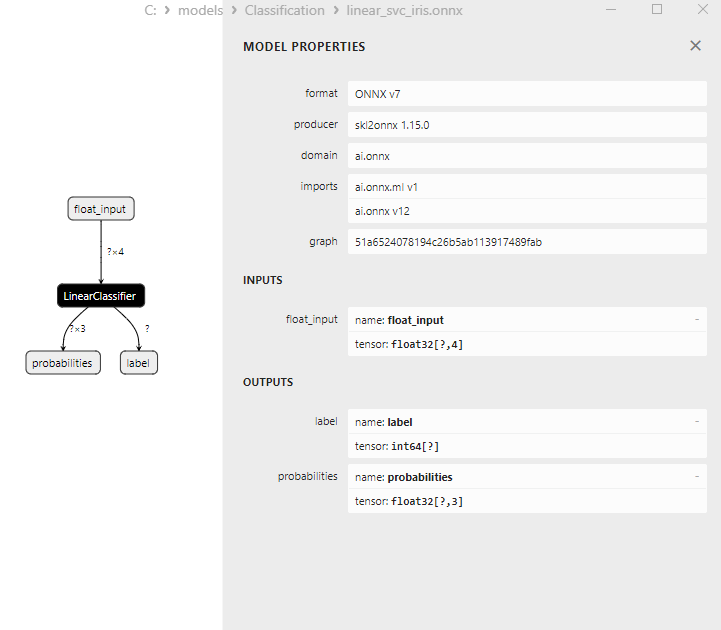

2.2.3. ONNX Representation of the LinearSVC Classifier Model

Figure 16. ONNX Representation of the LinearSVC Classifier Model in Netron

2.3. NuSVC Classifier

The Nu-Support Vector Classification (NuSVC) method is a machine learning algorithm based on the Support Vector Machine (SVM) approach. It is similar to SVC but uses the nu parameter to control the number of support vectors.

Principles of NuSVC:

- Support Vector Machine (SVM): NuSVC is a variant of SVM used for binary and multiclass classification tasks. The core principle of SVM is to find the separating hyperplane with the largest margin, that is, the greatest distance to the nearest training samples of each class.

- The Nu Parameter: A key parameter in NuSVC is the Nu parameter (nu), which takes values in the interval (0, 1]. It is an upper bound on the fraction of margin errors and a lower bound on the fraction of support vectors, and in this way it controls the model's complexity. For example, nu=0.5 means that at most about half of the training samples may be margin errors and at least about half will be support vectors.

- Parameter Tuning: Determining the optimal values for the Nu parameter and other hyperparameters may require cross-validation and a search for the best values on the training data.

- Kernel Functions: NuSVC can use various kernel functions such as linear, radial basis function (RBF), polynomial, and others. The kernel function determines how the feature space is transformed to find the separating hyperplane.
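The effect of the Nu parameter from the list above can be observed directly. The following is a minimal sketch, not part of the article's scripts: it fits NuSVC on the Iris dataset for a few illustrative nu values and reports the share of training samples kept as support vectors. The helper name support_vector_fraction and the chosen nu values are assumptions made for this example.

```python
from sklearn import datasets
from sklearn.svm import NuSVC

# load the Iris dataset (150 samples, 4 features, 3 classes)
X, y = datasets.load_iris(return_X_y=True)


def support_vector_fraction(nu):
    """Fit a linear NuSVC with the given nu and return the share of
    training samples that end up as support vectors."""
    model = NuSVC(nu=nu, kernel="linear")
    model.fit(X, y)
    # support_ holds the indices of the support vectors in X
    return len(model.support_) / len(X)


# illustrative nu values, chosen only for this sketch
for nu in (0.1, 0.3, 0.5, 0.7):
    fraction = support_vector_fraction(nu)
    print(f"nu={nu:.1f} -> support vector fraction={fraction:.2f}")
```

Because nu acts as a lower bound on the fraction of support vectors, larger values should keep a larger part of the training set in the model.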

Advantages of NuSVC:

- Efficiency in High-Dimensional Spaces: NuSVC can work efficiently in high-dimensional spaces, making it suitable for tasks with a high number of features.

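- Robustness to Outliers: The decision boundary of SVM, and of NuSVC in particular, is determined only by the support vectors, and the Nu parameter bounds the fraction of samples allowed to violate the margin, which limits the influence of outliers.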

- Control of Model Complexity: The Nu parameter allows for controlling model complexity and balancing data fitting with generalization.

- Good Generalization: SVM, and NuSVC in particular, maximize the margin between classes, which often results in good performance on new, previously unseen data.

Limitations of NuSVC:

- Inefficiency with Large Data Volumes: NuSVC can be inefficient when trained on large data volumes due to computational complexity.

- Parameter Tuning Required: Tuning the Nu parameter and kernel function may require time and computational resources.

- Dependence on the Kernel Function: The effectiveness of NuSVC can depend significantly on the choice of kernel function, and for some tasks, experimentation with different functions may be necessary.

- Model Interpretability: SVM and NuSVC can provide accurate results, but their models can be difficult to interpret, especially when non-linear kernels are used.
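To make the role of the kernel more concrete, the sketch below computes the three kernels mentioned earlier (linear, polynomial and RBF) for two Iris samples that appear in this article. It uses only the standard library. The function names and the gamma, degree and coef0 values are illustrative assumptions and do not reproduce Scikit-learn's default settings.

```python
import math


def linear_kernel(x, z):
    """Linear kernel: the dot product <x, z>."""
    return sum(a * b for a, b in zip(x, z))


def polynomial_kernel(x, z, degree=3, gamma=1.0, coef0=0.0):
    """Polynomial kernel: (gamma * <x, z> + coef0) ** degree."""
    return (gamma * linear_kernel(x, z) + coef0) ** degree


def rbf_kernel(x, z, gamma=1.0):
    """RBF kernel: exp(-gamma * ||x - z||^2)."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * squared_distance)


# two samples taken from the article's test data
setosa = (5.1, 3.5, 1.4, 0.2)      # iris dataset sample id=1
virginica = (6.3, 3.3, 6.0, 2.5)   # iris dataset sample id=101

print("linear:    ", linear_kernel(setosa, virginica))
print("polynomial:", polynomial_kernel(setosa, virginica, degree=2, gamma=0.1, coef0=1.0))
print("rbf:       ", rbf_kernel(setosa, virginica, gamma=0.1))
```

Each kernel measures the similarity between two samples in a different way, which is why the same data can be easy to separate with one kernel and hard with another.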

Nu-Support Vector Classification (NuSVC) is a powerful classification method based on SVM with several advantages, including robustness to outliers and good generalization. However, its effectiveness depends on parameter and kernel function selection, and it can be inefficient for large data volumes. It is essential to carefully select parameters and adapt the method to specific classification tasks.
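One possible way to select the parameters is a cross-validated grid search. The sketch below is an illustration rather than the article's method: it uses Scikit-learn's GridSearchCV with 5-fold cross-validation, and the grid of nu values and kernels is an assumption chosen only for this example.

```python
from sklearn import datasets
from sklearn.model_selection import GridSearchCV
from sklearn.svm import NuSVC

# load the Iris dataset
X, y = datasets.load_iris(return_X_y=True)

# illustrative search grid (an assumption, not taken from the article)
param_grid = {
    "nu": [0.1, 0.3, 0.5],
    "kernel": ["linear", "rbf", "poly"],
}

# 5-fold cross-validated search over all nu/kernel combinations
search = GridSearchCV(NuSVC(), param_grid, cv=5, scoring="accuracy")
search.fit(X, y)

print("Best parameters:", search.best_params_)
print(f"Best cross-validated accuracy: {search.best_score_:.3f}")
```

Unlike the script in the next section, which trains and evaluates on the full dataset, the score reported here is measured on held-out folds, so it gives a more cautious estimate of how a given configuration may generalize.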

2.3.1. Code for Creating NuSVC Classifier Model

This code demonstrates the process of training a NuSVC Classifier model on the Iris dataset, exporting it to ONNX format, and performing classification using the ONNX model. It also evaluates the accuracy of both the original model and the ONNX model.

# Iris_NuSVC.py
# The code demonstrates the process of training NuSVC model on the Iris dataset, exporting it to ONNX format, and making predictions using the ONNX model.
# It also evaluates the accuracy of both the original model and the ONNX model.
# Copyright 2023, MetaQuotes Ltd.
# https://www.mql5.com

# import necessary libraries
from sklearn import datasets
from sklearn.svm import NuSVC
from sklearn.metrics import accuracy_score, classification_report
from skl2onnx import convert_sklearn
from skl2onnx.common.data_types import FloatTensorType
import onnxruntime as ort
import numpy as np
from sys import argv

# define the path for saving the model
data_path = argv[0]
last_index = data_path.rfind("\\") + 1
data_path = data_path[0:last_index]

# load the Iris dataset
iris = datasets.load_iris()
X = iris.data
y = iris.target

# create a NuSVC model
nusvc_model = NuSVC(nu=0.5, kernel='linear')

# train the model on the entire dataset
nusvc_model.fit(X, y)

# predict classes for the entire dataset
y_pred = nusvc_model.predict(X)

# evaluate the model's accuracy
accuracy = accuracy_score(y, y_pred)
print("Accuracy of NuSVC model:", accuracy)

# display the classification report
print("\nClassification Report:\n", classification_report(y, y_pred))

# define the input data type
initial_type = [('float_input', FloatTensorType([None, X.shape[1]]))]

# export the model to ONNX format with float data type
onnx_model = convert_sklearn(nusvc_model, initial_types=initial_type, target_opset=12)

# save the model to a file
onnx_filename = data_path + "nusvc_iris.onnx"
with open(onnx_filename, "wb") as f:
    f.write(onnx_model.SerializeToString())

# print model path
print(f"Model saved to {onnx_filename}")

# load the ONNX model and make predictions
onnx_session = ort.InferenceSession(onnx_filename)
input_name = onnx_session.get_inputs()[0].name
output_name = onnx_session.get_outputs()[0].name

# display information about input tensors in ONNX
print("\nInformation about input tensors in ONNX:")
for i, input_tensor in enumerate(onnx_session.get_inputs()):
    print(f"{i + 1}. Name: {input_tensor.name}, Data Type: {input_tensor.type}, Shape: {input_tensor.shape}")

# display information about output tensors in ONNX
print("\nInformation about output tensors in ONNX:")
for i, output_tensor in enumerate(onnx_session.get_outputs()):
    print(f"{i + 1}. Name: {output_tensor.name}, Data Type: {output_tensor.type}, Shape: {output_tensor.shape}")

# convert data to floating-point format (float32)
X_float32 = X.astype(np.float32)

# predict classes for the entire dataset using ONNX
y_pred_onnx = onnx_session.run([output_name], {input_name: X_float32})[0]

# evaluate the accuracy of the ONNX model
accuracy_onnx = accuracy_score(y, y_pred_onnx)
print("\nAccuracy of NuSVC model in ONNX format:", accuracy_onnx)

Output:

Python
Python  Classification Report:
Python                 precision    recall  f1-score   support
Python
Python             0       1.00      1.00      1.00        50
Python             1       0.96      0.96      0.96        50
Python             2       0.96      0.96      0.96        50
Python
Python      accuracy                           0.97       150
Python     macro avg       0.97      0.97      0.97       150
Python  weighted avg       0.97      0.97      0.97       150
Python
Python  Model saved to C:\Users\user\AppData\Roaming\MetaQuotes\Terminal\D0E8209F77C8CF37AD8BF550E51FF075\MQL5\Scripts\nusvc_iris.onnx
Python
Python  Information about input tensors in ONNX:
Python  1. Name: float_input, Data Type: tensor(float), Shape: [None, 4]
Python
Python  Information about output tensors in ONNX:
Python  1. Name: label, Data Type: tensor(int64), Shape: [None]
Python  2. Name: probabilities, Data Type: tensor(float), Shape: [None, 3]
Python
Python  Accuracy of NuSVC model in ONNX format: 0.9733333333333334

2.3.2. MQL5 Code for Working with the NuSVC Classifier Model